This post has been republished via RSS; it originally appeared at: Microsoft Stream Blog articles.

Today in Microsoft Stream, you can see analytics that show you the popularity of a video based on view, comment, and like counts. If you are a global administrator for Office 365, now you can set up a workflow to pull deeper analytics.

Today I’d like to share a way to access video engagement analytics by leveraging Office 365 Audit Logs, along with SharePoint and Microsoft Flow, to deliver reports in Power BI. I will note that I am not a Data Expert, Power BI Pro, or Developer – but if you are, you can likely take this much farther!

In this training we will cover the following:

- The data – Unified Audit Logs

- Setting up the SharePoint list as the data source

- Getting the data

- Pulling the data into Power BI

- Building a basic report

- Refreshing the data

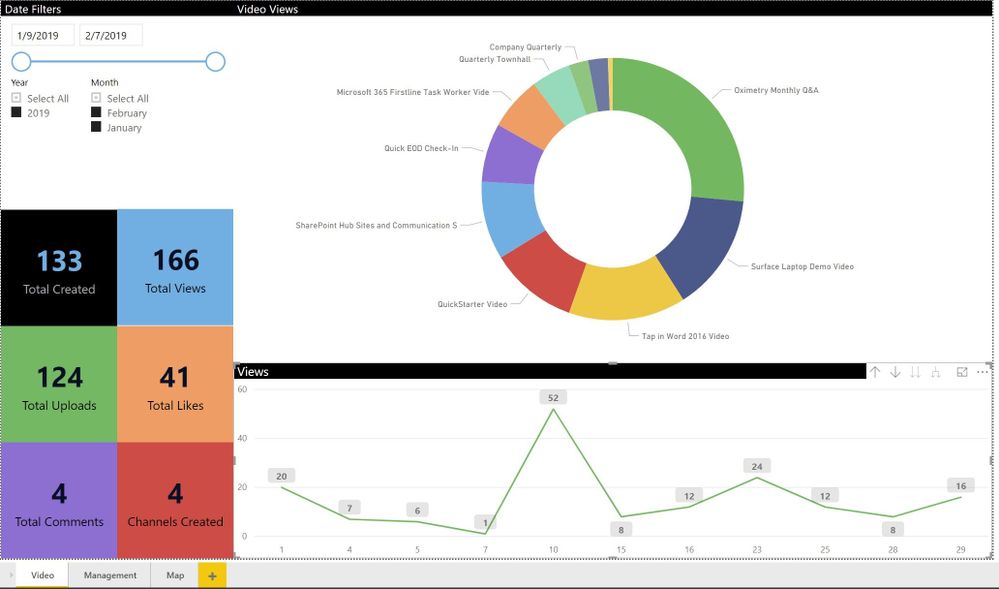

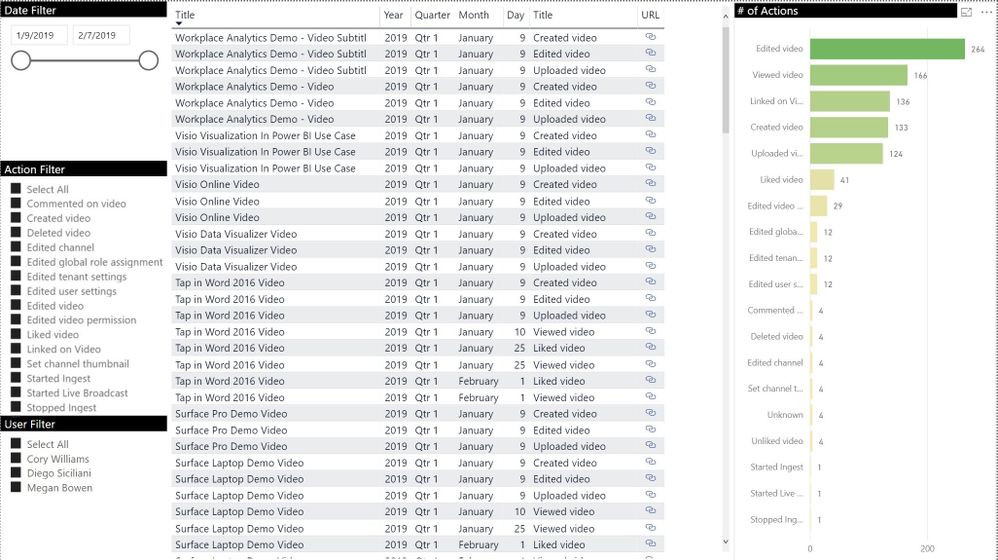

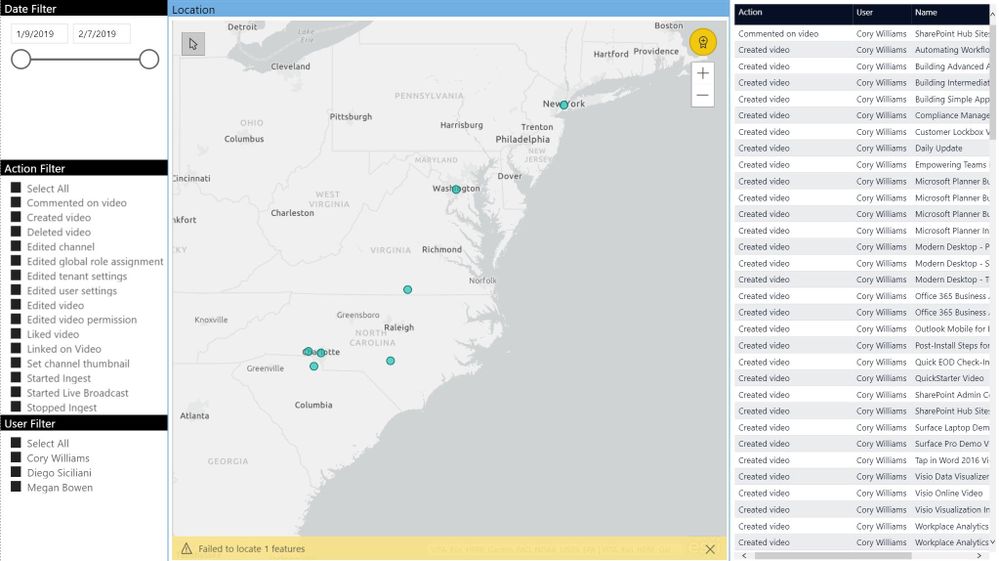

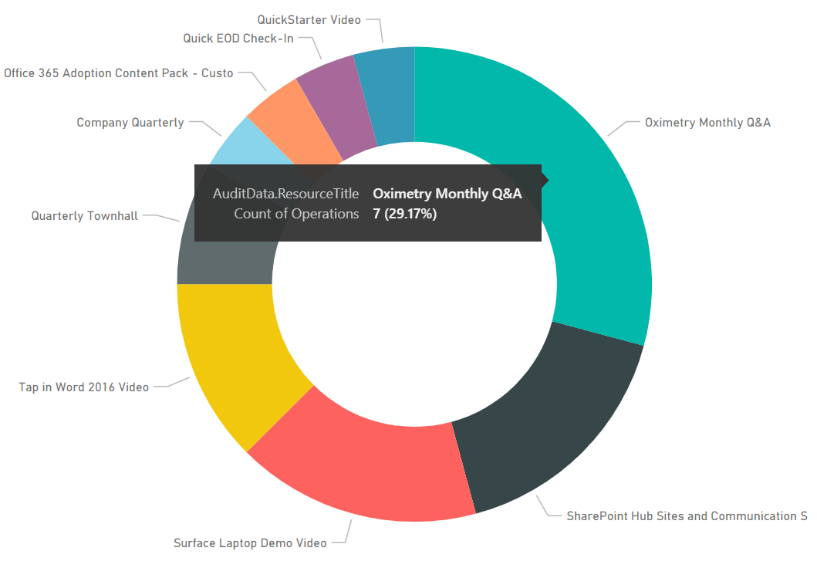

Below are some basic examples of the insights you can derive from the data you have access to.

The data - Unified Audit Logs

In the security and compliance center of Office 365 (https://protection.office.com) we have a unified logging experience. This service tracks many actions that occur across Office 365. With Microsoft Stream this includes the following:

Microsoft Stream video activities (Individual video actions)

|

Created video Edited video Deleted video Uploaded video Downloaded video Edited video permission Viewed video |

Shared video Liked video Unliked video Commented on video Deleted video comment Uploaded video text track Deleted video text track |

Uploaded video thumbnail Deleted video thumbnail Replaced video permissions and channel links Marked video public Marked video private |

Microsoft Stream group channel activities (Video organization actions)

| Created group Edited group Deleted group |

Edited group memberships Created channel Edited channel |

Deleted a channel Replaced channel thumbnails |

Microsoft Stream general activities (Tenant specific admin actions)

| Edited user settings Edited tenant settings Edited global role members |

Deleted User Deleted User’s Data Report Edited User |

Exported User’s Data Report Downloaded User’s Data Report |

Setting up SharePoint

We are going to want to dynamically update our dataset and ensure we have a consistent data connection for use in the Power BI report. To accomplish this, we will store the audit data in a SharePoint list. You could certainly send this to SQL as well, using SharePoint will make the data more accessible to all. For SharePoint we will create the list with columns to match up to what we get when we parse the audit logs. We will also add some additional columns to create friendly names that relate to some of data.

SharePoint List Fields

|

Field Name |

Field Type |

Notes |

|

Operation (Title) |

Single Line of Text |

Flow will add the friendly name from the AuditDataOperation field. (InvokeStreamVideoLike to Video Liked) |

|

CreationDate |

Date and Time |

Date the Action was performed |

|

UserIds |

Single Line of Text |

Email of the user that took the action |

|

AuditDataResourceUrl |

Single Line of Text |

Link to the item |

|

AuditDataResourceTitle |

Single Line of Text |

Name of the item (Video, Channel, Group) |

|

AuditDataOperationDetails |

Multiple Lines of Text (Plain text) |

Contains additional details that we don’t parse with a second pass. Some of the data is duplicative. The one field in here that may be of use is Description, but this data set varies depending on action so it could add additional complexities to the flow. |

|

AuditDataClientApplicationId |

Single Line of Text |

GUID of Application used to perform the action |

|

AuditDataObjectId |

Single Line of Text |

GUID of the item |

|

AuditDataUserId |

Single Line of Text |

Email of the user that took the action (duplicate) |

|

AuditDataClientIP |

Single Line of Text |

IP address the user took the action |

|

AuditDataId |

Single Line of Text |

|

|

AuditDataRecordType |

Single Line of Text |

Microsoft Stream service |

|

AuditDataCreationTime |

Single Line of Text |

Different way of consuming the action was taken down to the second. |

|

AuditDataOperation |

Single Line of Text |

The internal action name |

|

AuditDataOrganizationId |

Single Line of Text |

GUID that defines your tenant. Not important for single organization reports |

|

AuditDataUserType |

Single Line of Text |

|

|

AuditDataUserKey |

Single Line of Text |

|

|

AuditDataWorkload |

Single Line of Text |

|

|

AuditDataResultStatus |

Single Line of Text |

|

|

AuditDataVersion |

Single Line of Text |

|

|

Year |

Calculated |

=TEXT(CreationDate,"YYYY") |

|

Month |

Calculated |

=TEXT(CreationDate,"MMMM") |

|

Date |

Calculated |

This is for the date only, no time - =TEXT(CreationDate,"MM/DD/YYYY") |

|

User |

Person or Group |

Populated with ClientDataUserId |

|

Location |

Single Line of Text |

Optional (uses a location API based on ClientIP) |

|

Application |

Single Line of Text |

Flow automatically converts the Microsoft internal GUID to the matching application. (cf53fce8-def6-4aeb-8d30-b158e7b1cf83 = Stream Portal) |

Getting the data

The audit logs are only available to specific members of an organizations such as the Global Administrators or other personnel they may have been assigned access. In most organizations if you want this data you will need to request an export. If you have access you get the data, you can follow the manual steps here.

There are various APIs and PowerShell commands available within the Microsoft 365 platform. For this solution we leveraged Microsoft Flow and used the Search-UnifiedAuditLog PowerShell cmdlet. You may wish to change to the Office 365 Management API, but this easily fit the needs for this project.

Never created a Microsoft Flow. Check out the getting started documentation.

Here is what was done to create a nightly pull of the data to drop in the SharePoint list that was created above. You can download the template to import into your Microsoft Flow environment from here, of course you can build it yourself by following the steps below. As mentioned above you will need the Global Admin role. If you do not you will need to work with a Global Admin on how to implement. They will likely need to build the Flow for you. Make sure to rename each action, some of the expressions used with need the Action names, otherwise you will need to refactor the expressions.

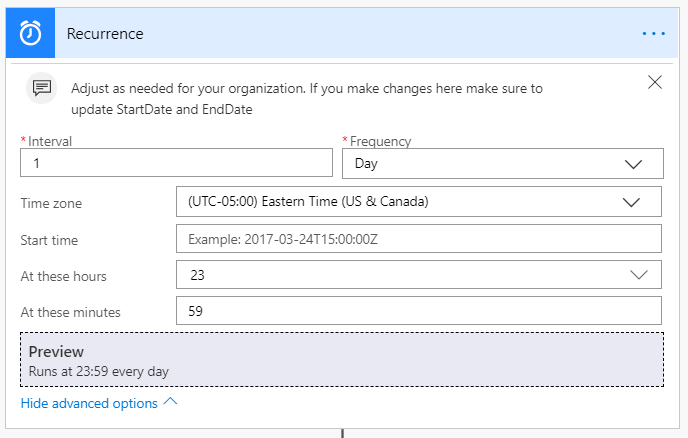

- In this scenario we have set the Recurrence trigger to run on a nightly basis at 11:59 PM. You can change this to fit your requirements. Just know that you will need to update the StartDate and EndDate variables to match.

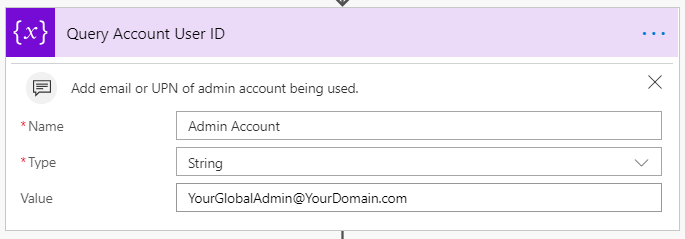

- Use the Initialize Parameter action to store a global admin account or specific service account to the value field. (Reminder that the account needs access to the audit logs). This account is used in multiple locations in this solution.

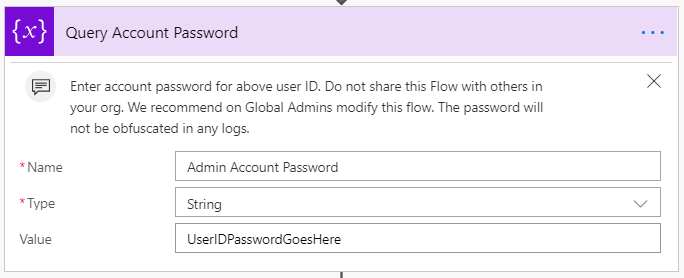

- Add another Initialize Parameter action to store the password for the account above in the value field

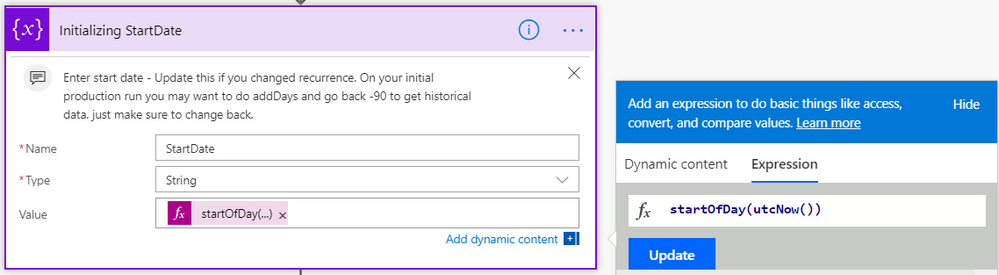

- Add another Initialize Parameter action to store the start date for the query using startOfDay(utcNow()) as the value. (Keep in mind this will need to be adjusted if you change the recurrence)

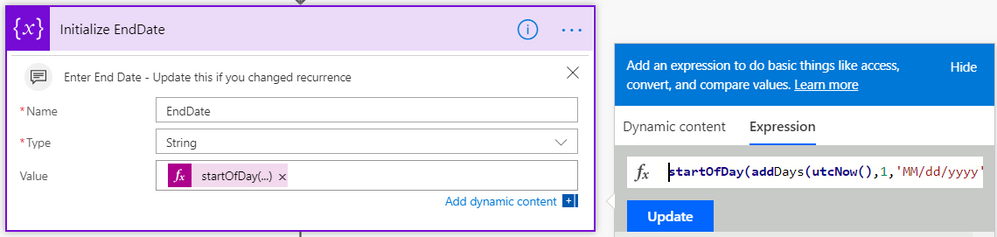

Tip: If you want to capture all of the data you may have in the audit logs before changing this to a nightly feed consider doing a test run using this expression, addDays(startOfDay(utcNow()), -90) - Add another Initialize Parameter action to store the end date for the query using startOfDay(addDays(utcNow(),1,'MM/dd/yyyy')) as the value. (Keep in mind this will need to be adjusted if you change the recurrence)

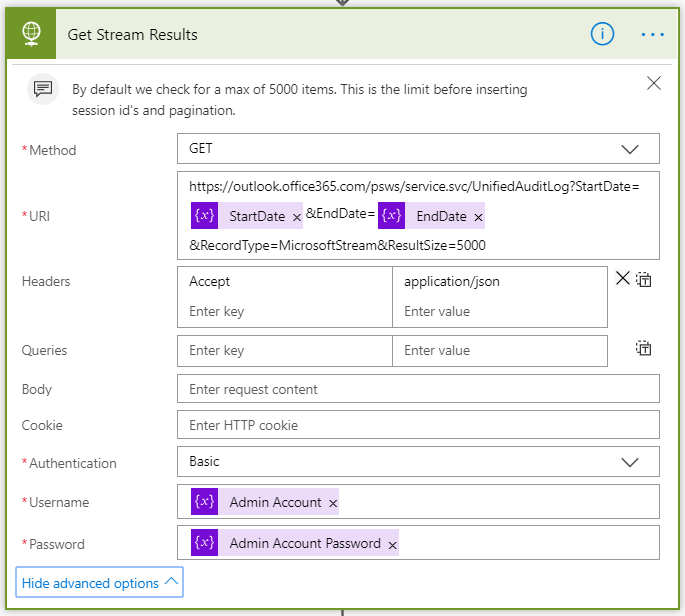

- Add the HTTP Request action to build the query against the PowerShell Webservice. Make sure to select Get for the Method. The URI structure includes our StartDate and EndDate variables. We also include RecordType=MicrosoftStream to make sure to only get the audit data for Stream. At the end of the string we add the ResultSize parameter to go to the max allowed of 5,000 items, otherwise this would default to 100 (You can call back as many as 50k items but it is not recommended, you would need to introduce paging to the query). Make sure to set the Headers as shown in the image below. Once you click on advanced options you will see the Username and Password, simply add these variables to these values.

- Add the Parse JSON action. This is where we will extract the data from the logs. Add the Body from the HTTP action

Add the Schema shown below

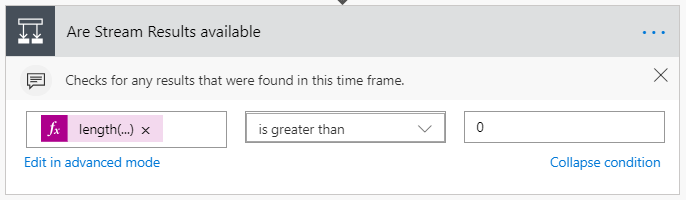

{ "type": "object", "properties": { "odata.metadata": { "type": "string" }, "value": { "type": "array", "items": { "type": "object", "properties": { "Identity": { "type": "string" }, "AuditData": { "type": "string" }, "CreationDate": { "type": "string" }, "IsValid": { "type": "boolean" }, "ObjectState": { "type": "string" }, "Operations": { "type": "string" }, "RecordType": { "type": "string" }, "ResultCount": { "type": "number" }, "ResultIndex": { "type": "number" }, "UserIds": { "type": "string" }, "ObjectIds": {}, "IPAddresses": {} }, "required": [ "Identity", "AuditData", "CreationDate", "IsValid", "ObjectState", "Operations", "RecordType", "ResultCount", "ResultIndex", "UserIds", "ObjectIds", "IPAddresses" ] } } } } - Add a Condition action. This will determine if any results were returned for the data range. For the value, add length(body('Parse_Stream_Results')?['value']). Change your condition to “is greater than” with the value as 0.

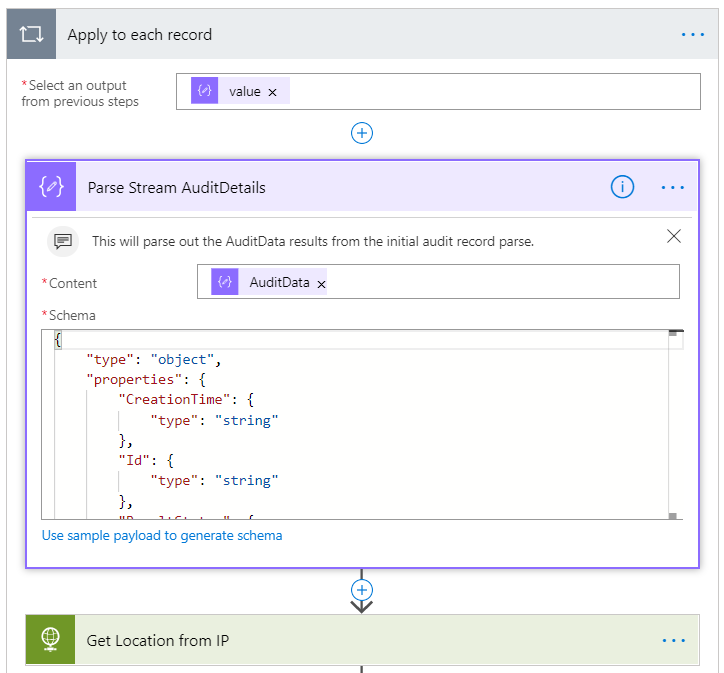

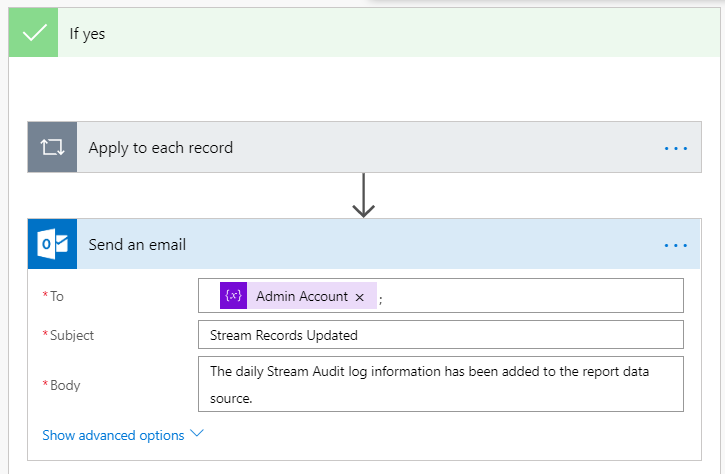

- In the Yes path add the Parse JSON action. This action will break out the AuditData field within the results as it contains most of the useful information for the reports. Select the AuditData from the earlier HTTP action, this will automatically add in the Apply to each record.

Add the Schema shown below.

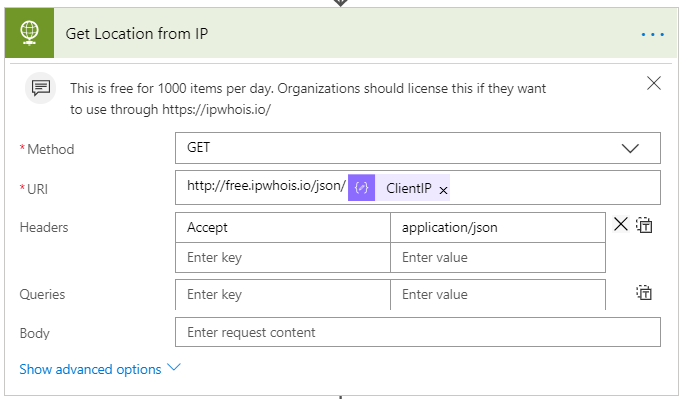

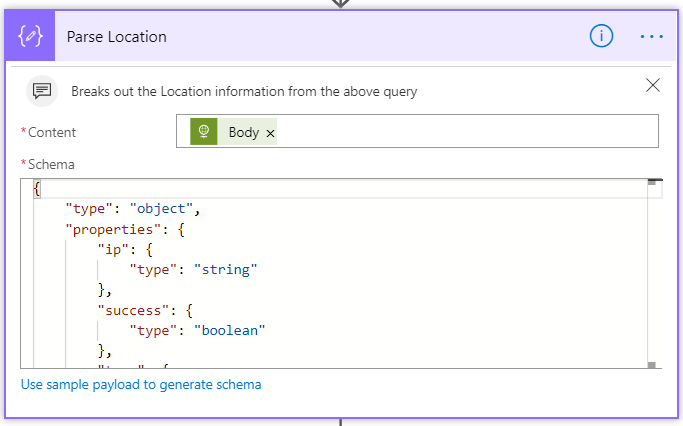

{ "type": "object", "properties": { "CreationTime": { "type": "string" }, "Id": { "type": "string" }, "ResultStatus": { "type": "string" }, "UserType": { "type": "number" }, "Version": { "type": "number" }, "UserId": { "type": "string" }, "ResourceUrl": { "type": "string" }, "ResourceTitle": { "type": "string" }, "EntityPath": { "type": "string" }, "OperationDetails": { "type": "string" }, "ClientApplicationId": { "type": "string" }, "ObjectId": { "type": "string" }, "ClientIP": { "type": "string" }, "RecordType": { "type": "number" }, "Operation": { "type": "string" }, "OrganizationId": { "type": "string" }, "UserKey": { "type": "string" }, "Workload": { "type": "string" } } } - This step is optional. If you want to get approximate location for where the actions were performed, you can leverage the IP address to get the details. This allows us to build the map into our reports. Alternatively, if you have your user’s location information tracked in their profile you could look up the user profile.

Note: This uses a service from http://ipwhois.io . Organizations will need to purchase this, for a very small fee if you would like to use it. It is $99 a year for unlimited queries. The free version allows for 1000 queries a day. If you already have a preferred service that can do this you can use that instead.

Add the HTTP action. Make sure to select Get for the Method. The URI value is http://free.ipwhois.io/json/ ClientIP value from the previous action. Make sure to add the Headers as shown below. The free version doesn’t require authentication, but you will need to add that in if you use the pay service. - Only required if you use the previous step. Add the Body from the previous action.

Add the Schema shown below.

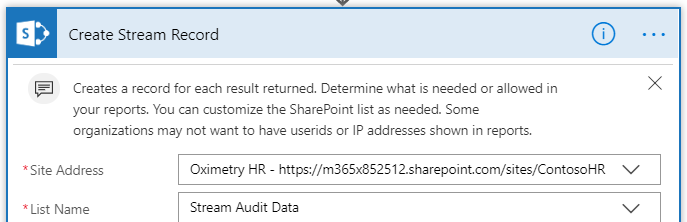

{ "type": "object", "properties": { "ip": { "type": "string" }, "success": { "type": "boolean" }, "type": { "type": "string" }, "continent": { "type": "string" }, "continent_code": { "type": "string" }, "country": { "type": "string" }, "country_code": { "type": "string" }, "country_flag": { "type": "string" }, "country_capital": { "type": "string" }, "country_phone": { "type": "string" }, "country_neighbours": { "type": "string" }, "region": { "type": "string" }, "city": { "type": "string" }, "latitude": { "type": "string" }, "longitude": { "type": "string" }, "asn": { "type": "string" }, "org": { "type": "string" }, "isp": { "type": "string" }, "timezone": { "type": "string" }, "timezone_name": { "type": "string" }, "timezone_dstOffset": { "type": "string" }, "timezone_gmtOffset": { "type": "string" }, "timezone_gmt": { "type": "string" }, "currency": { "type": "string" }, "currency_code": { "type": "string" }, "currency_symbol": { "type": "string" }, "currency_rates": { "type": "string" }, "currency_plural": { "type": "string" } } } - Add the SharePoint Create Item action. Select the site where you created the list. Select the Audit Data list.

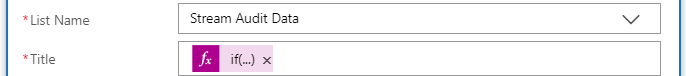

- For Title we converted to the Friendly Name of the action using an If expression.

if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamCreateVideo'),'Created video',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamCreateVideoComment'),'Commented on video',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamDeleteChannel'),'Deleted channel',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamDeleteGroup'),'Deleted group',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamDeleteUser'),'Deleted user', if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamDeleteUserDataExport'),'Deleted User Data Export',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamDeleteVideo'),'Deleted video',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamDeleteVideoComment'),'Deleted video comment',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamDeleteVideoTextTrack'),'Deleted text track',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamDeleteVideoThumbnail'),'Deleted thumbnail',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamEditAdminGlobalRoleMembers'),'Edited global role assignment',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamEditAdminTenantSettings'),'Edited tenant settings',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamEditChannel'),'Edited channel',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamEditUser'),'Edited user',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamEditGroupMemberships'),'Edited group memberships', if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamEditGroup'),'Edited group',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamEditUserSettings'),'Edited user settings',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamEditVideo'),'Edited video',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamEditVideoPermissions'),'Edited video permission',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamEditLiveIngest'),'Edited live ingest',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamEditLiveEvent'),'Edited live event',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeChannelSetThumbnail'),'Set channel thumbnail',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeExportUserData'),'Exported user data',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeUserDataExportDownload'),'Downloaded user data',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeVideoDownload'),'Downloaded video', if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeVideoLike'),'Liked video',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeVideoMakePublic'),'Made video public',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeVideoMakePrivate'),'Made video private',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeVideoSetLink'),'Linked on Video',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeVideoShare'),'Shared video',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeVideoTextTrackUpload'),'Uploaded text track',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeVideoThumbnailUpload'),'Uploaded thumbnail',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeVideoUnLike'),'Unliked video',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeVideoUpload'),'Uploaded video', if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeVideoView'),'Viewed video',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeLiveStartBroadcast'),'Started Live Broadcast',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeLiveStartIngest'),'Started Ingest',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeLiveStopIngest'),'Stopped Ingest',if(equals(body('Parse_Stream_AuditDetails')?['Operation'],'StreamInvokeVideoPostProcess'),'Video in post process', 'Unknown'))))))))))))))))))))))))))))))))))))))) - Enter the next values as shown below. If you need to remove personal data do not add in UserId to anywhere shown here. You may also need to remove IP address.

- For Location add the value of concat(body('Parse_Location')?['city'],', ',body('Parse_Location')?['region'])

- For Application add the If Statement shown below. This will provide the friendly name of the application used to create the video. In the future it may be used to show what application the videos were viewed from.

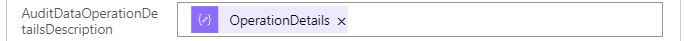

if(equals(body('Parse_Stream_AuditDetails')?['ClientApplicationId'],'cf53fce8-def6-4aeb-8d30-b158e7b1cf83'),'Stream Portal',if(equals(body('Parse_Stream_AuditDetails')?['ClientApplicationId'],'844cca35-0656-46ce-b636-13f48b0eecbd'),'Stream Mobile',if(equals(body('Parse_Stream_AuditDetails')?['ClientApplicationId'],'574df941-661b-4bfc-acb0-0a07de7de341'),'Dynamics Talent',if(equals(body('Parse_Stream_AuditDetails')?['ClientApplicationId'],'00000005-0000-0ff1-ce00-000000000000'),'Yammer',if(equals(body('Parse_Stream_AuditDetails')?['ClientApplicationId'],'4580fd1d-e5a3-4f56-9ad1-aab0e3bf8f76'),'Microsoft Teams Exporter',if(equals(body('Parse_Stream_AuditDetails')?['ClientApplicationId'],'cc15fd57-2c6c-4117-a88c-83b1d56b4bbe'),'Microsoft Teams Middle tier',if(equals(body('Parse_Stream_AuditDetails')?['ClientApplicationId'],'1fec8e78-bce4-4aaf-ab1b-5451cc387264'),'Microsoft Teams Native Client',if(equals(body('Parse_Stream_AuditDetails')?['ClientApplicationId'],'5e3ce6c0-2b1f-4285-8d4b-75ee78787346'),'Microsoft Teams Web Client',if(equals(body('Parse_Stream_AuditDetails')?['ClientApplicationId'],'7557eb47-c689-4224-abcf-aef9bd7573df'),'Microsoft Teams Scheduling and Broadcast Service',if(equals(body('Parse_Stream_AuditDetails')?['ClientApplicationId'],'e478694e-c391-4a61-a6be-c953d2372058'),'OfficeMix.com',if(equals(body('Parse_Stream_AuditDetails')?['ClientApplicationId'],'d3590ed6-52b3-4102-aeff-aad2292ab01c'),'Office First Party native app',if(equals(body('Parse_Stream_AuditDetails')?['ClientApplicationId'],'2acd2ef7-b80e-46d2-87f8-90e10adc3fca'),'Microsoft Learning 3rd party app','Unknown')))))))))))) - For the last value add OperationDetails

- For Title we converted to the Friendly Name of the action using an If expression.

- The last step is for emailing the user running the report and is not part of the apply to each section. Add the desired email in the To field, for this we just used the admin account variable. Add the desired subject and body. You can add HTML to the email if you wish, just make sure to expand the advanced options and change IsHTML to Yes.

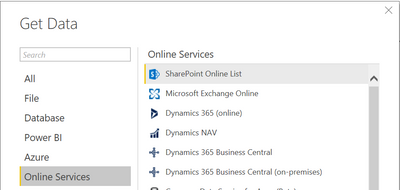

Pulling the Data into Power BI

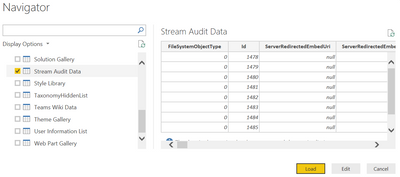

This is where we can pull in the data set to build some nice reports. You will need to download Power BI desktop to build these reports since we need to connect to the SharePoint list created earlier.

- Open Power BI Desktop

- Create New

- Click Get Data

- Select Online Services > SharePoint Online List

- Click Connect

- Add the URL to the site where you created the SharePoint List

- Click Ok

- Select the List you created for the Audit Data

- Click Load

Building a Basic Report

Now we can add some basic report information and filters. Below are basic examples of items you can add. You can download this prebuilt report to see how I built it out. Make sure to update the settings for your environment if you use this template.

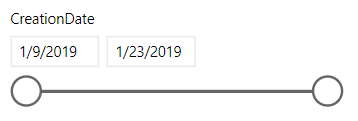

Slicers

- Select the Slicer under visualizations

- Check the Date field (note that you may need to convert the data type in your table to get this to display as a slider, Power BI may convert automatically but it depends on the amount of content)

Total views

This graph shows the total views per video. Depending on the number of videos this chart may not work for your needs.

- Select the Donut or Pie Chart

- Check the AuditData.ResourceTitle so that it is used by the chart

- Drag the Operations field into Values (this should convert to count)

- Drag the Operations filed to the Filters View and check StreamInvokeVideoView

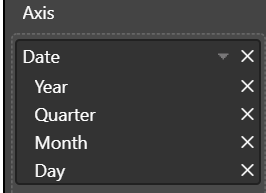

Total views per day

This line chart will show total views daily.

- Select the Line Chart

- Check the Date so that it is used by the chart

- You may want to remove items like Year and Quarter from the date field. Just click on the x to remove.

- Add the Title field to the values box

- Drag the Title field into the filter

- Select Video Viewed

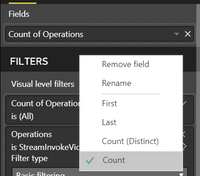

Total view cards

Use this to display the total views for all videos. When filtering based on video this will adjust accordingly.

- Select the Card

- Check Title

- Change the field to be count, via the drop-down of the menu.

- Drag Title into the Filters

- Select Video Viewed

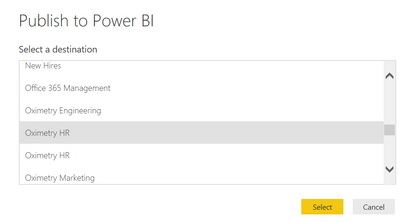

Publishing the Report

To schedule the refresh for the report it must first be published to the Power BI service. Users that view the report will need to have a Power BI Pro license if you utilize scheduled refresh to keep the data up to date.

- In your report, click Publish

- Select the workspace location to publish it to

- Click Select

- Click Got it

- Login to Power BI online and go to the workspace you published to above

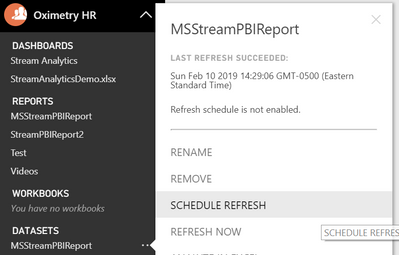

- Locate the report dataset

- Click on the … next to your dataset and click Schedule Refresh

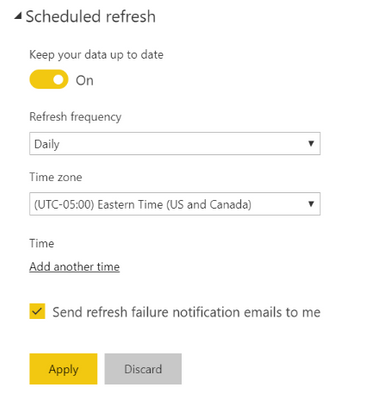

- Expand Schedule Refresh

- Turn on “Keep your data up to date”

- Choose your “Refresh frequency”

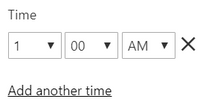

- Click on “Add another time” If you are doing the daily audit log, I recommend setting your update refresh at 1:00 am. This should provide the ability for all the new data to get pushed into SharePoint from the audit log import.

- Click Apply

References:

- Accessing Office 365 Security & Compliance Center Logs from Microsoft Flow

- Search the Audit Logs

- PowerShell – Search-UnifiedAuditLog

- Create a List in SharePoint

- Getting Started with Flow

- Power BI Desktop

- Power BI Guided Learning

- Stream Log Import Microsoft Flow

- Sample Power BI Report

Thanks for reading and happy streaming!