This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

Monitoring is one of the key business-critical functions for making sure the infrastructure running Microsoft Endpoint Manager Configuration Manager (MEMCM) is healthy. For many years we used on-premises System Center Operations Manager (SCOM), leveraging its alerting framework and management packs for monitoring. Over the years, we also developed custom solutions to address various monitoring needs.

With our desire to move to one unified monitoring solution and align with our cloud strategy, shifting from traditional monitoring to a cloud-based Azure monitoring solution was a natural choice, given its rich capabilities for collecting and analyzing data from both Azure and on-premises environments.

In this post, we share how we migrated from SCOM to Azure Monitor and the best practices we followed to monitor MEMCM effectively. You can read more about Azure Monitoring and its capabilities. Since Log Analytics is part of the Azure Monitor pipeline, it gives us a platform to create alert rules, dashboards, and views, export to Power BI, use PowerShell, and access data via the Azure Monitor Logs API. The advantage of Log Analytics is that we can use the Kusto query language to retrieve and analyze data in a variety of ways and build new workflows on that data, which opens up possibilities for automation and customization.

The main goals of this project were to have one unified monitoring solution for all workloads and to be able to monitor with minimal noise in the system. Before I continue any further, I’d like to acknowledge my colleagues from Tata Consultancy Services, Rakesh Silam and Dhiraj Agarwal, who helped with this migration.

Tools & Technology Used:

Azure Monitor, Operations Management Suite, Azure Log Analytics, Automation Account, Azure runbook & Azure Orchestration, Log/Kusto Query, Configuration Manager.

Getting Started:

As a first step, we captured data from the existing SCOM monitoring system:

- Total number of monitors and rules configured

- Total number of unique alerts triggered in the past one year

Because we were leveraging SCOM Management Packs for configuration, over time we ended up enabling quite a few monitors and rules: 2672 monitors and 4470 rules, to be exact.

| Alert Category | Count of Monitors | Count of Rules |
| --- | --- | --- |
| Custom | 163 | 52 |
| OS | 1098 | 1947 |
| SCCM | 806 | 564 |
| SCOM | 231 | 280 |
| SQL | 374 | 1627 |
| Total | 2672 | 4470 |

We didn’t want to migrate every alert to the new Azure Monitor system, so we used this opportunity to evaluate what is important for service availability for the roles within MEMCM (e.g., SQL, MPs, DPs, SUPs, Cluster, IIS). Using the data captured from SCOM, we came up with a list of alerts that needed to be migrated and also identified new scenarios. Based on that analysis, the high-level classifications are listed below.

| Classification | Description |
| --- | --- |
| Basic Alerts | Windows events, heartbeat, etc. |
| Perf Counters | CPU, memory, process, disk, etc. |
| Role Specific Alerts | Based on Windows roles/components such as SQL, IIS, and Cluster |
| Product Specific Alerts | Based on product requirements, e.g. SCCM, where we identify the key factors required to keep the product healthy. Here we consider MP, DP, Primary, CAS, inboxes, etc. |
| Custom Alerts | Custom alerts associated with deployments, P2P success, Intune-based alerts, etc. |

This analysis helped us bring the total number of monitors/alerts down to ~232 unique scenarios, which reduced the existing noise in our monitoring system, made incident management more efficient, and improved our Time to Respond (TTR) and Time to Mitigate (TTM).
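To put that reduction in perspective, the arithmetic can be checked in a few lines of Python. This is only a rough sketch: it assumes that every SCOM monitor and rule counts as one alerting workflow and that the ~232 scenarios can be compared directly against that combined total, which the inventory itself does not guarantee.

```python
# Monitor and rule counts per category, copied from the SCOM inventory table.
scom_inventory = {
    "Custom": (163, 52),
    "OS": (1098, 1947),
    "SCCM": (806, 564),
    "SCOM": (231, 280),
    "SQL": (374, 1627),
}

total_monitors = sum(monitors for monitors, _ in scom_inventory.values())
total_rules = sum(rules for _, rules in scom_inventory.values())

# Assumption: treat each monitor and each rule as one alerting workflow.
total_workflows = total_monitors + total_rules
migrated_scenarios = 232  # approximate figure quoted in the post

reduction_pct = 100 * (1 - migrated_scenarios / total_workflows)
print(f"{total_monitors} monitors + {total_rules} rules = {total_workflows} workflows")
print(f"Reduction after review: {reduction_pct:.1f}%")
```

Under those assumptions, the review kept only a few percent of the original workflow count.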

Implementation

Below are the high-level steps we followed to implement Azure Monitor. In essence, we send all events, audits, and operational logs to a Log Analytics workspace and create alerts based on the data and its patterns.

Setup

- Create a ‘Log Analytics Workspace’ and enable monitoring of all events and Configuration Manager log files. Make sure to set up appropriate access controls and policies; see ‘Manage log data and workspaces in Azure Monitor’.

- Deploy the monitoring agent on Azure VMs or on-premises infrastructure by following the “Connect Windows computers to Azure Monitor” process. Refer to “Collect log data with the Log Analytics agent” to understand what the Microsoft Monitoring Agent (MMA) can collect, and to “Agent data sources in Azure Monitor” to set up log collection.

- You can also connect your System Center Configuration Manager environment to Azure Monitor to sync device collection data and reference these collections in Azure Monitor and Azure Automation. Refer to the “Connect Configuration Manager to Azure Monitor” document.

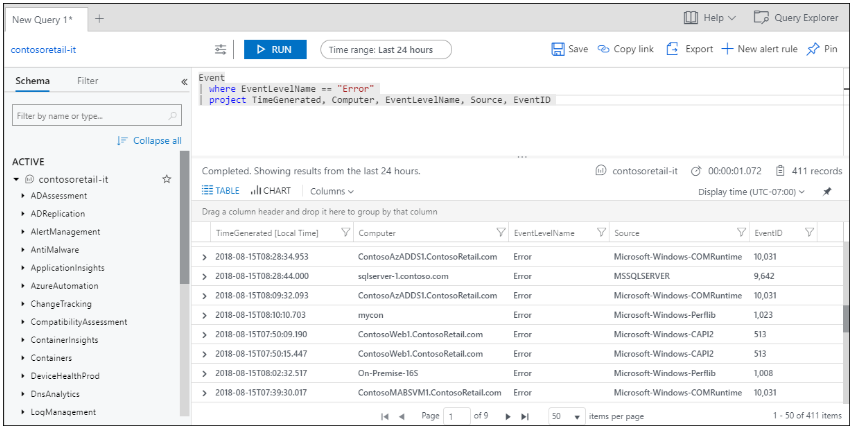

- Analyze the data collected by Log Analytics by running Kusto queries, and create alerts based on the defined scenarios

Alerting Solution:

Creating alert rules and defining threshold values and evaluation frequency are critical for efficient monitoring. Refer to the “Overview of alerts in Microsoft Azure” document for additional information. We used an Azure runbook and set up a webhook that consumes alerts and routes them to an in-house alerting solution. This approach can be extended to other solutions such as ServiceNow or SolarWinds. You can either create a new runbook or leverage an existing runbook from the Runbook Gallery, and either set up a custom connector from scratch or use code from a Microsoft gallery connector with ticketing integration.
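As an illustration of the routing step, here is a minimal Python sketch of the transformation such a runbook might perform. It is not the runbook we used. It assumes the action group sends the Azure Monitor common alert schema, and the `build_ticket` function, the `SEVERITY_TO_PRIORITY` mapping, and the ticket field names are all hypothetical and would need to match whatever your ticketing system expects.

```python
import json

# Assumption: alerts arrive in the Azure Monitor common alert schema.
# The priority mapping and ticket fields below are hypothetical.
SEVERITY_TO_PRIORITY = {"Sev0": "P1", "Sev1": "P1", "Sev2": "P2", "Sev3": "P3", "Sev4": "P4"}


def build_ticket(webhook_body: str) -> dict:
    """Turn a common-alert-schema webhook body into a simple ticket dictionary."""
    payload = json.loads(webhook_body)
    essentials = payload["data"]["essentials"]
    return {
        "title": essentials.get("alertRule", "Unknown alert rule"),
        "priority": SEVERITY_TO_PRIORITY.get(essentials.get("severity"), "P3"),
        "state": essentials.get("monitorCondition", "Fired"),
        "fired_at": essentials.get("firedDateTime"),
        "resources": essentials.get("alertTargetIDs", []),
        "description": essentials.get("description", ""),
    }


# Hand-written sample payload for illustration, trimmed to the fields used above.
sample_body = json.dumps({
    "schemaId": "azureMonitorCommonAlertSchema",
    "data": {
        "essentials": {
            "alertRule": "MP outbox backlog",
            "severity": "Sev2",
            "monitorCondition": "Fired",
            "firedDateTime": "2021-01-01T10:00:00Z",
            "alertTargetIDs": ["example-workspace-resource-id"],
            "description": "Management point outbox backlog alert",
        },
        "alertContext": {},
    },
})

ticket = build_ticket(sample_body)
print(ticket["title"], ticket["priority"], ticket["state"])
```

Keeping the mapping in a pure function like this makes it easy to test without a live webhook. The call that actually posts the ticket to the downstream system is left out here because it depends entirely on that system's API.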

Below are a few examples of queries we used for certain scenarios. Refer to “Overview of log queries in Azure Monitor” for more background.

    Perf
    | where ObjectName == "SMS Outbox" and CounterName == "File Current Count" and InstanceName !contains "Statemsg" and Computer in (<< Computer Group like all Management Point role servers >>)
    | where TimeGenerated > ago(15m)
    | where CounterValue > 200
    | summarize count(), LatestCounterValue=arg_max(CounterValue, *) by Computer, InstanceName
    | where count_ > 60
    | project Computer = strcat(Computer,"-",InstanceName), InstanceName, TimeGenerated, count_, LatestCounterValue, RenderedDescription = strcat(Computer, ": Management point ", InstanceName, " outbox backlog alert = ", LatestCounterValue)
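The outbox backlog query above follows a "sustained breach" pattern: keep only recent samples over a threshold, count them per computer and instance, and alert only when the count is high enough. The Python sketch below mirrors that logic on in-memory tuples so the steps are easier to follow. The function name, the sample data, and the 10-second sample spacing are assumptions for illustration, since the post does not state the counter's collection interval.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def sustained_breaches(samples, now, window=timedelta(minutes=15), threshold=200, min_count=60):
    """Return {(computer, instance): (breach_count, max_value)} for sustained breaches.

    `samples` is an iterable of (computer, instance, timestamp, value) tuples
    standing in for rows of the Perf table.
    """
    stats = defaultdict(lambda: [0, float("-inf")])
    for computer, instance, timestamp, value in samples:
        # where TimeGenerated > ago(15m) and CounterValue > 200
        if timestamp > now - window and value > threshold:
            entry = stats[(computer, instance)]
            entry[0] += 1                    # count()
            entry[1] = max(entry[1], value)  # value picked by arg_max(CounterValue, *)
    # where count_ > 60
    return {key: (count, peak) for key, (count, peak) in stats.items() if count > min_count}


# Synthetic data: MP01 breaches on every 10-second sample, MP02 only briefly.
now = datetime(2021, 1, 1, 12, 0, 0)
samples = [("MP01", "outbox-a", now - timedelta(seconds=10 * i), 250 + i) for i in range(1, 80)]
samples += [("MP02", "outbox-a", now - timedelta(seconds=10 * i), 300) for i in range(1, 6)]

alerts = sustained_breaches(samples, now)
print(alerts)
```

Two details are worth noting. Whether `count_ > 60` is a sensible bar depends on how often the counter is sampled within the 15-minute window. Also, `arg_max(CounterValue, *)` returns the row with the highest value, so `LatestCounterValue` in the query is the peak in the window rather than the most recent reading.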

    Perf
    | where ObjectName == "LogicalDisk" and CounterName == "Current Disk Queue Length" and InstanceName !in ("_Total", "HarddiskVolume", "B:", "H:", "T:", "O:")
    | where TimeGenerated > ago(15m)
    | where CounterValue > 32
    | summarize count(),LatestCounterValue=arg_max(CounterValue , *) by Computer,InstanceName
    | where count_ > 10
    | project Computer, InstanceName, TimeGenerated, LatestCounterValue,count_, RenderedDescription = strcat(Computer, ": The current disk queue length of ", InstanceName, " drive is too high ", LatestCounterValue)

    Perf
    | where ObjectName == "Memory" and CounterName == "Pages/sec" and Computer in (<< Computer Group >>)
    | summarize AggregatedValue = avg(CounterValue) by Computer, bin(TimeGenerated, 60m)
    | summarize arg_max(TimeGenerated, *) by Computer
    | where AggregatedValue > 18000
    | project Computer, TimeGenerated, AggregatedValue, RenderedDescription = strcat("Memory Pages Per Second ", round(AggregatedValue), " is too High for ", Computer)
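The memory query uses a different pattern from the two before it: it averages the counter per hourly bin, keeps only the newest bin for each computer, and then applies the threshold. A small Python sketch of the same steps is shown below. The function names and sample data are hypothetical, and the tuples stand in for rows of the Perf table.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def latest_hourly_average(samples):
    """Average values per (computer, hour) and keep each computer's newest hour.

    `samples` is an iterable of (computer, timestamp, value) tuples.
    """
    buckets = defaultdict(list)
    for computer, timestamp, value in samples:
        # bin(TimeGenerated, 60m): truncate the timestamp to the start of its hour
        hour = timestamp.replace(minute=0, second=0, microsecond=0)
        buckets[(computer, hour)].append(value)

    latest = {}
    for (computer, hour), values in buckets.items():
        average = sum(values) / len(values)  # avg(CounterValue)
        # arg_max(TimeGenerated, *) by Computer: keep only the newest bin
        if computer not in latest or hour > latest[computer][0]:
            latest[computer] = (hour, average)
    return latest


def memory_paging_alerts(samples, threshold=18000):
    """Computers whose most recent hourly average exceeds the threshold."""
    return {c: avg for c, (_, avg) in latest_hourly_average(samples).items() if avg > threshold}


# Synthetic data: SQL01 pages heavily in its latest hour, SQL02 only in an older hour.
base = datetime(2021, 1, 1, 9, 0)
samples = [
    ("SQL01", base + timedelta(minutes=5), 1000),
    ("SQL01", base + timedelta(hours=1, minutes=5), 19000),
    ("SQL01", base + timedelta(hours=1, minutes=35), 21000),
    ("SQL02", base + timedelta(minutes=10), 25000),
    ("SQL02", base + timedelta(hours=1, minutes=10), 500),
]
print(memory_paging_alerts(samples))
```

Because only the newest bin is checked, an older spike (SQL02 in the sample data) does not raise an alert. The query itself has no time filter, so the range of data it sees is presumably bounded by the alert rule's evaluation period.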

    Event
    | where EventLog == "System" and EventID == 1069
    | parse RenderedDescription with * "Cluster resource '" ClusterResource "' of type '" ClusterType "' in clustered role '" ClusterRole "' failed." *
    | project Computer, TimeGenerated, EventLog, Source, EventID, RenderedDescription = strcat(Computer, ": Cluster resource '", ClusterResource, "' of type '",ClusterType,"' in clustered role '",ClusterRole,"' has failed.")
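The `parse` operator in the cluster query pulls three fields out of the free-text event description by matching the literal text between them. For readers less familiar with Kusto, the Python sketch below shows a roughly equivalent regular expression. The sample message and its resource and role names are made up for illustration, and `parse_cluster_failure` is a hypothetical helper.

```python
import re

# Regex counterpart of the `parse ... with` pattern used in the cluster query.
CLUSTER_FAILURE = re.compile(
    r"Cluster resource '(?P<ClusterResource>.*?)' of type '(?P<ClusterType>.*?)' "
    r"in clustered role '(?P<ClusterRole>.*?)' failed\."
)


def parse_cluster_failure(rendered_description: str):
    """Return the three extracted fields, or None when the text does not match."""
    match = CLUSTER_FAILURE.search(rendered_description)
    return match.groupdict() if match else None


# Illustrative message text; the resource and role names are made up.
sample = (
    "Cluster resource 'SQL Server' of type 'SQL Server' in clustered role "
    "'SQL Server (MSSQLSERVER)' failed. Additional details follow here."
)
fields = parse_cluster_failure(sample)
print(fields)
```

Extracting the fields first is what lets the query rebuild a shorter `RenderedDescription` that leads with the computer name, which is easier to read in an alert notification than the full event text.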

    Event
    | where EventLog == "System" and EventID == 7036 and Source == "Service Control Manager"
    | parse kind=relaxed EventData with * '<Data Name="param1">' Windows_Service_Name '</Data><Data Name="param2">' Windows_Service_State '</Data>'*
    | where Windows_Service_Name contains "IIS Admin Service" and Windows_Service_State == "stopped" | sort by TimeGenerated desc
    | project Computer, TimeGenerated, Windows_Service_Name, Windows_Service_State, RenderedDescription = strcat(Computer, ": ",RenderedDescription)

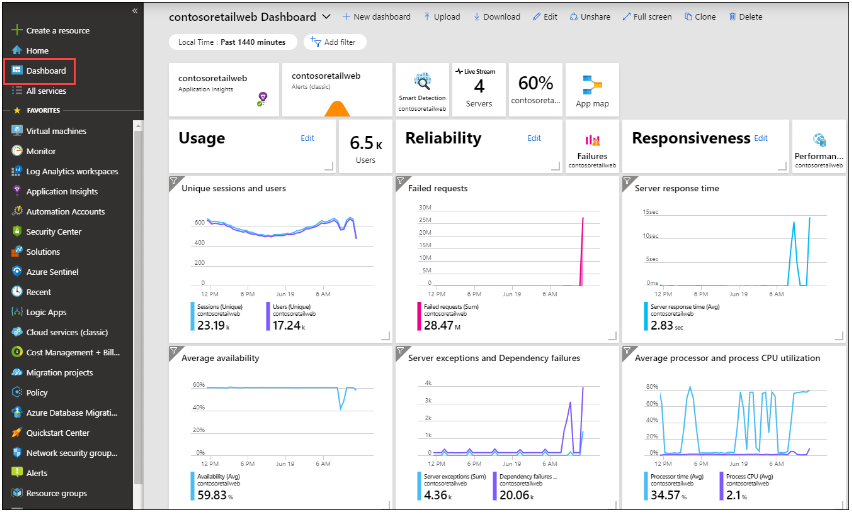

Dashboards:

Log Analytics dashboards can visualize all your saved log queries, giving you the ability to find, correlate, and share IT operational data in the organization. For a walkthrough of creating a log query that supports a shared dashboard for your IT operations support team, refer to the “Create and share dashboards of Log Analytics data” document.

Conclusion:

It has been close to one year, as of this posting, since we migrated from SCOM and adopted Azure Monitor as our unified alerting solution, and I can tell you that we have not missed SCOM. The move helped greatly in saving cost as we transform from traditional physical/Azure IaaS to a SaaS-based solution. We no longer need to manage SCOM infrastructure or worry about migrating and patching it to keep it compliant. Hopefully, this post gives you a basic idea of how alerting can be set up using Azure Monitor and helps kick-start your migration journey. The possibilities are endless.