Thriving on Azure SQL

Microsoft Advertising is a powerful advertising platform designed to get your ads in front of the right customers. In the fiscal year 2019, search advertising revenue generated $7.6 billion for Microsoft. We have been on a journey with Azure SQL since 2015 and, looking back, it has been incredible – our availability, performance, scalability, reliability, and efficiency have drastically improved. Azure SQL has been the bedrock Microsoft Advertising Platform depends on to delight our millions of customers and to run a multibillion-dollar business. In this blog, we are going to share how we re-architected our systems on Azure, how we leveraged numerous exciting Azure SQL features, and how Microsoft Advertising Platform thrives as Azure SQL continues to deliver innovation and excellence.

Azure SQL Migration

Fast rewind to 5 years ago. We were running the Microsoft Advertising systems on-premises. Hypergrowth of the business led to an explosion of data, rapid queries per second (QPS) growth and increasingly sophisticated business requirements. As a multi-tenant system, we must perform well no matter the size of the account. The on-premises database system, however, was not able to scale and meet the increasing demand. On top of that, SAN storage was notoriously difficult to upgrade, maintain and service. The operational costs kept piling up.

Data Layer Re-architecture

We knew that we needed to drastically transform our architecture. Our design idea was to spread the data for a given account across a group of database servers; by doing so, the data becomes more uniformly distributed and services can pull from multiple databases simultaneously. We chose Azure SQL to be the platform to bet on because of its capabilities, elasticity, and operability.

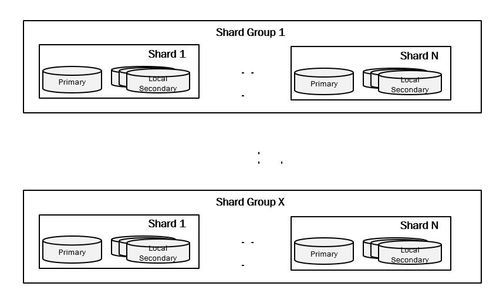

With Azure SQL, we can add/remove instances quickly and easily. It provides ACID transactions, something essential for our scenarios. We implemented horizontal partitioning to scale out the data, a technique known as sharding; for a given customer, data is spread across N number of shards evenly. In our implementation, those N number of shards construct what we call a shard group. As the amount of data in each shard group grows or shrinks, we split or merge amongst the existing shard groups. One of our design principles is that we shard every account regardless of the size, and there is no coupling between code and sharding logic – the service code assumes the database can be sharded in N ways.

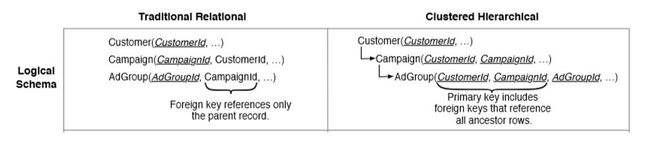

Inspired by the Google F1 design, we changed our database data organization to a hierarchy similar to the diagram below.

With this data representation, Ad Group level data belonging to a customer is likely to be clustered together in physical storage. In our use case, intentionally introducing controlled redundancy and duplicating key columns in child tables improved our performance, since data for a CustomerId can thus be retrieved from a set of contiguous pages on disk, without joins to other tables. Therefore, SQL requires loading fewer pages from disk, resulting in better performance. This technique has worked extremely well for us, allowing us to achieve our latency goals.

Service Layer Re-architecture

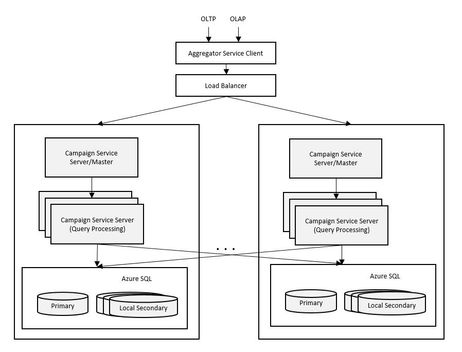

As the data layer was dramatically transformed, with the data now distributed, we had to significantly change our service layer as well.

A first principle was to design with scale out in mind. When a request comes in, whichever server receives the request becomes the master for that request. When the master estimates that increased parallelism will reduce query processing latency, it chooses distributed execution over centralized execution. In the distributed execution case, it fans out the request to its peers, waits for the responses, then merges the results. The final merged result is then sent back to the client.

We also decided that business logic should reside in the service, instead of the storage layer, as much as possible. This pattern works well for our use case of large-scale data processing. Throwing a bunch of cheap machines to perform the business logic and processing has proven very beneficial for our multi-tenant, large-data processing scenarios.

Finally, we established a new programming paradigm with our development team, as our services need to simultaneously connect to many databases. We built library code which takes care of SQL database connection reuse and life cycle management. We teach developers to avoid serial reads, use batching, stream data in parallel using IDataReader for forward only processing (as opposed to using DataSet and DataTable.) In our experience, using . Instead, we moved to constructing pure in-memory C# objects instead of relying on DataTable for in-memory joins and data processing. To maximize performance, we also avoid the use of any object relational mapping (ORM) libraries and instead write scenario-specific code (often using tasks running in parallel) to join and process all of the data. We use async throughout the stack to avoid unnecessary blocking of thread pool threads while waiting on I/O.

Leveraging Azure SQL New Capabilities

Azure SQL provides a great number of great features that we really appreciate. Here are a few notable ones:

- Columnstore helps us optimize storage for analytical and auditing scenarios where the data is not changed frequently. Using Columnstore instead of rowstore tables helps us save space by 30 to 40% and improves performance due to a batched mode of operation. Using Archive compression for archived data enables us to save an additional 30% space without significantly impacting performance.

- For tables where Columnstore index usage is not applicable, page compression helps us save up to 50% space without any noticeable performance decrease in our scenarios.

- Table partitioning, combined with regular index maintenance, helps us keep space consumption in check.

- AlwaysOn availability groups, Active geo-replication and Long-term Backup Retention (LTR) are great business continuity features.

- DSN Alias provides a translation layer that redirects the client application to different servers. This comes in very handy in case of Disaster Recovery scenarios.

- DMVs, XEvents, Query Store (QDS) provide excellent performance troubleshooting capabilities.

Running on Azure SQL

Microsoft Advertising systems running on Azure SQL are performing very well in supporting the ever-increasing engagement from our customers. Despite significantly increased load over the years, we continue to meet and exceed our performance SLA. Our customers are delighted. We did not stop there; we focus on driving system efficiency relentlessly. Given its elasticity, throwing money at Azure SQL can buy some performance, but we believe it is a lot more fun to squeeze performance out of our code rather than just paying big bucks. So how do we pay as little as possible but get the highest performance we can? Making our code better so we still run fast enough on cheap Azure SQL is the best technique. Our systems started with P11, then downgraded to P6, and now, after considerable improvements to our code, we run our OLTP systems on P4. In addition, we use the following approaches to take advantage of every ounce of power of Azure SQL.

Fully Leverage Read-only replicas

Many developers did not realize that once a single geo-replica is set up for a Premium/Business Critical database, we actually have four DB replicas that we can query – primary, local secondary of the primary, geo-secondary primary and local secondary of the geo-secondary primary.

One workload in Microsoft Advertising has two distinct query patterns: interactive UI calls, which are low QPS but have stringent latency requirements, and reporting calls, which are high QPS for large amount of data, but have less stringent latency requirements. To avoid having these two types of calls interfere with each other, we used to host extra replicas; that turned out to be quite expensive. The local secondary feature proved to be “lifesaving”. We devised a new scheme: calls from UI go to two replicas simultaneously: primary + geo-secondary primary, whichever call returns first is used. Report calls go to two replicas simultaneously: primary local secondary + geo-secondary local secondary, whichever call returns first is used. With that, we cut this part of our Azure SQL cost by half. One thing to note is occasionally replication lag between primary and secondary can be , so make sure your scenario can tolerate and handle the lag.

Regular Data Purge to Keep the House Clean

To keep Azure SQL cost down and to ensure excellent query performance, it is important for us to develop a robust data purge infrastructure that periodically cleans deleted entities and older audit data. Purging is CPU and I/O intensive because all of the tables need to be examined. With a lot of tuning, we have been able to optimize purging so that it has minimal impact to the system. We leverage Azure SQL elastic jobs for purging data.

Load Balancing

A Load Balancer is an infrastructure feature that helps balance data distribution to our database servers. The goal is to eliminate hot spots and spread out customer data, avoiding growth spikes. It moves customer data from a busy shard group to a more idle shard group in an online fashion, with minimal impact to customers. Minimal impact to customers is achieved by locking and switching partition only when customer activity is below a threshold. If the customer is still making a large amount of changes, the Load Balancer backs off and retries. It runs continuously and ensures our data is packed as efficiently as possible.

Conclusion

Azure SQL is the best destination for fully managed SQL in the cloud. We at Microsoft Advertising are super happy running and thriving on this fantastic platform. Currently we are testing out the latest cool technology – Hyperscale! It supports up to 100 TB of storage, nearly instantaneous DB backups, rapid scale out, and more. We have already seen significant performance improvements with the databases that moved to the Hyperscale service tier. We will move the remaining databases soon.

Join us on this journey and leverage Azure SQL to grow your business!