This post has been republished via RSS; it originally appeared at: AI Customer Engineering Team articles.

Introduction

Fast.AI is a deep learning library built on PyTorch, designed to let scientists from many different backgrounds use deep learning. Its authors want deep learning to be as easy to use as C# or Windows. The library needs very little code to create and train a deep learning model. For example, 3 simple steps are enough to define the dataset, define the model, and start training.

The first step defines a dataset where 20% of the samples are reserved for validation, default image transformations are applied, and normalization is performed using the "imagenet_stats" statistics. The second step defines a pretrained ResNet34 CNN model, and the third step launches the training run. To see how much work this saves, compare it with the code needed to do the same in plain PyTorch, for example in "Start Your CNN Journey with PyTorch in Python".
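The three-step snippet itself is not reproduced in this post. As a rough sketch, assuming the fastai v1 API, the steps described above might look like the following. The helper name `train_resnet34`, the image size, the accuracy metric, and the epoch count are our assumptions for illustration. The fastai imports sit inside the function so the file still loads where fastai is not installed.

```python
from pathlib import Path


def train_resnet34(data_dir: Path, epochs: int = 4, image_size: int = 224):
    """Three-step fastai v1 training sketch (hypothetical helper name)."""
    # Imported lazily: this needs the fastai v1 package and PyTorch.
    from fastai.metrics import accuracy
    from fastai.vision import (ImageDataBunch, cnn_learner, get_transforms,
                               imagenet_stats, models)

    # Step 1: dataset with 20% held out for validation, default image
    # transformations, and normalization with the ImageNet statistics.
    data = ImageDataBunch.from_folder(
        data_dir, valid_pct=0.2, ds_tfms=get_transforms(), size=image_size
    ).normalize(imagenet_stats)

    # Step 2: CNN learner on a pretrained ResNet34 backbone.
    learn = cnn_learner(data, models.resnet34, metrics=accuracy)

    # Step 3: launch the training run.
    learn.fit_one_cycle(epochs)
    return learn
```

Calling `train_resnet34(Path("path/to/images"))` on a folder with one sub-folder per class would run all three steps.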

Deep learning is normally compute-intensive and benefits greatly from distributed computing. Distributed computing spreads the work across multiple computers (nodes) and multiple GPUs and then combines their results, so it cuts training time by using more compute resources. However, it is costly to maintain heavy compute resources that are used only occasionally. Azure Machine Learning (ML) is a platform where compute resources are assigned dynamically: they are allocated only after you submit a new training run and are deleted after the training is done.

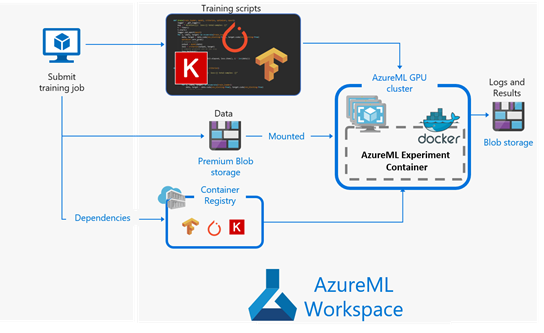

The figure shows how training works in the Azure ML workspace:

- Upload your data to premium blob storage and submit your training script.

- A compute resource is assigned, and the training starts.

- The training finishes, the results are saved, and the compute resource is deleted.

Fast.AI supports distributed training only with the NCCL backend, but Azure ML currently does not configure that backend automatically. We have found a workaround to complete the backend initialization on Azure ML. In this post, we show how to perform distributed training with Fast.AI on Azure ML. The code is in this git repo: Distributed-training-Image-segmentation-Azure-ML.

Prepare Azure Resource

First, you need an Azure subscription, an Azure Storage account, and an Azure ML workspace. In the code, you need to:

- Fill out the Azure subscription ID, resource group, and workspace name to connect to your workspace.

- Register your Azure storage container (where you have uploaded data) as the datastore to use as the data source.

- Connect to or create the compute target that will process data from the datastore.
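The three preparation steps can be sketched as follows, assuming the Azure ML Python SDK v1 (`azureml-core`). This is not the notebook's code: the helper name `connect_resources` and all of its parameters are placeholders, and `min_nodes=0` is our assumption so that the cluster scales down to zero nodes when idle.

```python
def connect_resources(subscription_id, resource_group, workspace_name,
                      datastore_name, container_name, account_name,
                      account_key, cluster_name, vm_size, max_nodes):
    """Connect to the workspace, register the datastore, get a compute target."""
    # Imported lazily: this needs the azureml-core package (SDK v1).
    from azureml.core import Datastore, Workspace
    from azureml.core.compute import AmlCompute, ComputeTarget
    from azureml.core.compute_target import ComputeTargetException

    # 1. Connect to the workspace.
    ws = Workspace(subscription_id=subscription_id,
                   resource_group=resource_group,
                   workspace_name=workspace_name)

    # 2. Register the blob container that holds the uploaded data.
    datastore = Datastore.register_azure_blob_container(
        workspace=ws, datastore_name=datastore_name,
        container_name=container_name, account_name=account_name,
        account_key=account_key)

    # 3. Reuse the compute target if it exists, otherwise create it.
    try:
        target = ComputeTarget(workspace=ws, name=cluster_name)
    except ComputeTargetException:
        config = AmlCompute.provisioning_configuration(
            vm_size=vm_size, min_nodes=0, max_nodes=max_nodes)
        target = ComputeTarget.create(ws, cluster_name, config)
        target.wait_for_completion(show_output=True)

    return ws, datastore, target
```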

All of this code is in the section "Prepare Azure Resource" of the notebook Ship-segmentation-Azure-ML.

Data Cleaning

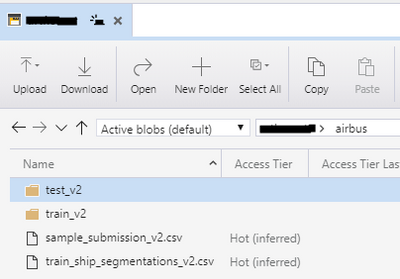

We used the data from a Kaggle challenge, airbus-ship-detection, whose goal is to detect and segment ships in satellite images. First, create a container in Azure Storage and upload the data to the folder "airbus", as shown below:

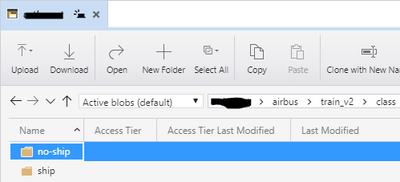

Then we use the script "clean-data.py" to generate the training data. It creates the folder "class", with 2 sub-folders, for classification:

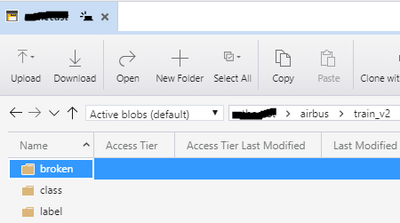

The script also creates the folder "label" for the label images and the folder "256-filter99" for images with ship size > 99, which are used for segmentation.

We kick off the data generation in Azure ML by defining a data reference from the datastore as the data source, then creating an experiment and submitting an estimator to it.

The estimator runs the script "clean-data.py" on 1 node without a GPU. The code can be found in the section "Data Clean" of the notebook Ship-segmentation-Azure-ML.
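A submission of this kind could look roughly like the sketch below, assuming the Azure ML SDK v1 `Estimator` class. The helper name, the experiment name "clean-data", and the script argument `--data_folder` are hypothetical. Only the script name, the "airbus" folder, the single node, and the absence of a GPU come from the text.

```python
def submit_data_cleaning(ws, datastore, compute_target, source_dir="."):
    """Submit clean-data.py to Azure ML on 1 node without a GPU."""
    # Imported lazily: this needs azureml-core and azureml-train (SDK v1).
    from azureml.core import Experiment
    from azureml.train.estimator import Estimator

    # Data reference to the uploaded folder, mounted on the compute node.
    data_ref = datastore.path("airbus").as_mount()

    estimator = Estimator(
        source_directory=source_dir,
        entry_script="clean-data.py",
        # "--data_folder" is an assumed argument name for the script.
        script_params={"--data_folder": data_ref},
        compute_target=compute_target,
        node_count=1,
        use_gpu=False,
    )

    # The experiment name is a placeholder.
    experiment = Experiment(workspace=ws, name="clean-data")
    return experiment.submit(estimator)
```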

Distributed Deep Learning

Following Iafoss' solution, we take 2 steps for better ship segmentation:

- Classify images as with or without ships.

- Segment the ships in the images that contain them.

The two tasks are handled by a CNN and a U-Net, respectively.

Classification

With Fast.AI, we use 3 steps for the dataset, the model, and training, similar to the 3 steps in the introduction. Then we set up distributed training. Fast.AI only supports the NCCL backend, and we found a workaround to complete the backend initialization on Azure ML. The initialization functions are defined in the script azureml_adapter.py and are called to perform the initialization.
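To give an idea of what such an initialization involves, here is a minimal sketch, not the contents of azureml_adapter.py. It assumes the processes are launched through Open MPI, which exposes `OMPI_COMM_WORLD_*` variables, and that `AZ_BATCH_MASTER_NODE` holds the first node's address as `ip:port`. It translates these into the variables PyTorch reads when the process group is initialized with `init_method="env://"`. The function names and the default port are our own choices.

```python
import os
from typing import Dict, Mapping


def nccl_env_from_mpi(env: Mapping[str, str],
                      master_port: int = 6105) -> Dict[str, str]:
    """Map MPI launcher variables to the ones torch.distributed expects."""
    # Assumption: AZ_BATCH_MASTER_NODE looks like "<ip>:<port>"; keep the IP.
    master_addr = env["AZ_BATCH_MASTER_NODE"].split(":")[0]
    return {
        "MASTER_ADDR": master_addr,
        # Keep an existing MASTER_PORT, otherwise use the assumed default.
        "MASTER_PORT": env.get("MASTER_PORT", str(master_port)),
        "RANK": env["OMPI_COMM_WORLD_RANK"],
        "WORLD_SIZE": env["OMPI_COMM_WORLD_SIZE"],
        "LOCAL_RANK": env["OMPI_COMM_WORLD_LOCAL_RANK"],
    }


def apply_nccl_env(master_port: int = 6105) -> None:
    """Write the translated variables into this process's environment."""
    os.environ.update(nccl_env_from_mpi(os.environ, master_port))
```

Keeping the translation in a pure function makes it easy to check with a fake environment before running on a cluster.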

The defined model can then be distributed to the local device.
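In fastai v1 this step usually follows the pattern sketched below. It assumes the `MASTER_ADDR`, `MASTER_PORT`, `RANK`, and `WORLD_SIZE` environment variables are already set and that `local_rank` is the index of the GPU this process should use. The helper name is hypothetical, and classification.py may differ in its details.

```python
def distribute_learner(learn, local_rank: int):
    """Wrap a fastai v1 Learner for NCCL-based distributed training."""
    # Imported lazily: this needs PyTorch with CUDA and fastai v1.
    import torch
    import torch.distributed as dist
    import fastai.distributed  # noqa: F401  (adds Learner.to_distributed)

    # Pin this process to its own GPU.
    torch.cuda.set_device(local_rank)

    # Join the process group; connection details come from the environment.
    dist.init_process_group(backend="nccl", init_method="env://")

    # Returns the learner set up for distributed data-parallel training.
    return learn.to_distributed(local_rank)
```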

The details can be found in the script classification.py.

As shown in the previous section, the classification data are stored in the sub-folder "class". We therefore define a data reference from that folder as the data source and also define the estimator.

We used 3 nodes and all 4 GPUs on each node (process_count_per_node = 4).
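With these settings the job runs 3 × 4 = 12 worker processes. The short sketch below lists them, assuming the launcher fills ranks node by node (the usual default, though the actual placement depends on the launcher). The function name is ours.

```python
from typing import List, Tuple


def process_layout(node_count: int,
                   process_count_per_node: int) -> List[Tuple[int, int, int]]:
    """Return (global_rank, node_index, local_rank) for every worker."""
    return [
        (node * process_count_per_node + local, node, local)
        for node in range(node_count)
        for local in range(process_count_per_node)
    ]


layout = process_layout(node_count=3, process_count_per_node=4)
print(len(layout))  # 12 processes in total
print(layout[5])    # (5, 1, 1): global rank 5 is GPU 1 on node 1
```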

Azure ML HyperDrive can set up multiple runs to tune the training parameters. First, define the choices for each parameter.

Here we have 2 choices each for the start and end learning rates. The HyperDrive config is then defined from our estimator above.
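Two choices for each of two parameters give four combinations if every pairing is tried. The sketch below enumerates them in plain Python. The learning-rate values and the parameter names are placeholders, and exhaustive (grid) sampling is our assumption, since the post does not state the sampling method.

```python
from itertools import product
from typing import Dict, List, Sequence


def expand_grid(choices: Dict[str, Sequence[float]]) -> List[Dict[str, float]]:
    """Return one parameter dict per combination of the given choices."""
    names = list(choices)
    return [
        dict(zip(names, combo))
        for combo in product(*(choices[name] for name in names))
    ]


# Placeholder values, not the ones used in the notebook.
search_space = {"start_lr": [1e-4, 1e-3], "end_lr": [1e-3, 1e-2]}
runs = expand_grid(search_space)
print(len(runs))  # 4 combinations
```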

We used dice/F1 as the metric and set the primary goal to maximize it. After submitting the HyperDrive config with an experiment and waiting for the runs to finish, we can retrieve the run with the best result.

The code can be found in the section "Ship/no ship classification" of the notebook Ship-segmentation-Azure-ML.
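A sketch of such a tuning run with the Azure ML SDK v1 HyperDrive classes follows. The helper name, the argument names `--start_lr` and `--end_lr`, the metric name "dice", and grid sampling are assumptions. The metric name has to match whatever the training script actually logs.

```python
def tune_learning_rates(experiment, estimator, start_lrs, end_lrs):
    """Run one training job per learning-rate pair and return the best run."""
    # Imported lazily: this needs the azureml-train package (SDK v1).
    from azureml.train.hyperdrive import (GridParameterSampling,
                                          HyperDriveConfig,
                                          PrimaryMetricGoal, choice)

    # Choices for each parameter (script argument names are assumed).
    sampling = GridParameterSampling({
        "--start_lr": choice(*start_lrs),
        "--end_lr": choice(*end_lrs),
    })

    # Config built from the estimator, maximizing the logged metric.
    config = HyperDriveConfig(
        estimator=estimator,
        hyperparameter_sampling=sampling,
        primary_metric_name="dice",
        primary_metric_goal=PrimaryMetricGoal.MAXIMIZE,
        max_total_runs=len(start_lrs) * len(end_lrs),
    )

    hyperdrive_run = experiment.submit(config)
    hyperdrive_run.wait_for_completion(show_output=True)

    # Child run with the highest value of the primary metric.
    best_run = hyperdrive_run.get_best_run_by_primary_metric()
    return best_run, best_run.get_metrics()
```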

Segmentation

We use U-Net for the segmentation training, and the code still has 3 steps.
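As a rough illustration, assuming the fastai v1 data block API, the three steps for segmentation might look like this. The helper name, the ResNet34 backbone, the class names, the image size, the batch size, and the 20% validation split are all our assumptions. The label function and the loss are passed in as arguments.

```python
def train_unet(image_dir, get_y_fn, loss_func=None,
               epochs=4, image_size=256, batch_size=16):
    """Three-step fastai v1 U-Net training sketch (hypothetical helper)."""
    # Imported lazily: this needs the fastai v1 package and PyTorch.
    from fastai.vision import (SegmentationItemList, get_transforms,
                               imagenet_stats, models, unet_learner)

    # Step 1: dataset of images and label masks found through get_y_fn.
    data = (SegmentationItemList.from_folder(image_dir)
            .split_by_rand_pct(0.2)
            .label_from_func(get_y_fn, classes=["background", "ship"])
            .transform(get_transforms(), size=image_size, tfm_y=True)
            .databunch(bs=batch_size)
            .normalize(imagenet_stats))

    # Step 2: U-Net on a pretrained encoder (the backbone is assumed).
    # With loss_func=None, fastai falls back to the dataset's default loss.
    learn = unet_learner(data, models.resnet34, loss_func=loss_func)

    # Step 3: launch the training run.
    learn.fit_one_cycle(epochs)
    return learn
```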

The "get_y_fn" is a lambda function that finds the label for an image from its file name. The loss method "MixedLoss" is a combination of focal loss and Dice loss, following Iafoss. We used the same workaround for distributed initialization. The details can be found in segmentation.py.

Then we defined the estimator and an experiment to submit it. The code can be found in the section "Segmentation" of the notebook Ship-segmentation-Azure-ML.
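To make the two pieces concrete, here is a plain-Python sketch that is not taken from segmentation.py. The label lookup assumes each label image shares the image's file stem and is stored as a PNG in the "label" folder. The loss assumes one common way of combining the two terms, `alpha * focal - log(dice)`, with `alpha`, `gamma`, and the smoothing constant chosen only for illustration.

```python
import math
from pathlib import Path
from typing import Sequence

EPS = 1e-7  # keeps log() away from zero


def get_y_fn(image_path: Path, label_dir: Path = Path("label")) -> Path:
    """Label path for an image (assumed naming: same stem, .png)."""
    return label_dir / f"{image_path.stem}.png"


def focal_loss(probs: Sequence[float], targets: Sequence[int],
               gamma: float = 2.0) -> float:
    """Mean binary focal loss over predicted foreground probabilities."""
    total = 0.0
    for p, y in zip(probs, targets):
        # Probability the model assigns to the true class of this pixel.
        p_t = p if y == 1 else 1.0 - p
        p_t = min(max(p_t, EPS), 1.0)
        total += -((1.0 - p_t) ** gamma) * math.log(p_t)
    return total / len(probs)


def dice_coefficient(probs: Sequence[float], targets: Sequence[int],
                     smooth: float = 1.0) -> float:
    """Soft Dice overlap between predictions and the binary mask."""
    intersection = sum(p * y for p, y in zip(probs, targets))
    return (2.0 * intersection + smooth) / (sum(probs) + sum(targets) + smooth)


def mixed_loss(probs: Sequence[float], targets: Sequence[int],
               alpha: float = 10.0, gamma: float = 2.0) -> float:
    """Assumed combination: weighted focal term minus the log of Dice."""
    return (alpha * focal_loss(probs, targets, gamma)
            - math.log(dice_coefficient(probs, targets)))
```

A perfect prediction gives a loss of zero, and the loss grows as the predicted mask drifts from the label. The focal term down-weights pixels that are already classified well, while the Dice term rewards overlap with the ship pixels.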

Conclusions

In this post, we showed a simple example of running distributed deep learning with the Fast.AI library on Azure ML, using an image segmentation task and a distributed initialization workaround. Since Fast.AI also supports NLP (transformer) models, you can distribute your NLP training with Fast.AI on Azure ML by using the same workaround.