This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

TinyML - aka the ability to run machine learning (ML) on tiny embedded devices - is now a thing. Let’s dig into how you can run TinyML models on a real-world microcontroller (MCU) architecture, in this case an Azure Sphere MCU. Microsoft’s Azure Sphere MCUs are designed to be the most reliable, lowest total cost solution to operate highly secure connected products. This comes in handy when seeking to protect the intelligence and intellectual property that we build into our products using ML. Running TinyML models on Azure Sphere MCUs unlocks a whole new class of insights for highly secure, deeply-embedded, and natively connected products.

The truth is, TinyML is already ubiquitous. Just say the 'wake words' for your favorite digital assistant - available in even our smallest electronics. The ‘wake-word recognition’ is a TinyML model running on the machine. As consumers, we have become used to the lag when the rest of our voice command is sent to the cloud for processing. But consumers and, increasingly, commercial applications are driving demand for low-cost electronics that are capable of running machine learning models directly on their device.

Contributing to the demand are challenges with data latency, network throughput and reliability, energy consumption, and model accuracy. Privacy is also emerging as a driver, particularly around biometric data. Many use cases previously considered impossible to run directly on the smallest endpoints are becoming commonplace:

- Sensor fusion – blending and optimizing sensor inputs using ML to drive insights earlier and more efficiently

- Anomaly detection – over time, most systems, whether living or mechanical, exhibit a pattern during “normal” operation. Variance from “normal” can often be profiled as a unique mathematical signature. Identifying normal and classifying variance conditions is the basis of anomaly detection and can be applied across many markets.

- Predictive maintenance – single-failure modes as well as large mechanical models can be monitored for variance from normal operation

- Signature analysis – digital signatures, either in network, communication, or even emission patterns can be monitored for unexpected behavior

- Person and object detection on streaming video – MCUs now have the capacity to run detection models on streaming video. The demo in this blog shows detection of both people and objects at roughly one event per second on the MediaTek MT3620.

Machine learning at the edge has historically been limited to either high-performance microprocessors or highly custom, optimized implementations. The term “TinyML” refers to an emerging set of general-purpose machine learning tools and models designed to execute on resource-constrained, low-cost microcontroller (MCU) architectures.

This has been made possible by major progress in ML science on many fronts. Three major developments have contributed to the broad emergence of TinyML:

- reductions in the resources required to train and execute ML models on MCUs

- improvements in ease of developing and deploying ML models

- convergence with IoT technologies – secure, connected MCUs and over the air (OTA) updates

The implications of this technology convergence are huge. On a yearly basis, microcontroller shipments outpace microprocessor shipments by almost an order of magnitude, and microcontrollers sell for more than an order of magnitude less. Pair those dynamics with easy-to-use tools and a secure deployment vehicle and you have the ingredients for a tipping point in the embedded systems market.

Reduction in Resources

Machine learning frameworks are useful because they create a common toolset for model development. They turn a bespoke development – which may require an in-depth knowledge of algorithm execution, hardware architecture, and mathematical parallelization for efficient execution – into an exercise of leveraging pre-built, optimized components.

Historically, machine learning frameworks required resource-rich processor environments (memory, OS, language support, instruction sets). TinyML solutions leverage years of mathematical research and optimization to deliver similar capabilities in MCU environments where many of those resources are not available. In this blog post we highlight TinyML ports to the 32-bit ARM Cortex-M instruction set, as there are two Cortex-M4 cores inside the MT3620 Azure Sphere MCU.
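To give a feel for what "reduction in resources" can mean in practice, here is a minimal sketch of one widely used model-shrinking technique: 8-bit quantization of weights. This is an illustration only. The post does not say which optimizations each partner runtime applies, and the function names and example weights below are hypothetical.

```python
# Illustrative sketch only: symmetric 8-bit quantization of a list of weights.
# This is NOT taken from any partner runtime described in this post.

def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] plus one float scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the 8-bit representation."""
    return [q * scale for q in quantized]

weights = [0.6, -1.0, 0.25, 0.0]   # hypothetical example weights
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

float32_bytes = 4 * len(weights)   # 4 bytes per 32-bit float weight
int8_bytes = 1 * len(weights)      # 1 byte per 8-bit integer weight
```

Storing each weight in one byte instead of four cuts weight storage to a quarter, at the cost of a small rounding error per weight (at most half of `scale` here). On a memory-constrained MCU, that trade is often what makes a model fit at all.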

The resulting ecosystem of tools often adds a diminutive descriptor to other well-known frameworks in its marketing (e.g. “TensorFlow Lite” or “micro TVM”). Some companies also create their own proprietary runtimes as differentiators for size, efficiency, and accuracy.

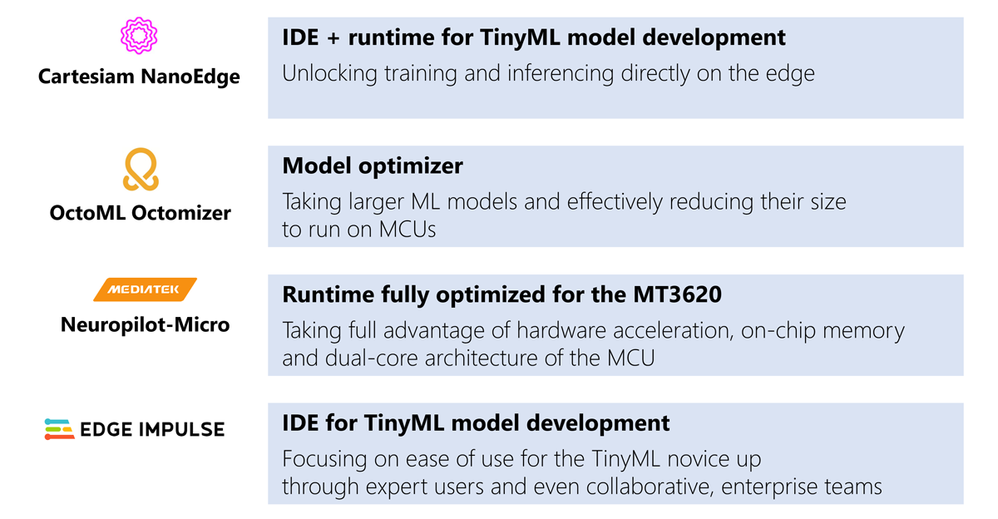

We will look into four ecosystem partner solutions that have been ported to run on the MT3620.

These partner solutions include examples of companies optimizing both “training” and “inferencing” to run on MCUs.

The first product highlight focuses on miniaturizing ML model training, as that typically comes first during ML model development. You train the model, almost always in the cloud or on your laptop, then you push it to a processor that supports the format of the model in question.

Cartesiam NanoEdge is a TinyML solution that includes an integrated development environment, or IDE, for model development. They specialize in anomaly detection models, which are a common approach to predictive maintenance. Their runtime also includes a very cool feature: customers can build a template that is pushed to the MCU, where it trains a unique model for every device directly on the MCU. This ability to train a model directly on the MCU is very powerful for anomaly detection and predictive maintenance, since the same model will not necessarily work for identical equipment that has been maintained differently or deployed in different geographies. Their “inferencing” runtime, of course, has also been optimized to run on the Cortex-M core.

>> For information on Cartesiam tools for Azure Sphere, go here: https://cartesiam.ai/AzureSphere
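The idea of each device learning its own "normal" can be sketched in a few lines. The example below is not Cartesiam's algorithm, which this post does not describe. It is a generic stand-in that uses a running mean and variance (Welford's method) and flags readings that fall too many standard deviations away from what that particular device has seen. The class name, threshold, and sensor readings are all hypothetical.

```python
import math

class OnlineAnomalyDetector:
    """Learns a per-device baseline incrementally, using constant memory."""

    def __init__(self, threshold=3.0, min_samples=10):
        self.threshold = threshold      # allowed distance in standard deviations
        self.min_samples = min_samples  # readings required before flagging
        self.count = 0
        self.mean = 0.0
        self.m2 = 0.0                   # running sum of squared deviations

    def learn(self, x):
        """Update the running mean and variance with one reading (Welford)."""
        self.count += 1
        delta = x - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (x - self.mean)

    def is_anomaly(self, x):
        """Return True if x is far from this device's learned baseline."""
        if self.count < self.min_samples:
            return False
        std = math.sqrt(self.m2 / (self.count - 1))
        if std == 0.0:
            return x != self.mean
        return abs(x - self.mean) / std > self.threshold

# Two units of the same equipment, deployed in different conditions
# (hypothetical readings): each one trains its own model on-device.
detector_a = OnlineAnomalyDetector()
detector_b = OnlineAnomalyDetector()
for i in range(50):
    detector_a.learn(20.0 + 0.1 * (i % 5))
    detector_b.learn(50.0 + 0.1 * (i % 5))
```

A reading of 50.2 is flagged by `detector_a` but is perfectly normal for `detector_b`, which is the reason a single shared model may not suit every deployed device.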

The second category of optimization is the inferencing runtimes, and we have two examples that take different, but also very interesting approaches.

OctoML’s Octomizer product converts existing ML models (e.g., TensorFlow, PyTorch, ONNX) to a TinyML model. They push that model to a Micro-TVM runtime that has been miniaturized to run on a Cortex-M MCU. This is very useful if you have known-good models running on larger processors that you would like to run on your MCU, rather than having to develop a new model from scratch.

>> For a demo of OctoML running on Azure Sphere – go here: https://medium.com/octoml/bringing-tiny-ml-to-the-cloud-connected-iot-with-tvm-2ab800b065b7?source=collection_home---6------1-----------------------

MediaTek NeuroPilot Micro is another exciting solution because it is created by the silicon manufacturer of the target MT3620 MCU. NeuroPilot Micro enables TensorFlow Lite models to be executed on the Cortex-M cores of Azure Sphere. But what is truly interesting is that, as the silicon manufacturer of the MT3620, MediaTek has unique insight into how best to leverage the silicon resources for ML execution – particularly memory, hardware acceleration, and the multi-core architecture of the MT3620.

A demonstration of MediaTek NeuroPilot Micro running person- and object-detection TinyML models, one model on each of the available Cortex-M cores, is highlighted in the Channel 9 episode: TinyML for IoT.

>> For information on MediaTek NeuroPilot Micro for Azure Sphere, go here: https://neuropilot.mediatek.com/resources/public/latest/en/docs/npu_introduction

Improvements in Ease of Use

As with any technology, the availability and fitness of a framework, SDK, or toolset do not guarantee broad-market adoption. Tools must become simple enough that users can readily apply them to create their own TinyML models.

Without visualization, optimization and compiler tools, managing ML model development and deployment can be quite challenging. Developers must build, train, deploy and then improve ML models, often requiring rare data-science expertise and experience.

Some ecosystem partners are differentiating themselves with graphical ML development tools to make model development intuitive and easy to use.

Edge Impulse is a development platform whose capabilities serve everyone from novice ML users to expert-level developers, and from hobbyists to enterprise users. This means you can use their SaaS development tool if you want to quickly input some data, play with visualizations, and generate a model on your bench, at home, for free, with relatively little knowledge of the math. But if you are an enterprise team or expert developer, their licensed development environment has collaboration tools built in so multiple contributors can work on the same project, and even some hooks for test / CI/CD integration and model deployment.

>> For information on Edge Impulse for Azure Sphere, including an example application from Microsoft’s very own Benjamin Cabe, go here: https://github.com/kartben/EdgeImpulse_RTApp

Convergence with IoT technology - Secure, Connected MCUs and OTA updates

Solutions using ML models are likely using the models to drive a differentiated solution. But how much value can they really create if they live in an echo chamber? How can an application learn if you can’t collect its insights? To maximize the business value of an intelligent endpoint, the application needs to be connected to a cloud or on-prem application. And if it is going to be connected, then it should be securely connected and include an over-the-air (OTA) update channel for firmware and ML model updates.

This is where Azure Sphere pairs nicely with TinyML, as it brings the secure silicon, secure IoT connection and secure OTA channel to the equation. With the introduction of Azure Sphere as an available target, ML development on IoT endpoints has made big strides.

Azure Sphere is a microcontroller platform designed to implement the 7 properties of a highly secure device. The Azure Sphere platform standardizes the silicon IP, operating system, and cloud services such that TinyML models will run within a secure environment, will be natively connected to an IoT cloud service, and will benefit from a built-in channel for secure over-the-air (OTA) updates. Together, TinyML and Azure Sphere create a powerful new baseline of capability for embedded developments.

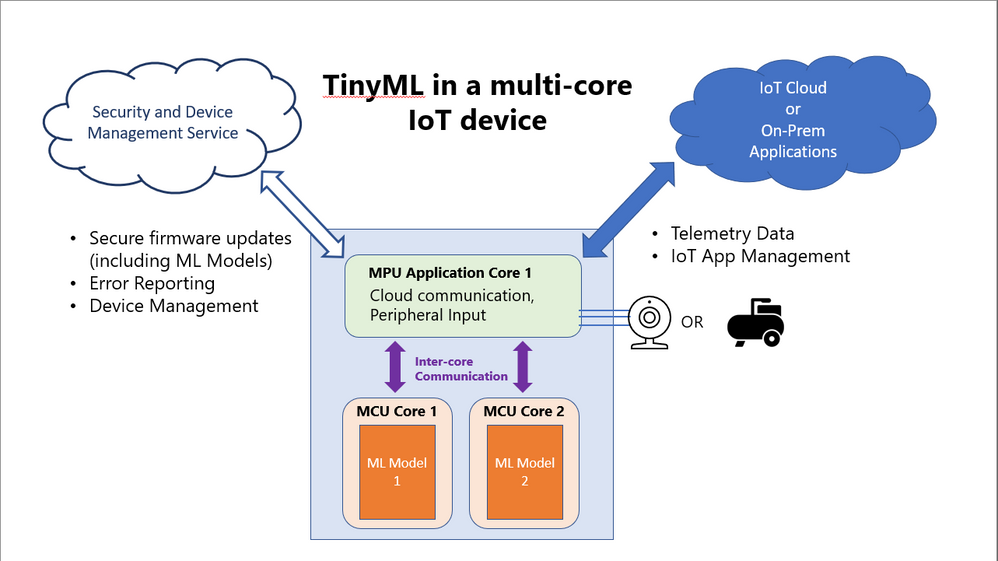

The following diagram is a reference framework for a general multi-core microcontroller running TinyML applications.

Note a few IoT features built into the reference framework:

- An independent security and device management cloud service and secure OTA channel – ML models and device firmware must have a secure channel and secure silicon to be remotely updated. Without a secure channel or secure silicon, it would be best to disable remote updates to avoid a serious attack surface on the device.

- An application core dedicated to secure cloud communication and peripheral input – one core dedicated to application execution and cloud communication.

- A separate connection to IoT cloud or on-prem application – The application core should also maintain the connection to the cloud for telemetry.

- n+1 application cores dedicated to TinyML models – Instead of developing a single, mega-model that would scale in complexity and size, split ML tasks into individual models that can be more easily maintained and optimized

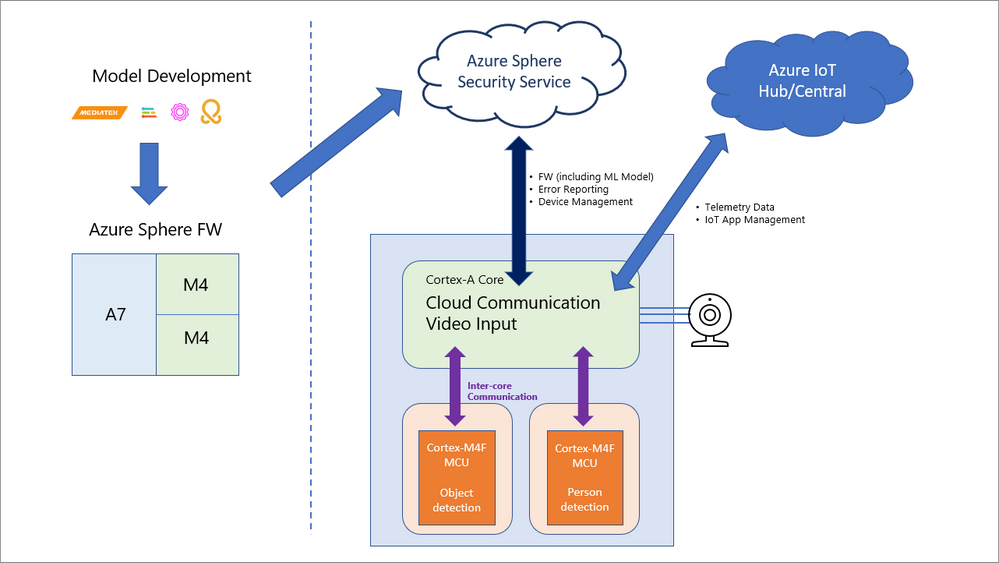

The diagram below depicts how the MediaTek NeuroPilot Micro demo uses the multi-core MT3620 architecture to implement person and object detection on streaming video input.

The MT3620 has a Cortex-A7 core responsible for video data collection and secure network connectivity, while each of the two Cortex-M4 cores runs MediaTek’s NeuroPilot Micro runtime with its own independent model, one for person detection and one for object detection. This results in the ability to identify both people and objects at a rate of roughly one event per second. The problem would be significantly more complex if one were developing and training a single model to identify both people and objects, and neither might be possible if it weren’t for NeuroPilot Micro’s runtime optimization of hardware resources.
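The division of labor described above can be sketched roughly as follows. This is a desktop-Python analogy, not the demo's actual code. Two worker threads stand in for the two Cortex-M4 cores, simple threshold functions stand in for the real detection models, and the frame fields `person_score` and `object_score` are hypothetical placeholders for real video input.

```python
from concurrent.futures import ThreadPoolExecutor

def detect_person(frame):
    """Stand-in for the person-detection model on the first Cortex-M4 core."""
    return frame["person_score"] > 0.5

def detect_object(frame):
    """Stand-in for the object-detection model on the second Cortex-M4 core."""
    return frame["object_score"] > 0.5

def process_frame(frame, pool):
    """Application-core role: hand the frame to both models, merge results."""
    person_future = pool.submit(detect_person, frame)
    object_future = pool.submit(detect_object, frame)
    return {"person": person_future.result(), "object": object_future.result()}

# Hypothetical pre-scored frames in place of streaming video.
frames = [
    {"person_score": 0.9, "object_score": 0.1},
    {"person_score": 0.2, "object_score": 0.8},
]

# Two workers, mirroring one model per Cortex-M4 core.
with ThreadPoolExecutor(max_workers=2) as pool:
    events = [process_frame(frame, pool) for frame in frames]
```

Because each model is a separate function with its own inputs and outputs, either one can be retrained or replaced without touching the other, which is the maintainability argument for splitting tasks across models rather than building one larger model.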

A New Baseline for Highly Intelligent, Highly Secure IoT Solutions

If you are an IoT developer concerned that developing an ML solution is an overwhelming task, it is easy to understand why. Before these types of tools were available, you needed to be proficient in data science, embedded systems design, and device and even cloud security. But now, with Azure Sphere providing a turnkey security solution and a comprehensive TinyML ecosystem, you can start developing highly secure, highly intelligent IoT solutions on an MCU with confidence!

Using TinyML tools on Azure Sphere creates a powerful starting point for natively connected, highly intelligent and highly secure IoT solutions.

If you agree and want to get started, make sure to order your Azure Sphere development kit today (link here), download your TinyML toolset using the links above and please tell us in the comments what you are planning to build!

Until next time…

~Miguel and Lycus