This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

During the past few weeks, Microsoft has experienced some unfortunate outages in our cloud services. These outages led a number of organizations I support to reach out and ask, “How can I better proactively monitor the status of Office 365?” This gave me an idea... but before we get to that, let’s discuss where you can find service status information for Office 365 and Azure.

Office 365 Service Status

The primary location to find the status of Office 365 services is the Service Health Dashboard inside the Admin Portal (https://portal.office.com/Adminportal/Home#/servicehealth).

In addition to this portal, if you are a Twitter user, you can follow Microsoft 365 Status (@MSFT365Status) to get notifications of incidents within Microsoft 365:

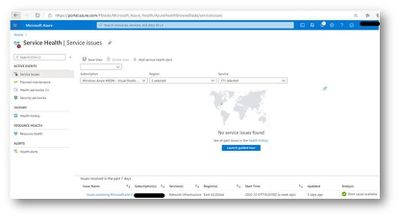

If you are interested in the status of Microsoft Azure, you can use the Service Health blade (https://aka.ms/azureservicehealth).

These are all very effective methods of tracking service status, but what if I am using Azure Sentinel as my SIEM and I want to track the Office 365 Service Status there? Well, that was the question that got me started on this article. I find it easiest to learn a new technology when I have a problem to solve or an actual goal to achieve. So I decided this was a good use case for learning how to get custom data (in this case, REST API data) into Azure Sentinel, use that data to alert on service degradation, and then create a new workbook to visualize it. A pretty lofty goal for a guy with almost zero coding experience. Let’s see how it worked out...

Step One: Getting Office 365 Service Status via API

As with just about every other component of the Microsoft Cloud, Office 365 Service Status can be accessed via the Office 365 Management API (https://docs.microsoft.com/en-us/office/office-365-management-api/office-365-service-communications-api-reference). I decided the most effective way to pull this data and send it to Azure Sentinel was to use an Azure Logic App. If you are not familiar with it, Azure Logic Apps is a low-code/no-code cloud service that helps you schedule, automate, and orchestrate tasks, business processes, and workflows when you need to integrate apps, data, systems, and services across enterprises or organizations. Azure Logic Apps is a sibling of Microsoft Power Automate, which is part of Office 365, so learning one of these services translates to the other. This was very helpful because Lee Ford, a Microsoft MVP in the UK, wrote a blog post in 2019 on accessing the Service Status via Power Automate (which was called Flow at the time): https://www.lee-ford.co.uk/get-latest-office-365-service-status-with-flow-or-powershell/ . I built on Lee’s idea to create my Logic App:

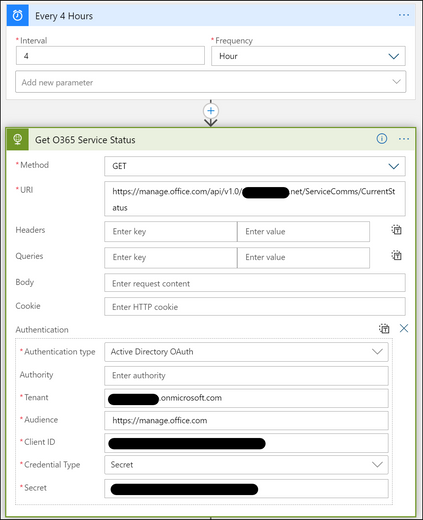

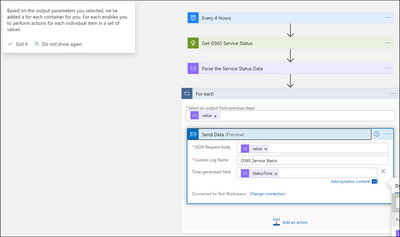

I started by creating a new Logic App that runs on a schedule and connects to the Office 365 Management API to get the Service Status via an “HTTP” action. I chose to run it every 4 hours; you can decide how often you want to pull the data for your use case.
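If you would like to see what the HTTP action is doing outside of the designer, here is a minimal Python sketch. It assumes the app-only (client credentials) flow against the Azure AD v1 token endpoint and the `CurrentStatus` operation of the Service Communications API from the reference linked above; the tenant, client ID, and secret are placeholders. It only builds the two requests; passing them to `urllib.request.urlopen` would actually send them.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Assumed endpoints: Azure AD v1 token endpoint and the Service Communications
# API "CurrentStatus" operation. Check both against the current documentation.
TOKEN_URL = "https://login.microsoftonline.com/{tenant}/oauth2/token"
STATUS_URL = "https://manage.office.com/api/v1.0/{tenant}/ServiceComms/CurrentStatus"


def build_token_request(tenant: str, client_id: str, client_secret: str) -> Request:
    """Build (but do not send) a client-credentials token request for manage.office.com."""
    body = urlencode({
        "grant_type": "client_credentials",
        "resource": "https://manage.office.com",
        "client_id": client_id,
        "client_secret": client_secret,  # placeholder; keep real secrets out of code
    }).encode("utf-8")
    return Request(
        TOKEN_URL.format(tenant=tenant),
        data=body,
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


def build_status_request(tenant: str, access_token: str) -> Request:
    """Build (but do not send) the GET that returns the current status per workload."""
    return Request(
        STATUS_URL.format(tenant=tenant),
        headers={"Authorization": f"Bearer {access_token}"},
        method="GET",
    )
```

In the Logic App, the HTTP action handles the token step for you when you pick Active Directory OAuth as the authentication type, which is why the designer only shows a single action.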

The first thing that probably stands out to you is that the “Security Guy” hard-coded the authentication secrets into the Logic App. I only did this for ease of development. If I were building this application for a production environment, I would use Azure Key Vault to store this information securely (https://docs.microsoft.com/en-us/azure/logic-apps/logic-apps-azure-resource-manager-templates-overview#best-practices---workflow-definition-parameters).

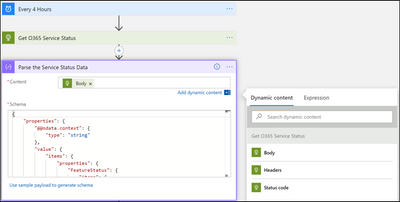

Next, I used a “Parse JSON” action to parse the information returned by the HTTP GET. I used the schema from Lee Ford’s blog post as my sample payload.
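The Parse JSON step essentially reads the “value” array out of the response. Here is a small Python equivalent. The sample payload is abbreviated and illustrative: the field names are assumed from the Service Communications API, not captured from a real tenant.

```python
import json
from typing import Any, Dict, List

# Abbreviated, illustrative response body. Field names are assumed to match
# the Service Communications API "CurrentStatus" response.
SAMPLE_RESPONSE = """
{
  "value": [
    {"Id": "Exchange", "WorkloadDisplayName": "Exchange Online",
     "Status": "ServiceDegradation"},
    {"Id": "SharePoint", "WorkloadDisplayName": "SharePoint Online",
     "Status": "ServiceOperational"}
  ]
}
"""


def parse_status(body: str) -> List[Dict[str, Any]]:
    """Return the "value" array, i.e. the collection the For Each loop iterates over."""
    payload = json.loads(body)
    value = payload.get("value")
    if not isinstance(value, list):
        raise ValueError('response does not contain a "value" array')
    return value
```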

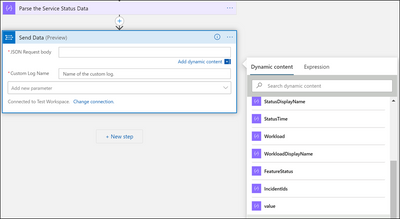

Now the last step is a little tricky. We need to take the returned JSON payload and send it to Azure Sentinel. This payload is an array, so we must iterate through it. Luckily, Logic Apps is built for people with minimal coding experience and guides you through the process. Since we want to send this data to Azure Sentinel, which is built on Azure Log Analytics, we choose the “Send Data to Log Analytics” action. When I click in the box for “JSON Request body”, I am given a pick list of returned information to choose from. However, the item we need is not shown, so you need to click the “see more” option in the pick list. This exposes the “value” item, which is what we need.

When we finish filling in the required parameters, Logic Apps will automatically recognize this is an array and create a For Each container to iterate through the values…pretty cool!

We are not finished yet. We don’t actually want “value” in the JSON Request body field; we want the “Current Item” of the loop. So, delete “value” in the Send Data action, go back to the bottom of your pick list, and choose Current Item.

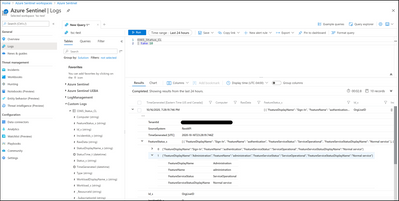
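My understanding is that the “Send Data” action posts to the Log Analytics HTTP Data Collector API behind the scenes; treat that as an assumption. The Python sketch below mimics the For Each loop: one signed POST per Current Item, using the SharedKey signature (HMAC-SHA256 over a canonical string, keyed with the base64-decoded workspace key). The workspace ID, shared key, log type, and the helper names are placeholders, and the requests are only built, not sent.

```python
import base64
import hashlib
import hmac
import json
from email.utils import formatdate
from typing import Any, Dict, List, Optional, Tuple

API_VERSION = "2016-04-01"


def build_signature(workspace_id: str, shared_key: str, date: str, content_length: int) -> str:
    """SharedKey header value: HMAC-SHA256 over the canonical request string."""
    string_to_hash = f"POST\n{content_length}\napplication/json\nx-ms-date:{date}\n/api/logs"
    digest = hmac.new(base64.b64decode(shared_key), string_to_hash.encode("utf-8"),
                      hashlib.sha256).digest()
    return f"SharedKey {workspace_id}:{base64.b64encode(digest).decode('ascii')}"


def build_post(workspace_id: str, shared_key: str, log_type: str, item: Dict[str, Any],
               date: Optional[str] = None) -> Tuple[str, Dict[str, str], bytes]:
    """Return (url, headers, body) for posting one record; nothing is sent."""
    body = json.dumps(item).encode("utf-8")
    date = date or formatdate(usegmt=True)  # RFC 1123 date in GMT
    headers = {
        "Content-Type": "application/json",
        "Log-Type": log_type,  # records land in the <log_type>_CL table
        "x-ms-date": date,
        "Authorization": build_signature(workspace_id, shared_key, date, len(body)),
    }
    url = f"https://{workspace_id}.ods.opinsights.azure.com/api/logs?api-version={API_VERSION}"
    return url, headers, body


def requests_for_each(workspace_id: str, shared_key: str, log_type: str,
                      items: List[Dict[str, Any]]) -> List[Tuple[str, Dict[str, str], bytes]]:
    """Mirror of the For Each container: one request per Current Item."""
    return [build_post(workspace_id, shared_key, log_type, item) for item in items]
```

The Data Collector API also accepts a JSON array of records in a single POST, so the loop is not strictly required; it is simply what the Logic App designer generates for you.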

And that’s it! You have now ingested the Office 365 Service Status into Azure Sentinel. One thing I forgot to point out: Azure Log Analytics automatically creates the custom log the first time the Logic App runs. It adds a table called “yourname_CL”, where “yourname” is the Custom Log Name you entered in the Send Data action.
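When you start writing queries, note that the columns in the new table usually carry a type suffix, so a property such as `Status` shows up as `Status_s`. The Python sketch below approximates that naming convention as I understand it from the Data Collector API documentation (string `_s`, boolean `_b`, number `_d`, ISO 8601 date/time `_t`, GUID `_g`). `table_name` and `column_name` are hypothetical helpers, and treating every other value type as a string is a simplification of this sketch.

```python
import re
from typing import Any

# Simplified type detection; the real service may infer types differently.
ISO_8601 = re.compile(r"^\d{4}-\d{2}-\d{2}T\d{2}:\d{2}:\d{2}")
GUID = re.compile(r"^[0-9a-fA-F]{8}-([0-9a-fA-F]{4}-){3}[0-9a-fA-F]{12}$")


def table_name(custom_log_name: str) -> str:
    """Custom logs are stored in a table with a _CL suffix."""
    return f"{custom_log_name}_CL"


def column_name(prop: str, value: Any) -> str:
    """Approximate the column name Log Analytics creates for a JSON property."""
    if isinstance(value, bool):  # check bool first: bool is a subclass of int
        suffix = "_b"
    elif isinstance(value, (int, float)):
        suffix = "_d"
    elif isinstance(value, str) and GUID.match(value):
        suffix = "_g"
    elif isinstance(value, str) and ISO_8601.match(value):
        suffix = "_t"
    else:
        suffix = "_s"  # plain strings, and (in this sketch) anything else
    return prop + suffix
```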

Step Two: Making use of the data

Now that we have ingested the service status data into Azure Sentinel, let’s do something with it.

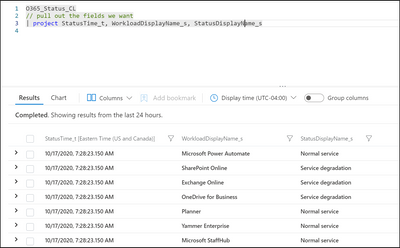

First let’s write a simple KQL (Kusto Query Language) query to pull out the basic data we need:

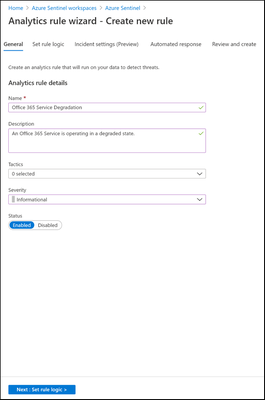

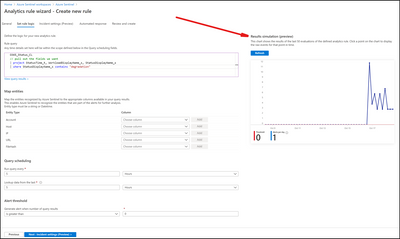
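One common way to express “which services are degraded right now” in KQL is to take the latest row per workload and keep anything that is not operational, for example `summarize arg_max(TimeGenerated, *) by WorkloadDisplayName_s | where Status_s != "ServiceOperational"`. That is a sketch, not necessarily the query in the screenshot, and the column names are assumed. The same logic in Python, with illustrative rows:

```python
from datetime import datetime
from typing import Any, Dict, List

SAMPLE_ROWS = [  # illustrative rows, not real service data
    {"TimeGenerated": datetime(2020, 10, 1, 8), "WorkloadDisplayName_s": "Exchange Online",
     "Status_s": "ServiceDegradation"},
    {"TimeGenerated": datetime(2020, 10, 1, 12), "WorkloadDisplayName_s": "Exchange Online",
     "Status_s": "ServiceOperational"},
    {"TimeGenerated": datetime(2020, 10, 1, 12), "WorkloadDisplayName_s": "Microsoft Teams",
     "Status_s": "ServiceDegradation"},
]


def degraded_services(rows: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Keep the most recent row per workload, then drop the operational ones."""
    latest: Dict[str, Dict[str, Any]] = {}
    for row in rows:
        workload = row["WorkloadDisplayName_s"]
        current = latest.get(workload)
        if current is None or row["TimeGenerated"] > current["TimeGenerated"]:
            latest[workload] = row
    not_operational = [r for r in latest.values() if r["Status_s"] != "ServiceOperational"]
    return sorted(not_operational, key=lambda r: r["WorkloadDisplayName_s"])
```

Taking the latest row first matters: Exchange Online was degraded at 08:00 but operational again by 12:00, so only Microsoft Teams is reported.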

Now let’s create a scheduled query analytics rule that will create an incident when a service is degraded:

One of the cool new features in Azure Sentinel, which you will notice above, is a preview of the results this query will produce. Based on the settings I have chosen, this will create 1 alert per day. You don’t want to create an alert flood, but you do want to be notified appropriately, so adjust the Query Scheduling to what makes sense for your organization.

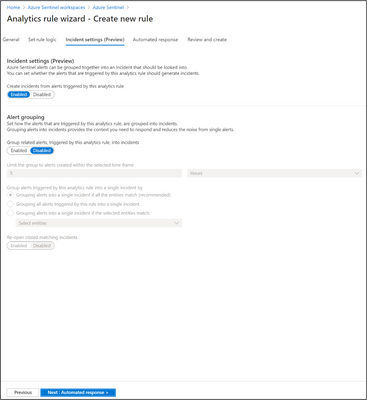

I’m just going to use the defaults for Incident Settings.

You can even use an Azure Logic App playbook to take some automated action based on the incident.

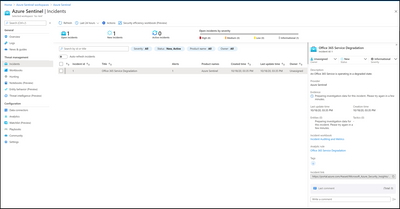

Done! Now we will see an incident generated if there is a service degradation in Office 365. See below:

For a production environment, I would probably want to be a little more detailed in my incident generation, getting down to individual services, but hopefully this has shown you the “Art of the Possible” and you can take it further.

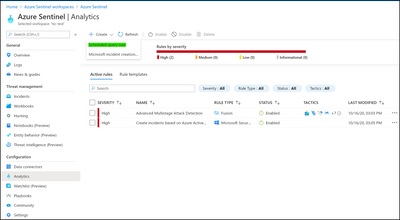

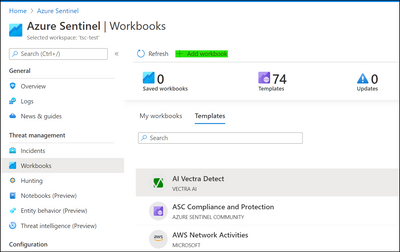

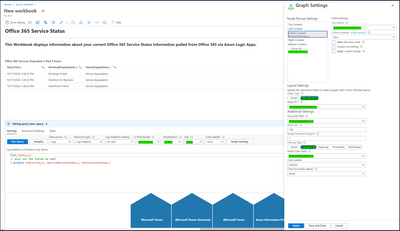
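As a starting point for per-service incidents, here is a Python sketch that groups non-operational rows into one incident per workload, similar in spirit to having the analytics rule raise a separate alert for each affected service. The function name, the incident title format, and the column names are all hypothetical.

```python
from collections import defaultdict
from typing import Any, Dict, List


def incidents_per_service(rows: List[Dict[str, Any]]) -> Dict[str, Dict[str, Any]]:
    """Group non-operational rows into one (hypothetical) incident per workload."""
    grouped: Dict[str, List[Dict[str, Any]]] = defaultdict(list)
    for row in rows:
        if row["Status_s"] != "ServiceOperational":
            grouped[row["WorkloadDisplayName_s"]].append(row)
    incidents: Dict[str, Dict[str, Any]] = {}
    for service in sorted(grouped):
        service_rows = grouped[service]
        incidents[service] = {
            "title": f"Office 365 service not operational: {service}",
            "alert_count": len(service_rows),
            # distinct statuses seen for this service, in first-seen order
            "statuses": list(dict.fromkeys(r["Status_s"] for r in service_rows)),
        }
    return incidents
```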

Step Three: Bonus Step! Let’s create a workbook in Azure Sentinel to display some of the information we have gathered.

Workbooks (https://docs.microsoft.com/en-us/azure/sentinel/tutorial-monitor-your-data) provide a way to visualize your data in a custom dashboard experience.

Let’s see what we can come up with. First, we need to create a new workbook:

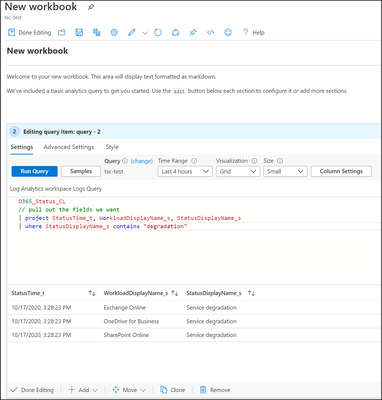

This creates a workbook populated with some sample data to start with. Let’s edit it:

Let’s start off by just making a simple grid of the query we already built to show degraded services in the past 4 hours:

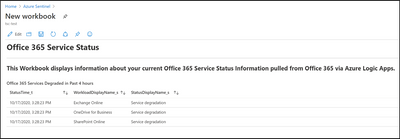

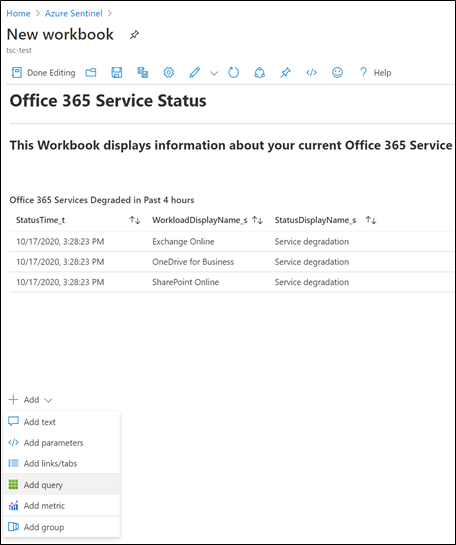

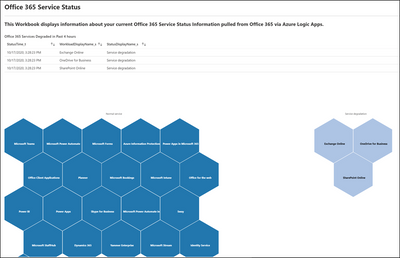

That will get us a simple workbook like this (I also edited the title before I captured the screenshot):

That’s not very exciting, so let’s add another Query section and try to build a graph:

We are going to build a “honeycomb” graph that will show which services are operational and which are degraded:

Instead of creating multiple screenshots, I have highlighted in green the items I changed. I also used a query that returns the status of every service, not just the degraded ones (see above).

When you click “Done Editing”, you will get this visualization, which can be zoomed in and out as well as moved around. Not perfect, but it only took a few minutes to build. I’m sure you can come up with an even better one!
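Conceptually, the honeycomb is one tile per service, colored by status. Here is a Python sketch of that data shaping with a hypothetical two-color scheme (green for `ServiceOperational`, red for everything else) and assumed column names. In the workbook itself you configure this through the graph settings rather than code.

```python
from typing import Any, Dict, List

# Hypothetical color scheme: only ServiceOperational is treated as healthy.
HEALTHY_STATUSES = {"ServiceOperational"}


def honeycomb_tiles(rows: List[Dict[str, Any]]) -> List[Dict[str, str]]:
    """Turn one row per service into tiles with a label, status, and color."""
    tiles = []
    for row in rows:
        status = row["Status_s"]
        tiles.append({
            "label": row["WorkloadDisplayName_s"],
            "status": status,
            "color": "green" if status in HEALTHY_STATUSES else "red",
        })
    return sorted(tiles, key=lambda tile: tile["label"])
```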

Thank you for getting this far in my post. It went a little long 😊. I hope you found this useful and that you can use it to build something for your organization. Please post comments or questions below.

Thanks,
Tony