This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

Tom McElroy, Pete Bryan - Microsoft Threat Intelligence Center

Pete Bryan posted a blog in March detailing how to protect Microsoft Teams with Azure Sentinel. Since then a new Teams connector has entered public preview, which allows Teams data to be accessed without the need for a custom log. Using the official connector means you will be able to take advantage of new Teams log events as they become available. More information on setting the connector up can be found here. Teams logs are provided by the Office 365 connector as part of Office Activity logging, so they will not incur additional ingestion costs if Office Activity logs are already being ingested.

This blog post will cover how Teams logs can be expanded to provide deeper security insight by mapping additional data from other tables available in Azure Sentinel. The topics covered are:

- Extracting Teams file sharing information

- Mapping Teams logs to Teams call records

- Merging Teams logs with sign in activity to detect anomalous actions

Exploring Teams File Sharing

Teams allows users to share files in conversations. This feature is commonly used to share meeting notes, agendas or documents when collaborating on a piece of work.

When a file is shared on Teams, underlying Office technology uploads the file to the user's OneDrive and then shares it using existing SharePoint operations. While file sharing information is not available in the Teams log itself, it can be extracted from the Office Activity log.

Teams file uploads are recorded in the Office activity log as SharePoint file operations. To distinguish Teams file uploads from other services the column “SourceRelativeURL” is populated with the entry “Microsoft Teams Chat Files”. The query below will extract Teams file uploads from the Office Activity log.
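The filtering logic can also be sketched in Python over exported log rows. This is an illustrative sketch rather than the Kusto query itself: the sample rows are made up, and the `FileUploaded` operation name and the `SourceRelativeUrl`, `SourceFileName` and `UserId` field names are assumptions about how the Office Activity data is exported.

```python
# Hypothetical Office Activity rows; values are illustrative only.
office_activity = [
    {"Operation": "FileUploaded", "UserId": "alice@contoso.com",
     "SourceRelativeUrl": "Documents/Microsoft Teams Chat Files",
     "SourceFileName": "agenda.docx"},
    {"Operation": "FileUploaded", "UserId": "bob@contoso.com",
     "SourceRelativeUrl": "Shared Documents/Reports",
     "SourceFileName": "report.xlsx"},
    {"Operation": "FileAccessed", "UserId": "carol@contoso.com",
     "SourceRelativeUrl": "Documents/Microsoft Teams Chat Files",
     "SourceFileName": "agenda.docx"},
]


def teams_file_uploads(rows):
    """Keep upload events whose relative URL marks them as Teams chat files."""
    return [
        row for row in rows
        # "FileUploaded" is an assumed operation name for upload events.
        if row.get("Operation") == "FileUploaded"
        and "Microsoft Teams Chat Files" in row.get("SourceRelativeUrl", "")
    ]


uploads = teams_file_uploads(office_activity)
```

Only the first sample row survives the filter: the second is an upload outside Teams, and the third is an access rather than an upload.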

The query can now be expanded to find suspiciously named files. Either a fixed list of potentially suspicious filenames can be provided, or an external data source can be used. In the query below, matching is performed to detect users sharing passwords or files containing a confidential term. The terms are passed in as a list, which in the example below is called “suspiciousFilenames”.

This query can be modified to search for suspicious file extensions instead. The query below will show common Windows executable files being shared through Teams.
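As a rough illustration of the matching step, the Python sketch below applies a term list to uploaded file names. The terms and file names are made up for the example, and case-insensitive substring matching is an assumption about how the comparison should behave.

```python
# Illustrative terms; a real list would be tuned to the environment.
suspicious_filenames = ["password", "confidential"]


def has_suspicious_name(file_name, terms=suspicious_filenames):
    """Return True when any term appears in the file name, ignoring case."""
    lowered = file_name.lower()
    return any(term in lowered for term in terms)


# Hypothetical file names taken from Teams upload events.
uploaded = ["Q3 agenda.docx", "Passwords.xlsx", "Confidential-plan.pptx"]
flagged = [name for name in uploaded if has_suspicious_name(name)]
```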
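The extension check can be sketched in Python in the same spirit. The extension set below is an assumed example of common Windows executable types, not a list taken from the published query.

```python
from pathlib import PurePosixPath

# Assumed example set of Windows executable extensions.
executable_extensions = {".exe", ".dll", ".scr", ".bat", ".ps1", ".msi"}


def is_executable_name(file_name):
    """Compare the final extension of the file name, ignoring case."""
    return PurePosixPath(file_name).suffix.lower() in executable_extensions


# Hypothetical file names shared through Teams.
shared = ["notes.docx", "invoice.pdf.exe", "Setup.MSI"]
executables = [name for name in shared if is_executable_name(name)]
```

Only the last extension is checked, so a double extension such as `invoice.pdf.exe` is still treated as an executable.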

Joining either of the above queries back to the Office activity log allows the query to extract how many users received the file and how many users opened the file, with counts for each. In the below example the suspicious file name query has been expanded to collect the additional user information.
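The counting step of such a join can be sketched in Python as a distinct-user count per operation for a given file. Which operations correspond to "received" and "opened" depends on the audit schema; `FileAccessed` and `FileDownloaded` are used here only as assumed illustrations, and the rows are made up.

```python
from collections import defaultdict

# Hypothetical Office Activity rows for files shared through Teams.
activity = [
    {"SourceFileName": "Passwords.xlsx", "Operation": "FileAccessed", "UserId": "bob@contoso.com"},
    {"SourceFileName": "Passwords.xlsx", "Operation": "FileAccessed", "UserId": "bob@contoso.com"},
    {"SourceFileName": "Passwords.xlsx", "Operation": "FileAccessed", "UserId": "carol@contoso.com"},
    {"SourceFileName": "Passwords.xlsx", "Operation": "FileDownloaded", "UserId": "carol@contoso.com"},
    {"SourceFileName": "agenda.docx", "Operation": "FileAccessed", "UserId": "dave@contoso.com"},
]


def distinct_users_by_operation(rows, file_name):
    """Count distinct users per operation for one file name."""
    users = defaultdict(set)
    for row in rows:
        if row["SourceFileName"] == file_name:
            users[row["Operation"]].add(row["UserId"])
    return {operation: len(ids) for operation, ids in users.items()}


counts = distinct_users_by_operation(activity, "Passwords.xlsx")
```

Using a set per operation means repeated events from the same user are counted once.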

While the above examples use a fixed list of suspicious filenames for matching, the Kusto externaldata operator can be used to import a list of known-bad filenames.

Matching malicious filenames is often unreliable, as attackers will name files to blend in with their target environment. However, this query could be used situationally in a response scenario to search for malicious documents that may have been passed between users. While uncommon, attacker spear phishing lures can prove so interesting that users share the lure beyond the initial recipient.

Mapping Teams Logs to Teams Call Records

As well as providing key administrative and user activity logs via the new Teams connector, we can also access specific call record logs. These logs describe calls and meetings made via Teams, including who organized them, who participated, and what technologies were used. They need to be collected separately from the main Teams logs collected by the connector. For more details about how to enable collection of these events, please refer to this blog.

Once we have the call logs available to us, we can use our existing Teams queries to pivot from a user and identify what calls they have been present in. Below is an example of taking one of the suspicious file upload queries above and pivoting to see the call attendance of the user who originally shared the file. This is a good way to identify the scope of an incident and other impacted users.

Note: In the query above we are presuming that the custom table name used to ingest the Teams call logs is “TeamsCallLogs_CL”. If your configuration is different you will need to edit the query above to reflect that.
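The pivot itself amounts to looking up every call in which a flagged user appears. The Python sketch below illustrates that lookup; the `call_id`, `organizer` and `participants` field names are hypothetical and will differ depending on how the call records were ingested.

```python
# Hypothetical call records; field names are assumptions for illustration.
call_records = [
    {"call_id": "c1", "organizer": "alice@contoso.com",
     "participants": ["alice@contoso.com", "bob@contoso.com"]},
    {"call_id": "c2", "organizer": "carol@contoso.com",
     "participants": ["carol@contoso.com", "dave@contoso.com"]},
    {"call_id": "c3", "organizer": "dave@contoso.com",
     "participants": ["dave@contoso.com", "Alice@contoso.com"]},
]


def calls_attended_by(user, records):
    """Return calls where the user took part, with the other participants."""
    wanted = user.lower()
    attended = []
    for record in records:
        present = [p.lower() for p in record["participants"]]
        if wanted in present:
            others = sorted(p for p in present if p != wanted)
            attended.append((record["call_id"], others))
    return attended


# Pivot from the user who shared a suspicious file.
exposure = calls_attended_by("alice@contoso.com", call_records)
```

The other participants returned for each call give a first estimate of who else may be affected.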

Finding Anomalous Teams Logins

Common techniques to identify anomalous sign in activity include analysing the login locations for suspicious countries, searching for IP subnets that are not used commonly, and looking for multiple failed accesses followed by a successful login to detect brute force activity. These techniques are effective at identifying potentially suspicious activity but can generate high false positive rates. With more users working from home, some of these techniques have become impractical given the network diversity when users connect from home offices.

With additional information from other log sources it is possible to improve confidence in the detections. Anomalous login techniques can be correlated with log data from applications to determine if an administrative action was performed during a time window after a successful anomalous login has occurred. The new Teams connector provides logging of Teams events, including administrative events, which can be used to detect potentially suspicious Teams activity taking place.

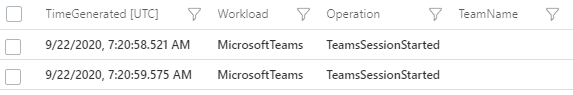

Certain actions in Teams result in a log entry, ranging from a Teams session starting to administrative actions such as deleting a team. This information is logged to the “Operation” column of the Teams log. As the accompanying image shows, the most common event is “TeamsSessionStarted”.

There are a number of operations within the Teams log that can be considered administrative, and a subset of those have potential application in a threat actor's campaign. In this example the following actions will be used as indicators of potentially suspicious activity:

A TeamsAdminAction operation is logged when a Teams administrative action is performed. This covers a wide range of administrative actions that can be performed by an account with an administrator role; a full list of actions can be found here. Many of these actions would be useful to an attacker, allowing management of meetings, call policies, viewing user profiles and accessing monitoring and reporting from Teams.

MemberAdded and MemberRemoved operations can be performed by any Teams user that is an administrator of a Team. These events are generated when a member is added to or removed from a Team. After compromising a legitimate account, a malicious actor may attempt to add their own account to meetings that they wish to eavesdrop on, and may remove that same member after data has been collected from the Team. Generally, these events are quite common, but when following an anomalous login, they should be treated as suspicious.

The AppInstalled and BotAddedToTeam log events are generated when an app or bot is installed into Teams. An attacker could use this to install malicious Teams add-ons or bots using the custom app upload feature. Apps and bots are installed on a per-user basis, but can be added to a Team by a user.
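The operations described above can be collected into a single set and used as a filter. The Python sketch below shows the idea; the operation names come from the text, while the sample events and the `UserId` field name are assumptions.

```python
# Operations treated as potentially suspicious in this post.
SUSPICIOUS_OPERATIONS = {
    "TeamsAdminAction",
    "MemberAdded",
    "MemberRemoved",
    "AppInstalled",
    "BotAddedToTeam",
}


def suspicious_events(events):
    """Keep only events whose Operation is in the suspicious set."""
    return [event for event in events if event["Operation"] in SUSPICIOUS_OPERATIONS]


# Hypothetical Teams log events.
teams_events = [
    {"UserId": "alice@contoso.com", "Operation": "TeamsSessionStarted"},
    {"UserId": "alice@contoso.com", "Operation": "MemberAdded"},
    {"UserId": "bob@contoso.com", "Operation": "BotAddedToTeam"},
]
candidates = suspicious_events(teams_events)
```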

Now that potentially suspicious operations within Teams are identified, a query can be constructed that enriches the sign in log data with Teams data to find anomalous logins that were followed by an administrative action.

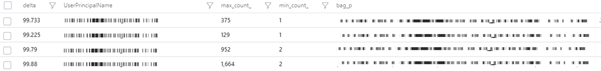

The query below first collects successful logins to Teams from sign in logs. It then calculates, for each user, the delta between the IP addresses used to log in. Calculating the delta between the least used IP and the most used IP for each user will find accounts where an IP has been used to log in only a handful of times compared with the user’s normal login activity. As no fixed values are used, the detection scales with the user’s activity level. It will also adapt to activity where the user is roaming on a home connection: even on internet service providers with dynamic IP addresses, the lease time will be long enough to evenly spread IP usage.

The example below shows a standalone calculation of the delta between IPs used to log in to accounts in sign in logs, followed by the output from Azure Sentinel.
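The delta idea can be illustrated with a short Python sketch. It assumes the delta is the difference between the sign-in counts of a user's most used and least used IP address, which is one reading of the description above; the published query may compute it differently, and the sample data is made up.

```python
from collections import Counter, defaultdict

# Hypothetical successful Teams sign-ins as (user, IP address) pairs.
signins = (
    [("alice@contoso.com", "203.0.113.10")] * 40
    + [("alice@contoso.com", "198.51.100.7")] * 2
    + [("bob@contoso.com", "192.0.2.44")] * 5
)


def ip_usage_delta(rows):
    """Per user: the least used IP and the gap to the most used IP."""
    per_user = defaultdict(Counter)
    for user, ip in rows:
        per_user[user][ip] += 1
    result = {}
    for user, counts in per_user.items():
        ranked = counts.most_common()  # sorted from most to least used
        most_count = ranked[0][1]
        least_ip, least_count = ranked[-1]
        # Assumed definition of the delta: difference in sign-in counts.
        result[user] = {"least_used_ip": least_ip, "delta": most_count - least_count}
    return result


deltas = ip_usage_delta(signins)
```

A large delta points at an IP address that is rare relative to the user's own baseline, while a user with a single IP address has a delta of zero.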

The delta calculation alone may still produce false positives but can be further improved upon. The next two checks use country-based analytics. They are optional and will need customization based on environment. With all queries a point is reached where additional checks may begin to increase the false negative rate, so customization on a per-environment basis is often needed. In this instance the objective is a low-noise, detection-quality query.

First, the number of distinct countries each user has logged in from is calculated. A threshold called “minimumCountries” is set to 2 by default, meaning the user must have logged in from two distinct countries. Once users that have accessed their account from at least two countries have been identified, the prevalence of each country used to log in is calculated. Only countries that account for less than 10% of sign in log entries are identified.
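The two country checks can be sketched in Python as follows. The sketch assumes prevalence is measured per user as a share of that user's sign-ins, which is an interpretation of the description above; `max_country_share` is a hypothetical name for the 10% threshold, and the sample data is made up.

```python
from collections import Counter, defaultdict

minimum_countries = 2     # threshold named in the text
max_country_share = 0.10  # hypothetical name for the 10% prevalence cut-off


def rare_countries(rows):
    """Per user with enough distinct countries, list the rarely used ones."""
    per_user = defaultdict(Counter)
    for user, country in rows:
        per_user[user][country] += 1
    rare = {}
    for user, counts in per_user.items():
        if len(counts) < minimum_countries:
            continue  # user has not signed in from enough distinct countries
        total = sum(counts.values())
        unusual = sorted(c for c, n in counts.items() if n / total < max_country_share)
        if unusual:
            rare[user] = unusual
    return rare


# Hypothetical sign-ins as (user, country) pairs.
country_signins = (
    [("alice@contoso.com", "GB")] * 19
    + [("alice@contoso.com", "BR")]
    + [("bob@contoso.com", "US")] * 10
    + [("carol@contoso.com", "FR")] * 5
    + [("carol@contoso.com", "DE")] * 5
)
flagged_countries = rare_countries(country_signins)
```

In this sample only alice is flagged: bob has a single country, and carol splits her sign-ins evenly between two.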

The query below expands the original delta query to include the country checks.

Executing the above query will provide a list of accounts where a suspicious login has occurred, along with the times those suspicious logins occurred. At this point the above query can be used as a hunting query to identify suspicious activity, but it may produce false positives.

Now that candidate suspicious logins have been identified, a join can be performed on the Teams log data using the user's email address. A time window join allows the query to determine if a Teams admin action was performed within a specified window. In the default query this will detect admin actions up to 1 hour after the successful login. The below query will perform the final join.
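The time window join can be illustrated in Python as a match on user followed by a check that the action falls within the window after the login. This is a minimal sketch with made-up events; case-insensitive matching of email addresses is an assumption.

```python
from datetime import datetime, timedelta

window = timedelta(hours=1)  # default window described in the text


def actions_after_logins(logins, actions, max_gap=window):
    """Pair each suspicious login with admin actions inside the window."""
    matches = []
    for user, login_time in logins:
        for action_user, action_time, operation in actions:
            same_user = action_user.lower() == user.lower()
            gap = action_time - login_time
            # Keep actions at or after the login and no later than max_gap.
            if same_user and timedelta(0) <= gap <= max_gap:
                matches.append((user, operation))
    return matches


# Hypothetical suspicious logins and Teams admin actions.
suspicious_logins = [("alice@contoso.com", datetime(2020, 6, 1, 9, 0))]
admin_actions = [
    ("alice@contoso.com", datetime(2020, 6, 1, 8, 50), "MemberRemoved"),
    ("Alice@contoso.com", datetime(2020, 6, 1, 9, 30), "MemberAdded"),
    ("alice@contoso.com", datetime(2020, 6, 1, 11, 0), "TeamsAdminAction"),
    ("bob@contoso.com", datetime(2020, 6, 1, 9, 10), "AppInstalled"),
]
detections = actions_after_logins(suspicious_logins, admin_actions)
```

Actions before the login, after the window, or by other users are ignored, leaving a single detection in this sample.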

With the above queries combined the false positive rate will be reduced significantly allowing this query to be used as a detection. This detection is already published to the Azure Sentinel GitHub and can be found here.

While this example uses Teams data, it is possible to apply the same logic to any sign in anomaly query where the application or service provides logging of administrative activity.

In Conclusion

The blog has shown how to use additional data sources to enhance threat hunting in Azure Sentinel for threats within Microsoft Teams.

Extracting additional information from OfficeActivity logs allowed identification of potentially suspicious files being shared on Teams. The blog also showed how call records can be extracted and used to further expand visibility of Teams activity. Knowledge of where file sharing and call records are stored provides a starting point to developing queries to answer specific questions about user account activity.

As an example of how merging multiple data sources can lead to higher quality detections in Teams, the blog looked at how merging sign in log data with Teams activity enables the creation of high-quality, low false positive detections. These principles can be applied in other scenarios where a high false-positive anomaly query can be combined with a suspicious behaviour.

If you'd like to know more about the Teams schema in Azure Sentinel, this blog post contains information on the types of Teams operations you may see.

If you have questions or specific scenarios you would like to share, please leave a comment or reach out.