This post has been republished via RSS; it originally appeared at: Device Management in Microsoft articles.

This post covers the vertical scaling of ConfigMgr Site Systems in Azure to realize the efficiency gains made available by the elasticity of the Azure cloud. The migration of the internal ConfigMgr infrastructure at Microsoft from on-prem to Azure has been described in a previous case study. Here we describe the efficiencies we have gained by scaling ConfigMgr Site Systems such as the Management Point, Distribution Point, and Software Update Point to the “right size” based on client traffic and compute demands.

As the global scale of the Azure cloud continually expands, our team has been able to reap the associated benefits by switching to newer VM SKUs. These typically offer better specs in the form of latest-generation CPUs, or potentially lower cost tiers, as seen with AMD SKUs. Resizing a VM to a newer SKU involves a reboot/redeployment with little downtime and, in some cases, a prior request to Azure support to increase regional quota allocations. For our ConfigMgr Site Systems, this meant moving from the older F-series SKUs to the Fs_v2 series SKUs.

Client traffic in the internal Microsoft environment typically follows a cyclical pattern: CPU and concurrent connections peak early in the business day and level off around the afternoon hours. We designed the rightsizing with this traffic pattern in mind, but our team initially limited the scope of the scaling solution to evenings and weekends to gather operational insights. Taking Management Points as an example, our Site Systems are scaled up to F8s_v2 at the start of the business day to meet peak demand. Once average CPU has dropped to a lower baseline level in the evening hours, the Site System is scaled down to F4s_v2 or lower based on CPU load. The sections below describe the high-level design details of the scaling solution.

Runbook Overview

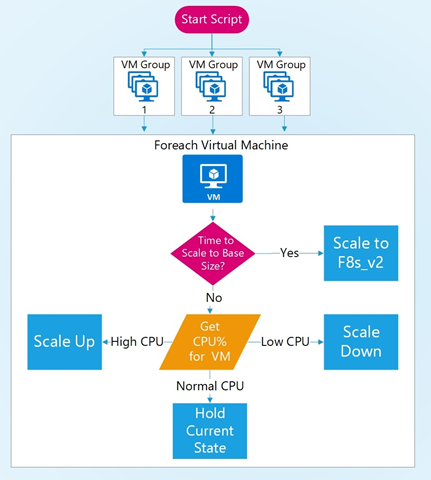

We use an Azure Automation PowerShell runbook to monitor various Virtual Machine groups. Each group typically has 3-8 VMs, depending on the Site System type and the ConfigMgr Site/Region in which the VMs are located. For example, to handle client traffic in the Southeast Asia region, we have three VM groups: one each for the DPs, MPs, and SUPs. The basic outline of the Runbook is as follows:

For each VM group, the runbook will sequentially evaluate CPU load and the current SKU against a set of scale up and scale down thresholds. If the CPU is higher than the defined scale up threshold, say 90%, the automation will scale the VM up to the next SKU. Likewise, if the CPU is lower than the scale down threshold, say 40%, the automation will scale the VM down to the next lower SKU, else no changes are made.
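As an illustration only, the threshold check described above might be sketched in Python as follows. This is not the runbook's PowerShell: the function name `next_sku`, the three-step SKU ladder, and the default thresholds (taken from the 90% and 40% examples in the text) are assumptions made for the sketch.

```python
# Hypothetical SKU ladder, ordered smallest to largest. The post mentions
# F2s_v2, F4s_v2 and F8s_v2; the real ladder per Site System type may differ.
SKU_LADDER = ["Standard_F2s_v2", "Standard_F4s_v2", "Standard_F8s_v2"]


def next_sku(current_sku, avg_cpu, scale_up_at=90.0, scale_down_at=40.0,
             ladder=SKU_LADDER):
    """Return the SKU to resize to, or None when no change is needed."""
    idx = ladder.index(current_sku)
    # Above the scale-up threshold: move one step up, unless already at the top.
    if avg_cpu > scale_up_at and idx < len(ladder) - 1:
        return ladder[idx + 1]
    # Below the scale-down threshold: move one step down, unless at the bottom.
    if avg_cpu < scale_down_at and idx > 0:
        return ladder[idx - 1]
    # Between the thresholds, or already at a ladder boundary: leave the VM alone.
    return None
```

For example, `next_sku("Standard_F4s_v2", 95.0)` would pick the next size up, while a CPU reading between the two thresholds leaves the VM unchanged.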

The scaling logic allows us to ensure that there is only one VM per Site System type and ConfigMgr Site/Region that might be unavailable at a time. It also lets us set different configurations based on the Site System type. To allow multiple VMs to scale simultaneously and lower the time to fully execute the Runbook, we modified the logic to process each VM group in parallel. This allows VMs from separate groups to scale simultaneously while still adhering to the downtime requirement described previously. For more information about parallel execution, check out PowerShell Parallel Execution or PowerShell Workflows for Automation Runbooks.
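The "parallel across groups, sequential within a group" idea can be sketched in Python with a thread pool. This is a stand-in for illustration, not the PowerShell parallel execution the runbook uses. The names `process_group`, `process_all_groups`, and `evaluate_vm`, as well as the group names in the usage note, are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def process_group(group_name, vm_names, evaluate_vm):
    """Evaluate the VMs of one group one at a time, so that at most one VM
    in the group can be resizing (and unavailable) at any moment."""
    return [(group_name, vm, evaluate_vm(vm)) for vm in vm_names]


def process_all_groups(groups, evaluate_vm, max_workers=4):
    """Give each group its own worker; VMs inside a group stay sequential."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [
            pool.submit(process_group, name, vms, evaluate_vm)
            for name, vms in groups.items()
        ]
        results = []
        for future in futures:
            # result() re-raises any exception thrown inside the worker.
            results.extend(future.result())
    return results
```

Called with something like `{"SEA-MP": ["mp1", "mp2"], "SEA-DP": ["dp1"]}`, the two groups run concurrently while `mp1` and `mp2` are still handled one after the other.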

Based on historical CPU and connection trends, we found it useful to scale the Management Point VMs to F8s_v2 on business day mornings (around 6 AM) to prepare for the expected traffic. Letting each VM scale up reactively during peak traffic would cause VM downtime exactly when demand is highest, so we took a proactive approach and scale up a few hours before the peak so that client needs are handled without that downtime. Once the traffic decreases, the VMs dynamically scale to a lower SKU as needed.
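A minimal Python sketch of such a time-based override is shown below. It assumes the pre-scale applies Monday through Friday during the 6 AM hour in the region's local time; the function name `prescale_target` and its parameters are hypothetical and not taken from the runbook.

```python
from datetime import datetime


def prescale_target(now, prescale_hour=6, peak_sku="Standard_F8s_v2"):
    """Return the peak SKU during the weekday pre-scale hour, otherwise None.

    `now` is assumed to already be expressed in the region's local time.
    """
    is_business_day = now.weekday() < 5  # Monday is 0, Friday is 4
    if is_business_day and now.hour == prescale_hour:
        return peak_sku
    return None
```

A caller would check this override first and fall back to the CPU-based thresholds when it returns `None`, skipping the resize if the VM is already at the peak SKU.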

Log Analytics Query

At present, the most important metric for our scaling algorithm is the current CPU load of the VM. Our current source for this data is a Log Analytics Workspace where the associated Perf counter is logged every 60 seconds. After querying for the data, we average the results over a specified timespan, which gives a single numerical average for the CPU. We have found CPU load to be a useful indicator of a virtual machine's utilization and have not yet had to consider alternate metrics such as Current Connections. Listed below is a sample Log Analytics query for getting the CPU load of a VM.
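The original query is not reproduced here. As a rough sketch under stated assumptions, the Python helper below builds a KQL query against the standard `Perf` table, assuming the Windows `% Processor Time` counter for the `_Total` instance is the one being collected. The 15-minute default window, the column alias `AvgCpu`, and the function names are hypothetical.

```python
from statistics import mean


def build_cpu_query(computer, minutes=15):
    """Build a KQL query that averages total CPU for one computer.

    Assumes the workspace collects the '% Processor Time' counter into Perf.
    """
    return (
        "Perf\n"
        f"| where TimeGenerated > ago({minutes}m)\n"
        f"| where Computer == '{computer}'\n"
        "| where ObjectName == 'Processor' and CounterName == '% Processor Time'"
        " and InstanceName == '_Total'\n"
        "| summarize AvgCpu = avg(CounterValue)"
    )


def average_cpu(samples):
    """Average raw counter samples client-side; None when no data arrived."""
    return mean(samples) if samples else None
```

Returning `None` for an empty sample set lets the caller skip scaling when ingestion is delayed, rather than treating missing data as 0% CPU.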

Scaling a Virtual Machine

When performing the scale up/down operation, we first suppress alerts for the VM, then update the VM, and finally log the VM Name, CPU load, Old Size, New Size, Start Time, and End Time in a SQL table. We have observed the average downtime for a VM performing a size change to be around 1-2 minutes, with services coming online within the following 30 seconds. For more information about resizing VMs, see: Resize a Windows VM in Azure - Azure Virtual Machines | Microsoft Docs. As each environment has its own business/availability requirements and Site System configuration, we are sharing a basic version of the Runbook code we implemented at the following link. The code can be modified as needed, but the crux of the scaling solution centers around the following snippet:
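The authors' snippet is not reproduced here. The Python sketch below only illustrates the order of operations described above: suppress alerts, resize, then log. The callables `suppress_alerts`, `apply_size`, and `write_log` are hypothetical placeholders for the monitoring, Azure, and SQL calls, and the record keys are an assumed naming of the columns listed in the text.

```python
from datetime import datetime, timezone


def resize_vm(vm_name, cpu_load, old_size, new_size,
              suppress_alerts, apply_size, write_log):
    """Suppress alerts, resize the VM, then log the change as one record."""
    start = datetime.now(timezone.utc)
    suppress_alerts(vm_name)       # avoid paging on the expected reboot
    apply_size(vm_name, new_size)  # placeholder for the actual resize call
    end = datetime.now(timezone.utc)
    record = {
        "VMName": vm_name,
        "CpuLoad": cpu_load,
        "OldSize": old_size,
        "NewSize": new_size,
        "StartTime": start,
        "EndTime": end,
    }
    write_log(record)              # placeholder for the SQL insert
    return record
```

Passing the side-effecting steps in as functions keeps the sequence easy to test without touching a real VM.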

A Typical Automation Day

On a typical day, the MP groups see the most scaling events, while our DPs and SUPs rarely change size. As mentioned above, the MPs in a region (say Redmond) are forced to scale up at 6 AM. As the day progresses, each MP can downsize to F4s_v2 or F2s_v2 if its CPU falls below the scale down threshold. By the end of the business day, the MPs will almost always have downsized to F4s_v2 as CPU load decreases, and they may downsize again to F2s_v2 during the night when activity is lowest. At 6 AM the next morning, the cycle repeats, bringing the MPs back to the baseline size of F8s_v2.

The number of size changes that occur throughout the day depends on how often you run the Scaling Automation runbook. We want to be responsive to changing client traffic and varying CPU loads, so we run ours every 12 minutes. The shortest recurrence interval allowed when linking the Automation runbook to a schedule in Azure is 1 hour, so we use a workaround to run it more often: a Scheduled Task on a VM calls the runbook through a webhook. More information regarding this solution can be found in the following blog post: Start an Azure Automation runbook from a webhook.
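To illustrate the webhook call, here is a minimal Python sketch that sends an HTTP POST to a webhook URL. The scheduled task described above may well use a different tool. The function names and the JSON payload shape are assumptions, and the URL in the usage note is a placeholder rather than a real webhook.

```python
import json
import urllib.request


def build_webhook_request(webhook_url, payload=None):
    """Build the POST request that starts the runbook through its webhook."""
    body = json.dumps(payload or {}).encode("utf-8")
    return urllib.request.Request(
        webhook_url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )


def trigger_runbook(webhook_url, payload=None, timeout=30):
    """Send the request and return the HTTP status code of the response."""
    request = build_webhook_request(webhook_url, payload)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

A task scheduled every 12 minutes could then call `trigger_runbook("https://example.invalid/webhooks?token=placeholder")`, with the real webhook URL kept in a secret store because the URL itself acts as the credential.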

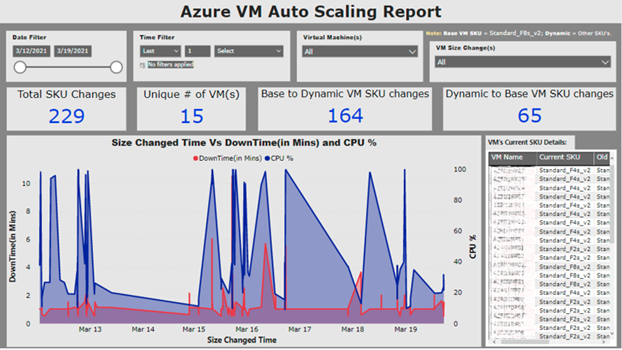

Auto Scale Power BI Report

To track the history of VM changes, we created a Power BI report that pulls data from the SQL table where each SKU change is stored. The report lets us filter across different parameters, track detailed VM SKU change events, and spot abnormal automation behavior visually.

Cost Savings

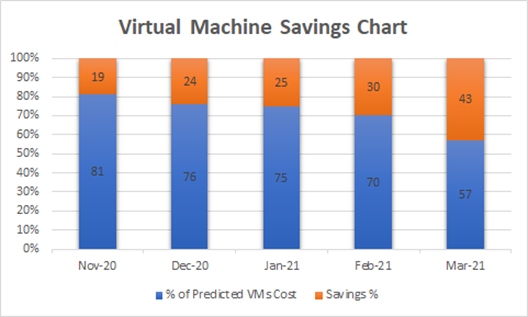

Over the past few months, we have seen steadily increasing savings rates for the VMs as we added more VM groups to the scaling runbook and refined the logic for our specific needs. In March 2021, we were able to cut VM costs by 43%. To calculate this percentage, we based the predicted cost on how we handled VMs before implementing the autoscaling runbook, that is, keeping every VM at a specific SKU for the entire month. Our actual costs for the month were 57% of that predicted value, which gives a savings of 43%.
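The arithmetic behind that figure is simply one minus the ratio of actual to predicted cost. A small Python sketch, with a hypothetical function name and made-up cost figures in the usage note:

```python
def savings_percent(predicted_cost, actual_cost):
    """Percentage saved relative to the fixed-SKU baseline cost."""
    if predicted_cost <= 0:
        raise ValueError("predicted_cost must be positive")
    return (1 - actual_cost / predicted_cost) * 100
```

With an illustrative baseline of 1000 and an actual cost of 570 (any currency), `round(savings_percent(1000, 570))` comes out at 43, matching the 57% and 43% split described above.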

Future Goals/Conclusion

We aim to improve our scaling efficiency by investigating a switch to native Azure metrics and piloting the new Azure Monitor agent (preview). This would also enable us to overcome any potential latency issues when ingesting data into Log Analytics.

We hope you’ve found this post useful, especially if you are leveraging a cloud provider for your ConfigMgr infrastructure. We’ve tuned the solution to meet our business requirements, and shifting a portion of our Co-Management workloads to Intune has allowed us to tolerate brief periods of downtime on individual VMs during business hours. We realize complexity and requirements will vary across environments, but there may be cost savings to be gained even if similar scaling is restricted to nights or weekends.