This post has been republished via RSS; it originally appeared at: Azure Data Explorer articles.

Business continuity in Azure Data Explorer (ADX) refers to the mechanisms and procedures that enable your business to continue operating in the face of a true disruption. Some disruptive events cannot be handled by ADX automatically, such as:

- A user accidentally drops a table

- An outage of an Azure zone

- An entire Azure region is no longer available due to a natural disaster

This overview describes the capabilities that Azure Data Explorer provides for business continuity and disaster recovery. Learn about options, recommendations, and tutorials for recovering from disruptive events that could cause data loss or cause your database and application to become unavailable.

Accidentally dropping a table

Users with table admin permissions or higher are allowed to drop tables. If one of those users accidentally drops a table, it can still be recovered using the .undo drop table command, provided that the recoverability property has been enabled in the retention policy (data is recoverable for 14 days after its deletion).
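The two commands involved can be sketched as follows. This is a minimal sketch that only builds the command text; the helper names and the table name are hypothetical, and the database version passed to the undo command is assumed to have been looked up beforehand (for example in the database journal via .show journal).

```python
def enable_recoverability_command(table: str) -> str:
    """Build the command that enables recoverability in a table's retention policy."""
    return f".alter-merge table {table} policy retention recoverability = enabled"


def undo_drop_table_command(table: str, db_version: str) -> str:
    """Build the command that restores a dropped table.

    db_version is the database version from before the drop, for example "v24.3".
    Looking it up is left to the caller (assumption: taken from the journal).
    """
    if not db_version.startswith("v"):
        raise ValueError("database version must look like 'v<major>.<minor>'")
    return f".undo drop table {table} version={db_version}"


# Hypothetical table name and version, for illustration only.
print(enable_recoverability_command("Events"))
print(undo_drop_table_command("Events", "v24.3"))
```

The resulting strings would then be run as control commands against the database that contained the table.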

Outage of an Azure Availability Zone

Availability zones are unique physical locations within the same Azure region.

Azure availability zones can protect an Azure Data Explorer cluster and data from partial region failure.

Deploying a new cluster to different availability zones means the underlying compute and storage components are deployed to different zones in the region with independent power, cooling and networking. If a zone goes down, the cluster continues to work, but its performance might be degraded until the failure is resolved.

In addition, you can use zonal services, which let you pin an Azure Data Explorer cluster to the same zone as other Azure resources that are used in conjunction with that cluster.

Deployment to multiple or specific availability zones can only be configured during cluster creation and cannot be modified later.

For more details on enabling availability zones on Azure Data Explorer, see https://docs.microsoft.com/en-us/azure/data-explorer/create-cluster-database-portal

Outage of an Azure Region

Azure Data Explorer currently does not provide automatic protection against the outage of an entire Azure region. Such an outage could be caused, for example, by a natural disaster like an earthquake. If you require a solution for this situation, you must create two or more independent clusters and make sure that they are created in two Azure paired regions.

Once you have created multiple ADX clusters, you must make sure that:

- All management activities (such as creating new tables or managing user roles) are replicated on all ADX clusters.

- Data is ingested into all clusters in parallel.

ADX makes sure that maintenance operations (such as upgrades) are never performed on two clusters at the same time if they are in Azure paired regions.

Regional outage - HowTo

Azure Data Explorer currently does not provide automatic protection against the outage of an entire Azure region, which could be caused by a natural disaster like an earthquake. If you require a solution for this situation, you must create two or more independent clusters.

This HowTo article presents an example of how to create an architecture that takes business continuity into account under those severe conditions.

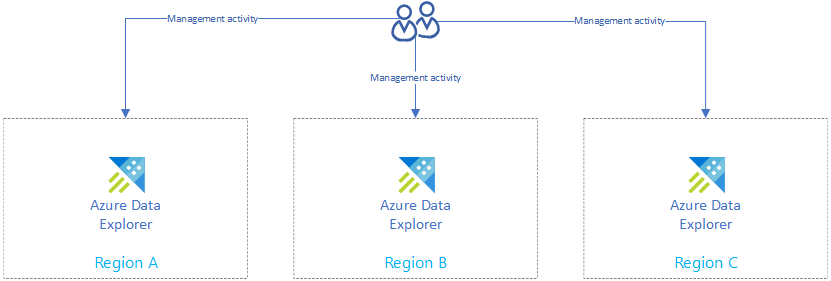

Create independent clusters

As a first step, you must create more than one cluster in more than one region to protect against regional outages.

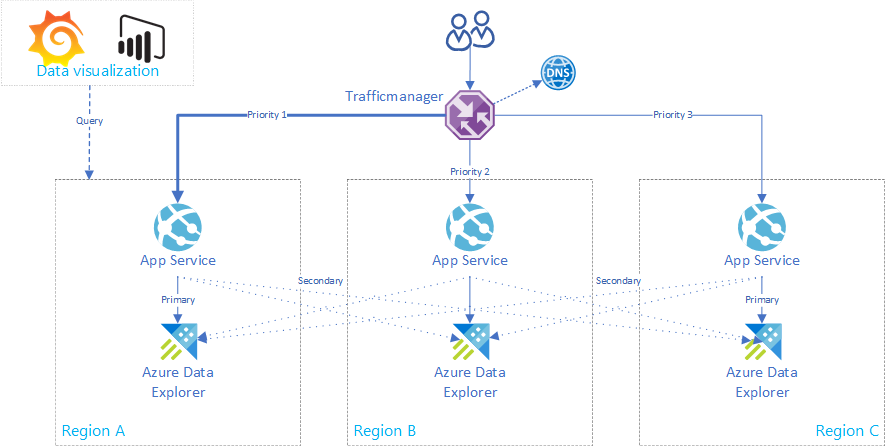

Please take a look at how to create clusters. Make sure that at least two of them are created in two Azure paired regions. The drawing shows three clusters in three different regions. In the rest of the article, we refer to these ADX clusters as replicas.

Duplicate management activities

In order to have the same cluster configuration in every replica you must replicate the management activities.

- Create the same databases/tables/mappings/policies on all replicas

- Manage the authentication / authorization on all replicas

There are various ways to manage an ADX cluster. You could use the portal to create a new database, or one of our SDKs.
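If you script the management activities, one way to keep the replicas aligned is to send every control command to every replica in one loop. The following is a minimal sketch under that assumption: the function name, the cluster URIs and the `execute` callable are hypothetical, and `execute` stands in for whatever SDK or tool call you use to run a control command.

```python
from typing import Callable, Dict, List, Sequence

# Stand-in for an SDK call: (cluster_uri, database, command) -> None
Executor = Callable[[str, str, str], None]


def replicate_management_commands(
    replicas: Sequence[str],
    database: str,
    commands: Sequence[str],
    execute: Executor,
) -> Dict[str, List[str]]:
    """Run every command on every replica and collect failures per replica."""
    failures: Dict[str, List[str]] = {}
    for cluster in replicas:
        for command in commands:
            try:
                execute(cluster, database, command)
            except Exception as error:  # keep going so the other replicas stay in sync
                failures.setdefault(cluster, []).append(f"{command}: {error}")
    return failures


if __name__ == "__main__":
    # Hypothetical replicas and commands; printing replaces a real SDK call.
    failed = replicate_management_commands(
        replicas=["https://adx-a.example", "https://adx-b.example"],
        database="telemetry",
        commands=[".create table Events (Timestamp: datetime, Message: string)"],
        execute=lambda cluster, db, cmd: print(f"{cluster}/{db}: {cmd}"),
    )
    print("failures:", failed)
```

Returning the failures instead of stopping at the first error makes it easier to see which replica has drifted and needs the command replayed.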

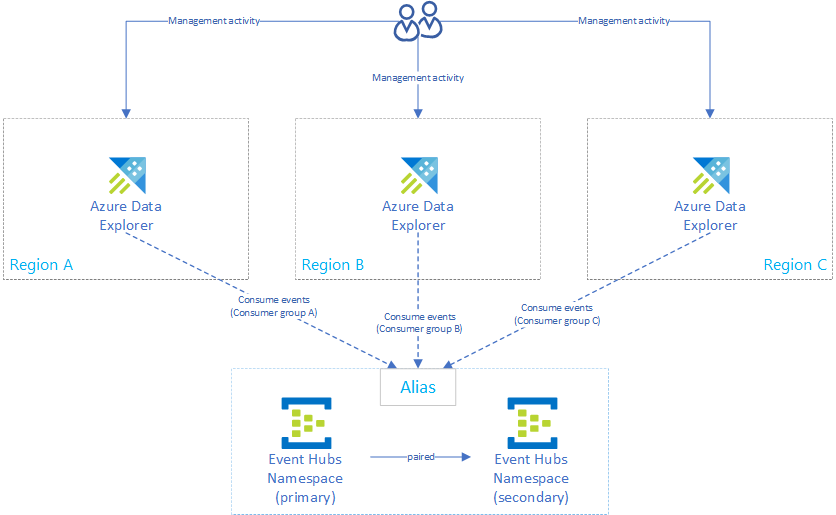

Set up data ingestion

Besides replicating the management activities of the previous step, you need to make sure that data ingestion is configured in the same way on every cluster.

The ingestion methods can be hardened using their advanced business continuity options:

- Ingest from IoTHub: The recovery options available to customers in case of a regional outage are Microsoft-initiated failover and manual failover.

- Ingest from EventHub: The disaster recovery feature implements metadata disaster recovery and relies on primary and secondary disaster recovery namespaces.

- Ingest from storage using Event Grid subscription: Ingestion from storage works by having Event Grid create messages and send them to an EventHub, so similar measures must be implemented for the BlobCreated messages that are sent to the EventHub. The storage itself can be hardened by implementing the appropriate disaster recovery and account failover strategy.

The following example uses ingestion via EventHub. A failover flow has been set up, and Azure Data Explorer consumes from the alias. Make sure that each ADX replica consumes from the EventHub using its own unique consumer group. Otherwise you would distribute the traffic across the replicas instead of replicating it to each of them.
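The difference between distributing and replicating can be illustrated with a toy model. This sketch is not how EventHub assigns partitions internally; it only assumes that each consumer group sees the full stream and that readers sharing a group split that stream between them (modelled here as simple round-robin). All names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def deliver(
    events: Sequence[int],
    subscriptions: Sequence[Tuple[str, str]],
) -> Dict[str, List[int]]:
    """Toy delivery model; subscriptions are (consumer_group, replica) pairs."""
    members: Dict[str, List[str]] = defaultdict(list)
    for group, replica in subscriptions:
        members[group].append(replica)

    received: Dict[str, List[int]] = defaultdict(list)
    for index, event in enumerate(events):
        for group_members in members.values():
            # Every group gets the event once; inside a group one reader handles it.
            reader = group_members[index % len(group_members)]
            received[reader].append(event)
    return dict(received)


events = [1, 2, 3, 4, 5, 6]

# Both replicas share one consumer group: the traffic is split.
print(deliver(events, [("adx", "replica-a"), ("adx", "replica-b")]))

# One consumer group per replica: every replica receives every event.
print(deliver(events, [("adx-a", "replica-a"), ("adx-b", "replica-b")]))
```

In the first call each replica ends up with only half of the events, which is exactly the situation the unique consumer group per replica avoids.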

The ingestion via EventHub/IoTHub/storage is very robust. If a cluster is unavailable for some time, it catches up on the pending messages or blobs once it is back, because the underlying technology makes use of checkpointing.

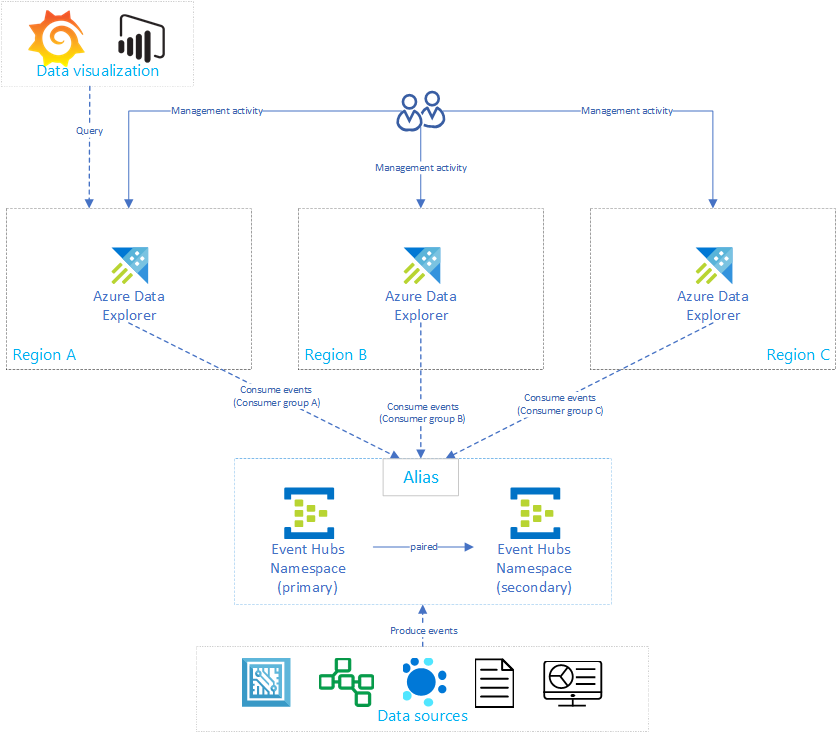

What you have so far

At this stage of the business continuity hardening, you have distributed your data and management activities across multiple regions, and in case of a temporary outage the underlying technology of Azure Data Explorer is able to catch up in the individual replicas.

As shown in the diagram, your data sources produce events to the EventHub that is configured for failover, and all Azure Data Explorer replicas consume from it.

On the other side, the data visualization components such as PowerBI, Grafana or SDK-powered web apps query one of the replicas.

The remainder of this article sheds light on the following optimizations:

- Architecture for an active / hot standby

- How to implement a highly available application service

- How to optimize cost in an active / active architecture

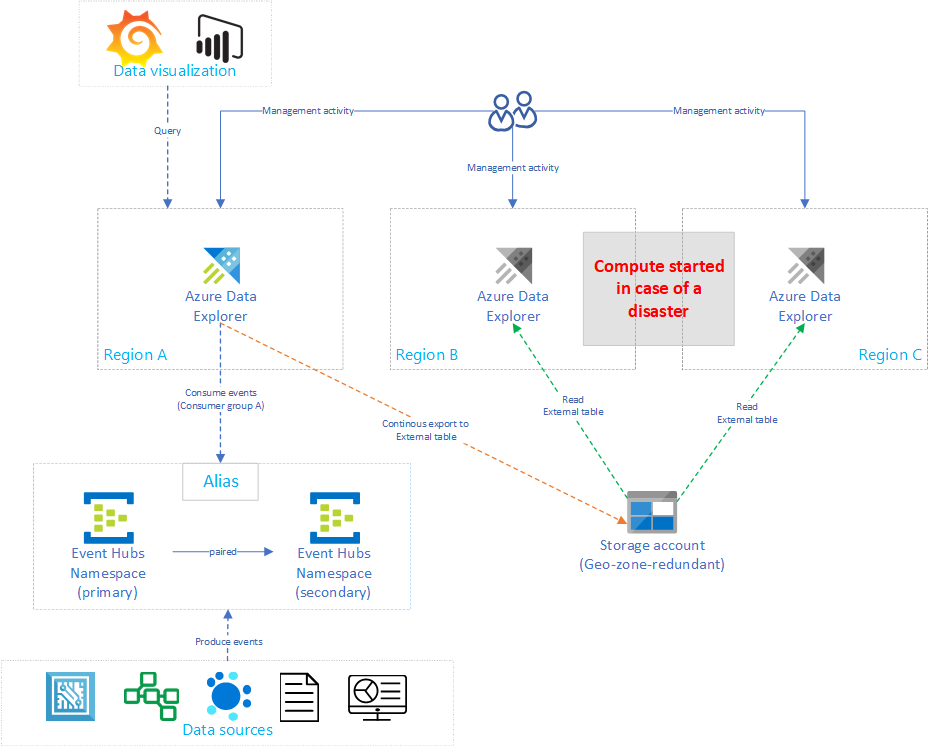

Architecture for an active / hot standby

Running the exact same Azure Data Explorer setup on all replicas, with 24/7 uptime on all of them, increases the cost linearly with the number of replicas. To optimize the cost, this section explains a variant of the architecture shown that makes a compromise between time to failover and cost.

The cost optimization is achieved by introducing passive Azure Data Explorer instances which are only turned on in case of a disaster in the primary region (i.e. region A). Some examples of how to start / stop an Azure Data Explorer cluster:

- Flow

- The “Stop” button in the portal

- Azure CLI: “az kusto cluster stop --name=<clusterName> --resource-group=<rgName> --subscription=<subscriptionId>”
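If you want to trigger the Azure CLI variant from a script, for example as part of a failover runbook, it could look like the following minimal sketch. The function names are hypothetical, the sketch assumes that the Azure CLI is installed and logged in, and it uses the matching "az kusto cluster start" command to turn a passive cluster back on.

```python
import subprocess
from typing import List


def kusto_cluster_command(
    action: str, cluster_name: str, resource_group: str, subscription_id: str
) -> List[str]:
    """Build the argument list for 'az kusto cluster start' or 'az kusto cluster stop'."""
    if action not in ("start", "stop"):
        raise ValueError("action must be 'start' or 'stop'")
    return [
        "az", "kusto", "cluster", action,
        "--name", cluster_name,
        "--resource-group", resource_group,
        "--subscription", subscription_id,
    ]


def set_cluster_state(
    action: str, cluster_name: str, resource_group: str, subscription_id: str
) -> None:
    """Run the command; raises CalledProcessError if the CLI reports a failure."""
    command = kusto_cluster_command(action, cluster_name, resource_group, subscription_id)
    subprocess.run(command, check=True)


# Hypothetical names; only the command is printed here, nothing is executed.
print(" ".join(kusto_cluster_command("start", "adx-region-b", "rg-bcdr", "<subscriptionId>")))
```

Keeping the command construction separate from its execution makes it possible to review or log the exact call before a passive cluster is started.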

As you can see in the drawing, only one cluster consumes from the EventHub. The primary cluster in Region A performs a continuous export of all data to a storage account. The secondary replicas access the data using external tables.

The secondary clusters in Region B and C now do not need to be turned on 24/7, which reduces the cost significantly. The drawback of this solution is that, in most cases, the performance of the secondary clusters will not be as good as that of the primary cluster.

How to implement a highly available application service

This section demonstrates how to create an Azure App Service that supports a connection to a single primary and multiple secondary Azure Data Explorer clusters. The following picture illustrates the setup (the management activities and the data ingestion have been intentionally left out).

Having multiple connections to replicas in the same app service increases the availability of the overall solution (regional outages are not the only thing that can interrupt the service). Recently we pushed some boilerplate code for an app service to GitHub: https://github.com/Azure/azure-kusto-bcdr-boilerplate. In order to implement a multi-ADX client, the AdxBcdrClient class has been created. Each query that is executed using this client is sent first to the primary ADX cluster and, in case it fails, to the secondaries.

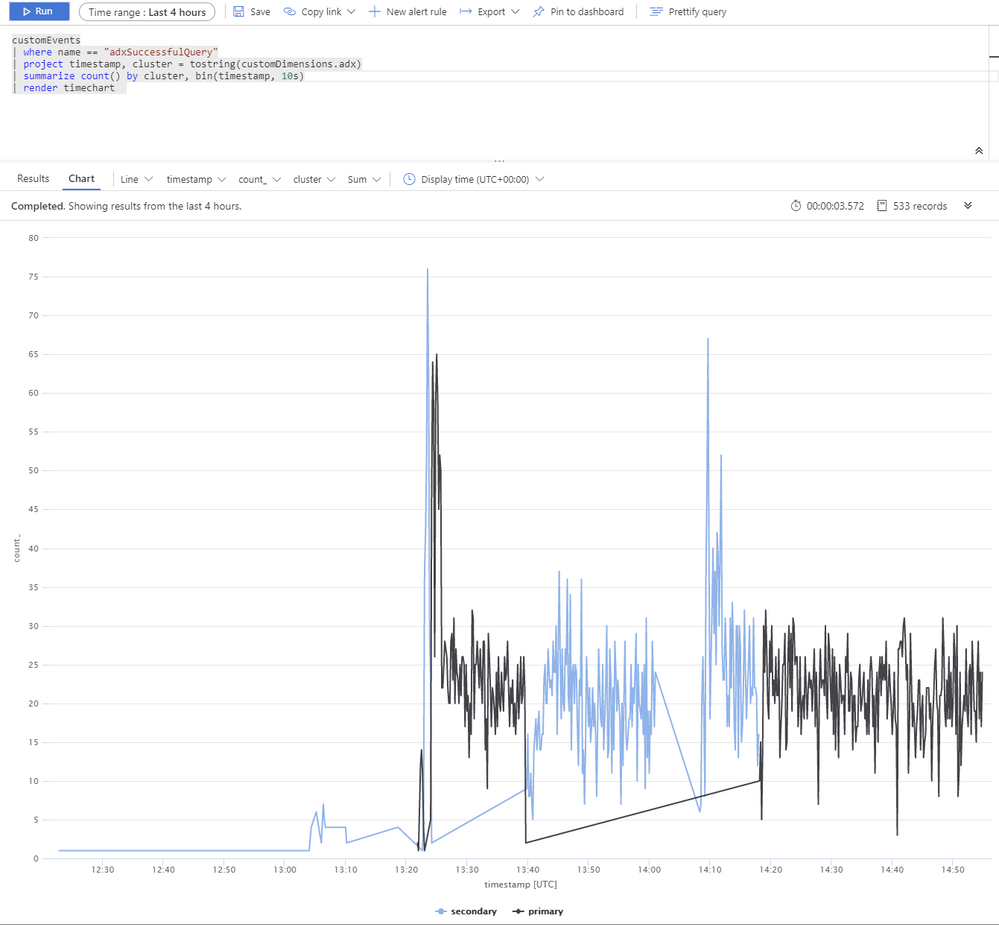

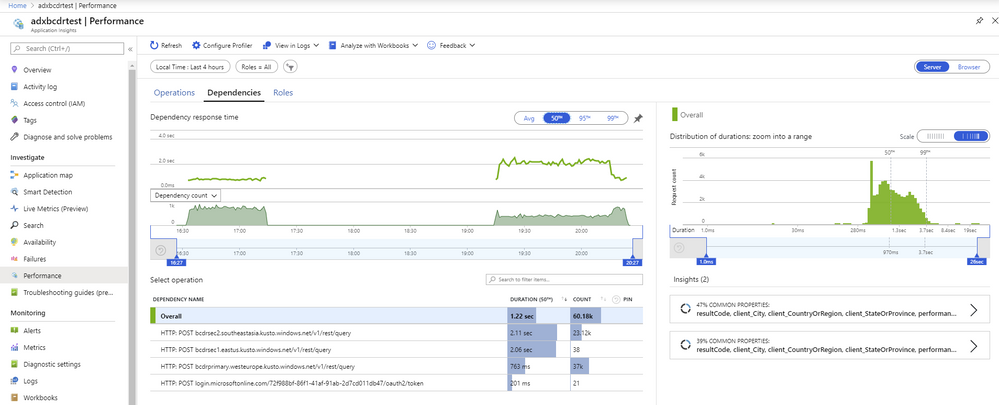
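The idea of trying the primary first and the secondaries only on failure can be sketched in a few lines. This is not the code of the AdxBcdrClient from the repository; it is a minimal illustration under the assumption that each cluster is represented by a callable that runs a query and raises on failure. The class and variable names are hypothetical.

```python
from typing import Any, Callable, List, Sequence, Tuple

# Stand-in for a per-cluster query call: takes the query text, returns the result.
QueryFn = Callable[[str], Any]


class FailoverQueryClient:
    """Send each query to the primary first, then to the secondaries in order."""

    def __init__(
        self,
        primary: Tuple[str, QueryFn],
        secondaries: Sequence[Tuple[str, QueryFn]],
    ) -> None:
        self._clusters: List[Tuple[str, QueryFn]] = [primary, *secondaries]

    def query(self, kql: str) -> Tuple[str, Any]:
        """Return (cluster_name, result) from the first cluster that answers."""
        errors: List[str] = []
        for name, run_query in self._clusters:
            try:
                return name, run_query(kql)
            except Exception as error:  # any failure triggers the next replica
                errors.append(f"{name}: {error}")
        raise RuntimeError("all clusters failed: " + "; ".join(errors))


def unavailable(kql: str) -> Any:
    """Simulates a cluster that is down."""
    raise ConnectionError("cluster not reachable")


client = FailoverQueryClient(
    primary=("west-europe", unavailable),
    secondaries=[("east-us", lambda kql: [{"Count": 42}])],
)
print(client.query("Events | count"))
```

Returning the name of the cluster that answered makes it straightforward to emit a custom metric per request, similar to the request distribution measurements described next.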

In order to measure the performance and the distribution of requests across the primary and secondary clusters, custom Application Insights metrics have been used. Some of the results captured during the test:

The following picture shows that multiple Azure Data Explorer clusters were used during the test. The reason for this is a simulated outage of primary and secondary clusters to verify that the app service BCDR client behaves as intended.

The Azure Data Explorer clusters were distributed across West Europe (2xD14v2 primary), South East Asia and East US (2xD11v2). The slower response times can be explained by the different SKUs and by queries that travel across the globe.

One last extension to this architecture could be the dynamic or static routing of the requests using Azure Traffic Manager routing methods. Azure Traffic Manager is a DNS-based traffic load balancer that enables you to distribute the app service traffic optimally to services across global Azure regions, while providing high availability and responsiveness. Alternatively, one could use Azure Front Door based routing. An excellent comparison between both can be found here.

How to optimize cost in an active / active architecture

Adding replicas to an active / active architecture increases the cost linearly. Besides the cost for compute nodes, storage and markup, one needs to take the increased networking cost for bandwidth into consideration.

Using the optimized autoscale feature, one can configure the horizontal scaling for the secondary clusters. They should be sized to handle the ingestion load. Once the primary cluster is not reachable, they receive more traffic and scale out according to the configuration. In my previous example this saved roughly 50% of the cost compared to having the same horizontal and vertical scale on all replicas.
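To see how such a saving can come about, here is a small back-of-the-envelope calculation. The node counts are purely hypothetical and were chosen for illustration; the sketch assumes that every node costs the same and ignores storage, markup and networking.

```python
from typing import Sequence


def relative_compute_cost(node_counts: Sequence[int]) -> int:
    """Compute cost in 'node units', assuming every node costs the same."""
    return sum(node_counts)


def saving(full_scale: Sequence[int], reduced: Sequence[int]) -> float:
    """Fraction of compute cost saved by the reduced setup."""
    return 1 - relative_compute_cost(reduced) / relative_compute_cost(full_scale)


# Hypothetical setup: one primary and two secondaries.
full_scale = [8, 8, 8]   # all replicas sized like the primary
autoscaled = [8, 2, 2]   # secondaries sized for the ingestion load only

print(f"{saving(full_scale, autoscaled):.0%}")  # prints "50%"
```

The actual saving depends on how small the secondaries can be while still keeping up with ingestion, and on how often they have to scale out.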

Conclusion

Even though Azure Data Explorer does not offer an out-of-the-box business continuity and disaster recovery solution, this article has outlined different strategies to mitigate the risk. Depending on the investment, the user can minimize the impact of a regional outage.