This post has been republished via RSS; it originally appeared at: Azure Data Explorer articles.

Introduction

Azure Data Explorer (ADX) is commonly used to store and analyze event logs of business processes. These logs are generated everywhere – applications emit events capturing user actions, IoT devices send sensors data, cloud services generate monitoring logs etc. Once the data is stored in ADX, it can be analyzed for insights. Kusto Query Language (KQL) has extensive set of operators and functions for various types of analysis, including relational operators (filtering, joining, aggregations etc.), time series analysis (anomaly detection, forecasting etc.), advanced plugins (root cause analysis, user analytics) and even extensibility to embed external Python/R packages. Today we are delighted to introduce the scan operator, adding native process mining and temporal analytics to ADX!

Process Mining

Process mining is a new emerging domain, dealing with analysis of events logs to discover the underlying process models, validate, and potentially improve them. In that context, an event contains at least process instance id, timestamp, and action. A process instance id (a.k.a. case id) might be identifier(s) of the specific user, device, session, or any other native partitioning of the events log. A process model describes a sequence of actions, commonly with time constraints, to achieve specific task. Some examples for process models:

- Users login to a shopping web site, browse products, add to cart, check out, pay and logout.

- A patient is admitted at a clinic, tested for pulse and blood pressure by the triage team, checked by the specialist, diagnosed, and dismissed.

- A washing machine cycle: starting, weighing the laundry, filling water, heating, washing, rinsing, spinning, and finishing.

There are 3 classes of process mining techniques:

- Discovery – automatically extract process model(s) out of the raw event logs.

- Conformance checking – comparing existing process model with actual event log, detect and analyze discrepancies.

- Performance mining – enhance the existing process to improve performance – reduce time between steps, improve retention etc.

The ‘scan’ operator

The scan operator is arguably the most advanced analytics operator in ADX. It extends ADX native analytics to include process mining, user analytics, recursive calculations and more. The user defines a process model in KQL, then feeds in a huge events log table. ADX scans it and extracts the instances (each one is an events sequence) conforming to that process model, filtering those deviating from it. The model definition syntax lets the user define sequential states and forward transition rules from the first to the last state. These states and rules are translated to a set of simple state machines that define the scan logic. Let’s review a few examples to understand the syntax, as well as its power and versatility for a wide range of scenarios.

Searching for ‘rallies’ of top stocks

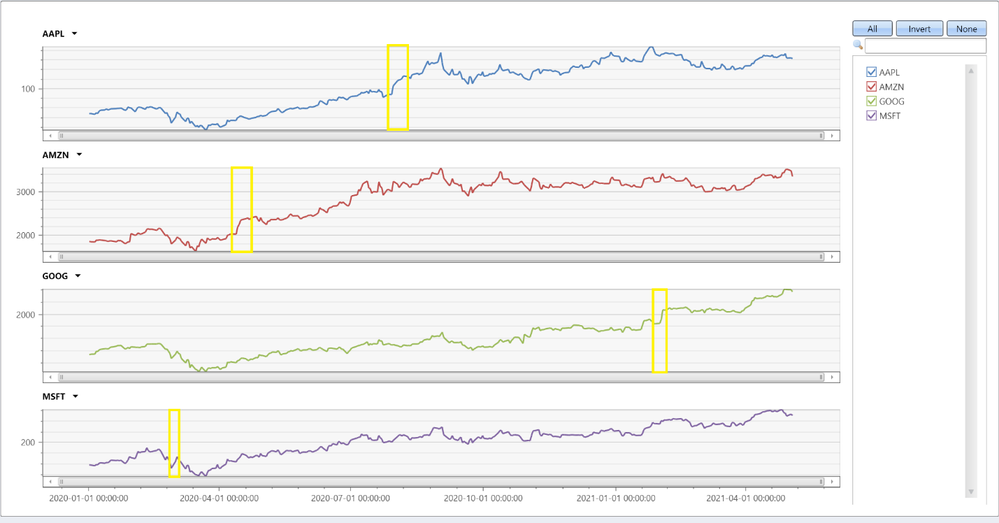

Here we analyze historical prices of top tech stocks from 2019 till today. This period was interesting, from start of 2020 till today the market is impacted by the Covid-19 pandemic, still the overall trend of these stocks is positive. In the query below we look for longest sequences of increasing price per each stock. The following query is built by these steps:

- Pre-processing:

- For each stock order the prices by time and calculate the daily change.

- Extracting sequences using the ‘scan’ operator:

- A sequence starts when the price increases.

- The sequence continues as long as the price increases or doesn’t change.

- The sequence ends when the price decreases.

- Post-processing:

- For each sequence delete the last event, as it contains the price decrease.

- Summarize the length and delta per sequence.

- For each stock extract the sequence with the biggest percentage increase.

|

Ticker |

start_date |

end_date |

trading_days |

delta_pct |

start_AdjClose |

total_delta |

|

AAPL |

2020-07-29 |

2020-08-07 |

7 |

20.752 |

93.75 |

19.455 |

|

AMZN |

2020-04-13 |

2020-04-21 |

6 |

18.461 |

2040 |

376.610 |

|

MSFT |

2020-02-28 |

2020-03-03 |

2 |

14.034 |

152.410 |

21.389 |

|

GOOG |

2021-01-28 |

2021-02-03 |

4 |

12.422 |

1843.939 |

229.060 |

We can see that Apple had the longest and biggest rally, 7 consecutive trading days with almost 20% increase! Let’s chart all stocks:

We can nicely see the biggest increases, yellow highlighted.

Analyzing users’ mitigations for failing KQL queries

In this example we analyze sequences of users' queries following a failed one, trying to extract interesting insights. The following query is quite complex, its steps are:

- Pre-processing:

- For each user order the queries by time.

- Filter consecutive queries with less than a second gap – these are likely automated queries.

- Extracting sequences using the ‘scan’ operator:

- Start a sequence by a query that was not completed successfully.

- Continue the sequence until successful query or time out.

- Each step updates the sequence length and the number of retries (of exactly the same query).

- Post-processing:

- Aggregate sequences by user and retries only flag.

- Final aggregation by sequences length, final state and retries flag.

Results:

|

SequenceLen |

FinalState |

RetriesOnly |

SequenceNum |

|

2 |

Completed |

False |

306 |

|

2 |

Completed |

True |

20 |

|

3 |

Completed |

False |

66 |

|

3 |

Completed |

True |

2 |

|

4 |

Completed |

False |

25 |

|

4 |

Completed |

True |

1 |

|

5 |

Completed |

False |

11 |

|

6 |

Completed |

False |

4 |

|

2 |

Failed |

False |

8 |

|

2 |

Failed |

True |

20 |

|

3 |

Failed |

False |

2 |

|

3 |

Failed |

True |

7 |

|

4 |

Failed |

False |

2 |

|

4 |

Failed |

True |

1 |

|

5 |

Failed |

False |

2 |

|

5 |

Failed |

True |

32 |

|

6 |

Failed |

False |

13 |

|

7 |

Failed |

False |

3 |

|

11 |

Failed |

False |

1 |

Let’s first look on sequences of 2 events, i.e. a failed query followed by a successful one or a time out. These sequences account for 354/526=67% of all sequences. We can see that 326/526=62% of the failures were resolved by a single follow-up query. Out of these, 306/326=94% queries completed successfully after modifying the query, while only 20/326=6% completed just by retrying the same query. Looking on sequences of 3 events, additional 68/526=12.9% of the failed queries were resolved, but retrying the same query twice is much less effective: only 2/68=2.9% succeeded. The last thing to note is that as the sequences become longer, the chance of successful completion decreases, only a few sequences of 6 queries end successfully, longer ones just continued to fail, until the users abandoned them.

Summary

In this short blog we have seen only 2 examples of ‘scan’, however the scope and power of this operator is far beyond these specific use cases. This operator is very effective for various scenarios, from simple fill forward table columns or calculating cumulative sums, through analyzing events preceding some failure for root cause analysis, and up to funnel analysis, rectifying obstacles in UX workflows, improving performance and beyond. Have a look at scan doc, where you can learn how to use it and review more interesting examples. Note that the scan operator is new, currently in preview, still you are very welcome to try it and share your feedback with us!