This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

Until now, Jupyter notebooks in Microsoft Sentinel have been integrated with Azure Machine Learning. This functionality supports users who want to incorporate notebooks, popular open-source machine learning toolkits and libraries such as TensorFlow, as well as their own custom models, into security workflows.

We are delighted to announce that Microsoft Sentinel Notebooks now integrates with Azure Synapse Analytics for large-scale security analytics!

The new Azure Synapse integration provides additional analytic horsepower, enabling:

- Security big-data analytics, using a cost-optimized, fully managed, serverless Azure Synapse Apache Spark compute pool.

- Cost-effective Data Lake access, building analytics on historical data via Azure Data Lake Storage Gen2, which is a set of capabilities dedicated to big data analytics and is built on top of Azure Blob Storage.

- Integration of multiple data stores, and support for different data formats, in security workflows.

- PySpark, a Python-based API for using the Spark framework in combination with Python, reducing the need to learn a new programming language if you're already familiar with Python.

For example, you may want to use notebooks with Azure Synapse to:

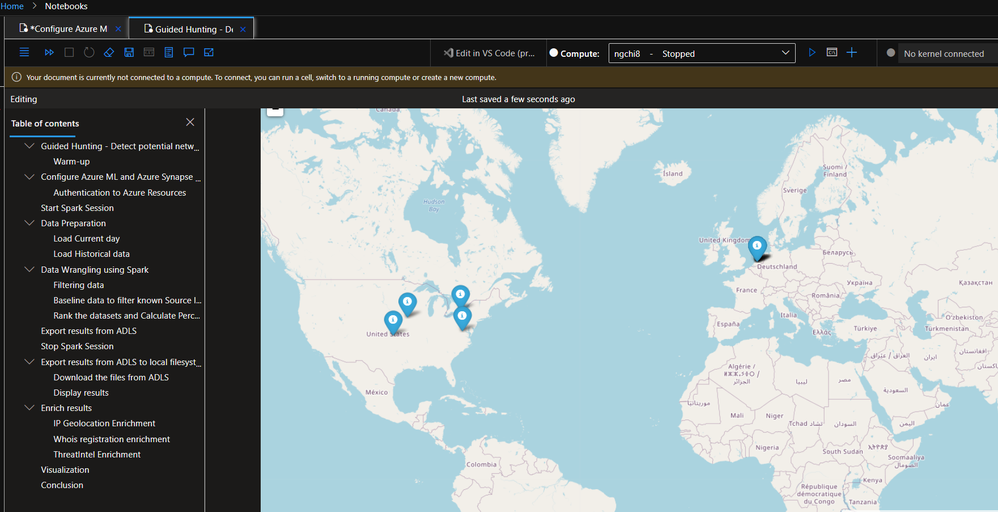

- Hunt for anomalous behaviors in large network firewall logs to detect potential network beaconing.

- Train and build machine learning models on top of data collected from a Log Analytics workspace.
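The first scenario above can be sketched in a few lines. This is only an illustration of the idea, not the logic of the sample notebook: it assumes beaconing shows up as connections between the same source and destination at near-regular intervals, and the function name `find_beaconing`, its thresholds, and the sample IP addresses are all hypothetical. It uses plain Python on a tiny in-memory list; at scale, the same group-and-compare logic would typically be expressed in PySpark on a Spark pool.

```python
from collections import defaultdict
from statistics import mean, pstdev


def find_beaconing(events, min_connections=5, max_jitter=0.1):
    """Flag (source, destination) pairs that connect at near-regular intervals.

    events: iterable of (timestamp_seconds, source_ip, destination_ip) tuples.
    Returns a list of (source_ip, destination_ip, mean_interval_seconds).
    The thresholds are illustrative assumptions, not recommended values.
    """
    # Group connection timestamps by (source, destination) pair.
    timestamps = defaultdict(list)
    for ts, src, dst in events:
        timestamps[(src, dst)].append(ts)

    suspects = []
    for (src, dst), times in sorted(timestamps.items()):
        if len(times) < min_connections:
            continue  # too few connections to judge regularity
        times.sort()
        # Time deltas between consecutive connections.
        deltas = [later - earlier for earlier, later in zip(times, times[1:])]
        avg = mean(deltas)
        if avg == 0:
            continue  # all connections share one timestamp
        # Coefficient of variation: a low value means very regular intervals.
        jitter = pstdev(deltas) / avg
        if jitter <= max_jitter:
            suspects.append((src, dst, avg))
    return suspects


# Hypothetical sample data: one host calling out every 60 seconds,
# and one host with irregular traffic.
sample_events = (
    [(60 * i, "10.0.0.5", "203.0.113.7") for i in range(10)]
    + [(t, "10.0.0.8", "198.51.100.2") for t in (3, 40, 45, 300, 310, 900)]
)
print(find_beaconing(sample_events))
```

Only the host with the fixed 60-second interval is flagged here; real firewall data would need tuning of both thresholds and filtering of known-benign periodic traffic.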

Why should you care?

In the era of Big Data, Artificial Intelligence, and the Internet of Things, data collection volumes keep growing. Many organizations collect billions of security events and terabytes of logs per day for their security monitoring, and these numbers are projected to grow exponentially over time.

To stay ahead of cyber threats, big data analytics in cybersecurity is now more essential than ever. Big data analytics enables the fast processing of enormous amounts of log data. The combination of scale and speed in data processing is critical for timely detection of anomalies and attack patterns. Faster detection, in turn, reduces vulnerabilities and improves cyber resilience.

Building big data analytics can be challenging, or even impossible, without proper tools and infrastructure.

One requirement is data storage that is cost-effective enough to retain vast quantities of logs for the long term. It should integrate easily with multiple data stores and support different data formats, whether structured, semi-structured, or unstructured. For this, we use Azure Data Lake Storage Gen2, which provides file system semantics, file-level security, and scale.

Another critical component is a parallel processing framework that supports in-memory processing to boost the performance of batch-based analytics. For this, we use Apache Spark in Azure Synapse Analytics, one of Microsoft's implementations of Apache Spark in the cloud. Azure Synapse makes it easy to create and configure a serverless Apache Spark pool in Azure. Spark pools in Azure Synapse are compatible with Azure Storage and Azure Data Lake Storage Gen2, so you can use them to process your security data stored in Azure. You can configure and enable Spark pools for data preparation and data processing directly from Microsoft Sentinel notebooks, which run in the Azure Machine Learning environment.
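To make the storage compatibility concrete: Spark addresses Azure Data Lake Storage Gen2 paths with `abfss://` URIs. The following is a minimal sketch, not code from the sample notebooks. The account name `contosodatalake`, the container `security-logs`, the folder path, and both helper functions are hypothetical, and the sketch assumes the logs are stored as JSON files.

```python
def adls_gen2_uri(account: str, container: str, path: str = "") -> str:
    """Build an abfss:// URI for a path in an Azure Data Lake Storage Gen2 container."""
    return f"abfss://{container}@{account}.dfs.core.windows.net/{path.lstrip('/')}"


def load_security_logs(spark, account: str, container: str, path: str):
    """Read JSON log files from the data lake into a Spark DataFrame.

    `spark` is an existing SparkSession, such as the one available in a
    Spark pool session. Assumes the files under `path` are JSON.
    """
    return spark.read.json(adls_gen2_uri(account, container, path))


# Hypothetical account, container, and folder names, for illustration only.
print(adls_gen2_uri("contosodatalake", "security-logs", "/firewall/2021/"))
```

Keeping the URI construction in its own function makes it easy to check the path before a Spark session is involved; `load_security_logs` only runs where a `SparkSession` is available.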

Through its deep integration with Spark technologies for Big Data and with Azure Machine Learning, Azure Synapse enables you to build batch-based analytics on Microsoft Sentinel and external security data via Microsoft Sentinel notebooks. This capability customizes and complements the core Microsoft Sentinel hunting and investigation experience.

How does the integration work?

The Microsoft Sentinel Notebooks integration with Azure Synapse provides these capabilities:

- An integrated UX that enables users to create a new Azure Synapse workspace directly from the Microsoft Sentinel Notebooks page. To learn more, see Creating a new Azure Synapse workspace.

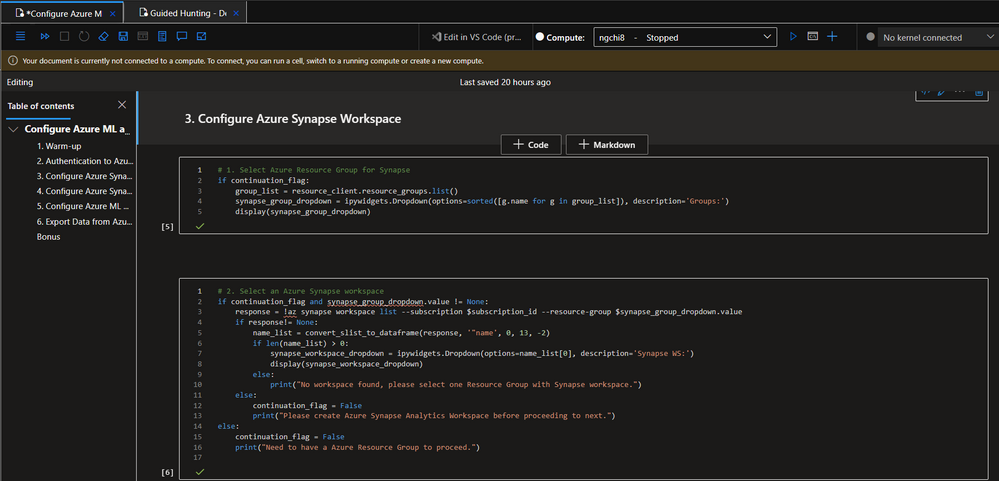

- A sample notebook that provides step-by-step, one-time configuration for the Azure Synapse environment. The notebook guides you to:

  - Set up a continuous data export pipeline from your Log Analytics workspace to Azure Data Lake.

  - Create a linked service between your Azure Synapse and Azure Machine Learning workspaces.

  - Create a Spark pool and register it to the linked service, which provides the compute capabilities necessary for the data preparation and data processing steps.

- A sample notebook that demonstrates a real-world security use case built on the big data capabilities of the integration: hunting for anomalous behaviors in network firewall logs at scale to detect potential network beaconing. A separate blog post details the prerequisites, data preparation, and data analysis with a Synapse Spark pool, as well as what you can expect to see in the results.

This is just one example of how you can use the Synapse integration with notebooks to hunt for anomalies in large historical datasets at scale.

Summary

I hope you find some inspiration from this sample security scenario and start building your own analytics using this integration.

Try out the new capability and let us know what you think!

Further reading:

- Large-scale security analytics with Microsoft Sentinel Notebooks and Azure Synapse

- Detect network beaconing patterns using Azure Synapse and Sentinel Notebooks

- Azure Synapse Analytics overview

- Apache Spark on Azure Synapse overview

- Azure Data Lake Storage Gen2 overview

Special thanks to