This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

The key to unlocking usage of GPUs in the cloud has been the cloud’s ability to make GPU usage more affordable and accessible. Customers need the parallel processing power of GPUs but want it with a more flexible consumption model. Fortunately, the Azure ND A100 v4 series virtual machines (in public preview), powered by NVIDIA A100 Tensor Core GPUs, answer this call…and then some.

Back in November 2020, we made the initial announcement about the ND A100 v4 series as being ideal for high-end deep learning training, machine learning and analytics tasks, and tightly coupled scale-up and scale-out GPU-accelerated HPC workloads. The ND A100 v4 VM series starts with a single virtual machine (VM) and eight NVIDIA Ampere A100 Tensor Core GPUs with third generation NVIDIA NVLink connections based on the NVIDIA HGX platform, and can scale up to thousands of NVIDIA A100 GPUs with an unprecedented 1.6 Tb/s of interconnect bandwidth per VM.

We initially allocated a single NVIDIA HDR 200 Gb/s InfiniBand interconnect for the NDv2, which was our first deep learning offering. With the recent addition of NDv4 to the series, we offer a dedicated InfiniBand interconnect per physical GPU, giving you up to eight times greater interconnect performance per VM. With a 1:1 relationship between network adapter and physical GPU, the NDv4 with A100 GPUs compares well in value and versatility to the bare-metal GPU clusters one may find on premises.
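As a quick sanity check on these figures, the per-VM number follows directly from the 1:1 adapter-to-GPU layout. The sketch below assumes each of the eight adapters is a 200 Gb/s link, which is what 1.6 Tb/s spread across eight GPUs implies; the function name is ours and purely illustrative.

```python
def aggregate_bandwidth_tbps(num_gpus: int, link_gbps: int) -> float:
    """Total interconnect bandwidth per VM in Tb/s, assuming one link per GPU."""
    return num_gpus * link_gbps / 1000


# Eight GPUs, each with its own (assumed) 200 Gb/s InfiniBand link.
print(aggregate_bandwidth_tbps(8, 200))  # 1.6
```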

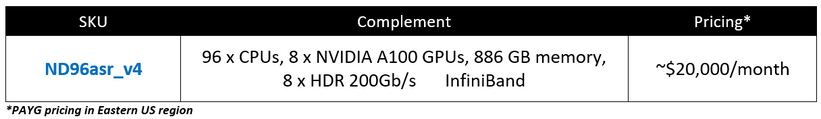

There is a single ND A100 v4 SKU currently in public preview, Standard_ND96asr_v4.

This blog covers the following areas for the ND A100 v4 SKU:

- Understanding the basic complement of resources

- Validating proper allocations of both physical and virtual resources

- Measuring the performance capabilities of your VM against the benchmark data

- Performing simple health checks

Let’s get started!

The Basics

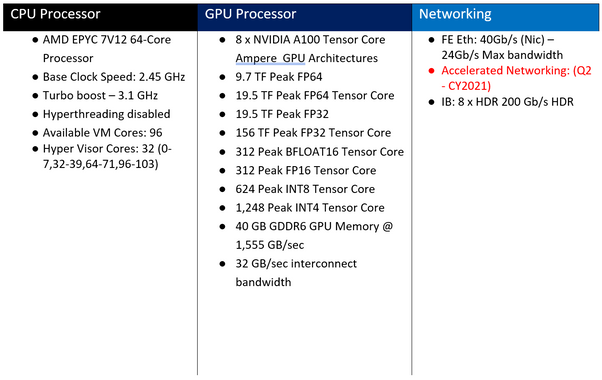

Here’s a quick, concise rundown of the componentry and specification for CPU, GPU, and networking:

Supported Regions

- East US

- West US

- South Central US

- West Europe

Recommended Marketplace Images

- CentOS HPC 7.9

- Ubuntu HPC 18.04

- Ubuntu HPC 20.04

Getting to know your VM

Physical core allocation

First, let’s confirm that your VM has the appropriate number of physical GPUs allocated. The first line of the diagram below shows the command to use, outlined in green. After you enter that command, a table is displayed in which an indicator (outlined in orange) shows the number of physical GPUs associated with your VM. Remember, the numbering of these physical GPUs is zero-based, not one-based: the list should start at “0” and go up to “7” to represent eight physical GPUs.
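If you would rather script this check than read the table by eye, something along these lines may help. It is a minimal sketch that assumes the NVIDIA driver is installed and uses nvidia-smi's `--query-gpu=index --format=csv,noheader` query; the helper names are hypothetical and not part of any Azure or NVIDIA tooling.

```python
import subprocess
from typing import List

EXPECTED_GPUS = 8  # the VM described here should expose GPUs 0 through 7


def parse_gpu_indices(query_output: str) -> List[int]:
    """Parse one GPU index per line, as printed by
    `nvidia-smi --query-gpu=index --format=csv,noheader`."""
    return [int(line) for line in query_output.splitlines() if line.strip()]


def gpus_look_complete(indices: List[int], expected: int = EXPECTED_GPUS) -> bool:
    """True when the indices are exactly 0 .. expected-1 (zero-based numbering)."""
    return sorted(indices) == list(range(expected))


def query_gpu_indices() -> List[int]:
    """Ask nvidia-smi for the GPU indices; only works on a VM with the driver."""
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=index", "--format=csv,noheader"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_indices(result.stdout)
```

On the VM itself, `gpus_look_complete(query_gpu_indices())` should return `True` when all eight GPUs are visible.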

Node Layout (Standard_ND96asr_v4):

Once the physical complement of GPUs is validated, most users like to confirm the layout of their resources (processors, memory, network, etc.) so they can proceed with their work with high confidence. The layout tables below will help users confirm they have the right resources for the right purposes.

If you provision this VM and then type in the command outlined in green, you should see something similar to the layout below. As in the previous section, the area outlined in orange in the layout image below indicates the number of physical A100 GPUs on the VM. This will help you understand the layout of the VM in order to properly configure your application code to achieve the best performance possible.

Since this VM has multiple GPUs, it exposes a non-uniform memory access (NUMA) layout, which gives developers a better ability to tune their applications to the VM.

Here’s another view of the underlying cores and memory layout, this time showing the distances between the NUMA nodes in a simplified overall layout. Run the command outlined in green and you should see this table displayed.

Note: Make sure that you have already installed numactl. On the Ubuntu images, this can be done with “sudo apt -y install numactl”.
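To use the NUMA layout programmatically, for example when deciding which cores to pin a process to, you can parse the text that `numactl --hardware` prints. The sketch below assumes the usual `node N cpus: ...` lines in that output; the function names are hypothetical.

```python
import re
import subprocess
from typing import Dict, List


def parse_numa_cpus(numactl_output: str) -> Dict[int, List[int]]:
    """Map each NUMA node id to its CPU ids, from `numactl --hardware` text."""
    nodes: Dict[int, List[int]] = {}
    for line in numactl_output.splitlines():
        # Only lines of the form "node 0 cpus: 0 1 2 ..." carry the CPU list.
        match = re.match(r"node (\d+) cpus:(.*)", line)
        if match:
            nodes[int(match.group(1))] = [int(c) for c in match.group(2).split()]
    return nodes


def read_numa_layout() -> Dict[int, List[int]]:
    """Run numactl on the VM and return the node-to-CPU mapping."""
    result = subprocess.run(["numactl", "--hardware"],
                            capture_output=True, text=True, check=True)
    return parse_numa_cpus(result.stdout)
```

Calling `read_numa_layout()` on the VM returns a dictionary you can compare against the layout table shown by the command above.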

Memory Performance Verification

There are two main memory tests you can use to verify the memory performance of your system. The first, the Stream test, verifies the memory-to-CPU bandwidth. The second, GPU to Host PCIe, measures the memory bandwidth between each GPU and the host PCIe channel. We share both test processes here so you can run them on your own VM.

Stream Test

- Download https://bmhpcwus2.blob.core.windows.net/share/AMD/20210126-stream.tgz

- Uncompress the file (TAR) and change to the Stream directory

- Run the below command:

./stream_lowOverhead -nRep 50 -codeAlg 7 -cores 1 2 5 9 10 13 17 18 21 25 26 29 33 34 37 41 42 45 49 50 53 57 58 61 65 66 69 73 74 77 81 82 85 89 90 93 -memMB 1000 -alignKB 4096 -aPadB 0 -bPadB 0 -cPadB 0 -testMask 15

The TRIADD Bandwidth number (outlined in green) should be in the range of ~300 GB/s, as it is here. If it is, you’re good to go!

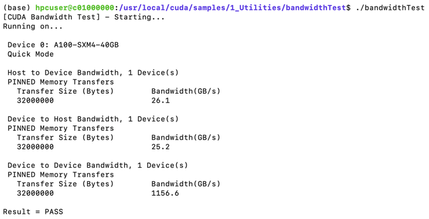
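The long core list in the Stream command is easy to mistype. It appears to follow a regular pattern (cores 1, 2 and 5 within each block of eight cores, over twelve blocks), so you can generate it instead. This is a sketch based only on the pattern visible in the command; the post does not say why those cores were chosen, and the helper names are ours.

```python
from typing import List


def stream_core_list(groups: int = 12) -> List[int]:
    """Cores 1, 2 and 5 in every block of eight: 1 2 5 9 10 13 ... 89 90 93."""
    return [8 * g + offset for g in range(groups) for offset in (1, 2, 5)]


def stream_command(cores: List[int]) -> List[str]:
    """Argument list for stream_lowOverhead, using the flags from the command above."""
    return (
        ["./stream_lowOverhead", "-nRep", "50", "-codeAlg", "7", "-cores"]
        + [str(c) for c in cores]
        + ["-memMB", "1000", "-alignKB", "4096",
           "-aPadB", "0", "-bPadB", "0", "-cPadB", "0", "-testMask", "15"]
    )


# Print the full command line so it can be pasted into a shell.
print(" ".join(stream_command(stream_core_list())))
```

The printed line should match the command given earlier, which makes it a handy way to double-check a hand-typed version.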

GPU to host PCIe bandwidth

- Note: build the bandwidthTest executable before running the test:

- cd /usr/local/cuda/samples/1_Utilities/bandwidthTest/

- sudo make (this creates the bandwidthTest executable)
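Once the executable is built, you will probably want to run it against each of the eight GPUs in turn. The exact invocation used for this post is not shown here, so the sketch below is an assumption: it builds one command per GPU using options listed in the sample's own `--help` output (`--device`, `--memory`, `--htod`, `--dtoh`). The function names are hypothetical.

```python
from typing import List

BANDWIDTH_TEST = "./bandwidthTest"  # produced by `sudo make` in the samples directory


def bandwidth_test_command(gpu_index: int) -> List[str]:
    """Host-to-device and device-to-host copies with pinned memory on one GPU."""
    return [BANDWIDTH_TEST, f"--device={gpu_index}",
            "--memory=pinned", "--htod", "--dtoh"]


def all_gpu_commands(num_gpus: int = 8) -> List[List[str]]:
    """One command per GPU, using zero-based GPU indices."""
    return [bandwidth_test_command(i) for i in range(num_gpus)]


# Print the commands; run them from the bandwidthTest directory on the VM.
for cmd in all_gpu_commands():
    print(" ".join(cmd))
```

Each command can be handed to `subprocess.run` or pasted into a shell. Compare the reported bandwidth across GPUs; a GPU that is far below the others deserves a closer look.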

Expected Performance Results

Benchmarks are commonly used to validate just how far you can push a computing resource while still maintaining confidence in the processing outcome. We have performed some benchmarking of the NVIDIA A100 GPUs on Azure using these new VM SKUs and provided the results in the data tables below.

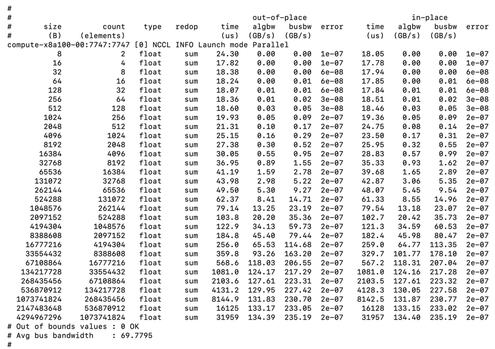

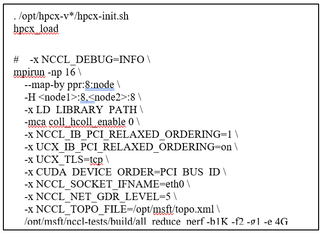

Benchmark Type: Communication Routine

Name: NCCL - Allreduce

Single VM (8 GPUs)

2 VMs (16 GPUs)

Results for 1 – 32 VMs
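When reading all-reduce results, keep in mind that the nccl-tests suite reports two figures: algorithm bandwidth and bus bandwidth. For all-reduce, it derives bus bandwidth by scaling algorithm bandwidth by 2(n-1)/n, where n is the number of ranks. The sketch below assumes one rank per GPU and applies that conversion; the function name is ours.

```python
def allreduce_bus_bandwidth(alg_bw_gbs: float, num_ranks: int) -> float:
    """Bus bandwidth from algorithm bandwidth, per the nccl-tests all-reduce convention."""
    return alg_bw_gbs * 2 * (num_ranks - 1) / num_ranks


# Scaling factors for a single VM (8 GPUs) and for 2 VMs (16 GPUs).
print(allreduce_bus_bandwidth(1.0, 8))   # 1.75
print(allreduce_bus_bandwidth(1.0, 16))  # 1.875
```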

HPL

- NVIDIA testing results using their proprietary HPL container.

- Azure testing results using the NVIDIA HPC container (https://ngc.nvidia.com/catalog/containers/nvidia:hpc-benchmarks)

Health Checking

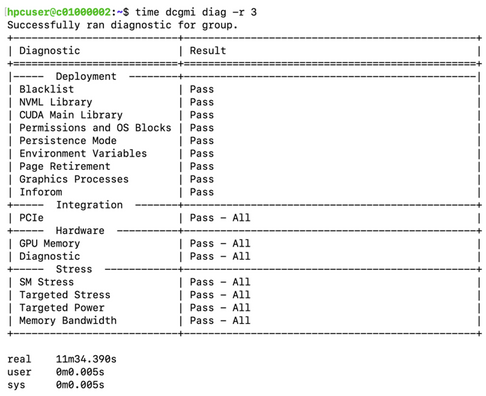

To further verify that the GPUs are healthy, you can use the Data Center GPU Manager (DCGM) to run some stress tests. DCGM may need to be installed if it is not already on the VM. You can run three levels of diagnostics with DCGM.

If you see failures in the hardware or stress sections of dcgmi diag, there is a good chance that something is wrong with one or more GPUs on the VM.

dcgmi diag -r 1 (runs in under a minute and verifies that the right software is installed and that the GPUs are visible):

To remove this error, enable persistence mode by running (as root) “nvidia-smi -pm 1”:

dcgmi diag -r 2 (runs in ~2 minutes and performs the above tests along with a GPU memory check):

dcgmi diag -r 3 (runs in ~12 minutes and performs the above tests as well as some stress tests)
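If you run these diagnostics regularly, you may want to flag failures automatically rather than scan the output by eye. The sketch below assumes that dcgmi diag prints its results as a two-column table of `| test name | result |` rows with results such as Pass or Fail; check that against the output on your VM before relying on it. The helper names are hypothetical.

```python
import subprocess
from typing import List


def failed_checks(diag_output: str) -> List[str]:
    """Return the names of table rows whose result column starts with 'Fail'."""
    failures = []
    for line in diag_output.splitlines():
        # Split "| name | result |" into its two cells; other lines are skipped.
        cells = [c.strip() for c in line.strip().strip("|").split("|")]
        if len(cells) == 2 and cells[1].startswith("Fail"):
            failures.append(cells[0])
    return failures


def run_diag(level: int) -> str:
    """Run `dcgmi diag -r <level>` (1, 2 or 3) and return its text output."""
    result = subprocess.run(["dcgmi", "diag", "-r", str(level)],
                            capture_output=True, text=True)
    return result.stdout
```

For example, `failed_checks(run_diag(1))` returns an empty list when the assumed table reports no failures.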

Additional Resources: