This post has been republished via RSS; it originally appeared at: Azure Database Support Blog articles.

When supporting Azure SQL DB Connectivity case we sometimes receive errors message saying that was an Azure SQL DB issue, but sometimes this is just an application problem.

We as Database Administrators do not need to be WebApp experts but we need to have some tools to check if issue is on Azure SQL DB or on the client side

I've created an Azure Function sample code for testing https://github.com/FonsecaSergio/AzureFunctionAppTestConnectivity (Function_ConnectSQL_PoolLimit) and in this test I will just open multiple connection in a while loop. This troubleshooting steps should be similar also to a WebApp.

while (Aux++ < LoopLimit)

{

log.LogInformation(String.Format("Loop {0}", Aux));

SqlConnection sqlConnection2 = new SqlConnection(ConnectionString);

sqlConnection2.Open();

}

So lets start breaking the limits in the scenarios below:

1 - Application connection pool setting

2 - Pre-login handshake error / WebApp high CPU

3 - Azure SQL DB connection limit

4 - SNAT Port Exhaustion

1 - Application connection pool setting

For this test I'll limit the application pool limit to 10 connections using connection string parameter "Max Pool Size=10"

Server=tcp:SERVERNAME.database.windows.net,1433;Initial Catalog=sandbox;

Persist Security Info=False;User ID=XXXXXXXXX;Password=XXXXXXXXX;

Encrypt=True;TrustServerCertificate=False;Connection Timeout=30;Max Pool Size=10;

And I get error like below

Timeout expired. The timeout period elapsed prior to obtaining a connection from the pool.

This may have occurred because all pooled connections were in use and max pool size was reached.

As the message says its a connection pool setting, not an Azure SQL DB limit

Solution

In this scenario you can just config your application to be able to open more connections, or avoid opening too many connections

2 - Pre-login handshake error / WebApp high CPU

For this test used a STANDARD Tier (S1) for the App Service Plan. Also sending parameter to Azure Function (HighCPU = 1) that will do some math just to increase CPU usage.

And we may get an error like sample below

2020-05-08T21:49:23.749 [Information] Executing 'Function_ConnectSQL_PoolLimit' (Reason='This function was programmatically called via the host APIs.', Id=2fcc3b09-e3e4-4125-a615-282be3d76f7d)

...

2020-05-08T21:49:34.527 [Information] Loop 1078

2020-05-08T21:49:34.528 [Information] Loop 1079

2020-05-08T21:49:34.528 [Information] Loop 1080

2020-05-08T21:49:34.528 [Information] Loop 1081

Log output threshold exceeded.

2020-05-08T21:49:41.141 [Error] 2020-05-08 09:49:24 CONNECTION ERROR -

Connection Timeout Expired. The timeout period elapsed while attempting to consume the pre-login handshake acknowledgement.

This could be because the pre-login handshake failed or the server was unable to respond back in time.

The duration spent while attempting to connect to this server was - [Pre-Login] initialization=5895; handshake=29;

2020-05-08T21:49:41.249 [Information] Executed 'Function_ConnectSQL_PoolLimit' (Succeeded, Id=2fcc3b09-e3e4-4125-a615-282be3d76f7d)

This error may have many reasons, but the most common scenario is the WebApp have with CPU so HIGH that it does not have CPU power even to process this connection fast enough. If your client cannot complete a connection in Max 5 sec it may fail with the error below, because this is how the Azure SQL Gateway was designed and we can check the time that took based on the message "[Pre-Login] initialization=5895"

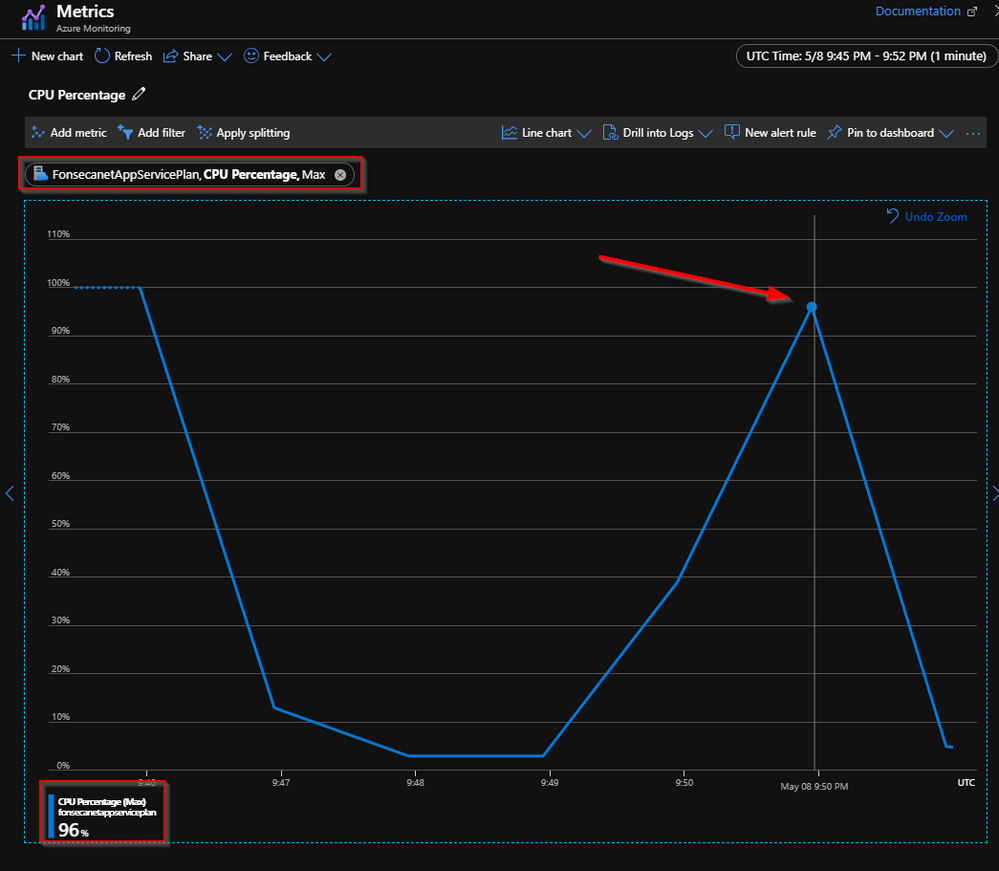

For further investigation you can go to your Azure Function Application Service Plan > Metrics and look for CPU Percentage (MAX) and check for long CPU peaks that match your errors

In this case is just a test scenario with just one request making all the pressure, on production workloads you may find high cpu for longer periods of workloads in a production environment.

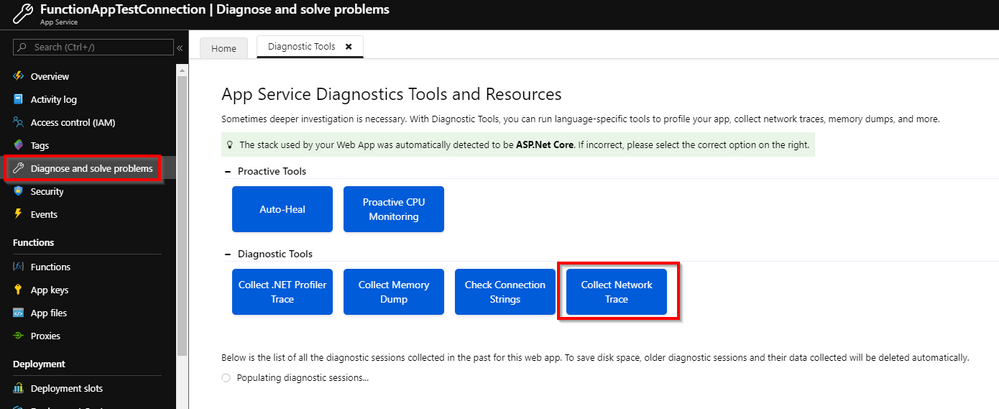

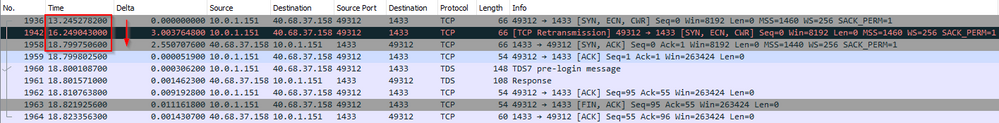

You can also capture a network trace from the WebApp you can see how long the connection time took

Just go to Diagnose and solve problems > Collect Network trace

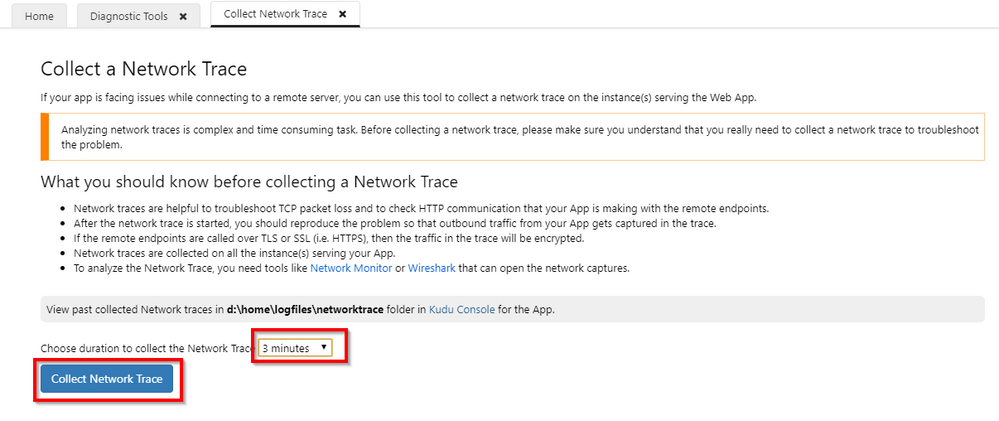

Change the period of time the collection will run and repro the issue

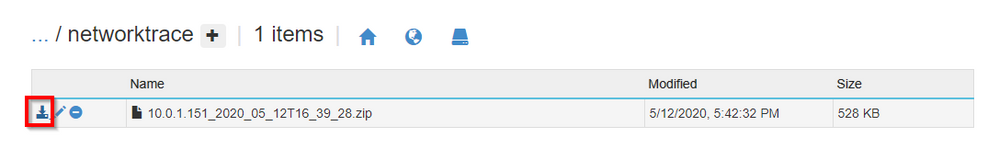

After that you can just download it from Kudu Console as explained in the window above

Then you can open it on Netmon or Wireshark

This issue could have been caused my some other reasons like network and If we look on just this trace it looks like it that it just took a long time for reply, and if you are unsure, check for CPU as mentioned above, or open a support request for further investigation. But in this trace we can see that the initial connection took more than 5 sec, and that's why it was disconnected.

For this second test the error message was "[Pre-Login] initialization=5555; handshake=14;"

Solution

In this scenario a quick mitigation is just scale up the Azure Function to higher tier to have more CPU. And you may also investigate why the CPU got so high like this huge loop I created.

3 - Azure SQL DB connection limit

If application / CPU limit is not a problem, lets change parameter to try to open now 5000 connections. "Max Pool Size=5000" and I may get error like below

Resource ID : 2. The session limit for the database is 300 and has been reached.

See 'http://go.microsoft.com/fwlink/?LinkId=267637' for assistance.

In this scenario I'm using a "Basic" SQL DB database that have limit of max 300 sessions because of number of cores available that can handle a specific number of connections as documented at

| Compute size | Basic |

|---|---|

| Max DTUs | 5 |

| Included storage (GB) | 2 |

| Max storage choices (GB) | 2 |

| Max in-memory OLTP storage (GB) | N/A |

| Max concurrent workers (requests) | 30 |

| Max concurrent sessions | 300 |

Solution

In this scenario or you need to control the number of connections in the application side, or if you really need more concurrent session you need to scale up to higher tier. As a sample if you use S9 it can handle 30.000 sessions.

4 - SNAT Port Exhaustion

Now that you application have enough CPU and Database Tier can handle more concurrent session we may hit on another limit: The number of TCP Ports open

2020-05-08T10:21:59.754 [Information] Executing 'Function_ConnectSQL_PoolLimit' (Reason='This function was programmatically called via the host APIs.', Id=4f62abab-6369-42fc-bbd3-a818e5a54b57)

... 2020-05-08T10:22:05.342 [Information] Loop 1656

2020-05-08T10:22:05.365 [Information] Loop 1657

2020-05-08T10:22:05.392 [Information] Loop 1658

2020-05-08T10:22:05.417 [Information] Loop 1659

2020-05-08T10:22:26.486 [Error] 2020-05-08 10:21:59 CONNECTION ERROR -

A network-related or instance-specific error occurred while establishing a connection to SQL Server.

The server was not found or was not accessible. Verify that the instance name is correct and that SQL Server is configured to allow remote connections.

(provider: TCP Provider, error: 0 - A connection attempt failed because the connected party did not properly respond after a period of time, or established connection failed because connected host has failed to respond.)

2020-05-08T10:22:26.512 [Information] Executed 'Function_ConnectSQL_PoolLimit' (Succeeded, Id=4f62abab-6369-42fc-bbd3-a818e5a54b57)

The message in this case is not clear. It looks like a network issue or an Azure SQL DB issue as the message is "server was not found", but lets look deeper.

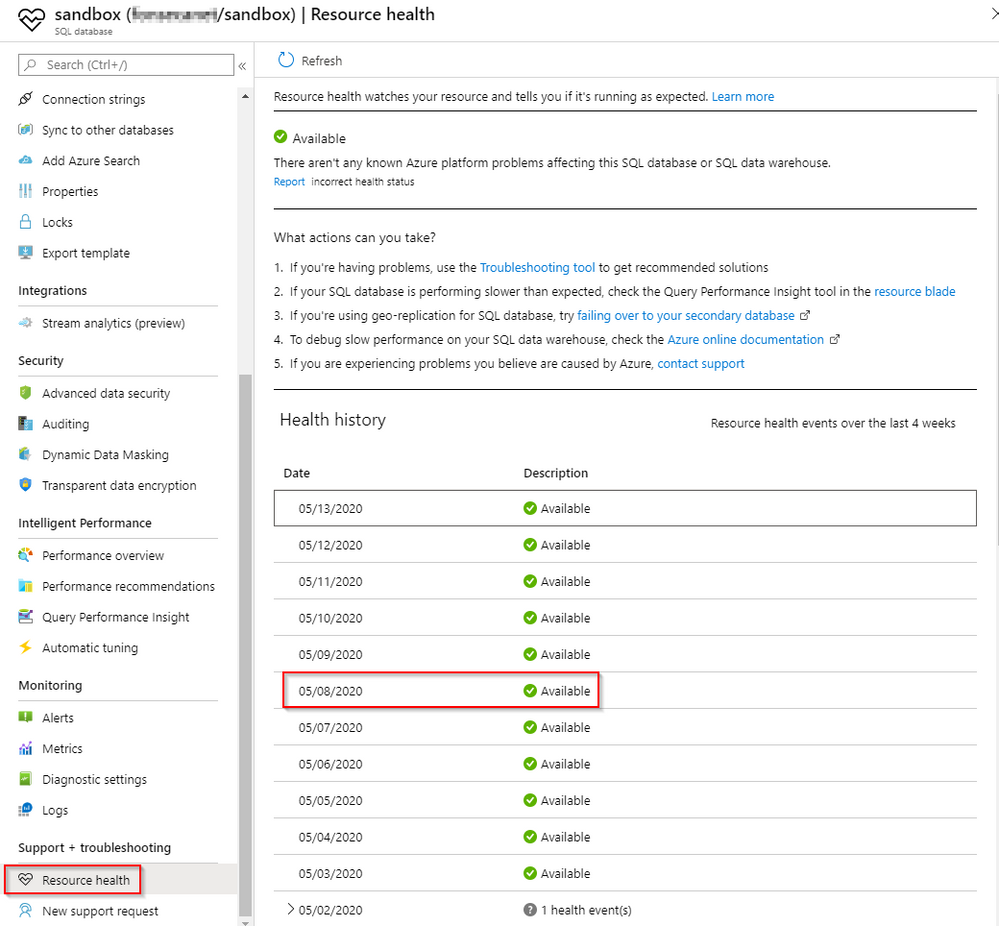

If we look on Azure SQL Resource Health we can see no issues on 8th May

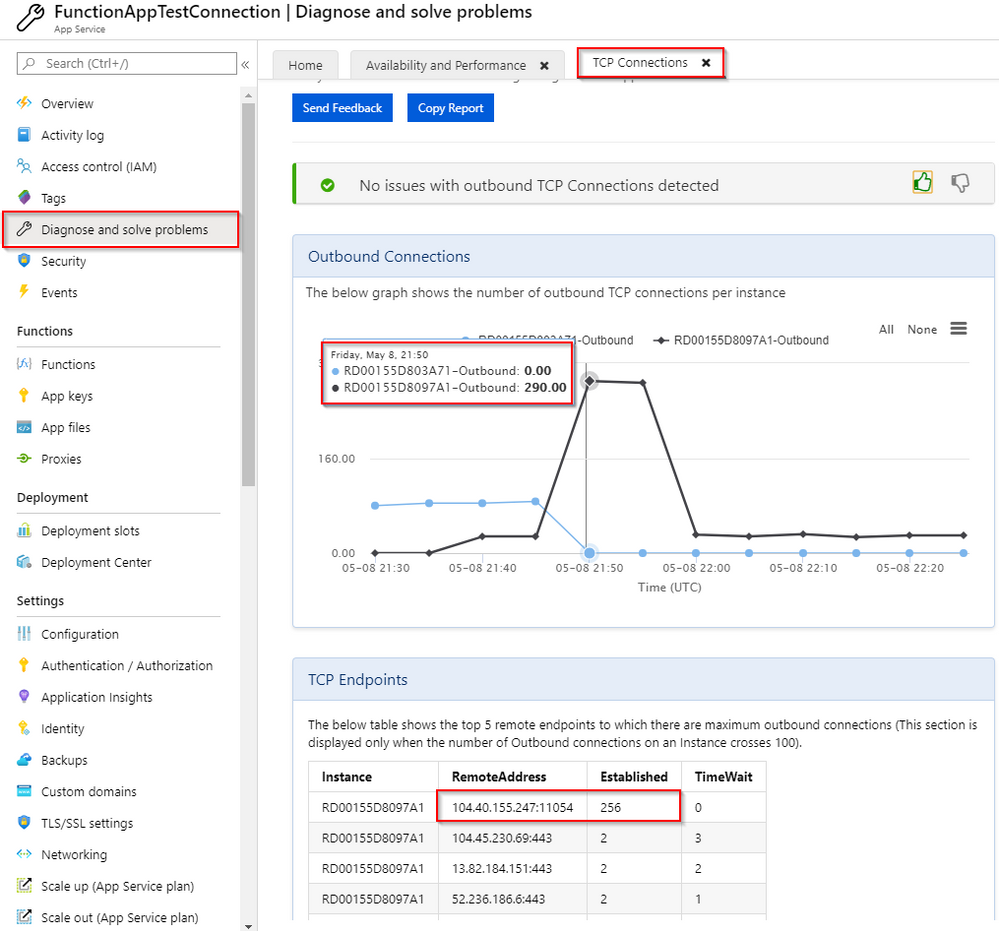

From the Azure Function we can check how many connection were opened using:

- Function App > Diagnose and Solve Problems > TCP Connections

In the image below we can see the number of outbound connections at some point and we can all see what endpoint was reached in this case 104.40.155.247:11054. Azure SQL uses port 1433 for the gateway and ports 11000-11999 for the SQL that hosts your database. More info at https://techcommunity.microsoft.com/t5/azure-database-support-blog/azure-sql-db-connectivity-troubleshooting/ba-p/1158677

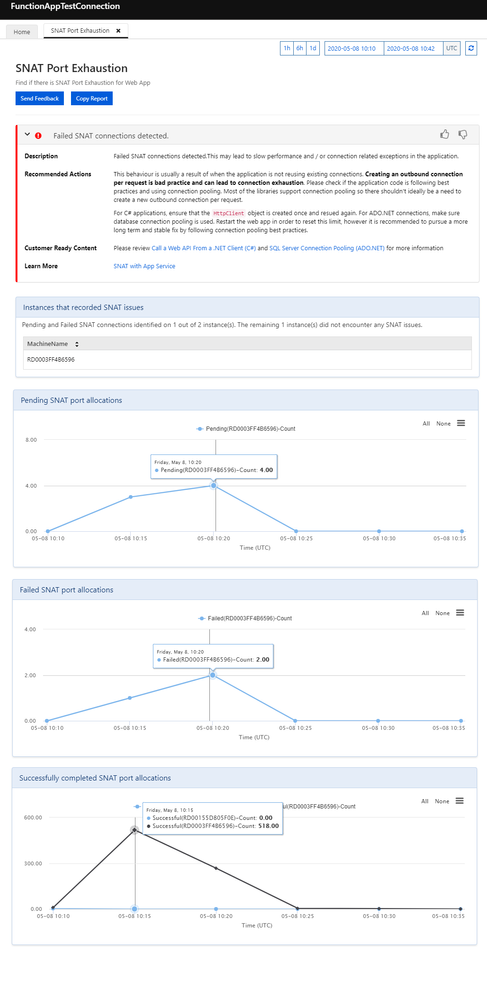

But how to be sure that you hit the SNAT Port Exhaustion problem in this scenario, follow the procedure below

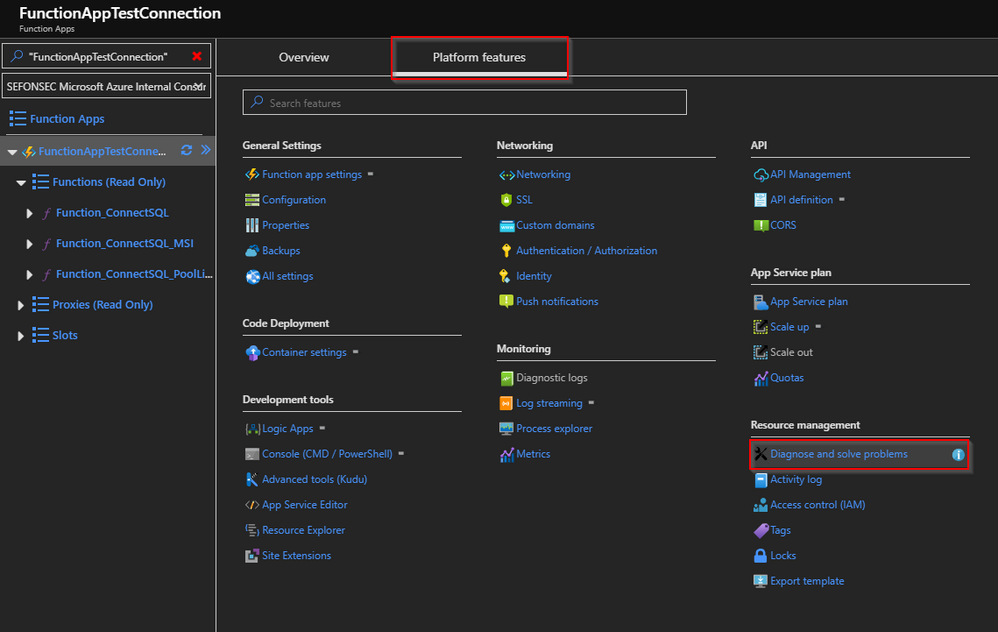

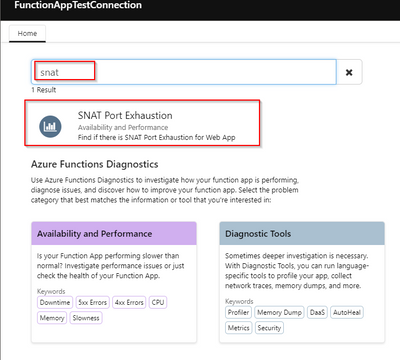

Go to Azure Function > Diagnose and solve problems > Look for SNAT Port Exhaustion.

*WAIT SOME MINUTES BEFORE CHEKING IT. On My tests the data didn´t load right after the issue happened

Look for SNAT Port Exhaustion

*NOTE: this will not be available for Free App Service Plan

We can see in the report that some ports failed to open

Solution

But in this scenario you can quickly mitigate scaling up your App Service plan and you need to understand why so many connections are open at same time. Use connection pooling to reuse connections and follow some .NET best practices

Check more information below:

- https://4lowtherabbit.github.io/blogs/2019/10/SNAT/

- https://docs.microsoft.com/en-us/azure/load-balancer/load-balancer-outbound-connections

- https://azure.github.io/AppService/2018/03/01/Deep-Dive-into-TCP-Connections-in-App-Service-Diagnostics.html

- https://docs.microsoft.com/en-us/azure/azure-functions/manage-connections