This post has been republished via RSS; it originally appeared at: Azure Compute articles.

We recently encountered a kernel bug in CentOS 7.6 and CentOS 7.7 VMs that causes a kernel worker thread to hog a CPU core. As a result, HPC applications running on those VMs showed poor or variable performance.

The kernel bug is in the "rhashtable" shrink logic and is present in kernel versions 3.10.0-1062.12.1.el7 and below. As described in the report linked below, the bug causes endless “insert_work” invocations because of repeated calls to rht_deferred_worker(). It is fixed in kernel versions 3.10.0-1127.el7 and above.
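To check a VM against the version boundary above, a minimal sketch follows. The function name `predates_fixed_kernel` and the variable `FIXED_BUILD` are made up for illustration. The check compares only the build number after `3.10.0-`, so it treats every el7 build below 1127 as predating the fix; that is an assumption for releases between the two versions named in this post.

```bash
# First build of the 3.10.0 el7 series that carries the fix, per this post.
FIXED_BUILD=1127

# Succeeds (exit 0) when the release string is an el7 3.10.0 kernel
# whose build number is below FIXED_BUILD. Hypothetical helper.
predates_fixed_kernel() {
    local release="$1" build
    case "$release" in
        3.10.0-*el7*) ;;          # only this series is checked
        *) return 1 ;;
    esac
    build="${release#3.10.0-}"    # e.g. 1062.12.1.el7.x86_64
    build="${build%%.*}"          # e.g. 1062
    case "$build" in
        ''|*[!0-9]*) return 1 ;;  # not a plain number: make no claim
    esac
    [ "$build" -lt "$FIXED_BUILD" ]
}

# Apply the check to the running kernel.
if predates_fixed_kernel "$(uname -r)"; then
    echo "$(uname -r): build is below 3.10.0-$FIXED_BUILD.el7"
else
    echo "$(uname -r): not flagged by this check"
fi
```

Comparing the build number directly avoids relying on generic version sorting, which can misorder release strings that carry the `.el7` suffix.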

Please read more details about this bug here:

Description: https://lkml.org/lkml/2019/1/23/789

Bug Fix: https://github.com/torvalds/linux/commit/408f13ef358aa5ad56dc6230c2c7deb92cf462b1

Symptoms:

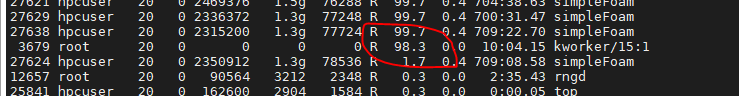

When this bug is encountered, you will see one of the kernel worker threads consuming nearly 100% of CPU core as shown below:

If you trace the kernel thread, you will see continuous invocations of rht_deferred_worker().
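One way to look for the first symptom from a shell is sketched below. The helper name `busy_kworkers` and the 90% threshold are assumptions chosen for illustration, not values from this post. Note that `ps` reports CPU time averaged over the thread's lifetime, so a tool such as `top` gives a better picture of the current load.

```bash
# Print kworker threads whose %CPU is at or above a threshold.
# Reads "PID COMM %CPU" lines on stdin. Hypothetical helper.
busy_kworkers() {
    local threshold="${1:-90}"
    awk -v t="$threshold" '$2 ~ /^kworker/ && $3 + 0 >= t { print $1, $2, $3 }'
}

# The trailing "=" on each column name suppresses the header line.
ps -eo pid=,comm=,pcpu= | busy_kworkers 90
```

An empty result only means that no kworker thread crossed the threshold at the time of the check.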

Impact of this bug on HPC Application Performance:

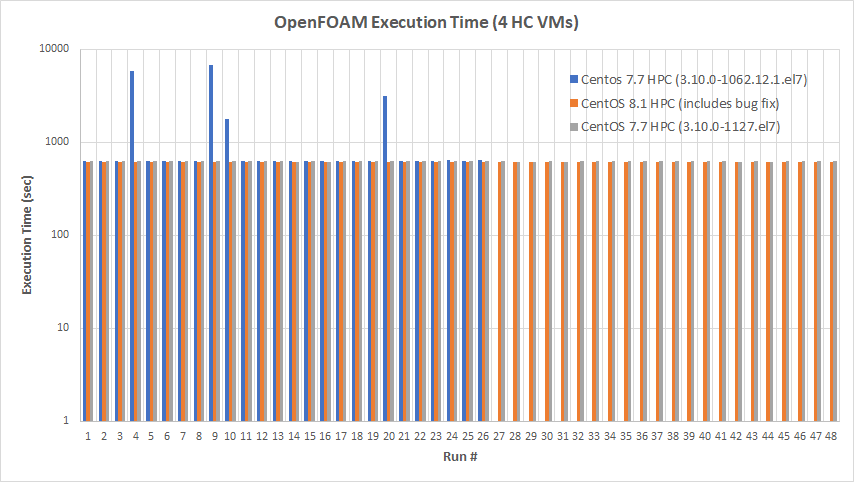

The performance impact is severe, especially when all CPU cores are used for the application run. In the following OpenFOAM results on four HC VMs, the CentOS 7.7 HPC VM running kernel version 3.10.0-1062.12.1.el7 shows large, random performance variations between runs. Performance is stable and consistent on the CentOS 7.7 HPC VM with kernel version 3.10.0-1127.el7 and on the CentOS 8.1 HPC VM.

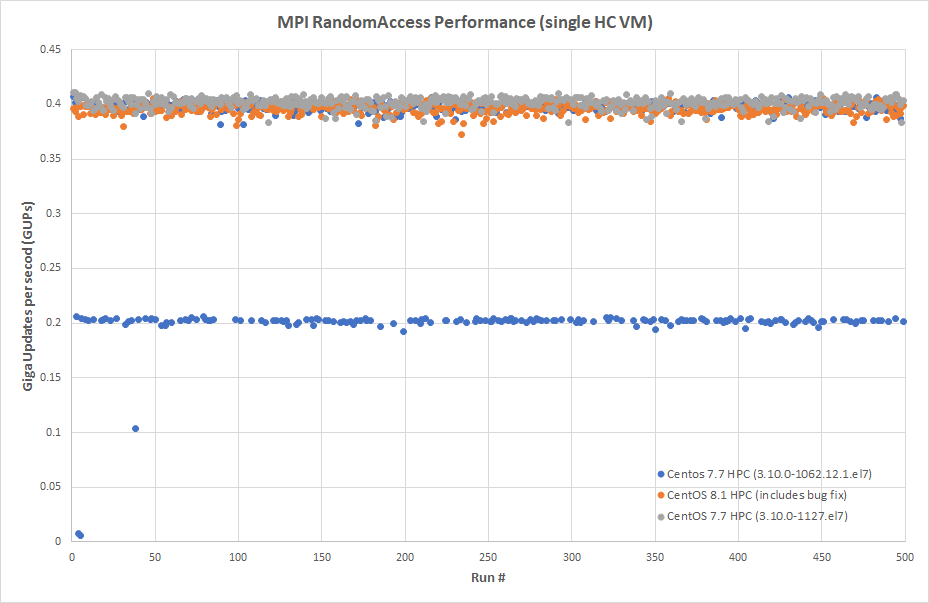

MPI RandomAccess results show similar behavior. The CentOS 7.7 HPC VM running kernel version 3.10.0-1062.12.1.el7 shows large performance variations, while performance is stable and consistent on the CentOS 7.7 HPC VM with kernel 3.10.0-1127.el7 and on the CentOS 8.1 HPC VM.

Resolution Steps:

- If you are currently using CentOS 7.6/7.7 HPC images with kernel version 3.10.0-1062.12.1.el7 or below, use one of the following methods:

1. Update Kernel: The kernel bug fix is included in 3.10.0-1127.el7.x86_64, and this update is available for both CentOS 7.6 and CentOS 7.7. Update the kernel to this version and reboot the VM so that the new kernel takes effect.

2. Switch to CentOS 8.1 HPC Image: The CentOS 8.1 HPC image already includes the kernel bug fix.

3. New CentOS 7.6/7.7 HPC Image: Updated CentOS 7.6/7.7 HPC images are being prepared and will be available soon in the Azure Marketplace. We will update this blog post when they are available.
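For method 1 above, the update might look like the following sketch. It assumes the VM's enabled yum repositories already offer kernel 3.10.0-1127.el7 or later, which may not hold for every image. The `run` and `update_kernel` helpers and the `DRY_RUN` variable are made up for illustration. By default the script only prints the commands; set `DRY_RUN=0` to execute them.

```bash
# DRY_RUN=1 (the default here) prints each command instead of running it.
DRY_RUN="${DRY_RUN:-1}"

# Hypothetical helper: echo or execute a command depending on DRY_RUN.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "would run: $*"
    else
        "$@"
    fi
}

update_kernel() {
    run sudo yum -y update kernel   # install the newest kernel from the enabled repos
    run rpm -q kernel               # list the installed kernel packages
    run sudo reboot                 # the new kernel takes effect after a reboot
}

update_kernel
```

After the reboot, `uname -r` should report 3.10.0-1127.el7.x86_64 or later if the update succeeded.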

- If you are currently using CentOS 8.1 HPC images:

No action is required; you are not impacted by this kernel bug.