This post has been republished via RSS; it originally appeared at: Microsoft Research.

The need to express oneself is innate for every person in the world, and its roots run through art, technology, communication, and the acts of learning and building things from the ground up. It’s no coincidence, then, that a new platform being released by Microsoft Research, called Expressive Pixels, stems from this belief. Expressive Pixels introduces an authoring app combined with open-source firmware, peripherals, documentation, and APIs that allow users and makers to create animations and then display them on a range of colorful LED display devices. With applications in areas spanning from accessibility to productivity, creativity, and education, the technology’s potential for growth is limited only by the imaginations of its users and community—and the app can now be downloaded for free from the Microsoft store.

The concept began with a simple idea: empowering individuals who require alternative tools for communication with others in their lives. The collaboration began in the Microsoft Research Enable Group in 2015 and has since grown to include members of the Microsoft Research Redmond Lab and the Small, Medium, & Corporate Business team. Its unique path to realization has led the Expressive Pixels team to embrace the creation of hardware display devices that integrate with other maker devices, an educational opportunity with support for Microsoft MakeCode, and a full open-source release of the firmware for developers. To learn more about the Expressive Pixels journey, read on.

Origins: The power of an idea to enrich communication and people’s lives

Expressive Pixels did not begin with a hardware or software concept. Instead, it emerged from a participatory design collaboration between the Enable Group and members of the ALS Community. Enable researchers and technologists partnered closely with people living with ALS (PALS), caregivers, families, clinicians, non-profit partners, and assistive technology companies to identify, design, and test new experiences and technologies designed to improve the lives of people and communities affected by speech and mobility disability.

From the outset, the Enable team established an ethos of inclusive, user-centered design, deep empathy, and a scrappy, can-do approach to innovating and problem solving within an extreme set of constraints.

The extended team has always embraced the spirit of designing not just for the user, but with the user, resulting in a fruitful, five-year collaboration with the PALS community on a range of research studies, hackathon projects, exploratory prototypes, and released software.

One central theme the team tackled was enhancing the experience of augmentative and alternative communication (AAC) systems, which allow people with limited mobility and speech to control computers and speech devices using their eyes, a head mouse, or other alternate forms of access based on individual needs. Many PALS rely on eye tracking or head mouse input for computer access and synthetic speech in the late stages of disease progression.

It made sense for the team to invest heavily in eye tracking as a primary input because the eye muscles are typically more resilient to ALS than the muscles needed for movement, speech, swallowing, and breathing. Eye-tracking technologies and AAC systems have improved over the years, but the barriers to access, entry, and adoption remain insurmountable for many users, leading to device abandonment and social isolation when users are no longer able to speak or gesture.

Internally, the Enable team worked closely with collaborators across the company, including researchers in the Microsoft Research Ability team and engineers in the Microsoft Research Redmond Central Engineering Test Team. They knew that typing words and phrases letter by letter using just the eyes was time-consuming and error-prone, which created several opportunities for innovation unique to Microsoft Research.

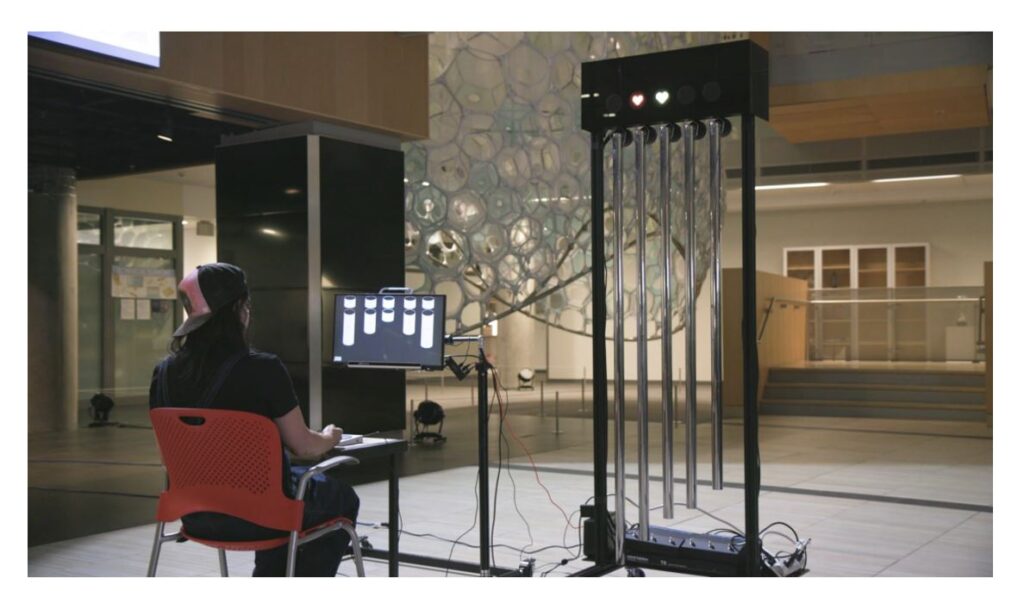

The team suspected that secondary, LED-based displays could serve as an important adjunct to AAC-based communication by acting as a visual proxy for body language and social cues and by conveying emotion, all critical aspects of nonverbal communication that can be compromised by ALS. They conducted research around expressivity and creative endeavors central to the human experience, such as playing or composing music.

They developed hands-free, multi-modal interfaces and musical instruments, built an innovative speech keyboard with an integrated awareness display, and investigated various approaches to improve speed and accuracy for eye-tracking input. The speech keyboard was a key reference design for Windows Eye Control, and the awareness display seeded the idea for Expressive Pixels.

Evolution of the Sparklet and platform design: Custom-built hardware and firmware for broad access to Expressive Pixels

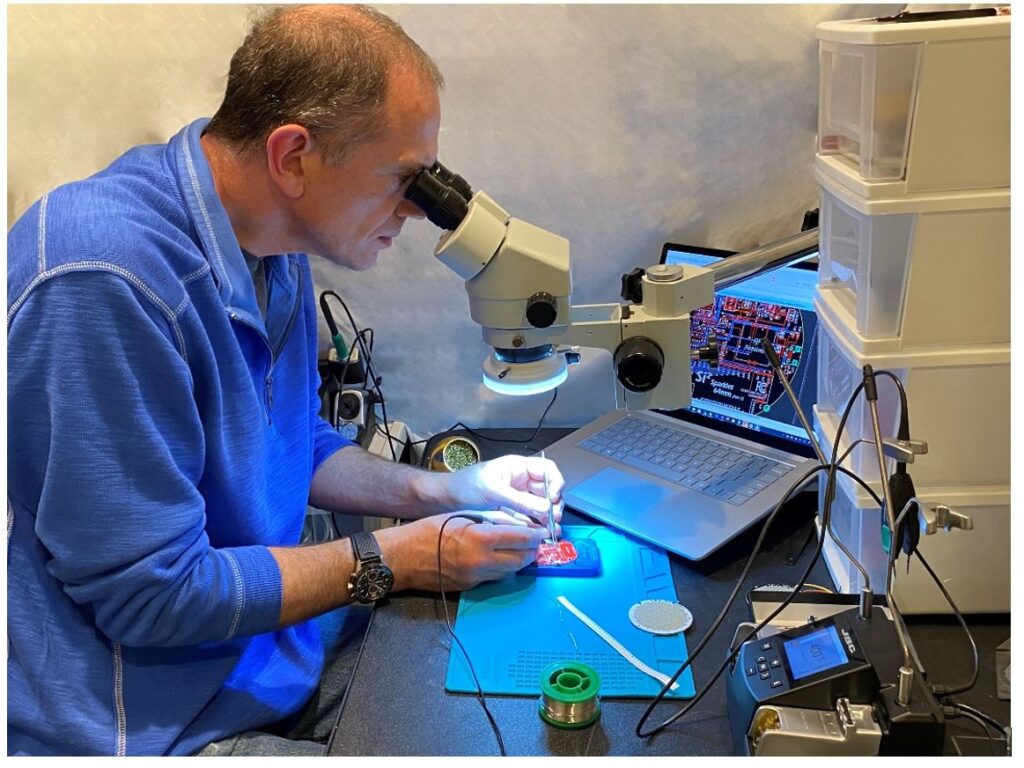

After brainstorming with the Enable team on how to develop a prototyping environment to author animations for their awareness display, Gavin Jancke, General Manager Engineering, Microsoft Research, was hooked on this project right away, so much so that he devoted a couple of years of his hobby time to making Expressive Pixels a reality. Jancke began by working on the animation authoring app for the project, but his role quickly evolved to hardware design when the team realized there were no existing displays of sufficient resolution or standalone capability on the market. As he got more involved, he came up with a new set of hardware designs and started prototyping.

The end goal, Jancke soon realized, required gaining knowledge of electrical engineering, which would lead to an improved hardware design that could reach more people. He set to work creating a new display device with much higher resolution. The outcome is the Sparklet: a battery-powered, Bluetooth-capable, high-resolution display in a single package. “The thing with the Sparklet is, no one has created an end-to-end device that is packaged up and ready to go,” says Jancke of the hardware. This lowers the barrier to entry, so people without electronics knowledge can jump right in and experiment with the basic Expressive Pixels capabilities.

“This is from schematics, PCB [printed circuit board] design, firmware which is C-level code running on the microprocessor, communications protocol which is Bluetooth and USB, all the way through to a full-blown fluid user interface experience in C#. This is what I call full stack!”

Gavin Jancke on the process of creating the Expressive Pixels platform

The story of Expressive Pixels doesn’t end there. The team is working on new versions that can accomplish different tasks, such as triggering animations in sync with musical notes from a MIDI interface or from external accessible buttons and adaptive switches. Plus, Expressive Pixels offers many opportunities for makers and developers to work with and program LED devices beyond the Sparklet. Even for Jancke himself, this hardware work opened doors in his own career, enabling him to undertake other, more advanced hardware engineering projects in his day job. Designing the hardware and firmware for Sparklet gave him the confidence to face new challenges in Passthrough AR, Project Emma, and Project Eclipse, three projects he has since worked on in which skills developed on Expressive Pixels have been integral.

Technical design and features

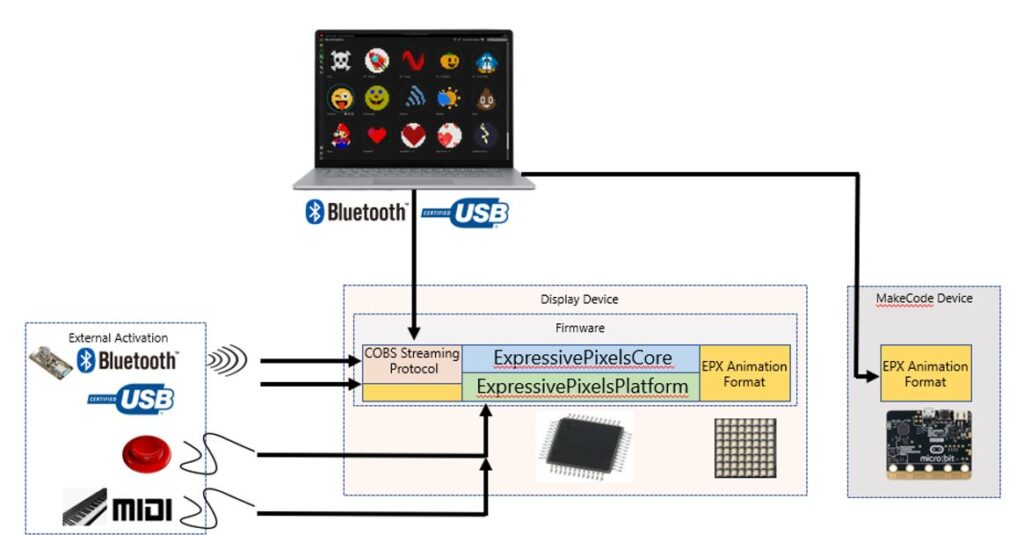

Going under the hood of Expressive Pixels reveals more about how it is designed to foster inclusive end-experiences for users with a variety of needs and goals; many of its design decisions are meant to get more people involved in more ways. The firmware follows a mix-and-match philosophy so that makers and developers can use only the parts they need. The application logic implements the features of Expressive Pixels (such as animation rendering, file storage, and behaviors). The firmware is also designed with a hardware abstraction layer so that it can be used across different hardware implementations. This layer provides system-level capabilities that isolate the application logic from the underlying type of microprocessor and development toolchain (such as Arduino, Nordic SDK, or other platforms that makers use).
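To make the layering concrete, here is a minimal sketch of how application logic can be kept separate from hardware through an abstraction layer. It is written in Python for readability, whereas the actual firmware is C-level code, and every name in it (`DisplayHAL`, `InMemoryDisplay`, `AnimationPlayer`) is hypothetical and not taken from the Expressive Pixels source.

```python
from abc import ABC, abstractmethod
from typing import List, Tuple

Color = Tuple[int, int, int]   # (red, green, blue), each 0-255
Frame = List[Color]            # one color per pixel, row by row


class DisplayHAL(ABC):
    """Hypothetical hardware abstraction: the only surface the app logic sees."""

    width: int
    height: int

    @abstractmethod
    def set_pixel(self, index: int, color: Color) -> None:
        """Stage a color for one pixel."""

    @abstractmethod
    def show(self) -> None:
        """Push the staged pixels to the physical display."""


class InMemoryDisplay(DisplayHAL):
    """Stand-in backend; a real port would drive an LED matrix here."""

    def __init__(self, width: int, height: int) -> None:
        self.width = width
        self.height = height
        self._buffer: Frame = [(0, 0, 0)] * (width * height)
        self.shown: List[Frame] = []   # history of frames pushed to the display

    def set_pixel(self, index: int, color: Color) -> None:
        self._buffer[index] = color

    def show(self) -> None:
        self.shown.append(list(self._buffer))


class AnimationPlayer:
    """Application logic: renders frames without knowing the hardware."""

    def __init__(self, display: DisplayHAL) -> None:
        self.display = display

    def play(self, frames: List[Frame]) -> None:
        expected = self.display.width * self.display.height
        for frame in frames:
            if len(frame) != expected:
                raise ValueError("frame size does not match display size")
            for index, color in enumerate(frame):
                self.display.set_pixel(index, color)
            self.display.show()


if __name__ == "__main__":
    display = InMemoryDisplay(width=2, height=1)
    AnimationPlayer(display).play([
        [(255, 0, 0), (0, 0, 0)],
        [(0, 0, 0), (0, 255, 0)],
    ])
    print(len(display.shown), "frames rendered")
```

Supporting a different board in this sketch means writing one more `DisplayHAL` subclass; `AnimationPlayer` stays untouched, which is the kind of isolation the paragraph above describes.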

More specifically, the firmware includes support for Bluetooth and USB connectivity, using a COBS (Consistent Overhead Byte Stuffing) streaming protocol to enable robust communications between two devices. The Expressive Pixels authoring app produces animations using a compressed video codec that optimizes the transmission and storage of image frames for constrained microcontrollers, and there’s support for storing multiple animations on devices. See Figure 1 below for a visual layout of the design, and explore the Expressive Pixels documentation for more details.
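For readers unfamiliar with COBS, the sketch below shows the general technique: the payload is rewritten so that it contains no zero bytes, which frees the zero byte to act as an unambiguous packet delimiter on a serial or Bluetooth stream. This is a generic illustration in Python, not the Expressive Pixels implementation; the function names and the choice of a single trailing `0x00` delimiter are assumptions, and the real wire format may differ.

```python
def cobs_encode(data: bytes) -> bytes:
    """Encode data so the result contains no 0x00 bytes."""
    out = bytearray([0])      # placeholder for the first code byte
    code_index = 0            # position of the code byte being filled in
    code = 1                  # distance from the code byte to the next zero
    for byte in data:
        if byte == 0:
            out[code_index] = code
            code_index = len(out)
            out.append(0)     # placeholder for the next code byte
            code = 1
        else:
            out.append(byte)
            code += 1
            if code == 0xFF:  # block is full: 254 non-zero bytes
                out[code_index] = code
                code_index = len(out)
                out.append(0)
                code = 1
    out[code_index] = code
    return bytes(out)


def cobs_decode(encoded: bytes) -> bytes:
    """Reverse cobs_encode; raises ValueError on malformed input."""
    out = bytearray()
    i = 0
    while i < len(encoded):
        code = encoded[i]
        if code == 0:
            raise ValueError("zero byte inside COBS data")
        end = i + code
        if end > len(encoded):
            raise ValueError("truncated COBS data")
        out += encoded[i + 1:end]
        i = end
        # A code below 0xFF stands for an original zero, except at the very end.
        if code != 0xFF and i < len(encoded):
            out.append(0)
    return bytes(out)


def frame(payload: bytes) -> bytes:
    """Wrap a payload as one delimited packet (the delimiter is an assumption)."""
    return cobs_encode(payload) + b"\x00"


if __name__ == "__main__":
    payload = bytes([0x11, 0x22, 0x00, 0x33])
    packet = frame(payload)
    print(packet.hex())                          # 031122023300
    assert cobs_decode(packet[:-1]) == payload
```

Because a receiver can always resynchronize at the next zero byte, a dropped or corrupted byte costs at most one packet rather than the rest of the stream, and the encoding adds at most one byte for every 254 bytes of payload.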

Expressive Pixels and Microsoft MakeCode: A perfect pairing

After developing the authoring app and firmware, the team saw the potential fun factor that it opened up to makers. However, there was something more that began to take root throughout the process—the power of learning and experimentation inherent in the design of the platform.

After creating the user interface for Expressive Pixels and refining the compelling visual nature of animated LED displays, the team realized there was an untapped educational resource in Microsoft MakeCode, which could be integrated with Expressive Pixels. The goal here was twofold: create a way for the maker community to easily adapt the platform for their own purposes and encourage people to develop their own scenarios around expression. The authoring environment of MakeCode, with block-style programming, gives those who are interested in learning the basics of code an accessible entry point.

MakeCode lowers the barrier to entry for programming small devices, such as the BBC micro:bit, a task usually reserved for those who have learned C programming. The barrier is lowered to such a point that people who are completely new to programming can create new inventions with relative ease. Integrating displays with expressive capability opens up a new range of creative and inventive scenarios and gives makers more room for experimentation. For example, a small robot could display a small stop sign when it comes within a predetermined distance of an object.

Developments in MakeCode and connecting to other hardware could also lead to new opportunities in accessibility and beyond. With the power of MakeCode and Expressive Pixels together, people with little knowledge of code can start to use the technology in tangential areas like costume design and fashion.

So what does it take for Expressive Pixels to link up with MakeCode and the micro:bit? About four jigsaw-style MakeCode programming blocks. Ultimately, connecting Expressive Pixels to MakeCode creates the opportunity for people to learn in a fun way and for makers to expand the possibilities of the platform. This vision is one of collaboration at large scale, a way for people to learn and grow the technology at the same time.

Building an interactive community for both creators and developers

The beauty of Expressive Pixels is that there are many ways for people to engage with the technology, from makers to learners to artists and more. The platform encourages people to become creators. From the bottom up, the Expressive Pixels app and its hardware were designed to give as many people access to the underlying technology as possible. It’s easy to get started, but there is a depth built into the platform that encourages even the most serious developers to get involved and lend their talents.

The firmware source code is now open source and available on GitHub, so if you are a developer interested in modifying or experimenting with the code in ways beyond the limits of the app, the resources are available for creating the next evolutions of Expressive Pixels now.

Creativity is collective: Growing the reach of Expressive Pixels

Expressive Pixels has encountered many different groups and areas on its path to release, and one thing the team has certainly learned along the way is that the more people involved, the merrier. In collaboration with people who have a wide range of backgrounds, abilities, and perspectives, the team is looking for new ways of making this technology meet a diverse set of needs. More projects are already in the works.

Members of the Expressive Pixels team are hustling to test 3D-printed mounts and various other designs for makers and the community to use for modification, and they have already created prototypes for alternate switch access and eye tracking triggering. Designs for these are and will continue to be released for makers and creatives to use and modify along with the software. Down the road, the dream for Expressive Pixels includes integration with Windows Eye Control and other universal productivity scenarios—imagine being able to sync your Sparklet with your Microsoft Teams and Outlook status to show people outside your physical office or room whether you’re available or busy. The team has already started testing these out in their home offices.

Coming full circle, the Enable Group has recently been mentoring student teams at the University of Washington and University College London to develop a collection of eye-tracking-based applications built on top of eye-tracking APIs and samples the team released for developers. Projects included an eye-tracking piano interface, a drawing app that allows users to create mandalas and other shapes with their eyes, and two eye-tracking games. The group plans to introduce Expressive Pixels to the next group of students and is excited to see where their imaginations will take the platform in the future.

Acknowledgments

Expressive Pixels and its winding journey would not have been possible without the many people involved. We are forever inspired by the collaborators, partners, advisors, and friends who have dedicated their time and energy in service to our mission. Learn more about our contributors at the Expressive Pixels team page.

The post Expressive Pixels: A new visual communication platform to support creativity, accessibility, and innovation appeared first on Microsoft Research.