This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

Long-running Stored Procedures for Power Platform SQL Connector

The SQL Server connector in Power Platform exposes a wide range of backend features through the Logic Apps interface, making it easy to automate business processes against SQL database tables. However, each call is still limited to a 2-minute execution window. Some stored procedures take longer than this to complete; in fact, some long-running processes are deliberately implemented as stored procedures. Calling them from Logic Apps is problematic because of the 120-second timeout. While the SQL connector does not natively support an asynchronous mode, one can be simulated using a passthrough native query, a state table, and server-side jobs.

For example, suppose you have a long-running stored procedure like so:
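A minimal sketch of such a procedure is shown here for illustration. The name `WaitForIt`, the `@delay` parameter, and the 3-minute default are assumptions chosen so that the call exceeds the 2-minute limit; any procedure that runs longer than 120 seconds behaves the same way.

```sql
-- Hypothetical long-running procedure (name and parameter are illustrative).
CREATE PROCEDURE [dbo].[WaitForIt]
    @delay CHAR(8) = '00:03:00'   -- hh:mm:ss; the default of 3 minutes exceeds the connector limit
AS
BEGIN
    SET NOCOUNT ON;
    -- Simulate long-running work by simply waiting.
    WAITFOR DELAY @delay;
END
```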

Executing this stored procedure from a Logic App will cause a timeout with an HTTP 504 result, since it takes longer than 2 minutes to complete. Instead of calling the stored procedure directly, you can use a job agent to execute it asynchronously in the background. You can store inputs and results in a state table that you can target with a Logic App trigger. You can simplify this if you don’t need inputs or outputs, or if you are already writing results to a table inside the stored procedure.

Keep in mind that the agent may retry your stored procedure multiple times in case of failure or timeout. It is therefore critically important that your stored procedure be idempotent: running it more than once must leave the database in the same state as running it once. You will need to check for the existence of objects before creating them and avoid duplicating output.
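The idempotency checks might look something like the following sketch. The tables `dbo.Orders` and `dbo.ProcessedOrders` are hypothetical and stand in for whatever your procedure reads and writes.

```sql
-- Create the output table only if an earlier attempt has not already created it.
-- (dbo.ProcessedOrders and dbo.Orders are hypothetical names.)
IF OBJECT_ID(N'dbo.ProcessedOrders', N'U') IS NULL
    CREATE TABLE dbo.ProcessedOrders (
        OrderId     INT            NOT NULL PRIMARY KEY,
        ProcessedAt DATETIMEOFFSET NOT NULL
    );

-- Insert only rows that an earlier (possibly interrupted) attempt did not write,
-- so a retry does not duplicate output.
INSERT INTO dbo.ProcessedOrders (OrderId, ProcessedAt)
SELECT o.OrderId, SYSDATETIMEOFFSET()
FROM dbo.Orders AS o
WHERE NOT EXISTS (
    SELECT 1 FROM dbo.ProcessedOrders AS p WHERE p.OrderId = o.OrderId
);
```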

For Azure SQL Database

An Elastic Job Agent can be used to create a job which executes the procedure. Full documentation for the Elastic Job Agent can be found here: https://docs.microsoft.com/en-us/azure/azure-sql/database/elastic-jobs-overview

You’ll want to create a job agent in the Azure Portal. Creating the agent adds several stored procedures to a database that the agent will use; this database is known as the “agent database”. You can then create a job that executes your stored procedure in the target database and captures the output when it completes. You’ll need to configure permissions, groups, and targets as explained in the document above. Some of the supporting tables and procedures will also need to live in the agent database.

First, we will create a state table to hold the parameters used to invoke the stored procedure. Unfortunately, agent jobs do not accept input parameters, so to work around this limitation we will store the inputs in a state table in the target database. Remember that all agent job steps execute against the target database, while the job stored procedures run on the agent database.
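A state table along these lines would fit the description. Only the table name `LongRunningState`, the `jobid` key, and the use of `NVARCHAR(MAX)` for parameters come from the text; the remaining columns are assumptions about what a start time, completion time, and result might look like. The `rowversion` column is included on the assumption that you want a Logic App trigger to detect modified rows.

```sql
-- Sketch of a state table in the target database (columns other than jobid are assumptions).
CREATE TABLE [dbo].[LongRunningState] (
    [jobid]      UNIQUEIDENTIFIER NOT NULL,  -- job execution id returned when the job starts
    [rowversion] ROWVERSION       NOT NULL,  -- changes on every update; useful for change triggers
    [parameters] NVARCHAR(MAX)    NULL,      -- packed input parameters
    [start]      DATETIMEOFFSET   NULL,
    [complete]   DATETIMEOFFSET   NULL,
    [code]       INT              NULL,      -- status code written by the final step
    [result]     NVARCHAR(MAX)    NULL,
    CONSTRAINT [PK_LongRunningState] PRIMARY KEY CLUSTERED ([jobid])
);
```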

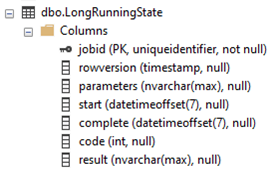

The resulting table will look like this in SSMS:

We will use the job execution id as the primary key, both to ensure good performance, and to make it possible for the agent job to locate the associated record. Note that you can add individual columns for input parameters if you prefer. The schema above can handle multiple parameters more generally if this is desired, but it is limited to the size of NVARCHAR(MAX).

We must create the top-level job on the agent database to run the long-running stored procedure.
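As a sketch, the job can be created with the Elastic Jobs procedure `jobs.sp_add_job`, run on the agent database. The job name `LongRunningJob` and the description are illustrative.

```sql
-- Run on the agent database. The job name is a hypothetical example.
EXEC jobs.sp_add_job
    @job_name    = N'LongRunningJob',
    @description = N'Execute long-running stored procedure',
    @enabled     = 1;
```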

We will also need to add steps to the job that will parameterize, execute, and complete the stored procedure. Job steps have a default timeout value of 12 hours. If your stored procedure will take longer, or you’d like it to time out earlier, you can set the step_timeout_seconds parameter to your preferred value in seconds. By default, steps also have 10 retries with a built-in backoff timeout in between. We will use this to our advantage.

We will use three steps:

The first step waits for the parameters to be added to the LongRunningState table, which should occur almost immediately after the job has been started. The step simply fails if the jobid has not yet been inserted into the LongRunningState table, and the default retry/backoff does the waiting for us. In practice, this step typically runs once and succeeds.
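A first step of this kind could be sketched as follows, using `jobs.sp_add_jobstep` on the agent database. The job name, step name, credential name `JobRun`, target group name `DatabaseGroupLongRunning`, error number, and 30-second timeout are all assumptions; substitute the values from your own agent configuration. The `$(job_execution_id)` token is replaced by the agent with the id of the current execution.

```sql
-- Run on the agent database. Names, credential, and target group are hypothetical.
EXEC jobs.sp_add_jobstep
    @job_name  = N'LongRunningJob',
    @step_name = N'Parameterize WaitForIt',
    @step_timeout_seconds = 30,
    @command = N'
        -- Fail (and let the default retry/backoff wait) until the row has been inserted.
        IF NOT EXISTS (SELECT 1 FROM [dbo].[LongRunningState]
                       WHERE [jobid] = $(job_execution_id))
            THROW 50400, ''Failed to locate call parameters (Step 1)'', 1;',
    @credential_name   = N'JobRun',
    @target_group_name = N'DatabaseGroupLongRunning';
```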

The second step queries the parameters from the state table and passes them to the stored procedure, executing the procedure in the background. In this case, we use @callparams to pass the timespan parameter, but this can be extended to pass additional parameters if needed. If your stored procedure does not need parameters, you can simply call it directly.
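The second step might look something like this sketch. It assumes a hypothetical target procedure `dbo.WaitForIt` that takes a single `@delay` parameter, and a `parameters` column in the state table holding that value as plain text; the job, credential, and target group names are likewise illustrative.

```sql
-- Run on the agent database. Names, credential, and target group are hypothetical.
EXEC jobs.sp_add_jobstep
    @job_name  = N'LongRunningJob',
    @step_name = N'Execute WaitForIt',
    @command = N'
        DECLARE @timespan   CHAR(8);
        DECLARE @callparams NVARCHAR(MAX);

        -- Look up the parameters stored for this execution.
        SELECT @callparams = [parameters]
        FROM [dbo].[LongRunningState]
        WHERE [jobid] = $(job_execution_id);

        -- Here the packed value is the timespan itself; parse it if you pack several parameters.
        SET @timespan = @callparams;
        EXECUTE [dbo].[WaitForIt] @delay = @timespan;',
    @credential_name   = N'JobRun',
    @target_group_name = N'DatabaseGroupLongRunning';
```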

The third step completes the job and records the results:
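A completion step could be sketched as below. The `complete`, `code`, and `result` columns, the values written to them, and the job, credential, and target group names are assumptions; record whatever output your Logic App needs.

```sql
-- Run on the agent database. Names, columns, and values are hypothetical.
EXEC jobs.sp_add_jobstep
    @job_name  = N'LongRunningJob',
    @step_name = N'Complete WaitForIt',
    @command = N'
        -- Mark the execution as finished so a trigger on the state table can fire.
        UPDATE [dbo].[LongRunningState]
        SET [complete] = SYSDATETIMEOFFSET(),
            [code]     = 200,
            [result]   = ''Success''
        WHERE [jobid] = $(job_execution_id);',
    @credential_name   = N'JobRun',
    @target_group_name = N'DatabaseGroupLongRunning';
```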

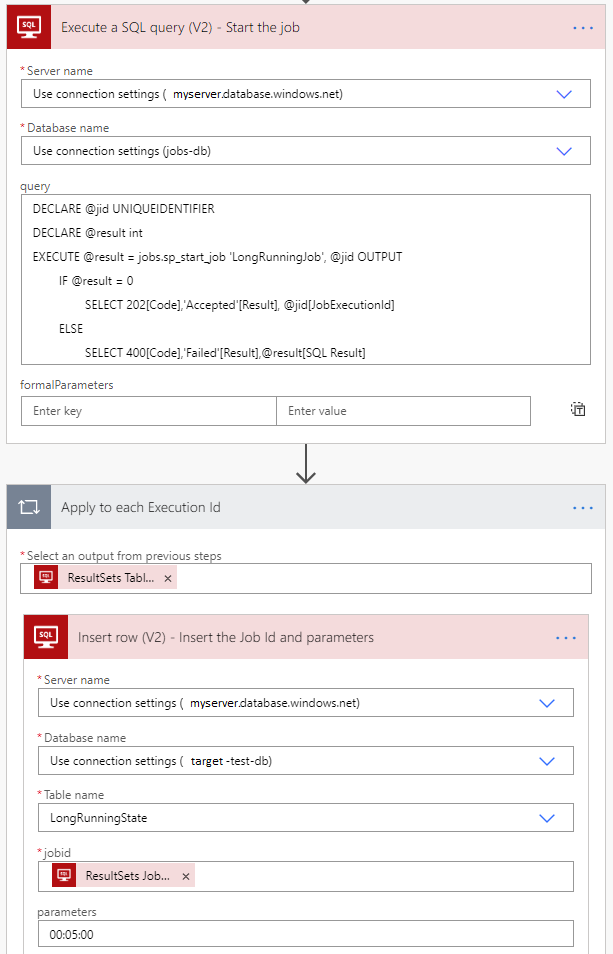

We will use a passthrough native query to start the job, then immediately push the parameters into the state table for the job to reference. We will use the dynamic data output ‘Results JobExecutionId’ as the input to the ‘jobid’ attribute in the target table. We must also supply the parameters in the form the job expects, so that it can unpack them and pass them to the target stored procedure.

Here's the native query:
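A sketch of such a query, assuming a job named `LongRunningJob`, is shown here. It calls `jobs.sp_start_job`, which returns the execution id through an output parameter, and projects it as a `JobExecutionId` column so that it surfaces as dynamic data in the Logic App. The `Code` and `Result` columns and their values are illustrative.

```sql
-- Run on the agent database via the connector's native query action.
DECLARE @jid    UNIQUEIDENTIFIER;
DECLARE @result INT;

-- Start the job and capture the id of this execution.
EXECUTE @result = jobs.sp_start_job N'LongRunningJob', @jid OUTPUT;

IF @result = 0
    SELECT 202 AS [Code], 'Accepted' AS [Result], @jid AS [JobExecutionId];
ELSE
    SELECT 400 AS [Code], 'Failed' AS [Result], @result AS [SQL Result];
```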

Here's the Logic App snippet:

Notice that the Native Query runs on the job database, while the Insert Row acts on the target database.

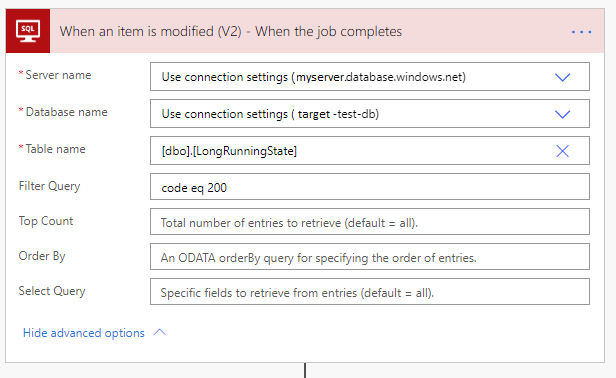

When the job completes, it updates the target LongRunningState table so that you can easily trigger on the result. If you don’t need output, or if you already have a trigger watching an output table, you can skip this part.

For SQL Server on-premises or Azure SQL Managed Instance:

SQL Server Agent can be used in a similar fashion. Some of the management details differ, but the fundamental steps are the same.

https://docs.microsoft.com/en-us/sql/ssms/agent/configure-sql-server-agent