This post has been republished via RSS; it originally appeared at: Windows Dev AppConsult articles.

I have already had the chance to talk about Docker on this blog. Docker is the de facto standard for containerizing applications, so that you can easily move them to a modern, cloud-based world. Here is a recap of the topics we have seen so far:

- Introduction

- Dockerizing your first application

- Create a multi-container application

- Deploy a multi-container application

I strongly suggest you read these articles before moving on with this one, because I will take many of the concepts I've already explained for granted. Additionally, in the upcoming samples we're going to leverage the same web application we built in those posts.

Nowadays it's hard not to see Docker mentioned together with Kubernetes. Why? In the previous posts we learned how Docker is a great solution to turn a multi-tier application into multiple containers, so that they can be easily deployed and maintained independently. In our case, we took a 3-tier application (frontend, backend and cache) and we packaged it into three different containers. Thanks to Docker, we were able to deploy all the layers at the same time in different containers and to maintain, scale and upgrade them separately. Additionally, thanks to Docker, we can encapsulate inside the container not only the application but also its configuration (frameworks, dependencies, etc.). This way, we can be sure that no matter where we're going to deploy our solution, it will always work as expected and with the desired configuration. However, this solution still requires manual management on our side. If something goes wrong with one of the containers, we need to manually take it down and spin up a new one. Or, in case of heavy traffic, we need to manually add new containers to scale out our application. As you can imagine, this isn't an ideal approach. We are using containers and the power of the cloud precisely so that we don't have to worry about this kind of issue anymore, so having to manually react to events kills our great story.

This is where Kubernetes comes in. It's what we call "an orchestrator" and it's an open source project created by Google which has quickly become another standard in the industry. Exactly like the conductor of an orchestra makes sure that everyone is playing in the right way, Kubernetes "orchestrates" our solution to make sure everything is running in the desired state. Typically, you feed an orchestrator with the definition of the desired state for your application: which layers compose it, how many instances of each layer you need, how much CPU each layer can consume, etc. Then the orchestrator takes care of keeping the actual state of the application consistent with that definition. For example, if you have specified that you always need at least 5 instances of the frontend up & running and one of them fails, the orchestrator will take care of spinning up a new instance to replace the failing one.

In this post we're going to see how we can leverage Kubernetes to manage the web application we have built in the previous posts.

Disclaimer! I'm not a Kubernetes guru, so don't expect to become a super Kubernetes expert after reading this post. I'm a tech enthusiast who likes to play with technology and I've tried to put in words how I ramped up on it and what I've learned in the process, hoping that it can be useful also for you!

Creating a Kubernetes playground

In the real world, you will hardly ever have to set up a Kubernetes infrastructure on your own. Kubernetes, in fact, shines when it's used in a cloud architecture, where it has the chance to quickly spin up all the resources required to maintain the desired state or to scale in case of high traffic. This is why all the major cloud providers offer a dedicated PaaS (Platform-as-a-Service) option for Kubernetes, where you don't have to worry about the underlying infrastructure. Of course, Microsoft Azure also offers its own service, called Azure Kubernetes Service (AKS, in short). However, for the moment, we will play with Kubernetes on our local machine, which is the easiest way to understand how it works at no cost.

If you have followed the previous posts about Docker, you should already have Docker for Windows on your machine, which has recently been rebranded as Docker Desktop. Thanks to this application, we can install on our machine everything we need to play with Docker containers. For a while now, Docker Desktop has also come with built-in support for Kubernetes, meaning that you don't have to install anything special to spin up a Kubernetes cluster on your machine.

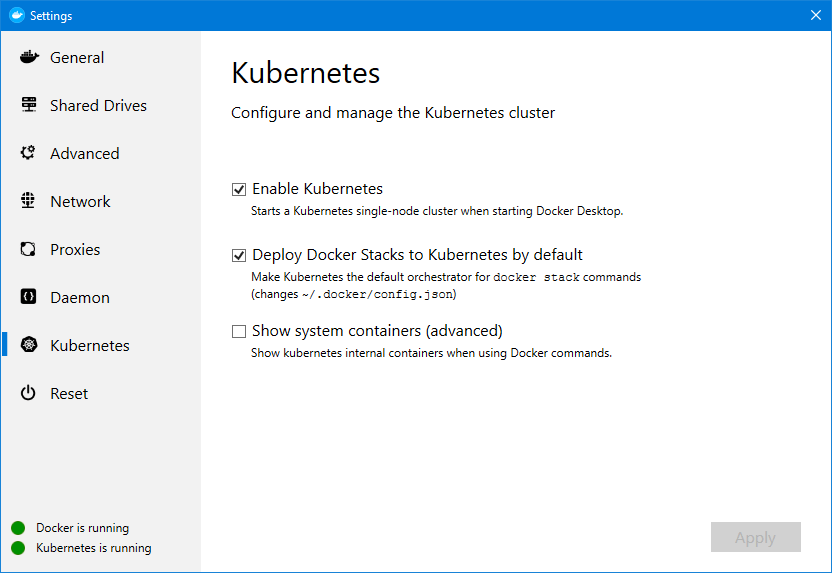

Once Docker Desktop is up & running, just right click on the icon in the systray and choose Settings. You will see a new section called Kubernetes:

Please note! If you don't see the section, it's because you're using Windows containers. The Kubernetes cluster bundled with Docker Desktop currently supports only Linux containers, so you first have to right click on the Docker icon and choose Switch to Linux containers.

Check the Enable Kubernetes option. Docker Desktop will start the process to set up Kubernetes on your machine. After the operation is completed, you should see in the lower left corner the message Kubernetes is running, marked by a green dot. To make things easier for later, make sure to also check the option Deploy Docker Stacks to Kubernetes by default.

We can verify that Kubernetes is indeed working by opening a command prompt. Feel free to choose the one you like best, like PowerShell or the standard Windows prompt. Once you have deployed Kubernetes on your machine, in fact, you will have access to a new command line tool called kubectl, which allows you to interact with the various Kubernetes APIs. It's very similar to the docker command we saw when we talked about Docker: you can use it to control a Kubernetes cluster, but the cluster doesn't necessarily have to be on the same machine. In the next post, in fact, we're going to use kubectl to control a cluster deployed on Azure Kubernetes Service.

When we use the kubectl command we're talking to the Kubernetes master (its API server, to be precise), which is in charge of maintaining the desired state for your cluster. The master can control one or more nodes, which are the machines (physical or virtual) where your application is actually running.

Let's start by typing the following command:

PS> kubectl cluster-info

Kubernetes master is running at https://localhost:6445

KubeDNS is running at https://localhost:6445/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy

Our Kubernetes cluster is indeed up & running. We can see the available nodes with the following command:

PS> kubectl get nodes

NAME STATUS ROLES AGE VERSION

docker-desktop Ready master 5h17m v1.13.0

We are connected to the cluster that Docker Desktop has created for us, which has only one node. However, right now the cluster is empty. There are no applications running. So let's create one!

PS> kubectl run webserver --image=nginx:latest --port 80

Remember that Kubernetes is an orchestration tool, not a container technology. As such, we always need to start from an already containerized application. In this example, we're using a Docker image we have already seen in action: NGINX, the popular and lightweight web server. With this command we're asking Kubernetes to add a new application to the cluster, based on the nginx image from Docker Hub, and to expose it on port 80.

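This command has created a new deployment, which is the definition of the desired state we want for our application. (This is the behavior of the kubectl v1.13 used in this post. Starting with v1.18, kubectl run creates only a bare pod, and a deployment is created with kubectl create deployment webserver --image=nginx:latest instead.) We can see the deployment by using the following command: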

PS> kubectl get deployments

NAME READY UP-TO-DATE AVAILABLE AGE

webserver 1/1 1 1 3m48s

Since we haven't specified any special parameter, the deployment has been created with the standard minimum configuration: it's a single instance application. This is the desired state: Kubernetes will do its best to keep one instance of this application always up & running. But where is our application running? Let's try another command:

PS> kubectl get pods

NAME READY STATUS RESTARTS AGE

webserver-cfd4bd475-klbt2 1/1 Running 0 6m17s

We are introducing another important Kubernetes concept: pods. A pod is the smallest unit Kubernetes can deploy: a wrapper around our application's containers, with its own storage, network, etc. A pod can host one or more containers even if, typically, each pod maps to a single container. The desired state we have expressed with the deployment is translated into one or more pods, based on the configuration. Our previous deployment has a desired state of a single instance, so Kubernetes has automatically spun up a pod for us to host the application defined in the webserver deployment (our NGINX instance). Kubernetes will use pods to make sure the application always stays in the desired state. We can easily see this if we try to terminate the pod with the following command:

PS> kubectl delete pods webserver-cfd4bd475-klbt2

pod "webserver-cfd4bd475-klbt2" deleted

Now try again to see the list of pods. If you're fast enough, you should see something like this:

PS> kubectl get pods

NAME READY STATUS RESTARTS AGE

webserver-cfd4bd475-8mv2x 0/1 ContainerCreating 0 3s

As you can see, Kubernetes is already creating a new pod. If we try again in a few seconds, we'll see our pod back in the Running status. Can you see what just happened? The deployment has specified a desired state of a single instance of our webserver application always up & running. As soon as we killed the pod, Kubernetes realized that the desired state wasn't respected anymore and, as such, it created a new one. Smart, isn't it?
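To make the idea of "desired state" more concrete, here is a minimal sketch of how the webserver deployment could be written down as a manifest. It isn't the exact object that kubectl run generated: the file name and the app: webserver label are assumptions chosen for illustration, and the labels in your cluster may differ.

```yaml
# webserver-deployment.yaml (hypothetical file name)
apiVersion: apps/v1
kind: Deployment
metadata:
  name: webserver
spec:
  replicas: 1              # desired state: one pod always up & running
  selector:
    matchLabels:
      app: webserver       # assumed label; must match the pod template below
  template:                # blueprint Kubernetes uses every time it creates a pod
    metadata:
      labels:
        app: webserver
    spec:
      containers:
        - name: webserver
          image: nginx:latest
          ports:
            - containerPort: 80
```

When a pod created from this template disappears, the number of running pods drops below replicas, and Kubernetes creates a replacement from the same template. That is the behavior we have just observed.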

However, by default the pod isn't reachable from outside the cluster, and its internal IP address changes every time the pod is recreated, so we need to expose it through a service. Let's use the following command:

PS> kubectl expose deployments webserver --type=LoadBalancer

service/webserver exposed

We're telling Kubernetes to create a new service for the webserver deployment, whose type is LoadBalancer. Thanks to this command, Kubernetes creates an external load balancer for us, which automatically distributes the incoming traffic in case we have multiple instances of our web application running. We can see the status of the service with the following command:

PS> kubectl get services

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 22m

webserver LoadBalancer 10.103.92.124 localhost 80:31126/TCP 4s
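For reference, the service created by kubectl expose could also be described declaratively. The following is only a sketch: it assumes the pods carry a label app: webserver, which isn't necessarily the label used in your cluster (you can check the real ones with kubectl get pods --show-labels).

```yaml
apiVersion: v1
kind: Service
metadata:
  name: webserver
spec:
  type: LoadBalancer       # asks for an externally reachable endpoint
  selector:
    app: webserver         # assumed label; traffic goes to every pod that carries it
  ports:
    - protocol: TCP
      port: 80             # port exposed by the service
      targetPort: 80       # port the NGINX container listens on
```

The selector is what ties the service to the pods: the service doesn't reference pods by name, so it keeps working when pods are replaced or added.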

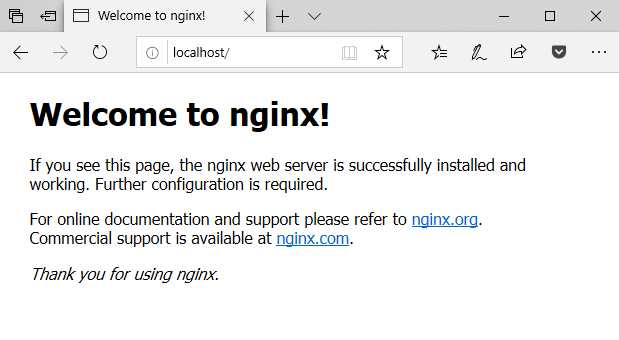

Since we have specified LoadBalancer as type, Kubernetes will automatically assign an external IP, other than an internal IP, to our application. Since Kubernetes is running on our own machine, the external IP will be the localhost. As such, we can open a browser and type http://localhost to see the default NGINX page being displayed:

What if we want to scale our application out? The nice part of Kubernetes is that, exactly like what happened when we killed the pod, we don't have to manually take care of creating or deleting the pods. We just need to specify the new desired state by updating the definition of the deployment. For example, let's say that now we want 5 instances of NGINX to be always up & running. We can use the following command:

PS> kubectl scale deployments webserver --replicas=5

deployment.extensions/webserver scaled

Now let's see again the list of available pods:

PS> kubectl get pods

NAME READY STATUS RESTARTS AGE

webserver-cfd4bd475-8mv2x 1/1 Running 0 17m

webserver-cfd4bd475-jgll4 1/1 Running 0 51s

webserver-cfd4bd475-rjbfq 1/1 Running 0 51s

webserver-cfd4bd475-v7jd4 1/1 Running 0 51s

webserver-cfd4bd475-z9dqt 1/1 Running 0 51s

Now we have 5 pods up & running: the first one is the original one (you can notice it by the age, it's the oldest one), while the other four have been created as soon as we have updated the definition of the desired state for the webserver deployment. However, thanks to the service we have previously created, our web application is still exposed through a single endpoint:

PS> kubectl get services

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 34m

webserver LoadBalancer 10.103.92.124 localhost 80:31126/TCP 11m

As such, our browser instance opened on the http://localhost URL will continue to work just fine.

Deploy a multi-tier application

The previous sample has been useful to understand the basics of Kubernetes but, in a real world scenario, you would never manually create a new deployment and a new service for a single application. Instead, you would leverage solutions to deploy multi-tier applications. Let's do something a little bit more complex and, instead of deploying just a single NGINX instance, let's try to reuse the same project we built in the previous posts about Docker. The solution can be found here and, as a reminder, it's composed of three layers:

- A backend, which provides a REST API that returns an RSS feed

- A frontend, which shows the values coming from the REST API

- A redis cache, which is used to cache the data from the REST API

One of the advantages of using Docker Desktop as the Kubernetes engine is that it can reuse the same Docker Compose files we used in the previous posts to deploy a solution on a Kubernetes cluster. As a reminder, here is the Docker Compose file we have previously built, called docker-compose.yml. You will find it in the root of the GitHub repository.

version: '3'

services:
  web:
    image: qmatteoq/testwebapp
    ports:
      - "8080:80"
  newsfeed:
    image: qmatteoq/testwebapi
  redis:
    image: redis

Our solution is composed of three layers: web, newsfeed and redis. However, only the web one is exposed to the public; the Web API and the Redis cache are accessed only by the website itself. Every layer consists of a container, whose image is available on Docker Hub. To deploy this solution we can just launch the following command:

PS> docker stack deploy --compose-file .\docker-compose.yml mywebapp

Waiting for the stack to be stable and running...

redis: Ready [pod status: 1/1 ready, 0/1 pending, 0/1 failed]

web: Ready [pod status: 1/1 ready, 0/1 pending, 0/1 failed]

newsfeed: Ready [pod status: 1/1 ready, 0/1 pending, 0/1 failed]

Stack mywebapp is stable and running

Do you remember that, when we configured Kubernetes at the beginning, we enabled the option to use Kubernetes as the default target for Docker stacks? This is exactly what happened. Thanks to this option, the docker stack command has deployed our solution on Kubernetes instead of using Docker Swarm, which is another orchestrator, created by Docker, and which was the only built-in option available until a while ago.

Let's take a more detailed look at what happened during the deployment. First, let's check the deployments:

PS> kubectl get deployments

NAME READY UP-TO-DATE AVAILABLE AGE

newsfeed 1/1 1 1 74s

redis 1/1 1 1 74s

web 1/1 1 1 74s

We have a new deployment for each layer of our application. Since our original Docker Compose file didn't make any reference to replicas, the desired state is one instance for each of the layers. Now let's take a look at the pods:

PS> kubectl get pods

NAME READY STATUS RESTARTS AGE

newsfeed-5dc44dfc45-dwkg8 1/1 Running 0 119s

redis-7bd547f649-2r9hm 1/1 Running 0 119s

web-6968978b5b-6cxbr 1/1 Running 0 119s

As expected, since the desired state is one instance for each layer, we simply have one pod running for each container. Now let's take a look at the services:

PS> kubectl get services

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 161m

newsfeed ClusterIP None <none> 55555/TCP 3m20s

redis ClusterIP None <none> 55555/TCP 3m20s

web ClusterIP None <none> 55555/TCP 3m20s

web-published LoadBalancer 10.105.9.67 localhost 8080:30963/TCP 3m20s

Here we might get a bit of a surprise, especially if we compare what we see with what we learned when we did the same thing with Docker Compose. If you recall the original Docker Compose file, we had to specify the exposed port only for the web layer, because it's the only one exposed to the user. In Kubernetes, instead, each layer must be exposed through a service, even if it's only internal. The difference is in the service's type. As you can see, only web-published has LoadBalancer as its type, which means that it will be exposed to the outside. Docker, additionally, uses a slightly different approach from the one we used when we exposed our NGINX server. Instead of directly exposing the pod to the outside world through a single service, Docker has created two of them: one called web, which is used for internal communication, and one called web-published, which is the one exposed externally.
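The web / web-published pair can be pictured as two Service objects pointing at the same pods. The sketch below is an approximation built from the kubectl get services listing, not the manifest Docker actually generates: the app: web label is a hypothetical placeholder, and you can inspect the real objects with kubectl get service web -o yaml.

```yaml
# Internal service: "CLUSTER-IP None" in the listing means it is headless,
# so its DNS name resolves directly to the pod addresses.
apiVersion: v1
kind: Service
metadata:
  name: web
spec:
  clusterIP: None
  selector:
    app: web               # hypothetical label, for illustration only
  ports:
    - port: 55555          # port shown in the listing
---
# External service: published on localhost:8080 and forwarded to port 80
# of the container, mirroring the "8080:80" mapping of the Compose file.
apiVersion: v1
kind: Service
metadata:
  name: web-published
spec:
  type: LoadBalancer
  selector:
    app: web               # same hypothetical label as above
  ports:
    - port: 8080
      targetPort: 80
```

The internal service gives the other layers a stable name to connect to, while only the published one is reachable from the browser.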

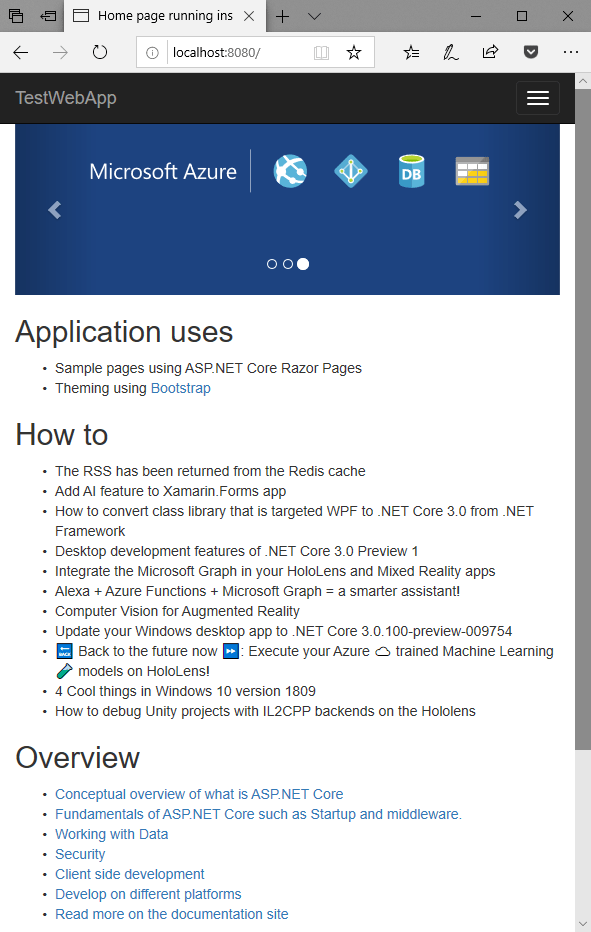

We can verify that our application is indeed up & running simply by opening our favorite browser at the URL http://localhost:8080. After a few seconds, you should see the same familar website we have built in the previous posts about Docker running just fine:

Every piece of the puzzle will be in the right place:

- The frontend is connecting to the Web API to retrieve the list of articles from the RSS feed

- If you refresh the page, you will notice that the list will have an extra item at the top, which is coming from the Redis cache.

The experiment we just did is a great showcase of the power of containers and Kubernetes. We took a solution we built many months ago (and which, personally, I haven't touched since then) and we have deployed it without hitting any issue.

Updating the application

Another great example of the power of Kubernetes is when we need to update one of the layers of the application. Thanks to its ability to easily scale, Kubernetes is able to update pods gradually, to make sure that there's always one or more instances up & running, which means no downtime for the users. Let's try this!

Before updating our application, however, we need to change the desired state in Kubernetes. By using the Docker Compose file, in fact, we have spun up only a single instance of each layer, including the web frontend. In an upgrade scenario, this isn't a good fit: once we start the upgrade, we don't have any other instance of the web app able to serve the users until the upgrade is completed. As such, as a first step we need to scale out our web frontend a bit. However, we can't do it using the same kubectl scale command we've seen before. When we deploy a solution using docker stack, in fact, the desired state is the one described in the Docker Compose file and we can't dynamically change it. If we try to execute a command like this:

PS> kubectl scale deployments/web --replicas=5

and then we immediately check the list of available pods, we will see something like this:

PS> kubectl get pods

NAME READY STATUS RESTARTS AGE

newsfeed-5dc44dfc45-kptnc 1/1 Running 0 55m

redis-7bd547f649-qnh9p 1/1 Running 0 55m

web-6968978b5b-f8pf8 1/1 Running 0 55m

web-6968978b5b-k4rww 0/1 Terminating 0 1s

web-6968978b5b-ls2dz 0/1 Terminating 0 1s

web-6968978b5b-rvbjn 0/1 Terminating 0 1s

web-6968978b5b-stxhm 0/1 Terminating 0 1s

Kubernetes has spun up new pods to satisfy the new scaling requirement, but the change was quickly reverted to the original desired state described in the Docker Compose file, so the new pods were immediately terminated. To change this behavior, we need to specify the number of instances we want directly in the Docker Compose file:

version: '3'

services:
  web:
    image: qmatteoq/testwebapp
    ports:
      - "8080:80"
    deploy:
      replicas: 5
  newsfeed:
    image: qmatteoq/testwebapi
  redis:
    image: redis

We have added a new entry, deploy -> replicas, to specify that we want 5 instances of our web frontend. Now we first need to take down the solution we have created, with the following command:

PS> docker stack rm mywebapp

Removing stack: mywebapp

Now we can deploy it again:

PS> docker stack deploy --compose-file .\docker-compose.yml mywebapp

Waiting for the stack to be stable and running...

redis: Ready [pod status: 1/1 ready, 0/1 pending, 0/1 failed]

web: Ready [pod status: 5/5 ready, 0/5 pending, 0/5 failed]

newsfeed: Ready [pod status: 1/1 ready, 0/1 pending, 0/1 failed]

Stack mywebapp is stable and running

As you can see, this time Kubernetes has created 5 pods for the web deployment. We can also observe this using the familiar kubectl get pods command:

PS> kubectl get pods

NAME READY STATUS RESTARTS AGE

newsfeed-5dc44dfc45-rsq9g 1/1 Running 0 2m29s

redis-7bd547f649-bvpcx 1/1 Running 0 2m29s

web-6968978b5b-b7zzh 1/1 Running 0 2m29s

web-6968978b5b-sv72z 1/1 Running 0 2m29s

web-6968978b5b-t4t2m 1/1 Running 0 2m29s

web-6968978b5b-vtnbc 1/1 Running 0 2m29s

web-6968978b5b-xncsq 1/1 Running 0 2m29s

Now that we have more instances of the web layer up & running, we can start updating our web application.

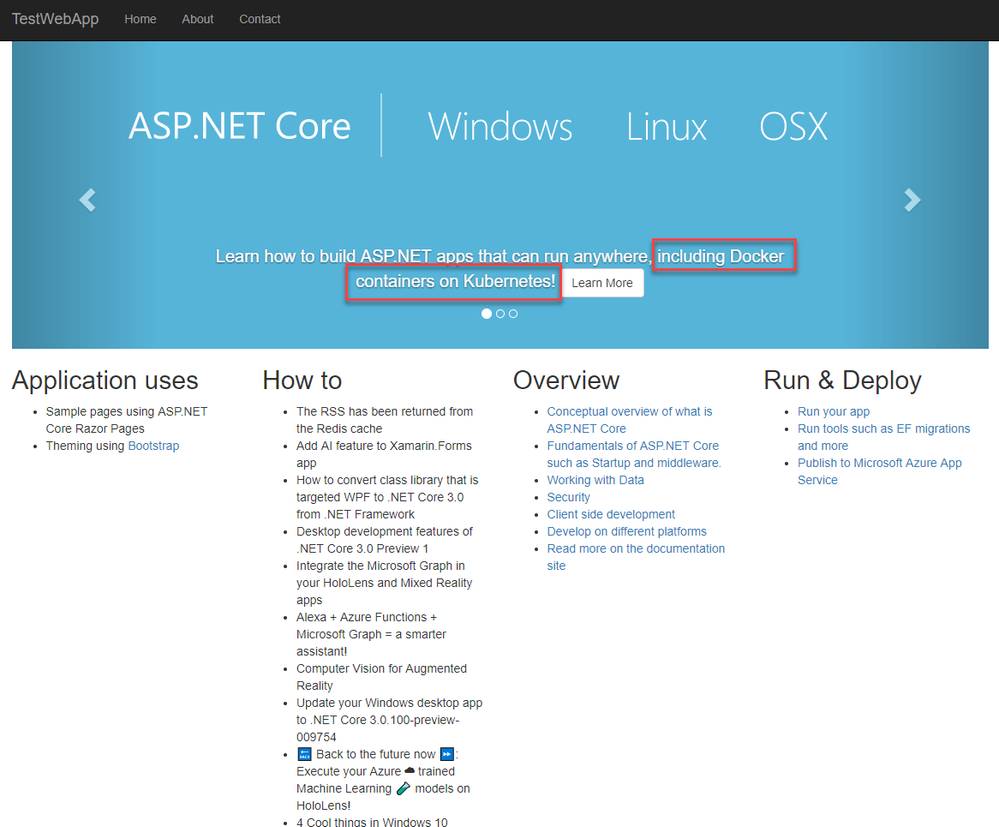

First, let's make a simple change to one of the layers of our application. In my case, I've chosen the web frontend. If you recall, the web application is using the standard ASP.NET Core template, so I've just replaced the following section in the Index.cshtml file:

<div class="item active">
    <img src="~/images/banner1.svg" alt="ASP.NET" class="img-responsive" />
    <div class="carousel-caption" role="option">
        <p>
            Learn how to build ASP.NET apps that can run anywhere.
            <a class="btn btn-default" href="https://go.microsoft.com/fwlink/?LinkID=525028&clcid=0x409">
                Learn More
            </a>
        </p>
    </div>
</div>

with

<div class="item active">
    <img src="~/images/banner1.svg" alt="ASP.NET" class="img-responsive" />
    <div class="carousel-caption" role="option">
        <p>
            Learn how to build ASP.NET apps that can run anywhere, including Docker containers on Kubernetes!
            <a class="btn btn-default" href="https://go.microsoft.com/fwlink/?LinkID=525028&clcid=0x409">
                Learn More
            </a>
        </p>
    </div>
</div>

As you can see, I've added an extra message at the end of the sentence. Now we need to update the image of the application and publish it on Docker Hub. First, let's build the image with the docker build command, which we need to execute in the folder that contains the web app and hosts the Dockerfile:

PS> docker build -t "qmatteoq/testwebapp" .

I won't explain from scratch the meaning of the various Docker commands. You can find all the details in the previous posts mentioned at the beginning of this article.

Once the image has been built, we can push it to Docker Hub (or to any other registry, like Azure Container Registry) so that our Kubernetes cluster is able to pull it. This is the command to execute:

PS> docker push qmatteoq/testwebapp

Now we're ready to tell Kubernetes that the image has been updated and that the deployment should leverage the new version of our application. Let's use the following command:

PS> kubectl set image deployment web web=qmatteoq/testwebapp:latest

deployment.extensions/web image updated

We're telling Kubernetes to update the container named web inside the deployment named web (the second and the first web in the command, respectively) so that it uses the Docker image called qmatteoq/testwebapp with the latest tag. Now, if you wait a few seconds and then get the list of available pods, you should see something like this:

PS> kubectl get pods

NAME READY STATUS RESTARTS AGE

newsfeed-5dc44dfc45-rsq9g 1/1 Running 0 9m12s

redis-7bd547f649-bvpcx 1/1 Running 0 9m12s

web-6968978b5b-b7zzh 1/1 Running 0 9m12s

web-6968978b5b-qpz26 0/1 ContainerCreating 0 2s

web-6968978b5b-sv72z 1/1 Terminating 0 9m12s

web-6968978b5b-t4t2m 1/1 Running 0 9m12s

web-6968978b5b-vtnbc 1/1 Running 0 9m12s

web-6968978b5b-xncsq 1/1 Running 0 9m12s

web-856c549c65-4fhgw 0/1 Terminating 0 2s

web-856c549c65-4jg4c 0/1 Terminating 0 2s

web-856c549c65-dqt68 0/1 Terminating 0 2s

Kubernetes is replacing the existing pods with new ones based on the updated image. However, it isn't taking down all the pods at the same time; it's recycling them in batches. As a consequence, if you keep reloading your browser on the http://localhost:8080 URL, you will never see a request failing: the website will always be up & running. The only difference is that, depending on the pod you're connecting to, you might see the old version of the website or the new one. Once the deployment is completed and all the pods are in the Running status, you should see the change we have just made to the home page:

Cool, isn't it?
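The size of these batches is controlled by the update strategy of the deployment. Here is a sketch of how it could be stated explicitly for the web layer. The app: web label and the values 1 / 1 are assumptions for illustration; when the strategy is omitted, Kubernetes performs a rolling update and defaults both settings to 25%.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web
spec:
  replicas: 5
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1    # at most one pod below the desired count during the update
      maxSurge: 1          # at most one extra pod above the desired count
  selector:
    matchLabels:
      app: web             # hypothetical label, for illustration only
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
        - name: web        # container name targeted by "kubectl set image ... web=..."
          image: qmatteoq/testwebapp:latest
          ports:
            - containerPort: 80
```

With these values, at least 4 of the 5 web pods stay available at any moment of the update. You can follow the progress with kubectl rollout status deployment/web.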

The Kubernetes dashboard

The command line tool helps us to be fast and productive, but understanding the current status of our cluster at a glance isn't easy, because we need to manually go through the list of deployments, pods and services. Luckily, Kubernetes offers a web dashboard which makes it much easier to get an overview of the cluster. Unfortunately, it doesn't ship out of the box, so we need to install it manually, as if it were a new application running in our Kubernetes cluster. On this page you will find all the steps to properly configure the dashboard in a real environment. However, the process isn't really straightforward and it requires dealing with secrets and certificates in order to provide a high level of security. Since we're in a testing phase and the Kubernetes cluster is actually running on our local machine, we can safely use an alternative setup which doesn't require any special configuration.

Just type the following command:

PS> kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v1.10.1/src/deploy/alternative/kubernetes-dashboard.yaml

We're seeing a glimpse of how a real deployment typically works in Kubernetes. During this post, in fact, we have manually created a deployment using the kubectl run command and then we have exposed it using the kubectl expose command; or we have reused our Docker Compose file, thanks to the Kubernetes support offered by Docker Desktop. However, in the real world, you typically create a YAML file with the definition of your entire application and then you deploy it using the kubectl apply command.
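As a rough idea of what such a file can look like, here is a sketch for the Redis layer of our solution alone. The file name and the app: redis label are assumptions for illustration, while 6379 is the default port Redis listens on.

```yaml
# redis.yaml (hypothetical file name): two objects in one file, separated by "---"
apiVersion: apps/v1
kind: Deployment
metadata:
  name: redis
spec:
  replicas: 1
  selector:
    matchLabels:
      app: redis           # assumed label
  template:
    metadata:
      labels:
        app: redis
    spec:
      containers:
        - name: redis
          image: redis
          ports:
            - containerPort: 6379   # default Redis port
---
apiVersion: v1
kind: Service
metadata:
  name: redis              # other pods can reach the cache using this name
spec:
  type: ClusterIP          # internal only, not exposed outside the cluster
  selector:
    app: redis
  ports:
    - port: 6379
      targetPort: 6379
```

A file like this would be deployed with kubectl apply -f redis.yaml. Running the same command again after editing the file updates the existing objects instead of creating new ones.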

The kubectl apply command above will deploy on your local machine a new set of roles and services, which host a web dashboard built around the Kubernetes APIs. In order to expose these APIs to your local machine, you need to set up an HTTP proxy, which is achieved by running the following command:

PS> kubectl proxy

Starting to serve on 127.0.0.1:8001

Once the proxy is started, open your browser and hit the following address: http://localhost:8001/api/v1/namespaces/kube-system/services/http:kubernetes-dashboard:/proxy/#!/overview?namespace=_all

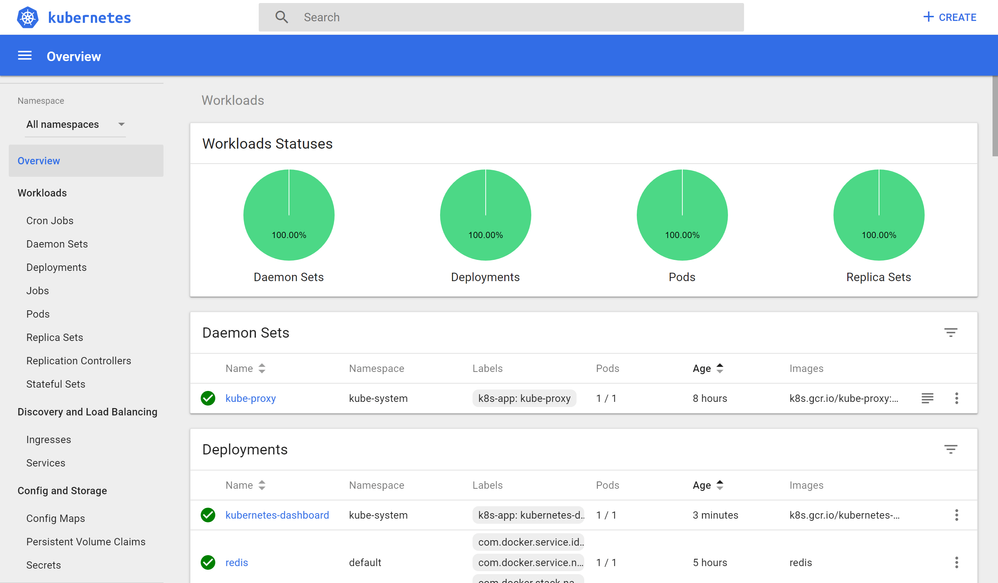

You should see the following dashboard being loaded:

As you can see, it's much easier to see at a glance the status of our Kubernetes cluster, how many pods are running, which services are exposed, etc.

Wrapping up

In this post we have learned the basics of Kubernetes and why it's such a great companion for Docker. Docker is a great solution to modernize our applications and to simplify their deployment, but on its own it would still require a lot of manual work to make them really flexible and scalable. Kubernetes can do this for us, so that we can focus on building and improving our application rather than managing the underlying infrastructure.

In the next post we're going to move our solution to the cloud, thanks to Azure Kubernetes Service.

Stay tuned!