This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

Mithun Prasad, PhD and Jaya Mathew, Data Scientists at Microsoft

In our previous blog, we gave a brief introduction to machine translation and explored topics such as identifying the language of a text and translating or transliterating spoken or typed text using Microsoft’s Translator Text API. We also discussed how translated or transliterated text can be integrated into a LUIS app.

Assuming the reader has successfully built and published a LUIS model with the desired intents and entities, in this blog we give an overview of how to quickly assess the performance of the LUIS app using the built-in LUIS Dashboard, which is available in the ‘Dashboard’ section of your LUIS app, as shown in Figure-1 to Figure-4. The figure below outlines the details the Dashboard provides: it starts with an overview of the published app, then presents the training evaluation results and the predictions per intent, and wraps up with the problematic intents that have the most prediction errors.

Figure-Dashboard Overview

Understanding the various aspects of the LUIS Dashboard

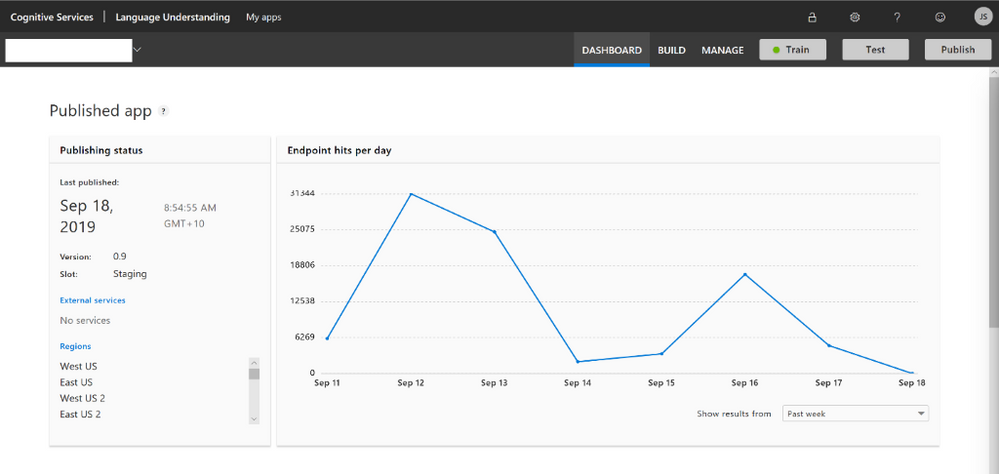

The first part of the Dashboard, shown in Figure-1, gives an overview of when the app was last published, along with the version, slot, and region details. The Endpoint hits per day chart is a line chart of the app’s consumption, which can be viewed by week, month, quarter, and so on. This section can be used to determine app usage and check for seasonality. However, the metrics are not updated instantly and are only an approximation; for more detailed usage information, use the Azure portal.
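To check for seasonality outside the Dashboard, daily hit counts can be rolled up into weekly totals. This is a minimal sketch that assumes you already have a mapping from date to hit count (for example, copied from the chart or the Azure portal); the helper name `hits_by_iso_week` and the sample numbers are hypothetical.

```python
from collections import Counter
from datetime import date


def hits_by_iso_week(daily_hits: dict[date, int]) -> dict[tuple[int, int], int]:
    """Hypothetical helper: sum daily endpoint hits into (ISO year, ISO week) buckets."""
    weekly: Counter = Counter()
    for day, hits in daily_hits.items():
        iso_year, iso_week, _ = day.isocalendar()  # the weekday is ignored
        weekly[(iso_year, iso_week)] += hits
    # Return buckets in chronological order so trends and seasonality are easy to read.
    return dict(sorted(weekly.items()))


if __name__ == "__main__":
    # Hypothetical sample: 10 hits per day from Monday 2 Sep 2019 to Monday 9 Sep 2019.
    sample = {date(2019, 9, d): 10 for d in range(2, 10)}
    print(hits_by_iso_week(sample))  # {(2019, 36): 70, (2019, 37): 10}
```

ISO weeks are used so that a week never straddles two buckets at a year boundary; monthly or quarterly views would follow the same pattern with a different bucket key.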

Figure-1

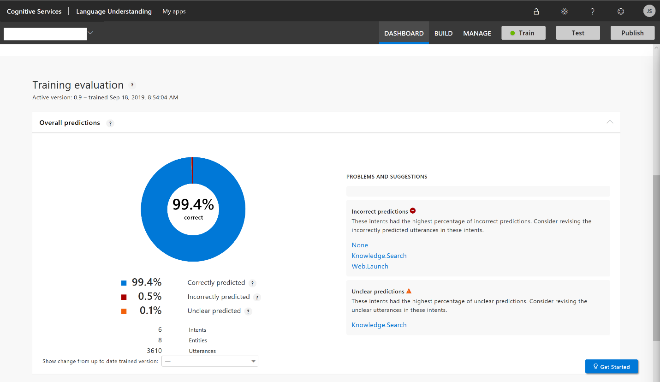

Next, as shown in Figure-2, the Dashboard presents the overall training evaluation as a donut chart. The donut chart summarizes overall prediction quality; to improve the app’s performance, the user should focus on the unclear and incorrectly predicted intents. Percentage correct is defined as the percentage of utterances for which the predicted intent matches the labeled intent.
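The donut-chart categories can be approximated offline. This is a minimal sketch, not the Dashboard’s actual logic: it assumes each evaluated utterance comes with its labeled intent and a score per intent, treats a match whose top score is within a hypothetical `UNCLEAR_MARGIN` of the runner-up as unclear, and computes percentage correct as defined above, so unclear matches still count as matches.

```python
from collections import Counter

# Hypothetical threshold: a correct prediction whose top score beats the runner-up by
# less than this margin is treated as "unclear". LUIS's own rule may differ.
UNCLEAR_MARGIN = 0.15


def classify(labeled: str, scores: dict[str, float], margin: float = UNCLEAR_MARGIN) -> str:
    """Label one evaluated utterance as 'correct', 'unclear' or 'incorrect'."""
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    top_intent, top_score = ranked[0]
    second_score = ranked[1][1] if len(ranked) > 1 else 0.0
    if top_intent != labeled:
        return "incorrect"
    if top_score - second_score < margin:
        return "unclear"
    return "correct"


def summarize(evaluations: list[tuple[str, dict[str, float]]]) -> dict[str, float]:
    """Count each category and compute percentage correct (predicted == labeled).

    Expects at least one evaluated utterance.
    """
    buckets = Counter(classify(labeled, scores) for labeled, scores in evaluations)
    matched = buckets["correct"] + buckets["unclear"]  # both have the right top intent
    summary = {name: buckets[name] for name in ("correct", "unclear", "incorrect")}
    summary["percent_correct"] = 100.0 * matched / len(evaluations)
    return summary
```

Keeping `classify` separate from `summarize` makes it easy to reuse the same per-utterance labels for the per-intent views discussed next.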

Figure-2 shows that roughly 99.4% of the predicted intents match the labeled intents for this app, and it lists the intents with the largest number of incorrect or unclear predictions. The Dashboard can also compare performance against an earlier version of the app.

Figure-2

When the number of example utterances varies significantly across intents, the data is imbalanced. All intents except the ‘None’ intent should have roughly the same number of example utterances; the ‘None’ intent should hold only 10%-15% of the total utterances in the app. If the data is imbalanced but intent accuracy is above a certain threshold, the imbalance is not reported as an issue.
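A quick balance check along these lines can be run on your own list of labeled examples before training. This is a sketch under stated assumptions: the 10%-15% window for the ‘None’ intent comes from the guideline above, while `check_balance` and its `tolerance` (allowed relative deviation from the mean count of the other intents) are hypothetical and not the threshold LUIS uses.

```python
from collections import Counter


def check_balance(
    examples: list[tuple[str, str]],
    none_range: tuple[float, float] = (0.10, 0.15),
    tolerance: float = 0.5,
) -> list[str]:
    """Return warnings about imbalance in (utterance, intent) example pairs.

    none_range follows the 10%-15% guideline for the None intent; tolerance
    (hypothetical) is the allowed relative deviation from the mean for other intents.
    """
    counts = Counter(intent for _, intent in examples)
    total = sum(counts.values())
    if total == 0:
        return ["no example utterances"]
    warnings = []
    none_share = counts.get("None", 0) / total
    low, high = none_range
    if not low <= none_share <= high:
        warnings.append(f"None holds {none_share:.0%} of utterances (target {low:.0%}-{high:.0%})")
    others = {intent: n for intent, n in counts.items() if intent != "None"}
    if others:
        mean = sum(others.values()) / len(others)
        for intent, n in sorted(others.items()):
            # Flag intents whose example count strays too far from the average.
            if abs(n - mean) > tolerance * mean:
                warnings.append(f"{intent}: {n} examples vs. mean {mean:.1f}")
    return warnings
```

An empty result means the examples fit both rules; any warnings point to intents that need more (or fewer) example utterances.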

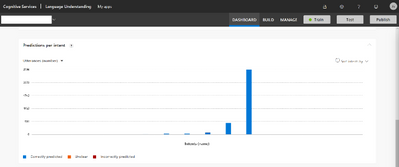

Figure-3a and Figure-3b break down the predictions by intent as a bar plot, shown either as a percentage or as a count of utterances, so the app author can assess prediction quality per intent. Here the user should look for the intents with the greatest number of incorrect or unclear predictions. Effective apps have a high proportion of correctly predicted example utterances, both within each intent and across intents.

Figure-3a and Figure-3b: Predictions per intent
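The per-intent view can be mirrored with a small tally that reports both the count and the percentage for each outcome. The sketch assumes each utterance has already been labeled as correct, unclear, or incorrect (how that labeling is done is outside this sketch); `per_intent_breakdown` is a hypothetical helper.

```python
from collections import Counter, defaultdict

OUTCOMES = ("correct", "unclear", "incorrect")


def per_intent_breakdown(results: list[tuple[str, str]]) -> dict[str, dict[str, tuple[int, float]]]:
    """Hypothetical helper: map each labeled intent to (count, percentage) per outcome."""
    tallies = defaultdict(Counter)
    for intent, outcome in results:
        tallies[intent][outcome] += 1
    table = {}
    for intent, tally in sorted(tallies.items()):
        total = sum(tally.values())
        # Both views: raw count and share of this intent's utterances, rounded to 0.1%.
        table[intent] = {o: (tally[o], round(100.0 * tally[o] / total, 1)) for o in OUTCOMES}
    return table
```

Returning counts and percentages together matters because a small intent can show a high error percentage from only one or two utterances.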

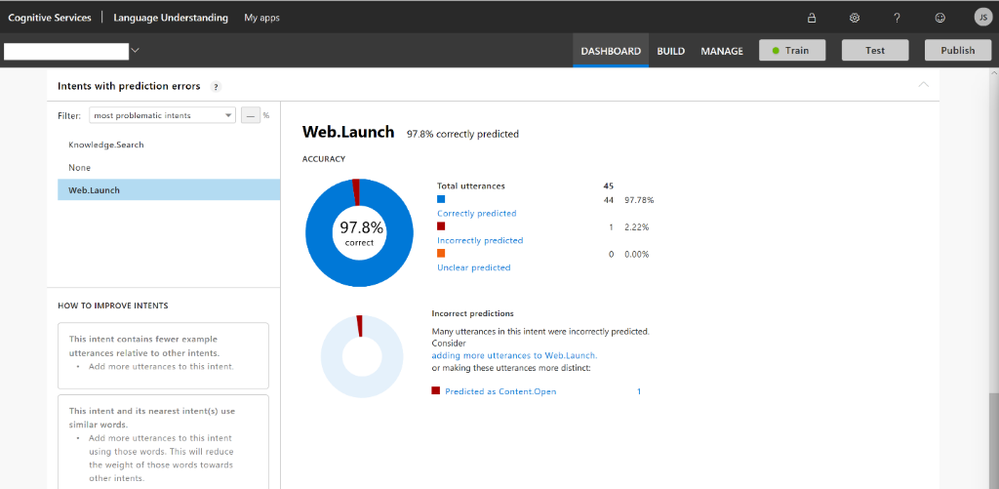

After reviewing the visuals above, the author can use Figure-4 to pinpoint the most problematic intents and determine what needs to be done to improve their predictions.
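Ranking intents by how often they go wrong can be sketched as a sort on an error count. Here the error count is assumed to be incorrect plus unclear predictions; the Dashboard’s exact ranking rule is not described in this post, and `most_problematic` is a hypothetical helper.

```python
def most_problematic(outcome_counts: dict[str, dict[str, int]], top_n: int = 3) -> list[tuple[str, int]]:
    """Hypothetical helper: return the top_n intents with the most errors."""
    # Assumed error count per intent: incorrect + unclear predictions.
    errors = {
        intent: counts.get("incorrect", 0) + counts.get("unclear", 0)
        for intent, counts in outcome_counts.items()
    }
    # Sort by descending error count, breaking ties alphabetically for stable output.
    ranked = sorted(errors.items(), key=lambda kv: (-kv[1], kv[0]))
    # Drop intents with no errors; they are not problematic.
    return [(intent, n) for intent, n in ranked if n > 0][:top_n]
```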

Figure-4

As shown, the built-in ‘Dashboard’ is a good way for the app author to quickly gauge the app’s performance and identify problematic intents.

Addressing issues in your model

Adding or editing example utterances and retraining is the primary way to improve your app’s performance. New or changed utterances should follow the guidelines for varied example utterances.
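Adding utterances and retraining can also be scripted against the LUIS authoring REST API instead of the portal. The sketch below only builds the requests with Python’s standard library and does not send them. The v2.0 route shapes (`.../examples` to batch-add labeled utterances, `.../train` to queue training), the `Ocp-Apim-Subscription-Key` header, and every argument value are assumptions to verify against the current authoring API reference; the helper names are hypothetical.

```python
import json
import urllib.request

# Assumed LUIS v2.0 authoring route prefix; verify against the current API reference.
API_PATH = "luis/api/v2.0/apps/{app_id}/versions/{version}"


def build_add_examples_request(endpoint, app_id, version, key, examples):
    """Hypothetical helper: build (not send) a batch request adding (text, intent) examples."""
    url = f"{endpoint}/{API_PATH.format(app_id=app_id, version=version)}/examples"
    # No entity labels in this sketch; each example only names its intent.
    body = [{"text": text, "intentName": intent, "entityLabels": []} for text, intent in examples]
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Ocp-Apim-Subscription-Key": key, "Content-Type": "application/json"},
        method="POST",
    )


def build_train_request(endpoint, app_id, version, key):
    """Hypothetical helper: build (not send) a request that queues training for a version."""
    url = f"{endpoint}/{API_PATH.format(app_id=app_id, version=version)}/train"
    return urllib.request.Request(url, headers={"Ocp-Apim-Subscription-Key": key}, method="POST")
```

To actually send a request, pass it to `urllib.request.urlopen`. Training in LUIS is queued rather than immediate, so check the training status before publishing the new version.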

As a rule of thumb, example utterances should be added by someone who:

- has a thorough understanding of what utterances mean in different contexts.

- knows how utterances in one intent may be confused with another intent.

- is able to decide whether two intents that are frequently confused with each other should be collapsed into a single intent. If so, the information that distinguished them must be extracted with entities.
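To find candidate intents to collapse, one option is to count how often each pair of intents is confused in either direction. The sketch assumes a list of (labeled, predicted) intent pairs from an evaluation run; `confused_pairs` and its `min_count` cutoff are hypothetical.

```python
from collections import Counter


def confused_pairs(predictions: list[tuple[str, str]], min_count: int = 2) -> list[tuple[tuple[str, str], int]]:
    """Hypothetical helper: unordered intent pairs confused at least min_count times."""
    # Sorting each pair makes A->B and B->A mistakes count toward the same pair.
    pairs = Counter(
        tuple(sorted((labeled, predicted)))
        for labeled, predicted in predictions
        if labeled != predicted
    )
    # Most frequently confused pairs first.
    return [(pair, n) for pair, n in pairs.most_common() if n >= min_count]
```

A pair that dominates this list in both directions is a hint, not proof, that the two intents overlap; the final call still needs the domain judgment described above.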

The Dashboard doesn’t indicate when to use patterns or phrase lists. Adding them can help with incorrect or unclear predictions, but it won’t help with data imbalance.

References

- https://techcommunity.microsoft.com/t5/AI-Customer-Engineering-Team/Adding-multi-language-support-for-Azure-AI-applications-quickly/ba-p/812028

- https://www.luis.ai/

- https://docs.microsoft.com/en-us/azure/cognitive-services/luis/what-is-luis

- https://docs.microsoft.com/en-us/azure/cognitive-services/luis/luis-concept-prediction-score