This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

Mine Knowledge on File Names and Paths with a Python Custom Skill

Azure Cognitive Search is the Microsoft product for Knowledge Mining, a process to extract information from unstructured or semi-structured data. However, extracting knowledge from file names or paths is not trivial. In this article you will learn how to do it using Azure Functions for Python, which reached GA on August 19th, 2019.

Why mine knowledge of file names and paths?

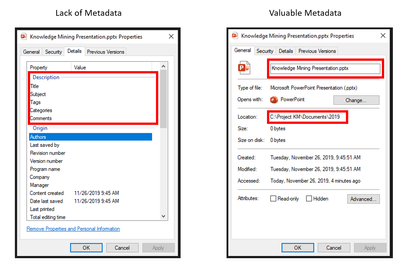

The lack of metadata is a common scenario in companies all over the world. People usually don’t have the time or discipline to add tags or comments to their documents, leaving the file name and its path as the only information available for searches. Knowledge Mining addresses this problem by creating the metadata for you: Azure Cognitive Search uses AI to extract insights from the content of your files.

But look at Figure 1. From the file name and its path alone, we have already discovered the name of the project, the year, the type of the file, and its title, among other things. It is very common to see dates, times, names, and locations in file names and paths.

Figure 1: Lack of metadata and valuable metadata

So, what is the problem? Why do we need a Custom Skill?

Today, in November 2019, you will face a challenge if you want to mine knowledge from file names and paths using Azure Cognitive Search: it is not supported out of the box.

This situation may change soon, but for now the service only allows you to extract insights, like key phrases and entities, from text that OCR finds in images or from the textual content of files such as PDF, TXT, and Microsoft Word documents.

Imagine that your dataset has PDF and Word documents, all of them with images that may or may not contain text, plus the text itself, which is called content within the Azure Cognitive Search Enrichment Pipeline. When your skillset does OCR on the images, you will have 2 “bodies” of text for each file: the document’s content and the OCR Text of the images of that same document.

If you want to extract key phrases from both bodies of text, you have 2 options: 1) Do it twice, meaning that you will need 2 fields for the same thing in the index. This option requires extra work from developers to offer a unified search experience in the application interface. 2) Merge both bodies with the Merge Text Skill, whose documentation includes details and examples.

But what the documentation does not mention is this: without offsets, it won’t work, and only OCR returns offsets for you. That’s why you need a custom skill to merge any other text body from your enrichment pipeline: you want to detect insights from the file name and store them in the same fields you have for Content and OCR Text. This article focuses on the file name, but the same approach would work with the file’s dates, path, author, etc.

You may also need to merge the outputs of other skills, like sentiment or image analysis. Let’s imagine another situation, where your dataset includes images that are not embedded in a document and contain no text, like photos. There will be neither content nor OCR Text. Your alternative is to use the Image Analysis skill to get tags, captions, and more. But what if you want to extract key phrases from this data? Does the photo name carry some information? Again, you will need a custom skill to merge annotations, or metadata, within your enrichment pipeline.

Code and Deployment

The code for the suggested solution is available in this GitHub repo. Here are some important guidelines:

- If you are curious to know why I am using Python and Azure Functions, check this previous blog post.

- Start with this tutorial to create and deploy your environment.

- The recommended Python version is 3.7.4, so if you use the current Anaconda version (November 2019), you don’t need to worry about versions. If you are reading this in the future and have a newer version, you can use conda to create the required environment: conda create -n your-env-name python=3.6

- When you create a local project with the command func init your-project-name, all necessary files are created within your project folder, including one file for requirements (similar to a yml file) and a py file that is a template for your code. At the end of the day, Azure Functions will simulate conda with the requirements you specify in the requirements.txt file.

- Please note that you need to use mimetype="application/json" for your HTTP response, since the Cognitive Search interface expects JSON in return.

- You will need to pip install functions from your command line interface.

- The code removes special characters. Please check your business requirements and the lessons learned below to define what transformations you need.

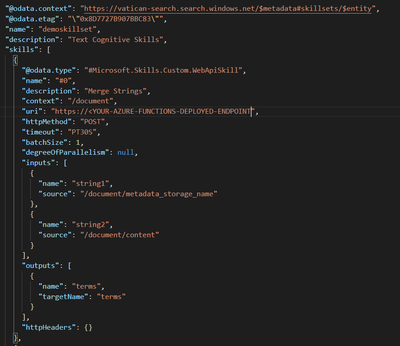

- As Figure 2 shows, the metadata_storage_name and the content are the input strings for the skill.

Figure 2: How to connect the Custom Skill to your Skillset
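To make the guidelines above more concrete, here is a minimal sketch of the merge logic such a skill could contain. It is not the code from the GitHub repo. It assumes the standard custom skill contract (a "values" array of records, each with a "recordId" and a "data" object), and the input names file_name and content and the output name merged_text are hypothetical, chosen only for illustration. The Azure Functions wrapper is left as a comment so the sketch runs with the standard library alone.

```python
import json
import re


def merge_record(record):
    """Build the output record for one input record of a custom skill request."""
    data = record.get("data", {})
    # Hypothetical input names: they must match the "inputs" declared
    # for the custom skill in your skillset.
    file_name = data.get("file_name") or ""
    content = data.get("content") or ""

    # Replace special characters (anything that is not a letter, digit, or
    # whitespace, plus the underscore) with spaces, then collapse whitespace.
    cleaned = re.sub(r"[^\w\s]|_", " ", file_name)
    cleaned = re.sub(r"\s+", " ", cleaned).strip()

    # Join the non-empty parts; an empty input yields an empty string.
    merged = " ".join(part for part in (cleaned, content.strip()) if part)
    return {
        "recordId": record["recordId"],
        "data": {"merged_text": merged},  # hypothetical output name
        "errors": [],
        "warnings": [],
    }


def run_skill(request_body):
    """Process every record of the request and return the response body."""
    values = request_body.get("values", [])
    return {"values": [merge_record(record) for record in values]}


request = {
    "values": [
        {
            "recordId": "1",
            "data": {
                "file_name": "vacation_summer_in_Brazil_01.jpg",
                "content": "Beach photos.",
            },
        }
    ]
}

# json.dumps lets you inspect exactly what would be sent to Cognitive Search.
response_body = json.dumps(run_skill(request))
print(response_body)

# Inside an Azure Function, the same string would typically be returned as:
#   return func.HttpResponse(response_body, mimetype="application/json")
```

Keeping the merge logic in plain functions like these makes it easy to test locally before wiring it to the HTTP trigger.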

Key Lessons Learned

Here is a list of good practices from our experience when creating this solution for a client:

- When possible, leverage global cached data for the reference data. It is not guaranteed that the state of your app will be preserved for future executions. However, the Azure Functions runtime often reuses the same process for multiple executions of the same app. In order to cache the results of an expensive computation, declare it as a global variable.

- Always prepare your code to deal with empty result sets. If a term is filtered out, an empty string is added to the result set.

- VS Code and Postman work great for local debugging. You just need to save the new version of your Python code and the changes take effect immediately, without restarting the service. This dynamic process allows you to quickly change your code and see the results.

- In your code, use json.dumps on your output variable to validate what your skill returns to Cognitive Search. This gives you the opportunity to fix the layout in case of error.

- The Text Analytics API, which is used under the hood, will remove characters like "_" or "-". But if you submit "vacation_summer_in_Brazil_01.jpg", you will get "Brazil" as an entity of the location type, and nothing else. However, if you submit "vacation summer in Brazil 01 jpg", you will get:

- Key Phrases: vacation summer, Brazil, jpg

- Entities: Brazil (location), summer (datetime-dateRange), 01 (quantity-number).

Is there an alternative for a Custom Skill?

Yes, there is. It is also not supported or documented, and it uses only built-in skills. I don’t recommend this alternative, since I have never tested it and many more steps are required to achieve a similar objective.

Offsets and itemsToInsert are Merge Skill properties that expect arrays. You can submit the file name, or any other string, to the Split Skill. It will split the content into an array of 2 positions, and then you need to use the Conditional Skill to create an array with the hard-coded values. The last step is to run the Merge Skill to merge the contents of the array with the rest of the content.

PowerSkills – Azure Search Team Official Custom Skills

For click-to-deploy C# custom skills created by the Azure Search Team, use the Azure Search Power Skills. Previous C# knowledge or software installations aren't required, because they follow the "one click deployment" concept. There are custom skills for lookup, distinct (duplicate removal), and more.

Related Links

Useful links for those who want to know more about Knowledge Mining:

- The code of this blog post - GitHub

- Knowledge Mining Accelerator - ms/kma

- Knowledge Mining Bootcamp – ms/kmb

- Knowledge Mining posts – ms/ACE-Blog

Conclusion

This post helps you create a Python Custom Skill for Azure Cognitive Search, based on Azure Functions for Python. It merges 2 strings into a third one. A typical use is to concatenate, within an Enrichment Pipeline, the file name or path with the content. This skill is suited to scenarios where the file name or path contains dates, organizations, names, or key phrases.