This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

R is an open-source language used for statistical computing and graphics. It's used in fields such as genetics, natural language processing, and financial data analysis. R provides an interactive command line experience.

RStudio is an integrated development environment (IDE) for the R language. The free version provides code editing tools, an integrated debugging experience, and package development tools. It includes a console, a syntax-highlighting editor that supports direct code execution, and tools for plotting, history, debugging, and workspace management. RStudio is available in open source and commercial editions and runs on the desktop (Windows, Mac, and Linux) or in a browser connected to RStudio Server or RStudio Workbench (Debian/Ubuntu, Red Hat/CentOS, and SUSE Linux). RStudio deployments require shared file storage for project files and user-specific files such as code, documents, user configuration, and session data.

RStudio Connect is a publishing platform for the work your teams create in R and Python. Share Shiny applications, R Markdown reports, Plumber APIs, dashboards, plots, Jupyter Notebooks, and more in one convenient place. Use push-button publishing from the RStudio IDE, scheduled execution of reports, and flexible security policies to bring the power of data science to your entire enterprise. RStudio Connect manages uploaded content within the server's data directory, and this data directory must be in a shared location.

RStudio Team is a bundle of RStudio professional products for statistical data analysis, sharing data products, and managing packages.

Most RStudio products require high-performance, low-latency shared storage. In particular, configurations that load balance across two or more nodes need a networked storage solution. Shared storage persists content such as project files and application data across your network. RStudio recommends and supports the NFS protocol for this purpose.

Azure NetApp Files is a first-party Azure service for migrating and running the most demanding enterprise file workloads in the cloud, including native SMBv3.0 and NFS (v3.0 and v4.1) file shares, databases, SAP, and high-performance computing applications, with no code changes. R workloads are characterised by high IOPS and throughput requirements. Azure NetApp Files can deliver approximately 130,000 IOPS for pure random writes and approximately 460,000 IOPS for pure random reads, with extremely low latency and up to 4.4 GBps of throughput. This makes it well suited to hosting R workloads.

With Azure NetApp Files, you can set up a native NFSv3 or NFSv4.1 volume as described below:

Prerequisites

- You need access to the Azure portal and an active subscription in which to provision resources.

- You must have already set up a capacity pool.

- A subnet must be delegated to Azure NetApp Files.

- The NFS client should be in the same VNet or peered VNet as the Azure NetApp Files volume. Connecting from outside the VNet is supported; however, it will introduce additional latency and decrease overall performance.

- Ensure that the NFS client is up to date with the latest operating system updates.

Deciding which NFS version to use:

NFSv3 can handle a wide variety of use cases and is commonly deployed in most enterprise applications. You should validate what version (NFSv3 or NFSv4.1) your application requires and create your volume using the appropriate version. For example, if you use Apache ActiveMQ, file locking with NFSv4.1 is recommended over NFSv3.

Security

Support for UNIX mode bits (read, write, and execute) is available for NFSv3 and NFSv4.1. Root-level access is required on the NFS client to mount NFS volumes.

Steps

1. Create an NFS volume

Click the Volumes blade from the Capacity Pools blade. Click + Add volume to create a volume.

In the Create a Volume window, click Create, and provide information for the following fields under the Basics tab:

Volume name

Specify the name for the volume that you are creating.

A volume name must be unique within each capacity pool. It must be at least three characters long. The name must begin with a letter. It can contain letters, numbers, underscores ('_'), and hyphens ('-') only.

You cannot use default or bin as the volume name.
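The volume name rules above can be checked locally before you submit the form. The following Python sketch encodes only the rules listed here. `validate_volume_name` is a hypothetical helper, not part of any Azure SDK, and it compares names exactly (case-sensitively), which is an assumption rather than documented service behaviour.

```python
import re

# Rules from the list above: starts with a letter, at least three characters,
# and only letters, digits, underscores, and hyphens.
VOLUME_NAME_PATTERN = re.compile(r"[A-Za-z][A-Za-z0-9_-]{2,}")
RESERVED_NAMES = {"default", "bin"}


def validate_volume_name(name: str, names_in_pool: set[str]) -> list[str]:
    """Return the rule violations for `name`; an empty list means it passes."""
    problems = []
    if not VOLUME_NAME_PATTERN.fullmatch(name):
        problems.append("must start with a letter, be at least 3 characters long, "
                        "and contain only letters, digits, '_' or '-'")
    if name in RESERVED_NAMES:  # assumption: exact, case-sensitive comparison
        problems.append("'default' and 'bin' are reserved names")
    if name in names_in_pool:  # assumption: exact, case-sensitive comparison
        problems.append("already used in this capacity pool")
    return problems


print(validate_volume_name("rstudio-home", {"connect-data"}))  # []
print(validate_volume_name("bin", set()))  # ["'default' and 'bin' are reserved names"]
```

The portal remains the authority on naming; a pre-check like this is mainly useful when volume names are generated by scripts.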

Capacity pool

Specify the capacity pool where you want the volume to be created.

Quota

Specify the amount of logical storage that is allocated to the volume.

The Available quota field shows the amount of unused space in the chosen capacity pool that you can use towards creating a new volume. The size of the new volume must not exceed the available quota.

Throughput (MiB/s)

If the volume is created in a manual QoS capacity pool, specify the throughput you want for the volume.

If the volume is created in an auto QoS capacity pool, the value displayed in this field is (quota x service level throughput).
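In an auto QoS capacity pool, quota and throughput are linked, so it can help to estimate throughput while choosing a quota. The sketch below assumes the per-TiB throughput figures Azure has published for each service level (Standard 16, Premium 64, Ultra 128 MiB/s per TiB of quota). Treat these values as an assumption that may change and confirm them in the current Azure NetApp Files documentation. `check_quota` and `auto_qos_throughput_mibps` are hypothetical helper names.

```python
# Assumption: published per-TiB throughput for each service level, in MiB/s.
# Verify these figures against current Azure NetApp Files documentation.
SERVICE_LEVEL_MIBPS_PER_TIB = {
    "Standard": 16,
    "Premium": 64,
    "Ultra": 128,
}


def check_quota(requested_gib: float, available_gib: float) -> None:
    """Raise if the new volume would exceed the pool's available quota."""
    if requested_gib > available_gib:
        raise ValueError(
            f"requested {requested_gib} GiB exceeds available quota of {available_gib} GiB"
        )


def auto_qos_throughput_mibps(quota_gib: float, service_level: str) -> float:
    """Estimate auto QoS throughput: quota (in TiB) x service level throughput per TiB."""
    quota_tib = quota_gib / 1024  # 1 TiB = 1024 GiB
    return quota_tib * SERVICE_LEVEL_MIBPS_PER_TIB[service_level]


# Example: a 4 TiB Premium volume in a pool with 10 TiB unused.
check_quota(4096, 10240)
print(auto_qos_throughput_mibps(4096, "Premium"))  # 256.0
```

Under these assumptions the same 4 TiB quota would get about 512 MiB/s at the Ultra service level, so in an auto QoS pool either a larger quota or a higher service level raises a volume's throughput.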

Virtual network

Specify the Azure virtual network (VNet) from which you want to access the volume.

The VNet you specify must have a subnet delegated to Azure NetApp Files. The Azure NetApp Files service can be accessed only from the same VNet, or from a VNet in the same region as the volume through VNet peering. You can also access the volume from your on-premises network through ExpressRoute.

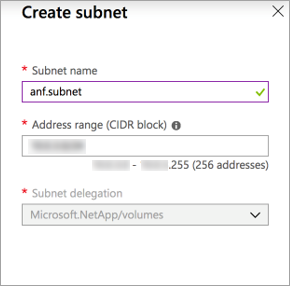

Subnet

Specify the subnet that you want to use for the volume.

The subnet you specify must be delegated to Azure NetApp Files.

If you have not delegated a subnet, you can click Create new on the Create a Volume page. Then, on the Create Subnet page, specify the subnet information and select Microsoft.NetApp/volumes to delegate the subnet to Azure NetApp Files. In each VNet, only one subnet can be delegated to Azure NetApp Files.

If you want to apply an existing snapshot policy to the volume, click Show advanced section to expand it, specify whether you want to hide the snapshot path, and select a snapshot policy in the pull-down menu.

For information about creating a snapshot policy, see Manage snapshot policies.

Click Protocol, and then complete the following actions:

Select NFS as the protocol type for the volume.

Specify a unique file path for the volume. This path is used when you create mount targets. The requirements for the path are as follows:

- It must be unique within each subnet in the region.

- It must start with an alphabetical character.

- It can contain only letters, numbers, or dashes (-).

- The length must not exceed 80 characters.
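The file path rules differ from the volume name rules: underscores are not allowed, and uniqueness is per subnet rather than per capacity pool. A minimal pre-check sketch in Python, where `validate_file_path` is a hypothetical helper and reading "alphabetical character" as ASCII letters only is an assumption:

```python
import re


def validate_file_path(path: str, paths_in_subnet: set[str]) -> list[str]:
    """Return the rule violations for an NFS file path; an empty list means it passes."""
    problems = []
    if not re.match(r"[A-Za-z]", path):  # assumption: ASCII letters only
        problems.append("must start with a letter")
    if not re.fullmatch(r"[A-Za-z0-9-]*", path):
        problems.append("may contain only letters, digits, and '-'")
    if len(path) > 80:
        problems.append("must not exceed 80 characters")
    if path in paths_in_subnet:  # uniqueness is scoped to the subnet
        problems.append("already used in this subnet")
    return problems


print(validate_file_path("rstudio-home", set()))  # []
print(validate_file_path("r_data", set()))  # ["may contain only letters, digits, and '-'"]
```

Because underscores are valid in volume names but not in file paths, a volume named `r_data` needs a different file path, such as `r-data`.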

Select the Version (NFSv3 or NFSv4.1) for the volume.

If you are using NFSv4.1, indicate whether you want to enable Kerberos encryption for the volume.

Optionally, configure an export policy for the NFS volume.

Click Review + Create to review the volume details. Then click Create to create the volume. The volume you created appears on the Volumes page. A volume inherits the subscription, resource group, and location attributes of its capacity pool. To monitor the volume deployment status, you can use the Notifications tab.

2. Mount the Azure NetApp Files NFS volume on your RStudio clients

Click the Volumes blade, and then select the volume that you want to mount.

Click Mount instructions from the selected volume, and then follow the instructions to mount the volume.
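The Mount instructions in the portal give the exact command for your volume and should take precedence. As an illustration of what such a command typically looks like on a Linux RStudio client, the sketch below assembles a `mount` command line. The helper name, the example IP address and paths, and the option values (`rw`, `hard`, `rsize`/`wsize` of 262144, `tcp`, and `sec=sys` for NFSv4.1) are assumptions for illustration. They are standard Linux NFS mount options, but confirm them against the Mount instructions for your volume.

```python
import shlex


def build_mount_command(server_ip: str, file_path: str, mount_point: str,
                        nfs_version: str = "3") -> str:
    """Build an illustrative Linux mount command for an NFS volume (hypothetical helper)."""
    if nfs_version not in ("3", "4.1"):
        raise ValueError("Azure NetApp Files NFS volumes use NFSv3 or NFSv4.1")
    # Assumed options; replace with the values from the portal's Mount instructions.
    options = ["rw", "hard", "rsize=262144", "wsize=262144"]
    if nfs_version == "4.1":
        options.append("sec=sys")  # AUTH_SYS; Kerberos-enabled volumes need a different sec= value
    options += [f"vers={nfs_version}", "tcp"]
    args = ["sudo", "mount", "-t", "nfs", "-o", ",".join(options),
            f"{server_ip}:/{file_path}", mount_point]
    # shlex.join quotes any argument containing shell-special characters.
    return shlex.join(args)


# The mount point directory must exist first, e.g. `sudo mkdir -p /mnt/rstudio-home`.
print(build_mount_command("10.0.0.4", "rstudio-home", "/mnt/rstudio-home"))
# prints: sudo mount -t nfs -o rw,hard,rsize=262144,wsize=262144,vers=3,tcp 10.0.0.4:/rstudio-home /mnt/rstudio-home
```

Generating the command from the volume's IP address and file path keeps every RStudio node in a load-balanced setup mounting the same share with identical options.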

R analytics jobs may require different levels of underlying storage performance depending on their processing algorithms. With Azure NetApp Files, you can tune storage performance non-disruptively to match these requirements.

For details, see Dynamically change the service level of a volume:

https://docs.microsoft.com/en-us/azure/azure-netapp-files/dynamic-change-volume-service-level

This article is authored by:

Cloud Solutions Architect at NetApp