This post has been republished via RSS; it originally appeared at Microsoft Tech Community - Latest Blogs.

This post is a continuation of How to Monitor the Azure Storage Account Data; Part I can be found here. In this post, we discuss additional monitoring capabilities of Azure Storage accounts. It covers the following topics:

- How to check authorizing requests with Shared Key or SAS

- Prerequisites for switching shared key authentication to user delegation SAS

- Lifecycle management (LCM) logging info and how to check policy management failure

- Transactions, Metrics and targets for Azure Storage

- Guidance on timeout and Server Busy errors

How to check authorizing requests with Shared Key or SAS:

By default, Shared Key access is enabled on all existing and new storage accounts. Azure Storage logs in Azure Monitor include the type of authorization that was used to make a request to a storage account, i.e., Shared Key or SAS.

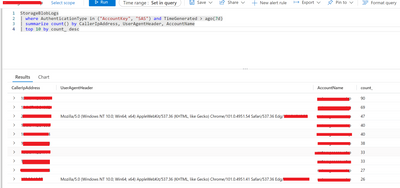

Using a Log Analytics workspace, we can retrieve the logs for requests that were authorized with Shared Key or SAS. The query below displays the IP addresses, AccountName, and user agent header for the most frequently sent requests that were authorized with either Shared Key or SAS.

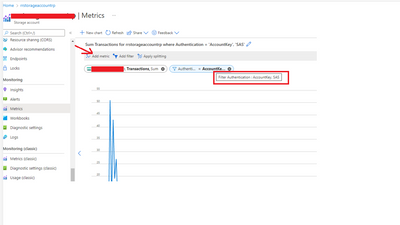
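Because the query above is only available as a screenshot, here is a minimal Python sketch of the same kind of aggregation, applied to log records that have already been exported from the workspace. The field names (`AuthenticationType`, `CallerIpAddress`, `UserAgentHeader`, `AccountName`) and the values `AccountKey` and `SAS` are assumptions about the exported log schema, and the sample records are made up for illustration.

```python
from collections import Counter

# Assumed values of the AuthenticationType field for Shared Key and SAS requests.
KEY_BASED_AUTH = {"AccountKey", "SAS"}


def top_key_based_callers(records, limit=10):
    """Count Shared Key / SAS requests per (IP, user agent, account), most frequent first."""
    counts = Counter(
        (r["CallerIpAddress"], r["UserAgentHeader"], r["AccountName"])
        for r in records
        if r.get("AuthenticationType") in KEY_BASED_AUTH
    )
    return counts.most_common(limit)


# Made-up records standing in for rows exported from the workspace.
sample_records = [
    {"AuthenticationType": "AccountKey", "CallerIpAddress": "10.0.0.4",
     "UserAgentHeader": "agent-a", "AccountName": "demoaccount"},
    {"AuthenticationType": "SAS", "CallerIpAddress": "10.0.0.4",
     "UserAgentHeader": "agent-a", "AccountName": "demoaccount"},
    {"AuthenticationType": "OAuth", "CallerIpAddress": "10.0.0.9",
     "UserAgentHeader": "agent-b", "AccountName": "demoaccount"},
]

for caller, count in top_key_based_callers(sample_records):
    print(caller, count)
```

Requests authorized through Azure AD (the `OAuth` record in the sample) are left out, so the result lists only the callers that would be affected by disallowing Shared Key access.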

In addition, you can track how requests to a storage account are being authorized with Metrics Explorer in the Azure portal, by creating a metric that tracks requests made with Shared Key or SAS.

The following table shows how each type of SAS is authorized and how Azure Storage will handle that SAS when the AllowSharedKeyAccess property for the storage account is false.

| Type of SAS | Type of authorization | Response when AllowSharedKeyAccess is false |
|---|---|---|
| User delegation SAS (Blob storage only) | Azure AD | Request is permitted. Microsoft recommends using a user delegation SAS, when possible, for superior security. |
| Service SAS | Shared Key | Request is denied for all Azure Storage services. |
| Account SAS | Shared Key | Request is denied for all Azure Storage services. |
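
The rule in the table above can be written down as a small lookup. This is only an illustrative sketch in Python: the function and the SAS type labels (`user_delegation`, `service`, `account`) are hypothetical names, not part of any Azure SDK.

```python
# Authorization mechanism behind each SAS type, as listed in the table above.
SAS_AUTHORIZATION = {
    "user_delegation": "Azure AD",
    "service": "Shared Key",
    "account": "Shared Key",
}


def is_sas_permitted(sas_type, allow_shared_key_access):
    """Return True if a request using this SAS type would be authorized."""
    authorization = SAS_AUTHORIZATION[sas_type]
    # A SAS signed with the account key is denied once Shared Key access is off.
    return allow_shared_key_access or authorization != "Shared Key"


for sas_type in SAS_AUTHORIZATION:
    print(sas_type, is_sas_permitted(sas_type, allow_shared_key_access=False))
```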

You can prevent client applications from using Shared Key authorization. To understand how disallowing Shared Key authorization may affect client applications before you make this change, enable logging and metrics for the storage account. You can then analyse patterns of requests to your account over a period of time to determine how requests are being authorized.

After you have analysed how requests to your storage account are being authorized, you can take action to prevent access via Shared Key by setting the AllowSharedKeyAccess property for the storage account to false.

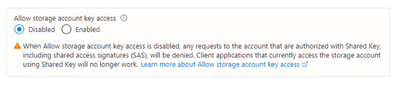

To disallow Shared Key authorization for a storage account in the Azure portal, follow these steps:

- Navigate to your storage account in the Azure portal.

- Locate the Configuration setting under Settings.

- Set Allow storage account key access to Disabled.

When you disallow Shared Key authorization for a storage account, requests from clients that are using the account access keys for Shared Key authorization will fail.

Prerequisites for switching shared key authentication to user delegation SAS:

Current SAS token generation mechanisms require access to the all-powerful storage access key. With the advent of Azure AD based authentication for Azure Storage, users and services can be granted limited access to data. A user delegation SAS is secured with Azure Active Directory (Azure AD) credentials and by the permissions specified for the SAS. A user delegation SAS applies to Blob storage only.

A user delegation SAS offers superior security to a SAS that is signed with the storage account key. Microsoft recommends using a user delegation SAS when possible. For more information, see Grant limited access to data with shared access signatures (SAS).

Assign permissions with Azure RBAC:

To create a user delegation SAS from Azure PowerShell, the Azure AD account used to sign in to Azure PowerShell must be assigned a role that includes the Microsoft.Storage/storageAccounts/blobServices/generateUserDelegationKey action. This permission enables that Azure AD account to request the user delegation key, which is used to sign the user delegation SAS. The role providing this action must be assigned at the level of the storage account, the resource group, or the subscription.

If you do not have sufficient permissions to assign Azure roles to an Azure AD security principal, you may need to ask the account owner or administrator to assign the necessary permissions.

For example, you can assign the Storage Blob Data Contributor role, which includes the Microsoft.Storage/storageAccounts/blobServices/generateUserDelegationKey action, with the role scoped at the level of the storage account.
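
As a rough sketch of what such an assignment involves, the Python snippet below builds the storage account scope and the arguments for a role assignment. Note the assumptions: it uses the Azure CLI (`az role assignment create`) rather than Azure PowerShell, it only prints the command instead of running it, and the subscription ID, resource group, account name, and assignee are placeholders.

```python
def storage_account_scope(subscription_id, resource_group, account_name):
    """Build the resource ID used as the scope of a storage account role assignment."""
    return (
        f"/subscriptions/{subscription_id}"
        f"/resourceGroups/{resource_group}"
        f"/providers/Microsoft.Storage/storageAccounts/{account_name}"
    )


def role_assignment_command(assignee, scope, role="Storage Blob Data Contributor"):
    """Return the Azure CLI arguments for the assignment (not executed here)."""
    return [
        "az", "role", "assignment", "create",
        "--assignee", assignee,
        "--role", role,
        "--scope", scope,
    ]


# Placeholder values; replace them with your own before running the command.
scope = storage_account_scope(
    "00000000-0000-0000-0000-000000000000", "demo-rg", "demoaccount"
)
print(" ".join(role_assignment_command("user@example.com", scope)))
```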

You can use this article to create a user delegation SAS for a container or blob.

The user delegation SAS URI returned will be similar to:

For more information about the user delegation SAS, see the official documentation.
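
One way to tell a user delegation SAS apart from a SAS signed with the account key is to look at the query parameters of the URI. The sketch below assumes that a user delegation SAS carries the signed-key fields `skoid`, `sktid`, `skt`, `ske`, `sks`, and `skv`; the sample URIs and all of their values are made up and are not valid tokens.

```python
from urllib.parse import parse_qs, urlsplit

# Query parameters assumed to describe the user delegation key in the SAS token.
USER_DELEGATION_FIELDS = {"skoid", "sktid", "skt", "ske", "sks", "skv"}


def is_user_delegation_sas(uri):
    """Return True if the SAS URI contains all user delegation key fields."""
    params = parse_qs(urlsplit(uri).query)
    return USER_DELEGATION_FIELDS.issubset(params)


# Made-up URIs for illustration only.
delegation_uri = (
    "https://demoaccount.blob.core.windows.net/demo/blob.txt"
    "?sv=2020-02-10&sr=b&sp=r&se=2030-01-01T00:00:00Z"
    "&skoid=oid&sktid=tid&skt=2030-01-01T00:00:00Z"
    "&ske=2030-01-02T00:00:00Z&sks=b&skv=2020-02-10&sig=placeholder"
)
service_uri = (
    "https://demoaccount.blob.core.windows.net/demo/blob.txt"
    "?sv=2020-02-10&sr=b&sp=r&se=2030-01-01T00:00:00Z&sig=placeholder"
)

print(is_user_delegation_sas(delegation_uri))
print(is_user_delegation_sas(service_uri))
```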

Lifecycle management (LCM) logging info and how to check policy management failure:

Lifecycle management offers a rich, rule-based policy for general-purpose v2 and Blob storage accounts. Use the policy to transition your data to the appropriate access tiers or to expire it at the end of the data's lifecycle. A new or updated policy may take up to 48 hours to complete.

You can use lifecycle management to automatically transition old blob versions to a cooler storage tier (hot to cool, hot to archive, or cool to archive) or delete old blob versions to optimize for cost.

The sample policy rule below moves a block blob in the container sample-container whose name begins with log to the cool tier if it has been more than 30 days since the blob was modified.

```json
{
  "rules": [
    {
      "enabled": true,
      "name": "move-to-cool",
      "type": "Lifecycle",
      "definition": {
        "actions": {
          "baseBlob": {
            "tierToCool": {
              "daysAfterModificationGreaterThan": 30
            }
          }
        },
        "filters": {
          "blobTypes": [
            "blockBlob"
          ],
          "prefixMatch": [
            "sample-container/log"
          ]
        }
      }
    }
  ]
}
```

Note: Each rule can have up to 10 case-sensitive prefixes and up to 10 blob index tag conditions.

The prefixMatch field of a policy is a full or partial blob path, which is used to match the blobs you want the policy actions to apply to. The path must start with the container name. If no prefix match is specified, the policy applies to all the blobs in the storage account. For more details, see the documentation here.
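
To make the prefix rule concrete, here is a small Python sketch of how a blob path could be compared with a rule's prefixes. It is a local illustration only: the function name is hypothetical, and it ignores the other filters (blob type and blob index tags).

```python
def rule_applies(container, blob_name, prefix_match):
    """Return True if the blob path matches one of the rule's prefixes.

    The path starts with the container name and matching is case-sensitive.
    An empty prefix list means the rule applies to every blob in the account.
    """
    if not prefix_match:
        return True
    path = f"{container}/{blob_name}"
    return any(path.startswith(prefix) for prefix in prefix_match)


prefixes = ["sample-container/log"]
print(rule_applies("sample-container", "log-2021-01.txt", prefixes))
print(rule_applies("sample-container", "Log-2021-01.txt", prefixes))
print(rule_applies("other-container", "log-2021-01.txt", prefixes))
```

The second call returns False because the prefix is case-sensitive, and the third returns False because the path starts with a different container name.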

Blob storage lifecycle management now supports blob versions.

When blob versioning is enabled for a storage account, Azure Storage automatically creates a new version of a blob each time that blob is modified or deleted.

Before you configure a lifecycle management policy, you can choose to enable blob access time tracking. When access time tracking is enabled, a lifecycle management policy can include an action based on the time that the blob was last accessed with a read or write operation.

How customers can Check Last Access Time on Blob Objects:

- Get Blob Properties: the last access time (LAT) property is returned as part of the response headers.

- List Blobs: the last access time is returned in the LastAccessTime element of the response body.
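
As a sketch of the List Blobs case, the Python snippet below reads the LastAccessTime element for each blob from a response body. The XML is a trimmed, made-up sample, and the assumptions are that LastAccessTime sits under each blob's Properties element and uses an RFC 1123 date.

```python
import xml.etree.ElementTree as ET
from email.utils import parsedate_to_datetime

# Trimmed, made-up List Blobs response body for illustration.
SAMPLE_RESPONSE = """
<EnumerationResults>
  <Blobs>
    <Blob>
      <Name>log-001.txt</Name>
      <Properties>
        <LastAccessTime>Wed, 01 Jan 2020 00:00:00 GMT</LastAccessTime>
      </Properties>
    </Blob>
    <Blob>
      <Name>log-002.txt</Name>
      <Properties />
    </Blob>
  </Blobs>
</EnumerationResults>
"""


def last_access_times(list_blobs_xml):
    """Map each blob name to its last access time, or None if it is not reported."""
    root = ET.fromstring(list_blobs_xml.strip())
    times = {}
    for blob in root.iter("Blob"):
        name = blob.findtext("Name")
        raw = blob.findtext("Properties/LastAccessTime")
        times[name] = parsedate_to_datetime(raw) if raw else None
    return times


for name, accessed in last_access_times(SAMPLE_RESPONSE).items():
    print(name, accessed)
```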

For more details, refer to the official documentation.

Troubleshoot policy management failure:

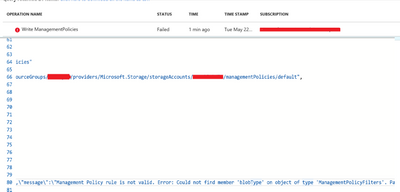

A user configures a policy and gets the following error in the portal:

Error: Error saving lifecycle policy

Error saving lifecycle policy for storage account ‘storage accountname’

Error: Bad Request

Details can be found under Activity Logs, which provide more information about the error. We can quickly check the JSON policy the customer is using and see whether the "blobTypes" member is missing. This documentation can be used as a quick reference for sample policies, member names, and keywords.
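
A quick local check of the policy JSON can catch this kind of problem before the policy is saved. The Python sketch below is not Azure's own validation: it is a hypothetical helper that looks only at the members used in the sample policy in this post (`type`, `definition.actions`, and `definition.filters.blobTypes`).

```python
import json


def find_policy_problems(policy_json):
    """Return human-readable problems found in a lifecycle policy document."""
    problems = []
    policy = json.loads(policy_json)
    for index, rule in enumerate(policy.get("rules", [])):
        label = rule.get("name", f"rule #{index}")
        definition = rule.get("definition", {})
        if rule.get("type") != "Lifecycle":
            problems.append(f"{label}: type should be 'Lifecycle'")
        if not definition.get("actions"):
            problems.append(f"{label}: definition.actions is missing or empty")
        if not definition.get("filters", {}).get("blobTypes"):
            problems.append(f"{label}: filters.blobTypes is missing or empty")
    return problems


# A rule that omits blobTypes, as in the failure described above.
broken_policy = json.dumps({
    "rules": [{
        "enabled": True,
        "name": "move-to-cool",
        "type": "Lifecycle",
        "definition": {
            "actions": {"baseBlob": {"tierToCool": {"daysAfterModificationGreaterThan": 30}}},
            "filters": {"prefixMatch": ["sample-container/log"]},
        },
    }]
})

for problem in find_policy_problems(broken_policy):
    print(problem)
```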

Note: If you enable firewall rules for your storage account, lifecycle management requests may be blocked. You can unblock these requests by providing exceptions for trusted Microsoft services. For more information, see the Exceptions section in Configure firewalls and virtual networks.

Transactions, Metrics and targets for Azure Storage:

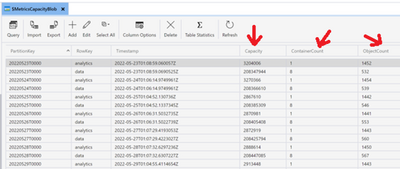

Transaction data is recorded at the service level and the API operation level. At the service level, statistics that summarize all requested API operations are written to a table entity every hour, even if no requests were made to the service. At the API operation level, statistics are only written to an entity if the operation was requested within that hour.

For example, if you perform a GetBlob operation on your blob service, Storage Analytics Metrics logs the request and includes it in the aggregated data for the blob service and the GetBlob operation. If no GetBlob operation is requested during the hour, an entity isn't written to $MetricsTransactionsBlob for that operation.

Currently, capacity metrics are available only for the blob service.

- Capacity: The amount of storage used by the storage account's blob service, in bytes.

- ContainerCount: The number of blob containers in the storage account's blob service.

- ObjectCount: The number of committed and uncommitted block or page blobs in the storage account's blob service.

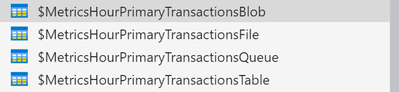

All metrics data for each of the storage services is stored in three tables reserved for that service. One table is for transaction information, one table is for minute transaction information, and another table is for capacity information. Transaction and minute transaction information consists of request and response data. Capacity information consists of storage usage data.

Hour metrics, minute metrics, and capacity data for a storage account's blob service are accessed in tables that are named as described in the following table.

Example: Hourly metrics, primary location. For the Blob, Table, and Queue services, supported for all versions.

Guidance on timeout and Server Busy errors:

When your application reaches the limit of what a partition can handle for your workload, Azure Storage begins to return error code 503 (Server Busy) or error code 500 (Operation Timeout) responses. If 503 errors are occurring, consider modifying your application to use an exponential backoff policy for retries. Exponential backoff allows the load on the partition to decrease and smooths out spikes in traffic to that partition. See the public documentation for more details.

Applications should retry requests that fail with an HTTP 503 or 500. Refer to the retry guidance here.
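
A retry loop with exponential backoff can be sketched in a few lines of Python. This is a generic illustration, not the retry policy built into the Azure Storage client libraries: `StorageRequestError` is a hypothetical exception that carries the HTTP status, and the delay values are arbitrary defaults.

```python
import random
import time

RETRYABLE_STATUS = {500, 503}


class StorageRequestError(Exception):
    """Hypothetical error carrying the HTTP status of a failed request."""

    def __init__(self, status):
        super().__init__(f"request failed with HTTP {status}")
        self.status = status


def call_with_backoff(operation, max_attempts=5, base_delay=0.5,
                      max_delay=30.0, sleep=time.sleep):
    """Run operation(), retrying 500/503 failures with exponential backoff."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except StorageRequestError as error:
            last_attempt = attempt == max_attempts - 1
            if error.status not in RETRYABLE_STATUS or last_attempt:
                raise
            # Double the delay on every attempt, capped at max_delay.
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Add jitter so that many clients do not retry at the same moment.
            sleep(delay + random.uniform(0, delay / 2))
```

The `sleep` parameter exists so that the wait can be replaced in tests. Errors other than 500 and 503 are raised immediately, because retrying them would not reduce the load on the partition.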

We document throttling and ServerBusy errors here

Note: Azure Storage standard accounts support higher capacity limits and higher limits for ingress and egress by request. To request an increase in account limits, contact Azure Support.

Hope this can be helpful!