This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

We are pleased to announce preview of MySQL Data Migration - Consistent Backup feature, which allows users to take a Consistent Backup of a MySQL server without losing data integrity at source because of ongoing CRUD (Create, Read, Update, and Delete) operations. This feature is useful to maintain data integrity between source and target server and also enables it to be used in conjunction with Data-in replication or third-party tools (such as Striim or Qlik) that provides bin log replication to perform online migration with minimal downtime.

Transactional consistency is achieved without the need to set the source server to read-only mode through this feature.

Current Implementation

In the current implementation, users can enable the Make Source Server Read Only option during offline migration. This maintains the data integrity of the target database as the source is migrated by preventing Write/Delete operations on the source server during migration

Making the source server read only prevents users from modifying the data, rendering the database unavailable for any update operations. However, if this option is not enabled, there is a possibility for data updates to occur during migration. As a result, migrated data could be inconsistent because the database snapshots would be read at different points in time.

Consistent Backup allows users the flexibility of ongoing operations along with data integrity at source.

How Consistent Backup works

When you initiate a migration, the service flushes all tables on the source server with a read lock to obtain the point-in-time snapshot. This is done because a global lock is more reliable than attempting to lock individual databases or tables. As a result, even if you are not migrating all databases in a server, they are locked as part of setting up the migration process. The migration service initiates a repeatable read and the source server combines the current table state with contents of the undo log for the snapshot. The snapshot is generated after obtaining the server wide lock and spawning several connections for the migration. After the creation of all connections that will be used for the migration, the locks on the table are released.

The migration connections are leveraged to perform the migration with repeatable read enabled for all transactions and the source server hides all new changes from the offline migration. Clicking on the specific database in the Azure Database Migration Service (DMS) Portal UI during the migration displays the migration status of all the tables - completed or in progress - in the migration. In case of connection issues, the status of the database changes to Retrying and the error information is displayed if the migration fails.

Repeatable reads are enabled to keep the undo logs accessible during the migration, which will increase the storage required on the source because of long running connections. It is important to note that the longer a migration runs the more table changes that occur, the undo log's history of changes will be more extensive. The longer a migration, the more slowly it runs as the undo logs to retrieve the unmodified data from will be longer. This could also increase the computer requirements and load on the source server.

Please refer to the MySQL :: MySQL 8.0 Reference Manual :: 5.4.4 The Binary Log and MySQL :: MySQL 8.0 Reference Manual :: 15.6.6 Undo Logs for more information regarding binary logs and undo logs respectively.

Prerequisites

To complete the migration successfully with Consistent Backup enabled

- Ensure that the user who is attempting to flush tables with a read lock has RELOAD or FLUSH permission.

- Use the MySQL command line tool of your choice to determine whether log_bin is enabled on the source server. The Bin log is not always turned on by default and should be checked to see if it is enabled before starting the migration. You can determine whether log_bin is enabled on the source by running the command: SHOW VARIABLES LIKE 'log_bin.’

- If you are targeting online data migration, configure the binlog_expire_logs_seconds parameter on the source server to ensure that binlog files are not purged before the replica commits the changes. We recommend at least 2 days to start. Post successful cutover, the value can be reset.

Getting Started with Consistent Backup

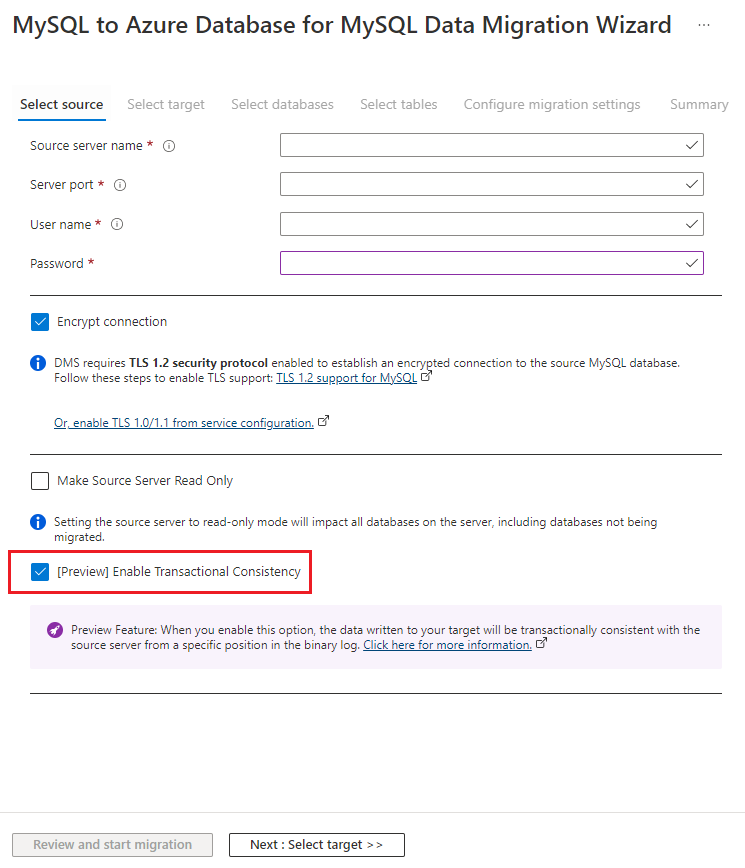

You can use this feature by selecting the “Enable Transactional Consistency" checkbox in our existing offline migration activity in Azure Database Migration Service:

Consistent backup is performed by following these steps:

- Flushing all tables on the source server with a global read lock to obtain the point-in-time snapshot.

- Creation of all connections that will be used for the migration and creating a transaction from that snapshot.

- Record the bin log position that can be used for replaying bin log position.

- Drop the global lock and begin reading data from source using the spawned connections. The connections are used to perform the migration with repeatable read enabled for all transactions and the source server hides all new changes from the offline migration.

For additional information about this new functionality including known issues and limitations, see this doc MySQL to Azure Database for MySQL Data Migration - MySQL Consistent Backup (Preview) | Microsoft Docs

Also detailed information on MySQL migration to Azure Database for MySQL using DMS can be found here Migrate MySQL to Azure Database for MySQL offline using DMS

We will add support for additional migration features in future releases.