This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

A few weeks ago, my manager encouraged us to build scenarios that would help us learn new things. I was already learning Azure Synapse Analytics at the time. Most of the “Get Started” labs I have seen are about getting information from a source and sending it to a target, and that’s totally fine, but I wanted to explore real-time ingestion in more depth. I worked for several years on a large solution for a customer who needed information with very little delay, and back then the options were limited.

Now we have many options, services, and platforms, and figuring out which one fits best can be complex. One of the main problems we had some years ago was finding a component or service with the capacity to receive massive amounts of information while staying available 24/7.

With Azure you have these options to implement real-time ingestion in your solutions.

I won’t go into much detail about each one because the documentation is excellent. If you want to know more, see: Choose a real-time message ingestion technology in Azure

There is no single best option, and no right or wrong choice. Everything depends on the scenario: sometimes the decision comes down to time, contracts, or money, sometimes the architect decides, and sometimes the circumstances push you one way or another. In this case I picked Event Hubs because, as I’ll show in the example, we’ll receive different types of information, and the Capture feature, which automatically stores your data in a data lake, reduces the amount of code in our solution.

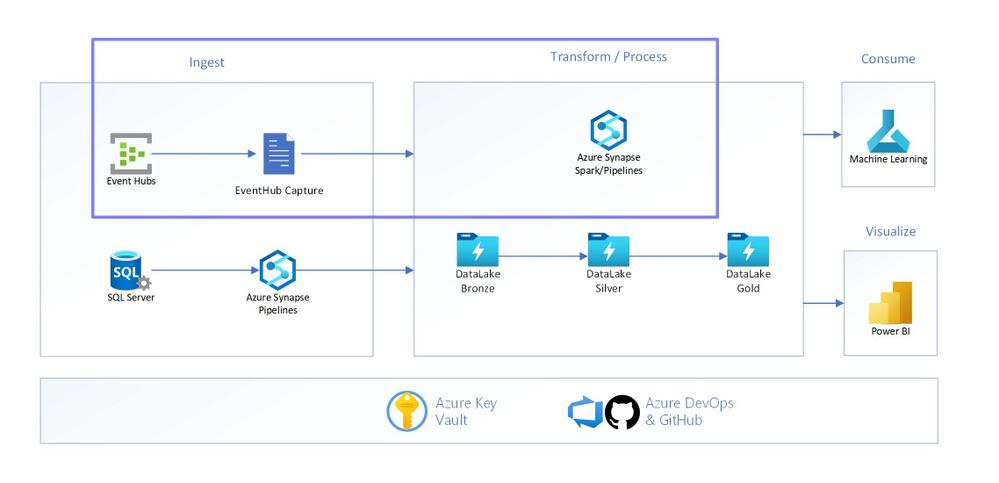

A complete scenario covers ingestion, transformation, analysis, and visualization, as you can see in the image below. The ingestion stage can have different sources, combining streaming and batch, and everything goes into our data lake (in this case we are using Azure Synapse).

To implement this first part, the ingestion, you can follow along with this GitHub repository: vasegovi/synapse-demo (github.com)

But let me share some of the lessons I learned while implementing this. First of all, I searched the internet for similar examples and found this one: Trying out Event Hub Capture to Synapse. It covers almost everything I was interested in, so you can follow it first and then come back here to review some topics you can add to your learning.

Event Hub

With the Capture feature you can easily configure how and where your data is stored. Some important aspects to consider are:

- Define your path and naming conventions to avoid long paths in your data lake

- Define the partitions of your data lake from the beginning

- Define your compression format

To learn more, visit: Capture events through Azure Event Hubs in Azure Blob Storage or Azure Data Lake Storage
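To make the path convention point more concrete, here is a minimal sketch that previews the blob path a Capture file name format would produce. The default format string is the one shown in the Capture documentation; the rule that every token must appear in a custom format is my understanding of that documentation, and the function names, the namespace `demo-ns`, and the event hub `telemetry` are hypothetical values chosen only for illustration.

```python
import re
from datetime import datetime, timezone

# Tokens that, as far as I understand the Capture docs, a format must contain.
REQUIRED_TOKENS = {
    "Namespace", "EventHub", "PartitionId",
    "Year", "Month", "Day", "Hour", "Minute", "Second",
}

# Default Capture naming convention.
DEFAULT_FORMAT = (
    "{Namespace}/{EventHub}/{PartitionId}/"
    "{Year}/{Month}/{Day}/{Hour}/{Minute}/{Second}"
)


def missing_tokens(name_format):
    """Return the required tokens that do not appear in the format string."""
    found = set(re.findall(r"\{(\w+)\}", name_format))
    return REQUIRED_TOKENS - found


def render_capture_path(name_format, namespace, event_hub, partition_id, when):
    """Preview the blob path for one capture window (hypothetical helper)."""
    missing = missing_tokens(name_format)
    if missing:
        raise ValueError(f"format is missing tokens: {sorted(missing)}")
    path = name_format.format(
        Namespace=namespace,
        EventHub=event_hub,
        PartitionId=partition_id,
        Year=f"{when.year:04d}",
        Month=f"{when.month:02d}",
        Day=f"{when.day:02d}",
        Hour=f"{when.hour:02d}",
        Minute=f"{when.minute:02d}",
        Second=f"{when.second:02d}",
    )
    # Capture writes its files in Avro format.
    return path + ".avro"


window = datetime(2021, 3, 5, 14, 7, 9, tzinfo=timezone.utc)
print(render_capture_path(DEFAULT_FORMAT, "demo-ns", "telemetry", 0, window))
# prints: demo-ns/telemetry/0/2021/03/05/14/07/09.avro
```

Rendering a few candidate formats like this before you enable Capture is a cheap way to see how deep your folders will get and whether the layout matches the partitions you plan to query.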

Blob Permissions

This is one of the most interesting points, and one you will want to understand in detail, because it was the most complex task I had to deal with.

The table shows all the users and identities that you must grant access to on the blob storage account that works as your data lake. The first is the user who runs scripts manually or for unit tests, followed by the managed identity that we created. Your Synapse workspace also creates a service principal named after the workspace, which you have to add to the permissions as well because it is the one that executes the scripts once they are published. The last one is the Azure Data Factory service principal, which runs the triggers.

Why Azure Data Factory? Because the ADF engine runs inside Azure Synapse, and it is the one that executes the scripts when a trigger event launches them.

| role/user | description | purpose |
| --- | --- | --- |
| your user | the logged-in user | When you are running scripts manually |
| managed identity | the managed identity we created | Because it will be assigned as administrator |
| wssynapse (synapse workspace name) | the Synapse workspace resource | For pipelines |
| ADF | ADF Service Principal | For triggers |

For a deeper explanation, please visit: Create a trigger that runs a pipeline in response to a storage event
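If you prefer to script these assignments instead of clicking through the portal, the sketch below builds one `az role assignment create` command per identity in the table. Treat it as an illustration under assumptions: the role name `Storage Blob Data Contributor` is my assumption (this post does not name the exact role), and the object IDs, subscription ID, resource group, and storage account name are hypothetical placeholders that you would replace with your own values.

```python
import shlex

# Assumption: the data-plane role each identity needs on the data lake.
ROLE = "Storage Blob Data Contributor"

# Hypothetical scope: the resource ID of the storage account used as data lake.
SCOPE = (
    "/subscriptions/00000000-0000-0000-0000-000000000000"
    "/resourceGroups/rg-demo/providers/Microsoft.Storage"
    "/storageAccounts/stdemolake"
)

# Hypothetical object IDs, one per row of the table above.
PRINCIPALS = {
    "your user": "11111111-1111-1111-1111-111111111111",
    "managed identity": "22222222-2222-2222-2222-222222222222",
    "wssynapse": "33333333-3333-3333-3333-333333333333",
    "ADF": "44444444-4444-4444-4444-444444444444",
}


def role_assignment_command(assignee, scope=SCOPE, role=ROLE):
    """Build the Azure CLI command as a shell-safe string (nothing is executed)."""
    return shlex.join([
        "az", "role", "assignment", "create",
        "--assignee", assignee,
        "--role", role,
        "--scope", scope,
    ])


for name, object_id in PRINCIPALS.items():
    # Print each command so it can be reviewed before running it by hand.
    print(f"# {name}")
    print(role_assignment_command(object_id))
```

The script only prints the commands, so you can review them and run them yourself once you have confirmed the role and the scope for your environment.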

Git Integration

There are many branching strategies you can follow for your application and your infrastructure, depending on several factors, but there are some important points to consider.

- Define a branching strategy. It is common for a project to be under time pressure, so people just create branch after branch until at some point there’s a mess, and you and your team need to invest more time fixing it.

- You can only publish from your collaboration branch

- When you publish your changes, the branch that you set as the publish branch in your configuration is created and contains only the ARM template with all the artifacts in your workspace (except for pools)

- It is highly recommended to protect your collaboration branch with pull requests so you avoid losing work.

- Before going further in your development, read these articles, which will help you understand this topic better: Continuous integration and delivery in Azure Data Factory, 5 types of Git workflows that will help you deliver better code

Python Client

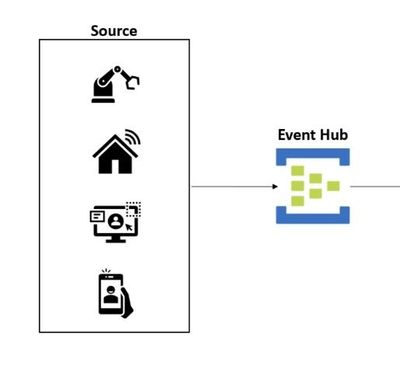

This Python client is useful for sending information to your Event Hubs. Its only goal is to simulate a device that sends all its data to Event Hubs. The ideal architecture looks like the image shown here, where you have several sources to capture the information needed for your solution.
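To give an idea of what such a simulated device can look like, here is a minimal sketch. It is not the client from the repository: the payload fields (`deviceId`, `temperature`, `humidity`, `timestamp`) and the function names are hypothetical, and the sending part assumes the `azure-eventhub` package is installed and that you have a valid connection string for your namespace.

```python
import json
import random
from datetime import datetime, timezone


def make_reading(device_id, rng, when=None):
    """Build one fake telemetry reading (the field names are hypothetical)."""
    when = when or datetime.now(timezone.utc)
    return {
        "deviceId": device_id,
        "temperature": round(rng.uniform(15.0, 35.0), 2),
        "humidity": round(rng.uniform(30.0, 90.0), 2),
        "timestamp": when.isoformat(),
    }


def send_readings(connection_string, event_hub_name, readings):
    """Send the readings to Event Hubs as a single batch.

    Requires `pip install azure-eventhub`. The import is kept local so the
    payload helper above can be used without the package installed.
    """
    from azure.eventhub import EventData, EventHubProducerClient

    producer = EventHubProducerClient.from_connection_string(
        connection_string, eventhub_name=event_hub_name
    )
    with producer:
        batch = producer.create_batch()
        for reading in readings:
            # add() raises ValueError when the batch has reached its size limit.
            batch.add(EventData(json.dumps(reading)))
        producer.send_batch(batch)


# Generate a few readings locally; nothing is sent at this point.
rng = random.Random(42)
readings = [make_reading(f"device-{i:02d}", rng) for i in range(3)]
print(json.dumps(readings[0], indent=2))
```

To actually send the data you would call `send_readings(connection_string, event_hub_name, readings)` with your own values. Once Capture is enabled, the events should land in the data lake as Avro files without any extra storage code in the client.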

Challenge

When you finish the steps in the repo and complete the challenge, please come back and let me know in the comments how it went.

Next Steps:

I will continue with my end-to-end scenario, so stay tuned for part 2, where we’ll add Machine Learning to analyze this information. Meanwhile, if you want to learn more about streaming and Synapse, here are some useful links:

Capture Event Hubs data in Azure Storage and read it by using Python