This post has been republished via RSS; it originally appeared at: Microsoft Tech Community - Latest Blogs - .

Context

Many ISVs (Independent Software Vendors) exchange information with devices, applications, services, or humans, and in many cases the information passed is a file or a blob. Each of these ISVs would need to implement such a service. In the past few months, I discussed this capability with more than four customers, each with slightly different needs. When I tried to generalize the need, it was clear: they wanted a quick, safe way to exchange files with customers or devices.

So, I tried to translate these asks into user stories:

As a service provider, I need my customers to upload content through a secure, easy-to-maintain microservice exposed as an API (application programming interface), so that I can process the uploaded content and perform an action on it.

As a service provider, I would like to enable the download of specific files by authorized devices or humans, so that they can download the content directly from storage.

As a service provider, I would like to offer my customers the ability to see which files they have already uploaded, so that they can download them when needed with a time-restricted SAS (shared access signature) token.

Cool, a nice start, but if we look at the underlying ask, does it have to be exposed to humans? Why not create a microservice that handles this requirement and delegate the interaction with humans to the application that already interacts with users?

The Approach

I decided to use this opportunity and learn Azure Container Apps. For more information on ACA (Azure Container Apps) please review this documentation.

Using ACA provides significant security benefits (among others) with respect to VNet (Virtual Network) integration. I did consider Azure Functions; however, when I compared the Azure Functions SKU that supports VNet integration with the potential cost of ACA, ACA came out cheaper.

While ACA can integrate with a VNet, my initial sample repo does not include that yet. I decided to keep it simple and focus on minimal application and network capabilities.

I also wanted readers who wish to experiment with the code to have a quick way to do so. This is why I spent time creating the Bicep code that spins up the entire solution.

No application setting contains a secret. All connection strings and keys are stored in Azure Key Vault, and access to the vault is governed by RBAC (role-based access control), so only specific identities can access it.

I used .NET Core 6 with C#. My starting point was the secured Web API template, as it provides most of what such a service requires; wrapping it as a container and deploying it to ACA was the additional effort.

The Solution

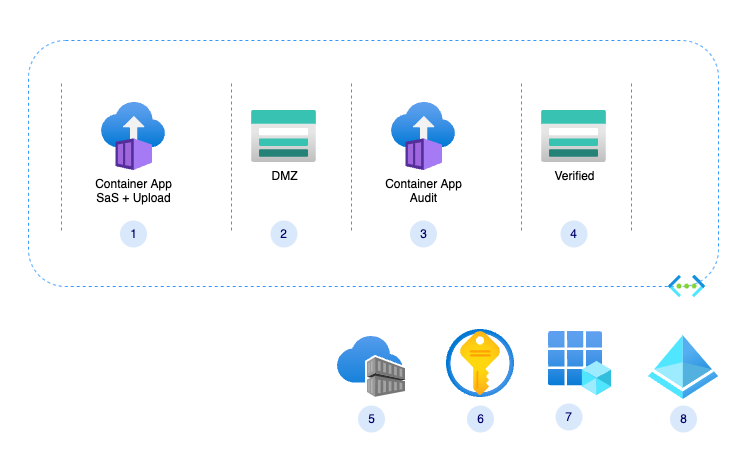

Here is a diagram of the solution components:

Components

- Container App - creates containers and SAS tokens; it also provides a SAS for a given file within a given container.

- Storage Account - DMZ (demilitarized zone); all content is considered unsafe

- Container App - verifies content and moves it to verified storage

- Verified storage - content is assumed to be verified and to pose minimal or no threat to the organization

- Container Registry - holds the container app images

- Azure Key Vault - holds connection strings and other secured configuration

- App registration for the ACA app

- Azure Active Directory - the initial solution supports single-tenant applications

With the initial drop, content validation is out-of-scope.

My repo (which will be moved to Azure Samples) also includes a few GitHub Actions that perform the following activities:

- Build the image and push to ACR (Azure Container Registry)

- Deploy an image to the ACA

- Create a release - builds a vanilla image of the code and stores it in the GitHub Container Registry (ghcr), so that an image is available when the environment is first provisioned. Developers using this sample might not need it.

Bicep is used to provision all required resources, excluding the AAD (Azure Active Directory) entities and the resource group in which all components would be provisioned.

My Learnings

Azure Container Apps : Container Image pull policy

Avoid the “latest” tag. As an ACA user, you currently cannot control what triggers an image pull (the equivalent of “Image Pull Policy” in Kubernetes), so an image pushed again under the same tag may not be picked up. Instead, use a unique, autogenerated tag, which your CD (Continuous Deployment) pipeline can generate. In the sample repo, the GitHub Action uses the git commit hash as the image tag.
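Here is a minimal sketch of the tagging practice in C#. A hypothetical `ImageTag.ForCommit` helper (not from the sample repo) builds an image reference from a full commit hash and rejects anything else, including "latest". The registry and repository names are placeholders. In a GitHub Actions workflow the hash is typically available in the `GITHUB_SHA` environment variable, but the sample's actual workflow may do this differently.

```csharp
using System;
using System.Linq;

// Hypothetical helper (not from the sample repo): builds an immutable image
// reference from a git commit hash instead of a mutable tag such as "latest".
public static class ImageTag
{
    public static string ForCommit(string registry, string repository, string commitSha)
    {
        ArgumentNullException.ThrowIfNull(commitSha);

        // A full git (SHA-1) commit hash is exactly 40 hexadecimal characters,
        // so "latest" or any other hand-picked tag is rejected here.
        if (commitSha.Length != 40 || !commitSha.All(Uri.IsHexDigit))
        {
            throw new ArgumentException("Expected a 40-character hex commit hash.", nameof(commitSha));
        }

        // Lowercase the hash so the same commit always yields the same tag.
        return $"{registry}/{repository}:{commitSha.ToLowerInvariant()}";
    }
}
```

Because every commit produces a new tag, every deployment changes the image reference, so nothing depends on how or when ACA decides to pull.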

Azure Container Apps : Container Environment variables

When working locally, you can rely on the settings file (appsettings.json), but with ACA I decided to use environment variables. My next learning came from the following question:

How am I going to inject these values into an environment provisioned by the Bicep script?

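Well, the answer is to use environment variables. When working locally, you can use the key pattern 'AzureAd:Audience'. With ACA, however, you need a slightly different pattern: 'AzureAd__Audience', where the double underscore indicates a drill-down into a section. The reason is the operating system: the colon is not supported in environment variable names on every platform (Bash, for example, rejects it), whereas the double underscore works everywhere and .NET converts it back to a colon when it reads the variable.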

Note that it can take several minutes for changes to show up in the GUI (graphical user interface).
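The double-underscore convention can be sketched as a pair of string mappings. `ToConfigKey` mirrors what the .NET environment variable configuration provider does when it reads variables (it replaces "__" with ":"). The `Flatten` helper is hypothetical. It only illustrates how an "AzureAd" section could be turned into the name/value pairs you declare on the container app, for example from Bicep.

```csharp
using System.Collections.Generic;

// Illustrative sketch of the naming convention used by the .NET environment
// variable configuration provider: "__" in a variable name means ":".
public static class EnvConfigKeys
{
    // "AzureAd__Audience" -> "AzureAd:Audience" (what your code reads)
    public static string ToConfigKey(string envVarName) => envVarName.Replace("__", ":");

    // "AzureAd:Audience" -> "AzureAd__Audience" (what you set on the container app)
    public static string ToEnvVarName(string configKey) => configKey.Replace(":", "__");

    // Hypothetical helper: turns one configuration section into the environment
    // variable name/value pairs to declare, e.g. in a Bicep template.
    public static Dictionary<string, string> Flatten(string section, IDictionary<string, string> values)
    {
        var result = new Dictionary<string, string>();
        foreach (var (key, value) in values)
        {
            result[ToEnvVarName($"{section}:{key}")] = value;
        }
        return result;
    }
}
```

With `AzureAd__Audience` set on the container app, application code can keep reading `builder.Configuration["AzureAd:Audience"]` unchanged, because the default web application builder includes the environment variable provider.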

.NET Core 6 : Key Vault integration

Using .NET Core 6 allows programmers to focus on the application logic they want to create. In some cases this is a double-edged sword, since some of the logs and activities are hidden from you.

For example, when you wish to use a secret from Azure Key Vault, you can access it as if it were part of your configuration, assuming you registered it correctly:

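```csharp
using Azure.Identity; // DefaultAzureCredential
// AddAzureKeyVault comes from the Azure.Extensions.AspNetCore.Configuration.Secrets package

builder.Configuration.AddAzureKeyVault(
    new Uri($"https://{builder.Configuration["keyvault"]}.vault.azure.net/"),
    new DefaultAzureCredential(new DefaultAzureCredentialOptions
    {
        // Client ID of the user-assigned managed identity allowed to read the vault
        ManagedIdentityClientId = builder.Configuration["AzureADManagedIdentityClientId"]
    }));
```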

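This single statement (split across lines for readability) registers Key Vault as a configuration source and authenticates to it with the user-assigned managed identity whose client ID is read from configuration. The identity still needs permission to read secrets in the vault, which in this solution is granted through RBAC. Note that in many cases managed identities require only a subset of the secrets; for further reading and best practices, please follow these guidelines.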

Once you have done this, accessing a secret from your code looks like this, where 'storagecs' is a secret configured by the Bicep code:

```csharp
// _configuration is the IConfiguration instance injected into your class
string connectionString = _configuration.GetValue<string>("storagecs");
```
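There is a related detail when secrets surface as configuration. Key Vault secret names allow only letters, digits, and dashes, so a hierarchical key cannot be stored under its ':' name. By default, the Key Vault configuration provider maps "--" in a secret name to ":". The sketch below mirrors that mapping with plain strings and is not the library's code. The `AzureAd--ClientSecret` name is hypothetical, while a flat name such as `storagecs` maps to itself.

```csharp
using System.Linq;

// Sketch mirroring the default Key Vault configuration mapping: "--" in a
// secret name stands for ":" in the configuration key. Not the library's code.
public static class KeyVaultKeys
{
    // "AzureAd--ClientSecret" -> "AzureAd:ClientSecret"; "storagecs" stays "storagecs".
    public static string ToConfigKey(string secretName) => secretName.Replace("--", ":");

    // "AzureAd:ClientSecret" -> "AzureAd--ClientSecret" (the name to create in the vault).
    public static string ToSecretName(string configKey) => configKey.Replace(":", "--");

    // Key Vault secret names: 1 to 127 characters, ASCII letters, digits and '-' only.
    public static bool IsValidSecretName(string name) =>
        name.Length is >= 1 and <= 127 &&
        name.All(c => c is >= 'a' and <= 'z' or >= 'A' and <= 'Z' or >= '0' and <= '9' or '-');
}
```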

.NET Core 6 : Authorization / Authentication

Adding authorization is similarly 'difficult':

```csharp
using Microsoft.AspNetCore.Authentication.JwtBearer;
using Microsoft.Identity.Web;

builder.Services.AddAuthentication(JwtBearerDefaults.AuthenticationScheme)
    .AddMicrosoftIdentityWebApi(builder.Configuration.GetSection("AzureAd"));
```

Again, a single statement. It assumes you have a JSON (JavaScript Object Notation) section named 'AzureAd' in your app settings that contains all the details required to perform authentication and authorization.

```json
"AzureAd": {
  "Instance": "https://login.microsoftonline.com/",
  "Domain": "<your domain>",
  "ClientId": "<your app registration client id>",
  "TenantId": "<your tenant>",
  "Audience": "<your app registration client id>",
  "AllowWebApiToBeAuthorizedByACL": true
}
```

Let us unpack the above settings. It took me some time to understand that, without the last two items, the default authentication fails. The .NET platform checks that the value of the 'aud' (audience) claim in the JWT (JSON Web Token) matches the audience defined for the registered application.

The last setting tells the platform not to require scope or role claims in the token. If you need that type of authorization, it is up to you to implement it; here is an example how-to guide.
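To make the audience and role checks discussed above concrete, here is an illustrative sketch that decodes a JWT payload and inspects its 'aud' and 'roles' claims. It deliberately skips signature, issuer, and lifetime validation, which Microsoft.Identity.Web performs for you, so it must not be used to authorize real requests. In app-only tokens, such as those issued in the client credentials flow, application roles appear in the 'roles' claim. The role name used when testing this sketch is hypothetical.

```csharp
using System;
using System.Linq;
using System.Text;
using System.Text.Json;

// ILLUSTRATION ONLY: decodes a JWT payload WITHOUT validating its signature,
// issuer or lifetime. Real APIs must rely on the platform's token validation.
public static class JwtClaimsSketch
{
    // Decodes the base64url-encoded middle segment of "header.payload.signature".
    public static JsonElement ReadPayload(string jwt)
    {
        string segment = jwt.Split('.')[1].Replace('-', '+').Replace('_', '/');
        segment = segment.PadRight(segment.Length + (4 - segment.Length % 4) % 4, '=');
        string json = Encoding.UTF8.GetString(Convert.FromBase64String(segment));
        using JsonDocument doc = JsonDocument.Parse(json);
        return doc.RootElement.Clone(); // Clone so the element outlives the document
    }

    // The "aud" claim may be a single string or an array of strings.
    public static bool HasAudience(JsonElement payload, string expectedAudience) =>
        payload.TryGetProperty("aud", out JsonElement aud) &&
        (aud.ValueKind == JsonValueKind.Array
            ? aud.EnumerateArray().Any(a => a.GetString() == expectedAudience)
            : aud.GetString() == expectedAudience);

    // Application roles granted to the calling app arrive in the "roles" claim.
    public static bool HasRole(JsonElement payload, string role) =>
        payload.TryGetProperty("roles", out JsonElement roles) &&
        roles.ValueKind == JsonValueKind.Array &&
        roles.EnumerateArray().Any(r => r.GetString() == role);
}
```

In a real service, you would express a role rule through ASP.NET Core authorization (for example, a role or policy requirement) rather than parsing tokens yourself.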

GitHub : Releases

One of my initial dilemmas was how to spin up a fully functional environment, which requires an image to be available for pulling when the ACA is provisioned. With the help of Yuval Herziger, I created a GitHub action that is triggered on a release; it builds a vanilla image of my code and stores it in the GitHub Container Registry (ghcr).

Authentication : The right flow

Long story short, unless you know which flow you are trying to implement, you may find that time passes with little progress. So, choose the right flow. Henrik Westergaard Hansen helped me here. He listened to my use cases and said my flow should be the client credentials flow, as it is service-to-service communication. I cannot emphasize enough how important this is; the moment I understood it, completion took only hours.
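To show what the client credentials flow looks like on the wire, here is a sketch that builds the token request to the Microsoft identity platform v2.0 endpoint but does not send it. The tenant ID, client ID, secret, and the `api://fileexchange` App ID URI are placeholders. In practice, a library such as MSAL (`ConfidentialClientApplicationBuilder`) or Azure.Identity's `ClientSecretCredential` handles this exchange and token caching for you.

```csharp
using System.Collections.Generic;
using System.Net.Http;

// Sketch of an OAuth 2.0 client credentials token request (service-to-service,
// no user involved). The request is only built here, not sent.
public static class ClientCredentialsSketch
{
    public static HttpRequestMessage BuildTokenRequest(
        string tenantId, string clientId, string clientSecret, string apiAppIdUri)
    {
        var form = new Dictionary<string, string>
        {
            ["grant_type"] = "client_credentials",
            ["client_id"] = clientId,
            ["client_secret"] = clientSecret,
            // App-only requests ask for the API's granted permissions via "/.default".
            ["scope"] = $"{apiAppIdUri}/.default",
        };

        return new HttpRequestMessage(
            HttpMethod.Post,
            $"https://login.microsoftonline.com/{tenantId}/oauth2/v2.0/token")
        {
            Content = new FormUrlEncodedContent(form),
        };
    }
}
```

The access token returned for such a request is issued for the API, which is what the web API's 'Audience' setting is checked against.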