This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

Azure Stack HCI (hyperconverged infrastructure) is a virtualisation host that runs on-premise on validated partner hardware. AKS Hybrid is an on-premise implementation of the Azure Kubernetes Service (AKS) orchestrator, which automates running containerised applications at scale, and it runs on the Stack HCI platform. Together they provide a solution for hosting highly available workloads on-premise. Azure Arc is a cloud-based control plane that can be used to manage on-premise AKS Hybrid instances.

Introduction

Sometimes high availability is the top business priority. There are situations where even the high availability provided in the cloud by redundant systems, availability zones, and failovers isn’t enough.

I recently worked with a customer in just this situation. They needed to deliver their service with 5 9’s (99.999%) of availability – that’s a little over 5 minutes of downtime per year – but the way cloud solutions are assembled makes this hard to achieve: the SLA of every cloud service that you plug together to support your solution has to be factored into its overall availability. There’s a great article on high availability by Lavan Nallainathan that includes a description of how to calculate the composite SLA for dependent cloud services.
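To make the arithmetic concrete, here is a minimal sketch of the usual composite SLA calculation. It assumes that failures are independent and that a year has 365 days. The function names are illustrative only and are not taken from the referenced article.

```python
from math import prod

MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, assuming a 365-day year


def composite_sla(slas):
    """Availability of a chain of services that must ALL be up (serial dependency)."""
    return prod(slas)


def redundant_sla(slas):
    """Availability when ANY ONE of several independent replicas is sufficient."""
    return 1 - prod(1 - s for s in slas)


def downtime_minutes_per_year(availability):
    """Expected downtime per year for a given availability (0.0 to 1.0)."""
    return (1 - availability) * MINUTES_PER_YEAR


if __name__ == "__main__":
    # Five nines allows roughly 5.26 minutes of downtime per year.
    print(round(downtime_minutes_per_year(0.99999), 2))

    # Chaining dependent services lowers availability below any single SLA.
    chain = composite_sla([0.9995, 0.9999])
    print(chain, downtime_minutes_per_year(chain))

    # Independent replicas covering for each other raise it.
    print(redundant_sla([0.99, 0.99]))
```

The serial case shows why a solution built from several dependent cloud services struggles to reach 99.999%, while the redundant case shows why independent sites that can cover for each other improve the overall figure.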

The high volume service in question supported the telephone triage and re-direction of users to an appropriate downstream specialist service. When a user calls, they are routed to one of many external suppliers located around the country. The receiving operator will run through a ‘pathway’ of questions, delivered to their screen and designed to get the caller to the correct destination in the minimum time with all the necessary details collected. All collected pathway data filters to downstream systems in the cloud, where it should be available in near real-time to allow the caller to continue their journey onward.

Challenges and Requirements

The team I was helping had responsibility for maintaining and distributing these optimised pathways to suppliers. As a new pathway becomes available, the suppliers are notified and asked to download a file from an FTP server, which is then imported into the suppliers' locally hosted systems in a variety of ways. Supplier sites vary in size and technical capability, so this process is far from ideal.

The team approached Microsoft when they were considering the next generation of their service. They had already done some thinking, with help from Microsoft, on the architectural options available to them. The requirement was to deliver the pathway content to suppliers in an efficient, reliable, repeatable, and supportable way which reduced the technical burden placed on the supplier. They wanted to deliver the pathway content to the on-premise supplier systems via an API, which would allow them to seamlessly deliver changing content. However, due to its criticality, the service needed to support 5 9's of availability - this would not be easily achieved through a centrally hosted API. A decentralised model was needed. That solution needed to support:

- A simplified supplier operation to address the variability in supplier capabilities,

- Possible loss of connectivity between on-premise and cloud,

- Deployment options to suit suppliers’ varied landscape, including VMware.

Proposed Solution

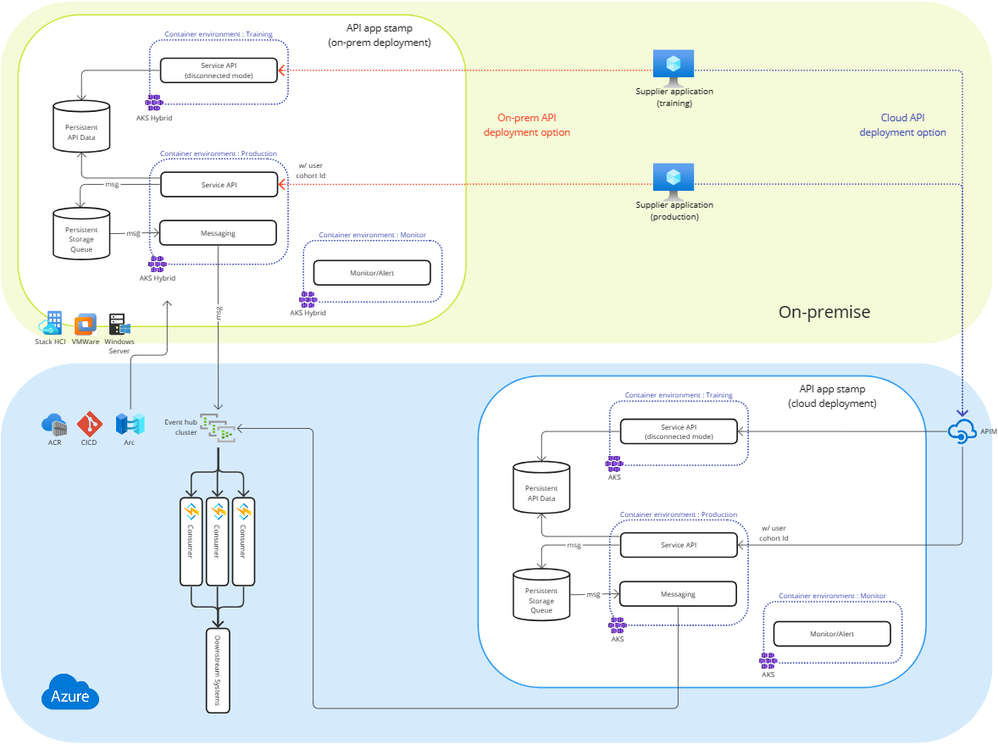

The solution proposed was to containerise the API and place it on-premise, where it could continue to service operator requests even if there was an outage between the site and the cloud. In the event of an outage, the API would use resiliency and reliability patterns to buffer messages destined for the cloud until connectivity was restored. No matter how long that took, no messages would be lost; all would eventually be delivered. The overlapping coverage of many suppliers providing failover for each other would meet the availability needs.
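The buffering behaviour described above is often implemented as a store-and-forward (outbox) pattern. The sketch below is one hedged illustration of that idea, not the customer's implementation: the `StoreAndForwardBuffer` class, the SQLite outbox table, and the `send` callback are all assumptions made for the example.

```python
import sqlite3
from typing import Callable


class StoreAndForwardBuffer:
    """Durable FIFO outbox: messages stay on disk until the send succeeds."""

    def __init__(self, path: str, send: Callable[[str], None]):
        # `send` is a hypothetical callback that raises ConnectionError when offline.
        self._db = sqlite3.connect(path)
        self._db.execute(
            "CREATE TABLE IF NOT EXISTS outbox ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, body TEXT NOT NULL)"
        )
        self._db.commit()
        self._send = send

    def enqueue(self, body: str) -> None:
        """Persist the message before any attempt to deliver it."""
        self._db.execute("INSERT INTO outbox (body) VALUES (?)", (body,))
        self._db.commit()

    def pending(self) -> int:
        """Number of messages still waiting for delivery."""
        return self._db.execute("SELECT COUNT(*) FROM outbox").fetchone()[0]

    def flush(self) -> int:
        """Send buffered messages oldest-first; stop at the first failure."""
        sent = 0
        rows = self._db.execute("SELECT id, body FROM outbox ORDER BY id").fetchall()
        for row_id, body in rows:
            try:
                self._send(body)
            except ConnectionError:
                break  # still offline; keep the rest for the next attempt
            # Delete only after a successful send, so nothing is lost.
            self._db.execute("DELETE FROM outbox WHERE id = ?", (row_id,))
            self._db.commit()
            sent += 1
        return sent
```

Because a message is deleted only after it has been sent, a crash between the send and the delete would cause a resend. This gives at-least-once delivery, so the receiving side needs to tolerate duplicates.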

AKS Hybrid deployments are currently supported on Windows Server and Stack HCI. Support for VMware is currently in Private Preview, with Public Preview expected later in 2023 and General Availability in 2024.

After consultation internally and discussions with the AKS product team, we decided to propose AKS Hybrid. From a practical perspective, the supplier simply had to allocate a highly available on-premise virtualisation host, for example Stack HCI or VMware, where AKS Hybrid could be deployed. Once deployed, AKS Hybrid could be managed centrally through Azure Arc by the central cloud team. This would simplify deployment of the latest pathways and API lifecycle management, taking the burden of technical support off individual suppliers.

On the server side, an Event Hub cluster would ingest the high-velocity stream of real-time pathway data sent from the suppliers. In turn, this data would be processed by Azure Functions, which would handle de-duplication and forward the data to downstream systems, allowing other operators to access the details provided by the caller as they transition within and between services.
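As a rough illustration of the de-duplication step, the sketch below forwards each event only once, keyed on a message identifier. It is plain Python and does not use the Azure Functions or Event Hubs SDKs. The `id` field, the `forward` callback, and the in-memory `seen_ids` set are assumptions for the example; a real deployment would more likely use a shared store with an expiry policy.

```python
from typing import Callable, Iterable, Set


def forward_unique(
    events: Iterable[dict],
    seen_ids: Set[str],
    forward: Callable[[dict], None],
) -> int:
    """Forward each event once, skipping IDs that were already processed.

    Returns the number of events forwarded in this batch.
    """
    forwarded = 0
    for event in events:
        event_id = event["id"]  # hypothetical unique ID assigned by the sender
        if event_id in seen_ids:
            continue  # duplicate, e.g. a resend after an outage
        forward(event)
        # Record the ID only after forwarding succeeds, so a failed
        # forward can be retried on the next delivery of the event.
        seen_ids.add(event_id)
        forwarded += 1
    return forwarded
```

Keying on an identifier assigned at the supplier site lets the processing step absorb resends from the on-premise buffer without passing duplicates downstream.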

Impact and Future Enhancements

We delivered a high-quality solution that met the customer's requirements and worked in harmony with all of their existing systems. We provided component and deployment options that worked within the constraints of the architecture stamp we had designed for them, but which could be tailored to in-house skills and supplier preference.

We were also able to provide a roadmap to other features supported in Azure that the customer had not considered and that supported their availability and resiliency goals, such as monitoring and alerting capabilities and deployment processes.