This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

Many inference routines can be decomposed into multiple independent steps. What is more, some of these steps often need to be exactly the same as what was done during training.

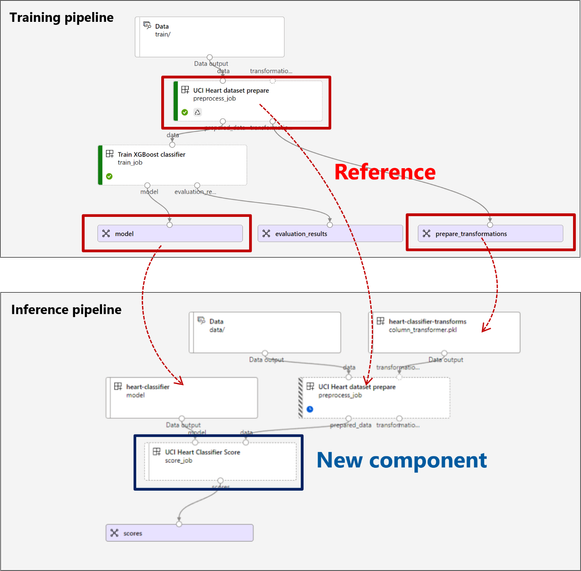

Consider this example. It shows a simple yet typical scenario for training a machine learning model. The pipeline contains a preprocessing step that performs data transformations, and as part of that preprocessing some parameters are learned, such as the normalization coefficients. As a consequence of this design, the model trained in the next step can’t work on raw data, only on preprocessed data.

If you are thinking of deploying this model to production, you need to ensure you apply the same transformation in both places: training and inference.

The proposed “Inference pipeline” solves the issue. As you can see, it not only reuses the code of the UCI Heart dataset prepare step, but also pulls in other assets, such as the model heart-classifier and the data normalization parameters heart-classifier-transforms.
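To make the idea concrete, here is a minimal sketch in plain Python of learning normalization parameters during training and reusing them, unchanged, at inference time. It is not the code of the UCI Heart dataset prepare step; the function names `fit_normalizer` and `prepare`, the sample values, and the `transforms` dictionary are all assumptions made for illustration.

```python
from statistics import mean, pstdev


def fit_normalizer(rows):
    """Learn per-column mean and standard deviation from training rows."""
    columns = list(zip(*rows))
    return {
        "mean": [mean(col) for col in columns],
        # Fall back to 1.0 for constant columns to avoid dividing by zero.
        "std": [pstdev(col) or 1.0 for col in columns],
    }


def prepare(rows, params):
    """Apply previously learned parameters; shared by training and inference."""
    return [
        [(x - m) / s for x, m, s in zip(row, params["mean"], params["std"])]
        for row in rows
    ]


# Training: learn the parameters, then transform the training data.
train_rows = [[50.0, 120.0], [60.0, 140.0], [70.0, 160.0]]  # made-up values
transforms = fit_normalizer(train_rows)
train_ready = prepare(train_rows, transforms)

# Inference: reuse the stored parameters; never refit on incoming data.
new_rows = [[60.0, 140.0]]
inference_ready = prepare(new_rows, transforms)
```

The point of the sketch is that `prepare` is the only code path that touches the data in either phase, so the model always sees inputs scaled the same way it saw them during training.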

To move this to production, you need to put the entire processing graph under the deployment. We are happy to announce that this kind of deployment is now possible, in preview, using pipeline component deployments in Batch Endpoints.

Pipeline component deployments in Batch Endpoints

Until now, Batch Endpoints could take a machine learning model and deploy it for batch inference. Starting today, Batch Endpoints can take both models and pipeline components. As a consequence, Batch Endpoints now supports two types of deployments: model deployments and pipeline component deployments.

Why pipeline components instead of pipelines?

In Azure Machine Learning, a pipeline defines the compute graph that needs to be executed as a sequence of steps. Each of those steps is called a component. Components have useful properties: they can be reused and moved across workspaces and registries as a single unit, which encourages reusability. More importantly, because you are reusing the same unit rather than rebuilding it, they help ensure reproducibility.

Those properties are worth keeping not just at the “step” level, but also at the level of the entire pipeline. You want to be able to move the pipeline from one place to another and reuse it in your staging and production environments. Azure Machine Learning therefore lets you create a component out of an entire pipeline, giving it the versatility any component has (notice that this also lets you have a step that is, in fact, a pipeline in itself, a.k.a. nested pipelines).
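The nesting idea can be illustrated with a small conceptual sketch in plain Python. This is not the Azure Machine Learning SDK; `make_pipeline` and the three toy steps are hypothetical names used only to show how a pipeline packaged as a single unit can serve as a step of a larger pipeline.

```python
from typing import Callable

# A step is any callable that takes a list of rows and returns a list of rows.
Step = Callable[[list], list]


def make_pipeline(*steps: Step) -> Step:
    """Compose steps into one callable that is itself a valid step."""
    def run(data: list) -> list:
        for step in steps:
            data = step(data)
        return data
    return run


# Toy steps, invented for this illustration.
def drop_missing(rows):
    return [r for r in rows if r is not None]


def scale(rows):
    return [r / 10 for r in rows]


def score(rows):
    return [r > 0.5 for r in rows]


# A pipeline packaged as one reusable unit...
prepare = make_pipeline(drop_missing, scale)
# ...can be used as a single step of a larger pipeline (nesting).
inference = make_pipeline(prepare, score)

predictions = inference([3, None, 8])
```

Because `prepare` has the same shape as any other step, the outer pipeline does not need to know that it is itself composed of several steps.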

Since Batch Endpoints seeks to help customers achieve real MLOps, we designed them to take pipeline components as the unit you deploy under them, which contributes to a robust mechanism for deployment.

You can start using the new type of deployment today. Visit our documentation to learn how to create your first pipeline component deployment, or check our examples repository for batch endpoints. If you have any feedback, we are more than happy to hear from you.