This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

Introduction

This post is the second in a three-part series on troubleshooting common networking issues with Azure Kubernetes Service (AKS), a managed container orchestration service. The scenarios in this post come from an intensive one-day bootcamp focused on advanced AKS networking triage and troubleshooting. It explains how to set up a fully functional environment and presents fault scenarios that participants can troubleshoot using familiar tools.

The previous post addressed connectivity and DNS issues. This article specifically covers endpoint connectivity issues across virtual networks and port configuration problems for services and pods.

Prerequisites

To set up AKS, ensure you have the necessary Azure account and subscription permissions, and that PowerShell is available. Follow the instructions in this GitHub link for AKS and scenario setup, and familiarize yourself with troubleshooting inbound and outbound networking scenarios in AKS. The AKS environment used in these labs has a custom VNet with an NSG attached to its subnet; AKS also creates its own NSG and attaches it to the node pool's network interfaces. Rules that AKS adds implicitly are applied only to its own NSG, so any equivalent rules needed on the custom subnet NSG must be added explicitly.

Scenario 3: Endpoint Connectivity issues across Virtual Networks

Objective: The goal of this exercise is to troubleshoot a scenario where pods fail to connect to endpoints in other virtual networks and to resolve connectivity issues.

Layout: The existing AKS VNet will be joined by a new VNet containing a Linux host virtual machine. A Virtual Network (VNet) peering with a Private Endpoint (PE) will connect the two VNets and provide connectivity between the web server running on the VM and applications running on AKS.

Set up the environment

- Set up AKS as outlined in this script.

- Create the namespace and switch to it

kubectl create ns student

kubectl config set-context --current --namespace=student

# Verify current namespace

kubectl config view --minify --output 'jsonpath={..namespace}'

- Clone the solutions repository from the GitHub link and change directory to Lab3: cd Lab3

Step 1: Create VM VNet and Peer with AKS VNet

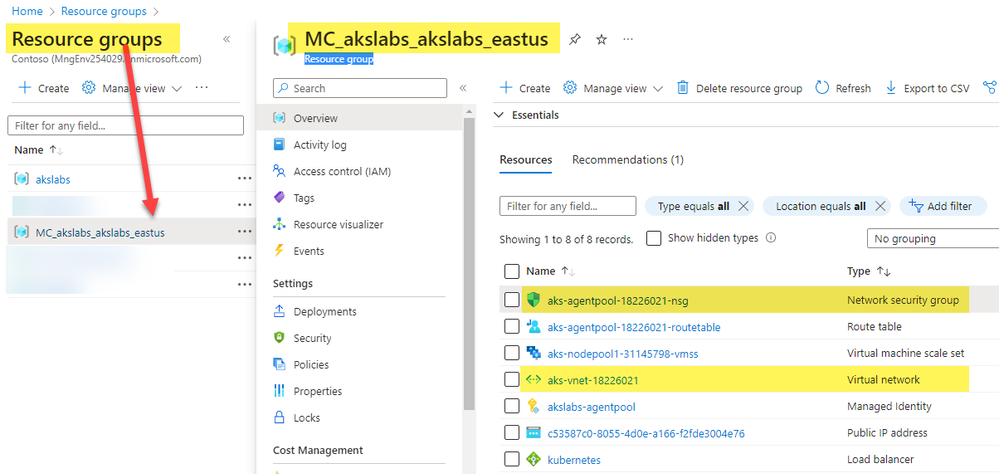

The steps below install a VM, its VNet (VM_VNet), and Nginx, create an NSG and attach it to the VM subnet, and peer the two VNets. Values for the variables below can be found in the AKS MC (Managed Cluster) resource group, as shown in the figure.
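VNet peering can only be created between virtual networks whose address spaces do not overlap, so it can be worth confirming this before peering. The following is a minimal Python sketch using the standard `ipaddress` module; the prefixes are hypothetical examples, not the lab's values, and should be replaced with the address prefixes of your AKS VNet and VM VNet.

```python
import ipaddress


def overlapping_prefixes(vnet_a, vnet_b):
    """Return (prefix_a, prefix_b) pairs whose CIDR ranges overlap."""
    overlaps = []
    for a in vnet_a:
        net_a = ipaddress.ip_network(a)
        for b in vnet_b:
            if net_a.overlaps(ipaddress.ip_network(b)):
                overlaps.append((a, b))
    return overlaps


# Hypothetical address spaces; substitute the prefixes of your own VNets.
aks_vnet_prefixes = ["10.224.0.0/12"]
vm_vnet_prefixes = ["10.0.0.0/16"]

print(overlapping_prefixes(aks_vnet_prefixes, vm_vnet_prefixes))  # []
print(overlapping_prefixes(["10.0.0.0/16"], ["10.0.1.0/24"]))     # [('10.0.0.0/16', '10.0.1.0/24')]
```

An empty list means no prefixes overlap; any pair returned would prevent the peering from being created.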

Step 2: Set up a test pod and verify that the VM web server is accessible

# Create test-pod

kubectl run test-pod --image=nginx --port=80 --restart=Never

kubectl exec -it test-pod -- bash

# Run below on test-pod bash shell

apt-get update -y

apt-get install -y iputils-ping

exit

# End-to-end test: curl should return the HTML page

kubectl exec -it test-pod -- curl -m 5 $vm_ip

Step 3: Break Networking

From Lab3, run broken1.ps1

cd Lab3; .\broken1.ps1

Step 4: Troubleshoot connectivity issue

We assume the VM web server is functional and the web application is running, so there is no need to SSH into the VM to validate it. If further testing is needed to verify connectivity, options include:

a. Create a VM with a public IP in the same VNet and run a curl test from it.

b. Deploy a Bastion host in the VM VNet.

c. Associate a newly created public IP with the existing VM for external access via its public interface.

- Validate that peering is set up correctly (see the steps to set up VNet peering).

- Check curl connectivity; the request should time out after 5 seconds.

kubectl exec -it test-pod -- curl -m 5 $vm_ip

- Check whether the NSGs on the AKS or VM subnets have any Deny rules that might block inbound or outbound traffic (see the article on a custom network security group blocking traffic).

az network nsg rule list -g $resource_group --nsg-name $aks_nsg

az network nsg rule list -g $resource_group --nsg-name $vm_nsg

# On the VM NSG, the command below should list the port 80 rule with "access": "Deny"

az network nsg rule list -g $resource_group --nsg-name $vm_nsg --query "[?destinationPortRange=='80'].{name:name, access:access}"

- Check that the peering is Connected on both ends (see the documentation on peering virtual networks in the same subscription).

# Specifically, check whether the "allowForwardedTraffic" and "allowVirtualNetworkAccess" values are enabled.

az network vnet peering show --name peerAKStoVM -g $resource_group --vnet-name $aks_vnet

az network vnet peering show --name peerVMtoAKS -g $resource_group --vnet-name $vm_vnet

# On the VM side, both allow* values below should return false

az network vnet peering show --name peerVMtoAKS -g $resource_group --vnet-name $vm_vnet --query "{peeringState:peeringState, allowForwardedTraffic:allowForwardedTraffic, allowVirtualNetworkAccess:allowVirtualNetworkAccess}"
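The peering checks above can also be scripted. Below is a minimal Python sketch, assuming you saved the output of `az network vnet peering show ... -o json` to a file. It flags a peering that is not `Connected` or that has `allowVirtualNetworkAccess` or `allowForwardedTraffic` disabled (the two settings this lab restores). The sample JSON is hypothetical and trimmed to the fields the sketch reads.

```python
import json

# The two peering settings that this lab's restore step turns back on.
REQUIRED_FLAGS = ("allowVirtualNetworkAccess", "allowForwardedTraffic")


def peering_problems(peering_json):
    """Return human-readable problems found in one peering's JSON document."""
    peering = json.loads(peering_json)
    name = peering.get("name", "<unnamed>")
    problems = []
    state = peering.get("peeringState")
    if state != "Connected":
        problems.append(f"{name}: peeringState is {state!r}, expected 'Connected'")
    for flag in REQUIRED_FLAGS:
        if not peering.get(flag, False):
            problems.append(f"{name}: {flag} is disabled")
    return problems


# Hypothetical VM-side peering after broken1.ps1, trimmed to the fields read above.
sample = """{"name": "peerVMtoAKS", "peeringState": "Connected",
             "allowVirtualNetworkAccess": false, "allowForwardedTraffic": false}"""
for problem in peering_problems(sample):
    print(problem)
```

For a saved file, call `peering_problems(open("peerVMtoAKS.json", encoding="utf-8-sig").read())`; the `utf-8-sig` encoding also accepts the byte-order mark that Windows PowerShell's `Out-File -Encoding utf8` writes.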

Step 5: Restore service

- Re-enable the peering settings on the VM VNet side

az network vnet peering update -n peerVMtoAKS -g $resource_group --vnet-name $vm_vnet --set allowForwardedTraffic=true allowVirtualNetworkAccess=true

- Check curl connectivity again. The request still times out after 5 seconds, so the issue persists.

kubectl exec -it test-pod -- curl -m 5 $vm_ip

- Remove the NSG rule that denies inbound traffic on port 80, allowing web traffic to reach the VM web server.

az network nsg rule delete -n DenyPort80Inbound -g $resource_group --nsg-name $vm_nsg
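Deleting the rule is enough because NSG rules are processed in priority order, lower numbers first, and processing stops at the first rule that matches. The Python sketch below models that evaluation on the JSON from `az network nsg rule list -o json`. It is simplified: it ignores source and destination address prefixes, and the rule named `AllowHttpInbound` and both priorities are hypothetical.

```python
import json


def port_matches(rule, port):
    """True if the rule's destinationPortRange/destinationPortRanges include port."""
    ranges = []
    if rule.get("destinationPortRange"):
        ranges.append(rule["destinationPortRange"])
    ranges.extend(rule.get("destinationPortRanges") or [])
    for entry in ranges:
        if entry == "*":
            return True
        low, _, high = entry.partition("-")  # "80" or "8000-8100"
        if int(low) <= port <= int(high or low):
            return True
    return False


def first_matching_rule(rules_json, port, direction="Inbound", protocol="Tcp"):
    """Return the matching rule with the lowest priority number (evaluated first), or None.

    Simplified: source/destination address prefixes are ignored.
    """
    rules = json.loads(rules_json)
    matches = [
        r for r in rules
        if r.get("direction") == direction
        and r.get("protocol") in (protocol, "*")
        and port_matches(r, port)
    ]
    return min(matches, key=lambda r: r["priority"], default=None)


# Hypothetical custom rules on the VM NSG; AllowHttpInbound and the priorities are made up.
rules = json.dumps([
    {"name": "AllowHttpInbound", "priority": 300, "direction": "Inbound",
     "access": "Allow", "protocol": "Tcp", "destinationPortRange": "80"},
    {"name": "DenyPort80Inbound", "priority": 100, "direction": "Inbound",
     "access": "Deny", "protocol": "*", "destinationPortRange": "80"},
])
rule = first_matching_rule(rules, 80)
print(rule["name"], rule["access"])  # DenyPort80Inbound Deny
```

When `first_matching_rule` returns `None`, no custom rule matches and the default rules decide; for traffic arriving from a peered VNet, the default `AllowVnetInBound` rule allows it.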

Step 6: Validate connectivity

After about 60 seconds, check curl connectivity again; it should return the HTML page from the web server on the VM.

kubectl exec -it test-pod -- curl -m 5 $vm_ip

kubectl exec -it test-pod -- ping -c 4 $vm_ip

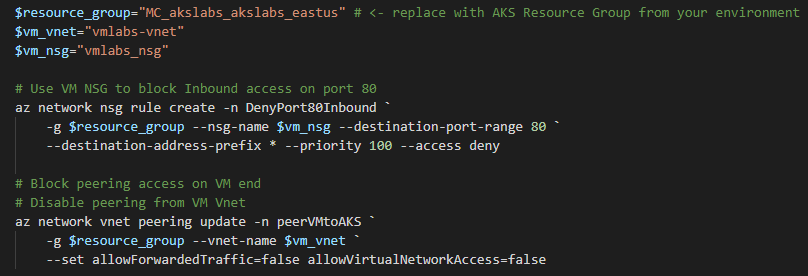

Step 7: What was in the broken files

broken1.ps1 was used to:

- Create a rule on the VM NSG that denies HTTP traffic

- Disable critical peering settings on the VM VNet side, i.e., block forwarded traffic and block access to the remote VNet

Step 8: Cleanup

kubectl delete pod/test-pod

az network vnet peering delete -n peerAKStoVM -g $resource_group --vnet-name $aks_vnet

az network vnet peering delete -n peerVMtoAKS -g $resource_group --vnet-name $vm_vnet

# DETACH VM NSG from VM subnet and DELETE NSG

az network vnet subnet update -n $subnet_name -g $resource_group --vnet-name $vm_vnet --network-security-group '""'

az network nsg delete -g $resource_group -n $vm_nsg

# Delete the VM, its VNet, and the NSG created with the VM

az vm delete --resource-group $resource_group --name $vm_name -y

az network vnet delete --name $vm_vnet --resource-group $resource_group

az network nsg delete -g $resource_group -n "${vm_name}NSG"

kubectl delete ns student

Scenario 4: Web Server fails to respond

Objective: The aim of this lab is to identify and resolve an issue where traffic directed through a Load Balancer fails to reach the intended pod, troubleshooting the problem step by step until it is resolved.

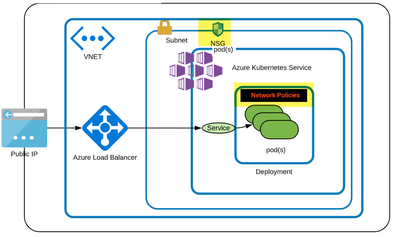

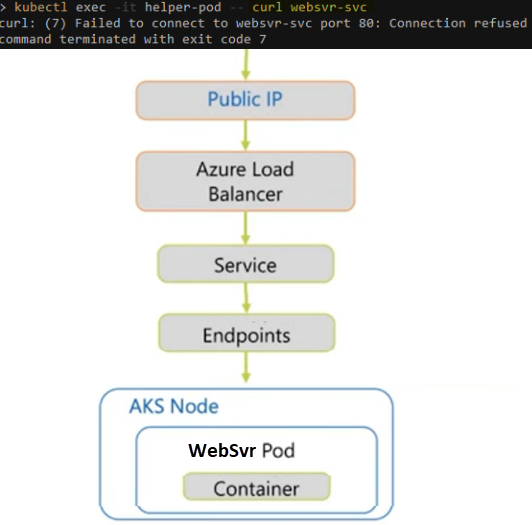

Layout: A web server pod with a Service of type LoadBalancer that allows access via an External IP. As shown in the figures, the cluster has an NSG applied to the AKS subnet, with network policies in effect.

Step 1: Set up the environment.

- Set up AKS as outlined in this script.

- Create the namespace and switch to it

kubectl create ns student

kubectl config set-context --current --namespace=student

# Verify current namespace

kubectl config view --minify --output 'jsonpath={..namespace}'

- Clone the solutions repository from the GitHub link and change directory to Lab4: cd Lab4

- Run .\working.ps1 in PowerShell.

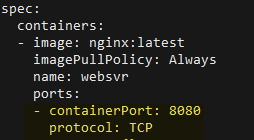

The spec in working.ps1 is shown below. It creates a ConfigMap with the page content, the web server pod, and a Service of type LoadBalancer whose External IP points to the web server, making it accessible from outside the cluster.

$kubectl_apply = @"
apiVersion: v1
kind: ConfigMap
metadata:
  name: nginx-html
data:
  index.html: |
    ...
    Hello from Websvr
    ...
---
apiVersion: v1
kind: Pod
metadata:
  name: websvr
  labels:
    app: websvr
spec:
  containers:
  - name: websvr
    image: nginx:latest
    ports:
    - containerPort: 8080
    volumeMounts:
    - name: webcontent
      mountPath: /usr/share/nginx/html/index.html
      subPath: index.html
  volumes:
  - name: webcontent
    configMap:
      name: nginx-html
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: websvr
  name: websvr-svc
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 8080
  selector:
    app: websvr
  type: LoadBalancer
"@

$kubectl_apply | kubectl apply -f -

# The Service is similar to: kubectl expose pod websvr --name=websvr-svc --port=80 --target-port=8080 --type=LoadBalancer

- Ensure the websvr pod is Running and the LoadBalancer service has an External IP assigned.

kubectl get po -l app=websvr -o wide

kubectl get svc -l app=websvr -o wide

kubectl get node -o wide
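The two readiness checks in this step can also be expressed in code. This minimal Python sketch assumes you saved `kubectl get svc websvr-svc -o json` and `kubectl get pod websvr -o json`; it reads `status.loadBalancer.ingress` and `status.phase`. The sample documents are hypothetical and trimmed.

```python
import json


def service_external_ips(service_json):
    """Return the IPs under status.loadBalancer.ingress (empty while still pending)."""
    service = json.loads(service_json)
    ingress = service.get("status", {}).get("loadBalancer", {}).get("ingress") or []
    return [entry["ip"] for entry in ingress if "ip" in entry]


def pod_is_running(pod_json):
    """Return True when the pod's status.phase is Running."""
    return json.loads(pod_json).get("status", {}).get("phase") == "Running"


# Hypothetical, trimmed documents; the IP is the example value used in this lab.
pending_svc = '{"status": {"loadBalancer": {}}}'
ready_svc = '{"status": {"loadBalancer": {"ingress": [{"ip": "52.154.210.193"}]}}}'
print(service_external_ips(pending_svc))  # []
print(service_external_ips(ready_svc))    # ['52.154.210.193']
print(pod_is_running('{"status": {"phase": "Running"}}'))  # True
```

An empty list corresponds to the `<pending>` EXTERNAL-IP that kubectl shows while Azure is still provisioning the load balancer.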

Step 2: Create and use a helper pod to troubleshoot.

- Create a helper pod and install the required utilities.

kubectl run helper-pod --image=nginx

kubectl exec -it helper-pod -- bash

# Install below in bash shell

apt-get update -y

apt-get install -y nmap

exit

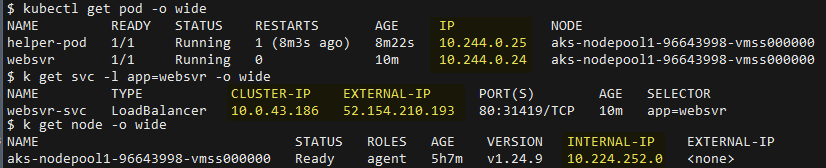

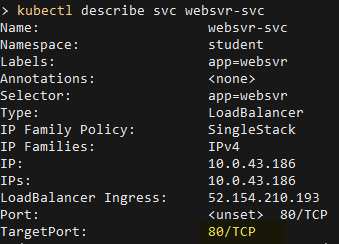

- Make a note of EXTERNAL_IP, CLUSTER_IP, WebsvrPod_IP, Helper_Pod_IP, and the node name.

$EXTERNAL_IP=$(kubectl get svc websvr-svc -o jsonpath="{.status.loadBalancer.ingress[*].ip}")

$CLUSTER_IP=$(kubectl get svc websvr-svc -o jsonpath="{.spec.clusterIP}")

$WebsvrPod_IP=$(kubectl get pod websvr -o jsonpath="{.status.podIP}")

$Helper_Pod_IP=$(kubectl get pod helper-pod -o jsonpath="{.status.podIP}")

$Node_Name=$(kubectl get pod websvr -o jsonpath="{.spec.nodeName}")

# The values below are only a guide to the lab layout; yours will differ.

echo $EXTERNAL_IP # 52.154.210.193

echo $CLUSTER_IP # 10.0.43.186

echo $WebsvrPod_IP # 10.244.0.24

echo $Helper_Pod_IP # 10.244.0.25

echo $Node_Name # aks-nodepool1-96643998-vmss000000

Step 3: Verify connectivity to Web Server.

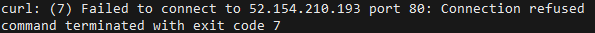

- Check connectivity by trying to reach the web server through its public (External) IP. This check should fail.

kubectl exec -it helper-pod -- curl -m 7 ${EXTERNAL_IP}:80

Step 4: Troubleshoot networking

Below is the networking path we need to analyze.

- Run a port scan with ‘nmap’ against websvr-svc, EXTERNAL_IP, WebsvrPod_IP, and CLUSTER_IP.

kubectl exec -it helper-pod -- nmap -F websvr-svc

kubectl exec -it helper-pod -- nmap -F $EXTERNAL_IP

kubectl exec -it helper-pod -- nmap -F $WebsvrPod_IP

kubectl exec -it helper-pod -- nmap -F $CLUSTER_IP

- For the External IP and the Service (Cluster IP), port 80 is reported as closed and refuses incoming connections. The websvr pod IP, however, has port 80 open.

- Since port 80 on the pod IP accepts connections (a curl to the pod IP on port 80 also succeeds), the web server is running inside the pod and the pod itself is healthy.
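Comparing the state of port 80 at each hop is what localizes the fault here. The minimal Python sketch below extracts port states from the `PORT STATE SERVICE` table that `nmap -F` prints; the sample outputs are hypothetical and abbreviated. If every scanned port on a target is in the same state, nmap may omit the table entirely, in which case the sketch reports the port as not listed.

```python
import re

# One row of nmap's port table, e.g. "80/tcp closed http".
PORT_ROW = re.compile(r"^(\d+)/(tcp|udp)\s+(\S+)", re.MULTILINE)


def port_states(nmap_output):
    """Map (port, protocol) -> state for each row of the port table."""
    return {(int(port), proto): state for port, proto, state in PORT_ROW.findall(nmap_output)}


# Hypothetical, abbreviated scan results for two hops.
scans = {
    "websvr-svc": "PORT   STATE  SERVICE\n80/tcp closed http\n",
    "WebsvrPod_IP": "PORT   STATE SERVICE\n80/tcp open  http\n",
}
for target, output in scans.items():
    print(target, port_states(output).get((80, "tcp"), "not listed"))
```

The first hop where the state changes from `open` to `closed` (here, between the pod IP and the Service) is where to look next.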

Step 5: Run a network capture on the node running the web server pod and helper pod.

Retrieve the values below; they are needed for tcpdump.

Note: the values shown are only examples to illustrate the lab layout.

echo $Helper_Pod_IP

10.244.0.25 # this is only an example.

echo $WebsvrPod_IP

10.244.0.24 # this is only an example.

echo $Node_Name

aks-nodepool1-96643998-vmss000000 # this is only an example.

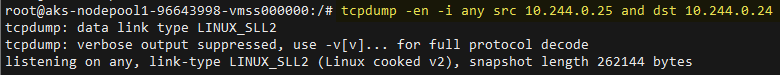

- Open a new terminal. The command below creates a debug pod on the node running the web server pod; then install tcpdump in the debug pod as shown. Use the node name noted above, or find it with ‘kubectl get nodes’.

kubectl debug node/<Node-Name> -it --image=mcr.microsoft.com/dotnet/runtime-deps:6.0

# Install below

apt-get update -y; apt-get install tcpdump -y

- In the debug pod, run tcpdump filtered on the source IP (helper pod) and destination IP (web server pod) noted in Step 2.

Order is important: src = helper pod, dst = websvr pod.

tcpdump -en -i any src <HELPER_POD_IP> and dst <WEBSVR_POD_IP>

- In the original terminal, generate traffic from the helper pod so that tcpdump captures it:

kubectl exec -it helper-pod -- curl websvr-svc

The trace shows the helper pod connecting to websvr-svc through its Cluster IP. The connection is then translated to the IP address of the websvr pod, but on port 8080.
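Reading the destination port out of a busy capture by eye is easy to get wrong. The minimal Python sketch below pulls the `<ip>.<port> > <ip>.<port>:` pairs that tcpdump prints with `-n` and collects the destination ports seen between two IPs. The capture lines are hypothetical and simplified; only the address/port portion of each line is relied on.

```python
import re

# "<src-ip>.<src-port> > <dst-ip>.<dst-port>:" as printed by tcpdump -n for TCP/UDP packets.
FLOW = re.compile(r"(\d{1,3}(?:\.\d{1,3}){3})\.(\d+) > (\d{1,3}(?:\.\d{1,3}){3})\.(\d+):")


def destination_ports(capture_text, src_ip, dst_ip):
    """Return the set of destination ports seen on packets from src_ip to dst_ip."""
    ports = set()
    for src, _src_port, dst, dst_port in FLOW.findall(capture_text):
        if src == src_ip and dst == dst_ip:
            ports.add(int(dst_port))
    return ports


# Hypothetical, simplified capture lines using this lab's example pod IPs.
capture = (
    "10:15:01.000001 eth0 In ethertype IPv4 (0x0800), length 80: "
    "10.244.0.25.51234 > 10.244.0.24.8080: Flags [S], seq 1, win 64240, length 0\n"
    "10:15:01.000002 eth0 In ethertype IPv4 (0x0800), length 80: "
    "10.244.0.25.51236 > 10.244.0.24.8080: Flags [S], seq 2, win 64240, length 0\n"
)
print(destination_ports(capture, "10.244.0.25", "10.244.0.24"))  # {8080}
```

A result of `{8080}`, while nmap shows only port 80 open on the pod IP, points at the Service targetPort rather than at the pod.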

The web server pod YAML sets containerPort to 8080, and the Service definition also sets targetPort to 8080.

The earlier port scan showed that the websvr application listens on port 80. This means the websvr-svc targetPort is incorrect. The websvr containerPort setting makes no difference, because containerPort is informational and does not change the port nginx actually listens on.

Step 6: Fixing the issue.

- To fix this issue, we must update websvr-svc targetPort and set it to 80.

Use ‘kubectl edit svc websvr-svc’ to update targetPort to 80.

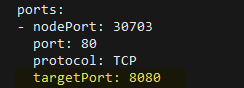

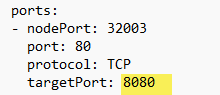

Before

After

Use ‘kubectl get ep’ to confirm that the endpoint now points to port 80 on the web server pod.

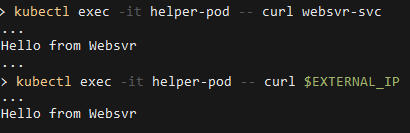
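`kubectl get ep` reads the Service's Endpoints object. The minimal Python sketch below lists the `ip:port/protocol` targets from the saved output of `kubectl get endpoints websvr-svc -o json`, so the port change can be asserted rather than eyeballed. The sample object is hypothetical and trimmed, and addresses under `notReadyAddresses` are deliberately ignored.

```python
import json


def endpoint_targets(endpoints_json):
    """Return sorted "ip:port/protocol" strings for the ready addresses of a v1 Endpoints object."""
    endpoints = json.loads(endpoints_json)
    targets = []
    for subset in endpoints.get("subsets") or []:
        for address in subset.get("addresses") or []:  # notReadyAddresses are skipped
            for port in subset.get("ports") or []:
                targets.append(f"{address['ip']}:{port['port']}/{port.get('protocol', 'TCP')}")
    return sorted(targets)


# Hypothetical Endpoints object after the fix, trimmed to the relevant fields.
sample = json.dumps({
    "subsets": [{
        "addresses": [{"ip": "10.244.0.24"}],
        "ports": [{"port": 80, "protocol": "TCP"}],
    }]
})
print(endpoint_targets(sample))  # ['10.244.0.24:80/TCP']
```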

- Once done, the web server should be reachable over the External IP as well as the internal service Cluster IP.

kubectl exec -it helper-pod -- nmap -F websvr-svc

kubectl exec -it helper-pod -- nmap -F $EXTERNAL_IP

kubectl exec -it helper-pod -- nmap -F $CLUSTER_IP

kubectl exec -it helper-pod -- curl websvr-svc

kubectl exec -it helper-pod -- curl $EXTERNAL_IP

Traffic flow between an external client and the application pods is governed by the Service's port and targetPort fields, and by the port the application actually listens on inside the pod.

The port is the port the Service exposes on its Cluster IP and External IP. The targetPort is the port on the pod IP to which the Service forwards traffic. The containerPort in the pod spec declares which port the container is expected to listen on, but it is informational and does not change the port the application actually binds to.

When a client sends a request to the external IP address of the LoadBalancer service on the Service port, the request is forwarded to the targetPort on the backend pods.

The Service selects those backend pods using its selector. The traffic then reaches whichever process in the pod is listening on the targetPort, if any, and is processed by the application.

In this way, port and targetPort map external traffic to the correct pods, and the mapping works only when targetPort matches a port on which the application is actually listening.
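The relationship described above can be captured in a small model. This Python sketch is an illustration, not how kube-proxy is implemented: `ServicePort`, `PodModel`, and `reaches_app` are hypothetical names, and the model ignores selectors, protocols, and readiness. It shows that only targetPort and the port the process actually listens on decide the outcome, while containerPort is never consulted.

```python
from dataclasses import dataclass, field


@dataclass
class ServicePort:
    port: int         # port clients connect to on the Cluster IP / External IP
    target_port: int  # port on the pod IP that the Service forwards to


@dataclass
class PodModel:
    container_ports: list = field(default_factory=list)  # declared in the spec; informational only
    listening_ports: set = field(default_factory=set)    # ports the application actually binds


def reaches_app(svc, pod, client_port):
    """Return True if a request to client_port on the Service reaches a listening socket."""
    if client_port != svc.port:
        return False  # no matching Service port, so nothing is forwarded
    # pod.container_ports is intentionally not consulted.
    return svc.target_port in pod.listening_ports


nginx = PodModel(container_ports=[8080], listening_ports={80})
print(reaches_app(ServicePort(port=80, target_port=8080), nginx, 80))  # False: original config
print(reaches_app(ServicePort(port=80, target_port=80), nginx, 80))    # True: after the fix

# Challenge case: an image that, per the text, listens on both 80 and 8080.
dual = PodModel(container_ports=[8080], listening_ports={80, 8080})
print(reaches_app(ServicePort(port=80, target_port=8080), dual, 80))   # True
```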

Step 7: Challenge

In the example above, the nginx image listens only on port 80. Test the web server image tadeugr/aks-mgmt, which listens on both 80 and 8080, using the pod sample shown earlier. Because it listens on both ports, the Service should work whether you edit targetPort to 80 or to 8080.

Step 8: Cleanup

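# Replace node-debugger-aks-systempool-<custom> with the debug pod name shown by 'kubectl get pods'

kubectl delete pod/helper-pod pod/websvr service/websvr-svc configmap/nginx-html pod/node-debugger-aks-systempool-<custom>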

Conclusion

This post illustrated connectivity issues between endpoints across VNets and port configuration issues between services and their pods. The first scenario showed how misconfigured VNet peering and NSG settings can block access to required ports and protocols, and how correcting them restores end-to-end connectivity. In the second scenario, the application was not listening on the port that the Service targetPort was sending traffic to. Updating the Service targetPort to 80 resolved the problem; as the challenge shows, an application image that listens on both ports also allows traffic to flow. In the final article of this three-part series, we will explore troubleshooting scenarios related to the Linux kernel and how they map to the Kubernetes view. We will also demonstrate how Container Insights and Diagnostics can enhance AKS observability, enabling better monitoring and troubleshooting of your Kubernetes environment.

Disclaimer

The sample scripts are not supported by any Microsoft standard support program or service. The sample scripts are provided AS IS without a warranty of any kind. Microsoft further disclaims all implied warranties including, without limitation, any implied warranties of merchantability or of fitness for a particular purpose. The entire risk arising out of the use or performance of the sample scripts and documentation remains with you. In no event shall Microsoft, its authors, or anyone else involved in the creation, production, or delivery of the scripts be liable for any damages whatsoever (including, without limitation, damages for loss of business profits, business interruption, loss of business information, or other pecuniary loss) arising out of the use of or inability to use the sample scripts or documentation, even if Microsoft has been advised of the possibility of such damages.