This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

Author: Debananda Ghosh, Global Black Belt - Sr. Specialist, Data & AI, Microsoft.

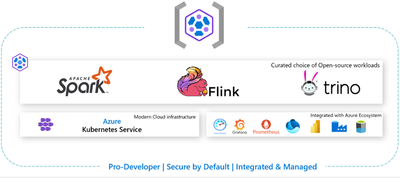

At the October 2023 Microsoft event "Enterprise scale open-source analytics on containers", Microsoft announced the public preview of HDInsight on AKS. This is the latest evolution of the Microsoft Azure HDInsight PaaS foundation stack. Azure HDInsight has been completely rearchitected on Azure Kubernetes Service (AKS) infrastructure and currently offers Trino, Flink, and Spark workloads. HDInsight on AKS provides end-to-end integration with the Azure ecosystem. Using its Spark, Flink, and Trino offerings, we can deploy big data analytics compute in containers. At the same time, enterprises and digital-native organizations do not need to manage the containers themselves. We can learn more about HDInsight on AKS here: What is HDInsight on AKS? - Azure HDInsight Preview Documentation | Microsoft Learn.

In this blog we focus on the Trino capabilities of an HDInsight on AKS cluster. We will also discuss why we need to modernize our on-premises Trino deployments and adopt such PaaS capabilities.

We will cover the following topics in this blog.

- Overview of Trino

- Trino data architecture challenges

- Benefits of Trino modernization in Azure HDInsight

- How to connect with Azure HDInsight AKS Trino

- Further reading

Overview of Trino

Trino is a tool for querying very large volumes of data using distributed queries. Note that Trino is not an OLTP (online transaction processing) database like MySQL, PostgreSQL, or HBase. Nor is it an alternative to open-source data lake or lakehouse technologies such as the Hadoop file system or the Delta format. Trino is a tool for executing ad hoc queries against petabyte-scale data management systems like Hive and Delta Lake. However, Trino is not restricted to data lakes or lakehouses; it extends its query capability to multiple sources. The Trino community defines it as:

A query engine that runs at ludicrous speed, fast distributed SQL query engine for big data analytics that helps you explore your data universe.

Trino is known primarily for:

- Interactive analytics speed

- Federated queries across multiple systems

- Ad hoc querying

- ANSI SQL support
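As an illustration of federated querying, here is a minimal Python sketch that composes one SQL statement joining tables from two different catalogs. The catalog, schema, table, and column names (hive.sales.orders, postgresql.public.customers, and so on) are hypothetical placeholders, not objects from any real cluster; the only Trino convention relied on is the catalog.schema.table naming form.

```python
def qualified(catalog: str, schema: str, table: str) -> str:
    """Return a fully qualified Trino table name in catalog.schema.table form."""
    return f"{catalog}.{schema}.{table}"


def federated_join_sql() -> str:
    """Build one SQL statement that joins tables living in two catalogs."""
    # Hypothetical catalogs: 'hive' for data lake tables,
    # 'postgresql' for an operational database.
    orders = qualified("hive", "sales", "orders")
    customers = qualified("postgresql", "public", "customers")
    return (
        "SELECT c.customer_name, sum(o.amount) AS total_amount\n"
        f"FROM {orders} AS o\n"
        f"JOIN {customers} AS c ON o.customer_id = c.customer_id\n"
        "GROUP BY c.customer_name"
    )


print(federated_join_sql())
```

The point of the sketch is that a single statement can reference more than one catalog; the statement itself would be submitted through any Trino client.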

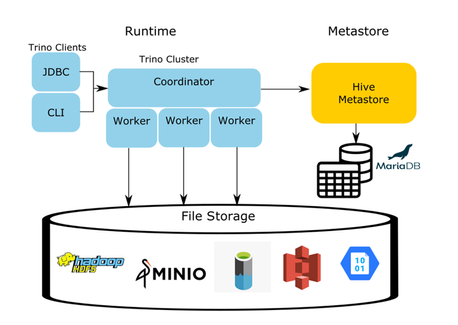

To support data federation and decent interactive analytics speed, current open-source Trino relies on the following architecture components and query execution model.

- Trino cluster- A Trino cluster consists of a coordinator and workers. The coordinator node collaborates with the worker nodes to access the connected data sources.

- Coordinator- The coordinator is a server and the core of the Trino architecture. It receives SQL statements, parses them, plans query execution, and manages the worker nodes.

- Worker- A worker node is a Trino server that fetches data from external sources using connectors and performs intermediate data exchange.

- Connector- A connector is like a third-party data source driver. It connects Trino to the external data source that users want to include in data federation. Connectors are at the heart of the separation of storage and compute inside Trino, and each connector provides an abstraction layer over the external data.

- Catalog- A Trino catalog contains the metadata and references for a data source.

- Schema and table- Trino organizes data into schemas and tables, like a relational database.

- Statement, query, stage- Trino executes ANSI-compatible SQL statements. After a statement is parsed, it is converted into a query and then a query plan. The query is further split into stages.

- Task, split- Once the query plan is decomposed to the stage level, each stage is translated into a series of tasks. A large dataset is decomposed into small splits, and each task operates on dataset splits. The coordinator orchestrates the assignment of splits to tasks.

- Operator, driver- An operator performs data transformation, consumption, and production. For example, a Trino fetch operation fetches data from a data source via a connector. A sequence of operators is known as a driver.

- Exchange- Trino nodes transfer data between one another across stages using exchanges.
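The relationship between splits, tasks, and workers can be sketched with a toy model in Python. This is not Trino's scheduler: the round-robin policy, the function names, and the row-range representation of a split are all assumptions made purely for illustration.

```python
from collections import defaultdict


def make_splits(total_rows, split_size):
    """Cut a dataset of total_rows rows into (start, end) ranges, end exclusive."""
    return [
        (start, min(start + split_size, total_rows))
        for start in range(0, total_rows, split_size)
    ]


def assign_splits(splits, workers):
    """Toy coordinator: hand out splits to workers in round-robin order."""
    if not workers:
        raise ValueError("at least one worker is required")
    assignment = defaultdict(list)
    for index, split in enumerate(splits):
        assignment[workers[index % len(workers)]].append(split)
    return dict(assignment)


splits = make_splits(total_rows=10, split_size=4)
print(assign_splits(splits, ["worker-1", "worker-2"]))
```

In this model each worker's list of splits stands in for the work a task on that node would process; a real coordinator also takes data locality and load into account.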

The following architecture diagram from the Trino community blog depicts the Trino components and external data connections.

Trino architecture patterns and challenges

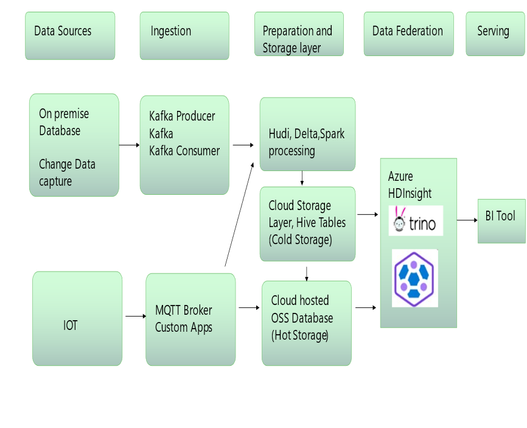

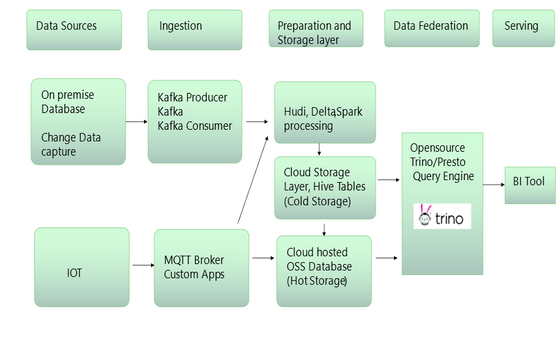

Trino/Presto deployments are mostly used in organizations for data federation. Other purposes include ad hoc interactive querying and serving as a visualization layer on top of multiple data sources. One example of a Trino architecture pattern for data federation is shown in the following diagram. In this pattern, the organization uses change data capture, an MQTT broker, custom apps, and other middleware to feed data into a cloud storage account. Streaming data is then transformed by Delta, Spark, or other processing capabilities and stored in a cloud storage account (such as Azure Data Lake Storage Gen2 or AWS S3). For near-real-time streaming analytics, the data is also loaded into a cloud real-time big data database for fast data exploration. Open-source Trino/Presto is used as the data federation layer between cold storage (the cloud storage accounts) and the cloud OSS real-time database (hot storage). Business intelligence tools can connect directly to Trino/Presto for visualization. The Trino environment also acts as an ad hoc querying tool for interactive users who want to explore multiple data sources from a single tool.

In the previous architecture, let us zoom into the data federation block, that is, the Trino execution engine. Such a Trino OSS deployment is usually on-premises and comes with multiple challenges, some of which are listed below.

- Trino installation- Any OSS Trino deployment requires significant effort for installation and packaging. To run Trino in an enterprise production environment, we need to download tarballs, RPMs, container images, and other packages and then deploy them to large clusters.

- Post-deployment effort- Post-deployment configuration, container management, and sometimes even redeployment are unavoidable, and all of them are resource intensive.

- Security management overhead- Setting up Trino cluster security involves significant configuration work, such as establishing an authentication framework and handling user mapping and management.

- Cost of ownership- Running capacity-based hardware increases the cost of ownership.

- Scalability- Large-scale deployments always come with scalability needs, which are difficult to meet on-premises.

- Version upgrade- Keeping the Trino version up to date with the open-source releases takes considerable effort and technical expertise.

Architecture Modernization benefits

When we migrate on-premises Trino to the Azure HDInsight on AKS service, we gain the following benefits.

- Fast service deployment.

- Enterprise-scale security, VNet support.

- Data infra monitoring with Prometheus, Grafana.

- Federated SQL monitoring with Trino UI.

- Elasticity, support for manual scale and auto scale.

- Service configuration management.

- Ease of migration, like lift and shift.

- TCO (total cost of ownership) benefits.

Fast service deployment- Like any Azure first-party service, an Azure HDInsight on AKS Trino cluster is created in a few clicks. To deploy it, we follow the simple two-step process described below.

Step1- Go to the Azure portal (Home - Microsoft Azure), search for ‘Azure HDInsight on AKS cluster pools (preview)’, and then click ‘+Create’ to create and deploy ‘Azure HDInsight on AKS Cluster pools’.

Step2- Click ‘+New cluster’ to create a Trino cluster.

Learn more about portal-based deployment here: Create a Trino cluster - Azure portal - Azure HDInsight Preview Documentation | Microsoft Learn

Enterprise scale security, Support of Vnet.

HDInsight on AKS Trino provides multiple layers of security out of the box as part of its built-in security offering for enterprise customers.

- Perimeter security- HDInsight on AKS supports virtual networks and network security groups to restrict network access.

- Authentication- HDInsight natively supports AD authentication and managed identities. The current version of the HDI authentication framework no longer needs a separate ‘Enterprise security package’ or ‘Active directory domain service’ for AD authentication support.

- Authorization, role-based access- Native support for cluster pool and cluster admin level roles to manage clusters.

- Metadata security- Attach a Microsoft-managed SQL database as the Hive catalog in a few clicks, and then use Key Vault for secret management.

- Cluster log security- Attach Azure Data Lake Storage Gen2 and retain security logs for security information and event management.

Learn more about enterprise security here: Security in HDInsight on AKS - Azure HDInsight Preview Documentation | Microsoft Learn

Data infra monitoring with Prometheus, Grafana, Workbook, Azure Monitor- Microsoft provides Grafana (a visualization tool) and Prometheus (a cloud-native monitoring tool) as Azure managed service offerings. We can configure a Microsoft-managed Grafana instance and Microsoft-managed Prometheus, and then enable monitoring of HDI AKS with a simple checkbox, as shown in the following screenshot.

Fig 1.4 One click Microsoft Managed Prometheus, Grafana integration.

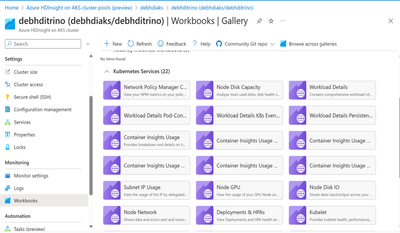

Azure Workbooks provides prebuilt and custom-built canvases for data analysis. The HDInsight AKS Trino cluster supports native Workbook integration, as shown in the following screenshot.

The native integration of Azure HDInsight on AKS with the Azure Monitor service lets us explore and interact with logs and monitor workloads seamlessly. It also makes it much easier to set up alerts based on query thresholds.

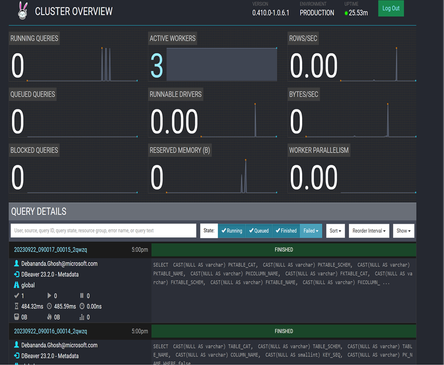

Federated SQL monitoring with Trino UI- Trino provides a native UI to monitor the queries run on the Trino cluster. To access the Trino logs, we perform the following two steps.

Step 1. Go to the HDInsight Trino cluster and click ‘Trino UI’, as shown in the following screenshot.

Fig 1.6 Trino UI Dashboards.

Step 2. After clicking ‘Trino UI’, a Trino UI monitoring dashboard with query details appears, as shown in the following screenshot. The query details in this canvas can be used for monitoring and deep-dive analysis of queries.

Fig 1.7 Trino UI Dashboards

Elasticity- Manual scale, auto scale, and delegated container management

HDInsight on AKS supports both manual scaling and autoscaling. In the portal, we can either manually drag the ‘Number of Worker nodes’ setting or turn on the ‘Auto scale’ toggle in the HDI AKS user interface to take advantage of cloud elasticity. The underlying cluster pod management, shown in the next screenshot, handles HDI AKS elasticity in a highly performant manner.

Fig 1.8 HDInsight on AKS implementation architecture

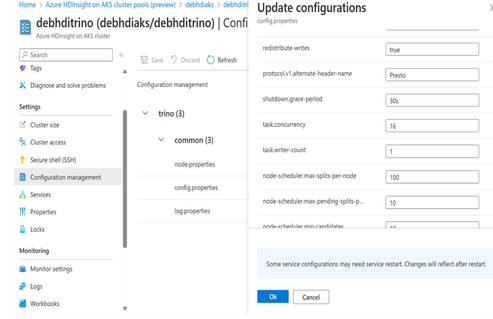

Ease of service configuration management- There are multiple Trino configurations that we need for application development and tuning. Some of them are listed below.

- join-distribution-type

- query.max-execution-time

- query.cache.enabled

- query.cache.ttl

- query.enable-multi-statement-set-session

- query.max-memory

The HDInsight cluster provides an intuitive user interface for setting Trino service-related configurations. Please refer to the sample Trino configuration here: hdionaksresources.blob.core.windows.net/trino/samples/arm/arm-trino-config-sample.json.

For example, we can enable query caching (for better query performance in certain scenarios) by adding new parameters, as shown in the following screenshot.

Fig 1.10 Trino query caching enablement.
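To show the shape of such settings, the Python sketch below renders a few of the property names listed earlier as key=value lines, the form used by Trino properties files. The values (true, 10m, PARTITIONED, 1h) are illustrative assumptions rather than recommendations, and on HDInsight on AKS the settings would be applied through the portal or an ARM template rather than by editing a file directly.

```python
def render_properties(props):
    """Render a mapping of property names to values as sorted key=value lines."""
    return "\n".join(f"{key}={value}" for key, value in sorted(props.items()))


# Property names come from the list in this post; every value is a placeholder.
example_settings = {
    "query.cache.enabled": "true",
    "query.cache.ttl": "10m",
    "join-distribution-type": "PARTITIONED",
    "query.max-execution-time": "1h",
}

print(render_properties(example_settings))
```

Sorting the keys keeps the rendered output stable, which makes it easier to compare two configurations side by side.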

Ease of migration- Since the underlying open-source tooling (in this case Trino) is compatible with the on-premises deployment, modernizing Trino on Azure HDInsight will not be a complete disruption for the organization. Even though the migration is not a pure lift and shift, it does not require a complete rewrite of the application either.

TCO benefits- PaaS benefits such as a choice of SKUs, a subscription-based pay-per-use model, scalability, and ease of deployment together bring a cost advantage to this modernization.

After migrating Trino to HDInsight AKS, our conceptual to-be architecture is depicted in the following diagram.

Fig 1.11 Trino Modernization with Azure HDInsight AKS.

How to connect HDI AKS Trino Cluster

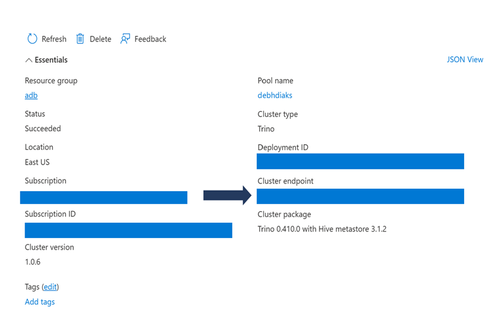

Once we deploy the HDInsight AKS Trino cluster, we need to connect to it. We then need to connect Trino with multiple data sources to build a data federation framework for further development. Broadly, this is a three-step process.

- Fetch the cluster endpoint for further development.

- Access the cluster via desktop or command-line tools.

- Connect Trino with external data sources for federation.

Step1- To begin development, we first need to fetch the Trino cluster endpoint from the portal, as shown in the following screenshot.

Fig 1.12 Trino Cluster Endpoint.

Step2- Next, we need to connect to the Trino cluster and access the environment. HDInsight AKS Trino can be accessed via the following mechanisms.

- Trino CLI.

- DBeaver with the Trino JDBC driver.

- Trino Web SSH.

To access the Trino cluster via the CLI, please follow the prerequisite documentation here: https://review.learn.microsoft.com/en-us/hdinsight-hilo/prerequisites-subscription?branch=main and Trino CLI - Azure HDInsight Preview Documentation | Microsoft Learn

Once the prerequisites are installed, we can start the Trino CLI from a command prompt and run a sample command such as ‘show catalogs’, as shown below.

trino-cli --server <cluster_endpoint>

trino-cli --server debhditrino.xxxxxxxxx.eastus.hdinsightaks.net

Fig 1.13 Trino CLI query
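For scripted use, the same invocation can be assembled programmatically. The Python sketch below only builds and prints the command line; it does not run it. The helper name trino_cli_command is hypothetical, and passing the statement with --execute follows the open-source Trino CLI, so whether the HDInsight on AKS CLI accepts it in exactly this form is an assumption to verify against the prerequisite documentation.

```python
import shlex


def trino_cli_command(endpoint, statement=None):
    """Build the argument list for a trino-cli call against a cluster endpoint."""
    argv = ["trino-cli", "--server", endpoint]
    if statement is not None:
        # --execute runs one statement non-interactively in the open-source CLI.
        argv += ["--execute", statement]
    return argv


# <cluster_endpoint> is the placeholder used in this post, not a real host.
command = trino_cli_command("<cluster_endpoint>", "show catalogs")
print(shlex.join(command))
```

Building the arguments as a list rather than a single string avoids quoting mistakes if the list is later handed to a process launcher.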

Likewise, we can use DBeaver, one of the most popular tools, with the Trino JDBC connector for ad hoc querying. As a prerequisite, we need to download DBeaver from here: Download | DBeaver Community. Then follow the configuration prerequisite steps here: Trino with DBeaver - Azure HDInsight Preview Documentation | Microsoft Learn

After setting up the Azure Trino JDBC driver, we can test connectivity by running the following query (which checks metadata).

select * from tpcds.information_schema.columns

The following screenshot shows the result of the previous query, which relates to system catalog exploration.

Fig 1.14 Trino query using the DBeaver tool.

Step3- Next, we need to use Trino connectors to connect with external data sources. The following connectors are supported today: Trino connectors - Azure HDInsight Preview Documentation | Microsoft Learn

For example, to connect with AWS S3 via an ARM template, we have detailed documentation here: Query data from AWS S3 and with Glue - Azure HDInsight on AKS | Microsoft Learn

Likewise, to configure Delta Lake we can use this document: Configure Delta Lake catalog - Azure HDInsight Preview Documentation | Microsoft Learn

Further reading

To learn more about other HDInsight services, see Azure HDInsight on AKS - Azure HDInsight Preview Documentation | Microsoft Learn. The conceptual anatomy of the latest HDInsight AKS offerings is shown in the following diagram.

Fig 1.15 HDInsight on AKS current offerings

We can refer to the following resources:

- To learn more about open-source Trino- https://trino.io/docs/current/

- To learn more about HDInsight on AKS- Azure HDInsight on AKS (Preview) - Azure HDInsight on AKS | Microsoft Learn

- Reading Delta Lake- Read Delta Lake tables (Synapse or External Location) - Azure HDInsight Preview Documentation | Microsoft Learn