This post has been republished via RSS; it originally appeared at Microsoft Tech Community - Latest Blogs.

Introduction:

In today's data-driven landscape, efficient data orchestration is crucial for the success of any organization. Managed Airflow, within the Azure Data Factory (ADF) ecosystem, offers a powerful solution for managing data workflows. To leverage its full potential, you need visibility into how those workflows run.

This blog post will take you on a journey through Managed Airflow's diagnostics capabilities within ADF and Azure Monitor.

Prerequisites:

- Log Analytics workspace

- ADF workspace

- Managed Airflow instance in ADF

To orchestrate ADF with Managed Airflow, please follow my previous tutorial:

Mastering the Art: Orchestrating ADF with the Power of Managed Airflow

I'll be using the same pipeline and the same Managed Airflow instance as in that post.

Table of Contents:

- Understanding the Essentials

- Logging for Clarity

- Metrics for Optimization

- Conclusion

- Links

- Call-To-Action

Understanding the Essentials:

- An Introduction to Managed Airflow in Azure Data Factory

What is Managed Airflow in ADF?

Managed Airflow is a robust orchestration and scheduling service, built on Apache Airflow and integrated into Azure Data Factory (ADF). It serves as the backbone for automating and monitoring data workflows with high levels of customization and scalability.

Key Benefits:

- Automation of complex data pipelines.

- Efficient management of data workflows.

- Reliability in data processing and transformation.

- Scalability to handle data at any scale.

- Simplified maintenance of data workflows.

Target Users:

Managed Airflow in ADF caters to data engineers, developers, and organizations seeking to streamline and automate their data workflows. It equips users with the tools and features necessary to efficiently manage their data processing and transformation tasks, ensuring data reliability and scalability.

- The Importance of Diagnostics

Diagnostics in Azure Data Factory (ADF) are essential for monitoring and optimizing data workflows. They provide insights into system health, performance, and reliability. In this blog post, we'll explore their pivotal role in ensuring efficient data management and orchestration within ADF.

Logging For Clarity:

- A Deep Dive into Diagnostic Logs

Azure Data Factory provides diagnostic logging as a feature to help users monitor and troubleshoot their data integration pipelines. These logs capture information about the activities and operations happening within your ADF environment.

Azure Data Factory can generate several types of logs, including activity run logs, pipeline run logs, trigger run logs, and now Airflow logs.

Airflow logs encompass a comprehensive set of records, including task execution logs, worker logs, DAG processing logs, scheduler logs, and web logs, all designed to provide insights into the execution, management, and monitoring of data workflows in the Airflow platform. Together, these logs let users track and troubleshoot tasks, monitor workflow execution, and manage scheduling and user interactions within their Airflow environment.

- Configuring and Customizing Logging

To collect Airflow logs, we need to configure a diagnostic setting for ADF in Azure Monitor.

In Azure Monitor, under the Settings tab -> click Diagnostic settings -> select your ADF workspace.

Click 'Add diagnostic setting' to configure Airflow logs.

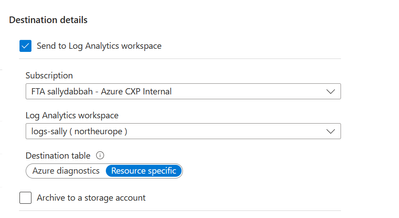

Make sure to select the Airflow logs and AllMetrics.

In Destination details -> select your Log Analytics workspace.

Note:

- If you select AllMetrics, various Data Factory metrics are made available for you to monitor or raise alerts on. These include metrics for Data Factory activities and for the Managed Airflow integration runtime (IR), such as AirflowIntegrationRuntimeCpuUsage and AirflowIntegrationRuntimeMemory.
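If you prefer to script this step rather than click through the portal, the same choices can be expressed as a diagnostic-setting request body. The minimal sketch below only builds that body in Python and does not call Azure. The Airflow log category names and the workspace resource ID are assumptions and placeholders; check the category names against what "Add diagnostic setting" lists for your factory before submitting the body through the Azure Resource Manager API or a tool of your choice.

```python
import json

# Hypothetical placeholder: replace with your own Log Analytics workspace resource ID.
WORKSPACE_ID = (
    "/subscriptions/<subscription-id>/resourceGroups/<resource-group>"
    "/providers/Microsoft.OperationalInsights/workspaces/<workspace-name>"
)

# Assumed Airflow log category names; confirm them in the portal for your factory.
AIRFLOW_LOG_CATEGORIES = [
    "AirflowTaskLogs",
    "AirflowWorkerLogs",
    "AirflowDagProcessingLogs",
    "AirflowSchedulerLogs",
    "AirflowWebLogs",
]


def build_diagnostic_setting(workspace_id: str, categories: list[str]) -> dict:
    """Build a diagnostic-setting body that sends the given log categories
    plus AllMetrics to a single Log Analytics workspace."""
    return {
        "properties": {
            "workspaceId": workspace_id,
            # Write to resource-specific tables instead of the shared AzureDiagnostics table.
            "logAnalyticsDestinationType": "Dedicated",
            "logs": [{"category": c, "enabled": True} for c in categories],
            "metrics": [{"category": "AllMetrics", "enabled": True}],
        }
    }


if __name__ == "__main__":
    body = build_diagnostic_setting(WORKSPACE_ID, AIRFLOW_LOG_CATEGORIES)
    print(json.dumps(body, indent=2))
```

Keeping the category list separate from the builder makes it easy to enable only the Airflow logs you actually need, for example task and scheduler logs only.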

Metrics for Optimization:

- Configure Metric Alert

In Azure Monitor -> click Metrics -> fill in your ADF details.

After selecting the ADF workspace, it's time to configure the alert according to your needs.

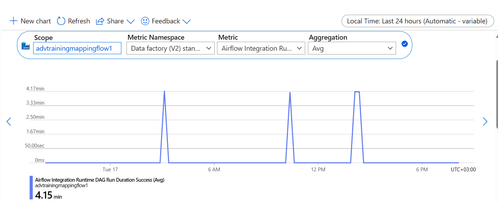

In Azure Monitor -> click Metrics -> select your ADF workspace -> click New chart -> select the Airflow Integration Runtime DAG Run Duration Success metric -> set the aggregation to Avg.

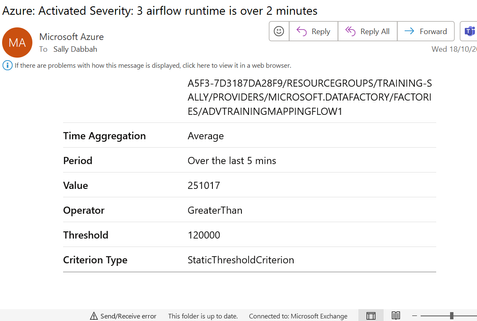

I want to measure the duration of successful DAG runs and be notified if it exceeds 2 minutes. After viewing the results in the chart, we can see that the average DAG runtime is 4.15 minutes. I would like to set an alert that sends an email to my alias, sallydabbah@microsoft.com, if the runtime is over 2 minutes, which is 120000 milliseconds.

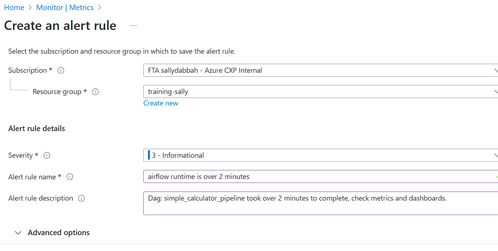
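The threshold arithmetic and the alert condition can be checked locally with a few lines of Python. This is a sketch of the comparison only, not of how Azure Monitor evaluates alerts internally: it converts minutes to milliseconds and fires when the average duration in a window is greater than the threshold, mirroring an Avg aggregation with a "Greater than" operator. The sample durations are hypothetical.

```python
from statistics import mean

MS_PER_MINUTE = 60 * 1000


def minutes_to_ms(minutes: float) -> int:
    """Convert minutes to milliseconds, the unit used for the alert threshold."""
    return round(minutes * MS_PER_MINUTE)


def should_alert(durations_ms: list[float], threshold_ms: int) -> bool:
    """Mimic a static 'Greater than' condition with Average aggregation:
    fire when the mean duration in the evaluation window exceeds the threshold."""
    if not durations_ms:
        return False  # no successful DAG runs in the window, so nothing to compare
    return mean(durations_ms) > threshold_ms


threshold = minutes_to_ms(2)
print(threshold)  # 120000
print(should_alert([250_000, 248_000], threshold))  # True
```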

Click Add new alert rule and fill in the details, then add custom details to the alert:

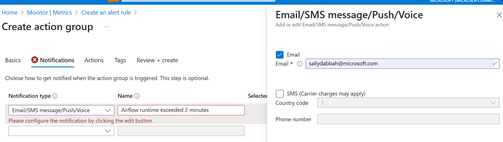
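For reference, the alert configured above can also be described as a metric-alert request body. The minimal sketch below builds one in Python without calling Azure. The resource IDs are placeholders, the metric ID behind "Airflow Integration Runtime DAG Run Duration Success" is an assumption (copy the exact name from the portal's metric picker), and the severity, evaluation frequency, and window size are illustrative choices rather than values from this walkthrough.

```python
import json

# Hypothetical placeholders: substitute your own resource IDs.
FACTORY_ID = (
    "/subscriptions/<subscription-id>/resourceGroups/<resource-group>"
    "/providers/Microsoft.DataFactory/factories/<factory-name>"
)
ACTION_GROUP_ID = (
    "/subscriptions/<subscription-id>/resourceGroups/<resource-group>"
    "/providers/Microsoft.Insights/actionGroups/<action-group-name>"
)
# Assumed metric ID; verify the exact name in the portal.
METRIC_NAME = "AirflowIntegrationRuntimeDagRunDurationSuccess"


def build_metric_alert(scope: str, metric: str, threshold_ms: int, action_group: str) -> dict:
    """Build a metric-alert body that fires when the average of `metric`
    over the window is greater than `threshold_ms`."""
    return {
        "location": "global",
        "properties": {
            "description": "Average successful DAG run duration is over the threshold",
            "severity": 3,  # illustrative choice
            "enabled": True,
            "scopes": [scope],
            "evaluationFrequency": "PT5M",  # how often the rule is checked
            "windowSize": "PT15M",  # how much history each check aggregates
            "criteria": {
                "odata.type": "Microsoft.Azure.Monitor.SingleResourceMultipleMetricCriteria",
                "allOf": [
                    {
                        "criterionType": "StaticThresholdCriterion",
                        "name": "DagRunDurationOverThreshold",
                        "metricName": metric,
                        "operator": "GreaterThan",
                        "threshold": threshold_ms,
                        "timeAggregation": "Average",
                    }
                ],
            },
            "actions": [{"actionGroupId": action_group}],
        },
    }


if __name__ == "__main__":
    rule = build_metric_alert(FACTORY_ID, METRIC_NAME, 120_000, ACTION_GROUP_ID)
    print(json.dumps(rule, indent=2))
```

Passing the threshold in milliseconds keeps the body consistent with the unit the metric is reported in, so 2 minutes becomes 120000.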

Create an action group -> in the Notifications tab -> configure the email address and the message to be sent.

Click on Review and Create.

In the Monitor UI -> click the Alerts tab -> click Action groups.

You can see your action group that you just created.
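The action group itself is a small resource. As a sketch under stated assumptions, the Python below builds an action-group body with a single email receiver; the short name, receiver name, and address used in the usage line are hypothetical, and the 12-character check reflects the limit on action group short names.

```python
import json


def build_email_action_group(short_name: str, receiver_name: str, email: str) -> dict:
    """Build an action-group body with a single email receiver."""
    if len(short_name) > 12:
        # Action group short names are limited to 12 characters.
        raise ValueError("short_name must be at most 12 characters")
    return {
        "location": "Global",
        "properties": {
            "groupShortName": short_name,
            "enabled": True,
            "emailReceivers": [
                {
                    "name": receiver_name,
                    "emailAddress": email,
                    "useCommonAlertSchema": True,
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    group = build_email_action_group("dagalerts", "oncall", "you@example.com")
    print(json.dumps(group, indent=2))
```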

To receive an email from the alert, you need to wait for one more run of your pipeline so that ADF sends the new data to Azure Monitor.

Alert via email:

Conclusion:

Logs and metrics are essential for efficient data workflow management in Azure Data Factory, offering insights, troubleshooting capabilities, and support for data-driven decision-making.

As a further step, you can visualize your data by building a Grafana dashboard on top of Azure Monitor metrics. Check it out in the Microsoft documentation: Quickstart: create an Azure Managed Grafana instance using the Azure portal | Microsoft Learn

Links:

- Diagnostics logs and metrics for Managed Airflow - Azure Data Factory | Microsoft Learn

- Mastering the Art: Orchestrating ADF with the Power of Managed Airflow - Microsoft Community Hub

- Monitor data factories using Azure Monitor - Azure Data Factory | Microsoft Learn

Call-To-Action:

- Make sure to establish all connections before starting to work on Managed Airflow.

- Check the Microsoft documentation on Managed Airflow.

- Please help us improve by sharing your valuable Managed Airflow Preview feedback by emailing us at ManagedAirflow@microsoft.com

- Follow me on LinkedIn for more content: Sally Dabbah | LinkedIn