This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

Intro

In my previous blog I showed you how to migrate from Firebase to Cosmos DB with the old Azure Cosmos DB Data Migration tool. Today I want to make your life even easier with the new open-source Azure Cosmos DB Desktop Data Migration Tool. Feel free to visit the repo and contribute. In this post we will explore the new capabilities of this tool.

Why use this tool and not the previous one?

- It currently supports 11 extensions for data sources that you can sync with Azure Cosmos DB.

- It offers both a command-line and a UI interface.

- Custom plugin: Integrating this tool with your own data migration workflow.

- Copying data by querying

- Importing multiple JSON files into Azure Cosmos DB containers

Overview

The Azure Cosmos DB Desktop Data Migration Tool is an open-source project containing a command-line application that provides import and export functionality for Azure Cosmos DB.

Quick Installation

To use the tool, download the latest zip file for your platform (win-x64, mac-x64, or linux-x64) from Releases and extract all files to your desired install location. To begin a data transfer operation, first populate the migrationsettings.json file with appropriate settings for your data source and sink (see detailed instructions below or review examples), and then run the application from a command line: dmt.exe on Windows or dmt on other platforms.
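If you would rather start the tool from a script than from a terminal, here is a minimal Python sketch of the step above. The helper names `dmt_executable_name` and `run_migration` are mine, not part of the tool, and the sketch assumes that the tool, when started without arguments, reads the migrationsettings.json that sits in its working directory.

```python
import platform
import subprocess
from pathlib import Path


def dmt_executable_name(system: str) -> str:
    """Return the executable name: dmt.exe on Windows, dmt on other platforms."""
    return "dmt.exe" if system.lower() == "windows" else "dmt"


def run_migration(install_dir: str) -> int:
    """Start the tool from the folder where the release zip was extracted."""
    folder = Path(install_dir)
    exe = folder / dmt_executable_name(platform.system())
    # Assumption: without arguments, the tool picks up migrationsettings.json
    # from its working directory, so we run it with cwd set to that folder.
    completed = subprocess.run([str(exe)], cwd=folder, check=False)
    return completed.returncode


if __name__ == "__main__":
    # Only prints the name that would be used on this machine.
    print(dmt_executable_name(platform.system()))
```

Calling `run_migration("<your install folder>")` returns the exit code of the process, which you can check in a build or test script.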

After extracting you will find the following files

Click dmt.exe to run the application. You will get a list of the data source plugins you can work with.

How does the tool work?

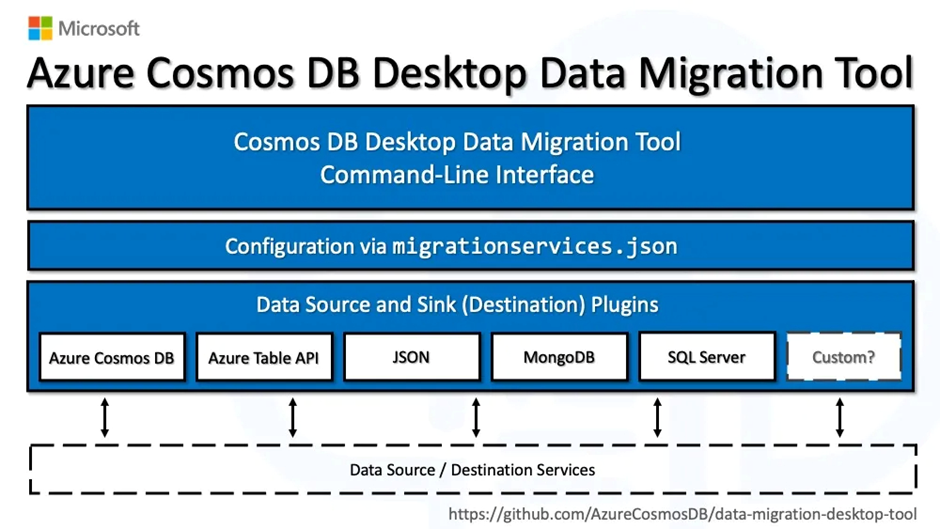

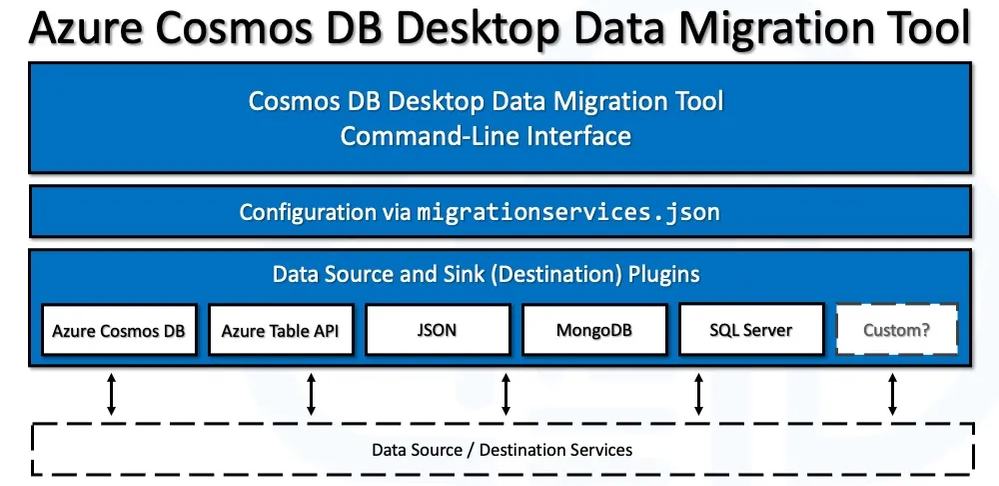

As seen in the above diagram, the process for using the Azure Cosmos DB Desktop Data Migration Tool is as follows:

- On your local computer, a data migration is started using the command-line interface (CLI) of the Azure Cosmos DB Desktop Data Migration Tool.

- The CLI tool reads the migrationsettings.json file, which holds the Source and Destination (also known as Sink) plugin settings, including service connection strings and other plugin-specific options.

- The tool then performs the configured data migration by calling the necessary plugins for the Source and Destination (also known as Sink) data services.
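The steps above can be mirrored in a small pre-flight check that you run before starting a migration. This is only a sketch of my own and not how the tool itself loads its settings; it assumes the top-level keys `Source`, `Sink`, and the optional `Operations` array described later in this post.

```python
import json


def summarize_settings(text: str) -> str:
    """Parse settings JSON and describe the transfer it configures."""
    settings = json.loads(text)
    # Assumption: every settings file names one Source and one Sink plugin.
    missing = [key for key in ("Source", "Sink") if key not in settings]
    if missing:
        raise ValueError(f"missing required keys: {', '.join(missing)}")
    # A file without an Operations array describes a single transfer.
    operations = settings.get("Operations", [])
    count = len(operations) if operations else 1
    return f"{settings['Source']} -> {settings['Sink']} ({count} operation(s))"


if __name__ == "__main__":
    print(summarize_settings('{"Source": "json", "Sink": "cosmos-nosql"}'))
```

A check like this catches a misspelled key or broken JSON before any data is moved.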

Working with MongoDB extension?

The sample that follows provides an overview of how the migrationsettings.json setup is specified with MongoDB as the data Source and Cosmos DB as the Destination (also known as the Sink):
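Here is a minimal sketch that builds such a settings file from Python and prints it as JSON. Treat the plugin names (`mongodb`, `cosmos-nosql`) and the setting keys (`DatabaseName`, `Collection`, `Database`, `Container`, `PartitionKeyPath`) as assumptions to verify against the extension documentation in the repo. The connection strings, database names, and the function name are placeholders of mine.

```python
import json


def build_mongo_to_cosmos_settings(
    mongo_connection: str,
    mongo_database: str,
    mongo_collection: str,
    cosmos_connection: str,
    cosmos_database: str,
    cosmos_container: str,
    partition_key_path: str = "/id",
) -> dict:
    """Assemble a settings dictionary for a MongoDB to Cosmos DB transfer."""
    return {
        "Source": "mongodb",      # assumed plugin name
        "Sink": "cosmos-nosql",   # assumed plugin name
        "SourceSettings": {       # assumed key names for the MongoDB source
            "ConnectionString": mongo_connection,
            "DatabaseName": mongo_database,
            "Collection": mongo_collection,
        },
        "SinkSettings": {         # assumed key names for the Cosmos DB sink
            "ConnectionString": cosmos_connection,
            "Database": cosmos_database,
            "Container": cosmos_container,
            "PartitionKeyPath": partition_key_path,
        },
    }


if __name__ == "__main__":
    settings = build_mongo_to_cosmos_settings(
        "mongodb://localhost:27017", "sales", "orders",
        "<cosmos-connection-string>", "sales", "orders",
    )
    # Save this output as migrationsettings.json next to the dmt executable.
    print(json.dumps(settings, indent=2))
```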

Configuring the tool through the migrationsettings.json file makes data migration configurations reusable. With the exact same options each time, you can run and rerun the data migration as many times as necessary.

To get everything right in development and test environments, you'll probably need to run the migration more than once, but you usually only need to do it once when moving data to production. The migrationsettings.json file supports this test, debug, then migrate-to-production workflow.

The dmt.exe executable is called when the Azure Cosmos DB Desktop Data Migration Tool is run from the command line.

Working with JSON extension?

Executing Multiple Operations

In a typical migrationsettings.json file, the transfer procedure is defined by a single set of SourceSettings and a single set of SinkSettings. In some cases, it may be more practical to perform various tasks at once rather than having to create different files and launch the application numerous times.

An array of SourceSettings/SinkSettings pairs can be added to the Operations property in the settings file to handle these kinds of scenarios. The operations run one after another, but only one command is needed to complete them. They can also share common settings to minimize the number of unique settings in the file. One current restriction is that the Source and Sink types must be identical for all operations; however, this could change in the future.

Example: Multiple JSON Files Into Cosmos Containers

In this example, several JSON files are read and then written to several databases and containers inside the same Cosmos account. Notice that the parent settings, which in this example include an environment variable for the connection string, supply the necessary ConnectionString and PartitionKeyPath values, so the individual operations don't need to specify them. Also note that one operation uses a distinct value that overrides the inherited parent value, while the other three use the /id partition key path set at the top.
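Here is a minimal sketch of that layout, written in Python so the inheritance can be checked. The file names, database and container names, and the `COSMOS_CONNECTION_STRING` variable are hypothetical, and the `FilePath` key is an assumption about the JSON extension. The `effective_sink_settings` helper is my own illustration of the override behaviour described above, not the tool's implementation.

```python
import json
import os

# Hypothetical environment variable; a placeholder is used when it is not set.
CONNECTION = os.environ.get("COSMOS_CONNECTION_STRING", "<cosmos-connection-string>")

settings = {
    "Source": "json",
    "Sink": "cosmos-nosql",
    # Parent settings shared by every operation below.
    "SinkSettings": {"ConnectionString": CONNECTION, "PartitionKeyPath": "/id"},
    "Operations": [
        {"SourceSettings": {"FilePath": "products.json"},
         "SinkSettings": {"Database": "inventory", "Container": "products"}},
        {"SourceSettings": {"FilePath": "reviews.json"},
         "SinkSettings": {"Database": "inventory", "Container": "reviews"}},
        {"SourceSettings": {"FilePath": "customers.json"},
         "SinkSettings": {"Database": "crm", "Container": "customers"}},
        # This operation overrides the inherited partition key path.
        {"SourceSettings": {"FilePath": "orders.json"},
         "SinkSettings": {"Database": "crm", "Container": "orders",
                          "PartitionKeyPath": "/customerId"}},
    ],
}


def effective_sink_settings(config: dict) -> list[dict]:
    """Merge the parent SinkSettings into each operation; operation values win."""
    parent = config.get("SinkSettings", {})
    return [{**parent, **op.get("SinkSettings", {})}
            for op in config.get("Operations", [])]


if __name__ == "__main__":
    print(json.dumps(settings, indent=2))
```

Calling `effective_sink_settings(settings)` returns one merged dictionary per operation, so you can confirm which partition key path each container would end up with before running anything.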

Working with Azure Cosmos DB extension?

Copying Cosmos Data By Querying

Here, data from a single partition in a Cosmos container is copied into distinct containers, each of which holds a subset of the original data. With shared settings, the only differences between the operations are the queries used to filter the data and the containers each one writes to. In the example below, we migrate users from the user-management database to the audit-logs database, separating them into active-users, admin, and inactive-users containers by querying the user-management database. Pretty cool, right?
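A minimal sketch of such a settings file, again generated from Python. The database and container names come from the scenario above; the source container name `users`, the `status` and `role` fields in the queries, and the setting keys `Query` and `PartitionKeyValue` are assumptions to adapt to your own data and to the extension documentation.

```python
import json

# Hypothetical queries: one per target container, filtering on assumed fields.
QUERIES = {
    "active-users": "SELECT * FROM c WHERE c.status = 'active'",
    "admin": "SELECT * FROM c WHERE c.role = 'admin'",
    "inactive-users": "SELECT * FROM c WHERE c.status = 'inactive'",
}


def build_query_copy_settings(connection: str, partition_key_value: str) -> dict:
    """Build settings that split one source partition across several containers."""
    return {
        "Source": "cosmos-nosql",
        "Sink": "cosmos-nosql",
        # Shared source settings: same database, container, and partition.
        "SourceSettings": {
            "ConnectionString": connection,
            "Database": "user-management",
            "Container": "users",  # hypothetical source container name
            "PartitionKeyValue": partition_key_value,
        },
        # Shared sink settings: same account and target database.
        "SinkSettings": {
            "ConnectionString": connection,
            "Database": "audit-logs",
            "PartitionKeyPath": "/id",
        },
        # Only the query and the target container differ per operation.
        "Operations": [
            {"SourceSettings": {"Query": query},
             "SinkSettings": {"Container": container}}
            for container, query in QUERIES.items()
        ],
    }


if __name__ == "__main__":
    print(json.dumps(build_query_copy_settings("<cosmos-connection-string>", "123"), indent=2))
```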

Read More:

Check out all the supported data source extensions here

Walkthrough tutorial on JSON to Azure Cosmos-NoSQL migration