This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

Set up

- Azure Synapse Analytics (Or Fabric Data Science)

- Spark Notebook

- Synapse Dedicated SQL Pool (or Fabric Managed Table/Datawarehouse table) You can write the data out to any relational database using some code modifications}

- Azure Key Vault {Slight modification in code if you are using Fabric}

- PowerBI

Set up components (if you are using Synapse Analytics)

Note : You are going to need admin permissions (or request an admin) to do some of the set up components

PowerBI REST API set up

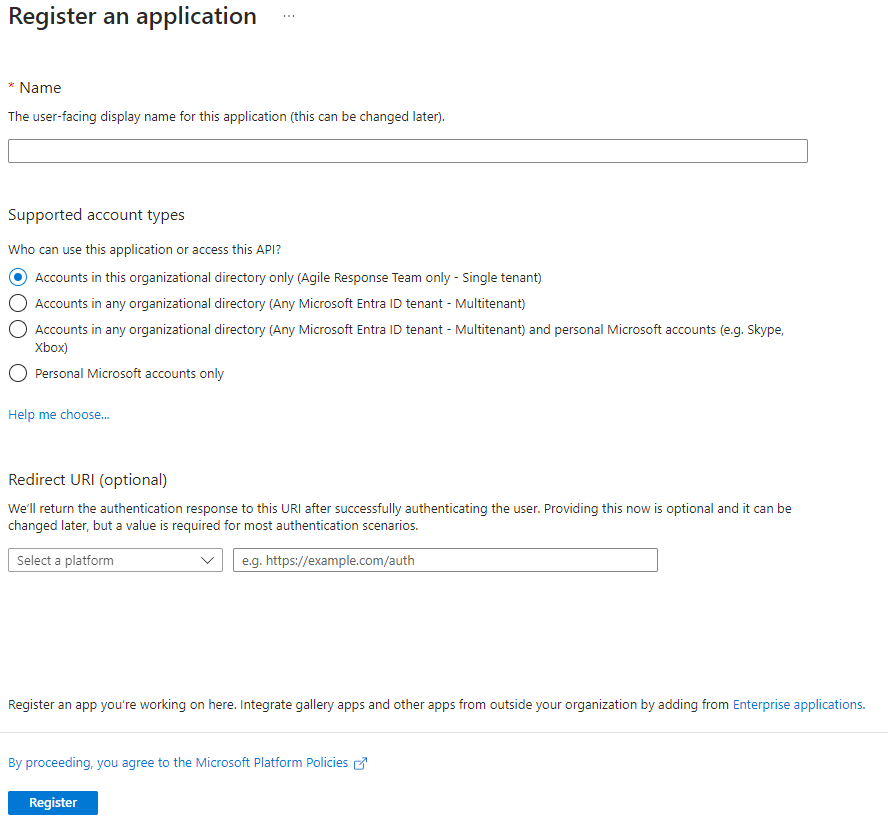

We are using an App Registration for access to the PowerBI REST API

Use Entra to create a new App Registration. Fill in the details as per your specifications

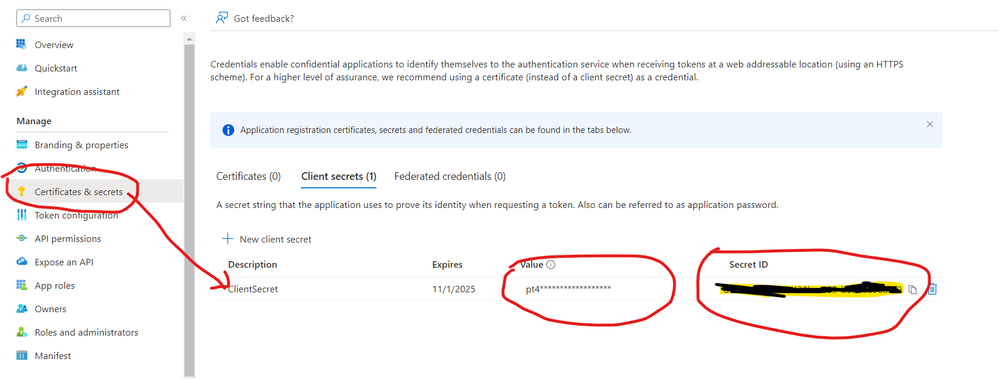

Once you have created the App Registration, navigate to the “Certificates and Secrets” section and create a new client secret

Note : Keep the value (it is client secret) and the client id in a secure place. Once the screen displays the value, it will not display it again in case you navigate away. If that happens, you have to delete this secret and create a new one.

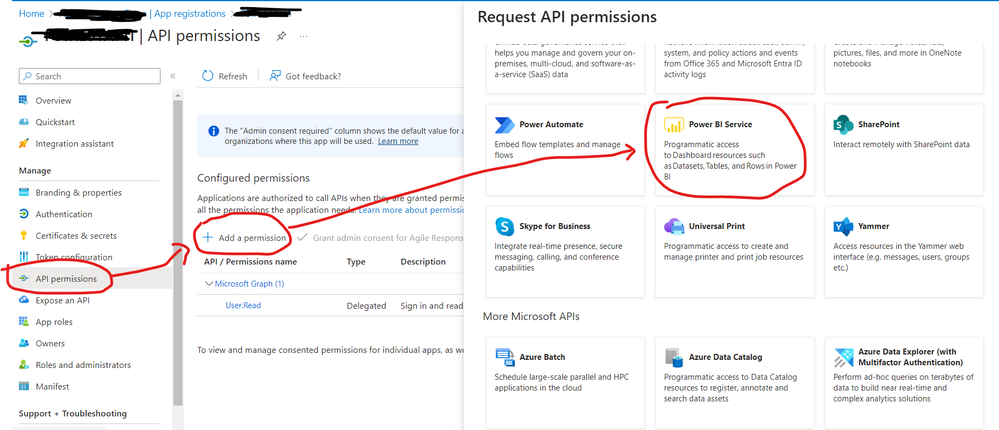

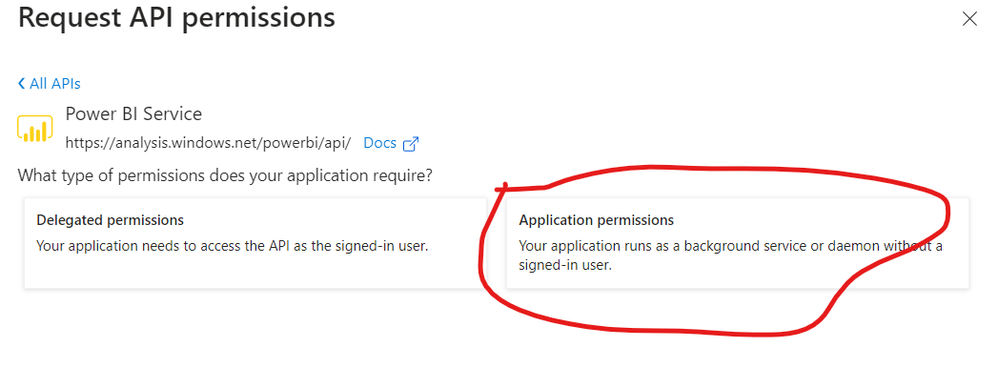

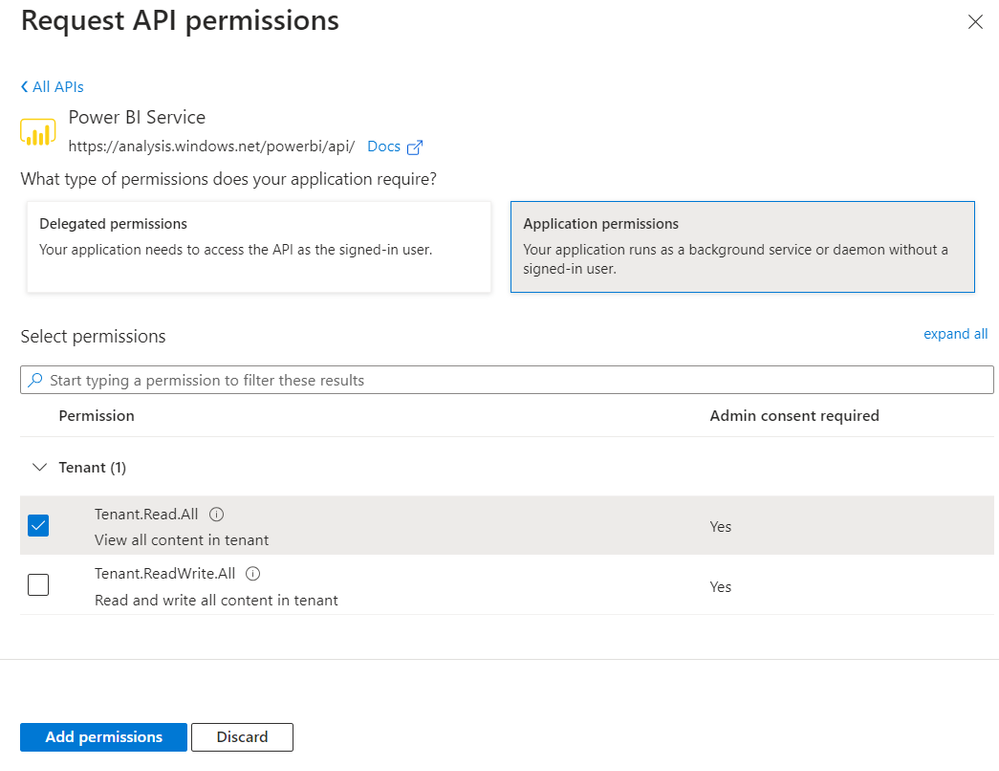

Once you have the secret created, we have to add some API Permissions (This might need a PowerBI admin)

Once you have this piece ready (and potentially added the App Registration to an Entra Group), have your PowerBI Admin follow these steps

These two steps will allow the new App Registration to access the PowerBI APIs via the REST call.

The PowerBI REST APIs have an excellent playground that can be accessed at https://learn.microsoft.com/en-us/rest/api/power-bi/admin

This playground can be used to test out the queries before you incorporate them in the code.

Azure Key Vault

Use an existing Key Vault or create a new one – this is required so that you don’t have the client secret (created earlier) in open format in your Spark Notebook.

To add a secret to the vault, follow the steps:

- Navigate to your new key vault in the Azure portal

- On the Key Vault settings pages, select Secrets.

- Select on Generate/Import.

- On the Create a secret screen choose the following values:

- Upload options: Manual.

- Name: Type a name for the secret. The secret name must be unique within a Key Vault. The name must be a 1-127 character string, starting with a letter and containing only 0-9, a-z, A-Z, and -.

- Value: Type the value for the secret. This is the value you saved from the earlier step when you created the App Registration and got a secret value.

- Leave the other values to their defaults. Select Create.

Azure Synapse Analytics

Once you have the first two parts done, we are ready to move to Synapse Analytics (or Fabric)

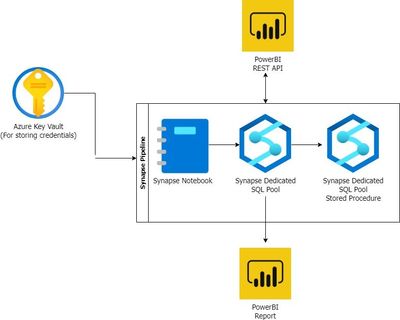

The notional architecture outlined above uses a Synapse Analytics Spark Notebook with a Dedicated SQL Pool to hold the data. This can be modified to have a Fabric notebook with a Managed Table/Datawarehouse table with some code modifications

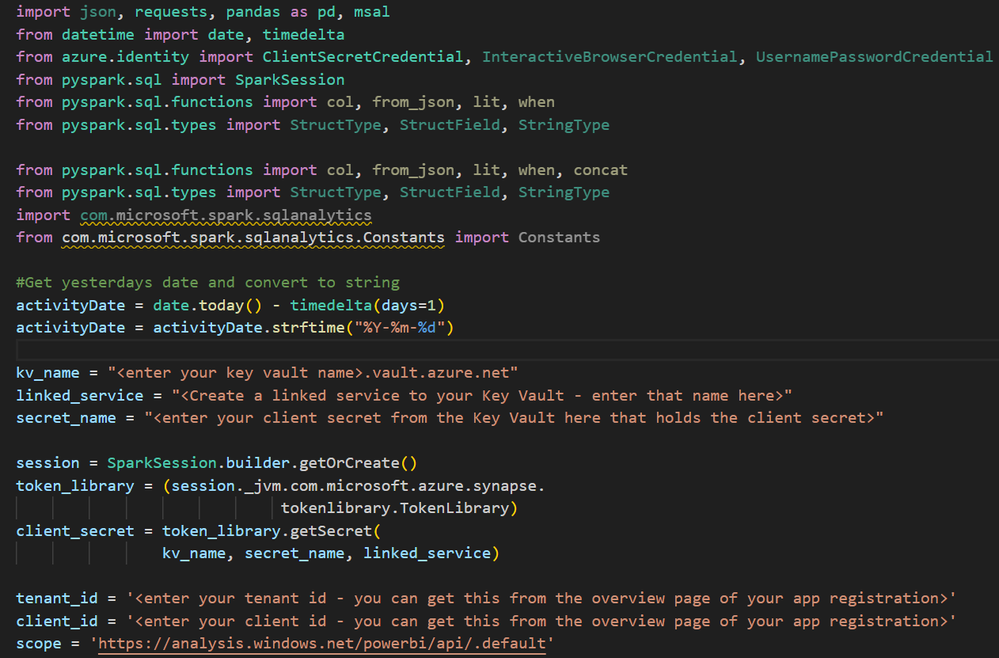

Spark Notebook

Navigate to the Notebooks under your Synapse Notebook and select an existing Spark Pool (or provision a new Spark Pool) and use PySpark as your language

Give a name to your Spark Notebook and click “Publish All” to commit your changes

The code modules are all available HERE

The entire end to end code is available for download HERE

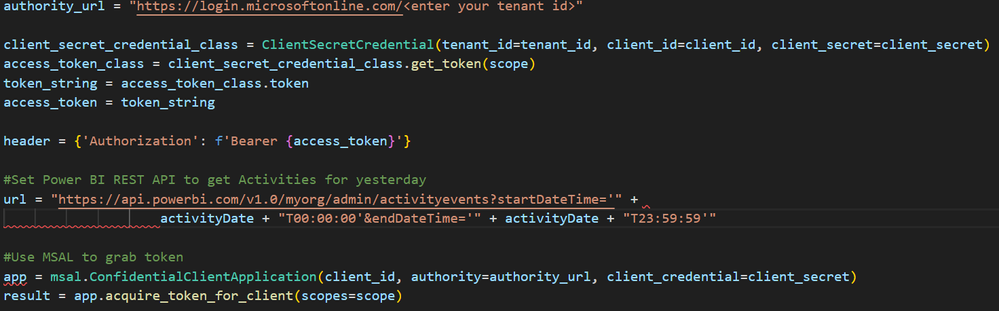

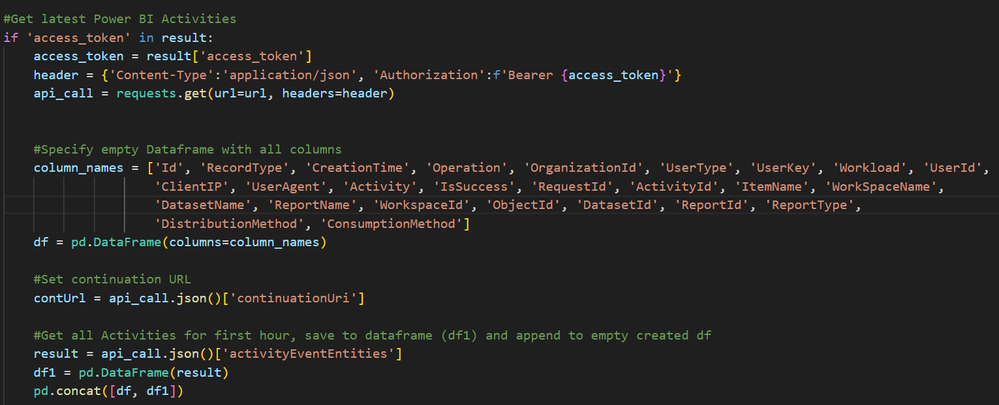

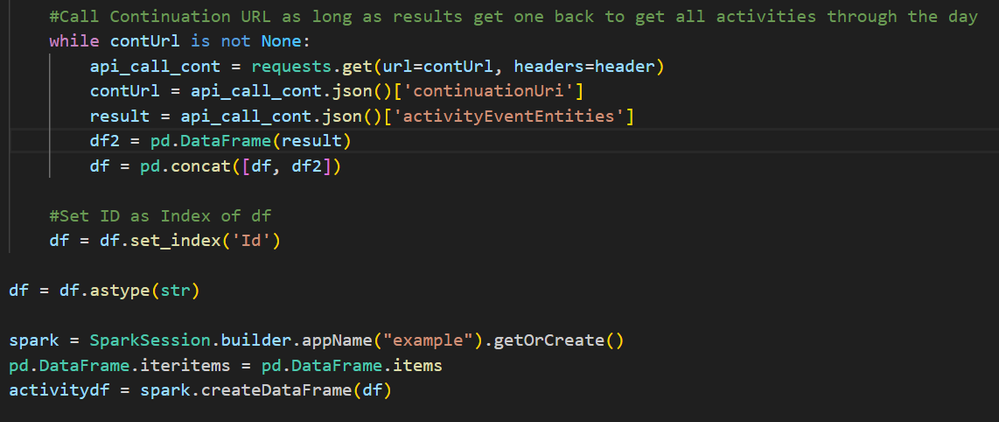

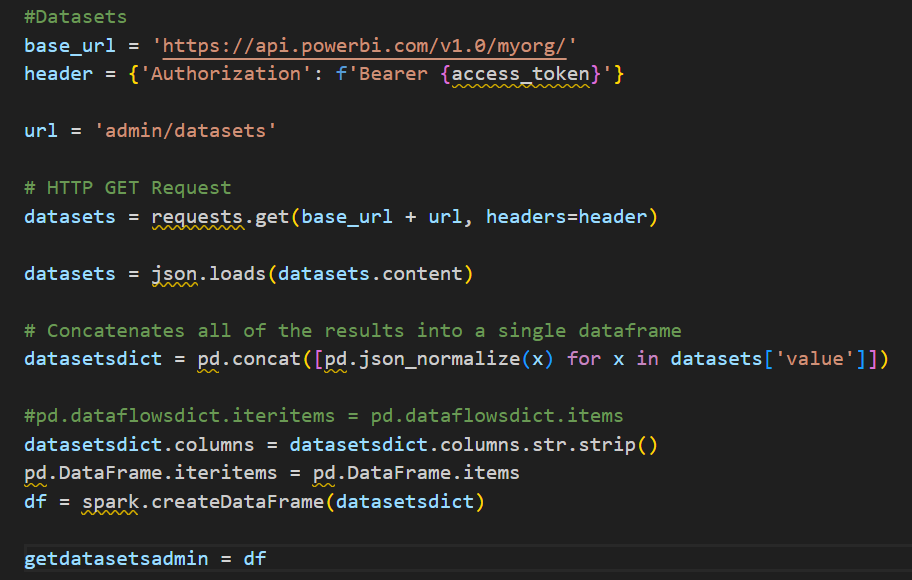

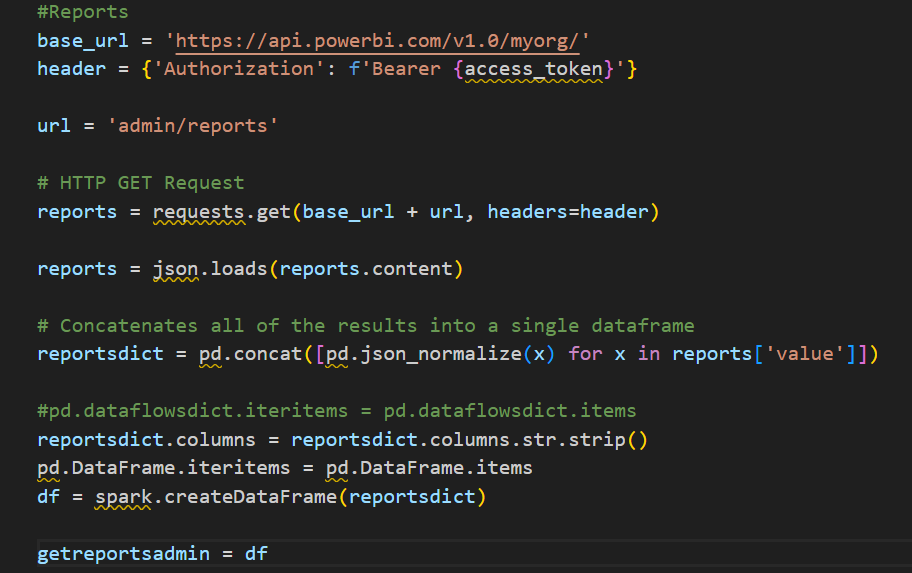

The entire code is represented in code snippets via screenshots here in case you want to follow along step by step.

Note : Please take care of code indentations – they might have moved and might introduce errors.

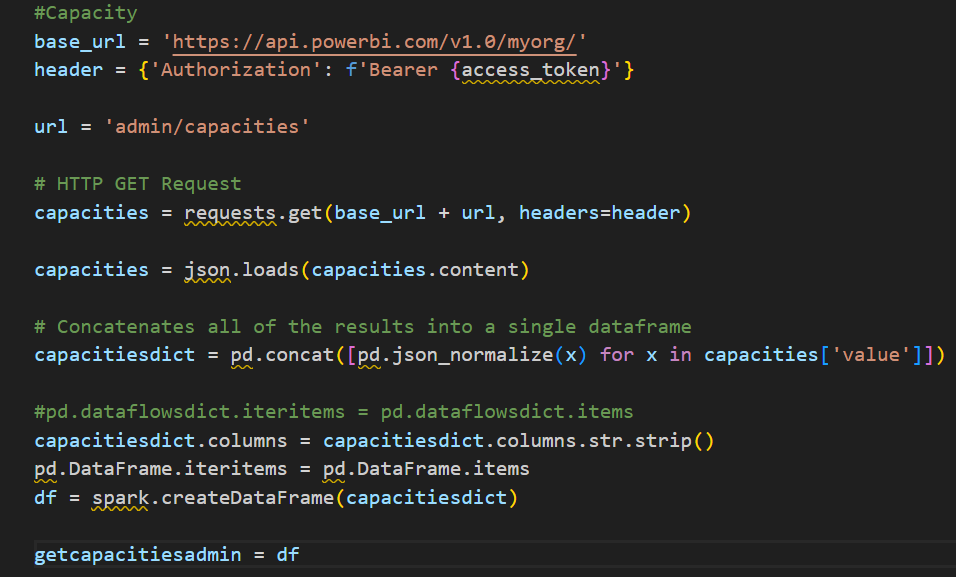

Once the base activity events pull is done, we can pull in other associated activities to complete the picture.

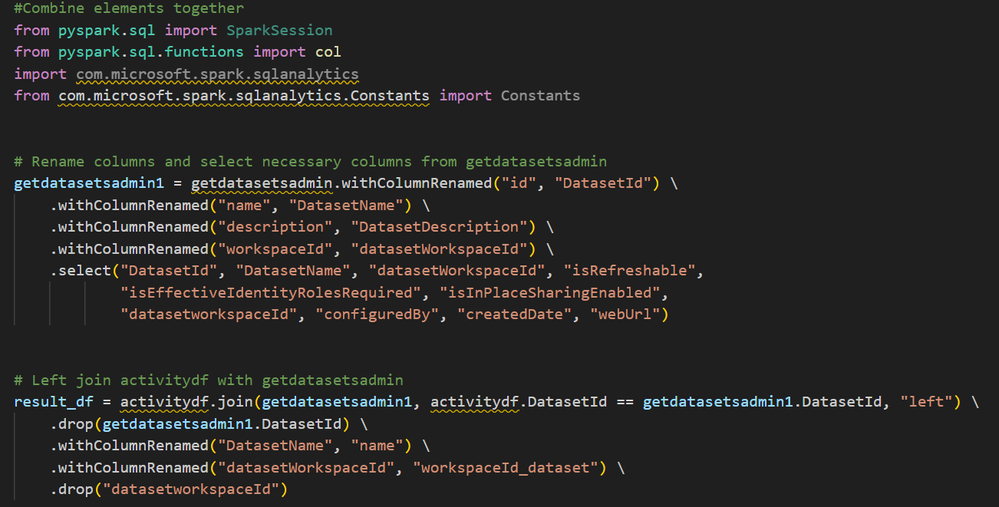

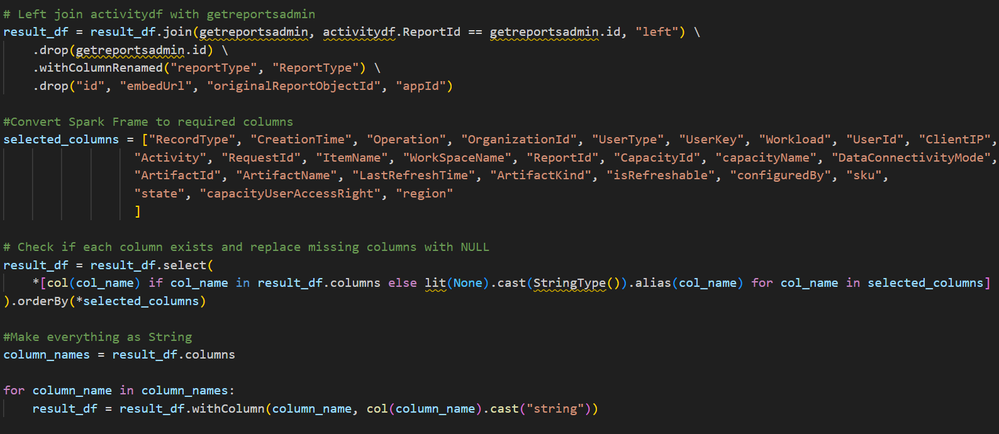

Now that we all the elements together in different spark data frames, we can have a set of consolidated data frames to combine the data together

Our final data frame is result_df which has all the values we need for final reporting/analysis.

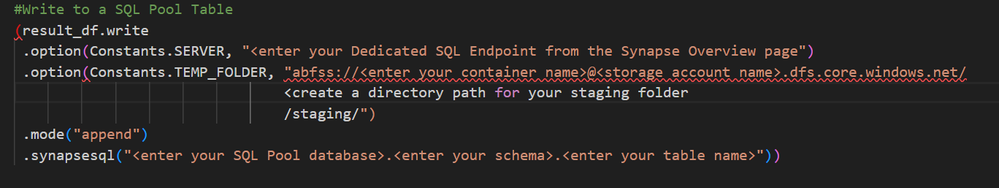

The code can be scheduled to run on a trigger for the previous day’s activity log. Because of this, we have to append the data into a Synapse Dedicated SQL Pool (or any relational database of your choice)

Use an existing Synapse Dedicated SQL Pool or create a small (DW100) one

Run the create table script found HERE in your pool to get a table of your choice.

Once you get your table created, you have to incorporate the final Spark Code that takes the Spark Dataframe (result_df) and appends the data to the SQL Pool table

Synapse Dedicated SQL Pool Stored Procedure

Since the Spark job appends data, there is a need to run a script that will check for and remove duplicate records if inserted. We will be using a Stored Procedure on the Pool itself but this can be easily modified to run on any relational database of your choice.

Note : if you are using Fabric, it might make sense to extend the Spark Notebook to have another code module that does the delete of duplicate records from the Managed Table

The Stored Procedure script can be found HERE

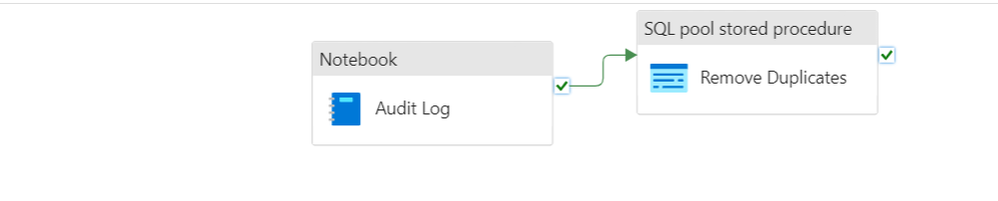

Synapse Pipeline

Once you have all the individual components in place, we can encapsulate the Spark Notebook and the Stored Procedure in a Synapse Pipeline. This pipeline can be triggered on a daily schedule.

Now that the pipeline is set to be running, we can connect the data from the SQL Pool to a PowerBI Dashboard and produce a report that outlines all the key activities.

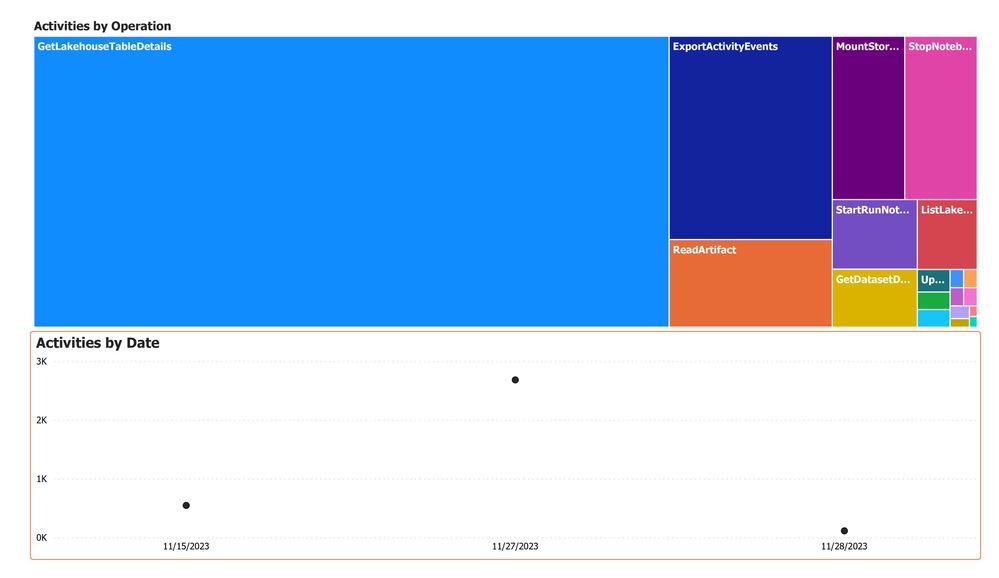

PowerBI Report

Once the data is ready, you can build a simple PowerBI report to visualize the data