This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

OVERVIEW

Azure CycleCloud is an enterprise-friendly tool for orchestrating and managing High Performance Computing (HPC) environments on Azure. With CycleCloud, users can provision infrastructure for HPC systems, deploy familiar HPC schedulers, and automatically scale the infrastructure to run jobs efficiently at any scale. Through CycleCloud, users can create different types of file systems and mount them to the compute cluster nodes to support HPC workloads.

Azure CycleCloud is targeted at HPC administrators and users who want to deploy an HPC environment with a specific scheduler in mind. Commonly used schedulers such as Slurm, PBSPro, LSF, Grid Engine, and HTCondor are supported out of the box. CycleCloud is the sister product to Azure Batch, which provides a Scheduler as a Service on Azure.

CycleCloud provides methods to customize clusters via custom OS images, or bootstrap options like Cloud-init and Cluster-init. Using a custom OS image can help reduce node boot time compared to using Cloud-init or Cluster-init, but maintaining the custom OS image can be a big commitment. Using Cloud-init or Cluster-init can be a faster way to install packages, configure mounts, or edit config files. The decision to use Cloud-init vs Cluster-init generally depends on what you need to do, whether versioning is needed, etc. This blog will focus on using Cloud-init and extending it with the Linux "at" utility.

DISCUSSION

"Cloud-init is the industry standard multi-distribution method for cross-platform cloud instance initialization. It is supported across all major public cloud providers, provisioning systems for private cloud infrastructure, and bare-metal installations."

(REF: http://cloudinit.readthedocs.io/en/latest/)

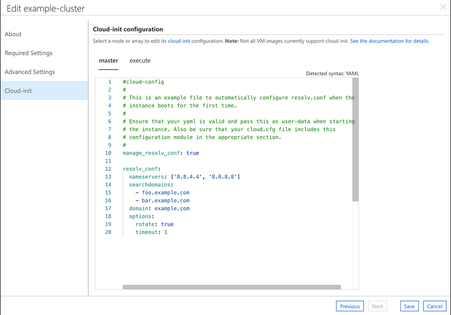

Azure CycleCloud provides the ability to configure Cloud-init in the GUI...

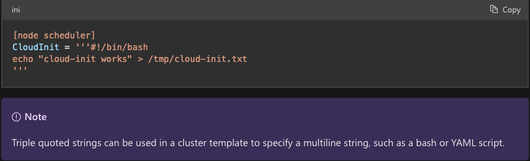

...or via the cluster template file:

Cloud-init is (in my opinion) "easier" than a cluster-init project because it can be configured directly in the GUI. (NOTE: the default GUI Cloud-init is not an exported parameter when using the "cyclecloud export_parameters" CLI command. The cluster template can be modified to create a new "parameterized" cloud-init that will be exported as a parameter if needed). The native Cloud-init syntax, as shown in the GUI capture above, is directly supported by CycleCloud, which is very useful when using multiple Linux distributions. A shell script can also be configured directly in the GUI for Cloud-init.

Here are some example use cases for Cloud-init:

- install a Linux package

- mount an NFS export (NOTE: the NFS client pkg will need to be installed by your Cloud-init)

- download a file, pkg, key, etc
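As a rough illustration, the three use cases above could be sketched in a Cloud-init shell script along these lines. The server name, export path, mount point, URL, and the APPLY switch are placeholders invented for this example, and the package name assumes a RHEL-family distribution such as CentOS.

```shell
#!/bin/bash
# Sketch only: every value below is a placeholder, not a real endpoint.
NFS_SERVER="${NFS_SERVER:-nfs.example.com}"
NFS_EXPORT="${NFS_EXPORT:-/export/data}"
MOUNT_POINT="${MOUNT_POINT:-/mnt/data}"
FILE_URL="${FILE_URL:-https://example.com/tool.tar.gz}"
DOWNLOAD_TO="${DOWNLOAD_TO:-/tmp/tool.tar.gz}"

# Install a Linux package (nfs-utils is the NFS client on RHEL-family systems).
install_nfs_client() {
    yum -y install nfs-utils
}

# Mount an NFS export; this needs the client package installed above.
mount_nfs_export() {
    mkdir -p "$MOUNT_POINT"
    mount -t nfs "${NFS_SERVER}:${NFS_EXPORT}" "$MOUNT_POINT"
}

# Download a file; -f makes curl fail on HTTP errors instead of saving an error page.
download_file() {
    curl -fsSL -o "$DOWNLOAD_TO" "$FILE_URL"
}

# Nothing runs unless APPLY=1 is set, so the functions can be tried one at a time.
# For real use in Cloud-init, call the three steps unconditionally instead.
if [ "${APPLY:-0}" = "1" ]; then
    install_nfs_client && mount_nfs_export && download_file
fi
```

Each step is a separate function so that a failing install stops the mount from being attempted.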

Here are examples of use cases not well suited for Cloud-init:

- modify a scheduler config file (e.g. slurm.conf)

- modify a CycleCloud (i.e. Jetpack) configured mount, e.g. /sched or /shared

- modify the CycleCloud autoscale.json file

These tasks are better suited to Cluster-init, because it runs after Jetpack has configured the CycleCloud and cluster components (e.g. OpenPBS), whereas Cloud-init finishes before the Jetpack phase begins. However, combined with the Linux "at" utility, Cloud-init can support these use cases as well.

CLOUD-INIT + "AT"

A Redhat.com article describes the "at" utility as follows:

The at and batch (at -b) commands read from standard input or a specified file. The at tool allows you to specify that a command will run at a particular time. The batch command will execute commands when the system load levels drop to a specific point. Both commands use the user's shell.
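To make the two input modes in that description concrete, here is a small sketch of how jobs can be handed to "at" and "batch". The job text, file names, and function names are hypothetical and chosen only for illustration.

```shell
#!/bin/bash
# Hypothetical job text and file names, for illustration only.

# Form 1: "at" reads the commands to run from standard input.
schedule_from_stdin() {
    echo 'date > /tmp/at_demo.txt' | at now + 1 minute
}

# Form 2: "at" reads the commands from a file given with -f.
schedule_from_file() {
    at -f "$1" now + 5 minutes
}

# "batch" takes no time argument; it runs the job when system load allows.
schedule_when_idle() {
    batch < "$1"
}
```

After calling, for example, `schedule_from_file /tmp/job.sh`, the `atq` command lists the pending jobs and `atrm` followed by a job number removes one.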

Combining "at" with Cloud-init pairs the ease of configuring the script in the CycleCloud GUI with the ability to modify actions performed by Jetpack. The high-level structure of a Cloud-init script using "at" looks like this:

- If using CentOS 7, install the EPEL repo

- install/enable the "at" package

- write a new script file locally (including a sleep loop) within the Cloud-init script

- schedule the "at" command to run the new script file (so that Cloud-init can finish and move to the Jetpack phase)

A simple example of this method is to change the scheduling behavior of the CycleCloud-provided OpenPBS project. By default, the CycleCloud autoscale.json file is configured to use physical CPUs for VM resources in the OpenPBS cluster. Most Azure VMs use virtual CPUs; for example, the F72s_v2 VM has 72 virtual CPUs and 36 physical CPUs. Scheduling against the 72 virtual CPUs requires modifying the autoscale.json file to use virtual CPUs. Since this file is created during the CycleCloud Jetpack phase, it is not available when Cloud-init runs. The Cloud-init script below creates a new script file that checks for the existence of the autoscale.json file and, if it is not found, sleeps for 30 seconds and tries again. Once the file exists, the while loop exits and the script modifies the autoscale.json file to use virtual CPUs:
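What follows is a minimal sketch of that approach, not the original script from this post. The autoscale.json path, the node.pcpu_count and node.vcpu_count setting names, the helper file name, and the APPLY switch are all assumptions made for illustration; check them against your own cluster before relying on them.

```shell
#!/bin/bash
# Sketch of a Cloud-init script that defers work until Jetpack has run.
# Assumed values (not confirmed by this post): the file path, the two
# setting names swapped by sed, and the helper script location.
AUTOSCALE_JSON="${AUTOSCALE_JSON:-/opt/cycle/pbspro/autoscale.json}"
HELPER_SCRIPT="${HELPER_SCRIPT:-/tmp/use_vcpus.sh}"
OLD_ATTR="${OLD_ATTR:-node.pcpu_count}"
NEW_ATTR="${NEW_ATTR:-node.vcpu_count}"
POLL_SECONDS="${POLL_SECONDS:-30}"

# Step 1: install and enable "at" (on CentOS 7, add the EPEL repo first).
install_at() {
    yum -y install epel-release
    yum -y install at
    systemctl enable atd
    systemctl start atd
}

# Step 2: write the helper script. The heredoc delimiter is unquoted, so the
# settings above are expanded now and the helper holds only literal values.
write_helper() {
    cat > "$HELPER_SCRIPT" <<EOF
#!/bin/bash
# Wait for Jetpack to create the file, checking every ${POLL_SECONDS}s.
while [ ! -f "${AUTOSCALE_JSON}" ]; do
    sleep ${POLL_SECONDS}
done
# The file exists now: switch from physical to virtual CPUs.
sed -i 's/${OLD_ATTR}/${NEW_ATTR}/g' "${AUTOSCALE_JSON}"
EOF
    chmod +x "$HELPER_SCRIPT"
}

# Step 3: hand the helper to "at" so Cloud-init can return immediately.
schedule_helper() {
    at -f "$HELPER_SCRIPT" now + 1 minute
}

# Nothing runs unless APPLY=1 is set, so the functions can be tried one at a time.
# For real use in Cloud-init, call the three steps unconditionally instead.
if [ "${APPLY:-0}" = "1" ]; then
    install_at && write_helper && schedule_helper
fi
```

Because the helper runs under "at" rather than inside Cloud-init, the sleep loop does not hold up the boot sequence while it waits for Jetpack.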

WRAP UP

CycleCloud Cloud-init can be extended with the Linux "at" utility to accomplish tasks not possible with Cloud-init alone. It does not fully replace the CycleCloud-specific Cluster-init projects, but it does provide a quick and easy interface for small changes. And remember, the more changes you make using either Cloud-init or Cluster-init, the longer it will take to boot a node.

LEARN MORE

Interested in learning more about high performance computing?

- Read about Azure HPC, AI Infrastructure

- Visit our hub to find all Azure content for HPC
