This post has been republished via RSS; it originally appeared at: Microsoft Tech Community - Latest Blogs - .

Artificial Intelligence (AI) is revolutionizing how we analyze and interpret data, signaling a paradigm shift towards more accessible and user-friendly data analytics. Generative AI systems, like LangChain's Pandas DataFrame agent, are at the heart of this transformation. Using the power of Large Language Models (LLMs such as GPT-4, these agents make complex data sets understandable to the average person.

In this blog we will explore how LangChain and Azure OpenAI are revolutionizing data analytics. Discover the transformative potential of Generative AI and Large Language Models in making data analytics accessible to everyone, irrespective of their coding expertise or data science background. Dive into the behind-the-scenes magic of the LangChain agent and learn how it simplifies the user experience by dynamically generating Python code for data analysis

Generative AI: A Game Changer for Data Analytics: Generative AI transforms the field by enabling users to communicate with data in natural language. This pivotal development breaks down barriers, making data analytics accessible to everyone, not just those with coding expertise or data science backgrounds. The result? A more inclusive and expansive understanding of data.

The LangChain Pandas DataFrame Agent: LangChain's agent uses LLMs trained on vast text corpora to respond to data queries with human-like text. This agent intermediates complex data sources and the user, enabling a seamless and intuitive query process.

A Simplified User Experience: Imagine asking, 'What were our top-selling products last quarter?' and receiving an immediate, straightforward answer in natural language. That's the level of simplicity LangChain brings to the user experience. The agent handles all the heavy lifting, dynamically generating and executing the Python code needed for data analysis and delivering results within seconds, no user coding required.

The Behind-the-Scenes Magic: When users interact with the system, they see only the surface of a deep and complex process. The LLM crafts Python code from user queries, which the LangChain agent executes against the DataFrame. The agent is responsible for running the code, resolving errors, and refining the process to ensure the answers are accurate and easily understood.

Transformative Potential for Data Analysis: This technology empowers subject-matter experts with no programming skills to glean valuable insights from their data. Providing a natural language interface accelerates data-driven decision-making and streamlines exploratory data analysis.

By democratizing analytics, AI assistants are not just providing answers but enabling a future where data-driven knowledge and decisions are within everyone's reach.

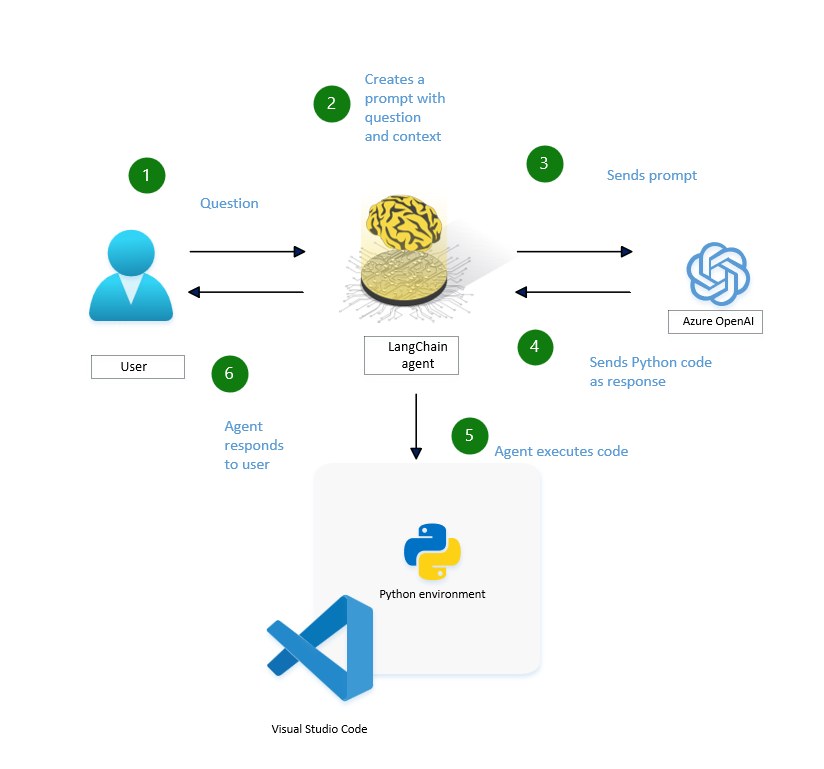

Behind the Scenes: How the LangChain Agent Works

Let's peel back the curtain to see how the LangChain agent operates when a user sends a query:

1. User Query: It all starts with the user's question.

2. Creating Contextual Prompts: The LangChain agent interprets the question and forms a contextual prompt, laying the groundwork for a relevant response.

3. Prompt Submission: This prompt is dispatched to Azure's OpenAI service.

4. Python Code Generation: If necessary, Azure OpenAI service crafts Python code in response to the prompt.

5. The Python code generated by the agent is executed in a Python environment, such as Microsoft Fabric, which is capable of processing and outputting the required information. This environment ensures that the Python code runs efficiently and securely, facilitating the seamless transformation of data into actionable insights.

6. User Response: Finally, the user receives a response based on the analysis carried out by the Python code, closing the loop on their initial query.

1. Azure Open AI Service

To expand on the AI-driven analytics journey, we must understand the mechanics of setting up the underlying AI services."

Creating an Azure OpenAI resource and deploying a model is a simple process through Azure's user-friendly interface. Here are the detailed steps complemented by visuals from various sources to guide you through the process:

Step 1: Sign in to Azure Portal

Visit the Azure Portal and sign in with your credentials.

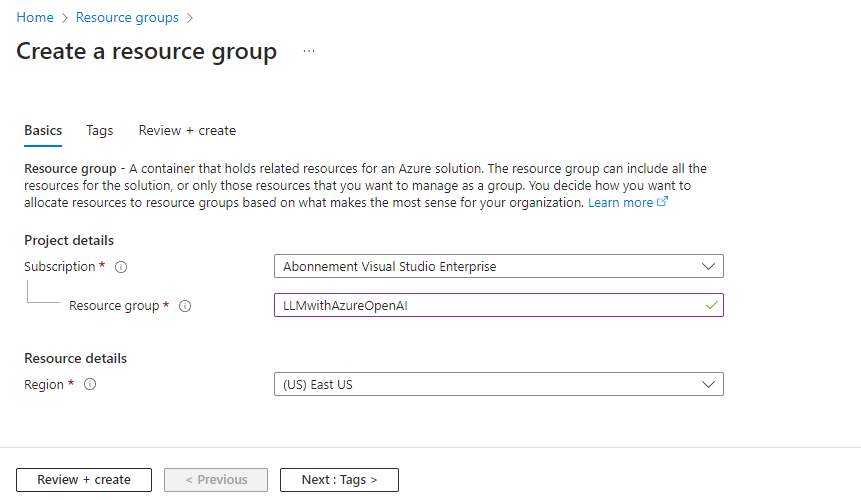

Step 2: Create a Resource Group

- Type 'resource group' in the search bar at the top of the portal page and select 'Resource groups' from the options that appear.

- Click 'Create' from the toolbar to create a new resource group.

- Fill in details such as Subscription, Resource group name, and Region.

- Click 'Review + Create' and then Create.

- Wait for the resource group to be created, then open it by selecting its name.

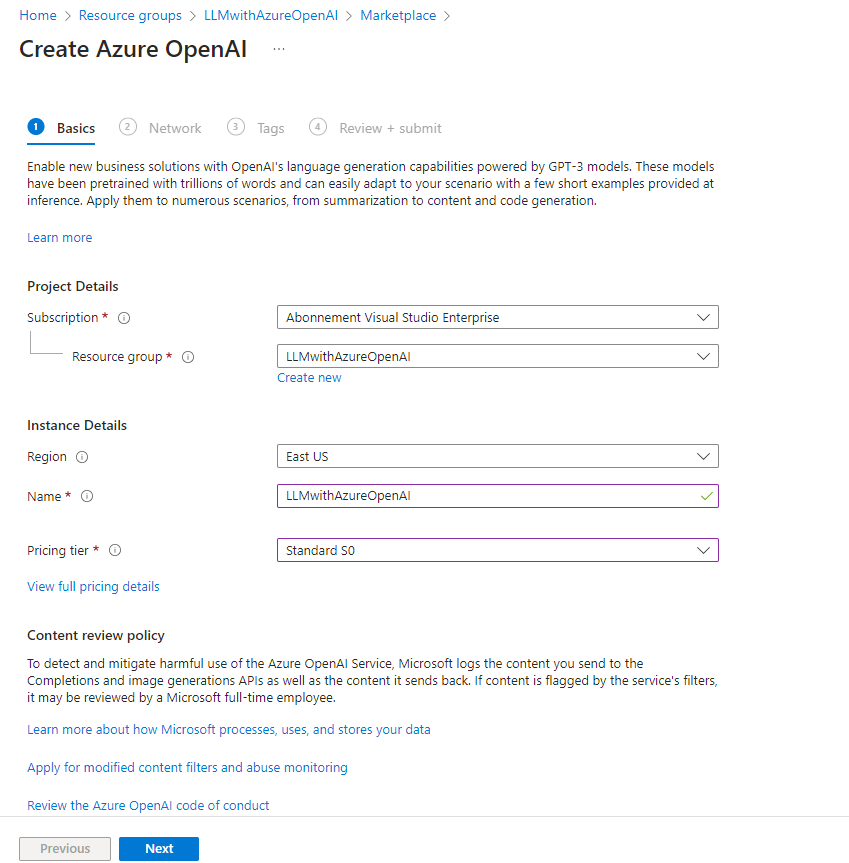

Step 3: Create an Azure OpenAI Resource - Inside your resource group, select Create Resources.

- Search for 'Azure OpenAI' in the Marketplace and select Create Azure OpenAI.

- Fill in the details such as Subscription, Resource Group, Region, and Service Name.

- Choose the Pricing tier (e.g., Standard S0).

- Click through 'Next' until you can click 'Create'.

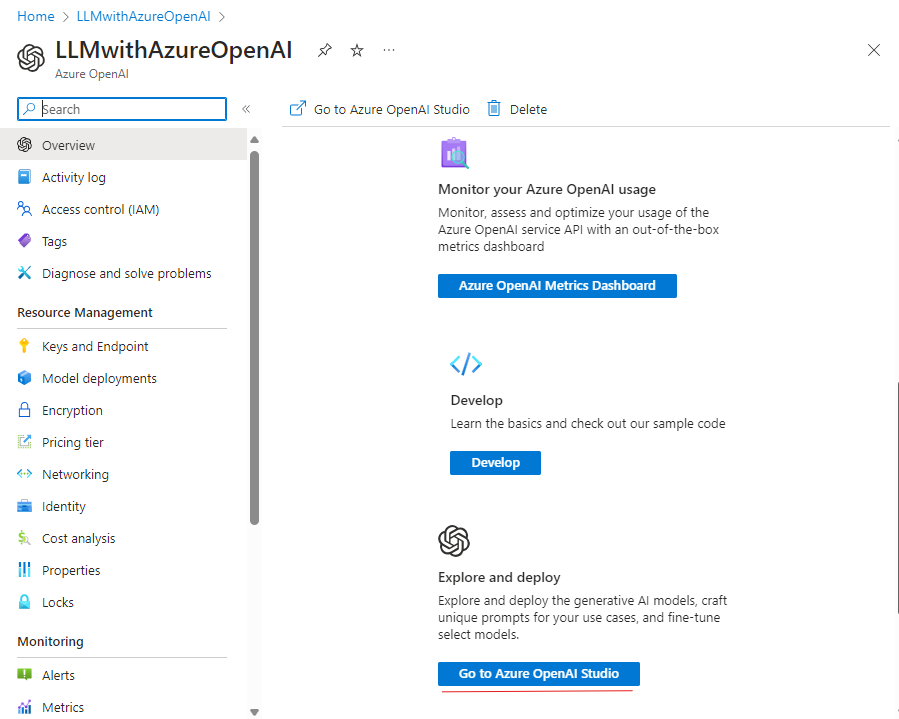

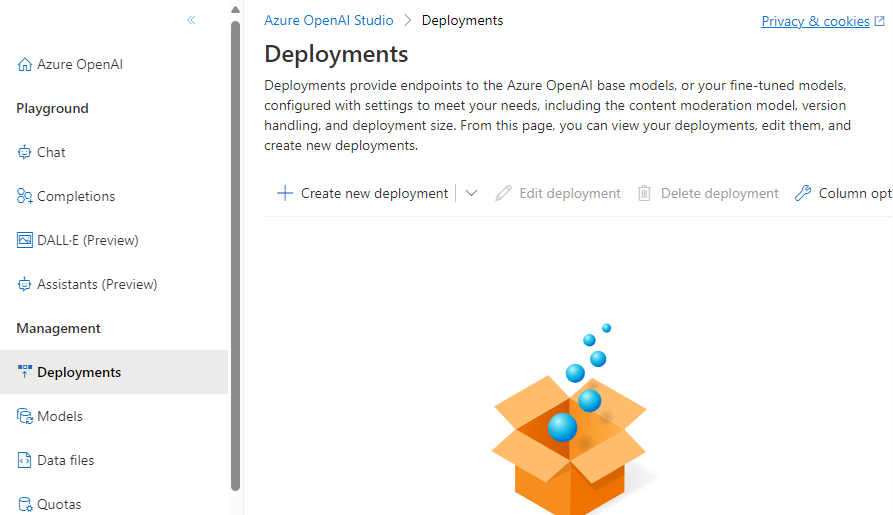

Step 4: Deploy a Model

- Once your Azure OpenAI resource is set up, go to Azure OpenAI Studio.

- Select it and go to Deployments.

- Click on 'Create new deployment.

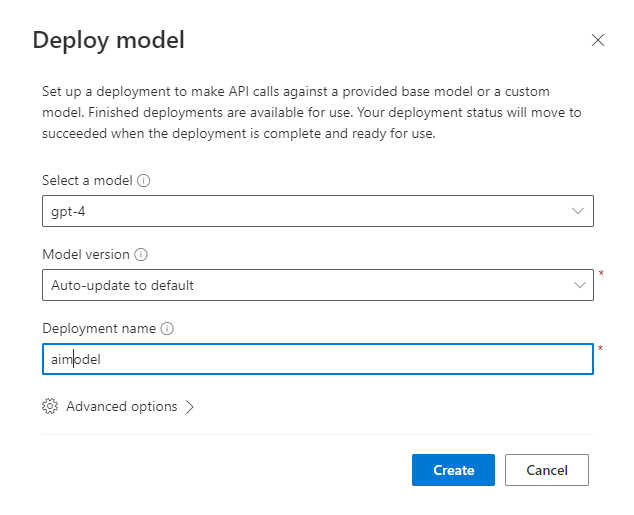

- Choose a model (like GPT-4), the model version, and enter a Deployment name.

- Click Create.

Step 5: Test Your Model

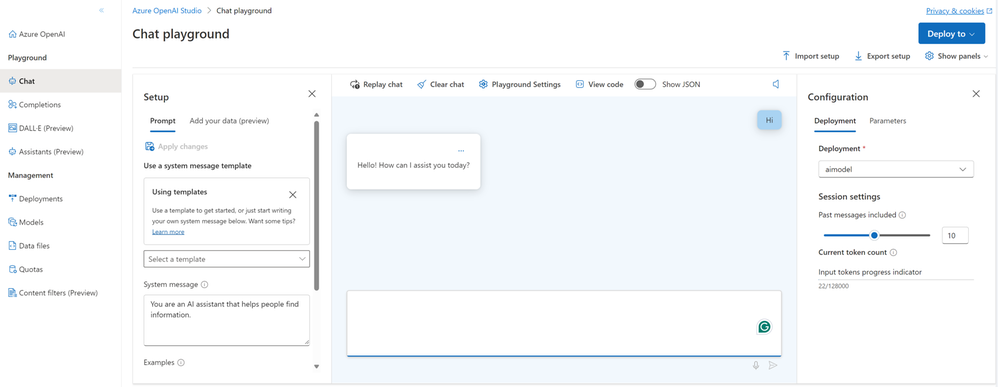

After deployment, you can test your model in Azure OpenAI Studio by accessing features like Chat and Completions playgrounds.

- Go to 'Chat' and enter some text in the box.

Step 6: Integrate with Applications

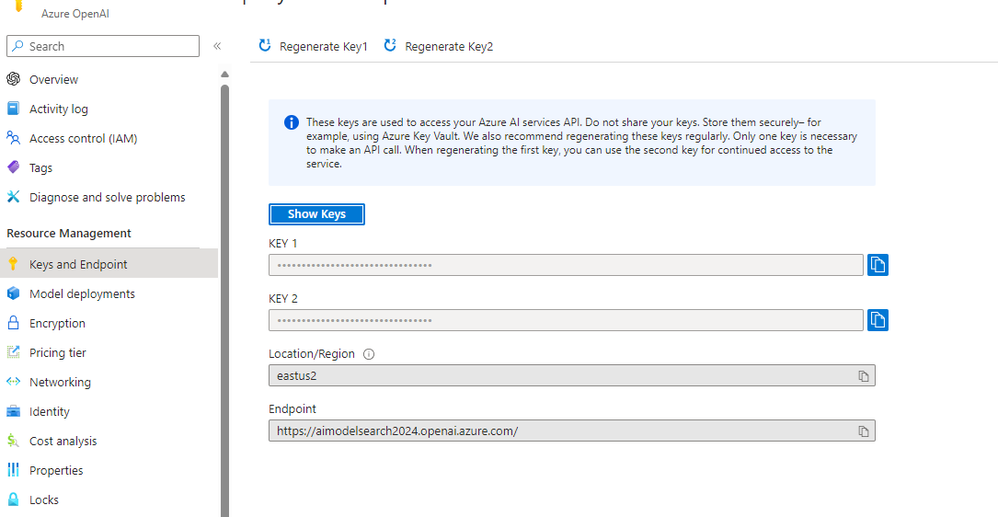

- Retrieve your endpoint URL and primary API key from your Resource management page's Keys and Endpoint section.

- Use these details to integrate Azure OpenAI capabilities into your applications.

2.Visual Studio Code

Harness the full potential of data analysis by integrating Jupyter with LangChain and a GPT-4 Language Model (LLM) in Visual Studio Code (VS Code). This comprehensive guide will walk you through using Anaconda as your Python kernel, from setup to executing insightful data analyses.

Step 1: Setting up your environment

To work with Python in Jupyter Notebooks, you must activate an Anaconda environment in VS Code, or another Python environment in which you've installed the Jupyter package. To select an environment, use Python: Select Interpreter command from the Command Palette (Ctrl+Shift+P).

Step 2: Install Visual Studio Code Extensions

- Ensure VS Code is equipped with the Python and Jupyter extensions:

- Open VS Code and navigate to the Extensions view (Ctrl+Shift+X).

- Search for and install the "Python" and "Jupyter" extensions by Microsoft.

Step 4: Launch Jupyter Notebook in VS Code

- Within your activated Anaconda environment, launch VS Code:

- Run code to open VS Code directly from the Anaconda Prompt or Terminal.

- Create a new Jupyter Notebook in VS Code: Open the Command Palette (Ctrl+Shift+P), type "Jupyter: Create New Blank Notebook", and select it.

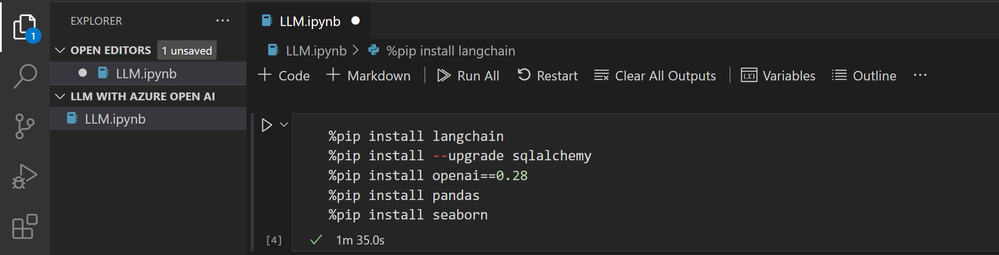

Step 5: Install Required Libraries

- In the first cell of your notebook, install the necessary libraries for your project:

- langchain: A custom package specific to this project.

- sqlalchemy: A SQL toolkit and ORM system for Python, upgraded to the latest version.

- openai==0.28: A package to interact with the OpenAI API, installed at a specific version (0.28).

- pandas: A data manipulation library in Python.

- seaborn: A Python data visualization library.

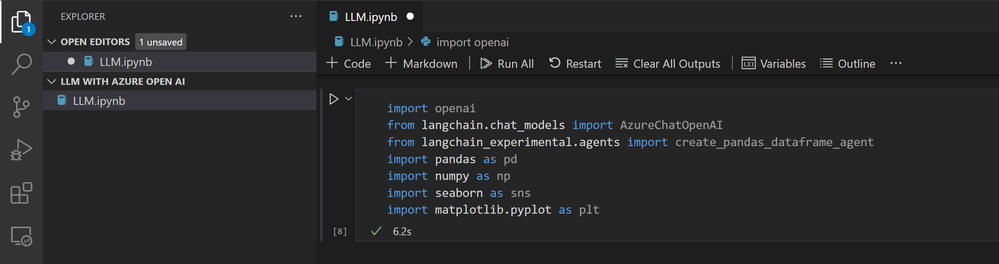

Step 6: Import Libraries and Configure OpenAI

- Configure the OpenAI SDK and import other necessary libraries in a new cell:

- openai: OpenAI's API client for interacting with their machine learning models.

- AzureChatOpenAI from langchain.chat_models: A custom module for integrating OpenAI with Azure's chat services.

- create_pandas_dataframe_agent from langchain_experimental.agents: A function to create an agent that interacts with pandas DataFrames.

- pandas as pd: A library for data manipulation.

- numpy as np: A library for numerical computations.

- seaborn as sns: A library for data visualization.

- matplotlib.pyplot as plt: A library for plotting.

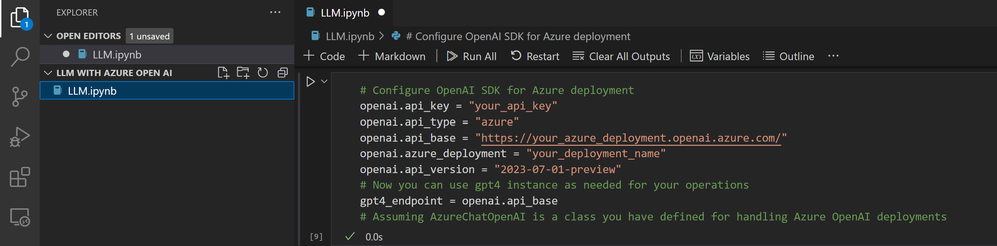

Step 7: Configure OpenAI SDK for Azure deployment

- Configure OpenAI SDK for Azure deployment in a new cell:

- openai.api_key = "xxxxxxxxxxxx": This sets the API key for authentication. This key should be kept secret.

- openai.api_type = "azure": This sets the type of API to Azure.

- openai.api_base = "https://xxxxxxxx.openai.azure.com/": This sets the base URL for the API.

- openai.azure_deployment = "xxxxxx": This sets the specific Azure deployment to interact with.

- openai.api_version = "2023-07-01-preview": This sets the API version to use.

- gpt4_endpoint = openai.api_base: This creates a variable gpt4_endpoint that stores the base URL of the API.

Step 8: Load Data, Instantiate the DataFrame Agent, and Analyze

Load your data into a DataFrame, instantiate the DataFrame agent, and begin your analysis with natural language queries:

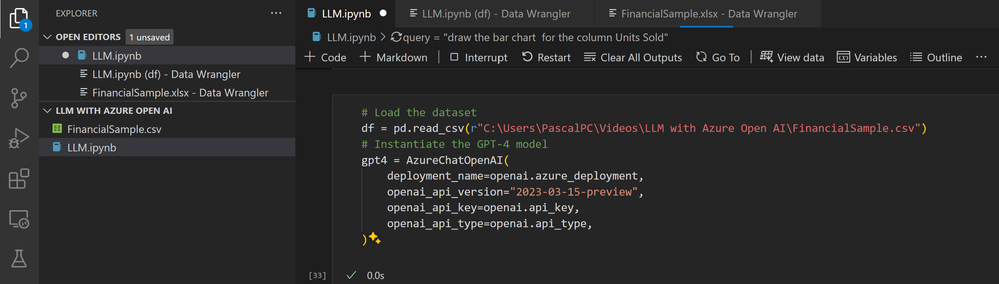

1. Load your data into a DataFrame and instantiate the DataFrame agent

Using the pandas library and GPT-4, this Python code shows a new way to analyze data by loading a dataset into a DataFrame and adding natural language processing features. By making an agent that merges the analytical skills of pandas with the contextual comprehension of GPT-4, users can ask their data questions in natural language, making complex data operations easier. This combination improves data availability and creates new possibilities for intuitive data discovery.

This Python code does two things:

- It employs the pandas library to read a dataset from a CSV file into a DataFrame, which is a two-dimensional data structure with labels. The data is kept in the variable df.

- It creates an instance of the GPT-4 model using the AzureChatOpenAI class, which is probably a wrapper around the Azure OpenAI API. The instance is created with several parameters, such as the deployment name, API version, API key, and API type. The created instance is kept in the variable gpt4.

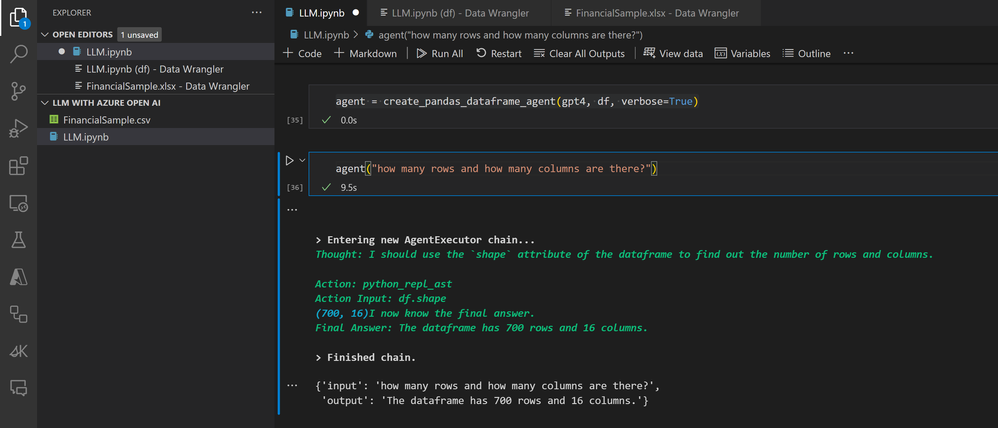

This line of Python code uses a function named create_pandas_dataframe_agent to create an agent. The function takes three arguments:

- gpt4: An instance of a GPT-4 model.

- df: A pandas DataFrame, which is a two-dimensional labeled data structure.

- verbose=True: A boolean that, when set to True, typically means the function will provide more detailed logs or messages.

The function is likely creating an agent that can process or interact with the data in the DataFrame using the GPT-4 model. The resulting agent is stored in the variable agent.

The following line, which is "How many rows and how many columns are there?" is a question that the agent function can recognize and answer. For instance, if `agent` is part of a program that works with databases or spreadsheets, this command might make it return the number of rows and columns in the current dataset.

2. Analysis with natural language queries

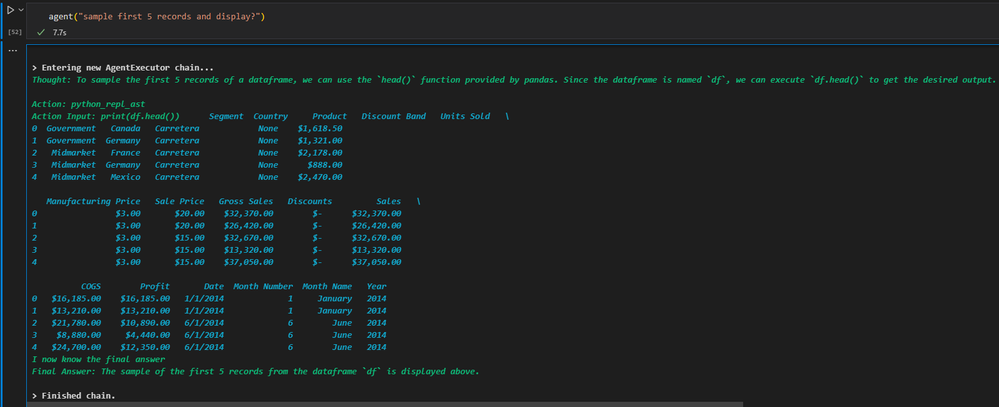

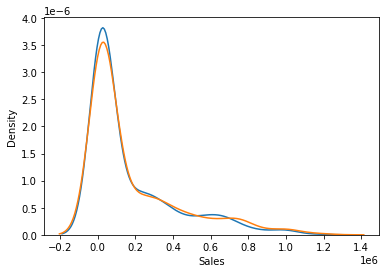

In this analysis, we delve into a dataset utilizing Python and pandas to reveal insights and patterns through natural language queries. Beginning with a preview of the dataset's structure and contents via the head() function, we set the stage for a deeper exploration into sales data across various dimensions. We keep on working on issues like finding outliers in important financial metrics—'Units Sold', 'Manufacturing Price', and 'Sale Price'—using both statistical and visual methods, even when the data format is not ideal. We finish our exploration by creating a Kernel Density Estimate (KDE plot to visually compare 'Sales' and 'Gross Sales', showing how seaborn can help us overcome the challenges of a text-based analysis environment and emphasizing the important steps in data cleaning, outlier detection, and distribution comparison.

The result shows the first 5 records of a dataframe called df, which is obtained using the head(function in pandas. This function, by default, gives the first 5 records of the dataframe, offering a glimpse of the data, with columns such as Segment, Country, Product, Discount Band, Units Sold, Manufacturing Price, Sale Price, Gross Sales, Discounts, Sales, COGS, Profit, Date, Month Number, Month Name, and Year. The records present sales information for the product "Carretera" in different segments and countries, describing aspects like sales price, gross sales, discounts, cost of goods sold (COGS, and profit, among others, for the beginning period of 2014.

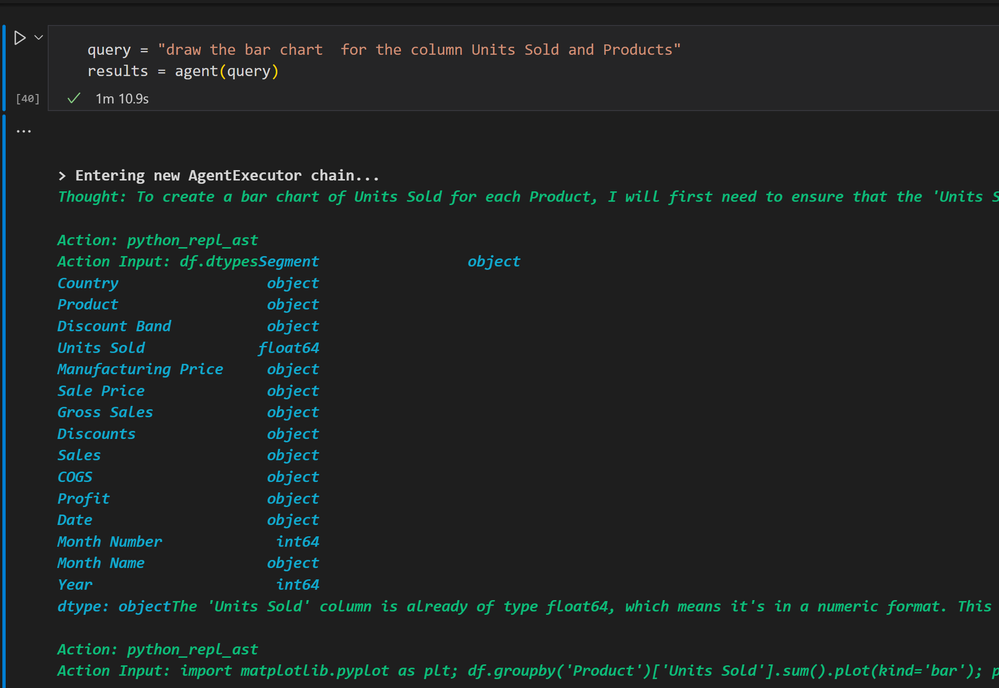

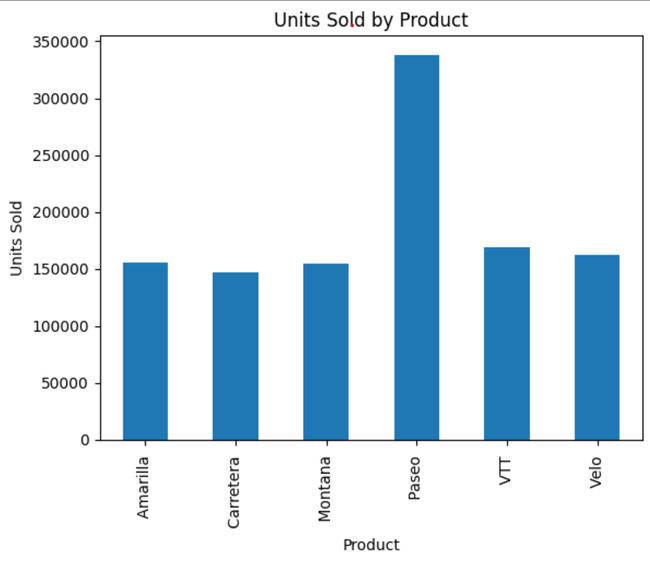

The sequence of Python explains how an Agent Executor chain makes a bar chart that shows how many units of each product were sold. Here is an overview:

- First Observation: The agent notices that the 'Units Sold' column seems to be in a string format because of the dollar signs and commas. The goal is to change this column to a numeric type so that a bar chart can be made. This chart will have the 'Product' column as the x-axis and the numeric 'Units Sold' column as the y-axis.

- First Action - Check Data Types: The agent runs a command (df.dtypes to check the data types of columns in the DataFrame. The output shows that the 'Units Sold' column is already a float64 type, meaning it's in numeric format.

- Understanding and Next Steps: The agent realizes that the dollar signs and commas seen in a previous display (not shown in the instructions are probably part of the display format and not the actual data. Since 'Units Sold' is already numeric, there's no need for data type conversion.

- Making the Bar Chart: The agent goes ahead to make the bar chart using matplotlib. It groups the data by 'Product', adds up the 'Units Sold' for each product, and plots this data as a bar chart. The chart is modified with labels for the x-axis ('Product', y-axis ('Units Sold', and a title ('Units Sold by Product')).

The instructions describe how the agent uses and tests data types and creates a visual representation of the sales data without needing to convert data types, since the 'Units Sold' column is already in the correct numeric format.

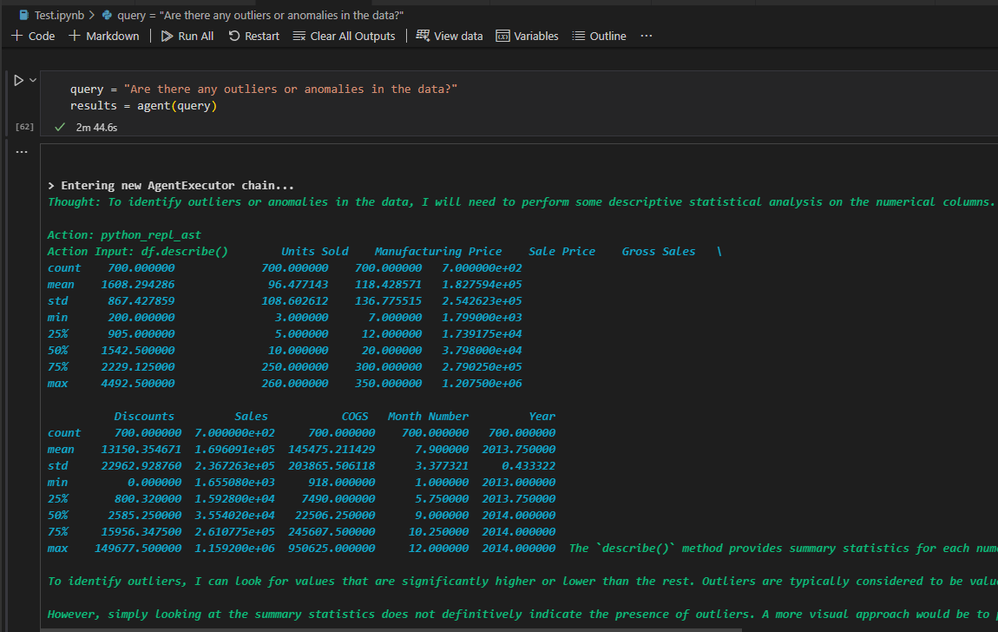

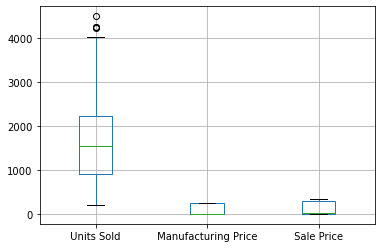

The sequence of Python code executions aimed at analyzing a dataset for outliers by:

- Summary Statistics with pandas: The initial step uses df.describe() to calculate summary statistics for the dataset's numerical columns. This function outputs essential metrics like the mean, standard deviation, quartiles, and max/min values, providing a statistical overview of the data.

- Identifying Outliers Conceptually: It mentions the concept of outliers being values significantly distant from the median, typically defined as those beyond 1.5 times the interquartile range (IQR) from the 75th or 25th percentile.

- Attempt at Visual Inspection via matplotlib: A code snippet aiming to plot boxplots for specific columns ('Units Sold', 'Manufacturing Price', and 'Sale Price') encounters a KeyError, suggesting a discrepancy between the specified and actual column names.

- Column Name Verification: To rectify the plotting issue, the code df.columns is executed to list all dataframe columns, revealing extra spaces in the column names, which explains the earlier KeyError.

Using the Lang chain analysis and Azure Open AI features, provide the steps that focus on solving a KeyError due to incorrectly formatted column names in a dataframe and finding possible outliers. Key steps include:

- Correcting Column Names: The KeyError was resolved by including leading and trailing spaces in the column names, allowing for successful reference in subsequent analysis.

- Outlier Identification: Due to visualization limitations, outliers were identified mathematically by calculating the Interquartile Range (IQR) for ' Units Sold ', ' Manufacturing Price ', and ' Sale Price '. Outlier thresholds were established as 1.5 times the IQR above the 75th percentile.

- Outlier Thresholds: Potential outliers were defined as values greater than 4215.3125 for ' Units Sold ', greater than 617.5 for ' Manufacturing Price ', and greater than 732.0 for ' Sale Price '.

- Conclusion: The process identified thresholds beyond which values in the specified columns could be considered outliers. Actual outlier identification would require applying these thresholds to the dataset.

This summary outlines the steps taken to correct data referencing issues and the method used to identify potential outliers in the dataset mathematically.

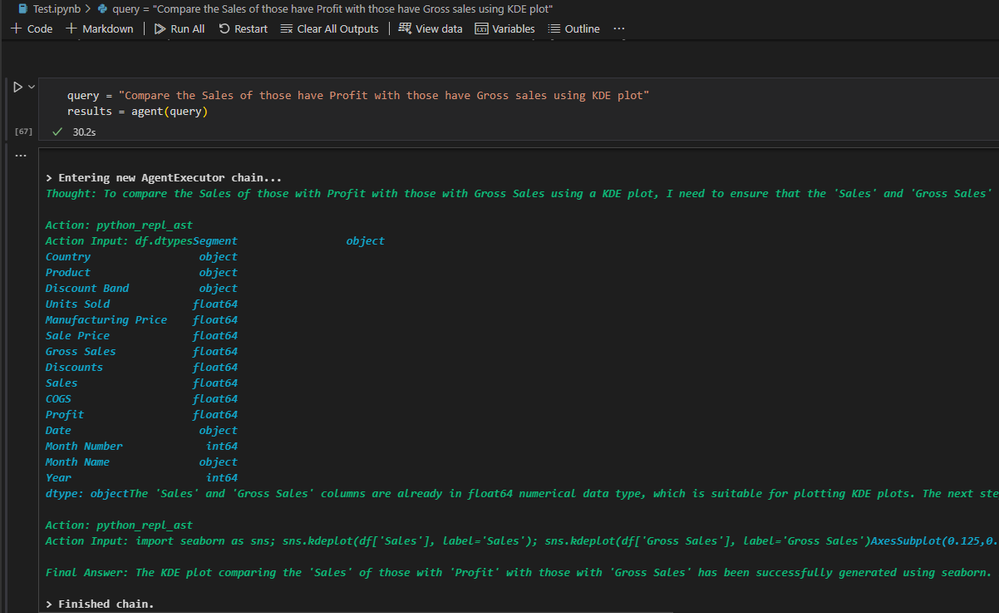

The process described involves preparing and visualizing data to compare the distribution of 'Sales' versus 'Gross Sales' using a Kernel Density Estimate (KDE) plot. Here are the key points summarized:

- Data Type Verification: Initially, it was confirmed that the 'Sales' and 'Gross Sales' columns were of float64 numerical data type, making them suitable for KDE plotting.

- KDE Plot Creation: A KDE plot for 'Sales' and 'Gross Sales' was generated using the seaborn library. This type of plot is helpful for visually comparing the distribution of two variables.

- Visualization Limitation: Due to the text-based nature of the interface, the KDE plot could not be inspected visually within this environment. However, the successful execution of the plotting code indicates that the KDE plot was created without errors.

- Conclusion: The summary concludes that the KDE plot comparing 'Sales' to 'Gross Sales' was successfully generated. To view the plot, the code should be run in a Python environment that supports graphical output, such as Jupyter Notebook or a Python script executed in an IDE with plotting capabilities.

3. Conclusion

Integrating Generative AI systems like LangChain's Pandas DataFrame agent is revolutionizing data analytics by simplifying user interactions with complex datasets. Powered by models such as GPT-4, these agents enable natural language queries, democratizing analytics and empowering users without coding skills to extract valuable insights.

The LangChain Pandas DataFrame agent acts as a user-friendly interface, bridging the gap between users and complex data sources. It interprets queries, generates Python code, executes analysis processes, and delivers understandable results seamlessly.

Setting up AI services, like Azure OpenAI, is made accessible through intuitive interfaces, facilitating resource creation, model deployment, and application integration. This transformative potential accelerates data-driven decision-making, streamlines analysis, and unlocks insights for users without coding or data science expertise. By democratizing analytics, AI assistants pave the way for a future where data-driven knowledge is accessible to everyone.

4. Resources

- Quickstart - Getting started with Azure OpenAI Assistants (Preview) - Azure OpenAI | Microsoft Learn

- Fundamentals of Azure OpenAI Service - Training | Microsoft Learn

- Python and Data Science Tutorial in Visual Studio Code

- Working with Jupyter Notebooks in Visual Studio Code

- Introduction to Azure AI Studio (preview)

- Get started with prompt flow to develop language model apps in the Azure AI Studio (preview)