This post has been republished via RSS; it originally appeared at: Microsoft Tech Community - Latest Blogs.

Load testing is an essential part of ensuring that your application can handle the expected user traffic and perform well under its designed load. JMeter is a popular open-source tool for load testing. In this blog, let us explore how JMeter can be used to load test Azure OpenAI based applications.

JMeter setup:

Follow the steps below to set up JMeter.

- Download the latest version of JMeter from the Apache JMeter website (Apache JMeter - Download Apache JMeter).

- Select the version to install, and install the Java version that it requires.

- Unzip the downloaded file to a local directory.

- Run jmeter.bat from a terminal in the bin folder.

Steps for setting up and Running a Load Test with Azure OpenAI endpoints:

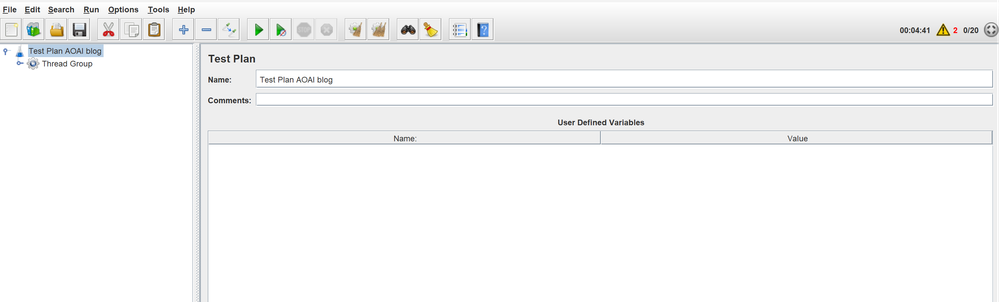

1. Open JMeter and create a new test plan by selecting 'Test Plan' from the 'File' menu.

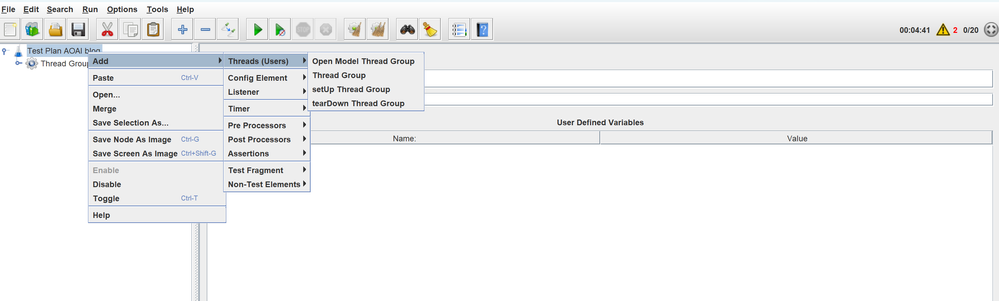

2. Right-click on the test plan and add a thread group by selecting 'Add' > 'Threads (Users)' > 'Thread Group'.

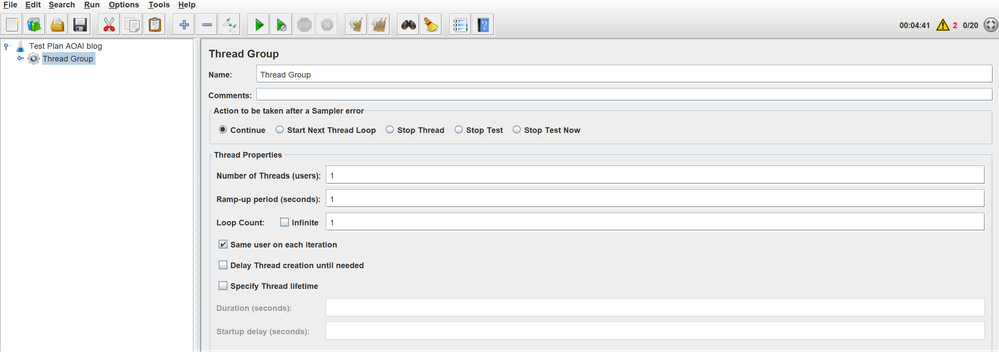

3. Configure the thread group by setting the number of threads, ramp-up period, and loop count. Each thread executes the test plan in its entirety and completely independently of other test threads. Multiple threads are used to simulate concurrent connections. The ramp-up period is used to apply the load gradually. Start with a ramp-up value (in seconds) equal to the number of threads and adjust up or down as needed.

- The number of threads represents the number of users that will be simulated.

- The ramp-up period is the time taken for all the threads to start.

- The loop count is the number of times each thread will execute the test. You can configure these settings by selecting the thread group and setting the necessary parameters in the right-hand panel.

A combination of the above parameters, along with the controllers and timers shown below, can be used to set up and simulate the testing conditions. Typically, we use a timer such as the Constant Throughput Timer (shown in section 4.4 below) to simulate the testing conditions. For example, a test case might involve 25 concurrent users, each sending 6 requests per minute, over a 3-minute duration.
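The arithmetic behind that example can be sketched in a few lines of Python. This is only an illustration: the `LoadProfile` class and its field names are hypothetical helpers, not part of JMeter, and the 25-second ramp-up is an assumption that follows the "ramp-up equal to number of threads" starting point from step 3.

```python
from dataclasses import dataclass


@dataclass
class LoadProfile:
    """Hypothetical helper that mirrors the Thread Group settings."""
    users: int                      # number of threads
    requests_per_user_per_min: int  # target rate for each simulated user
    duration_min: int               # how long the test runs, in minutes
    ramp_up_s: int                  # ramp-up period, in seconds

    def total_throughput_per_min(self) -> int:
        # Combined request rate across all threads.
        return self.users * self.requests_per_user_per_min

    def total_requests(self) -> int:
        # Rough upper bound; it ignores the slower start during ramp-up.
        return self.total_throughput_per_min() * self.duration_min

    def thread_start_interval_s(self) -> float:
        # JMeter spreads thread starts evenly across the ramp-up period.
        return self.ramp_up_s / self.users


# The example from the text: 25 users, 6 requests/minute each, 3 minutes.
profile = LoadProfile(users=25, requests_per_user_per_min=6,
                      duration_min=3, ramp_up_s=25)

print("requests per minute (all users):", profile.total_throughput_per_min())
print("approximate total requests:", profile.total_requests())
print("seconds between thread starts:", profile.thread_start_interval_s())
```

For this profile the combined rate is 150 requests per minute and roughly 450 requests in total. Whether the timer's target should be the per-user value (6) or the combined value (150) depends on the "Calculate Throughput based on" mode selected in the Constant Throughput Timer.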

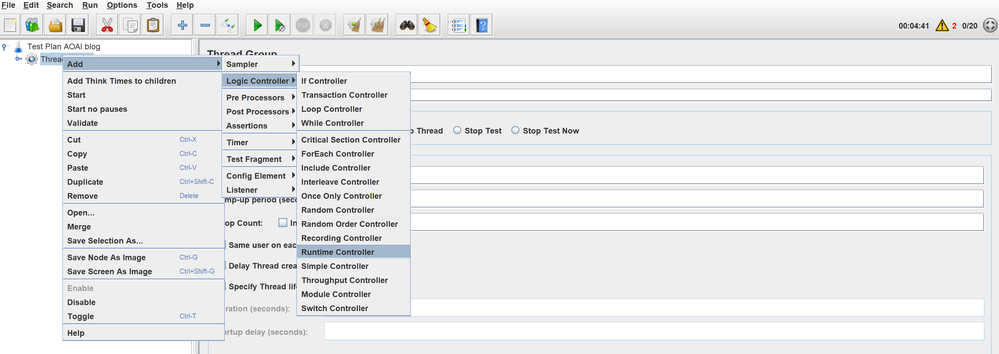

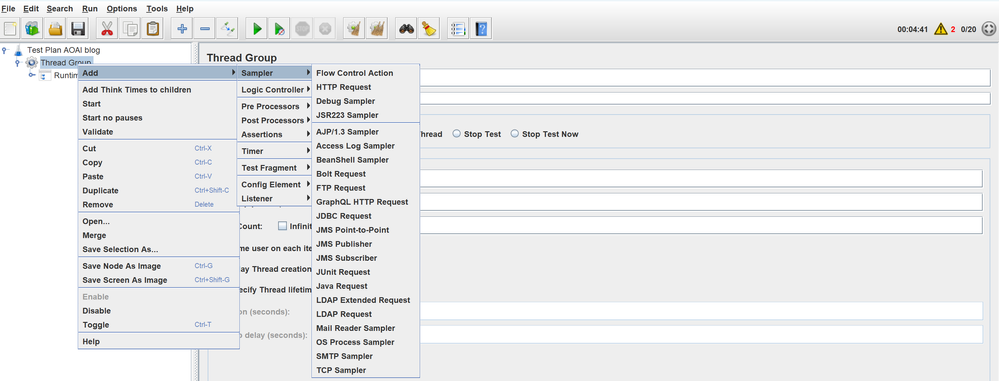

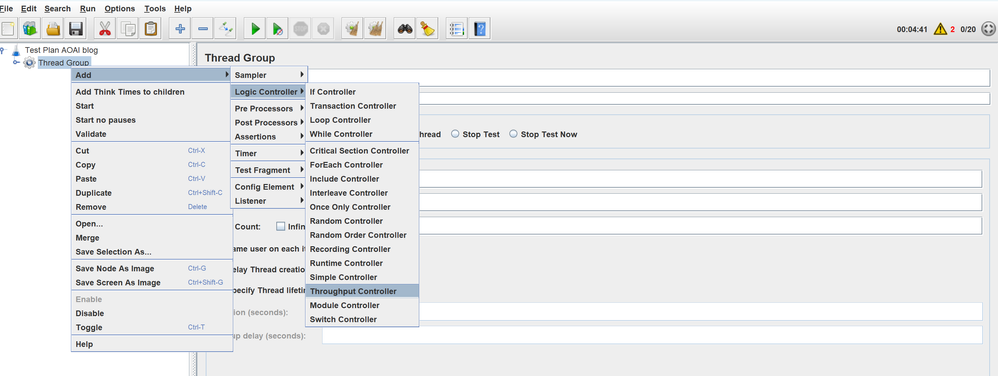

4. JMeter has two types of Controllers: Samplers and Logical Controllers. These drive the processing of a test.

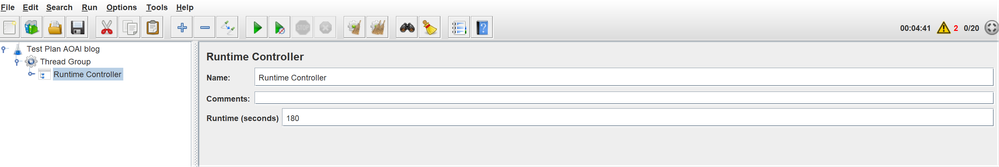

4.1 A logic controller is used to establish test throughput or run time, depending on test requirements. The Runtime Controller shown below can be configured to run the load test for a specific duration.

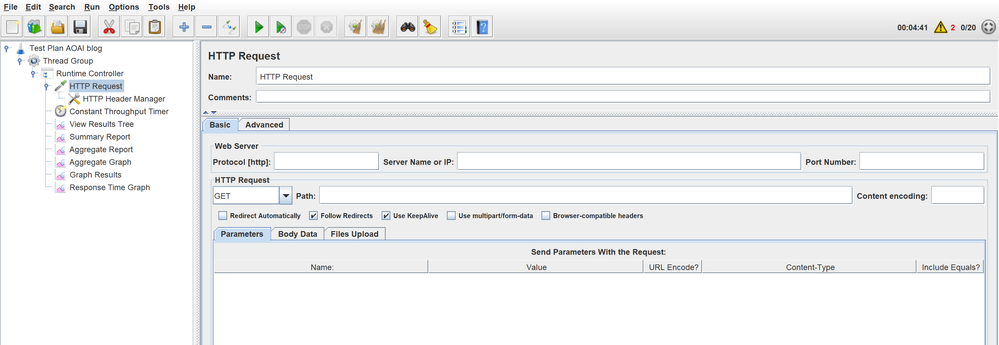

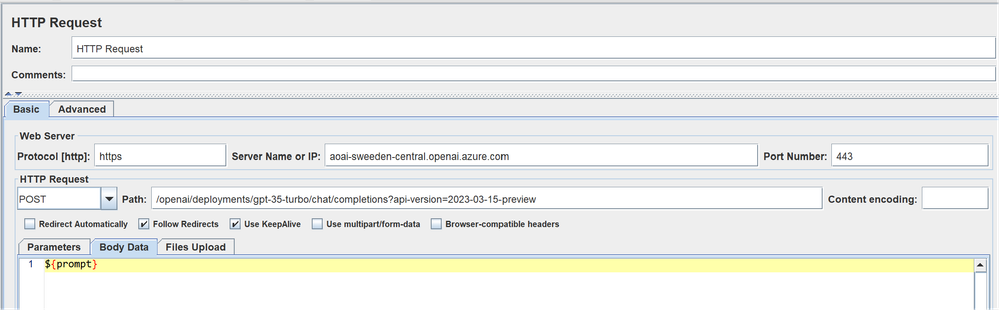

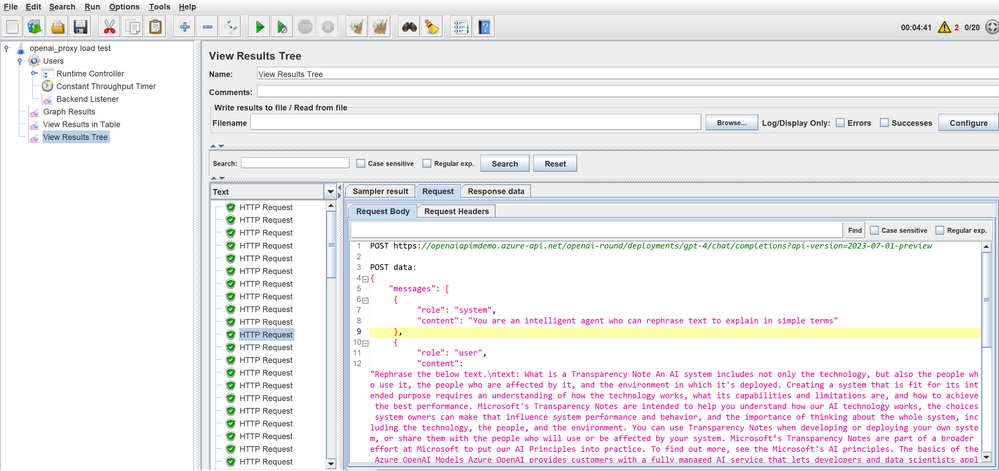

4.2 An HTTP Request sampler is added to the test plan to define the Azure OpenAI (AOAI) endpoint or the Azure API Management (APIM) endpoint.

HTTP endpoints can be configured in the screen below.

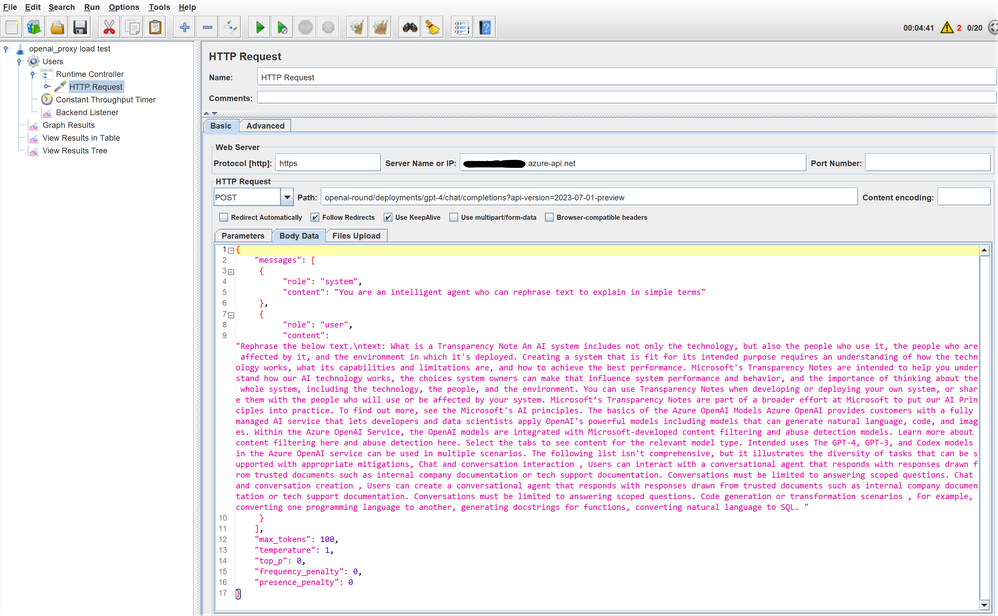

The screen below shows a sample prompt.

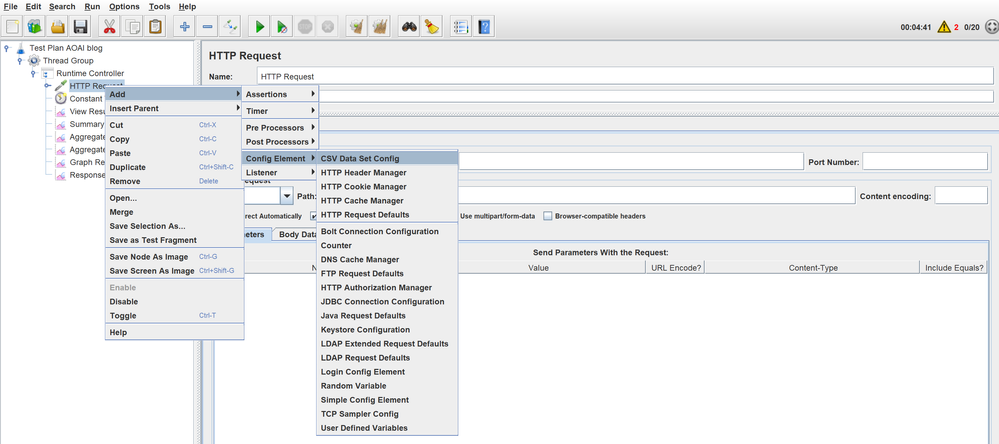

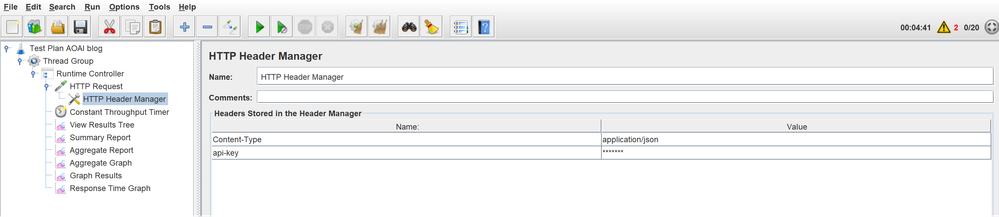

We can add an HTTP Header Manager config element to supply the request headers for the AOAI (or APIM) endpoint.

In our case, we add the api-key header for the AOAI or APIM endpoint, depending on the test requirement.

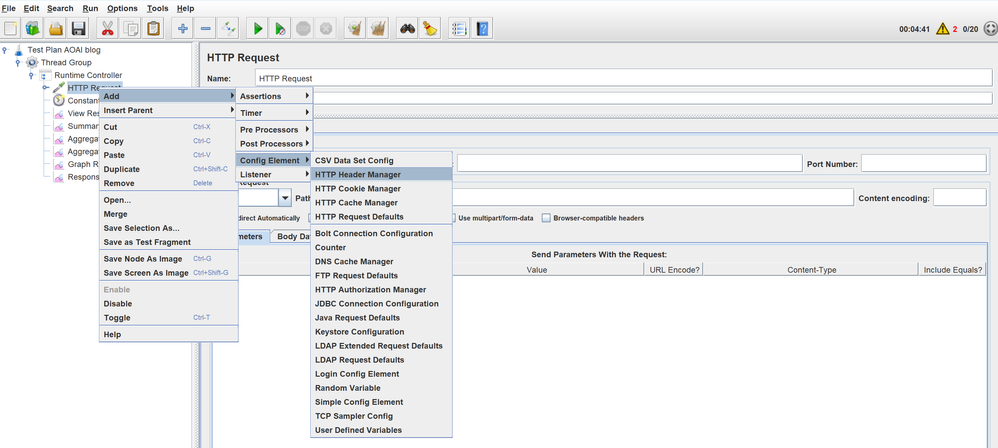

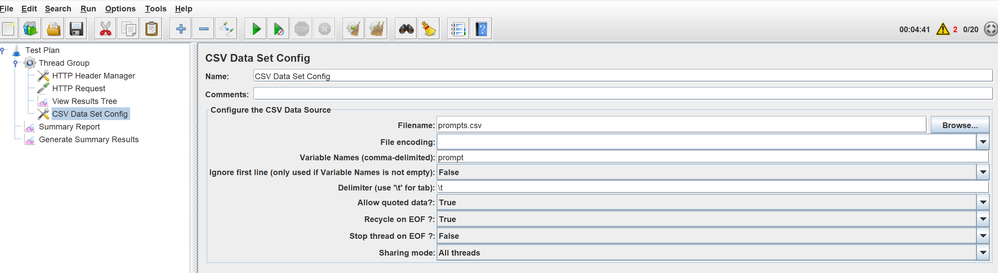
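To make the pieces entered in the HTTP Request sampler and HTTP Header Manager concrete, here is a minimal Python sketch that assembles the same three parts: URL, headers, and JSON body. It does not send anything. The function name `build_chat_request`, the resource and deployment names, the `api-version` value, and the `max_tokens` setting are all assumptions for illustration; use the values from your own deployment, and note that an APIM endpoint will have a different host and path.

```python
import json


def build_chat_request(resource: str, deployment: str, api_version: str,
                       api_key: str, prompt: str) -> dict:
    """Assemble (but do not send) a chat completions request.

    Each part maps to a field in the JMeter UI:
      url     -> protocol, server name, and path of the HTTP Request sampler
      headers -> entries in the HTTP Header Manager
      body    -> the "Body Data" tab of the HTTP Request sampler
    """
    url = (
        f"https://{resource}.openai.azure.com/openai/deployments/"
        f"{deployment}/chat/completions?api-version={api_version}"
    )
    headers = {
        "Content-Type": "application/json",
        "api-key": api_key,  # the key entered in the HTTP Header Manager
    }
    body = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 100,  # assumed value; tune for your test case
    }
    return {"method": "POST", "url": url, "headers": headers,
            "body": json.dumps(body)}


# Placeholder values only; none of these come from the original post.
request = build_chat_request(
    resource="my-aoai-resource",
    deployment="my-chat-deployment",
    api_version="2024-02-01",
    api_key="<your-api-key>",
    prompt="Summarize what load testing is in one sentence.",
)
print(request["method"], request["url"])
print(request["headers"])
print(request["body"])
```

Printing the body first and pasting it into the sampler is a quick way to confirm that the JSON is valid before starting a run.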

4.3 We can also run the load test with a CSV file containing the prompts in a specified format by adding a "CSV Data Set Config" element.

The filename and variable should be configured as shown below.

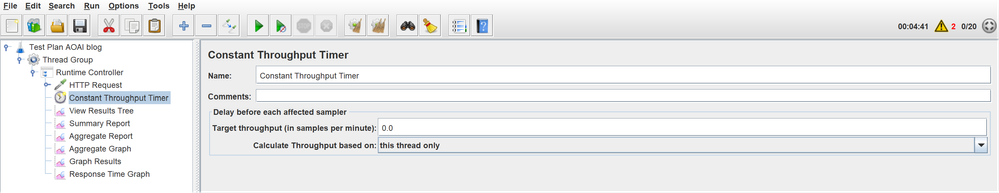
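The post does not spell out the CSV format, so the following is only a sketch under an assumed layout: one prompt per line, no header row, read into a single JMeter variable. The helper name `write_prompts_csv` and the file name `prompts.csv` are hypothetical. Because the prompt is typically substituted into a JSON body, the sketch rejects characters that would need CSV quoting or JSON escaping rather than trying to escape them.

```python
# Characters that would break a naive one-column CSV or a JSON string
# when the prompt is substituted into the request body unescaped.
FORBIDDEN = set(',"\\\n\r\t')


def write_prompts_csv(path: str, prompts: list) -> int:
    """Write one prompt per line and return how many were written."""
    lines = []
    for prompt in prompts:
        prompt = prompt.strip()
        if not prompt:
            continue  # skip empty entries
        bad = FORBIDDEN.intersection(prompt)
        if bad:
            raise ValueError(
                f"prompt contains unsupported characters {sorted(bad)}: {prompt!r}"
            )
        lines.append(prompt)
    with open(path, "w", encoding="utf-8", newline="\n") as handle:
        handle.write("\n".join(lines) + "\n")
    return len(lines)


if __name__ == "__main__":
    count = write_prompts_csv("prompts.csv", [
        "What is Azure OpenAI?",
        "Explain load testing in two sentences.",
        "List three uses of JMeter.",
    ])
    print(f"wrote {count} prompts")
```

With a file like this, the variable name set in the CSV Data Set Config can be referenced in the request body using JMeter's `${variableName}` syntax.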

4.4 The Constant Throughput Timer can be used to target a specific number of requests per minute.

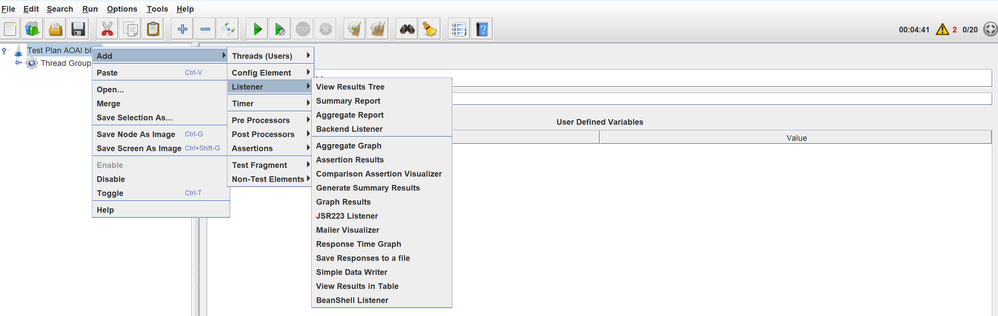

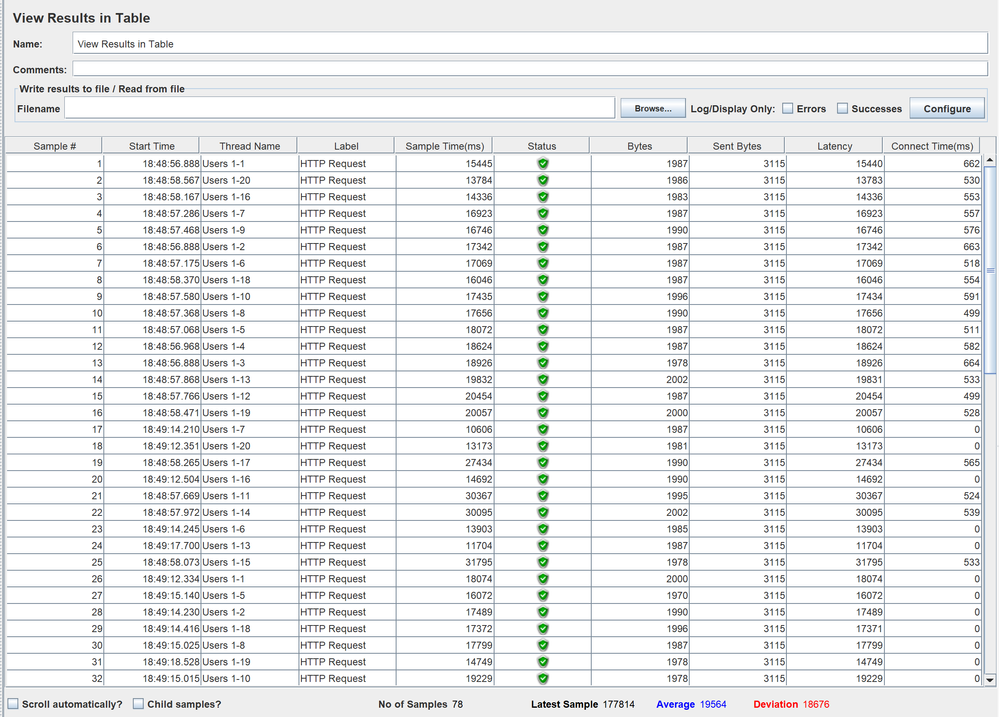

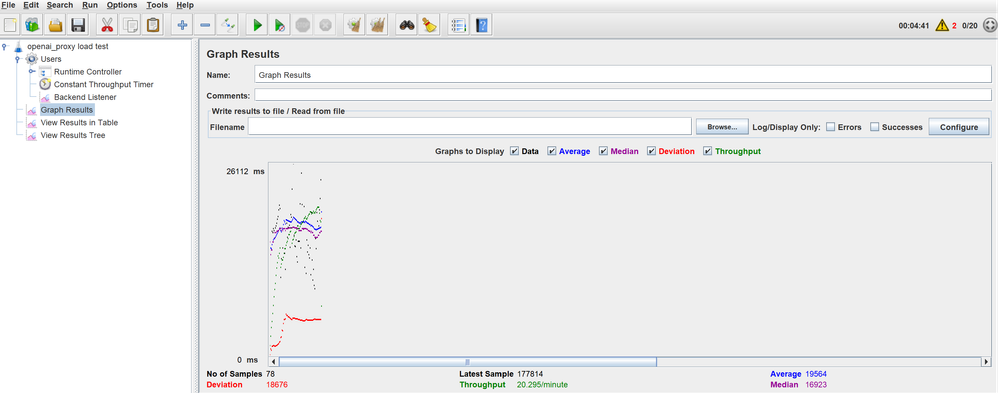

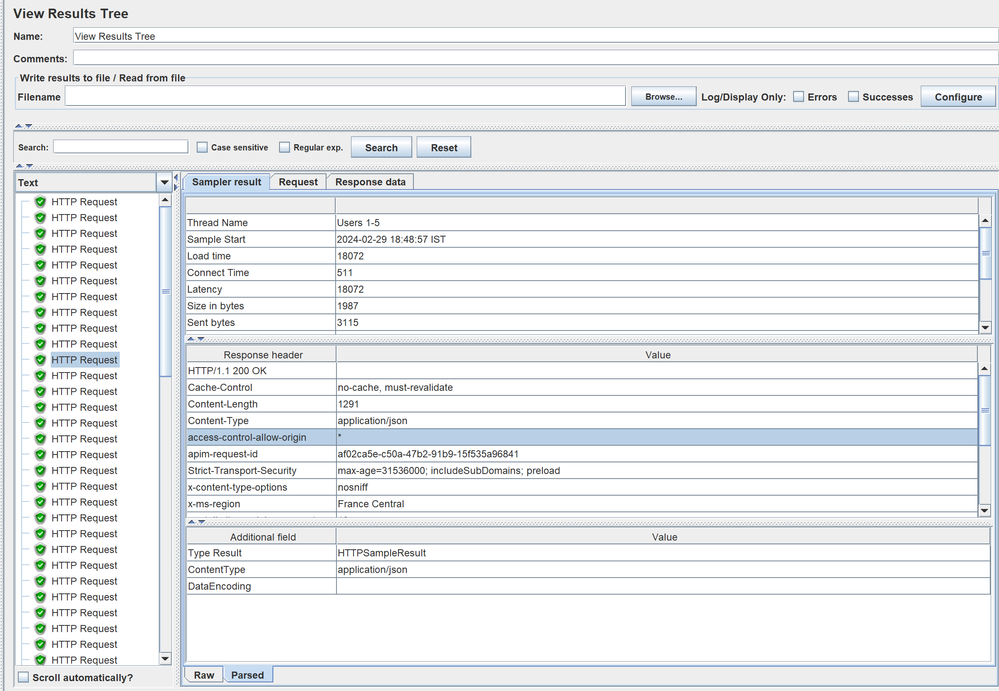

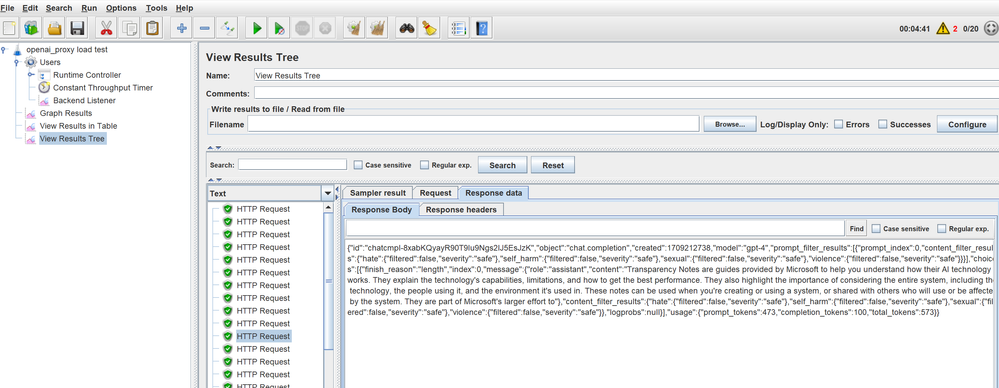

Listeners provide access to the information JMeter gathers about the test cases while JMeter runs. The Graph Results listener plots the response times on a graph. The "View Results Tree" Listener shows details of sampler requests and responses and can display basic HTML and XML representations of the response. Other listeners provide summary or aggregation information.

Additionally, listeners can direct the data to a file for later use. Every listener in JMeter provides a field to indicate the file to store data to. There is also a Configuration button which can be used to choose which fields to save, and whether to use CSV or XML format.
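Once a listener has written results to a CSV file, the file can be summarized outside JMeter. The sketch below assumes the file has a header row with at least the `elapsed` (milliseconds) and `success` columns, which is what JMeter writes when field names are saved. The function name `summarize_jtl` is hypothetical, and the sample rows are made-up values for illustration, not measurements from a real run.

```python
import csv
import io
import math


def summarize_jtl(csv_text: str) -> dict:
    """Compute basic statistics from JMeter CSV results."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    if not rows:
        raise ValueError("no samples found")

    elapsed = sorted(int(row["elapsed"]) for row in rows)
    failures = sum(1 for row in rows
                   if row["success"].strip().lower() != "true")

    # Nearest-rank 95th percentile: the smallest value such that at
    # least 95% of samples are less than or equal to it.
    rank = math.ceil(0.95 * len(elapsed))

    return {
        "samples": len(rows),
        "avg_ms": sum(elapsed) / len(elapsed),
        "p95_ms": elapsed[rank - 1],
        "error_rate": failures / len(rows),
    }


# Made-up sample rows, trimmed to a few columns for readability.
SAMPLE = """timeStamp,elapsed,label,responseCode,success
1700000000000,820,HTTP Request,200,true
1700000010000,1140,HTTP Request,200,true
1700000020000,960,HTTP Request,429,false
1700000030000,1500,HTTP Request,200,true
"""

summary = summarize_jtl(SAMPLE)
for name, value in summary.items():
    print(f"{name}: {value}")
```

For a real run, read the saved results file and pass its contents to the function. Counting non-200 responses such as 429 separately can help tell throttling apart from other failures.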

Adding Listeners for result analysis.

5. Configure listeners by adding reports for “View Results Tree”, “Summary Report”, “Backend Listener”, “Graph Results”, etc., as required.

6. Save the test plan by selecting 'Save Test Plan As' from the 'File' menu.

7. Run the test

8. View Results

View results in table

View results in graph:

View Results in Tree: This provides a detailed view where each request can be drilled down to view the request and response parameters.

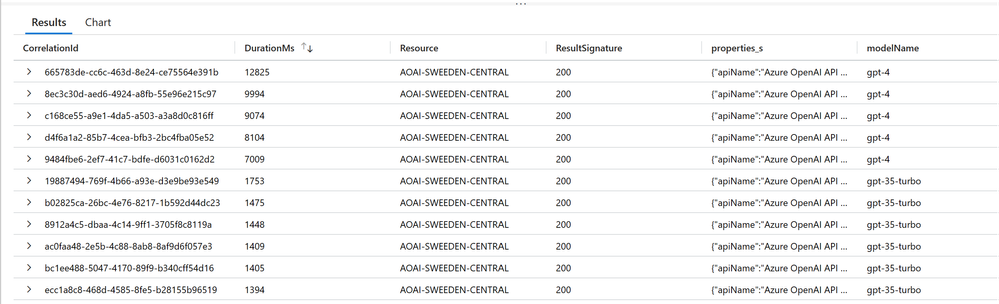

We can also check other metrics, such as token counts, if logging is enabled on AOAI / APIM, and correlate them with the JMeter results.

AzureDiagnostics

| where Category == "RequestResponse" and OperationName == "ChatCompletions_Create"

| where ResultSignature == 200

| extend properties_s = parse_json(properties_s)

| project CorrelationId, DurationMs, Resource, ResultSignature, properties_s, modelName = properties_s.modelName

| take 100

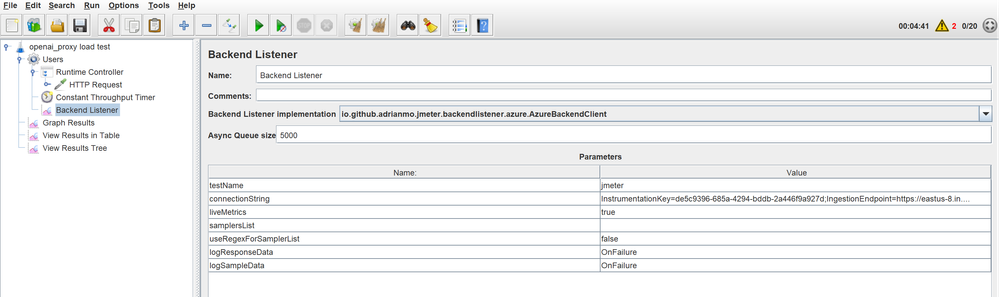

We can also use plugins such as adrianmo/jmeter-backend-azure (github.com), a JMeter plug-in that sends test results to Azure Application Insights. This requires saving the plug-in's .jar file in the lib/ext folder of the JMeter home directory and adding a "Backend Listener" at the thread group level, as shown below.

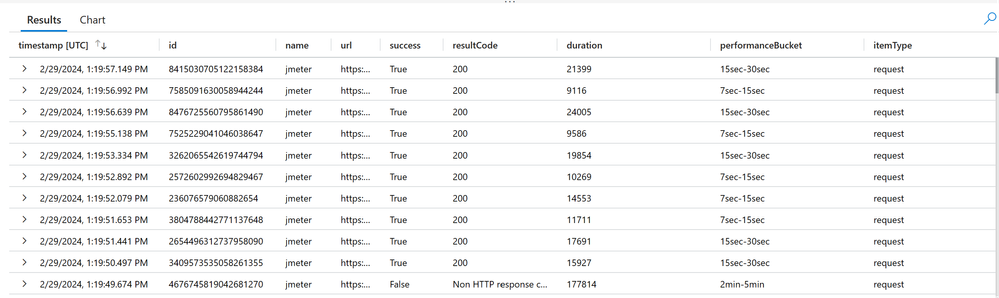

Running the query below shows the results in the Application Insights logs.

requests

| where name == "jmeter"

References:

- Apache JMeter - Apache JMeter™

- ignaciofls/LoadTest-AOAI: Load test repo to benchmark performance and identify bottlenecks of E2E Azure OpenAI projects (github.com)

- Monitoring Azure OpenAI Service - Azure AI services | Microsoft Learn

- azure-openai-service-proxy/loadtest at main · microsoft/azure-openai-service-proxy (github.com)

- adrianmo/jmeter-backend-azure: A JMeter plug-in that enables you to send test results to Azure Monitor (github.com)