This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

Microsoft Copilot for Security and NIST 800-171: Access Control

Microsoft Copilot for Security in Microsoft’s US Gov cloud offerings (Microsoft 365 GCC/GCC High and Azure Government) is currently unavailable and does not have an ETA for availability. Future updates will be published to the public roadmap here.

As of this writing we’ve received the Proposed Rule of the Cybersecurity Maturity Model Certification (CMMC) 2.0, and the public comment period ended on February 26. The National Institute of Standards and Technology (NIST) just released their analysis of public comments on the final draft of NIST Special Publication 800-171 Revision 3 (NIST 800-171r3) and initial draft of NIST 800-171Ar3. NIST plans to publish final versions sometime in Spring 2024. These publications are important because one of the primary requirements for CMMC is that organizations will need to implement most, if not all, of NIST 800-171r3’s controls for Level 2 certification.

In the first blog of this series, we looked at the System and Information Integrity family of requirements (3.14) in the draft of NIST 800-171r3, which covers flaw remediation, malicious code protection, security alerts via advisories and directives, and system monitoring. That blog also discussed how Microsoft Copilot for Security (Security Copilot) can help DIB organizations meet these requirements by identifying, reporting, and correcting system flaws more efficiently and effectively. This second blog in the series dives into the very first requirement family: Access Control (3.1).

Early reports indicate organizations are reducing time and resource constraints by deploying Security Copilot in private preview and the early access program. Despite no public timeline on the availability of Security Copilot in Microsoft’s US-sovereign cloud offerings (Microsoft 365 GCC/GCC High and Azure Government), it’s worthwhile exploring how companies in the Defense Industrial Base (DIB) may use these AI-powered capabilities to meet NIST 800-171r3 security requirements, and ultimately defend against identity threats with finite or limited resources.

NOTE: Some requirements, such as 3.1.1, contain seven bullets (a-g) or more, and an entire blog could be written on that one requirement alone. No section here is exhaustive of the requirement or of the applications of certain technologies. The suggested applications of Microsoft solutions do not guarantee compliance with any regulation or the prevention of an attack or compromise. All images and references are based upon preview experiences and do not guarantee identical experiences in general availability or within the U.S. Sovereign Cloud offerings.

Access Control (3.1.)

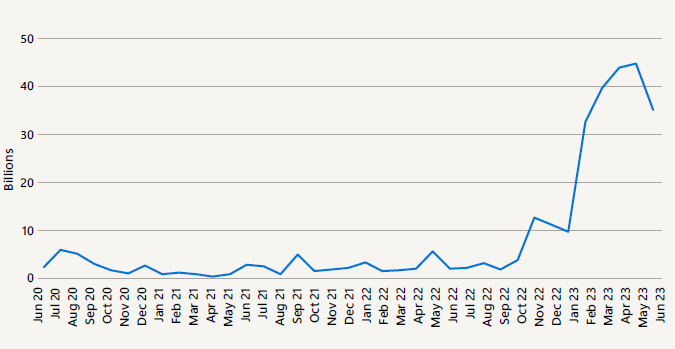

One might ask why Access Control holds the prominent first spot in the NIST 800-171 publication. The answer is relatively simple: Access Control is alphabetically first. However, this requirement family is arguably one of the most important because of the remarkable growth in identity-based attacks and the need for identity architects or teams to work more closely with the Security Operations Center (SOC). Microsoft Entra data noted in the Microsoft Digital Defense Report shows the number of “attempted attacks increased more than tenfold compared to the same period in 2022, from around 3 billion per month to over 30 billion. This translates to an average of 4,000 password attacks per second targeting Microsoft cloud identities [2023]”.

3.1.1. Account Management

A natural starting point is to “a. Define the types of system accounts allowed and prohibited” for systems that hold Controlled Unclassified Information (CUI) or other sensitive information. Many organizations or their Managed Security Service Provider (MSSP) develop a mapping of privileged accounts and non-privileged accounts within their environment and develop policy based on the principle of Least Privilege, a requirement discussed later in this blog. Yet, the power of Microsoft Entra ID and Security Copilot shines most brightly after the security team “define(s)” or “c. Specify(ies) authorized users of the system(s), group(s) and role membership(s), and access authorization(s).”

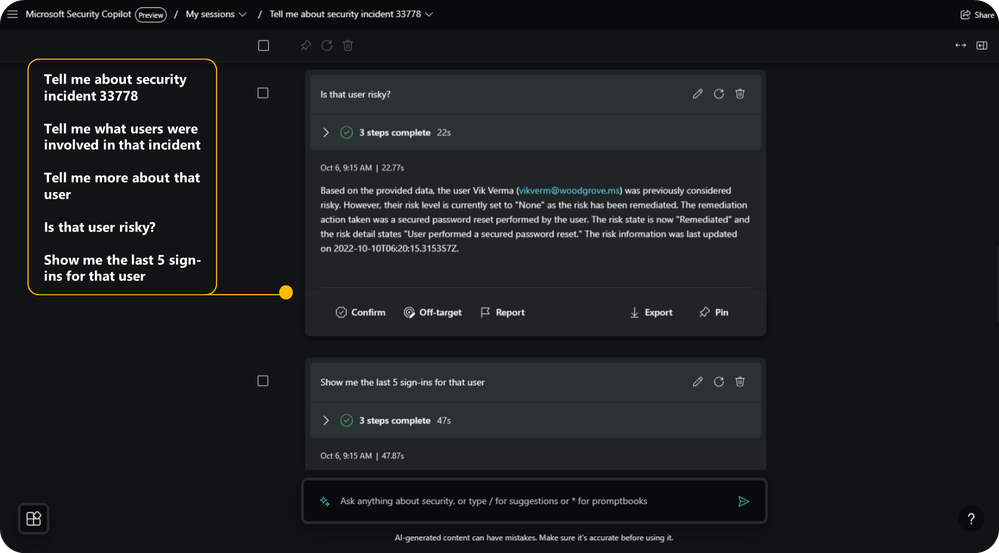

Microsoft Entra provides rich information for Microsoft Defender for Identity (MDI) and Microsoft Sentinel for “e. Monitor(ing) the use of system accounts.” Yet, Security Copilot increases the utility of this trove of incidents and events further by easily summarizing details about the totality of a user’s authentications, associations, and privileged access as shown in the figure below.

Furthermore, SOC and Identity administrators alike can quickly surface every user in the environment with expired, risky, or dormant accounts. They can also take the next steps to “f. Disable system accounts” when accounts meet those criteria, or modify the identities and/or privileges. Much of this investigation and troubleshooting can be done without hunting through policies and configurations, and without crafting a KQL query or PowerShell script from scratch. Security Copilot allows these two roles to do all of this using natural language prompts.
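To make the “expired or dormant” criteria concrete, here is a minimal Python sketch of the kind of filtering logic involved. It is illustrative only and is not how Security Copilot or Microsoft Entra ID works internally: the `Account` record, the `accounts_to_review` helper, and the 90-day dormancy threshold are all hypothetical and would be replaced by an organization's own data source and policy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Account:
    """Hypothetical account record; field names are assumptions for this sketch."""
    name: str
    last_sign_in: Optional[datetime]  # None means the account has never signed in
    expires_on: Optional[datetime] = None
    enabled: bool = True


def accounts_to_review(accounts, now, dormant_after_days=90):
    """Return (name, reason) pairs for enabled accounts that are expired or dormant."""
    cutoff = now - timedelta(days=dormant_after_days)  # assumed policy threshold
    flagged = []
    for acct in accounts:
        if not acct.enabled:
            continue  # already disabled, nothing further to do
        if acct.expires_on is not None and acct.expires_on <= now:
            flagged.append((acct.name, "expired"))
        elif acct.last_sign_in is None or acct.last_sign_in < cutoff:
            flagged.append((acct.name, "dormant"))
    return flagged
```

The output of a check like this would feed the “f. Disable system accounts” step: each flagged account is a candidate for review, not an automatic disablement.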

Alex Weinert, VP of Identity Security at Microsoft, recently spoke of the narrowing gap between these two types of administrators, skillsets, and their teams in Episode 2 of The Defender’s Watch. Alex explains, “it’s more nuanced than… relying on your SOC team to catch things that are happening in Identity. Not all Identities are the same. Not all your servers are the same. We want to be making sure the two teams are working together to build a map of what are those critical resources and that there’s a feedback loop… listening to the SOC on the other side understanding what’s happening in the organization and what are we going to do as administrators [given investigation to remediation of an incident can take time]”. Security Copilot can be the accelerant for incidents and intelligence to drive Account Management and identity policy change.

Alex also quipped “if you’re an Identity Architect go buy your SOC team a pizza and get to know them” as he expressed the need for collaboration across Identity and SOC teams for access control. Ironically, Domino’s just rolled out unified identity with Microsoft Entra ID.

3.1.2. Access Enforcement

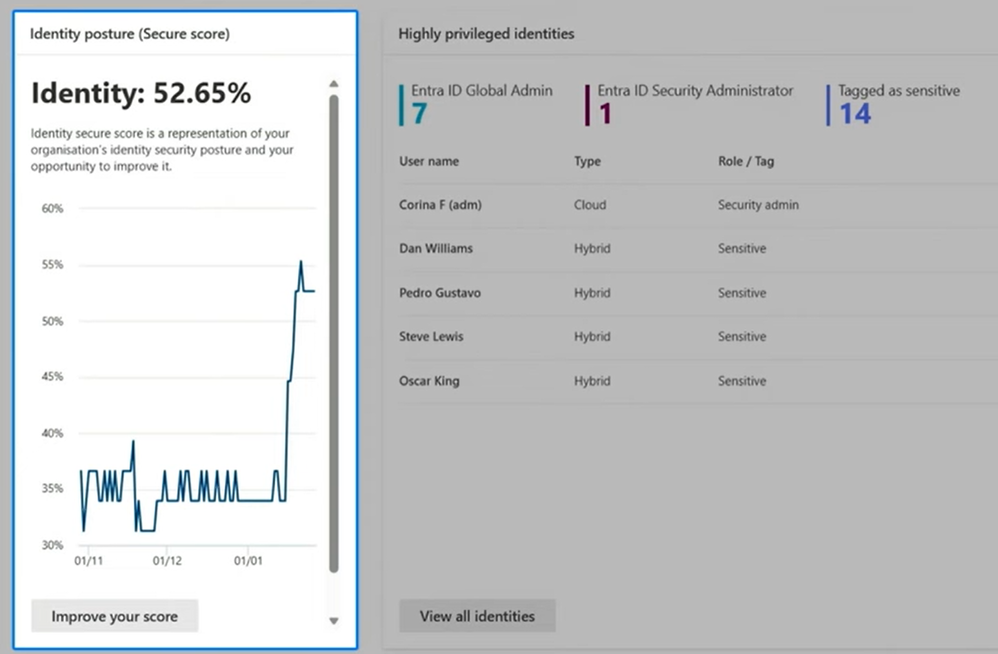

Security Copilot may help organizations enforce Microsoft Entra ID access control policies day to day and modify configurations to increase the identity score shown below. An Identity administrator or member of the SOC can also quickly build an audit log query, for example to detect when a new credential is added to an application registration, by simply asking Security Copilot for the applicable KQL code. Individuals interviewed for CMMC assessments can also use Security Copilot to quickly surface a summary of activities completed by the organization’s Microsoft Entra ID (formerly Azure Active Directory) privileged users, identify when changes to Conditional Access policies were made, and more.

When going through a CMMC assessment, an assessor will be looking to determine if approved authorizations for “logical access” to CUI and system resources are enforced. Taking a step away from Security Copilot, it’s important to note the new MDI Identity Threat Detection and Response (ITDR) dashboard is one of the most elegant ways to show where and how enforcement is taking place, or where it may not be. In a single pane, administrators can see their identity score from Microsoft Secure Score updated daily with a quick link to see access control policies and “system configuration settings”, new instances where users have exhibited risky lateral movement, and a summary of privileged identities with a quick link to view the full “list of approved authorizations”.

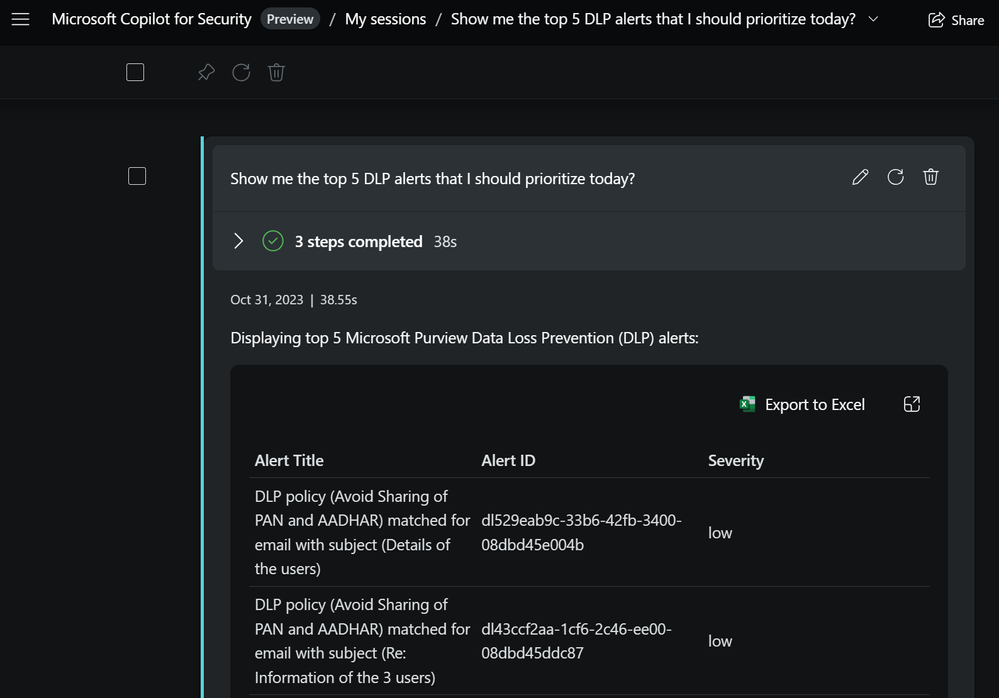

3.1.3. Information Flow Enforcement

Organizations meet this requirement by managing “information flow control policies and enforcement mechanisms to control the flow of CUI between designated sources and destinations (e.g., networks, individuals, and devices) within systems and between interconnected systems.” Microsoft Purview’s Information Protection label policies along with proper configuration of Data Loss Prevention (DLP) policies can prevent the flow of sensitive information between internal and external users via email, Teams, on-premises repositories and other applications. Security Copilot can share with users the top DLP alerts shown below, give a summary or explanation of an alert, and assist in adjusting policy based upon the alert scenario.

3.1.5 Least Privilege

Applying least privilege to accounts can often be combined with managing the functions they can perform, such as executing code or granting elevated access. Once an organization turns on Microsoft Defender for Cloud and Microsoft Entra ID Privileged Identity Management (PIM) for its resources in Azure or other infrastructure, users can be granted just-in-time access to virtual machines and other resources. Conversely, those same users can lose access based upon suspicious behavior like clearing event logs or disabling antimalware capabilities. Security Copilot can be used in the Microsoft Entra admin portal to guide the administrator in creating notification policies or conducting access reviews for such activities.

Security Copilot may also be used to identify where users have more than ‘just enough access’, or help the administrator create lifecycle workflows where a user’s privileges need modification based on changes in their role or group. On a final note, the draft of NIST 800-171Ar3 specifies that an assessor would possibly need to examine a list of access authorizations and validate where privileges were removed or reassigned during a given period – all of which can be generated in reports aided by Security Copilot.
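The idea of finding users with more than ‘just enough access’ comes down to comparing what is assigned against what is required. The Python sketch below shows that comparison under stated assumptions: the `excess_privileges` helper and both input mappings are hypothetical, and in practice the assigned roles would come from an identity system export while the required roles would come from the organization's own role definitions.

```python
def excess_privileges(assigned, required):
    """Map each user to the roles they hold beyond what their function requires.

    Both arguments are hypothetical dicts of user name -> iterable of role names.
    Users with no entry in `required` are treated as needing no roles.
    """
    report = {}
    for user, roles in assigned.items():
        extra = set(roles) - set(required.get(user, ()))
        if extra:
            report[user] = sorted(extra)  # sorted for stable, readable output
    return report


# Example with made-up users; the role names are used for illustration only.
assigned = {
    "alice": ["Security Reader"],
    "bob": ["Security Reader", "Global Administrator"],
}
required = {
    "alice": ["Security Reader"],
    "bob": ["Security Reader"],
}
print(excess_privileges(assigned, required))
```

A report like this is a starting point for the access authorization evidence an assessor may ask for, since it shows which privileges are candidates for removal or reassignment.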

3.1.11 Session Termination

This requirement has some art along with science. An organization can define “conditions or trigger events that require automatic session termination” by periods of inactivity, time of day, risky behavior, and more. Microsoft Entra ID defaults reauthentication requests to a rolling 90 days, but that may be too infrequent for users who access sensitive data sets daily, such as an Azure subscription with Windows servers holding CUI. Security Copilot can help administrators develop Conditional Access policies based on sign-in frequency, session type (from a managed or non-managed device), or sign-in risk. Security Copilot can also be prompted to help a SOC analyst reason over permission analytics to determine the impact of a user who’s exhibiting risky behavior and take subsequent action to terminate a session outside of the normal ‘conditions’.
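As a rough illustration of how inactivity, time of day, and risk can combine into termination triggers, consider the Python sketch below. It is not a Conditional Access policy and does not call any Microsoft API: the `Session` record, the `termination_reasons` helper, the 15-minute idle limit, and the 06:00 to 20:00 window are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, time, timedelta


@dataclass
class Session:
    """Hypothetical session record; field names are assumptions for this sketch."""
    user: str
    last_activity: datetime
    sign_in_risk: str  # "low", "medium", or "high"


def termination_reasons(session, now, max_idle=timedelta(minutes=15),
                        allowed_hours=(time(6, 0), time(20, 0))):
    """Return the list of triggers that apply; an empty list means keep the session."""
    reasons = []
    if now - session.last_activity > max_idle:
        reasons.append("inactivity")
    start, end = allowed_hours
    if not (start <= now.time() <= end):
        reasons.append("outside allowed hours")
    if session.sign_in_risk == "high":
        reasons.append("high sign-in risk")
    return reasons
```

Returning every matching reason, rather than a single yes or no, keeps a record of why a session was ended, which can help when documenting the defined conditions for an assessment.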

3.1.16 Wireless Access and 3.1.18 Access Control for Mobile Devices

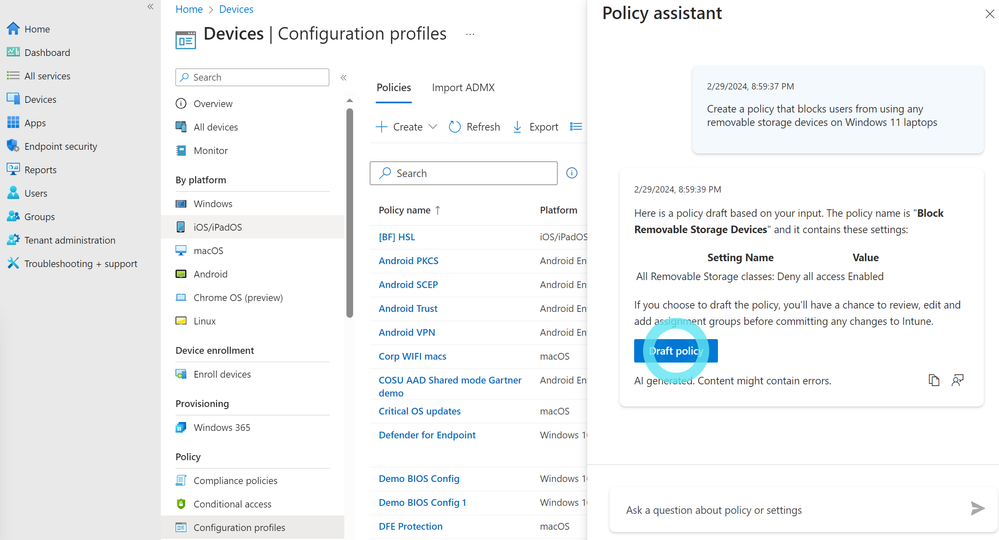

Whether the endpoint is a laptop or another type of mobile device, Security Copilot can aid users within the Microsoft Intune admin center in creating policies for “usage restrictions, configuration requirements, and connection requirements” when wirelessly accessing systems of record. Below is an example of the embedded Security Copilot experience where we want to create a policy for Windows laptops in our environment.

Users can also ask Security Copilot to summarize an existing policy for devices in the environment, as well as generate or explore Microsoft Entra ID conditional access policies.

“Authoriz[ing] each type of wireless access” or “connection of mobile devices” will require policies that span multiple technologies. In many cases, administrators tasked with creating or managing these policies may not have the combined domain knowledge, and Security Copilot can help fill those skill gaps.

Meeting NIST 800-171 with Limited Resources

Joy Chik wrote in her blog, 5 ways to secure identity and access for 2024, “Identity teams can use natural language prompts in Copilot to reduce time spent on common tasks, such as troubleshooting sign-ins and minimizing gaps in identity lifecycle workflows. It can also strengthen and uplevel expertise in the team with more advanced capabilities like investigating users and sign-ins associated with security incidents while taking immediate corrective action.”

Microsoft Security Copilot is an advanced security solution that helps companies protect CUI access and prepare for CMMC assessment by elevating the skillset of almost every cybersecurity tool and professional in the organization. It’s also bringing the identity team and the SOC team closer together than ever before. DIB companies working with limited resources and MSSPs struggling to keep up with demand will both likely look to creatively deploy AI solutions such as Security Copilot in the near future.

Additional Resources

- Blog 1 of 2, Microsoft Security Copilot and NIST 800-171: System and Information Integrity (3.14.)

- Prompt users for reauthentication on sensitive apps and high-risk actions with Conditional Access

- Preparing for Security Copilot in US Government Clouds

- Microsoft unveils expansion of AI for security and security for AI at Microsoft Ignite