This post has been republished via RSS; it originally appeared at: Microsoft Tech Community - Latest Blogs - .

*Please note: This workbook is in Public Preview. The core functionality is finished, but some supplementary content is still being finalized. A future update will move it to general availability (GA).*

Hate JSON templates? Looking to make your own Codeless Connectors for Microsoft Sentinel? You’re in luck. This workbook provides a UI for creating Codeless Connectors, designed to make the process as easy as possible.

This solution has not been merged into the main Microsoft Sentinel repository yet, so it does not appear in Content Hub. For the time being, you can find it in my personal repo: raw.githubusercontent.com/malowe101/Sentinel-Projects/master/CCP Builder Preview/CCP-Preview-Full-Workbook.json.

Prerequisites:

- Microsoft Sentinel Contributor permissions

- Registered application within Entra ID

- Any required permissions or details needed for external API sources

Registering an Application:

- Follow the steps in the public document: Quickstart: Register an app in the Microsoft identity platform - Microsoft identity platform | Microsoft Learn

Design:

The workbook is designed to mimic the experience of deploying an ARM template. The tabs are currently:

- Collection: This section has the user configure the components needed for data collection. By the end of it, the collection portion of the connector should be ready to go. These components are:

  - The data collection endpoint

  - The data collection rule

  - The API request

  - Paging

  - Response parsing

  - Authentication

- Connector Summary: This section has the user configure basic details that are primarily shown in the connector gallery. The components are:

  - The connector title

  - The publisher associated with the connector

  - A description of what the connector is and what it collects

  - Queries used for tracking ingestion and connection (optional)

- Connector Details: This section has the user configure additional UI components, permissions, and connection criteria. The components are:

  - Prerequisites

  - Instructions title (optional)

  - Instructions description (optional)

  - The instructions JSON body (optional)

- Deploy: This section reflects what the user has entered throughout the workbook and serves as the last stop before deploying the template. The deployed template is hosted in GitHub and receives the workbook values as parameters at deployment time.
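To make the Deploy hand-off more concrete: an ARM template receives its inputs through a parameters object in which each parameter is wrapped as an object with a `value` key. The Python sketch below wraps a set of workbook values in that standard parameters file format. It only illustrates the format and is not the workbook's own code. The parameter names (connectorTitle, publisher, tableName) are hypothetical placeholders; the GitHub-hosted template defines the real ones.

```python
import json

# Standard $schema URL for ARM deployment parameter files.
PARAMETERS_SCHEMA = (
    "https://schema.management.azure.com/schemas/2019-04-01/deploymentParameters.json#"
)


def to_arm_parameters(values: dict) -> dict:
    """Wrap plain workbook values in the ARM deployment parameters file shape.

    ARM expects every parameter as an object with a "value" key.
    """
    return {
        "$schema": PARAMETERS_SCHEMA,
        "contentVersion": "1.0.0.0",
        "parameters": {name: {"value": value} for name, value in values.items()},
    }


if __name__ == "__main__":
    # Hypothetical parameter names; the real template defines its own.
    workbook_values = {
        "connectorTitle": "Contoso Events",
        "publisher": "Contoso",
        "tableName": "ContosoEvents_CL",
    }
    print(json.dumps(to_arm_parameters(workbook_values), indent=2))
```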

Components:

- Workbook: Hosts the experience and provides all of the functionality used in the builder.

- GitHub: Hosts the template file that is used to deploy the connectors.

- Parameters: Used throughout the workbook to provide choices for the user and to store user input.

- Tabs: Used within the builder to let users change the options and steps shown.

- Text boxes: Used within the builder to provide help, tips, and instructions where needed.

- Custom endpoint query: A query type that lets the API endpoint and credentials be tested within the workbook to confirm they are valid.

- ARM Deploy: A button action type that lets the workbook pull an ARM template from GitHub. The button is configured to send all of the user input to the template for deployment.

Workflow:

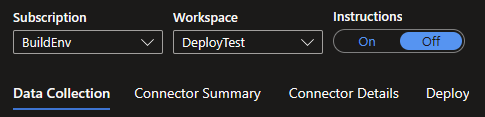

- Open the workbook.

- Select a subscription and workspace.

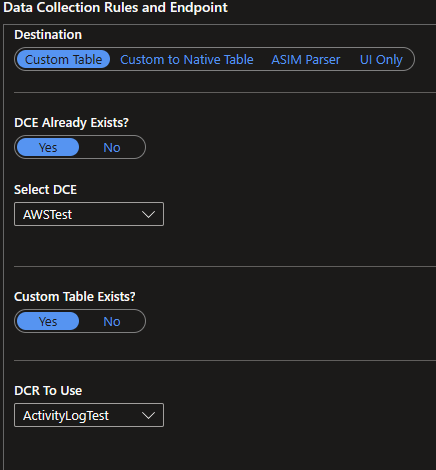

- Fill out the destination, data collection endpoint (DCE), and data collection rule (DCR). The destination options are:

  - Custom table: The connector sends data to a custom table.

  - Custom to Native Table: The connector sends data to the DCE/DCR, which routes the logs to a native table.

  - ASIM Table (Not Finished): The connector sends data to the DCE/DCR to be normalized and routed to the built-in table of interest.

  - UI Only: The connector already exists as a resource elsewhere but needs a UI in the Microsoft Sentinel connector gallery.

- If a new table is needed, follow the steps for creating the table and a new DCR.

- Clicking the table wizard option opens the UI wizard that is normally seen via Log Analytics Workspaces > Tables > New Table.

  - A sample JSON file is required for this method.

  - It is recommended that the table name reflect the connector.

  - It is recommended to create a new DCR at the same time as the table and to include the table name in the DCR name.


Note: For more information about the custom table creation experience, please see the documentation.
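The table wizard derives the table's columns from the sample JSON file, so it can help to check what a sample implies before uploading it. The Python sketch below approximates a mapping from JSON values to Log Analytics column types. It is a simplified assumption, not the wizard's actual inference logic: every string (including timestamp-like strings) is treated as string, and only the first record of an array is inspected. It also adds a TimeGenerated column, because custom Log Analytics tables require one.

```python
import json


def infer_column_type(value) -> str:
    """Map a JSON value to a Log Analytics column type name (simplified)."""
    # bool is checked before int because bool is a subclass of int in Python.
    if isinstance(value, bool):
        return "boolean"
    if isinstance(value, int):
        return "long"
    if isinstance(value, float):
        return "real"
    if isinstance(value, (dict, list)):
        return "dynamic"
    # Everything else, including null and timestamp-like strings, becomes string.
    return "string"


def infer_columns(sample_json: str) -> list:
    """Return a column list for a sample JSON object or array of objects."""
    record = json.loads(sample_json)
    if isinstance(record, list):
        record = record[0]  # inspect only the first record
    columns = [{"name": "TimeGenerated", "type": "datetime"}]
    for name, value in record.items():
        if name != "TimeGenerated":
            columns.append({"name": name, "type": infer_column_type(value)})
    return columns


if __name__ == "__main__":
    sample = '{"id": 7, "severity": "High", "score": 0.9, "tags": ["a", "b"]}'
    for column in infer_columns(sample):
        print(column["name"], column["type"])
```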

- Clicking the API option expands a section within the workbook that creates a new custom table with the specified schema.

  - The schema must be known in advance in order to define the column names and types correctly.

  - It is recommended that the table name reflect the connector.

  - Beneath the table creation section is a section for creating the DCR. The only value needed there is the DCR name, as the other properties are pulled from other parts of the workbook.

- If sending custom logs to a native table, an editor appears for the selected DCR. Modify its JSON to reroute the data as desired. For more information, please see the Azure Monitor documentation.

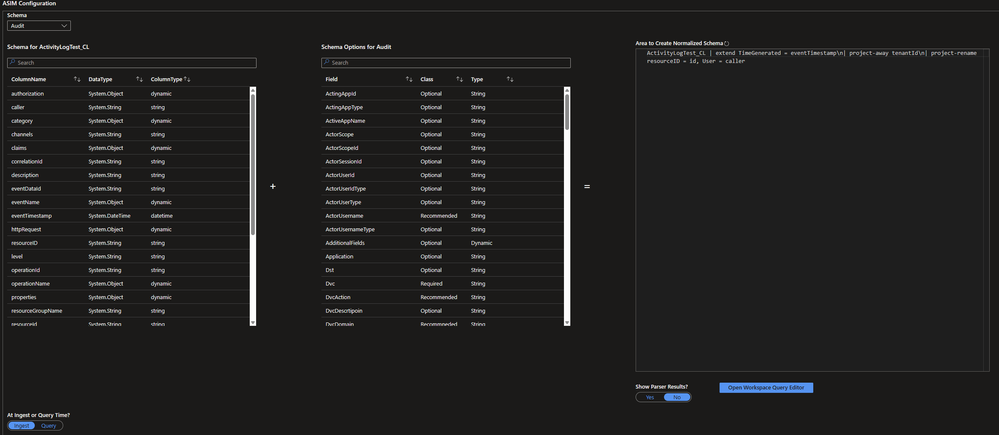

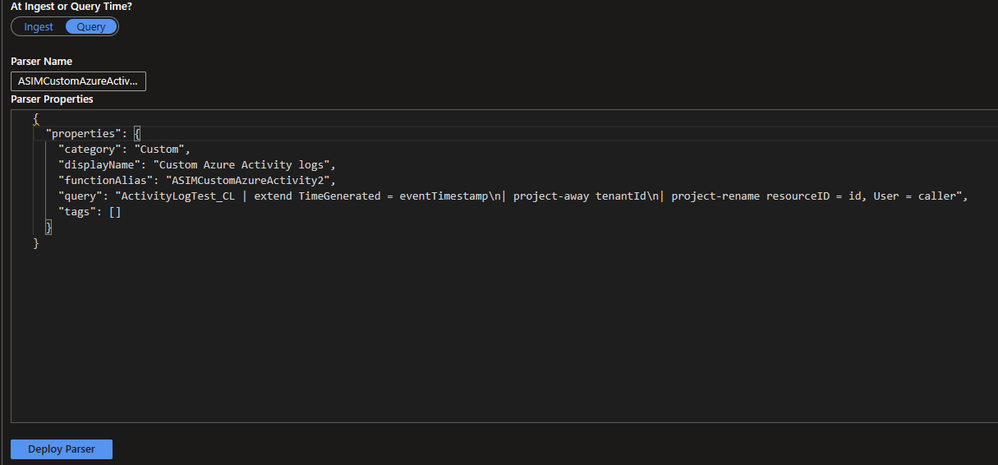
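Rerouting in the DCR editor usually comes down to the dataFlows section: each data flow lists its input streams, its destinations, a KQL transformation (transformKql), and an outputStream. Native tables are addressed as Microsoft-<TableName> and custom tables as Custom-<TableName>. The Python sketch below rewrites the data flow for one input stream so that it targets a native table. The stream, destination, and source field names are hypothetical, and the transformation must still produce columns that match the native table's schema; check the Azure Monitor documentation for which native tables accept data this way.

```python
import copy
import json


def reroute_to_native(dcr: dict, input_stream: str, native_table: str,
                      transform_kql: str = "source") -> dict:
    """Return a copy of a DCR body whose data flow for input_stream targets a native table.

    The original dcr dict is left unchanged.
    """
    updated = copy.deepcopy(dcr)
    for flow in updated["properties"]["dataFlows"]:
        if input_stream in flow.get("streams", []):
            flow["outputStream"] = f"Microsoft-{native_table}"
            flow["transformKql"] = transform_kql
    return updated


if __name__ == "__main__":
    # Hypothetical DCR fragment that currently writes to a custom table.
    dcr = {
        "properties": {
            "dataFlows": [
                {
                    "streams": ["Custom-ContosoEvents_CL"],
                    "destinations": ["workspaceDestination"],
                    "transformKql": "source",
                    "outputStream": "Custom-ContosoEvents_CL",
                }
            ]
        }
    }
    # Hypothetical source fields "host" and "message" mapped to Syslog columns.
    kql = "source | project TimeGenerated, Computer = host, SyslogMessage = message"
    print(json.dumps(reroute_to_native(dcr, "Custom-ContosoEvents_CL", "Syslog", kql), indent=2))
```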

- If using the ASIM section, the existing schema of the custom table is shown next to the schema of a basic ASIM parser. Use this section to normalize the custom logs to match the ASIM parser. Use the deploy button to deploy the normalization either before ingestion (ingest-time) or after ingestion (query-time).

- If using ingest-time, modify the DCR body for the selected DCR and paste the normalized KQL as the value of transformKql.

- If using query-time, enter a parser name and the properties for it.

- If using UI only, the collection details do not need to be set since the connector already exists elsewhere.

Note: The API calls for creating a table or DCR may return a failure message even when the operation succeeds. For more details on the API, please see the documentation.

- Refresh the workbook so that the new resources load in the lists.

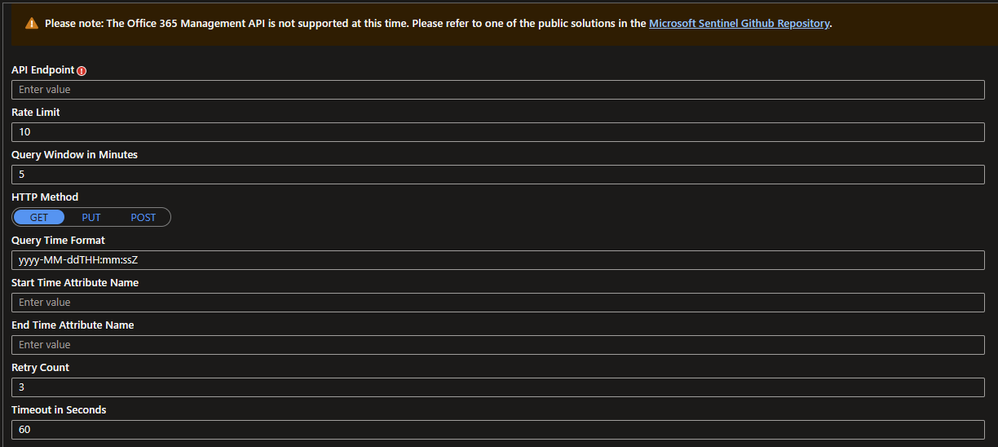

Request, Response, Paging, and Authentication:

- Enter the API endpoint.

- Select the HTTP method.

- Enter a timestamp format if needed.

- Fill out the remaining portions of the request section if needed.

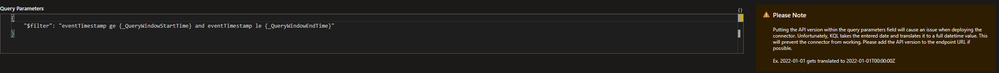
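If the API filters by time, each polling request typically carries a start and an end timestamp for the window being collected, rendered in whatever format the API expects. The Python sketch below illustrates that idea. The parameter names startTime and endTime and the five-minute window are assumptions for illustration, and Python strftime codes are used, which may differ from the syntax the workbook's timestamp format field accepts.

```python
from datetime import datetime, timedelta, timezone


def window_params(end: datetime, window_minutes: int, time_format: str,
                  start_name: str = "startTime", end_name: str = "endTime") -> dict:
    """Build query parameters describing the window [end - window_minutes, end]."""
    start = end - timedelta(minutes=window_minutes)
    return {
        start_name: start.strftime(time_format),
        end_name: end.strftime(time_format),
    }


if __name__ == "__main__":
    # Use UTC so the literal "Z" suffix in the format is accurate.
    now = datetime.now(timezone.utc).replace(second=0, microsecond=0)
    print(window_params(now, 5, "%Y-%m-%dT%H:%M:%SZ"))
```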

- If using query parameters, set 'Using Query Parameters' to yes.

- Enter the desired filter. Note that api-version is not supported in this box: datetime values entered here are translated to a full datetime, so a date-formatted api-version (such as 2022-10-01) would be altered and break the API call. It is recommended to include the api-version within the API endpoint URL instead.

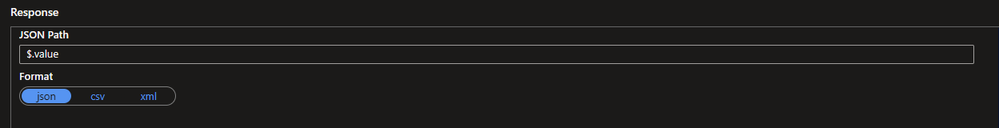
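Following that recommendation, the api-version stays in the endpoint URL and only the filter values are appended as query parameters. The Python sketch below composes such a URL with the standard library. The endpoint, api-version, and filter are hypothetical, and the workbook performs its own URL composition internally.

```python
from urllib.parse import parse_qs, urlencode, urlsplit


def build_request_url(endpoint: str, query_params: dict) -> str:
    """Append URL-encoded query parameters to an endpoint that may already have a query string."""
    if not query_params:
        return endpoint
    separator = "&" if urlsplit(endpoint).query else "?"
    return endpoint + separator + urlencode(query_params)


if __name__ == "__main__":
    # api-version is part of the endpoint itself, not the filter box.
    endpoint = "https://api.contoso.example/v1/events?api-version=2023-01-01"
    url = build_request_url(endpoint, {"filter": "severity eq 'High'"})
    print(url)
    print(parse_qs(urlsplit(url).query))
```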

- Fill out the response section with JSONPath. For assistance with JSONPath, please see https://learn.microsoft.com/azure/data-explorer/kusto/query/jsonpath?WT.mc_id=Portal-fx.

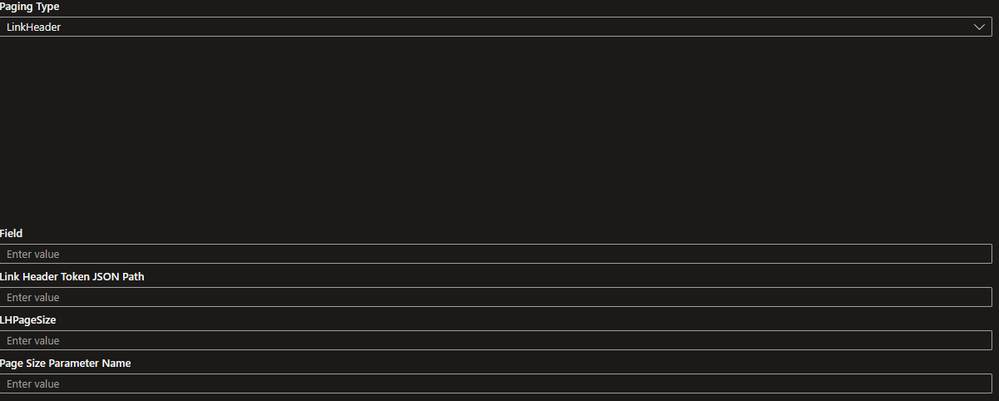
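Before committing a JSONPath expression, it can help to try it against a saved sample response. The Python sketch below evaluates a deliberately small subset of JSONPath ($, .name, [n], and [*]). It is not a full JSONPath implementation and does not reproduce the connector's evaluator, so filters, recursive descent, and bracketed names are unsupported. The sample response is hypothetical.

```python
import re

# One path step: ".name", "[n]", or "[*]".
_STEP = re.compile(r"\.([A-Za-z_][\w-]*)|\[(\*|\d+)\]")


def jsonpath_select(document, path: str) -> list:
    """Evaluate a small JSONPath subset and return all matching values."""
    if not path.startswith("$"):
        raise ValueError("path must start with '$'")
    rest = path[1:]
    matches = [document]
    pos = 0
    while pos < len(rest):
        step = _STEP.match(rest, pos)
        if step is None:
            raise ValueError(f"unsupported syntax at {rest[pos:]!r}")
        key, index = step.group(1), step.group(2)
        next_matches = []
        for node in matches:
            if key is not None:
                if isinstance(node, dict) and key in node:
                    next_matches.append(node[key])
            elif index == "*":
                if isinstance(node, list):
                    next_matches.extend(node)
                elif isinstance(node, dict):
                    next_matches.extend(node.values())
            elif isinstance(node, list) and int(index) < len(node):
                next_matches.append(node[int(index)])
        matches = next_matches
        pos = step.end()
    return matches


if __name__ == "__main__":
    response = {"data": {"events": [{"id": 1}, {"id": 2}]}, "next": "abc"}
    print(jsonpath_select(response, "$.data.events[*]"))
```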

- Select a method for paging.

- Fill out the corresponding fields for the paging section.

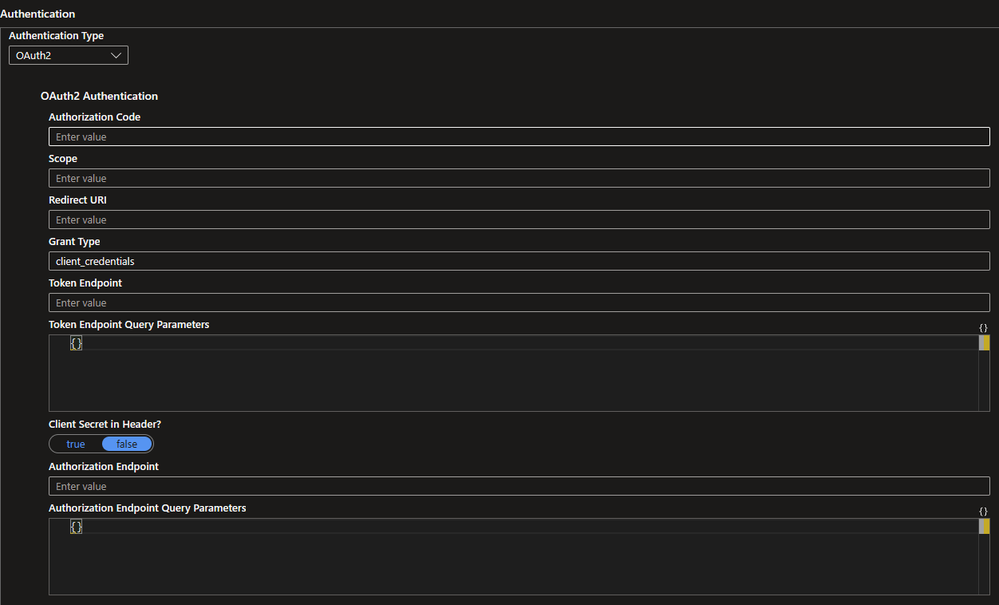
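Most paging methods follow the same loop: request a page, collect its events, read whatever the API returns to locate the next page, and stop when nothing more is returned. The Python sketch below shows a next-page-token variant against a stubbed fetch function. The field names events and nextPageToken are assumptions, and the max_pages cap is a defensive choice so that a misbehaving API cannot cause an endless loop.

```python
from typing import Any, Callable, Iterator, Optional


def fetch_all_pages(fetch_page: Callable[[Optional[str]], dict],
                    events_key: str = "events",
                    token_key: str = "nextPageToken",
                    max_pages: int = 100) -> Iterator[Any]:
    """Yield events from successive pages until no next-page token is returned."""
    token: Optional[str] = None
    for _ in range(max_pages):
        page = fetch_page(token)  # the first call passes None (no token yet)
        yield from page.get(events_key, [])
        token = page.get(token_key)
        if not token:
            return


if __name__ == "__main__":
    # Stubbed API: each token maps to a canned page.
    pages = {
        None: {"events": [{"id": 1}, {"id": 2}], "nextPageToken": "p2"},
        "p2": {"events": [{"id": 3}]},
    }
    print(list(fetch_all_pages(lambda token: pages[token])))
```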

- Select an authentication method (Basic, APIKey, or OAuth2).

- Fill out the corresponding fields for the authentication section.

- Move on to the next tab to configure the UI.
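For reference, these authentication methods commonly translate into HTTP values as follows. Basic sends base64-encoded username:password credentials. APIKey sends the key in a header, sometimes with a prefix such as Bearer. The OAuth2 client credentials grant exchanges a client ID and secret for an access token, which is then sent as a Bearer token. The Python sketch below only builds those values and sends nothing. The header names and prefixes depend on the external API, so the defaults shown are assumptions, and the connector's own OAuth2 support may use a different flow.

```python
import base64
from urllib.parse import urlencode


def basic_auth_header(username: str, password: str) -> dict:
    """Authorization header for HTTP Basic authentication."""
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return {"Authorization": f"Basic {token}"}


def api_key_header(key: str, header_name: str = "Authorization", prefix: str = "") -> dict:
    """API key header; the header name and optional prefix vary by API."""
    value = f"{prefix} {key}" if prefix else key
    return {header_name: value}


def oauth2_client_credentials_body(client_id: str, client_secret: str, scope: str = "") -> str:
    """Form-encoded body for an OAuth2 client credentials token request."""
    fields = {
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
    }
    if scope:
        fields["scope"] = scope
    return urlencode(fields)


def bearer_header(access_token: str) -> dict:
    """Authorization header carrying an OAuth2 access token."""
    return {"Authorization": f"Bearer {access_token}"}


if __name__ == "__main__":
    print(basic_auth_header("Aladdin", "open sesame"))
    print(api_key_header("my-key", header_name="x-api-key"))
    print(oauth2_client_credentials_body("my-client-id", "my-secret"))
```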

Creating the UI:

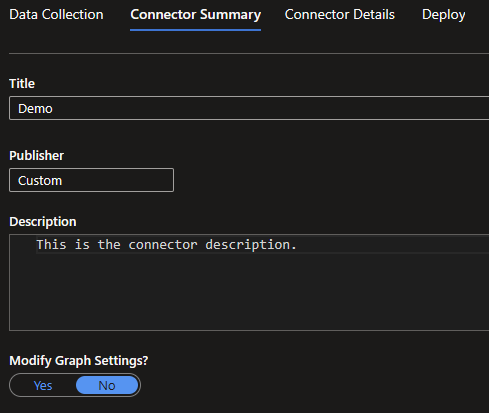

- Go to the Connector Summary tab.

- Fill out the connector title, publisher, and description. These are what appear in the connector gallery in Microsoft Sentinel.

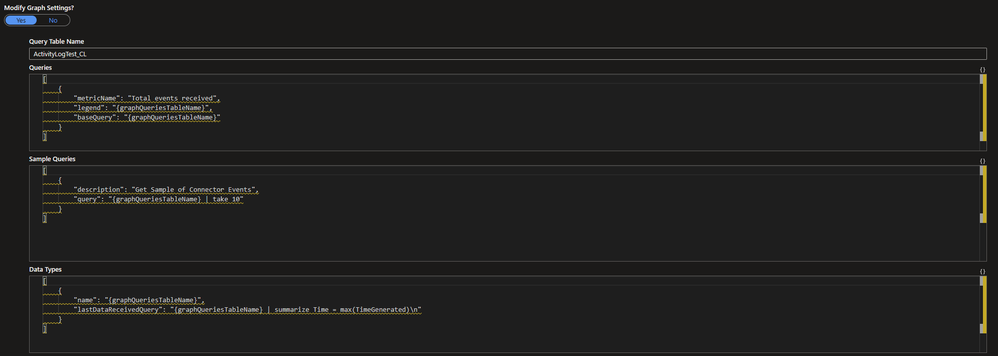

- Optional: Customize the graph visual section. This graph is used to show whether data is being ingested.

- Move on to the next tab to configure the connector page.

Detailing the Connector:

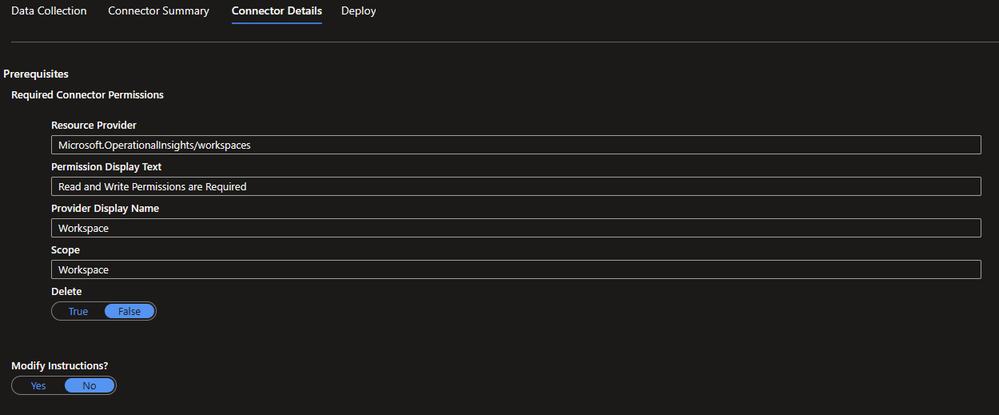

- Go to the Connector Details tab. This sets what is shown on the data connector page.

- Optional: Modify the permissions. These are already set and do not need to be modified.

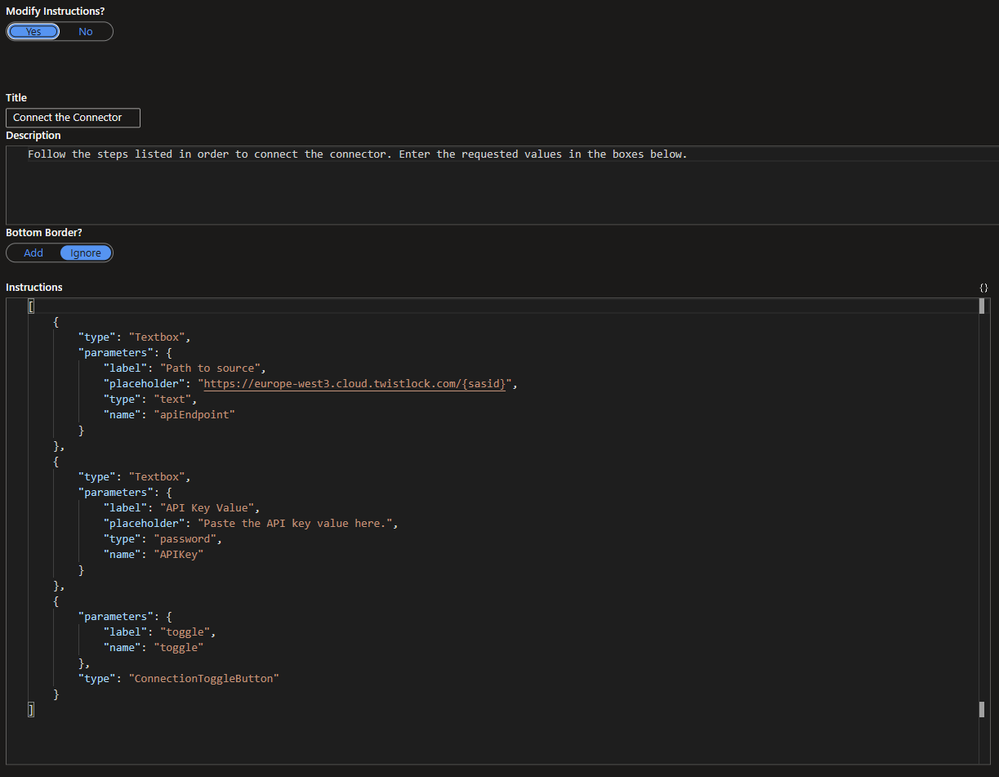

- Optional: Customize the instructions title, description, and JSON body. These describe the steps required to configure the connector. The JSON can be modified to show different instruction options, but for simplicity's sake, only the simplest instructions are provided out of the box. If interested, please see the instructions section of the documentation.

- Move on to the last tab to review the connector details and deploy the connector.

Deploy the Connector:

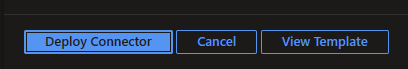

- Go to the Deploy tab.

- Review the details highlighted in the orange section to confirm that the information is correct. In particular, check whether any values are empty or contain '<unset>'.

- If everything looks good, click the deploy button.

- Optional: Click review template to manually review the ARM template and parameters. This option can also be used to save the connector template locally.

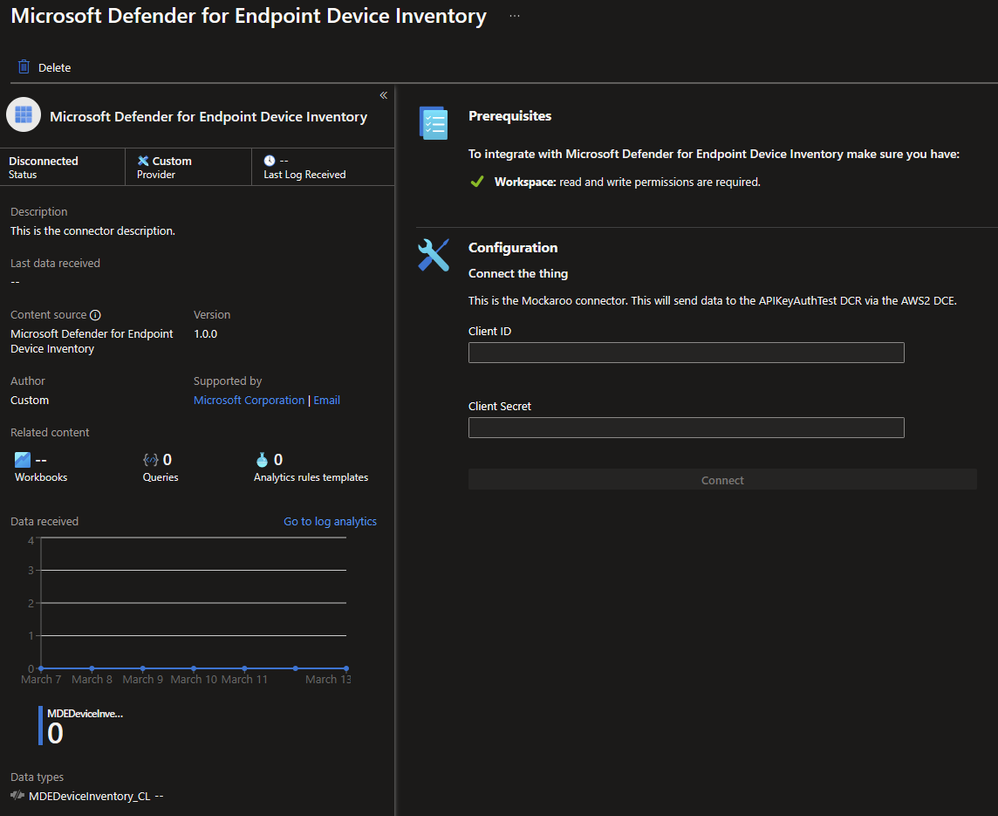
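The '<unset>' check can also be automated against a parameters file saved through the review template option. The Python sketch below walks every parameter value, including nested objects and arrays, and reports values that are null, empty, or contain '<unset>'. It assumes the standard ARM parameters file layout; the parameter names in the test data and the script name in the usage comment are hypothetical, and Key Vault references are skipped because they carry no inline value.

```python
import json
import sys


def _collect(path: str, value, problems: list) -> None:
    """Recursively record paths whose values are empty, null, or '<unset>'."""
    if isinstance(value, dict):
        if not value:
            problems.append(path)
        for key, child in value.items():
            _collect(f"{path}.{key}", child, problems)
    elif isinstance(value, list):
        if not value:
            problems.append(path)
        for index, child in enumerate(value):
            _collect(f"{path}[{index}]", child, problems)
    elif value is None or (isinstance(value, str) and (not value.strip() or "<unset>" in value)):
        problems.append(path)


def find_unset_parameters(parameters_file: dict) -> list:
    """Return the paths of parameter values that look empty or unset."""
    problems: list = []
    for name, entry in parameters_file.get("parameters", {}).items():
        if "reference" in entry:
            continue  # Key Vault reference: no inline value to inspect
        _collect(name, entry.get("value"), problems)
    return problems


if __name__ == "__main__":
    # Usage (hypothetical script name): python check_params.py parameters.json
    with open(sys.argv[1], encoding="utf-8") as handle:
        issues = find_unset_parameters(json.load(handle))
    print("\n".join(issues) if issues else "No empty or '<unset>' values found.")
```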

Connecting the Connector:

- Go to Microsoft Sentinel.

- Go to Data Connectors.

- Find the newly created connector.

- Click ‘open connector page’.

- Enter the details required to create the API connection.

Troubleshooting:

Q: My connector deployed but won’t connect. What could be wrong?

A: Most likely, a detail was missed or entered incorrectly. Review the template and the details that were deployed.

Q: My connector is connected but data is not ingesting. What could be wrong?

A: Most likely, either the data does not align with the schema or the API response is not being parsed into the shape the table expects. Test the JSONPath that the connector uses for the response and make sure the result aligns with the schema defined in the destination table.
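One quick way to run that comparison is to extract records from a sample response with the same JSONPath the connector uses, then compare their field names with the destination table's columns. The Python sketch below performs only the name comparison (exact, case-sensitive matches; types are not checked). The column list and records are hypothetical, and TimeGenerated is excluded from the missing-field check on the assumption that it is populated during ingestion, for example by the DCR transformation.

```python
def schema_mismatches(records: list, table_columns: list) -> dict:
    """Compare record field names with table column names (exact match)."""
    expected = {column["name"] for column in table_columns}
    seen = set()
    for record in records:
        seen.update(record.keys())
    return {
        # Columns the table defines but no record supplies.
        "missing_from_data": sorted(expected - seen - {"TimeGenerated"}),
        # Fields the records supply but the table does not define.
        "not_in_table": sorted(seen - expected),
    }


if __name__ == "__main__":
    columns = [
        {"name": "TimeGenerated", "type": "datetime"},
        {"name": "EventId", "type": "long"},
        {"name": "UserName", "type": "string"},
    ]
    records = [{"EventId": 1, "user_name": "alice"}, {"EventId": 2}]
    print(schema_mismatches(records, columns))
```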

Q: I am required to save any templates that are deployed. How can I save the connectors?

A: When deploying the connector, there is a ‘view template’ option that shows the template and the parameters that will be deployed, so they can be saved before deployment.

Q: I want to disconnect my connector but it doesn’t want to disconnect when I click the button. What can I do?

A: Newly created connectors that are active can take a few attempts to disconnect. It may help to refresh the Azure session and try the disconnection again.

Unsupported Functionality in This Tool:

- Multiple API endpoints

- Content fetching via Content URLs

- Deploying a connector in Azure Government

For support issues with connectors: this tool only uses existing Codeless Connector components, so route any issues with the connectors themselves (as opposed to the workbook) to official Microsoft Support. For more information about Codeless Connectors, please refer to our main documents:

- Codeless connector document: Create a codeless connector for Microsoft Sentinel | Microsoft Learn

- Advanced workbook concepts: Advanced Workbook Concepts with Workbooks 202 - Microsoft Community Hub

- Workbook documentation: Azure Workbooks overview - Azure Monitor | Microsoft Learn