This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

This article shows how to quickly build chat applications using Python and leveraging powerful technologies such as OpenAI ChatGPT models, Embedding models, LangChain framework, ChromaDB vector database, and Chainlit, an open-source Python package that is specifically designed to create user interfaces (UIs) for AI applications. These applications are hosted on Azure Container Apps, a fully managed environment that enables you to run microservices and containerized applications on a serverless platform.

- Simple Chat: This simple chat application utilizes OpenAI's language models to generate real-time completion responses.

- Documents QA Chat: This chat application goes beyond simple conversations. Users can upload up to 10 .pdf and .docx documents, which are then processed to create vector embeddings. These embeddings are stored in ChromaDB for efficient retrieval. Users can pose questions about the uploaded documents and view the Chain of Thought, enabling easy exploration of the reasoning process. The completion message contains links to the text chunks in the documents that were used as a source for the response.
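Before a document can be embedded, its text has to be cut into chunks small enough for the embedding model. The sketch below shows one simple way to do that with a fixed-size sliding window. It is an illustration only: the function name, the chunk size, and the overlap are assumptions, and the sample may well use a different splitter.

```python
from __future__ import annotations


def split_into_chunks(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character chunks that overlap slightly.

    chunk_size and overlap are illustrative defaults, not values from the sample.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in the range [0, chunk_size)")

    step = chunk_size - overlap  # how far the window advances each time
    chunks: list[str] = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once the current window has reached the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks


if __name__ == "__main__":
    for chunk in split_into_chunks("abcdefghij", chunk_size=4, overlap=1):
        print(chunk)
```

The overlap means a sentence that straddles a chunk boundary still appears whole in at least one chunk, which tends to help retrieval quality.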

Both applications use a user-defined managed identity to authenticate and authorize against Azure OpenAI Service (AOAI) and Azure Container Registry (ACR) and use Azure Private Endpoints to connect privately and securely to these services. The chat UIs are built using Chainlit, an open-source Python package designed explicitly for creating AI applications. Chainlit seamlessly integrates with LangChain, LlamaIndex, and LangFlow, making it a powerful tool for easily developing ChatGPT-like applications.

By following our example, you can quickly create sophisticated chat applications that utilize cutting-edge technologies, empowering users with intelligent conversational capabilities.

You can find the code and Visio diagrams in the companion GitHub repository. Also, check the following articles:

- Deploy and run an Azure OpenAI ChatGPT application on AKS via Bicep

- Deploy and run an Azure OpenAI ChatGPT application on AKS via Terraform

Prerequisites

- An active Azure subscription. If you don't have one, create a free Azure account before you begin.

- Visual Studio Code installed on one of the supported platforms along with the HashiCorp Terraform extension.

- Azure CLI version 2.49.0 or later installed. To install or upgrade, see Install Azure CLI.

- aks-preview Azure CLI extension of version 0.5.140 or later installed.

- Terraform v1.5.2 or later.

Architecture

The following diagram shows the architecture and network topology of the sample:

This sample provides two sets of Terraform modules to deploy the infrastructure and the chat applications.

Infrastructure Terraform Modules

You can use the Terraform modules in the terraform/infra folder to deploy the infrastructure used by the sample, including the Azure Container Apps Environment, Azure OpenAI Service (AOAI), and Azure Container Registry (ACR), but not the Azure Container Apps (ACA). The Terraform modules in the terraform/infra folder deploy the following resources:

- azurerm_virtual_network: an Azure Virtual Network with two subnets:

  - ContainerApps: this subnet hosts the Azure Container Apps Environment.

  - PrivateEndpoints: this subnet contains the Azure Private Endpoints to the Azure OpenAI Service (AOAI) and Azure Container Registry (ACR) resources.

- azurerm_container_app_environment: the Azure Container Apps Environment hosting the Azure Container Apps.

- azurerm_cognitive_account: an Azure OpenAI Service (AOAI) with a GPT-3.5 model used by the chatbot applications. Azure OpenAI Service gives customers advanced language AI with OpenAI GPT-4, GPT-3, Codex, and DALL-E models with Azure's security and enterprise promise. Azure OpenAI co-develops the APIs with OpenAI, ensuring compatibility and a smooth transition from one to the other. The Terraform modules create the following models:

  - GPT-35: a gpt-35-turbo-16k model is used to generate human-like and engaging conversational responses.

  - Embeddings model: the text-embedding-ada-002 model is used to transform input documents into meaningful and compact numerical representations called embeddings. Embeddings capture the semantic or contextual information of the input data in a lower-dimensional space, making it easier for machine learning algorithms to process and analyze the data effectively. Embeddings can be stored in a vector database, such as ChromaDB or Facebook AI Similarity Search, explicitly designed for efficient storage, indexing, and retrieval of vector embeddings.

- azurerm_user_assigned_identity: a user-defined managed identity used by the chatbot applications to acquire a security token to call the Chat Completion API of the ChatGPT model provided by the Azure OpenAI Service and to call the Embedding model.

- azurerm_container_registry: an Azure Container Registry (ACR) to build, store, and manage container images and artifacts in a private registry for all container deployments. In this sample, the registry stores the container images of the two chat applications.

- azurerm_private_endpoint: an Azure Private Endpoint is created for each of the following resources:

- azurerm_private_dns_zone: an Azure Private DNS Zone is created for each of the following resources:

- azurerm_log_analytics_workspace: a centralized Azure Log Analytics workspace is used to collect the diagnostics logs and metrics from all the Azure resources:

Application Terraform Modules

You can use the Terraform modules in the terraform/apps folder to deploy the Azure Container Apps (ACA) using the Docker container images stored in the Azure Container Registry you deployed in the previous step.

- azurerm_container_app: this sample deploys the following applications:

- chatapp: this simple chat application utilizes OpenAI's language models to generate real-time completion responses.

- docapp: This chat application goes beyond conversations. Users can upload up to 10 .pdf and .docx documents, which are then processed to create vector embeddings. These embeddings are stored in ChromaDB for efficient retrieval. Users can pose questions about the uploaded documents and view the Chain of Thought, enabling easy exploration of the reasoning process. The completion message contains links to the text chunks in the files that were used as a source for the response.

Azure Container Apps

Azure Container Apps (ACA) is a serverless compute service provided by Microsoft Azure that allows developers to easily deploy and manage containerized applications without the need to manage the underlying infrastructure. It provides a simplified and scalable solution for running applications in containers, leveraging the power and flexibility of the Azure ecosystem.

With Azure Container Apps, developers can package their applications into containers using popular containerization technologies such as Docker. These containers encapsulate the application and its dependencies, ensuring consistent execution across different environments.

Powered by Kubernetes and open-source technologies like Dapr, KEDA, and Envoy, the service abstracts away the complexities of managing the infrastructure, including provisioning, scaling, and monitoring, allowing developers to focus solely on building and deploying their applications. Azure Container Apps handles automatic scaling and load balancing, and natively integrates with other Azure services, such as Azure Monitor and Azure Container Registry (ACR), to provide a comprehensive and secure application deployment experience.

Azure Container Apps offers benefits such as rapid deployment, easy scalability, cost-efficiency, and seamless integration with other Azure services, making it an attractive choice for modern application development and deployment scenarios.

Azure OpenAI Service

The Azure OpenAI Service is a platform offered by Microsoft Azure that provides cognitive services powered by OpenAI models. One of the models available through this service is the ChatGPT model, which is designed for interactive conversational tasks. It allows developers to integrate natural language understanding and generation capabilities into their applications.

Azure OpenAI Service provides REST API access to OpenAI's powerful language models, including the GPT-3, Codex, and Embeddings model series. In addition, the new GPT-4 and ChatGPT model series have now reached general availability. These models can be easily adapted to your specific task, including but not limited to content generation, summarization, semantic search, and natural language-to-code translation. Users can access the service through REST APIs, the Python SDK, or the web-based interface in Azure OpenAI Studio.

You can use an Embeddings model to transform raw data or inputs into meaningful and compact numerical representations called embeddings. Embeddings capture the semantic or contextual information of the input data in a lower-dimensional space, making it easier for machine learning algorithms to process and analyze the data effectively. Embeddings can be stored in a vector database, such as ChromaDB or Facebook AI Similarity Search (FAISS), explicitly designed for efficient storage, indexing, and retrieval of vector embeddings.
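To make the idea of "similar meaning, similar vector" concrete, here is a minimal sketch of cosine similarity, a common way to compare two embeddings. The three-dimensional vectors are made-up toy values chosen for readability; real embeddings returned by a model such as text-embedding-ada-002 have far more dimensions.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Return the cosine of the angle between two vectors of equal length."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same number of dimensions")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # convention chosen here: a zero vector is similar to nothing
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    # Hypothetical toy embeddings, not real model output.
    cat = [0.9, 0.1, 0.0]
    kitten = [0.8, 0.2, 0.1]
    invoice = [0.0, 0.1, 0.9]
    print(cosine_similarity(cat, kitten) > cosine_similarity(cat, invoice))
```

A score close to 1 means the two vectors point in nearly the same direction, while a score close to 0 means they are unrelated.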

The Chat Completion API, which is part of the Azure OpenAI Service, provides a dedicated interface for interacting with the ChatGPT and GPT-4 models. This API is currently in preview and is the preferred method for accessing these models. The GPT-4 models can only be accessed through this API.

GPT-3, GPT-3.5, and GPT-4 models from OpenAI are prompt-based. With prompt-based models, the user interacts with the model by entering a text prompt, to which the model responds with a text completion. This completion is the model’s continuation of the input text. While these models are compelling, their behavior is also very sensitive to the prompt. This makes prompt construction a critical skill to develop. For more information, see Introduction to prompt engineering.
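With the Chat Completion API, the prompt takes the form of a list of messages, each with a role (system, user, or assistant) and a content string. The helper below is a minimal sketch of how such a list might be assembled. The function name, the system prompt, and the history limit are assumptions made for illustration and are not taken from the sample code.

```python
from __future__ import annotations


def build_messages(
    system_prompt: str,
    history: list[dict[str, str]],
    user_input: str,
    max_history: int = 10,
) -> list[dict[str, str]]:
    """Assemble the message list for a chat completion request.

    Only the most recent max_history messages are kept so that the prompt
    stays within the model's context window (the limit is illustrative).
    """
    recent = history[-max_history:] if max_history > 0 else []
    messages = [{"role": "system", "content": system_prompt}]
    messages.extend(recent)
    messages.append({"role": "user", "content": user_input})
    return messages


if __name__ == "__main__":
    history = [
        {"role": "user", "content": "What is Azure Container Apps?"},
        {"role": "assistant", "content": "A serverless platform for containers."},
    ]
    for message in build_messages("You are a helpful assistant.", history, "Does it scale?"):
        print(message["role"], "->", message["content"])
```

Because the system message is always placed first, changing the system prompt is often the simplest way to steer the model's behavior.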

Prompt construction can be complex. In practice, the prompt acts to configure the model weights to complete the desired task, but it's more of an art than a science, often requiring experience and intuition to craft a successful prompt. The goal of this article is to help get you started with this learning process. It attempts to capture general concepts and patterns that apply to all GPT models. However, it's essential to understand that each model behaves differently, so the learnings may not apply equally to all models.

Prompt engineering refers to the process of creating instructions called prompts for Large Language Models (LLMs), such as OpenAI’s ChatGPT. With the immense potential of LLMs to solve a wide range of tasks, leveraging prompt engineering can empower us to save significant time and facilitate the development of impressive applications. It holds the key to unleashing the full capabilities of these huge models, transforming how we interact and benefit from them. For more information, see Prompt engineering techniques.

Vector Databases

A vector database is a specialized database that goes beyond traditional storage by organizing information to simplify the search for similar items. Instead of merely storing words or numbers, it leverages vector embeddings, which are numerical representations of data. These embeddings capture meaning, context, and relationships. For instance, words are represented as vectors, and similar words have similar vector values.

The applications of vector databases are numerous and powerful. In language processing, they facilitate the discovery of related documents or sentences. By comparing the vector embeddings of different texts, finding similar or related information becomes faster and more efficient. This capability benefits search engines and recommendation systems, which can suggest relevant articles or products based on user interests.

In the realm of image analysis, vector databases excel at finding visually similar images. Once images are represented as vectors, comparing vector values is enough to identify those that look alike. This capability is valuable for tasks like reverse image search or content-based image retrieval.

Additionally, vector databases find applications in fraud detection, anomaly detection, and clustering. By comparing vector embeddings of data points, unusual patterns can be detected, and similar items can be grouped together, aiding in effective data analysis and decision-making.

Here is a list of the most popular vector databases:

- ChromaDB is a powerful database solution that stores and retrieves vector embeddings efficiently. It is commonly used in AI applications, including chatbots and document analysis systems. By storing embeddings in ChromaDB, users can easily search and retrieve similar vectors, enabling faster and more accurate matching or recommendation processes. ChromaDB offers excellent scalability and high performance, and supports various indexing techniques to optimize search operations. It is a versatile tool that enhances the functionality and efficiency of AI applications that rely on vector embeddings.

- Facebook AI Similarity Search (FAISS) is another widely used vector database. It is developed by Facebook AI Research and offers highly optimized algorithms for similarity search and clustering of vector embeddings. FAISS is known for its speed and scalability, making it suitable for large-scale applications. It offers different indexing methods, such as flat, IVF (inverted file index), and HNSW (Hierarchical Navigable Small World), to organize and search vector data efficiently.

- SingleStore: SingleStore aims to deliver the world’s fastest distributed SQL database for data-intensive applications: SingleStoreDB, which combines transactional + analytical workloads in a single platform.

- Astra DB: DataStax Astra DB is a cloud-native, multi-cloud, fully managed database-as-a-service based on Apache Cassandra, which aims to accelerate application development and reduce deployment time for applications from weeks to minutes.

- Milvus: Milvus is an open source vector database built to power embedding similarity search and AI applications. Milvus makes unstructured data search more accessible and provides a consistent user experience regardless of the deployment environment. Milvus 2.0 is a cloud-native vector database with storage and computation separated by design. All components in this refactored version of Milvus are stateless to enhance elasticity and flexibility.

- Qdrant: Qdrant is a vector similarity search engine and database for AI applications. In addition to the open-source version, Qdrant is also available in the cloud. It provides a production-ready service with an API to store, search, and manage points (vectors with an additional payload). Qdrant is tailored to extended filtering support, which makes it useful for all sorts of neural network or semantic-based matching, faceted search, and other applications.

- Pinecone: Pinecone is a fully managed vector database that makes adding vector search to production applications accessible. It combines state-of-the-art vector search libraries, advanced features such as filtering, and distributed infrastructure to provide high performance and reliability at any scale.

- Vespa: Vespa is a platform for applications combining data and AI online. Building such applications on Vespa helps users avoid integration work to get features, and it can scale to support any amount of traffic and data. To deliver that, Vespa provides a broad range of query capabilities, a computation engine with support for modern machine-learned models, hands-off operability, data management, and application development support. It is free and open source to use under the Apache 2.0 license.

- Zilliz: Milvus is an open-source vector database, with over 18,409 stars on GitHub and 3.4 million+ downloads. Milvus supports billion-scale vector search and has over 1,000 enterprise users. Zilliz Cloud provides a fully managed Milvus service made by the creators of Milvus. As a DBaaS, Zilliz simplifies the process of deploying and scaling vector search applications by eliminating the need to create and maintain complex data infrastructure.

- Weaviate: Weaviate is an open-source vector database, built by the Amsterdam-based company of the same name, that stores data objects and vector embeddings from ML models and scales to billions of data objects. Users can index billions of data objects and combine multiple search techniques, such as keyword-based and vector search, to provide search experiences.

This sample makes use of the ChromaDB vector database, but you can easily modify the code to use another vector database. You can even use Azure Cache for Redis Enterprise to store the vector embeddings and compute vector similarity with high performance and low latency. For more information, see Vector Similarity Search with Azure Cache for Redis Enterprise.
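One way to keep the vector database swappable is to have the application depend on a small interface rather than on a specific product. The sketch below illustrates that idea with a hypothetical VectorStore protocol and a naive in-memory implementation. Neither is the ChromaDB API nor part of the sample; a real store would use an index instead of scanning every vector.

```python
from __future__ import annotations

import math
from typing import Protocol


class VectorStore(Protocol):
    """Hypothetical minimal interface the application could code against."""

    def add(self, doc_id: str, embedding: list[float], text: str) -> None: ...

    def query(self, embedding: list[float], k: int = 3) -> list[tuple[str, float]]: ...


def _cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms if norms else 0.0


class InMemoryVectorStore:
    """Brute-force store for illustration: compares the query to every vector."""

    def __init__(self) -> None:
        self._items: dict[str, tuple[list[float], str]] = {}

    def add(self, doc_id: str, embedding: list[float], text: str) -> None:
        self._items[doc_id] = (embedding, text)

    def query(self, embedding: list[float], k: int = 3) -> list[tuple[str, float]]:
        scored = [(doc_id, _cosine(embedding, vec)) for doc_id, (vec, _) in self._items.items()]
        scored.sort(key=lambda pair: pair[1], reverse=True)  # most similar first
        return scored[:k]


if __name__ == "__main__":
    store: VectorStore = InMemoryVectorStore()
    store.add("chunk-1", [1.0, 0.0], "Azure Container Apps overview")
    store.add("chunk-2", [0.0, 1.0], "Quarterly invoice")
    print(store.query([0.9, 0.1], k=1))
```

Any backend that offers the same two methods, whether a wrapper around ChromaDB, FAISS, or Redis, could then be substituted without touching the rest of the application.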

LangChain

LangChain is a software framework designed to streamline the development of applications using large language models (LLMs). It serves as a language model integration framework, facilitating various applications like document analysis and summarization, chatbots, and code analysis.

LangChain's integrations cover an extensive range of systems, tools, and services, making it a comprehensive solution for language model-based applications. LangChain integrates with the major cloud platforms such as Microsoft Azure, Amazon AWS, and Google, and with API wrappers for various purposes like news, movie information, and weather, as well as support for Bash, web scraping, and more. It also supports multiple language models, including those from OpenAI, Anthropic, and Hugging Face. Moreover, LangChain offers various functionalities for document handling, code generation, analysis, debugging, and interaction with databases and other data sources.

Chainlit

Chainlit is an open-source Python package that is specifically designed to create user interfaces (UIs) for AI applications. It simplifies the process of building interactive chats and interfaces, making developing AI-powered applications faster and more efficient. While Streamlit is a general-purpose UI library, Chainlit is purpose-built for AI applications and seamlessly integrates with other AI technologies such as LangChain, LlamaIndex, and LangFlow.

With Chainlit, developers can easily create intuitive UIs for their AI models, including ChatGPT-like applications. It provides a user-friendly interface for users to interact with AI models, enabling conversational experiences and information retrieval. Chainlit also offers unique features, such as displaying the Chain of Thought, which allows users to explore the reasoning process directly within the UI. This feature enhances transparency and enables users to understand how the AI arrives at its responses or recommendations.

For more information, see the following resources:

Deploy the Infrastructure

Before deploying the Terraform modules in the terraform/infra folder, specify a value for the following variables in the terraform.tfvars variable definitions file.

This is the definition of each variable:

- prefix: specifies a prefix for all the Azure resources.

- location: specifies the region (e.g., EastUS) in which to deploy the Azure resources.

NOTE: Make sure to select a region where Azure OpenAI Service (AOAI) supports both GPT-3.5/GPT-4 models like gpt-35-turbo-16k and Embeddings models like text-embedding-ada-002.

OpenAI Module

The following table contains the code from the terraform/infra/modules/openai/main.tf Terraform module used to deploy the Azure OpenAI Service.

Azure Cognitive Services uses custom subdomain names for each resource created through the Azure portal, Azure Cloud Shell, Azure CLI, Bicep, Azure Resource Manager (ARM), or Terraform. Unlike regional endpoints, which were common for all customers in a specific Azure region, custom subdomain names are unique to the resource. Custom subdomain names are required to enable authentication features like Azure Active Directory (Azure AD). We need to specify a custom subdomain for our Azure OpenAI Service, as our chatbot applications will use an Azure AD security token to access it. By default, the terraform/infra/modules/openai/main.tf module sets the value of the custom_subdomain_name parameter to the lowercase name of the Azure OpenAI resource. For more information on custom subdomains, see Custom subdomain names for Cognitive Services.
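As a small illustration of what the custom subdomain means for client code, the sketch below derives the endpoint URL from a resource name. It assumes the public Azure cloud, where Azure OpenAI endpoints take the form https://<custom-subdomain>.openai.azure.com/, and it uses a made-up resource name. The helper itself is hypothetical and not part of the sample.

```python
def openai_endpoint(resource_name: str) -> str:
    """Build the Azure OpenAI endpoint for a resource whose custom subdomain
    is the lowercase resource name (the default described above).

    Assumes the public Azure cloud domain suffix.
    """
    subdomain = resource_name.lower()
    return f"https://{subdomain}.openai.azure.com/"


if __name__ == "__main__":
    # "ContosoOpenAi" is a hypothetical resource name.
    print(openai_endpoint("ContosoOpenAi"))
```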

This Terraform module allows you to pass an array containing the definition of one or more model deployments in the deployments variable. For more information on model deployments, see Create a resource and deploy a model using Azure OpenAI. The openai_deployments variable in the terraform/infra/variables.tf file defines the structure and the default models deployed by the sample:

Alternatively, you can use the Terraform module for deploying Azure OpenAI Service.

Private Endpoint Module

The terraform/infra/main.tf module creates Azure Private Endpoints and Azure Private DNS Zones for each of the following resources:

In particular, it creates an Azure Private Endpoint and an Azure Private DNS Zone for the Azure OpenAI Service, as shown in the following code snippet:

Below you can read the code of the terraform/infra/modules/private_endpoint/main.tf module, which is used to create Azure Private Endpoints:

Private DNS Zone Module

In the following box, you can read the code of the terraform/infra/modules/private_dns_zone/main.tf module, which is utilized to create the Azure Private DNS Zones.

Workload Managed Identity Module

Below you can read the code of the terraform/infra/modules/managed_identity/main.tf module, which is used to create the Azure Managed Identity used by the Azure Container Apps to pull container images from the Azure Container Registry, and by the chat applications to connect to the Azure OpenAI Service. You can use a system-assigned or user-assigned managed identity from Azure Active Directory (Azure AD) to let Azure Container Apps access any Azure AD-protected resource. For more information, see Managed identities in Azure Container Apps. You can pull container images from private repositories in an Azure Container Registry using system-assigned or user-assigned managed identities for authentication, which avoids the use of administrative credentials. For more information, see Azure Container Apps image pull with managed identity. This user-defined managed identity is assigned the Cognitive Services User role on the Azure OpenAI Service resource and the AcrPull role on the Azure Container Registry (ACR). By assigning these roles, you grant the user-defined managed identity access to these resources.

Deploy the Applications

Before deploying the Terraform modules in the terraform/apps folder, specify a value for the following variables in the terraform.tfvars variable definitions file.

This is the definition of each variable:

- resource_group_name: specifies the name of the resource group that contains the infrastructure resources: Azure OpenAI Service, Azure Container Registry, Azure Container Apps Environment, Azure Log Analytics, and user-defined managed identity.

- container_app_environment_name: the name of the Azure Container Apps Environment in which to deploy the chat applications.

- container_registry_name: the name of Azure Container Registry used to hold the container images of the chat applications.

- workload_managed_identity_name: the name of the user-defined managed identity used by the chat applications to authenticate with Azure OpenAI Service and Azure Container Registry.

- container_apps: the definition of the two chat applications. The application configuration does not specify the following data because the container_app module later defines this information:

  - image: This field contains the name and tag of the container image but not the login server of the Azure Container Registry.

  - identity: The identity of the container app.

  - registry: The registry hosting the container image for the application.

  - AZURE_CLIENT_ID: The client ID of the user-defined managed identity used by the application to authenticate with Azure OpenAI Service and Azure Container Registry.

  - AZURE_OPENAI_TYPE: This environment variable specifies the authentication type with Azure OpenAI Service: if you set the value of the AZURE_OPENAI_TYPE environment variable to azure, you need to specify the OpenAI key as a value for the AZURE_OPENAI_KEY environment variable. Instead, if you set the value to azure_ad in the application code, assign an Azure AD security token to the openai_api_key property. For more information, see How to switch between OpenAI and Azure OpenAI endpoints with Python.
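The two authentication modes described for AZURE_OPENAI_TYPE can be sketched as a small helper that returns either the API key or an Azure AD token. This is an illustration under stated assumptions, not the sample's code: the function names, the default value of azure, and the injectable token_provider parameter are hypothetical. The token scope shown is the one commonly used for Azure Cognitive Services, and the azure-identity package is imported only when a token is actually requested.

```python
from __future__ import annotations

from typing import Callable, Mapping, Optional

# Scope commonly used when requesting Azure AD tokens for Cognitive Services.
COGNITIVE_SERVICES_SCOPE = "https://cognitiveservices.azure.com/.default"


def _default_token_provider() -> str:
    # Imported lazily so the key-based path works without azure-identity installed.
    from azure.identity import DefaultAzureCredential

    # DefaultAzureCredential reads AZURE_CLIENT_ID to select the managed identity.
    credential = DefaultAzureCredential()
    return credential.get_token(COGNITIVE_SERVICES_SCOPE).token


def resolve_api_key(
    env: Mapping[str, str],
    token_provider: Optional[Callable[[], str]] = None,
) -> str:
    """Return the value to use as the OpenAI API key for the configured mode."""
    api_type = env.get("AZURE_OPENAI_TYPE", "azure")  # default is an assumption
    if api_type == "azure":
        key = env.get("AZURE_OPENAI_KEY")
        if not key:
            raise ValueError("AZURE_OPENAI_KEY must be set when AZURE_OPENAI_TYPE is 'azure'")
        return key
    if api_type == "azure_ad":
        provider = token_provider or _default_token_provider
        return provider()
    raise ValueError(f"Unsupported AZURE_OPENAI_TYPE: {api_type!r}")


if __name__ == "__main__":
    print(resolve_api_key({"AZURE_OPENAI_TYPE": "azure", "AZURE_OPENAI_KEY": "example-key"}))
```

In an application you would typically pass os.environ as env. Keep in mind that Azure AD tokens expire, so a long-running app needs to request a fresh one periodically.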

Container App Module

The terraform/apps/modules/container_app/main.tf module is used to create the Azure Container Apps. The module defines and uses the following data sources for the Azure Container Registry, Azure Container Apps Environment, and user-defined managed identity created when deploying the infrastructure. These data sources are used to access the properties of these Azure resources.

The module creates and utilizes the following local variables:

This is the explanation of each local variable:

- identity: uses the resource ID of the user-defined managed identity to define the identity block for each container app deployed by the module.

- identity_env: uses the client ID of the user-defined managed identity to define the value of the AZURE_CLIENT_ID environment variable that is appended to the list of environment variables of each container app deployed by the module.

- registry: uses the login server of the Azure Container Registry to define the registry block for each container app deployed by the module.

Here is the complete Terraform code of the module:

As you can see, the module uses the login server of the Azure Container Registry to create the fully qualified name of the container image of the current container app.
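The two derived values described above, the fully qualified image name and the extra AZURE_CLIENT_ID environment variable, can be modeled in a few lines of Python. This only illustrates the logic and is not the module's Terraform code. The function names, the registry name, the image tag, and the client ID are all made up.

```python
from __future__ import annotations


def qualify_image(login_server: str, image: str) -> str:
    """Prefix an image name and tag with the registry login server."""
    return f"{login_server}/{image}"


def with_identity_env(env: list[dict[str, str]], client_id: str) -> list[dict[str, str]]:
    """Return a new list of environment variables with AZURE_CLIENT_ID appended."""
    return env + [{"name": "AZURE_CLIENT_ID", "value": client_id}]


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    print(qualify_image("contosoacr.azurecr.io", "chatapp:v1"))
    print(with_identity_env([{"name": "TEMPERATURE", "value": "0.9"}], "00000000-0000-0000-0000-000000000000"))
```

Keeping the login server out of the application definitions means the same definitions can be reused with a different registry.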

Managed identities in Azure Container Apps

Each chat application makes use of a DefaultAzureCredential object to acquire a security token from Azure Active Directory and authenticate and authorize with Azure OpenAI Service (AOAI) and Azure Container Registry (ACR) using the credentials of the user-defined managed identity associated with the container app.

You can use a managed identity in a running container app to authenticate and authorize with any service that supports Azure AD authentication. With managed identities:

- Container apps and applications connect to resources with the managed identity. You don't need to manage credentials in your container apps.

- You can use role-based access control to grant specific permissions to a managed identity.

- System-assigned identities are automatically created and managed. They are deleted when your container app is deleted.

- You can add and delete user-assigned identities and assign them to multiple resources. They are independent of your container app's lifecycle.

- You can use managed identity to authenticate with a private Azure Container Registry without a username and password to pull containers for your Container App.

- You can use managed identity to create connections for Dapr-enabled applications via Dapr components.

For more information, see Managed identities in Azure Container Apps. The workloads running in a container app can use the Azure Identity client libraries to acquire a security token from the Azure Active Directory. You can choose one of the following approaches inside your code:

- Use DefaultAzureCredential, which will attempt to use the WorkloadIdentityCredential.

- Create a ChainedTokenCredential instance that includes WorkloadIdentityCredential.

- Use WorkloadIdentityCredential directly.

The following table provides the minimum package version required for each language's client library.

| Language | Library | Minimum Version | Example |
|---|---|---|---|
| .NET | Azure.Identity | 1.9.0 | Link |
| Go | azidentity | 1.3.0 | Link |
| Java | azure-identity | 1.9.0 | Link |
| JavaScript | @azure/identity | 3.2.0 | Link |
| Python | azure-identity | 1.13.0 | Link |

NOTE: When using Azure Identity client library with Azure Container Apps, the client ID of the managed identity must be specified. When using the DefaultAzureCredential, you can explicitly specify the client ID of the container app managed identity in the AZURE_CLIENT_ID environment variable.

Simple Chat Application

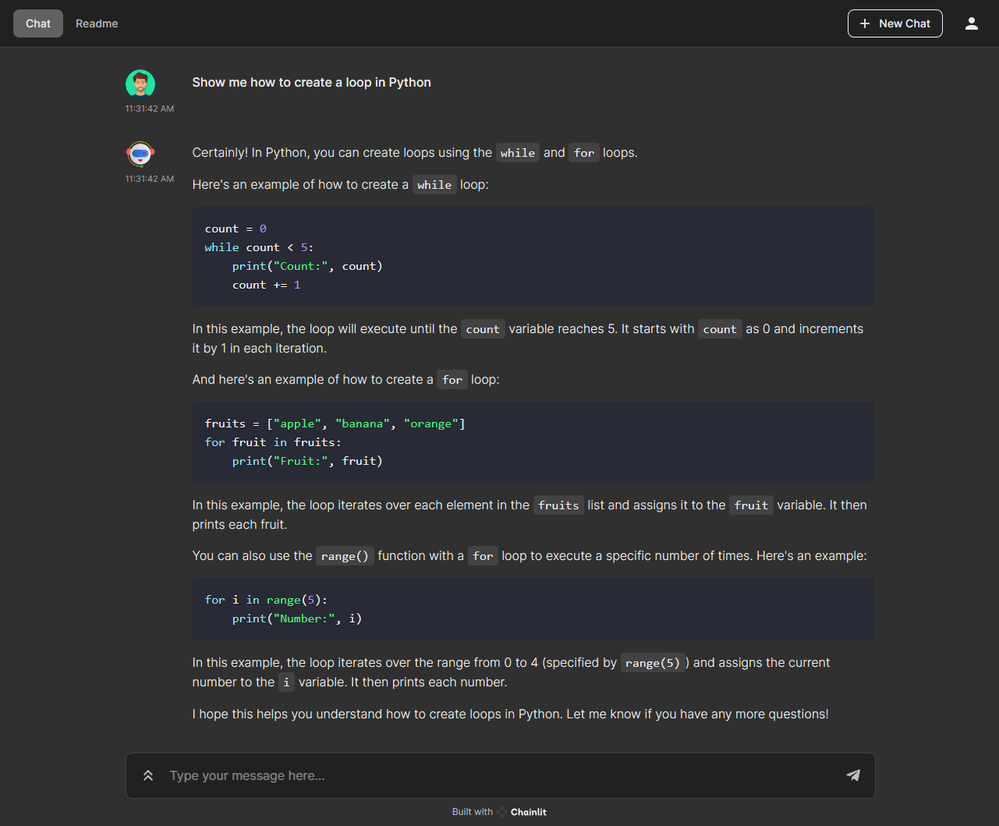

The Simple Chat Application is a large language model-based chatbot that allows users to submit general-purpose questions to a GPT model, generating and streaming back human-like and engaging conversational responses. The following picture shows the welcome screen of the chat application.

You can modify the welcome screen in markdown by editing the chainlit.md file at the project's root. If you do not want a welcome screen, leave the file empty. The following picture shows what happens when a user submits a new message in the chat.

Chainlit can render messages in markdown format and provides classes to support the following elements:

- Audio: The Audio class allows you to display an audio player for a specific audio file in the chatbot user interface. You must provide either a URL or a path or content bytes.

- Avatar: The Avatar class allows you to display an avatar image next to a message instead of the author's name. You need to send the element once. After that, if an avatar's name matches an author's name, the avatar will be automatically displayed. You must provide either a URL or a path or content bytes.

- File: The File class allows you to display a button that lets users download the file's content. You must provide either a URL or a path or content bytes.

- Image: The Image class is designed to create and handle image elements to be sent and displayed in the chatbot user interface. You must provide either a URL or a path or content bytes.

- Pdf: The Pdf class allows you to display a PDF hosted remotely or locally in the chatbot UI. This class either takes a URL of a PDF hosted online or the path of a local PDF.

- Pyplot: The Pyplot class allows you to display a Matplotlib pyplot chart in the chatbot UI. This class takes a pyplot figure.

- TaskList: The TaskList class allows you to display a task list next to the chatbot UI.

- Text: The Text class allows you to display a text element in the chatbot UI. This class takes a string and creates a text element that can be sent to the UI. It supports the markdown syntax for formatting text. You must provide either a URL or a path or content bytes.

Chainlit provides three display options that determine how an element is rendered in the context of its use. The ElementDisplay type represents these options. The following display options are available:

- Side: this option displays the element on a sidebar. The sidebar is hidden by default and opened upon element reference click.

- Page: this option displays the element on a separate page. The user is redirected to the page upon an element reference click.

- Inline: this option displays the element below the message. If the element is global, it is displayed if it is explicitly mentioned in the message. If the element is scoped, it is displayed regardless of whether it is expressly mentioned in the message.

You can click the user icon on the UI to access the chat settings and choose, for example, between the light and dark themes.

The application is built in Python. Let's take a look at the individual parts of the application code. The Python code starts by importing the necessary packages/modules in the following section.

These are the libraries used by the chat application:

- `os`: This module provides a way of interacting with the operating system, enabling the code to access environment variables, file paths, etc.
- `sys`: This module provides access to some variables used or maintained by the interpreter and functions that interact with the interpreter.
- `time`: This module provides various time-related functions for time manipulation and measurement.
- `openai`: The OpenAI Python library provides convenient access to the OpenAI API from applications written in Python. It includes a pre-defined set of classes for API resources that initialize themselves dynamically from API responses, making it compatible with a wide range of versions of the OpenAI API. You can find usage examples for the OpenAI Python library in the OpenAI API reference and the OpenAI Cookbook.
- `random`: This module provides functions to generate random numbers.
- `logging`: This module provides flexible logging of messages.
- `chainlit as cl`: This imports the Chainlit library and aliases it as `cl`. Chainlit is used to create the UI of the application.
- `DefaultAzureCredential` from `azure.identity`: when the `openai_type` property value is `azure_ad`, a `DefaultAzureCredential` object from the [Azure Identity client library for Python - version 1.13.0](https://learn.microsoft.com/en-us/python/api/overview/azure/identity-readme?view=azure-python) is used to acquire a security token from Azure Active Directory using the credentials of the user-defined managed identity, whose client ID is defined in the `AZURE_CLIENT_ID` environment variable.
- `load_dotenv` and `dotenv_values` from `dotenv`: Python-dotenv reads key-value pairs from a `.env` file and can set them as environment variables. It helps in the development of applications following the 12-factor principles.

The requirements.txt file under the src folder contains the list of packages used by the chat applications. You can restore these packages in your environment using the following command:

Next, the code reads environment variables and configures the OpenAI settings.

Here's a brief explanation of each variable and related environment variable:

- `temperature`: A float value representing the temperature for the Create chat completion method of the OpenAI API. It is fetched from the environment variables with a default value of 0.9.
- `api_base`: The base URL for the OpenAI API.
- `api_key`: The API key for the OpenAI API.
- `api_type`: A string representing the type of the OpenAI API.
- `api_version`: A string representing the version of the OpenAI API.
- `engine`: The engine used for OpenAI API calls.
- `model`: The model used for OpenAI API calls.
- `system_content`: The content of the system message used for OpenAI API calls.
- `max_retries`: The maximum number of retries for OpenAI API calls.
- `backoff_in_seconds`: The backoff time in seconds for retries in case of failures.
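As a rough illustration of how settings like these can be read, here is a minimal sketch that uses only the standard library. The environment variable names and every default other than the temperature default of 0.9 are assumptions made for this example, not the names used by the application.

```python
import os
from typing import Mapping, Optional


def load_settings(env: Optional[Mapping[str, str]] = None) -> dict:
    """Read the chat settings from a mapping of environment variables.

    The variable names and all defaults except the temperature default
    of 0.9 are assumptions made for this sketch.
    """
    env = os.environ if env is None else env
    return {
        "temperature": float(env.get("TEMPERATURE", "0.9")),
        "api_base": env.get("AZURE_OPENAI_BASE"),
        "api_key": env.get("AZURE_OPENAI_KEY"),
        "api_type": env.get("AZURE_OPENAI_TYPE", "azure"),
        "api_version": env.get("AZURE_OPENAI_VERSION"),
        "engine": env.get("AZURE_OPENAI_DEPLOYMENT"),
        "model": env.get("AZURE_OPENAI_MODEL"),
        "system_content": env.get("AZURE_OPENAI_SYSTEM_MESSAGE", "You are a helpful assistant."),
        "max_retries": int(env.get("MAX_RETRIES", "5")),
        "backoff_in_seconds": float(env.get("BACKOFF_IN_SECONDS", "1")),
    }


# Passing an explicit mapping makes the function easy to try out and to test.
settings = load_settings({"TEMPERATURE": "0.2", "MAX_RETRIES": "3"})
print(settings["temperature"], settings["max_retries"])
```

Converting the numeric values once, at load time, means a malformed value fails at startup rather than in the middle of a chat.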

In the next section, the code sets the default Azure credential based on the api_type and configures a logger for logging purposes.

Here's a brief explanation:

- `default_credential`: It sets the default Azure credential to `DefaultAzureCredential()` if the `api_type` is "azure_ad"; otherwise, it is set to `None`.
- `logging.basicConfig()`: This function configures the logging system with specific settings.
  - `stream`: The output stream where log messages will be written. Here, it is set to `sys.stdout` for writing log messages to the standard output.
  - `format`: The format string for log messages. It includes the timestamp, filename, line number, log level, and the actual log message.
  - `level`: The logging level. It is set to `logging.INFO`, meaning only messages with the level `INFO` and above will be logged.
- `logger`: This creates a logger instance named after the current module (`__name__`). The logger will be used to log messages throughout the code.
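The setup described above might look roughly like the following sketch. The exact format string and the hypothetical helper `make_default_credential` are assumptions. The `azure.identity` import is deferred so that the example also runs when key-based authentication is used and the package is not installed.

```python
import logging
import sys
from typing import Any, Optional


def make_default_credential(api_type: str) -> Optional[Any]:
    """Return a DefaultAzureCredential only when Azure AD authentication is used."""
    if api_type != "azure_ad":
        return None
    # Imported lazily: only needed for Azure AD authentication.
    from azure.identity import DefaultAzureCredential
    return DefaultAzureCredential()


# Assumed format: timestamp, filename, line number, level, and message.
logging.basicConfig(
    stream=sys.stdout,
    format="[%(asctime)s] {%(filename)s:%(lineno)d} %(levelname)s - %(message)s",
    level=logging.INFO,
)
logger = logging.getLogger(__name__)

default_credential = make_default_credential("azure")  # None for key-based auth
logger.info("Azure AD credential in use: %s", default_credential is not None)
```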

Next, the code defines a helper function backoff that takes an integer attempt and returns a float value representing the backoff time for exponential retries in case of API call failures.
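A minimal sketch of such a helper is shown below. It assumes a module-level `backoff_in_seconds` value of 1 second (the application reads the real value from its settings) and combines exponential growth with a small random jitter.

```python
import random

# Assumed value for this sketch; the application reads it from its settings.
backoff_in_seconds = 1.0


def backoff(attempt: int) -> float:
    """Return the wait time, in seconds, before retrying after attempt number `attempt`."""
    return backoff_in_seconds * 2 ** attempt + random.uniform(0, 1)


# The base delay doubles at every attempt: 1s, 2s, 4s, 8s, plus up to 1s of jitter.
for attempt in range(4):
    print(f"attempt {attempt}: wait {backoff(attempt):.2f}s")
```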

The backoff time is calculated using the backoff_in_seconds and attempt variables. It follows the formula backoff_in_seconds * 2 ** attempt + random.uniform(0, 1). This formula increases the backoff time exponentially with each attempt and adds a random value between 0 and 1 to avoid synchronized retries.

Then, the application defines a function called refresh_openai_token() to refresh the OpenAI security token if needed.

The function follows these steps:

- It fetches the current token from `cl.user_session`, which is part of the `chainlit` library, using the key `'openai_token'`. The user_session is a dictionary that stores the user's session data. The id and env keys are reserved for the session ID and environment variables. Other keys can be used to store arbitrary data in the user's session.
- It checks if the token is `None` or if its expiration time (`expires_on`) is less than the current time minus 1800 seconds (30 minutes).
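The check in the second step can be sketched in isolation as follows. The `AccessToken` tuple is a local stand-in for the token type returned by the Azure Identity library, the `session` dictionary is a hypothetical stand-in for `cl.user_session`, and what happens after the check (fetching a new token and storing it back in the session) is an assumption about the rest of the function.

```python
import time
from typing import Callable, NamedTuple, Optional


class AccessToken(NamedTuple):
    """Local stand-in for an Azure access token: the token string and its expiry."""
    token: str
    expires_on: int  # expiration time, in seconds since the epoch


def needs_refresh(token: Optional[AccessToken], now: Optional[float] = None) -> bool:
    """Mirror the check described above: no token, or expires_on < now - 1800."""
    now = time.time() if now is None else now
    return token is None or token.expires_on < int(now) - 1800


session: dict = {}  # hypothetical stand-in for cl.user_session


def refresh_token(get_token: Callable[[], AccessToken]) -> AccessToken:
    """Return the cached token, fetching and caching a new one when needed (assumed flow)."""
    token = session.get("openai_token")
    if needs_refresh(token):
        token = get_token()
        session["openai_token"] = token
    return token


fresh = refresh_token(lambda: AccessToken("example-token", int(time.time()) + 3600))
print(fresh.token)
```

Note that, as described, the condition becomes true only once the token is more than 30 minutes past its expiration time. A check meant to refresh ahead of expiry would compare `expires_on` against the current time plus a margin instead.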

Next, the code defines a function called start_chat that is used to initialize the chat session when the user connects to the application or clicks the New Chat button.

Here is a brief explanation of the function steps:

- `@cl.on_chat_start`: The on_chat_start decorator registers a callback function `start_chat()` to be called when the Chainlit chat starts. It is used to set up the chat and send avatars for the Chatbot, Error, and User participants in the chat.
- `cl.Avatar()`: the Avatar class allows you to display an avatar image next to a message instead of the author's name. You need to send the element once. Next, if an avatar's name matches an author's name, the avatar will be automatically displayed. You must provide either a URL, a path, or content bytes.
- `cl.user_session.set()`: This API call sets a value in the user_session dictionary. In this case, it initializes the `message_history` in the user's session with a system content message, indicating the chat's start.

Finally, the application defines the method called whenever the user sends a new message in the chat.

Here is a detailed explanation of the function steps:

- `@cl.on_message`: The on_message decorator registers a callback function `main(message: str)` to be called when the user submits a new message in the chat. It is the main function responsible for handling the chat logic.
- `cl.user_session.get()`: This API call retrieves a value from the user's session data stored in the user_session dictionary. In this case, it fetches the `message_history` from the user's session to maintain the chat history.
- `message_history.append()`: This API call appends a new message to the `message_history` list. It is used to add the user's message and the assistant's response to the chat history.
- `cl.Message()`: This API call creates a Chainlit Message object. The `Message` class is designed to send, stream, edit, or remove messages in the chatbot user interface. In this sample, the `Message` object is used to stream the OpenAI response in the chat.
- `msg.stream_token()`: The stream_token method of the Message class streams a token to the response message. It is used to send the response from the OpenAI Chat API in chunks to ensure real-time streaming in the chat.
- `await openai.ChatCompletion.acreate()`: This API call sends a message to the OpenAI Chat API in an asynchronous mode and streams the response. It uses the provided `message_history` as context for generating the assistant's response.
- The section also includes an exception handling block that retries the OpenAI API call in case of specific errors like timeouts, API errors, connection errors, invalid requests, service unavailability, and other non-retriable errors. You can replace this code with a general-purpose retrying library for Python like Tenacity.
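The retry logic described above can be sketched without any OpenAI dependency. In this example, `TransientError`, `call_with_retries`, and `flaky_completion` are hypothetical names, the delays are scaled down so the example runs quickly, and the simulated call stands in for the real chat completion request.

```python
import asyncio
import random

# Assumed values for this sketch, scaled down so that it runs quickly.
max_retries = 3
backoff_in_seconds = 0.01


def backoff(attempt: int) -> float:
    """Exponential backoff with jitter (the jitter is scaled down for the example)."""
    return backoff_in_seconds * 2 ** attempt + random.uniform(0, 0.01)


class TransientError(Exception):
    """Hypothetical stand-in for retriable errors such as timeouts."""


async def call_with_retries(make_call):
    """Await make_call() up to max_retries times, sleeping between failed attempts."""
    for attempt in range(max_retries):
        try:
            return await make_call()
        except TransientError:
            if attempt == max_retries - 1:
                raise  # out of attempts: surface the error to the caller
            await asyncio.sleep(backoff(attempt))
        # Any other exception propagates immediately and is not retried.


calls = {"count": 0}


async def flaky_completion() -> str:
    """Simulated API call that fails twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("simulated timeout")
    return "Hello from the assistant"


result = asyncio.run(call_with_retries(flaky_completion))
print(result, "after", calls["count"], "calls")
```

Using `asyncio.sleep` rather than `time.sleep` keeps the event loop free to serve other chat sessions while one request is waiting to retry.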

Below, you can read the complete code of the application.

You can run the application locally using the following command. The `-w` flag enables auto-reload whenever the application code changes.

Documents QA Chat

The Documents QA Chat application allows users to submit up to 10 .pdf and .docx documents. The application processes the uploaded documents to create vector embeddings. These embeddings are stored in the ChromaDB vector database for efficient retrieval. Users can pose questions about the uploaded documents and view the Chain of Thought, enabling easy exploration of the reasoning process. The completion message contains links to the text chunks in the files that were used as a source for the response. The following picture shows the chat application interface. As you can see, you can click the Browse button and choose up to 10 .pdf and .docx documents to upload. Alternatively, you can drag and drop the files over the control area.

After the documents are uploaded, the application creates embeddings and stores them in the ChromaDB vector database. During this phase, the UI shows a message Processing <file-1>, <file-2>..., as shown in the following picture:

When the code has finished creating the embeddings, the UI is ready to receive the user's questions:

Understanding the individual steps for generating a specific answer can become challenging as your chat application grows in complexity. To solve this issue, Chainlit allows you to easily explore the reasoning process right from the user interface using the Chain of Thought. If you are using the LangChain integration, every intermediary step is automatically sent to the Chainlit UI, where you can inspect it by clicking and expanding the steps, as shown in the following picture:

To see the text chunks that the large language model used to originate the response, you can click the sources links, as shown in the following picture:

In the Chain of Thought, below each message, you can find an edit button, as a pencil icon, if a prompt generated that message. Clicking on it opens the Prompt Playground dialog, allowing you to modify and iterate on the prompt as needed.

Let's take a look at the individual parts of the application code. The Python code starts by importing the necessary packages/modules in the following section.

These are the libraries used by the chat application:

- `os`: This module provides a way of interacting with the operating system, enabling the code to access environment variables, file paths, etc.
- `sys`: This module provides access to some variables used or maintained by the interpreter and functions that interact with the interpreter.
- `time`: This module provides various time-related functions for time manipulation and measurement.
- `openai`: The OpenAI Python library provides convenient access to the OpenAI API from applications written in Python. It includes a pre-defined set of classes for API resources that initialize themselves dynamically from API responses, which makes it compatible with a wide range of versions of the OpenAI API. You can find usage examples for the OpenAI Python library in the OpenAI API reference and the OpenAI Cookbook.
- `random`: This module provides functions to generate random numbers.
- `logging`: This module provides flexible logging of messages.
- `chainlit as cl`: This imports the Chainlit library and aliases it as `cl`. Chainlit is used to create the UI of the application.
- `DefaultAzureCredential` from `azure.identity`: when the `openai_type` property value is `azure_ad`, a `DefaultAzureCredential` object from the Azure Identity client library for Python - version 1.13.0 is used to acquire a security token from Azure Active Directory using the credentials of the user-defined managed identity, whose client ID is defined in the `AZURE_CLIENT_ID` environment variable.
- `load_dotenv` and `dotenv_values` from `dotenv`: Python-dotenv reads key-value pairs from a `.env` file and can set them as environment variables. It helps in the development of applications following the 12-factor principles.
- `langchain`: Large language models (LLMs) are emerging as a transformative technology, enabling developers to build applications that they previously could not. However, using these LLMs in isolation is often insufficient for creating a truly powerful app. The real power comes when you can combine them with other sources of computation or knowledge. The LangChain library aims to assist in the development of those types of applications.

The requirements.txt file under the src folder contains the list of packages used by the chat applications. You can restore these packages in your environment using the following command:

Next, the code reads environment variables and configures the OpenAI settings.

Here's a brief explanation of each variable and related environment variable:

- `temperature`: A float value representing the temperature for the Create chat completion method of the OpenAI API. It is fetched from the environment variables with a default value of 0.9.
- `api_base`: The base URL for the OpenAI API.
- `api_key`: The API key for the OpenAI API.
- `api_type`: A string representing the type of the OpenAI API.
- `api_version`: A string representing the version of the OpenAI API.
- `chat_completion_deployment`: The name of the Azure OpenAI GPT model for chat completion.
- `embeddings_deployment`: The name of the Azure OpenAI deployment for embeddings.
- `model`: The model used for chat completion calls (e.g., `gpt-35-turbo-16k`).
- `max_size_mb`: The maximum size for the uploaded documents.
- `max_files`: The maximum number of documents that can be uploaded.
- `text_splitter_chunk_size`: The maximum chunk size used by the `RecursiveCharacterTextSplitter` object.
- `text_splitter_chunk_overlap`: The maximum chunk overlap used by the `RecursiveCharacterTextSplitter` object.
- `embeddings_chunk_size`: The maximum chunk size used by the `OpenAIEmbeddings` object.
- `max_retries`: The maximum number of retries for OpenAI API calls.
- `backoff_in_seconds`: The backoff time in seconds for retries in case of failures.
- `system_template`: The content of the system message used for OpenAI API calls.

In the next section, the code sets the default Azure credential based on the api_type and configures a logger for logging purposes.

Here's a brief explanation:

- `default_credential`: It sets the default Azure credential to `DefaultAzureCredential()` if the `api_type` is "azure_ad"; otherwise, it is set to `None`.
- `logging.basicConfig()`: This function configures the logging system with specific settings.
  - `stream`: The output stream where log messages will be written. Here, it is set to `sys.stdout` for writing log messages to the standard output.
  - `format`: The format string for log messages. It includes the timestamp, filename, line number, log level, and the actual log message.
  - `level`: The logging level. It is set to `logging.INFO`, meaning only messages with the level `INFO` and above will be logged.
- `logger`: This creates a logger instance named after the current module (`__name__`). The logger will be used to log messages throughout the code.

Next, the code defines a helper function backoff that takes an integer attempt and returns a float value representing the backoff time for exponential retries in case of API call failures.

The backoff time is calculated using the backoff_in_seconds and attempt variables. It follows the formula backoff_in_seconds * 2 ** attempt + random.uniform(0, 1). This formula increases the backoff time exponentially with each attempt and adds a random value between 0 and 1 to avoid synchronized retries.

Then, the application defines a function called refresh_openai_token() to refresh the OpenAI security token if needed.

The function follows these steps:

- It fetches the current token from `cl.user_session`, which is part of the `chainlit` library, using the key `'openai_token'`. The user_session is a dictionary that stores the user's session data. The id and env keys are reserved for the session ID and environment variables. Other keys can be used to store arbitrary data in the user's session.
- It checks if the token is `None` or if its expiration time (`expires_on`) is less than the current time minus 1800 seconds (30 minutes).

Next, the code defines a function called start_chat that is used to initialize the chat session when the user connects to the application or clicks the New Chat button.

Here is a brief explanation of the function steps:

- `@cl.on_chat_start`: The on_chat_start decorator registers a callback function `start_chat()` to be called when the Chainlit chat starts. It is used to set up the chat and send avatars for the Chatbot, Error, and User participants in the chat.
- `cl.Avatar()`: the Avatar class allows you to display an avatar image next to a message instead of the author's name. You need to send the element once. Next, if an avatar's name matches an author's name, the avatar will be automatically displayed. You must provide either a URL, a path, or content bytes.

The following code is used to initialize the large language model (LLM) chain used to reply to questions on the content of the uploaded documents.

The AskFileMessage API call prompts the user to upload up to a specified number of .pdf or .docx files. The uploaded files are stored in the files variable. The process continues until the user uploads files. For more information, see AskFileMessage.

The following code processes each uploaded file by extracting its content.

- The text content of each file is stored in the list `all_texts`.
- This code performs text processing and chunking. It checks the file extension to read the file content accordingly, depending on whether it is a `.pdf` or a `.docx` document.
- The text content is split into smaller chunks using the RecursiveCharacterTextSplitter LangChain object.
- Metadata is created for each chunk and stored in the `metadatas` list.
- If `openai.api_type == "azure_ad"`, the code invokes the `refresh_openai_token()` function, which gets a security token from Azure AD to communicate with the Azure OpenAI Service.
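To make the chunking and metadata steps concrete, here is a simplified sketch. The `split_text` function is a fixed-window stand-in that only illustrates chunk size and overlap; it is not the RecursiveCharacterTextSplitter algorithm, which prefers to break on separators such as paragraphs and sentences. The sample documents and the `source` naming scheme are assumptions made for this example.

```python
from typing import Dict, List


def split_text(text: str, chunk_size: int, chunk_overlap: int) -> List[str]:
    """Split text into windows of at most chunk_size characters.

    Consecutive windows share chunk_overlap characters. This is a simplified
    stand-in, not the RecursiveCharacterTextSplitter algorithm.
    """
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be in [0, chunk_size)")
    step = chunk_size - chunk_overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reaches the end of the text
    return chunks


all_texts: List[str] = []
metadatas: List[Dict[str, str]] = []

# Hypothetical text already extracted from two uploaded files.
documents = {"a.pdf": "abcdefghij", "b.docx": "0123456"}

for file_name, content in documents.items():
    for chunk in split_text(content, chunk_size=4, chunk_overlap=1):
        # One metadata entry per chunk; the naming scheme is an assumption.
        metadatas.append({"source": f"{len(all_texts)}-{file_name}"})
        all_texts.append(chunk)

print(all_texts)
print(metadatas[0])
```

Keeping `all_texts` and `metadatas` aligned by index is what later allows a source name returned by the chain to be mapped back to the chunk of text it refers to.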

The next piece of code performs the following steps:

- It creates an OpenAIEmbeddings object configured to use the embeddings model in the Azure OpenAI Service to create embeddings from text chunks.
- It creates a ChromaDB vector database using the `OpenAIEmbeddings` object, the text chunks list, and the metadata list.
- It creates an AzureChatOpenAI LangChain object based on the GPT model hosted in Azure OpenAI Service.
- It creates a chain using the RetrievalQAWithSourcesChain.from_chain_type API call, which combines the previously created chat model with the vector database used as a retriever.
- It stores the metadata and text chunks in the user session using the `cl.user_session.set()` API call.
- It creates a message to inform the user that the files are ready for queries, and finally returns the `chain`.
- The `cl.user_session.set("chain", chain)` call stores the LLM chain in the user_session dictionary for later use.

The following code handles the communication with the OpenAI API and incorporates retrying logic in case the API calls fail due to specific errors.

- `@cl.on_message`: The on_message decorator registers a callback function `main(message: str)` to be called when the user submits a new message in the chat. It is the main function responsible for handling the chat logic.
- `cl.user_session.get("chain")`: this call retrieves the LLM chain from the user_session dictionary.
- The `for` loop allows multiple attempts, up to `max_retries`, to communicate with the chat completion API and handles different types of API errors, such as timeout, connection error, invalid request, and service unavailability.
- `await chain.acall`: The asynchronous call to the RetrievalQAWithSourcesChain.acall executes the LLM chain with the user message as an input.

The code below extracts the answers and sources from the API response and formats them to be sent as a message.

- The `answer` and `sources` are obtained from the `response` dictionary.
- The sources are then processed to find corresponding texts in the user session metadata (`metadatas`) and create `source_elements` using `cl.Text()`.
- `cl.Message().send()`: the Message API creates and displays a message containing the answer and sources, if available.
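The source-matching step can be sketched without Chainlit as follows. The helper name `format_answer`, the sample data, and the wording of the appended lines are assumptions. In the application, each matched `(name, text)` pair would be wrapped in a `cl.Text` element rather than returned as a tuple.

```python
from typing import Dict, List, Tuple


def format_answer(
    response: Dict[str, str],
    metadatas: List[Dict[str, str]],
    texts: List[str],
) -> Tuple[str, List[Tuple[str, str]]]:
    """Return the answer text plus the (source name, chunk text) pairs it cites."""
    answer = response["answer"]
    sources = response.get("sources", "").strip()
    all_sources = [m["source"] for m in metadatas]

    found: List[Tuple[str, str]] = []
    for source in sources.split(","):
        name = source.strip()
        if name in all_sources:
            # metadatas and texts are aligned by index, so the same index
            # gives the chunk of text behind this source name.
            found.append((name, texts[all_sources.index(name)]))

    if found:
        answer += "\nSources: " + ", ".join(name for name, _ in found)
    elif sources:
        answer += "\nNo sources found"
    return answer, found


# Hypothetical chain response and session data.
response = {"answer": "Chainlit builds chat UIs.", "sources": "0-a.pdf, 7-missing.pdf"}
metadatas = [{"source": "0-a.pdf"}, {"source": "1-a.pdf"}]
texts = ["Chainlit is a Python package for chat UIs.", "Another chunk."]

answer, source_elements = format_answer(response, metadatas, texts)
print(answer)
```

Source names that the model returns but that do not match any stored chunk are skipped, so the message only links to text that was actually indexed.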

Below, you can read the complete code of the application.

You can run the application locally using the following command. The `-w` flag enables auto-reload whenever the application code changes.

Build Docker Images

You can use the src/01-build-docker-images.sh Bash script to build the Docker container image for each container app.

Before running any script in the src folder, make sure to customize the value of the variables inside the 00-variables.sh file located in the same folder. This file is embedded in all the scripts and contains the following variables:

The Dockerfile under the src folder is parametric and can be used to build the container images for both chat applications.

Test applications locally

You can use the src/02-run-docker-container.sh Bash script to test the containers for the two chat applications.

Push Docker containers to the Azure Container Registry

You can use the src/03-push-docker-image.sh Bash script to push the Docker container images for the two chat applications to the Azure Container Registry (ACR).

Monitoring

Azure Container Apps provides several built-in observability features that together give you a holistic view of your container app’s health throughout its application lifecycle. These features help you monitor and diagnose the state of your app to improve performance and respond to trends and critical problems.

You can use the Log Stream panel on the Azure Portal to see the logs generated by a container app, as shown in the following screenshot.

Alternatively, you can open the Logs panel, as shown in the following screenshot, and use a Kusto Query Language (KQL) query to filter, project, and retrieve only the desired data.

Review deployed resources

You can use the Azure portal to list the deployed resources in the resource group, as shown in the following picture:

You can also use Azure CLI to list the deployed resources in the resource group:

You can also use the following PowerShell cmdlet to list the deployed resources in the resource group:

Clean up resources

You can delete the resource group using the following Azure CLI command when you no longer need the resources you created. This will remove all the Azure resources.

Alternatively, you can use the following PowerShell cmdlet to delete the resource group and all the Azure resources.