This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

While I have written before about the speed of the Movidius (Up and running with a Movidius container in just minutes on Linux), there were always challenges “compiling” models to run on that ASIC. Since that post, Intel has been hard at work on OpenVINO and Microsoft has been contributing to ONNX. Combining the two, we can now take something as simple as a model created in http://customvision.ai and run it at the edge, accelerated by OpenVINO.

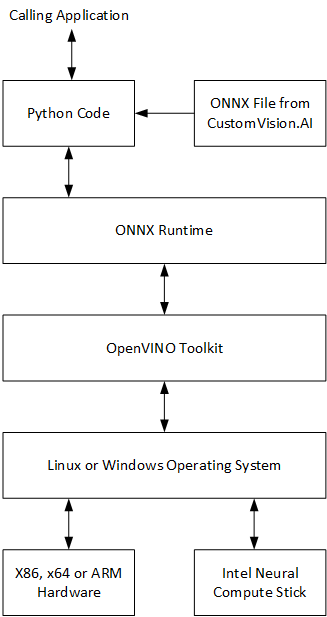

Confused? The following block diagram shows the relationship:

On standard hardware with an RTSP camera, I am able to score 3.4 frames per second on 944 x 480 x 24-bit images with version 1 of the Compute Stick, or 6.2 frames per second with version 2. While I can get something close to this using the CPU, the Movidius frees the CPU and allows multiple “calling applications”, while CPU-based scoring is limited to just one.

| OpenVINO Tag | Hardware | FPS from RTSP | FPS Scored | CPU Average | Memory |
| --- | --- | --- | --- | --- | --- |
| CPU_FP32 | 4 @ Atom 1.60 GHz (E3950) | 25 | 3.43 | 300% (of 400%) | 451 MB |
| GPU_FP16 | Intel® HD Graphics 505 on E3950 | 25 | 6.3 | 70% (of 400%) | 412 MB |
| GPU_FP32 | Intel® HD Graphics 505 on E3950 | 25 | 5.5 | 75% (of 400%) | 655 MB |
| MYRIAD_FP16 | Neural Compute Stick | 25 | 3.6 | 20% (of 400%) | 360 MB |
| MYRIAD_FP16 | Neural Compute Stick version 2 | 25 | 6.2 | 30% (of 400%) | 367 MB |
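One way to read the table above is to divide the scored frame rate by the CPU consumed, which gives a rough "frames per second per full CPU core" figure for each target. The sketch below does only that arithmetic on the numbers copied from the table; the metric and the function names are my own illustration, not something OpenVINO reports.

```python
# Figures copied from the table above: (tag, hardware, fps_scored, cpu_percent).
RESULTS = [
    ("CPU_FP32", "Atom E3950", 3.43, 300),
    ("GPU_FP16", "HD Graphics 505", 6.3, 70),
    ("GPU_FP32", "HD Graphics 505", 5.5, 75),
    ("MYRIAD_FP16", "Neural Compute Stick", 3.6, 20),
    ("MYRIAD_FP16", "Neural Compute Stick 2", 6.2, 30),
]


def fps_per_core(fps_scored, cpu_percent):
    """Frames scored per second for each full CPU core (100%) consumed."""
    return fps_scored / (cpu_percent / 100.0)


def rank_by_efficiency(results):
    """Sort rows so the target that costs the least CPU per frame comes first."""
    return sorted(results, key=lambda r: fps_per_core(r[2], r[3]), reverse=True)


if __name__ == "__main__":
    for tag, hardware, fps, cpu in rank_by_efficiency(RESULTS):
        print(f"{tag:12s} {hardware:24s} {fps_per_core(fps, cpu):6.2f} FPS per core")
```

By this measure both Compute Sticks come out well ahead of the CPU and GPU targets, which is the "frees the CPU" effect described earlier.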

More information is available here: https://software.intel.com/content/www/us/en/develop/articles/get-started-with-neural-compute-stick.html. Most of this work is based on this reference implementation: https://github.com/Azure-Samples/onnxruntime-iot-edge/blob/master/README-ONNXRUNTIME-OpenVINO.md.

Similar to the reference implementation above, I base this approach on a Docker container, which makes it portable and deployable as an Azure IoT Edge module. Note that while OpenVINO, ONNX and Movidius are supported on Windows, exposing the hardware to a container is only supported on Linux.

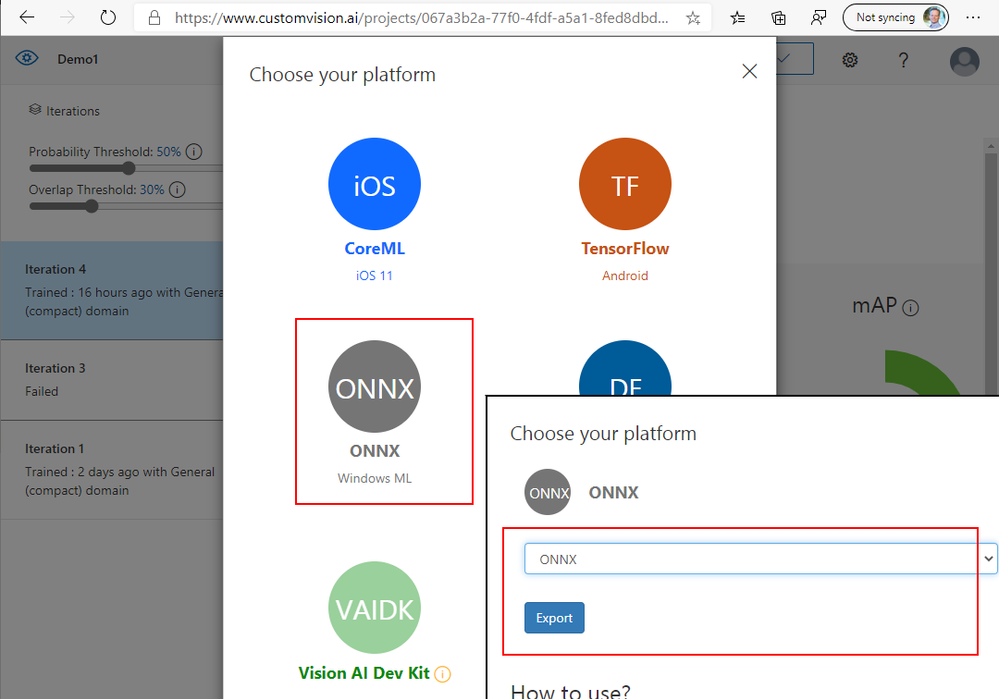

Step 1: In CustomVision.AI, create and train a model, then export it as ONNX.

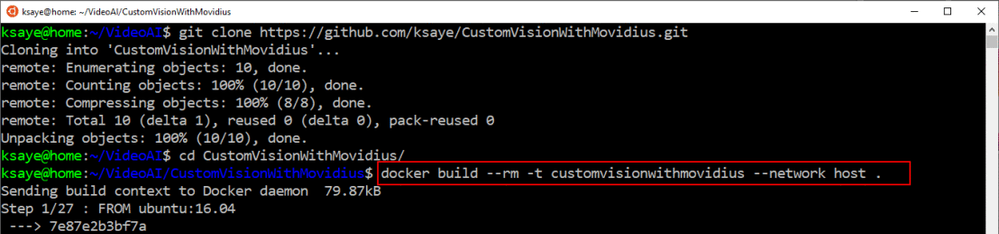

Step 2: Using Git, clone the following repository: https://github.com/ksaye/CustomVisionWithMovidius.git

Modify line 67 of the DockerFile to reflect the URL of your exported ONNX zip file.

Step 3: On a Linux machine with Docker or Moby installed, open a command prompt in the directory where you cloned the repository and run the command below. The build will take a while.

docker build --rm -t customvisionwithmovidius --network host .

Step 4: On the host Linux PC, run the following commands to ensure that the application has access to the USB or Integrated MyriadX ASIC:

sudo usermod -a -G users "$(whoami)"

echo 'SUBSYSTEM=="usb", ATTRS{idProduct}=="2150", ATTRS{idVendor}=="03e7", GROUP="users", MODE="0660", ENV{ID_MM_DEVICE_IGNORE}="1"' | sudo tee /etc/udev/rules.d/97-myriad-usbboot.rules

echo 'SUBSYSTEM=="usb", ATTRS{idProduct}=="2485", ATTRS{idVendor}=="03e7", GROUP="users", MODE="0660", ENV{ID_MM_DEVICE_IGNORE}="1"' | sudo tee -a /etc/udev/rules.d/97-myriad-usbboot.rules

echo 'SUBSYSTEM=="usb", ATTRS{idProduct}=="f63b", ATTRS{idVendor}=="03e7", GROUP="users", MODE="0660", ENV{ID_MM_DEVICE_IGNORE}="1"' | sudo tee -a /etc/udev/rules.d/97-myriad-usbboot.rules

sudo udevadm control --reload-rules

sudo udevadm trigger

sudo ldconfig
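To confirm that the host actually sees a Myriad device before starting the container, one option is to look through the USB devices that Linux exposes under sysfs. The sketch below is a minimal check, assuming the standard `/sys/bus/usb/devices` layout; the vendor and product IDs are the ones from the udev rules above, while the function name `find_myriad_devices` and its `sysfs_root` parameter are hypothetical names chosen for this example.

```python
from pathlib import Path

MYRIAD_VENDOR_ID = "03e7"                      # vendor ID from the udev rules above
MYRIAD_PRODUCT_IDS = {"2150", "2485", "f63b"}  # product IDs from the udev rules above


def find_myriad_devices(sysfs_root="/sys/bus/usb/devices"):
    """Return (device_name, product_id) for each attached USB device that matches."""
    found = []
    root = Path(sysfs_root)
    if not root.is_dir():
        return found  # not Linux, or sysfs is not mounted
    for dev in sorted(root.iterdir()):
        vendor_file = dev / "idVendor"
        product_file = dev / "idProduct"
        if not (vendor_file.is_file() and product_file.is_file()):
            continue  # USB interface entries carry no ID files
        vendor = vendor_file.read_text().strip().lower()
        product = product_file.read_text().strip().lower()
        if vendor == MYRIAD_VENDOR_ID and product in MYRIAD_PRODUCT_IDS:
            found.append((dev.name, product))
    return found


if __name__ == "__main__":
    devices = find_myriad_devices()
    if devices:
        for name, product in devices:
            print(f"Found Myriad device at {name} (product {product})")
    else:
        print("No Myriad device found")
```

An empty result only tells you the device is not enumerated on the USB bus; it says nothing about whether the group permissions from the rules have taken effect.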

Step 5: With the image built, run the following command to create the container, start it and follow its logs. Note that this web service listens on port 87 by default.

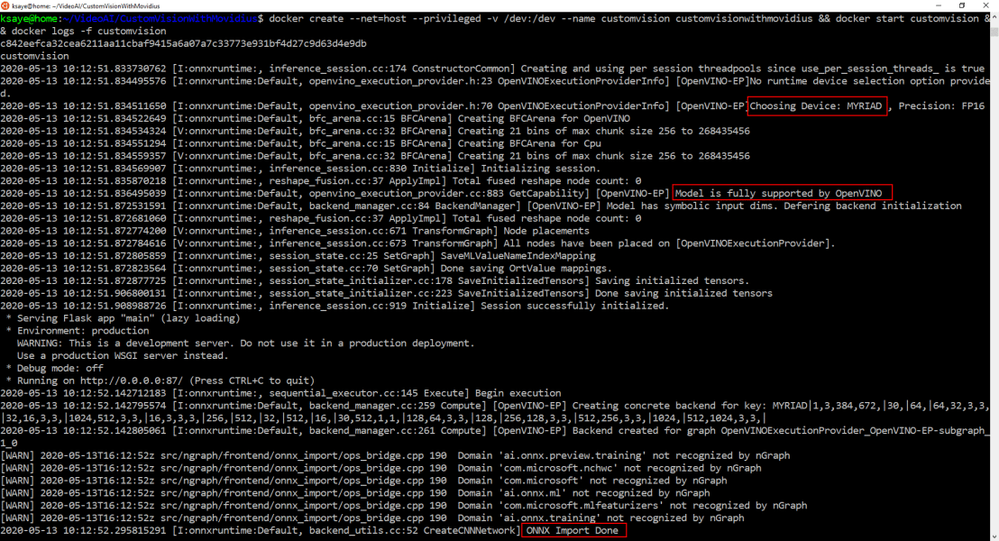

docker create --net=host --privileged -v /dev:/dev --name customvision customvisionwithmovidius && docker start customvision && docker logs -f customvision
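Because the container takes a moment to load the model, it can help to wait until the service accepts connections before sending anything. The following is a small sketch that polls a TCP port; the port number 87 comes from the step above, and `wait_for_port` with its parameters is a hypothetical helper, not part of the repository.

```python
import socket
import time


def wait_for_port(host="127.0.0.1", port=87, timeout=60.0, interval=1.0):
    """Poll until a TCP connection to host:port succeeds or `timeout` seconds pass."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            # A successful connect means something is listening; close it right away.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)
```

Calling `wait_for_port()` with the defaults returns `True` as soon as something is listening on 127.0.0.1:87, or `False` after a minute. A successful connection shows only that the port is open, not that the model has finished loading, so the log output remains the better indicator.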

Step 6: To send an image to the web service, run the following curl command, replacing 1.jpg with your image.

curl -X POST http://127.0.0.1:87/image -F imageData=@1.jpg
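If you would rather call the service from code than from curl, the same multipart POST can be built with the Python standard library. This is a sketch under a few assumptions: the URL and the `imageData` field name are taken from the curl command above, the function names are hypothetical, and because the response format is not described here the body is returned as raw text.

```python
import os
import urllib.request
import uuid


def build_multipart(field_name, filename, payload, content_type="image/jpeg"):
    """Encode one file as multipart/form-data; return (body, Content-Type header)."""
    boundary = uuid.uuid4().hex
    head = (
        f"--{boundary}\r\n"
        f'Content-Disposition: form-data; name="{field_name}"; filename="{filename}"\r\n'
        f"Content-Type: {content_type}\r\n\r\n"
    ).encode("utf-8")
    tail = f"\r\n--{boundary}--\r\n".encode("utf-8")
    return head + payload + tail, f"multipart/form-data; boundary={boundary}"


def score_image(path, url="http://127.0.0.1:87/image", timeout=30):
    """POST the image at `path` to the web service and return the response text."""
    with open(path, "rb") as f:
        payload = f.read()
    body, content_type = build_multipart("imageData", os.path.basename(path), payload)
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": content_type}, method="POST"
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")
```

With the container running, `print(score_image("1.jpg"))` should be roughly equivalent to the curl call.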

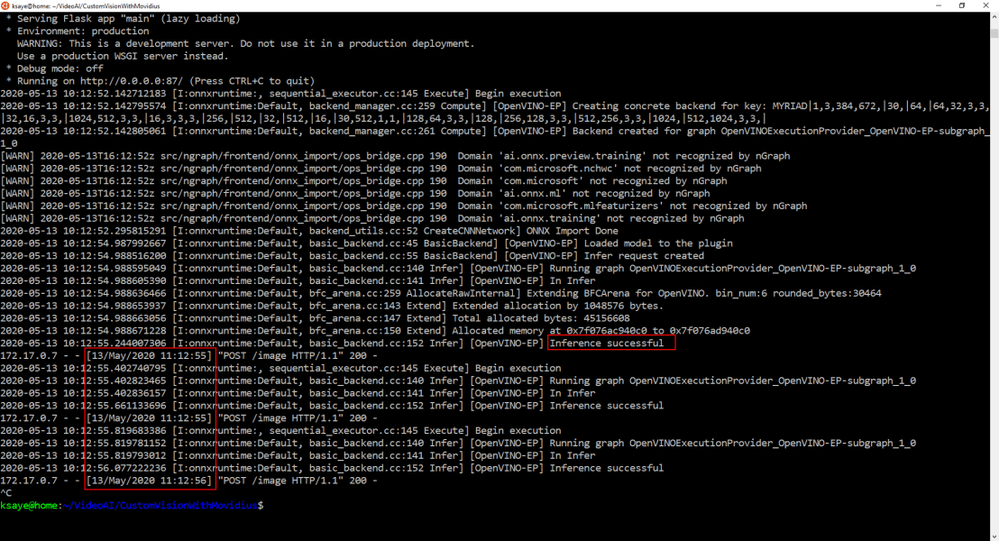

Looking at the screen, I see the Myriad was found, and the ONNX model was supported and loaded.

Because I have a process sending images to the web service as fast as it will take them, the output shows multiple inferences per second.
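A simple way to reproduce that kind of measurement is to call the service back to back for a fixed period and divide the number of completed calls by the elapsed time. The sketch below shows only the timing loop; `measure_rate` and its parameters are hypothetical, and `send_once` stands for whatever function you use to post a single image (for example, one that performs the POST from Step 6).

```python
import time


def measure_rate(send_once, duration=10.0, clock=time.monotonic):
    """Call send_once() back to back for `duration` seconds; return calls per second."""
    start = clock()
    count = 0
    while clock() - start < duration:
        send_once()  # blocks until the service has answered
        count += 1
    elapsed = clock() - start
    return count / elapsed if elapsed > 0 else 0.0
```

Because each call blocks until the response arrives, this measures the end-to-end rate seen by a single caller, including HTTP overhead, rather than the raw inference speed of the device.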