This post has been republished via RSS; it originally appeared at: Premier Field Engineering articles.

Hello. I’m Sean Greenbaum, Sr. Premier Field Engineer (PFE) based in Orlando, FL. Today, I’m here to talk to you about our new Assessments program, called On-Demand Assessments.

You likely know of these assessments by their previous name, Risk Assessment Program (RAP), or as we’ve been calling them since they became cloud based, RAP as a Service.

Background

The RAP as a Service program was wildly successful and very well received by our customers. You worked with your Microsoft Technical Account Manager (TAM) to be provisioned access to the RAP tool for your chosen technology (Active Directory, Exchange, SQL, SharePoint, and a couple dozen others), downloaded the tool, ran it to collect the needed data, and uploaded the results to Microsoft for analysis. All of this was initiated through a web-based portal that gave you, your TAM, and any necessary PFEs on your account access to the data. This allowed you to work closely with your Microsoft account team to review the collected data, address any issues and risks, and rerun the tool as you saw fit to verify remediation and collect new data.

On-Demand Assessments

A new system was developed to take advantage of changes in the way Microsoft provides services and to leverage existing technologies in Azure. Microsoft announced a new services model called Unified Support. There are many differences between Unified Support and Premier Support, and this article isn’t intended to go through them. However, I do want to point out one of the changes Unified Support brought to our Services customers: Services Hub.

Services Hub is intended to give our customers even more self-service options, including the ability to initiate On-Demand Assessments (there are some licensing conversations to have with your account team first). If you are already a Unified Support customer, then you’ve likely had these conversations. You can contact your TAM with questions and they will be able to help you through the process. Services Hub is used to provision your assessment, and Azure Log Analytics is used to store and process the data that is collected.

All current Risk Assessment offerings are being converted from the old system to the new On-Demand system, powered by Services Hub and Azure Log Analytics. For more information on which technologies have already been converted to the new system, please click here.

Note: Offline Assessments are NOT being converted at this time.

For existing Unified Support customers, you can log into the Services Hub portal and access the Health/Assessments page. If you are not a Unified Support customer, or do not have access to the Services Hub, contact your TAM for assistance.

Due to these changes, the process to get set up and ready to go is slightly more complicated. I’ve broken the process down into six phases.

- Phase 1: Set up Azure Log Analytics

- Phase 2: Link Services Hub workspace to Azure Log Analytics workspace

- Phase 3: Configure the on-premises data collection server

- Phase 4: Provision your Assessment in Services Hub

- Phase 5: Add assessment to the Data Collection machine

- Phase 6: Grant Access to the Azure Log Analytics Workspace and review the data

Now that you have a high-level overview, let’s begin with our first On-Demand Assessment.

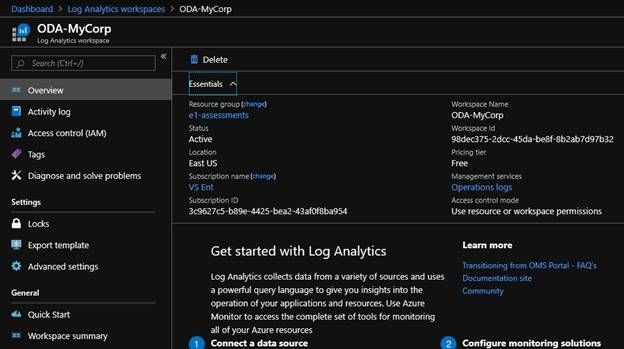

Phase 1: Set up Azure Log Analytics

All data collected by the new process is stored in an Azure Log Analytics workspace. Specifically, it is stored in a workspace in your Azure subscription, under your control, not in one controlled by Microsoft. This means you control who has access to the data. You can audit it, and when you want it deleted, you have the power to delete it, just like any other Azure Log Analytics data you may already be using.

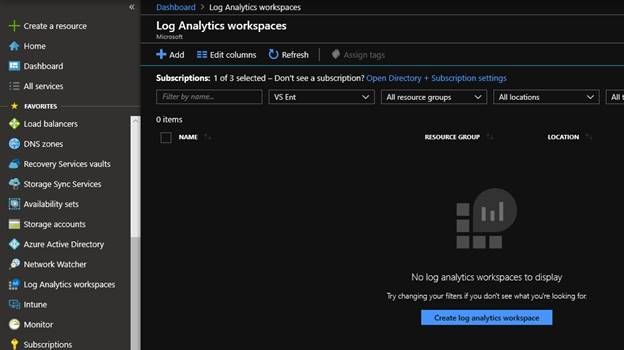

Of course, this means you need to create an Azure Log Analytics workspace to hold your data. Log in to the Azure portal and search for Log Analytics workspaces. (You may want to “star” it so it appears on your Favorites list of Azure services.)

Here, we see I do not have any created. Click on Add to create one.

Note: If you already have an Azure Log Analytics workspace and you would like to use that one, you can. Make note of its name as you will need that later.

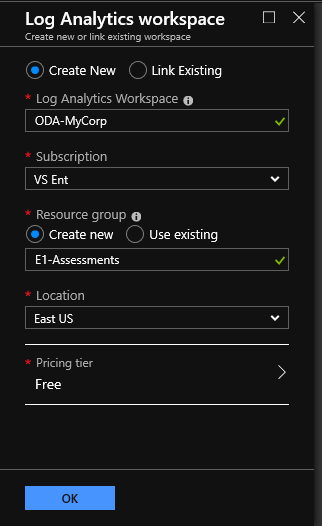

Give your new Log Analytics Workspace a friendly name, choose your subscription, select or create a new Resource Group, choose a location to store the data and choose the Free pricing tier.

Note: As of this writing (March 2019), Azure Log Analytics lets you upload 5 GB of data per month and retain it for 31 days for free (Azure Log Analytics Pricing). The data uploaded by an On-Demand Assessment is expected to be well under this limit, generally tens to hundreds of MB. The data is also overwritten each time it is collected, meaning it will be retained for less than 31 days.
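As a rough sanity check on those free-tier numbers, here is a minimal PowerShell sketch. The function name `Get-MonthlyUploadEstimateMB` and the 100 MB per-run figure are illustrative assumptions, not values taken from the assessment tooling; the 5 GB allowance is simply the figure quoted above.

```powershell
# Hypothetical helper: estimate the monthly upload volume for one assessment
# so it can be compared with the 5 GB/month free allowance quoted above.
function Get-MonthlyUploadEstimateMB {
    param(
        [Parameter(Mandatory = $true)][double]$MBPerRun,  # assumed size of one upload, in MB
        [int]$DaysBetweenRuns = 7                         # the default schedule is weekly
    )
    # Worst case: count every run that can start inside a 31-day month.
    $runsPerMonth = [math]::Ceiling(31 / $DaysBetweenRuns)
    return $MBPerRun * $runsPerMonth
}

$freeAllowanceMB = 5 * 1024
$estimateMB = Get-MonthlyUploadEstimateMB -MBPerRun 100
"Estimated upload: $estimateMB MB of a $freeAllowanceMB MB allowance"
"Fits in the free allowance: $($estimateMB -lt $freeAllowanceMB)"
```

If you run several assessments against the same workspace, add the estimates together before comparing them with the allowance.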

Note: If your company does not have an Azure Subscription that can be used, contact your TAM.

Phase 2: Link Services Hub workspace to Azure Log Analytics workspace

Log into the Services Hub portal at https://serviceshub.microsoft.com. If you do not have access, contact your TAM.

Services Hub also uses the concept of workspaces. Each organization will have at least one workspace. A workspace contains many elements of Unified Support; however, I’m only going to focus on On-Demand Assessments here.

First, let’s connect this Services Hub workspace to your Azure Log Analytics workspace.

Note: Each Services Hub Workspace can only be linked to one Azure Log Analytics Workspace. If your organization has a need to separate On-Demand Assessment data into separate Azure Log Analytics workspaces, then you must work with your TAM to create additional Services Hub Workspaces.

Note: To complete the link between the Services Hub workspace and the Azure Log Analytics workspace, you must be an Owner of the Azure subscription and an Owner of the Azure Log Analytics workspace. This is a one-time operation, and these are the required permissions as of this writing.

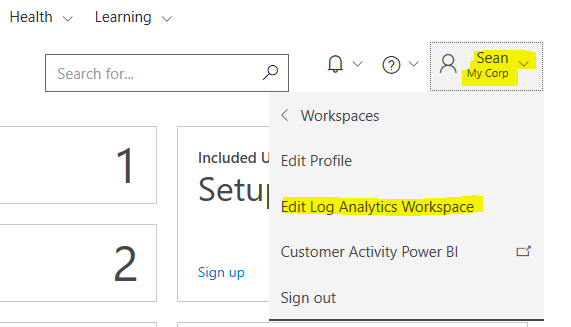

Select your name from the top right-hand corner. In the drop-down, select “Edit Log Analytics Workspace”.

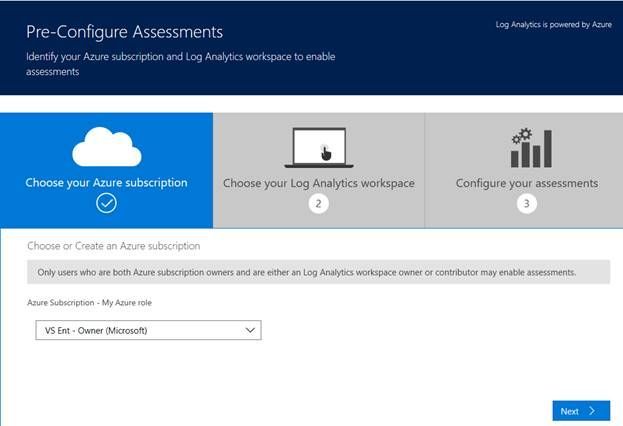

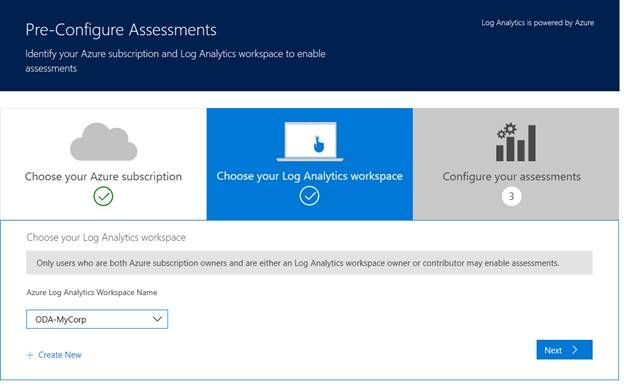

On the Pre-Configure Assessments Page, select your Azure Subscription from the drop-down menu. Click Next.

On the “Choose your Log Analytics workspace” page, select the Azure Log Analytics workspace you would like to store this data in. If you skipped Phase 1 above, you can click the “Create New” link to create a new Azure Log Analytics workspace. Click Next to proceed.

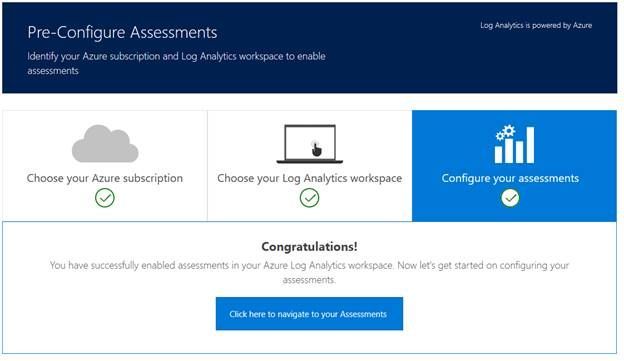

You have now successfully linked the Services Hub workspace to your Azure Log Analytics workspace.

Phase 3: Configure the on-premises data collection server

There are multiple supported ways to configure your data collection environment.

- Option 1) Data is collected by a single machine called the Data Collection machine. It then sends the data to an OMS Gateway server, which has internet connectivity to Azure Log Analytics.

- Option 2) Data is collected by a single machine called the Data Collection machine. It has internet connectivity and can upload the data directly to Azure Log Analytics.

- Option 3) You already have a SCOM infrastructure in place and wish to use that to collect the data and upload it to Azure Log Analytics.

All options are detailed here. Download the Setup Assessment.pdf file. If you already know which assessment you’ll be running, also download the Prerequisite document for that assessment. You will need it in the next section.

Here, I will detail the steps for Option 2. In my experience, this is going to be the most common option.
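Because Option 2 depends on the data collection machine reaching Azure Log Analytics directly, a quick connectivity check can save time later. The sketch below is an illustration under assumptions: the helper name `Get-LogAnalyticsEndpoint` is hypothetical, the workspace ID is a placeholder, and the host name patterns are the ones listed in the agent's firewall requirements for the Azure Commercial cloud (other clouds use different suffixes, and the agent needs additional endpoints that are not tested here).

```powershell
# Hypothetical helper: build two of the Log Analytics host names for a workspace.
# Assumption: Azure Commercial cloud host name patterns.
function Get-LogAnalyticsEndpoint {
    param([Parameter(Mandatory = $true)][string]$WorkspaceId)
    "${WorkspaceId}.ods.opinsights.azure.com"
    "${WorkspaceId}.oms.opinsights.azure.com"
}

# Placeholder ID; substitute the Workspace ID shown in the Azure portal.
$workspaceId = '00000000-0000-0000-0000-000000000000'

foreach ($endpoint in Get-LogAnalyticsEndpoint -WorkspaceId $workspaceId) {
    if (Get-Command Test-NetConnection -ErrorAction SilentlyContinue) {
        # Test-NetConnection is available on Windows Server 2012 R2 and later.
        Test-NetConnection -ComputerName $endpoint -Port 443 |
            Select-Object ComputerName, TcpTestSucceeded
    } else {
        "Test-NetConnection is not available here; check $endpoint on port 443 manually."
    }
}
```

A failed test with the placeholder ID is expected; the result only means something once your real Workspace ID is in place.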

Build a single Data collection machine

Build a new VM. This VM will be your data collection machine. It will need access to every server it collects data from, for each assessment you run on it.

Note: If your intention is to use this machine for multiple assessments, it will need to meet the pre-reqs for all assessments. Review the documentation for each assessment to help with sizing the VM properly, configuring appropriate firewall rules, and provisioning needed service accounts.

The new VM must run at least Windows Server 2008 R2 SP1 and have .NET 4.0 or newer installed.

Note: The On-Demand assessment tool is being recompiled against .NET 4.6.2 and will require that version starting July 2019. Ensure at least .NET 4.6.2 is installed on your data collection machine now.
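One way to confirm the .NET requirement is to read the Release value that .NET Framework 4.5 and later write to the registry. This is a minimal sketch; the helper name `Test-DotNet462OrLater` is hypothetical, and 394802 is the lowest Release value Microsoft documents for .NET Framework 4.6.2.

```powershell
# Hypothetical helper: true when a registry Release value means .NET 4.6.2 or newer.
function Test-DotNet462OrLater {
    param([Parameter(Mandatory = $true)][int]$Release)
    # 394802 is the lowest documented Release value for .NET Framework 4.6.2.
    return ($Release -ge 394802)
}

# .NET Framework 4.5 and later record their version under this key.
$key = 'HKLM:\SOFTWARE\Microsoft\NET Framework Setup\NDP\v4\Full'
if (Test-Path -Path $key) {
    $release = (Get-ItemProperty -Path $key -Name Release).Release
    "Release $release, 4.6.2 or later: $(Test-DotNet462OrLater -Release $release)"
} else {
    'No .NET Framework 4.5 or later registration was found on this machine.'
}
```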

Note: It is recommended that your data collection machine run the newest operating system available, fully patched. As of this writing, that means either Server 2016 or Server 2019.

Each assessment documents its recommended minimum hardware specs. In general, most will recommend 16GB of RAM, at least 2 CPU cores and at least 10GB of free disk space. If you have multiple assessments running from a single data collection machine, you’ll need to adjust those specs as necessary to ensure enough resources for all tasks and data.
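The general guidance above can be checked with a short script. This is a sketch only: `Test-CollectorSpec` is a hypothetical helper, the thresholds are the general figures from this article rather than those of any specific assessment, and it assumes the working directory will live on drive C:. It also counts logical processors, which may differ from physical cores.

```powershell
# Hypothetical helper: compare resources with the general guidance
# (16 GB RAM, 2 CPU cores, 10 GB free disk).
function Test-CollectorSpec {
    param(
        [Parameter(Mandatory = $true)][double]$MemoryGB,
        [Parameter(Mandatory = $true)][int]$Cores,
        [Parameter(Mandatory = $true)][double]$FreeDiskGB
    )
    ($MemoryGB -ge 16) -and ($Cores -ge 2) -and ($FreeDiskGB -ge 10)
}

# On Windows with PowerShell 3.0 or later, CIM classes expose the real values.
if (Get-Command Get-CimInstance -ErrorAction SilentlyContinue) {
    $cs   = Get-CimInstance -ClassName Win32_ComputerSystem
    $disk = Get-CimInstance -ClassName Win32_LogicalDisk -Filter "DeviceID='C:'"
    $actual = @{
        # Rounded because a 16 GB VM usually reports slightly under 16 GB.
        MemoryGB   = [math]::Round($cs.TotalPhysicalMemory / 1GB)
        Cores      = $cs.NumberOfLogicalProcessors
        FreeDiskGB = $disk.FreeSpace / 1GB
    }
    "Meets general guidance: $(Test-CollectorSpec @actual)"
}
```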

The VM also must be joined to your AD forest that contains the servers being analyzed.

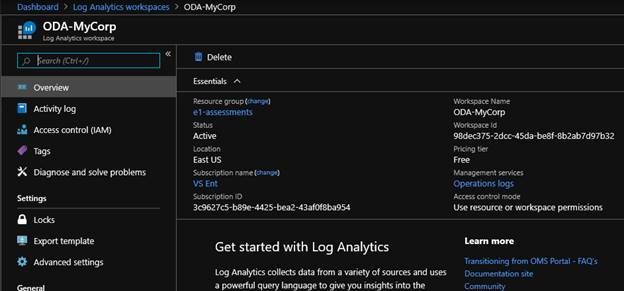

Once the VM is built and ready to go, install the Microsoft Monitoring Agent (MMA). Log on to the Azure portal and locate your Azure Log Analytics workspace.

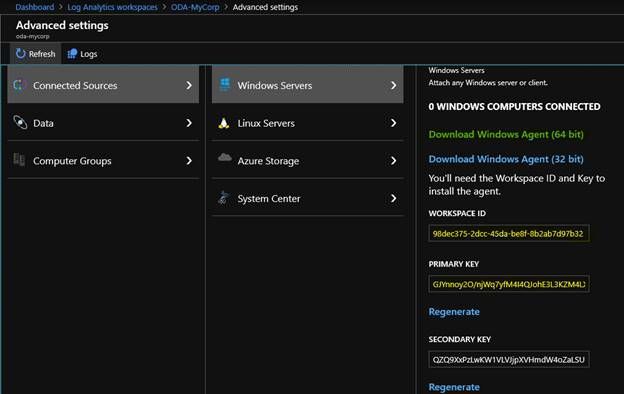

Click Advanced Settings, Connected Sources, Windows Servers.

Download the Windows Agent (64-bit). (If the data collection machine runs a 32-bit OS, download the 32-bit agent instead.)

Make note of the Workspace ID and either the Primary or Secondary Key. Either key will work; you only need one of them.

On the data collection machine, run the MMASetup file you downloaded. Click Next to start the wizard, agree to the License terms, and select your preferred installation folder.

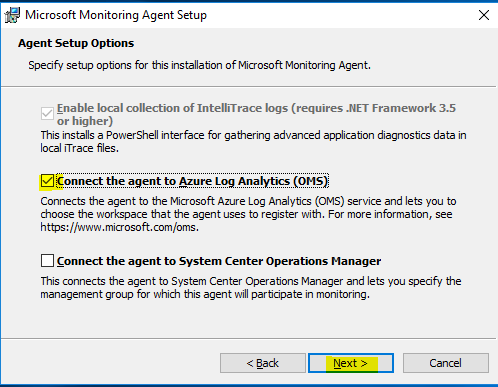

On the Agent Setup Options page, check the box “Connect the agent to Azure Log Analytics (OMS)”.

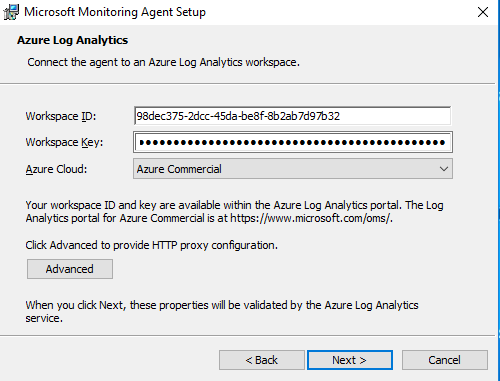

The next page will ask you to enter the Workspace ID and key from the Azure portal. This is how the agent authenticates with the Log Analytics workspace. Also select the correct Azure cloud if using a cloud other than Azure Commercial. If you have special HTTP proxy configurations, you can enter those here too. Click Next when done.

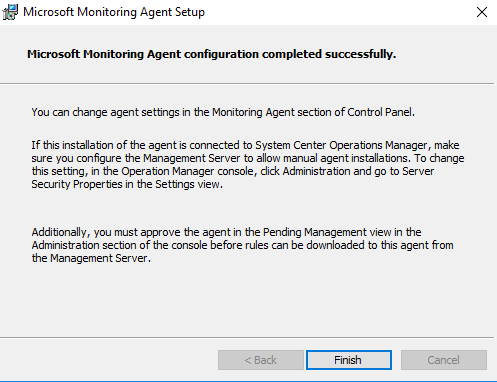

Confirm the Install. It should only take a minute or two.

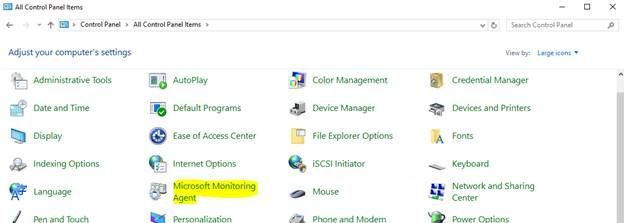

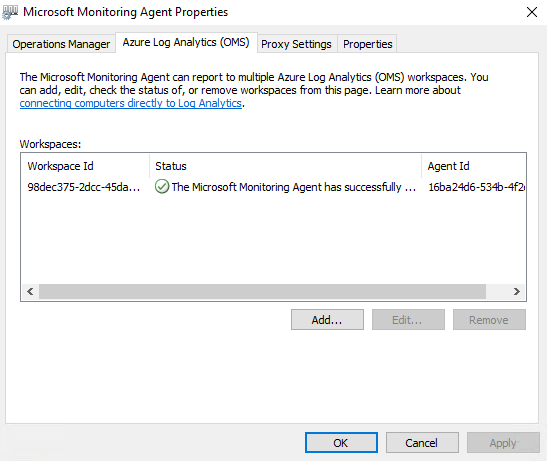

Confirm you are connected to Azure. Open Control Panel and search for “Microsoft Monitoring Agent”.

Select it.

Click on the Azure Log Analytics (OMS) tab at the top. Confirm the Status has a Green checkmark for your Workspace ID. This indicates a successful connection.
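The same check can be approximated from PowerShell. This sketch assumes the agent's Windows service is named HealthService and that the agent has registered its `AgentConfigManager.MgmtSvcCfg` COM configuration object; the wrapper name `Get-AgentWorkspace` is hypothetical. It returns nothing on a machine without the agent.

```powershell
# Hypothetical helper: list the workspaces the Microsoft Monitoring Agent
# is configured for, or return $null when the agent is not installed.
function Get-AgentWorkspace {
    try {
        $mma = New-Object -ComObject 'AgentConfigManager.MgmtSvcCfg' -ErrorAction Stop
        return $mma.GetCloudWorkspaces()
    } catch {
        return $null
    }
}

# HealthService is the Windows service behind the Microsoft Monitoring Agent.
if (Get-Command Get-Service -ErrorAction SilentlyContinue) {
    Get-Service -Name HealthService -ErrorAction SilentlyContinue |
        Select-Object Name, Status
}

$workspaces = Get-AgentWorkspace
if ($null -eq $workspaces) {
    'The Microsoft Monitoring Agent configuration object was not found on this machine.'
} else {
    $workspaces
}
```

The Control Panel view remains the reference; treat this output as a convenience for scripted checks.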

Phase 4: Provision your Assessment in Services Hub

Now that Services Hub and Azure Log Analytics are linked, and the data collection machine is connected to the Azure Log Analytics workspace, you can add the assessment(s) in Services Hub.

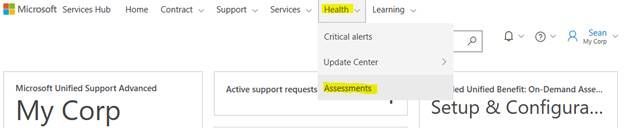

Log into Services Hub and select your Workspace (if using one other than your default)

From the top toolbar, select Health, then Assessments.

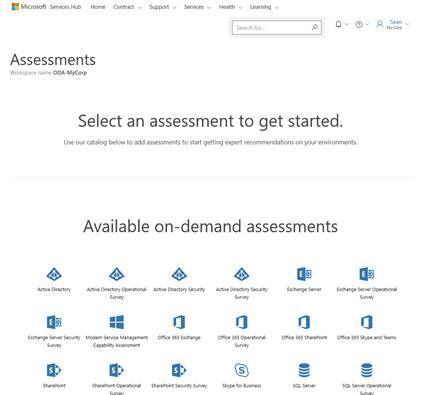

From here you will see a list of the Available On-Demand Assessments you can run.

Select the Assessment you would like to add by clicking on it.

A pop-up will be displayed with a summary of the assessment and the option to Close or Add Assessment. Click “Add Assessment”

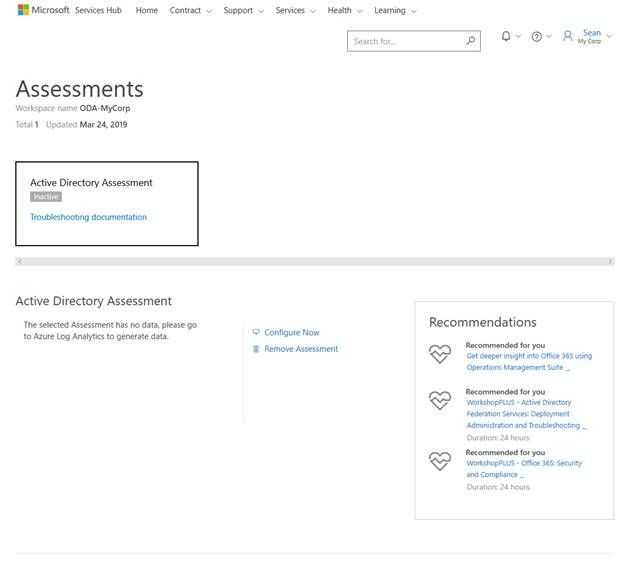

Once the system processes the Add request, you’ll be brought back to the Assessments page in the Services Hub portal. You’ll see the status of the newly added assessment as “Inactive”. Selecting the assessment will show you options to “Configure Now” or “Remove Assessment”. There is no need to use these links. We will complete the needed steps in the next section of this document.

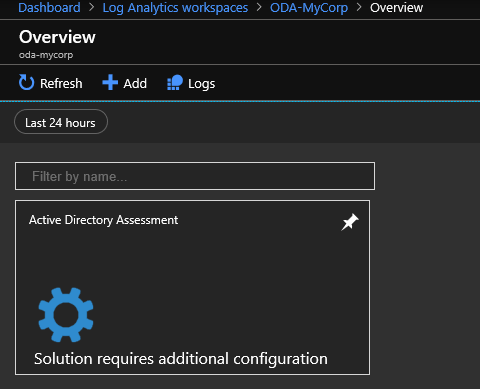

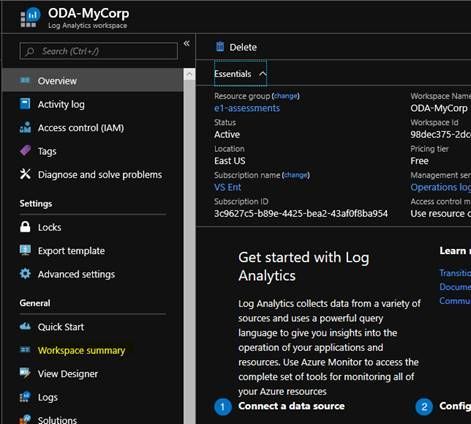

You have successfully added the Assessment to Services Hub. Next, let’s check the Azure portal to ensure it has been properly added there as well. Log into the Azure portal and locate your Azure Log Analytics Workspace.

In the list on the left-hand side, locate “Workspace Summary”. Click on it.

You’ll see that your Assessment has been added to the workspace, and it requires additional configuration. That will be handled in the next phase of this document.

Phase 5: Add assessment to the Data Collection machine

Now that all the infrastructure is ready to go, it’s time to start collecting data. Each Assessment will have different requirements for completing the data collection. This download link allows you to select which assessment you are configuring and provides the needed steps to be completed on the Data Collection Machine. Download the pre-reqs document for your assessment.

Typically, each assessment will require you to create a service account which the assessment will run under. This account will need privileged permissions to the servers and applications that are being assessed. Each assessment you run should have its own service account, and the permissions should be specific to the needs of the assessment being performed.

Once the service account is configured as required and any other pre-reqs in the document are completed, run the PowerShell command the document provides on your data collection machine. This command will prompt you for a local working directory on the data collection machine, where temporary and report data specific to the assessment will be stored. It will also prompt you for other details, such as the service account name and password, or the server names to be included.

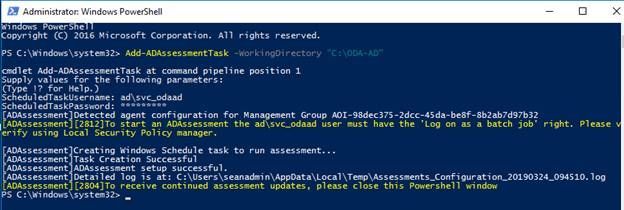

I will show the process for the Active Directory Assessment here.

Once you have created the service account and granted it the necessary rights on the domain and on the data collection machine, run the PowerShell command.

Open PowerShell as Admin.

For the AD assessment, the PowerShell command is:

Add-ADAssessmentTask -WorkingDirectory <dir here>

Note: The directory must already exist. Create it first.
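Creating the folder and registering the task can be done in one pass. In this sketch the path `C:\Assessments\AD` and the helper `New-WorkingDirectory` are illustrative assumptions; only `Add-ADAssessmentTask -WorkingDirectory` comes from the assessment documentation. The guard around the command assumes it is only present once the earlier phases are complete.

```powershell
# Hypothetical helper: create the folder if it is missing and return its full path.
function New-WorkingDirectory {
    param([Parameter(Mandatory = $true)][string]$Path)
    if (-not (Test-Path -Path $Path -PathType Container)) {
        New-Item -Path $Path -ItemType Directory -Force | Out-Null
    }
    (Resolve-Path -Path $Path).Path
}

# Example path only; pick any local folder on the data collection machine.
$workingDirectory = New-WorkingDirectory -Path 'C:\Assessments\AD'

# Register the assessment only if the command is present on this machine.
if (Get-Command Add-ADAssessmentTask -ErrorAction SilentlyContinue) {
    Add-ADAssessmentTask -WorkingDirectory $workingDirectory
} else {
    'Add-ADAssessmentTask was not found; review the prerequisites document for your assessment.'
}
```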

The PowerShell command will then prompt you to provide the Username and Password of the service account. Your output will look like this:

Close the PowerShell window.

What just happened

The PowerShell command creates a scheduled task on the data collection machine. It supplies all the necessary commands and arguments to run the data assessment.

This machine will reach out to all the systems under the scope of the assessment, collect the data from them, then perform the heavy calculations needed to determine the issues and risks to your environment. Once the data is compiled, it will upload the summary results to Azure Log Analytics for consumption.

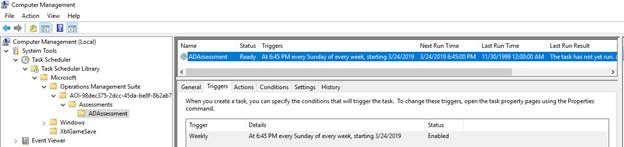

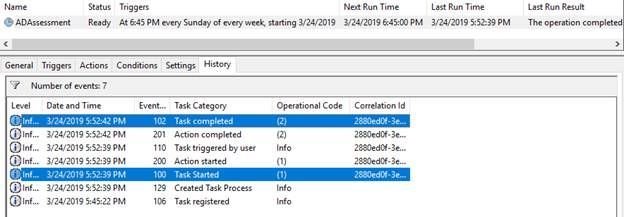

You can view the scheduled task like you would any other scheduled task.

Run Computer Management and navigate to System Tools, Task Scheduler, Task Scheduler Library, Microsoft, Operations Management Suite, AOI-<GUID>, Assessments. You’ll see a folder for each assessment you’ve added, and within each folder the scheduled task.

You’ll notice the scheduled task is set to run 1 hour after it was first created, and every 7 days after that.
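The same information is available from PowerShell on Windows Server 2012 or later, where the ScheduledTasks module exists. This is a sketch: the task path wildcard mirrors the folder shown in Task Scheduler above, and `Get-ExpectedRunTime` is a hypothetical helper that simply applies the "one hour after creation, then every 7 days" pattern.

```powershell
# Hypothetical helper: list the first few expected run times for a task
# created at a given moment (first run after 1 hour, then every 7 days).
function Get-ExpectedRunTime {
    param(
        [Parameter(Mandatory = $true)][datetime]$Created,
        [int]$Count = 3
    )
    $first = $Created.AddHours(1)
    for ($i = 0; $i -lt $Count; $i++) {
        $first.AddDays(7 * $i)
    }
}

Get-ExpectedRunTime -Created (Get-Date)

# List the assessment tasks and their actual last and next run times.
if (Get-Command Get-ScheduledTask -ErrorAction SilentlyContinue) {
    Get-ScheduledTask -TaskPath '\Microsoft\Operations Management Suite\*' -ErrorAction SilentlyContinue |
        ForEach-Object {
            $info = $_ | Get-ScheduledTaskInfo
            [pscustomobject]@{
                TaskPath    = $_.TaskPath
                TaskName    = $_.TaskName
                LastRunTime = $info.LastRunTime
                NextRunTime = $info.NextRunTime
            }
        }
}
```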

This means your Azure Log Analytics portal will receive new data every 7 days from your data collection machine. If you’d like to change the schedule, feel free to do so. Keep in mind that in some environments it may take hours or days for the data to be processed and uploaded, so setting the task to run daily may not be advisable. Generally, weekly or twice a month is our recommendation, but the decision is up to you. You can run it as frequently or infrequently as you find the data valuable.

When the scheduled task runs, it only runs for a few seconds before completing. Here you can see I manually executed the task, and it completed in about 3 seconds.

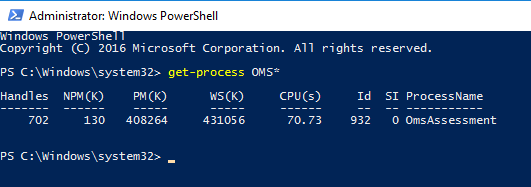

This does not mean the data was collected. The scheduled task simply executed another process which is currently doing the heavy work of reaching out to the servers, getting the data, compiling it, and sending it to the Microsoft Monitoring Agent. You can verify this by looking for a process named “OMSAssessment.exe”.

Depending on the size of the environment, this process will run for several hours or even days. As long as it is running, data is being collected and analyzed.
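A quick way to look for that process from PowerShell is sketched below. The helper name `Test-AssessmentRunning` is hypothetical; the process name is the one mentioned above, without the .exe extension, which is how `Get-Process` expects it.

```powershell
# Hypothetical helper: true while a process with the given name is running.
function Test-AssessmentRunning {
    param([string]$ProcessName = 'OMSAssessment')
    $null -ne (Get-Process -Name $ProcessName -ErrorAction SilentlyContinue)
}

if (Test-AssessmentRunning) {
    # Show when collection started (run elevated to read StartTime reliably).
    Get-Process -Name OMSAssessment | Select-Object Id, StartTime
} else {
    'OMSAssessment is not running; collection has finished or has not started yet.'
}
```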

You’ve done good work. Kick back, relax, and let the assessment do its work.

Phase 6: Grant Access to the Azure Log Analytics Workspace and review the data

Once the OMSAssessment.exe process has finished, all data collection is complete and the data is sent to the Microsoft Monitoring Agent to be processed and uploaded to Azure Log Analytics.

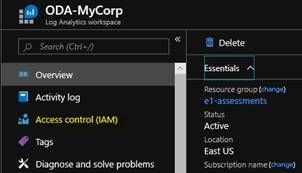

Log into the Azure web portal, select Log Analytics Workspaces and click on your Workspace.

Grant Users access to view the data

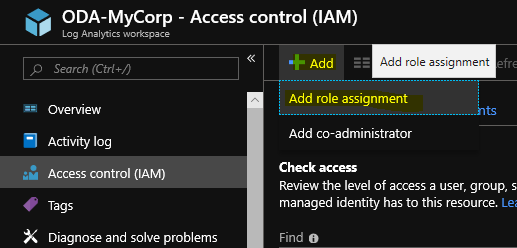

From the overview page, select Access Control (IAM)

Click Add, and select Add role assignment

From the Add role assignment blade, select the following:

- Role: Log Analytics Reader

- Assign access to: Azure AD user, group, or service principal

- Select: <Enter the name or email address of the user you would like to grant the access>

This can include external (not in your Azure AD tenant) users if guest access is allowed in your tenant.

Once you’ve selected all the individuals you’d like to grant this access to, click Save.
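If you prefer scripting the role assignment, the Az PowerShell modules offer an equivalent. This is a sketch under assumptions: it requires the Az modules and a prior `Connect-AzAccount`, and the resource group, workspace name, and sign-in name below are placeholders.

```powershell
# Placeholders only; replace with your own values.
$resourceGroup = 'rg-assessments'
$workspaceName = 'law-assessments'
$userSignIn    = 'user@contoso.com'

if (Get-Command New-AzRoleAssignment -ErrorAction SilentlyContinue) {
    # Scope the assignment to the workspace itself, not the whole subscription.
    $workspace = Get-AzOperationalInsightsWorkspace -ResourceGroupName $resourceGroup -Name $workspaceName
    New-AzRoleAssignment -SignInName $userSignIn -RoleDefinitionName 'Log Analytics Reader' -Scope $workspace.ResourceId
} else {
    'The Az PowerShell modules were not found; use the portal steps above instead.'
}
```

Scoping to the workspace keeps the grant in line with the point made throughout this article that the workspace is where access to assessment data is controlled.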

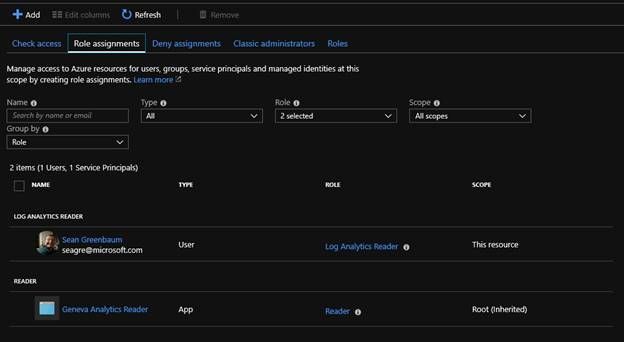

Clicking on the Role Assignments tab lets you review the various roles and what access they have to this Log Analytics workspace:

Note: If you are planning to have a Microsoft Premier Field Engineer work with you to review the results, they may ask you to grant them the Log Analytics Reader role. This allows the PFE to view the data in Azure Log Analytics. The access can be removed by you at any time. You should consider removing it when the PFE has completed their work with you.

Review the Data

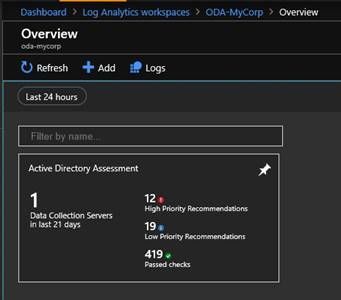

From the Overview page, select Workspace summary.

In the workspace summary you’ll see the Assessment you ran. (My demo is showing the Active Directory Assessment)

Click on the Assessment tile to be brought into the Assessment. Here you will see the Recommendations broken down by category and sorted by weight.

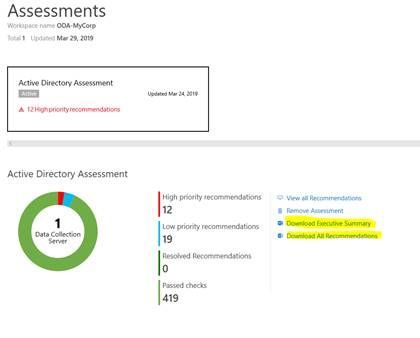

Reports

Each assessment generates its own data and reports. From the Services Hub dashboard, you can download the Executive Summary and the All Recommendations documents.

Some assessments also log additional report data which can only be found on the Data Collection machine.

Note: Previously this data was uploaded to the Risk Assessment portal. However, due to concerns about PII, it has been decided this data will not be uploaded. Instead, you will retain full custody of the data and can share it with others at your discretion.

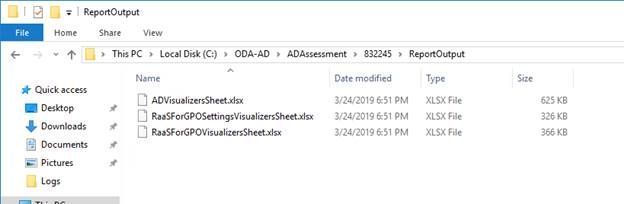

Remember when you set up the assessment you were asked to supply a working folder for the data? Under this folder location is a subfolder with a 6-digit number. This folder and its contents are regenerated each time the assessment is run. The number may change, and the previous contents will be erased. Under the 6-digit folder is another folder, ReportOutput. Here you may find additional reports that can assist you in your remediation and documentation efforts.
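Because the 6-digit folder name can change between runs, a small script can locate the report folder for you. This is a sketch assuming PowerShell 3.0 or later; `Get-AssessmentReportFolder` is a hypothetical helper and `C:\Assessments\AD` is an example working directory.

```powershell
# Hypothetical helper: find ReportOutput folders under 6-digit subfolders
# of an assessment working directory.
function Get-AssessmentReportFolder {
    param([Parameter(Mandatory = $true)][string]$WorkingDirectory)
    Get-ChildItem -Path $WorkingDirectory -Directory |
        Where-Object { $_.Name -match '^\d{6}$' } |
        ForEach-Object { Join-Path -Path $_.FullName -ChildPath 'ReportOutput' } |
        Where-Object { Test-Path -Path $_ -PathType Container }
}

# Example path only; use the working directory you supplied during setup.
$workingDirectory = 'C:\Assessments\AD'
if (Test-Path -Path $workingDirectory) {
    Get-AssessmentReportFolder -WorkingDirectory $workingDirectory |
        ForEach-Object { Get-ChildItem -Path $_ -File }
}
```

Since the folder is erased on each run, copy any reports you want to keep to another location before the next scheduled collection.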

Be sure to check this location for more goodies.

FAQs

Q1) Can I add multiple assessments to one Workspace/Data Collection machine?

A1) Absolutely. Simply start at Phase 4 of this document to add your additional assessments. However, a word of caution: the security boundary is the Azure Log Analytics workspace. If you need to separate your assessments and delegate access to different teams, you need to store them in separate Azure Log Analytics workspaces. A Services Hub workspace can only be linked to one (1) Azure Log Analytics workspace, so this will require you to work with your TAM to create an additional Services Hub workspace and complete all the steps in this document as if it were new.

Q2) What if I want to do multiples of the same assessment? For example, I have multiple AD Forests and I’ve purchased multiple AD Assessments for them.

A2) Yes; however, you need to have your TAM create multiple Services Hub workspaces, each linked to its own Azure Log Analytics workspace. Essentially, each forest will be a new buildout of the steps in this document.

Conclusion

You’ve linked your Services Hub workspace to your Azure Log Analytics Workspace, built your Data Collection machine, provisioned your assessments, collected the data from the target servers, and granted access to your staff to view the actionable items in the portal. The assessment you have configured will now rescan the environment and upload the data every 7 days (unless you’ve changed the defaults).

Be sure to check the portal often and review the results. Being proactive is the best way to ensure issues don’t become problems. Using this data is a great way to do that. As always, if you have questions about some of the items found during the assessment, contact your TAM and ask to speak to a PFE.

Until next time, Sean “Whats this button do” Greenbaum.

May I know whether Windows 10 can be used as the data collection machine? I found that a collection machine running a client OS version appears to work.