This post has been republished via RSS; it originally appeared at: Core Infrastructure and Security Blog articles.

Hi Team, it's Eric Jansen again, and as always, I'm excited to show you guys what I have in store for you. Now, I gave you guys a break in Part 2 of the series and kept that one short, but break time is over, team; I'm going back to a bit of a more in-depth discussion today, so buckle up and get comfortable. In this edition and in some future editions of the series, I'll be focusing on collecting data from the DNS Analytical Log, based on certain use cases and presenting it in a more concise and useful manner. In order to do this, I'll share some examples of how you can parse the DNS Analytical Log to find possible items of interest, since just scrolling through literally, what can be millions of lines of events, just doesn't sound like a fun time to me; Nor is it really feasible. Time is money!

For this scenario, I've developed a customized Windows based version of the popular Pi-Hole, just without the pretty GUI. I did this using PowerShell, DNS policy, and some block lists from GitHub. This was implemented on a non-domain joined physical server using Windows Server 2019. At the time of this writing the server has over 550K Query Resolution Policies to deny query attempts to domains listed within those QRPs.

Note: An important side note for those that have a similar configuration to mine – there is a hard limit of how many policies you can ‘Get’, using Get-DNSServerQueryResolutionPolicy. That limit is 500K, however you can have more than 500K policies, and they will still work, but once you hit that threshold, you’ll need some custom code (fear not, I've already written it and can share to those that are interested) to get past that limit for collecting information about those policies (though Get-DNSServerQueryResolutionPolicy still works fine if you’re querying for a specific policy). A topic that I may or may not discuss in the future.

In my scenario though, I have one domain per QRP (you aren’t limited to that, that’s just what I did), and those domains include ones that host ads, collect telemetry / tracking data, malware, and other unwanted domains. Also, for those wondering, yes, in the build process, I did enable the DNS Analytical log - I'd have a hard time writing the rest of this blog if I didn't ![]() . As I've alluded to previously, the use of DNS 'black holes' or 'sink holes' is a pretty common use case for DNS policy; as far as I can tell, it's second only to support requests for Split Brain configurations.

. As I've alluded to previously, the use of DNS 'black holes' or 'sink holes' is a pretty common use case for DNS policy; as far as I can tell, it's second only to support requests for Split Brain configurations.

In a future post, if there's interest, I'll detail that build process and discuss the functions that I've written to make everything happen. For the time being, however, let's dig in to see how we can harvest some useful data from the analytic log for this particular scenario.

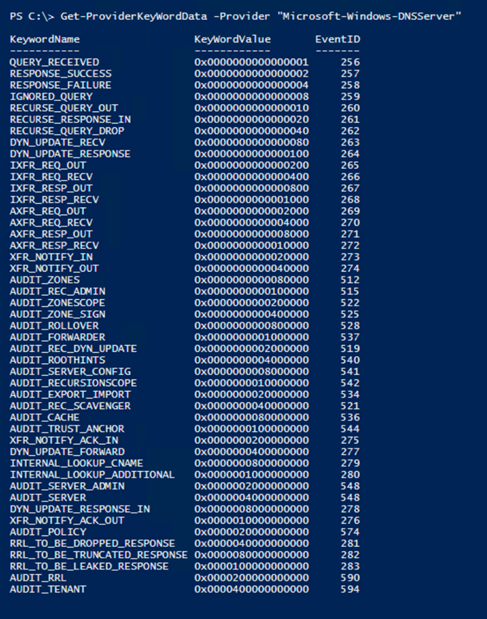

OK, so if we're going to parse the DNS Analytical Log to harvest any useful data the first thing that we'll need to know is what events exist to be able to parse, as well as the format for each of those events that we're interested in parsing. The events themselves are generated based on provider outputs. In Part 1 of the series, I shared the Get-ProviderKeyWordData function with you guys to show the event keywords that the provider would return. Since then, I've modified the function to include the event ID's and message format information, but I've only tested its functionality with the "Microsoft-Windows-DNSServer" provider - so no guarantees that it'll work any others.

The updated code for that is here, and if you've been following the series, you may notice a new parameter that I've added to the function (-ShowEventStructure), which we'll use in a minute:

The new default output now shows the Event ID of the associated Keyword Value that the provider logs to the DNS Analytical Log:

For the purposes of today's blog, and in following suit with Part 1 of the series, I'm just going to focus on the Parsing of RESPONSE_FAILURE events - output by the provider as Event ID 258. For the most part, what I show you in regard to parsing this event would apply to all of the others. I only say this because I haven't written a parser for all of the events seen in the list above (not yet anyhow). The reason that I'm interested in the 258 events (specifically those that are triggered as a result of policy) are because these are the responses that are sent back to clients that are getting queries refused as a result of something that they're trying to query (knowingly or unknowingly) that resides in the DNS block list (which exist as QRPs).

But why is this of value? How can this help my organization? Well, here's a handful of reasons for both employing a DNS black hole solution and parsing the analytical logs to see what's happening in your organization. (But please write a comment if you have other great use cases that I've missed):

- Additional means for more granular control of internet access

- You have the ability to block specific domains or hosts with DNS.

- Though block lists can be bypassed, there are other measures that can be implemented in conjunction with them to mitigate that.

- Bandwidth savings

- This could be especially useful in environments that are remote where bandwidth is extremely limited / degraded. Any organization that relies on satellite communications, maybe remote teams in Antarctica, or the shipping industry with numerous vessels on the open ocean, or military units operating in remote locations - you name it. But I wouldn't even limit it to that, why not save bandwidth even in high speed environments?

- The bottom line is that if a machine or device can’t resolve a host or domain, then it can’t connect to it, and there can easily be hundreds of thousands of attempts per day even in small / medium sized environments, depending on the block list and clients trying to get out.

- Ability to find “Indicators of Compromise” or “IOCs”

- By parsing and analyzing the ‘hit data’, and possibly comparing current findings to historic trends, among other ways, you may be able to find compromised devices in your environment.

- Ability to find cross domain violations

- Some customers have air gapped environments that aren't supposed to communicate with one another. If one machine gets moved from one of those environments (for example Domain B) to the other (Domain A) and tries to connect to something back on its original domain, if that domain (Domain B) is in the block list, then those violations can be identified, and a security investigation started.

- Those queries can be traced back to the originating client and you can do further auditing to get a good idea of who made the violation (If it, in fact, was a violation).

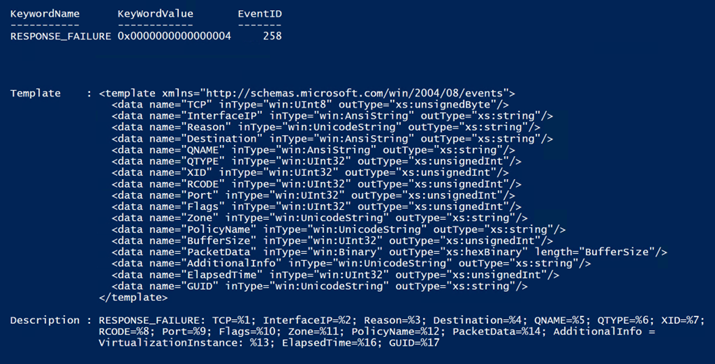

Now that we see a bit of the value proposition, we need to dig in more to figure out how to parse this data. First, I'll figure out the structure of the event that I'm interested in. To see what that looks like, we have some options, two of those options are to trigger the event in the live log and take a look at the format or use the Get-ProviderKeyWordData function with the -ShowEventStructure parameter; we're going to do both for comparison. The use of the -ShowEventStructure parameter will show the XML Elements and child elements in the template property, as well as the structure of the message in the Description property. It dumps this info for ALL events for the defined provider, so your screen may get busy if you run the following command:

Get-ProviderKeyWordData -Provider "Microsoft-Windows-DNSServer" -ShowEventStructure.

Note: You can't see the command that I ran because I just snipped the data that I was interested in from a much larger list. Again, I'm just showing the part that I'm interested in.

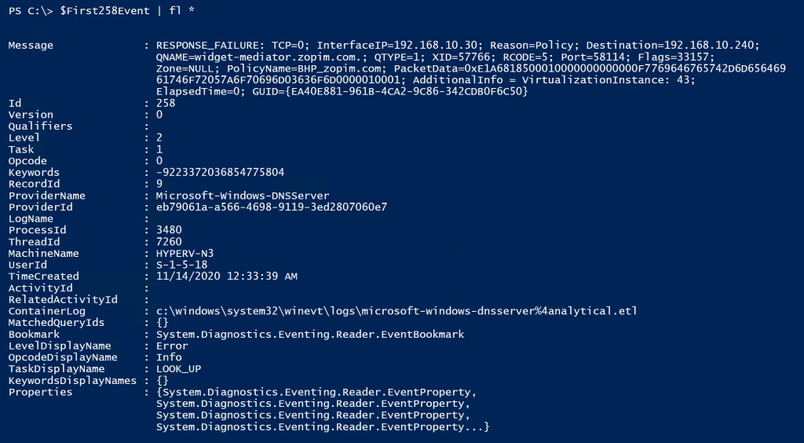

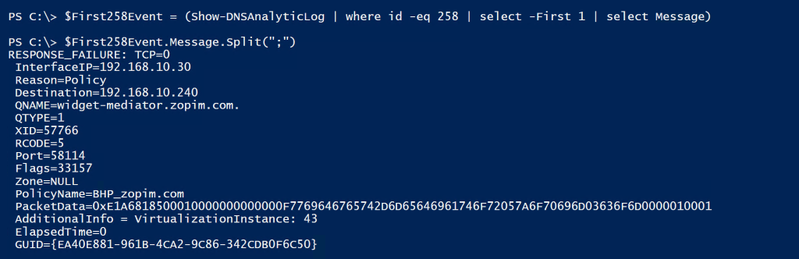

So now let's compare this to an actual event and see what we can do to pick the event apart for harvesting purposes. So, I ran the Show-DNSAnalyticLog function (more on this function can be found here), looking only for 258 events, selecting the first one found, showing all properties in a list format:

The Result:

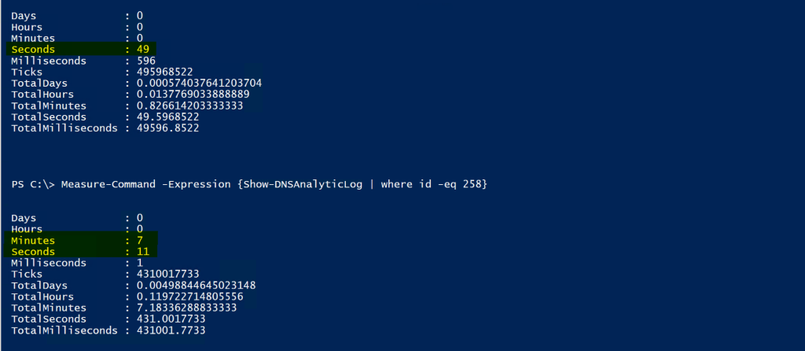

Note: The way I collected the '258 Event' in the screenshot above is definitely not the best way to go about this, I just happened to have recently cleared the log so there wasn't much in it; That and I'm just looking for the first one, so it returned data pretty quickly. If you have millions of events however, and maybe the first event is towards the end of the log, you definitely don't want to send them all through the pipeline for data collection. This was purely for demonstration purposes…but since I'm already going down the rabbit hole, I might as well give you some metrics to look at.

To give you an example of the speed difference, look at the difference between using a hash table (I’m hiding the hash table version of the collection method in the screenshot below, because I'll be discussing it more, in greater depth, in just a bit ![]() . ) to find the '258 events' vs collecting all events and sending them through the pipeline. Keep in mind that a full log could take SIGNIFICANTLY longer, and everyone's mileage may vary depending upon resources:

. ) to find the '258 events' vs collecting all events and sending them through the pipeline. Keep in mind that a full log could take SIGNIFICANTLY longer, and everyone's mileage may vary depending upon resources:

Ok, I'm back out of the rabbit hole, and back to the primary discussion. So, yes…I'm mainly interested in the Message property. I'll split the property on the semi colon since it splits up the child elements of the message property nicely, and see if I can make it more closely resemble the XML template that I showed you guys earlier:

Ok, that’s a lot closer to what we see in the XML template. When parsing and collecting data, now you'd just need to decide what data is of interest to you, and why. For this particular scenario, I decided that I wanted to collect the following data:

- The Event ID, which is actually a property of its own.

- I'd want this because I'd likely parse multiple events in a single parsing cycle, and not just 258 Events - but for this scenario, I'm just parsing 258 Events.

- The Query Source (seen above as the Destination), so that I know where the query originated from.

- Though the element name is 'Destination', that is where the server is sending the failure response, which makes it the 'Query Source'.

- The Query (seen above as the QNAME), so that I know what FQDN was being queried.

- The Query Type (seen above as QTYPE), so that I know if it was queried via IPv4 or IPV6.

- The Policy (seen above as PolicyName), so that I know which policy blocked the query.

- The TimeStamp, which is actually a property of its own, but useful so that I know when the query response took place.

- The XID (also known as the 'Transaction ID'), so that I can look at client / server transactions.

- The GUID, so that I can follow complete conversations, since that's not possible with just the XID.

OK, log file defined, event picked, properties of the event decided upon, and even child elements decided upon, so how do we go about collecting all of this? Well, the first thing that we need to do is collect the data from the DNS Analytical log so that we can parse it. The most efficient way that I know of to accomplish this is by using the Get-WinEvent cmdlet with the -FilterHashTable parameter.

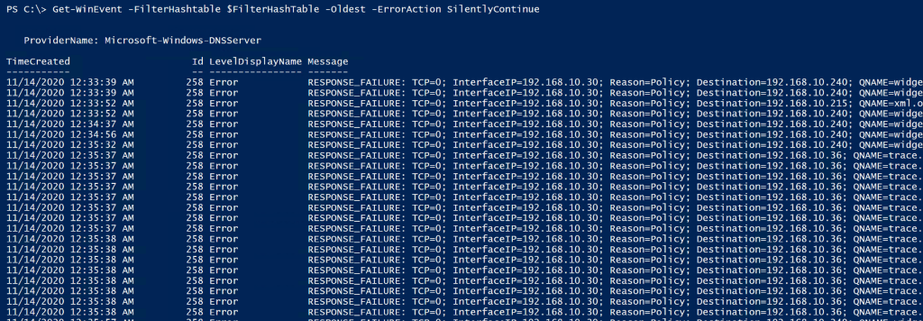

Let's do it! Below is a code sample to demonstrate how I built the hash table filter, and its functionality with Get-WinEvent:

Below shows that the filter works, since it is only returning 258 events, and just as an FYI, for this demonstration, I am logging all events from the provider to the Analytical Log. I haven't limited the output of the provider, as I demonstrated how to do in Part 1 of the series.

Awesome, we've just confirmed that the filter finds the data that we're interested in, so we can now work on picking it apart to generate more useful statistics. Ok, so how do we do that? There's a lot of different ways. What I decided to do was create a custom PSObject to build a customized table and then I just used string manipulation to pick out just the pieces that I was interested in, to store into the PSObject. I chose string manipulation because it's fast and it's easier to understand for most folks reading this, over regular expressions, for example (where I have to re-learn them every time I use them ![]() ). Here's a snippet of code with some comments to help anyone that needs it.

). Here's a snippet of code with some comments to help anyone that needs it.

Note: For the code below to work, the above code must be run in order to populate the $FilterHashTable variable. Also, of note the DNS Provider was modified to add additional info in Server 2019, so there is additional data in the Message Property. In the code block below, you'll see that I have the following, because I'm using Server 2019:

GUID=$Event.Message.Split(";")[15].trim(" ").substring(5)

If you have Server 2016, you'll need to, change that to this:

GUID=$Event.Message.Split(";")[14].trim(" ").substring(5)

This is the case because the "ElapsedTime" field is new to 2019 and shimmed between "Additional Info" and "GUID", as seen in the output of the 258 Event Structure above.

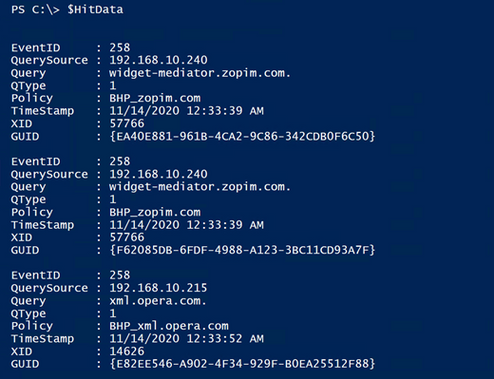

Now, we run the code above. So, what does it look like, and did we meet the goal of parsing the event and collecting only the specific data of interest? Well, let's see:

Boom! We won! It's so pretty!

Ok, it's just an array of data in a custom object that contains only the data that I wanted to collect, but I think it's pretty.. I can hear you guys now though:

"OK, how is this better than just finding the 258 events, which we already know how to do? It looks like we're just taking the same info that's in the log and just moving it around a bit on the screen."

That's a great question!

Well, now that we have the data in a separate variable, we can do a lot with it. We can build reports, for example. The report could contain the following and more:

- Total 258 Events / Total blocked Queries

- Total 258 Events / Total blocked Queries, that were a result of a policy.

- Unique blocked queries (based on the Transaction IDs).

- A list of unique domains that the clients are trying to get to, that they aren't allowed to get to.

- The number of unique domains in that list.

- A list of which clients are trying to get to those domains.

- Though if you have a tiered DNS forwarding hierarchy in place this may take a little extra work, depending upon where the QRPs have been deployed.

- Percentage of total queries being blocked during the timeframe of events that were in the log, at the time of the parse operation.

- Percentage of domains being queried and blocked, out of the total list of QRPs / domains that are in the block list.

- Etc.

The next thing that I want to do since I've successfully collected the parsed data, is export all of what I’ve deemed useful, so that maybe it can be ingested into SQL for tracking historical data. In addition to that I want to further refine this data to build a more concise report that could potentially be e-mailed or even written to its own event log… But I don't want to make this post any longer than it already is, so I'll continue this in the next post... I know, I know, such an abrupt ending – I'm practicing the art of writing cliffhangers. ![]()

Oh yeah, I almost forgot to mention that all of my code comes with the following disclaimer:

The code snippets above are considered sample scripts. Sample scripts are not supported under any Microsoft standard support program or service. The sample scripts are provided AS IS without warranty of any kind. Microsoft further disclaims all implied warranties including, without limitation, any implied warranties of merchantability or of fitness for a particular purpose. The entire risk arising out of the use or performance of the sample scripts and documentation remains with you. In no event shall Microsoft, its authors, or anyone else involved in the creation, production, or delivery of the scripts be liable for any damages whatsoever (including, without limitation, damages for loss of business profits, business interruption, loss of business information, or other pecuniary loss) arising out of the use of or inability to use the sample scripts or documentation, even if Microsoft has been advised of the possibility of such damages.

Until next time!