This post has been republished via RSS; it originally appeared at: Core Infrastructure and Security Blog articles.

Today, as we develop and run applications in AKS, we do not want credentials such as database connection strings, keys, secrets, and certificates exposed to the outside world, where an attacker could use them for malicious purposes. Our applications should be designed to protect customer data. The AKS documentation describes security best practices in detail.

In this article we will show how to implement pod security by deploying Pod Managed Identity and Secrets Store CSI driver resources on Kubernetes. Many articles and blogs already discuss this topic in detail; here we focus on deploying the resources using Terraform. You will find the source code here, and the Azure pipeline that deploys it is here.

Prerequisite resources:

The following resources should exist before running the Azure pipeline.

- Server Service Principal ID and Secret: Terraform uses these to access Azure and create resources. They are also used to integrate AKS with AAD.

- Client Service Principal ID and Secret: used to integrate AKS with AAD.

- AAD Cluster Admin Group: the AAD group for cluster admins.

- Azure Key Vault: a Key Vault (KV) should exist for the CSI driver to connect to. You can also modify the code to create the KV during the Terraform run.
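If you prefer to create the Key Vault during the Terraform run, a minimal sketch could look like the following. It assumes the `azurerm_resource_group.rg` resource that the other scripts reference; `key_vault_name` is a hypothetical variable that is not part of the original repo, and access policies for the pod identity would still need to be added.

```hcl
# Hypothetical variable, not part of the original repo.
variable "key_vault_name" {
  description = "Globally unique Key Vault name"
}

# Exposes the tenant of the identity that runs Terraform.
data "azurerm_client_config" "current" {}

# Minimal Key Vault in the same resource group as the cluster.
resource "azurerm_key_vault" "kv" {
  name                = var.key_vault_name
  location            = azurerm_resource_group.rg.location
  resource_group_name = azurerm_resource_group.rg.name
  tenant_id           = data.azurerm_client_config.current.tenant_id
  sku_name            = "standard"
}
```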

AKS Terraform Scripts Overview

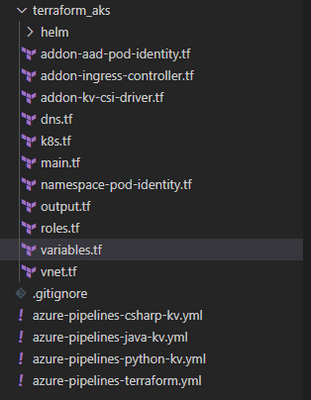

The repo has the following structure. The Terraform scripts are located under the “terraform_aks” folder.

Each file under the terraform_aks folder defines a specific resource deployment.

- Variables.tf: Terraform reads custom settings from this file at run time. Each variable declared here either has a default value or must be passed in as an environment variable (named `TF_VAR_<variable name>`) during execution. For example, the cluster network specification:

```hcl
variable "virtual_network_name" {
  description = "Virtual network name"
  default     = "aksVirtualNetwork"
}

variable "virtual_network_address_prefix" {
  description = "VNET address prefix"
  default     = "15.0.0.0/8"
}

variable "aks_subnet_name" {
  description = "Subnet Name."
  default     = "kubesubnet"
}

variable "aks_subnet_address_prefix" {
  description = "Subnet address prefix."
  default     = "15.0.0.0/16"
}
```

- main.tf: defines the Terraform providers used during execution.

```hcl
provider "azurerm" {
  version = "~> 2.53.0"
  features {}
}

terraform {
  required_version = ">= 0.14.9"

  # Backend variables are initialized by Azure DevOps
  backend "azurerm" {}
}

data "azurerm_subscription" "current" {}
```

- vnet.tf: creates the network resources used by AKS, based on the variable.tf input.

```hcl
resource "azurerm_virtual_network" "demo" {
  name                = var.virtual_network_name
  location            = azurerm_resource_group.rg.location
  resource_group_name = azurerm_resource_group.rg.name
  address_space       = [var.virtual_network_address_prefix]

  subnet {
    name           = var.aks_subnet_name
    address_prefix = var.aks_subnet_address_prefix
  }

  tags = var.tags
}

data "azurerm_subnet" "kubesubnet" {
  name                 = var.aks_subnet_name
  virtual_network_name = azurerm_virtual_network.demo.name
  resource_group_name  = var.resource_group_name
  depends_on           = [azurerm_virtual_network.demo]
}
```

- K8s.tf: the main script that creates AKS. The resource is configured as follows:

```hcl
resource "azurerm_kubernetes_cluster" "k8s" {
  name                = var.aks_name
  location            = azurerm_resource_group.rg.location
  dns_prefix          = var.aks_dns_prefix
  resource_group_name = azurerm_resource_group.rg.name

  linux_profile {
    admin_username = var.vm_user_name

    ssh_key {
      key_data = var.public_ssh_key_path
    }
  }

  addon_profile {
    http_application_routing {
      enabled = true
    }
  }

  default_node_pool {
    name            = "agentpool"
    node_count      = var.aks_agent_count
    vm_size         = var.aks_agent_vm_size
    os_disk_size_gb = var.aks_agent_os_disk_size
    vnet_subnet_id  = data.azurerm_subnet.kubesubnet.id
  }

  # block will be applied only if `enable` is true in var.azure_ad object
  role_based_access_control {
    azure_active_directory {
      managed                = true
      admin_group_object_ids = var.azure_ad_admin_groups
    }
    enabled = true
  }

  identity {
    type = "SystemAssigned"
  }

  network_profile {
    network_plugin     = "azure"
    dns_service_ip     = var.aks_dns_service_ip
    docker_bridge_cidr = var.aks_docker_bridge_cidr
    service_cidr       = var.aks_service_cidr
  }

  depends_on = [
    azurerm_virtual_network.demo
  ]

  tags = var.tags
}
```

- To enable AAD integration, we used the following configuration for the role_based_access_control section:

```hcl
# block will be applied only if `enable` is true in var.azure_ad object
role_based_access_control {
  azure_active_directory {
    managed                = true
    admin_group_object_ids = var.azure_ad_admin_groups
  }
  enabled = true
}

identity {
  type = "SystemAssigned"
}
```

- After creating the cluster, we need to add a cluster role binding that assigns the AAD admin group as cluster admins:

```hcl
resource "kubernetes_cluster_role_binding" "aad_integration" {
  metadata {
    name = "${var.aks_name}admins"
  }

  role_ref {
    api_group = "rbac.authorization.k8s.io"
    kind      = "ClusterRole"
    name      = "cluster-admin"
  }

  subject {
    kind      = "Group"
    name      = var.aks-aad-clusteradmins
    api_group = "rbac.authorization.k8s.io"
  }

  depends_on = [
    azurerm_kubernetes_cluster.k8s
  ]
}
```

- roles.tf: this script assigns roles to the cluster and agent pool, such as the ACR image puller role:

```hcl
resource "azurerm_role_assignment" "acr_image_puller" {
  scope                = azurerm_container_registry.acr.id
  role_definition_name = "AcrPull"
  principal_id         = azurerm_kubernetes_cluster.k8s.kubelet_identity.0.object_id
}
```

- To enable Pod Identity, the agent pool needs two roles scoped to the node resource group. The first is Managed Identity Operator:

```hcl
resource "azurerm_role_assignment" "agentpool_msi" {
  scope                            = data.azurerm_resource_group.node_rg.id
  role_definition_name             = "Managed Identity Operator"
  principal_id                     = data.azurerm_user_assigned_identity.agentpool.principal_id
  skip_service_principal_aad_check = true
}
```

The second is Virtual Machine Contributor:

```hcl
resource "azurerm_role_assignment" "agentpool_vm" {
  scope                            = data.azurerm_resource_group.node_rg.id
  role_definition_name             = "Virtual Machine Contributor"
  principal_id                     = data.azurerm_user_assigned_identity.agentpool.principal_id
  skip_service_principal_aad_check = true
}
```
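The two role assignments above reference data sources that are not shown in this article. A minimal sketch of how they might be declared follows. It assumes the kubelet identity uses the AKS naming convention `<cluster name>-agentpool` and lives in the node resource group; the repo may define them differently.

```hcl
# Node resource group that AKS creates for the cluster (MC_* group).
data "azurerm_resource_group" "node_rg" {
  name = azurerm_kubernetes_cluster.k8s.node_resource_group
}

# Kubelet (agent pool) managed identity.
# Assumption: it follows the "<cluster name>-agentpool" naming convention.
data "azurerm_user_assigned_identity" "agentpool" {
  name                = "${azurerm_kubernetes_cluster.k8s.name}-agentpool"
  resource_group_name = azurerm_kubernetes_cluster.k8s.node_resource_group
}
```

Because both data sources read attributes of `azurerm_kubernetes_cluster.k8s`, Terraform resolves them only after the cluster exists, so no explicit `depends_on` is needed in this sketch.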

- Addon-aad-pod-identity.tf: this script deploys the AAD Pod Identity Helm chart.

- Addon-kv-csi-driver.tf: this script deploys the Azure CSI Secrets Store provider Helm chart.

- Namespace-pod-identity.tf: this script deploys the managed identity for a specific namespace. It also deploys the CSI store provider for that namespace.
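The two add-on scripts are not reproduced in this article, so the following is only a sketch of what Helm-based deployments of this kind could look like. The chart repository URLs, release names, and the provider wiring through `kube_admin_config` are assumptions rather than the repo's actual content; the `kube-system` namespace matches where the MIC and NMI pods are checked later in this article.

```hcl
# Assumption: the Helm provider authenticates with the cluster admin credentials.
provider "helm" {
  kubernetes {
    host                   = azurerm_kubernetes_cluster.k8s.kube_admin_config.0.host
    client_certificate     = base64decode(azurerm_kubernetes_cluster.k8s.kube_admin_config.0.client_certificate)
    client_key             = base64decode(azurerm_kubernetes_cluster.k8s.kube_admin_config.0.client_key)
    cluster_ca_certificate = base64decode(azurerm_kubernetes_cluster.k8s.kube_admin_config.0.cluster_ca_certificate)
  }
}

# AAD Pod Identity (deploys the MIC and NMI pods).
# Assumption: chart repository URL and release name.
resource "helm_release" "aad_pod_identity" {
  name       = "aad-pod-identity"
  repository = "https://raw.githubusercontent.com/Azure/aad-pod-identity/master/charts"
  chart      = "aad-pod-identity"
  namespace  = "kube-system"

  depends_on = [azurerm_kubernetes_cluster.k8s]
}

# Secrets Store CSI driver with the Azure Key Vault provider.
# Assumption: chart repository URL and release name.
resource "helm_release" "kv_csi_provider" {
  name       = "csi-secrets-store-provider-azure"
  repository = "https://azure.github.io/secrets-store-csi-driver-provider-azure/charts"
  chart      = "csi-secrets-store-provider-azure"
  namespace  = "kube-system"

  depends_on = [azurerm_kubernetes_cluster.k8s]
}
```

Pinning a chart `version` on each release would make pipeline runs reproducible; it is left out here because the versions used by the repo are not stated.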

Deploying AKS cluster using Azure DevOps pipeline

We can deploy the cluster using an Azure DevOps pipeline. The repo contains a file called “azure-pipelines-terraform.yml”.

The deployment uses stages and jobs to deploy the cluster, as follows.

- Task Set Terraform backend: provisions the backend storage account and container used to save the Terraform state.

```yaml
- task: AzureCLI@1
  displayName: Set Terraform backend
  condition: and(succeeded(), ${{ parameters.provisionStorage }})
  inputs:
    azureSubscription: ${{ parameters.TerraformBackendServiceConnection }}
    scriptLocation: inlineScript
    inlineScript: |
      set -eu  # fail on error
      RG='${{ parameters.TerraformBackendResourceGroup }}'
      export AZURE_STORAGE_ACCOUNT='${{ parameters.TerraformBackendStorageAccount }}'
      export AZURE_STORAGE_KEY="$(az storage account keys list -g "$RG" -n "$AZURE_STORAGE_ACCOUNT" --query '[0].value' -o tsv)"
      if test -z "$AZURE_STORAGE_KEY"; then
        az configure --defaults group="$RG" location='${{ parameters.TerraformBackendLocation }}'
        az group create -n "$RG" -o none
        az storage account create -n "$AZURE_STORAGE_ACCOUNT" -o none
        export AZURE_STORAGE_KEY="$(az storage account keys list -g "$RG" -n "$AZURE_STORAGE_ACCOUNT" --query '[0].value' -o tsv)"
      fi

      container='${{ parameters.TerraformBackendStorageContainer }}'
      if ! az storage container show -n "$container" -o none 2>/dev/null; then
        az storage container create -n "$container" -o none
      fi

      blob='${{ parameters.environment }}.tfstate'
      if [[ $(az storage blob exists -c "$container" -n "$blob" --query exists) = "true" ]]; then
        if [[ $(az storage blob show -c "$container" -n "$blob" --query "properties.lease.status=='locked'") = "true" ]]; then
          echo "State is leased"
          lock_jwt=$(az storage blob show -c "$container" -n "$blob" --query metadata.terraformlockid -o tsv)
          if [ "$lock_jwt" != "" ]; then
            lock_json=$(base64 -d <<< "$lock_jwt")
            echo "State is locked"
            jq . <<< "$lock_json"
          fi
          if [ "${TERRAFORM_BREAK_LEASE:-}" != "" ]; then
            az storage blob lease break -c "$container" -b "$blob"
          else
            echo "If you're really sure you want to break the lease, rerun the pipeline with variable TERRAFORM_BREAK_LEASE set to 1."
            exit 1
          fi
        fi
      fi
    addSpnToEnvironment: true
```

- Task Install Terraform CLI: installs the Terraform CLI version given by the pipeline parameter.

- Task Terraform Credentials: reads the service principal (SP) account information that will be used to execute the pipeline.

- Task Terraform init: initializes Terraform against the backend storage account.

```yaml
- task: AzureCLI@1
  displayName: Terraform init
  inputs:
    azureSubscription: ${{ parameters.TerraformBackendServiceConnection }}
    scriptLocation: inlineScript
    inlineScript: |
      set -eux  # fail on error
      # tenantId, servicePrincipalId and servicePrincipalKey are exported by addSpnToEnvironment
      subscriptionId=$(az account show --query id -o tsv)
      terraform init \
        -backend-config=storage_account_name=${{ parameters.TerraformBackendStorageAccount }} \
        -backend-config=container_name=${{ parameters.TerraformBackendStorageContainer }} \
        -backend-config=key=${{ parameters.environment }}.tfstate \
        -backend-config=resource_group_name=${{ parameters.TerraformBackendResourceGroup }} \
        -backend-config=subscription_id=$subscriptionId \
        -backend-config=tenant_id=$tenantId \
        -backend-config=client_id=$servicePrincipalId \
        -backend-config=client_secret="$servicePrincipalKey"
    workingDirectory: ${{ parameters.TerraformDirectory }}
    addSpnToEnvironment: true
```

- Task Terraform apply: runs terraform apply with the auto-approve flag, so Terraform applies the changes without prompting for confirmation.

P.S. We could add a task for terraform plan and then ask for approval before applying.

Setting up pipeline in Azure DevOps

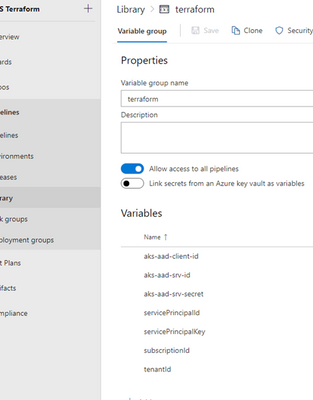

- Under Pipeline Library, create a new variable group called terraform and add the following variables

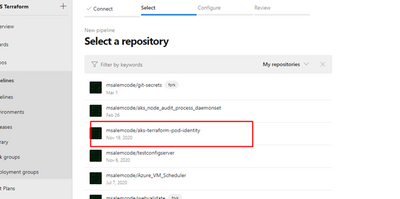

- Add a new pipeline, then select GitHub

- After logging in, select the terraform repo

- Select Existing Azure Pipeline YAML, then select “azure-pipelines-terraform.yml”

- Once we have saved the pipeline, created the prerequisite resources, and updated the variable.tf file, we are ready to run the pipeline, and we should get something like this

Check Our Work

Cluster information

Under the cluster configuration we should see that AAD is enabled

Azure POD Identity / CSI Provider Pods

From the command line we can check the kube-system namespace for the MIC and NMI pods

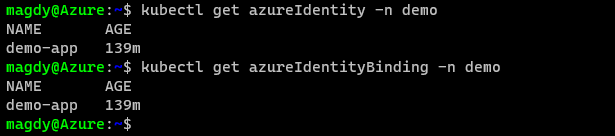

Namespace Azure Identity and Azure Identity Binding

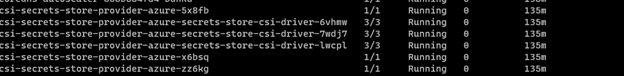

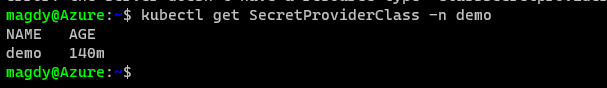

Check for CSI secret store provider

Summary

In this article we demonstrated how to deploy AKS integrated with AAD, and how to deploy Pod Identity and the CSI provider using Terraform and Helm charts. In the next article we will demonstrate how to build an application that uses Pod Identity to access Azure resources.