This post has been republished via RSS; it originally appeared at: ITOps Talk Blog articles.

Hello folks,

Since the beginning of the pandemic, we’ve all been mostly stuck in our home offices. And since I’ve been concentrating on the hybrid services that Azure can provide, I set up a simulated on-prem environment at home with leftover desktop, laptops, and Raspberry Pi.

It’s been really cool and useful. But now that vaccination is progressing and the government is starting to talk about re-opening the economy and the border, I’m thinking I might get back on the road sooner rather than later. I’ll want to access my “on-prem lab” environment from anywhere, but my ISP cycles my IP address regularly.

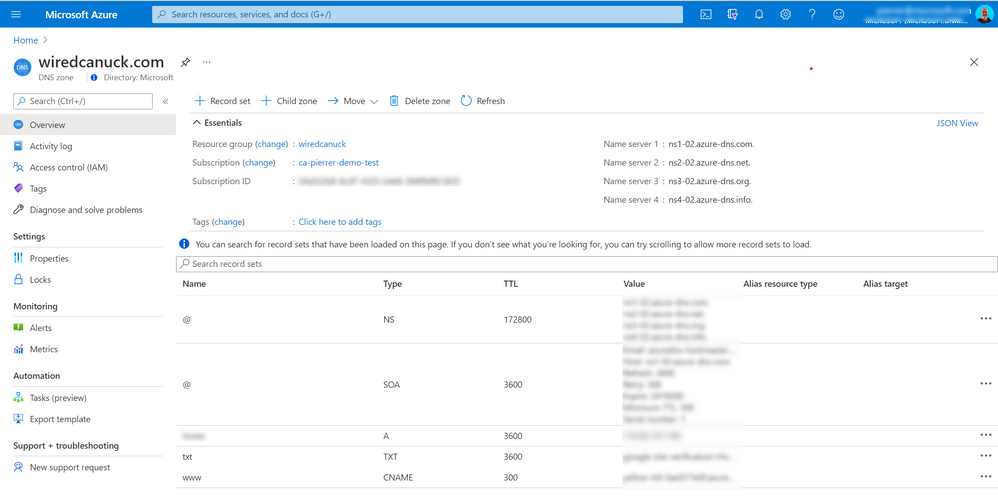

I could always use a service like noip.com or dyndns, but I already have a zone managed in Azure DNS and I thought it would be fun to figure it out. Azure DNS doesn't currently support zone transfers, so I could not set up a zone transfer from my own DNS server. DNS zones can be imported into Azure DNS by using the Azure CLI. DNS records are managed via the Azure DNS management portal, REST API, SDK, PowerShell cmdlets, or the CLI tool.

Here is my V1 plan.

I already have a DNS server running on a Linux server in my on-prem environment. I will schedule a job to get the external IP of my ISP’s router and send it to my zone in Azure. Sounds easy... One problem: I don’t want to cache credentials for my job to connect to my zone and update the record.

Plan v1.1

Just like v1, I will schedule a job on my Linux server to get the external IP of my ISP’s router. But this time I will code it to send the IP to an Azure Function via a webhook and let the function update my record in the DNS zone in Azure.

That’s better...

I can secure the webhook with a function key and assign a managed identity to the function, so it only has rights to update the zone records, not to change any of the other configuration or security settings.

That should work!

Let’s go.

Linux Server setup

I installed PowerShell Core on the Linux box since I’m more familiar with PowerShell than I am with bash. But I could have used either.

I created a simple script. Some parts of the script, like the Azure Function key and the function webhook URL, will be updated after we create the function.
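Here is a minimal sketch of what such a script could look like. It is not the original script. The public IP lookup service (api.ipify.org), the `{"ip":"..."}` body shape, the helper names, and the two environment variable names are all assumptions made for illustration. The function key is passed in the `x-functions-key` header, which Azure Functions accepts as an alternative to the `code` query string parameter.

```powershell
# Update-HomeIp.ps1: illustrative sketch, all names below are hypothetical.

function Test-IPv4Address {
    param([string]$Address)
    # Accept only dotted-quad IPv4 so garbage never reaches the function.
    $parsed = $null
    return ($Address -match '^\d{1,3}(\.\d{1,3}){3}$') -and
           [System.Net.IPAddress]::TryParse($Address, [ref]$parsed)
}

function New-UpdateBody {
    param([string]$IpAddress)
    # Assumed payload shape; the Azure Function must read the same "ip" key.
    return (@{ ip = $IpAddress } | ConvertTo-Json -Compress)
}

function Send-IpUpdate {
    param([string]$FunctionUrl, [string]$FunctionKey)
    # Ask an external service which address the ISP router presents (assumed service).
    $ip = (Invoke-RestMethod -Uri 'https://api.ipify.org').Trim()
    if (-not (Test-IPv4Address $ip)) { throw "Unexpected response: $ip" }

    $request = @{
        Uri         = $FunctionUrl
        Method      = 'Post'
        ContentType = 'application/json'
        Headers     = @{ 'x-functions-key' = $FunctionKey }
        Body        = (New-UpdateBody $ip)
    }
    Invoke-RestMethod @request
}

# Only call out once both values have been filled in (after the function exists).
if ($env:DNS_FUNCTION_URL -and $env:DNS_FUNCTION_KEY) {
    Send-IpUpdate -FunctionUrl $env:DNS_FUNCTION_URL -FunctionKey $env:DNS_FUNCTION_KEY
}
```

Validating the address before sending keeps an error page or empty response from the lookup service from ever being written to the DNS record.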

Azure Function Setup

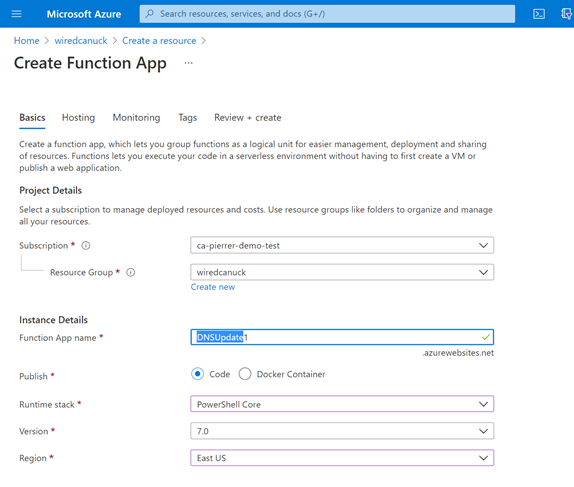

In the Azure portal I navigated to the Resource Group where my DNS Zone is located and created an Azure Function.

I made sure to create the function with the PowerShell Core stack and a Consumption (Serverless) plan type.

Once the function was created, I navigated to the Function App and clicked on the Functions menu item on the left of the screen.

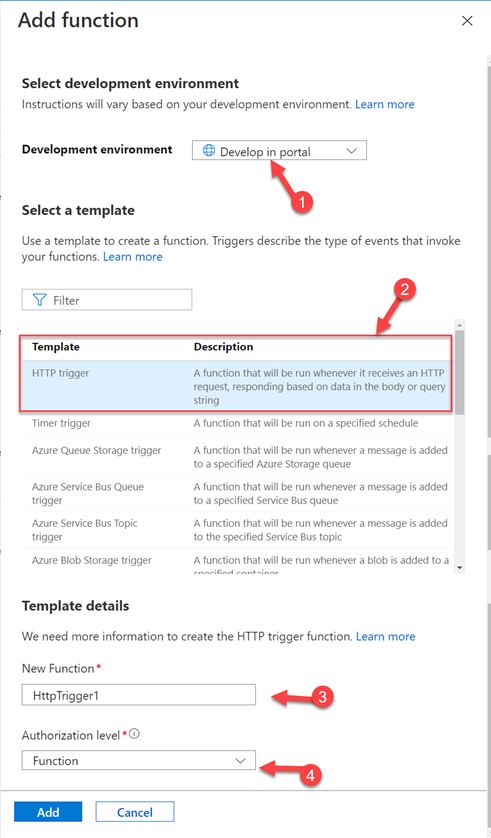

On the next screen I clicked “+ Add” and filled in the details: using the portal for development (1), “HTTP Trigger” from the template list (2), a function name (3), and “Function” as the authorization level.

Going back to the DNSUpdate1 function screen, I navigated to the “Identity” section and turned on the system-assigned identity, then saved the changes and confirmed on the next screen. That way the function has an identity we can use to restrict access.

When the changes are saved, you can click the Azure role assignments button to assign the proper rights. In my case I assigned only the “DNS Zone Contributor” role, scoped to the specific resource group in my subscription.
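The same role assignment can also be scripted instead of clicked. The following is only a sketch of that alternative, assuming the Az.Websites and Az.Resources modules are installed and `Connect-AzAccount` has already been run with enough rights to assign roles. The function name `Grant-DnsZoneContributor` and its parameters are hypothetical.

```powershell
# Sketch only: a scripted equivalent of the portal role assignment.
function Grant-DnsZoneContributor {
    param(
        [Parameter(Mandatory)][string]$ResourceGroupName,  # group holding the DNS zone
        [Parameter(Mandatory)][string]$FunctionAppName     # app with the system-assigned identity
    )
    # The system-assigned identity is exposed as a principal ID on the app.
    $app = Get-AzWebApp -ResourceGroupName $ResourceGroupName -Name $FunctionAppName
    $principalId = $app.Identity.PrincipalId
    if (-not $principalId) { throw 'System-assigned identity is not enabled on this app.' }

    # Scope the built-in role to the resource group, not the whole subscription.
    $assignment = @{
        ObjectId           = $principalId
        RoleDefinitionName = 'DNS Zone Contributor'
        ResourceGroupName  = $ResourceGroupName
    }
    New-AzRoleAssignment @assignment
}
```

It would be called with the resource group that holds the zone and the Function App name, for example `Grant-DnsZoneContributor -ResourceGroupName '<resource group>' -FunctionAppName 'dnsupdate1'`.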

Now that my function is created and has the proper rights, we can open the “Code + Test” section and insert our PowerShell code.

The code captures the HTTP request and its body, then processes the information.

Once the code is saved, I can click “Get function URL” to get the webhook address for the on-prem portion of this solution. The URL will include the default function key, as highlighted below:
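As a rough idea of what that function body could look like, here is a sketch of a `run.ps1` for an HTTP trigger. It is not the original code. It assumes the caller posts JSON with an `ip` key, that the zone, record, and resource group names are placeholders you fill in, that the Az.Dns module is available to the Function App (typically through managed dependencies in `requirements.psd1`), and that the session is signed in with the managed identity (typically done in `profile.ps1` with `Connect-AzAccount -Identity`).

```powershell
using namespace System.Net

# Input bindings are passed in through the param block.
param($Request, $TriggerMetadata)

# Placeholders (assumptions): replace with your own zone details.
$zoneName      = '<zone name>'
$recordName    = '<record name>'
$resourceGroup = '<resource group>'

# With a JSON content type, the runtime deserializes the body for us.
$newIp  = "$($Request.Body.ip)"
$parsed = $null
if (-not [IPAddress]::TryParse($newIp, [ref]$parsed)) {
    Push-OutputBinding -Name Response -Value ([HttpResponseContext]@{
        StatusCode = [HttpStatusCode]::BadRequest
        Body       = 'Body must contain a valid "ip" value.'
    })
    return
}

# Read the existing A record and only write when the address changed.
$recordSet = Get-AzDnsRecordSet -Name $recordName -RecordType A `
    -ZoneName $zoneName -ResourceGroupName $resourceGroup
if ($recordSet.Records[0].Ipv4Address -ne $newIp) {
    $recordSet.Records[0].Ipv4Address = $newIp
    Set-AzDnsRecordSet -RecordSet $recordSet | Out-Null
    $message = "Record updated to $newIp"
}
else {
    $message = 'No change needed'
}

Push-OutputBinding -Name Response -Value ([HttpResponseContext]@{
    StatusCode = [HttpStatusCode]::OK
    Body       = $message
})
```

Comparing against the current record first means most invocations are read-only, and the invocation log shows whether each run actually changed anything.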

https://dnsupdate1.azurewebsites.net/api/HttpTrigger1?code=FPX43K329H7PEeJPl68ZC8LZfYCwkDyKJElShadzPWSxtc3LQASlbA==

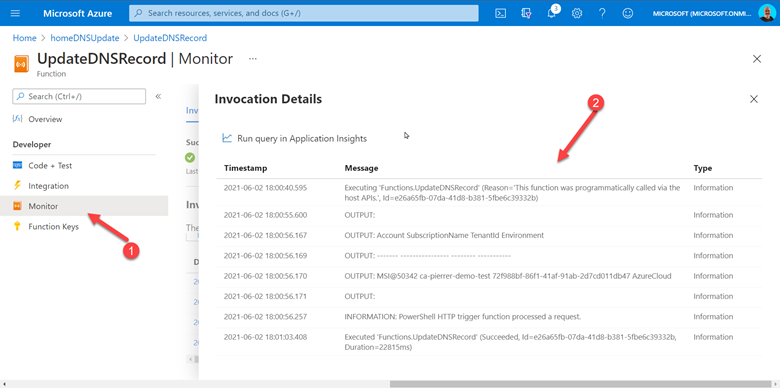

And now, every 12 hours (as per my cron job), the IP address of my home network will be checked and updated if need be. You can monitor the results in the Monitor section and see all the invocations of the function.

Conclusion

This just proves that even if some functionality doesn’t exist yet, you can leverage multiple Azure services and configurations to address on-prem issues efficiently and securely.

Are you running into challenges that could be addressed with cloud services and a bit of imagination? Let me know.

Cheers!

Pierre