This post has been republished via RSS; it originally appeared at: Microsoft Tech Community - Latest Blogs.

Auditing is a powerful security feature that many customers rely on. To meet security and compliance requirements, customers often must retain audit logs for long periods. With long retention periods, the audit logs for Azure Database can grow very large and the storage can become costly. To save cost while still keeping your audit logs for the full retention period, you can compress the log files with the Copy Data tool in Azure Data Factory. You can configure pipelines to compress and decompress audit log files using common compression formats such as GZip and ZipDeflate. In this blog, I will show the detailed steps to create the pipelines.

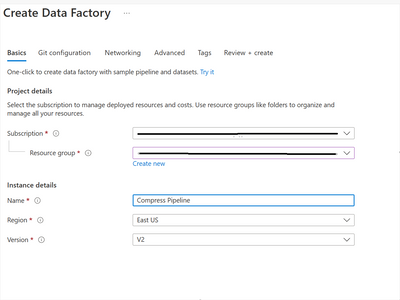

Go to the Azure Portal and create a new Data Factory resource:

From the Azure Data Factory resource you created, click on the “Launch studio” button shown below.

Data Factory offers different tools, such as:

- Ingest
- Orchestrate
- Transform data
- Configure SSIS

To learn more, refer to the Azure Data Factory documentation.

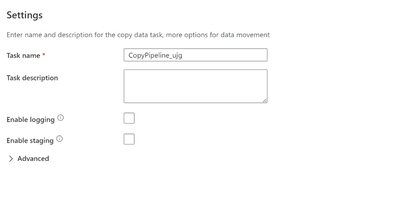

Here we will use the Copy Data tool. Choose the “Ingest” option, then select the “Built-in copy task” as shown below. You can run these pipelines on demand or schedule them as required:

Using this tool, you can copy data from your source, compress it, and write it to a target. The tool supports a range of source and target data stores:

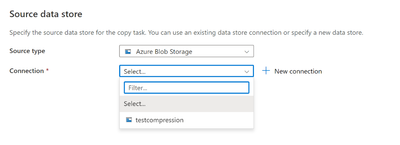

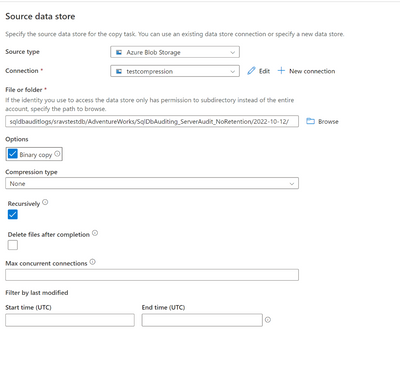

Select Azure Blob Storage as the source. You can select an existing connection or create a new one:

For a new connection, fill in the details as required:

Because we are compressing .xel files, select the Binary copy option and check that the compression type for the source is “None”, since the files are not compressed at the source.

Select the destination data store. Here we use the same Azure Blob Storage account for the compressed files, but you can choose any location to keep them.

For our example, we selected the ZipDeflate compression type.
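The source and destination choices above end up as two Binary dataset definitions behind the pipeline. The following is a rough sketch of what those definitions could look like, built as plain Python dictionaries so they can be printed as JSON. The dataset names, linked service name, container names, and folder path are all hypothetical placeholders, and the exact JSON the Copy Data tool generates for you may differ.

```python
import json


def binary_dataset(name, linked_service, container, folder, compression=None):
    """Build a Binary dataset definition as a JSON-serializable dict."""
    type_properties = {
        "location": {
            "type": "AzureBlobStorageLocation",
            "container": container,
            "folderPath": folder,
        }
    }
    # Only the sink gets a compression section; the .xel source is uncompressed.
    if compression is not None:
        type_properties["compression"] = {"type": compression, "level": "Optimal"}
    return {
        "name": name,
        "properties": {
            "type": "Binary",
            "linkedServiceName": {
                "referenceName": linked_service,
                "type": "LinkedServiceReference",
            },
            "typeProperties": type_properties,
        },
    }


# All names below are illustrative assumptions, not values from the original post.
source = binary_dataset("AuditLogsRaw", "AuditBlobStorage",
                        "sqldbauditlogs", "myserver/mydb")
sink = binary_dataset("AuditLogsZipped", "AuditBlobStorage",
                      "auditlogs-compressed", "myserver/mydb",
                      compression="ZipDeflate")

print(json.dumps(sink, indent=2))
```

Comparing the two printed definitions makes the difference visible: the source has no compression section, while the sink declares `ZipDeflate`.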

Below is the summary of the copy pipeline that will be created:

A sample deployment status is shown below. If there are any errors, you can review and correct them:

From the Monitor tab in Azure Data Factory Studio, you can check the status of the pipelines and run them on demand if required.

Similarly, you can create a pipeline to decompress the files and copy to the target:
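Before building both pipelines, it can be useful to get a rough feel for how much space deflate-based ZIP compression saves and to confirm that decompression restores the file byte for byte. Below is a minimal local sketch using Python's standard `zipfile` module. It does not call Azure Data Factory, and the sample data and file name are made up for illustration. Real .xel files are binary, so their compression ratio will vary.

```python
import io
import zipfile


def zip_deflate(name, data):
    """Compress one in-memory file into a ZIP archive using deflate."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        zf.writestr(name, data)
    return buf.getvalue()


def unzip_all(archive):
    """Return {file name: bytes} for every entry in a ZIP archive."""
    with zipfile.ZipFile(io.BytesIO(archive)) as zf:
        return {entry: zf.read(entry) for entry in zf.namelist()}


# Hypothetical, highly repetitive stand-in for an audit log file.
sample = b"audit_event;server=myserver;db=mydb;action=SELECT\n" * 20000

archive = zip_deflate("sample.xel", sample)
restored = unzip_all(archive)["sample.xel"]

print(f"original={len(sample)} bytes, zipped={len(archive)} bytes")
print("lossless round trip:", restored == sample)
```

Running the same two functions against a copy of one of your own audit files gives a more realistic estimate than the synthetic sample used here.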

Note: The pipeline does not guard against manual actions such as file deletion; cloud administrators need to monitor those. The compressed files also do not follow the retention policy configured for auditing, so administrators must manage their retention themselves.

The compressed files will be saved as shown below. To save further cost, you can move them to the archive tier and move them back to the hot tier when you need to access them. Moving to the archive tier only makes sense for servers that require retention longer than 180 days (about 6 months) and whose files you do not need to access regularly.
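The 180-day guideline can be turned into a simple check. The sketch below assumes the threshold exists because blobs are expected to stay in the archive tier for at least 180 days, so a file is only a good candidate if at least that much of its retention period remains. The function name and the example numbers are hypothetical.

```python
ARCHIVE_MIN_DAYS = 180  # threshold taken from the guideline above


def archive_is_worthwhile(retention_days, age_days,
                          min_archive_days=ARCHIVE_MIN_DAYS):
    """Return True if the file would stay archived for at least the minimum period."""
    remaining_days = retention_days - age_days
    return remaining_days >= min_archive_days


print(archive_is_worthwhile(365, 30))   # 335 days remain -> True
print(archive_is_worthwhile(200, 60))   # 140 days remain -> False
```

If you script the tier change itself, the `azure-storage-blob` Python SDK exposes `BlobClient.set_standard_blob_tier`, which could be called with `"Archive"` for each blob that passes this check. Treat that as a starting point rather than a complete solution.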

To summarize, if you have long retention periods for audit logs and a lot of data to store in blob storage, you can use ADF pipelines to compress and decompress the audit logs, which can yield space and cost savings. Once the audit log files are compressed, they are stored as block blobs, and you can move them to the archive tier to keep them for longer periods at lower cost.