This post has been republished via RSS; it originally appeared at: Microsoft Tech Community - Latest Blogs.

With special thanks to Ishna Kaul for designing the anomaly detection algorithms and sharing the research which led to this blog and notebook.

Thanks also to @AmritpalSingh for the review and for all the suggestions to improve this further.

Introduction

In this blog, we will demonstrate how you can identify anomalous Windows network logon sessions using an Isolation Forest algorithm with an Azure ML studio notebook connected to a Microsoft Sentinel workspace. Logon anomalies can indicate the presence of an external adversary in your environment or malicious activity from a rogue insider. This blog is meant as a starting point to demonstrate real-world machine learning anomaly detection use cases using the Microsoft Sentinel ecosystem. The model showcases a general framework which may need customization and tuning to adjust the sensitivity of the triggered anomalies. The model can also be applied to other types of datasets by carefully analyzing the historical data and extracting the features that are useful for modelling.

We are currently sharing this work as 2 separate notebooks, one targeted at data scientists and one at SOC analysts. The version for data scientists has sections to explore and visualize the data and anomalies, along with sections for interpreting the results. The version for SOC analysts has sections dedicated to faster investigation of the anomalies flagged by the model. It relies on interpreting the model output with the SHAP library, which may suggest the possible causes of an anomaly.

Problem Statement

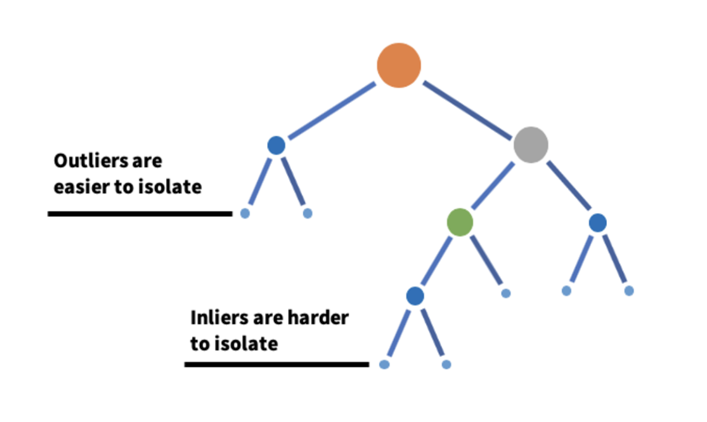

Because user logon behavior changes constantly, it is often cumbersome to find unusual logon activity based on individual logon attributes. Traditional atomic detections often create noise since they rely on static logon properties of the environment. User logon behavior is recorded in telemetry with multiple properties such as the logon method used (interactive, remote), the authentication method (NTLM, Kerberos), source IP, location, destination host and more. User logon patterns are combinations of these properties and often change depending on the environment. To find anomalies based on these complex patterns, we can leverage the Isolation Forest model, an unsupervised machine learning algorithm. Each tree in an Isolation Forest picks a feature at random and splits the data into two parts at a random threshold value between that feature's minimum and maximum. The algorithm continues splitting recursively until each data point has been isolated (or a depth limit is reached). Anomalies are then detected by how easily a data point is isolated from the rest of the data: points that are few and different need fewer splits. To score an instance, the Isolation Forest calculates its average path length (the number of splits required to isolate the sample) across all the trees and uses this to determine whether it is an anomaly (shorter average path lengths indicate anomalies).

Image Source: Detecting and Preventing abuse on LinkedIn using Isolation Forest

Gif Source: Isolation forest: the art of cutting off from the world
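The isolation idea described in the Problem Statement can be illustrated with a minimal sketch. This is not the implementation used in the notebooks: it works on a single hypothetical feature (daily failed-logon counts that we made up), skips the random feature selection and sub-sampling that a full Isolation Forest performs, and omits the path-length normalization. It only shows that an outlier tends to be isolated in fewer random splits than a typical value.

```python
import random

def isolation_path_length(point, data, rng, depth=0, max_depth=50):
    """Count the random splits needed to isolate `point` within 1-D `data`."""
    if len(data) <= 1 or depth >= max_depth:
        return depth
    lo, hi = min(data), max(data)
    if lo == hi:
        # All remaining values are identical, so no further split is possible.
        return depth
    split = rng.uniform(lo, hi)
    # Keep only the side of the split that contains the point of interest.
    if point < split:
        side = [x for x in data if x < split]
    else:
        side = [x for x in data if x >= split]
    return isolation_path_length(point, side, rng, depth + 1, max_depth)

def average_path_length(point, data, n_trees=200, seed=0):
    """Average the path length over many random 'trees'."""
    rng = random.Random(seed)
    total = sum(isolation_path_length(point, data, rng) for _ in range(n_trees))
    return total / n_trees

# Hypothetical daily failed-logon counts for one account; 250 is a planted outlier.
counts = [3, 4, 2, 5, 3, 4, 6, 3, 2, 4, 5, 3, 250]

typical = average_path_length(4, counts)
outlier = average_path_length(250, counts)
print(f"average path length, typical value: {typical:.2f}")
print(f"average path length, outlier value: {outlier:.2f}")
```

The outlier's average path length should come out clearly shorter than that of the typical value, which is the signal the model uses to rank anomalies.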

The following architecture diagram is a general overview of the steps required for preparing your data, starting from raw event logs and ending with the aggregated dataset which can be used for data modelling. We will also derive new columns capturing various logon patterns associated with users, such as SuccessfulLogons, FailedLogons, DomainsAccessed, HostsLoggedon, SourceIps and more.

If you want to generate these anomalies on a recurring basis then, depending on the scale and volume of your data, you can set up an intermediate pipeline to save historical data into a custom table and load results from it. This information can be iteratively extracted as a separate dataset and stored in a different table for the model to use as input. There are various ways to take this query and write its results to a custom log table in Microsoft Sentinel, including Logic Apps and the Log Analytics data export feature. Also check out the latest blog on exporting historical data at scale using a notebook: Export Historical Log Data from Microsoft Sentinel.

Data Architecture Diagram

Data Preparation

For this anomaly detection, we will consider the following two Windows logon events. These events identify successful and unsuccessful logon activity on your host.

- 4624(S) An account was successfully logged on. (Windows 10) | Microsoft Learn

- 4625(F) An account failed to log on. (Windows 10) | Microsoft Learn

To effectively identify patterns and flag anomalies, we can start with specific logon types that are noisy in nature, which makes anomalies in those events harder to find using traditional atomic detections. Network logons are typically among the noisiest events in the environment, and it can be difficult to write traditional rules looking for anomalous network logon activity. You can apply additional filtering on these input datasets to drop logons which are generally benign and irrelevant for this analysis, such as machine account logons, ANONYMOUS LOGONs, etc. This filtering is optional and can be added as required based on the analysis of historical patterns in your data.

For this demo, we are retrieving data from the original table as a one-time export, starting with 21 days of historical data. You can adjust the number of historical days depending on the volume and scale of the data in your environment. Next, we will perform feature engineering to create additional columns. We have specifically selected 4 features with numeric data points for demonstration purposes in this blog:
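As a rough illustration of the optional filtering described above, here is a small sketch over already-parsed events. The field names and sample records are hypothetical stand-ins, and in practice this filtering would more likely be done in the query that retrieves the data. It keeps only events 4624/4625 with logon type 3 (network) and drops machine accounts (names ending in `$`) and ANONYMOUS LOGON.

```python
# Hypothetical, already-parsed logon events. Field names are assumptions
# chosen for this sketch, not a guaranteed table schema.
events = [
    {"EventID": 4624, "LogonType": 3, "Account": "CONTOSO\\alice"},
    {"EventID": 4625, "LogonType": 3, "Account": "CONTOSO\\bob"},
    {"EventID": 4624, "LogonType": 3, "Account": "CONTOSO\\WEB01$"},
    {"EventID": 4624, "LogonType": 3, "Account": "NT AUTHORITY\\ANONYMOUS LOGON"},
    {"EventID": 4624, "LogonType": 2, "Account": "CONTOSO\\carol"},
]

LOGON_EVENT_IDS = {4624, 4625}  # successful and failed logons
NETWORK_LOGON = 3               # Windows logon type 3 = Network

def is_relevant(event):
    """Return True for network logons by non-machine, non-anonymous accounts."""
    account = event["Account"]
    if event["EventID"] not in LOGON_EVENT_IDS:
        return False
    if event["LogonType"] != NETWORK_LOGON:
        return False
    if account.endswith("$"):
        # Machine (computer) accounts end with a dollar sign.
        return False
    if account.upper().endswith("ANONYMOUS LOGON"):
        return False
    return True

filtered = [e for e in events if is_relevant(e)]
for e in filtered:
    print(e["EventID"], e["Account"])
```

Whether these exclusions are appropriate depends on your environment; machine accounts, for example, may be worth keeping if you are hunting for compromised hosts.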

- FailedLogons

- SuccessfulLogons

- ComputersSuccessfulAccess

- SrcIpSuccessfulAccess
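The four features above could be derived from raw events roughly as follows. This is only a sketch under assumptions: the sample tuples are invented, and we assume ComputersSuccessfulAccess and SrcIpSuccessfulAccess mean the number of distinct computers and distinct source IPs with at least one successful logon per account per day. The notebooks may define or compute them differently.

```python
from collections import defaultdict

# Hypothetical events: (date, account, event_id, computer, source_ip)
events = [
    ("2024-01-01", "alice", 4624, "HOST-A", "10.0.0.5"),
    ("2024-01-01", "alice", 4624, "HOST-B", "10.0.0.5"),
    ("2024-01-01", "alice", 4625, "HOST-C", "10.0.0.9"),
    ("2024-01-01", "alice", 4625, "HOST-C", "10.0.0.9"),
    ("2024-01-01", "bob", 4624, "HOST-A", "10.0.0.7"),
]

def build_features(events):
    """Aggregate raw logon events into one feature row per (date, account)."""
    acc = defaultdict(lambda: {"failed": 0, "success": 0,
                               "computers": set(), "ips": set()})
    for date, account, event_id, computer, source_ip in events:
        row = acc[(date, account)]
        if event_id == 4624:      # successful logon
            row["success"] += 1
            row["computers"].add(computer)
            row["ips"].add(source_ip)
        elif event_id == 4625:    # failed logon
            row["failed"] += 1
    return {
        key: {
            "FailedLogons": row["failed"],
            "SuccessfulLogons": row["success"],
            "ComputersSuccessfulAccess": len(row["computers"]),
            "SrcIpSuccessfulAccess": len(row["ips"]),
        }
        for key, row in acc.items()
    }

features = build_features(events)
for key, row in sorted(features.items()):
    print(key, row)
```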

For these columns, additional metrics such as mean, standard deviation and z-score are also calculated. These are basic statistical values that describe the distribution of the dataset or of a particular feature, and they help identify outliers that fall far from the typical range. You can refer to other public references to understand these concepts further.
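For readers less familiar with these metrics, a z-score is simply (value - mean) / standard deviation. The sketch below computes it for a hypothetical series of daily FailedLogons counts for one account. It uses the population standard deviation, which is an assumption on our part; the notebooks may use the sample standard deviation instead.

```python
from statistics import mean, pstdev

def z_scores(values):
    """Return the z-score of each value relative to the whole series."""
    mu = mean(values)
    sigma = pstdev(values)  # population standard deviation (assumption)
    if sigma == 0:
        # A constant series has no spread, so no value stands out.
        return [0.0] * len(values)
    return [(v - mu) / sigma for v in values]

# Hypothetical daily FailedLogons counts; the last day is a spike.
failed_logons = [3, 4, 2, 5, 3, 4, 60]
scores = z_scores(failed_logons)

for count, z in zip(failed_logons, scores):
    print(f"FailedLogons={count:3d}  z-score={z:+.2f}")
```

The spike on the last day gets by far the largest z-score, while ordinary days stay close to or below zero.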

Data Modelling

In this step, we will select the subset of features generated from the previous steps to be used for data modelling.

We will run the Isolation Forest model on the selected subset of data. For simplicity, it is run with values such as contamination = 0.01, which tells the model to expect about 1% of the dataset to be anomalous. You can tune this and other model parameters further depending on the size and volume of the data retrieved in the data processing and modelling steps. We have also used the decision function of the model to generate a score associated with each result. You can apply a threshold to this score to adjust the sensitivity of the algorithm, which determines the number of anomalies generated.

After running the model with tuned parameters, you will generate anomalies depending on the score threshold set. The higher the anomaly score, the more anomalous the event.
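A minimal sketch of this modelling step with scikit-learn's `IsolationForest` is shown below. The data is synthetic (random counts plus one planted outlier), and the parameter values other than contamination = 0.01 are assumptions for illustration. Note that scikit-learn's `decision_function` returns lower (negative) values for more anomalous rows, so an anomaly score where higher means more anomalous would be a negated or rescaled version of it; we have not confirmed how the notebooks present the score.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(42)

# Synthetic stand-in for the aggregated dataset: 999 ordinary rows.
normal = np.column_stack([
    rng.poisson(2, 999),    # FailedLogons
    rng.poisson(20, 999),   # SuccessfulLogons
    rng.poisson(3, 999),    # ComputersSuccessfulAccess
    rng.poisson(2, 999),    # SrcIpSuccessfulAccess
])
# One planted outlier that is extreme on every feature.
planted = np.array([[400, 300, 40, 30]])
X = np.vstack([normal, planted])

# contamination=0.01 asks the model to flag roughly 1% of rows.
model = IsolationForest(n_estimators=100, contamination=0.01, random_state=42)
model.fit(X)

scores = model.decision_function(X)  # negative = flagged as anomalous
labels = model.predict(X)            # -1 = anomaly, 1 = normal

# A stricter (more negative) threshold yields fewer anomalies.
threshold = 0.0
flagged = np.where(scores < threshold)[0]
print(f"rows flagged: {len(flagged)} of {len(X)}")
print(f"planted outlier score: {scores[-1]:.3f}, label: {labels[-1]}")
```

Lowering `threshold` below zero makes the detection less sensitive, which is one simple way to reduce the number of anomalies sent for triage.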

Data Visualization

If you are a data scientist, you can use the Notebook for Data Scientists to visualize the anomalies using a histogram, a 3D scatter plot or a correlation plot to understand how the features in the data relate to each other. These plots make it easier to explore and understand the dataset, which helps in tuning the model parameters to increase the efficacy of the anomalies flagged. The visualizations may not be suitable for SOC analysts or readers without a data science background.

Here are some example outputs based on the demo dataset used.

PCA plot showing anomalies:

A 3D scatter plot of PCA (Principal Component Analysis) is a way to visualize data in three dimensions after reducing the dimensionality of the data using PCA. In a 3D scatter plot of PCA, each axis represents a principal component (a combination of the original features), and the position of each data point in the plot represents its projection onto these three principal components. In the plot below, all the non-anomalous points are colored green, and the anomalies are shown in red and blue. The legend shows the domain, user and date parameters associated with the anomalies.
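To make the projection step concrete, here is a small NumPy sketch that reduces a four-feature matrix to three principal components using SVD. The data is random and purely illustrative, and the notebooks may well use a library PCA implementation and scale the features first; the resulting three columns are what a 3D scatter plot would use as its axes.

```python
import numpy as np

def pca_project(X, n_components=3):
    """Project rows of X onto the top principal components (via SVD)."""
    X_centered = X - X.mean(axis=0)
    # Rows of Vt are the principal directions, ordered by explained variance.
    _, _, Vt = np.linalg.svd(X_centered, full_matrices=False)
    return X_centered @ Vt[:n_components].T

rng = np.random.default_rng(0)
# Synthetic 4-feature dataset with different spreads per feature.
X = rng.normal(size=(200, 4)) * np.array([5.0, 3.0, 1.0, 0.5])

coords = pca_project(X, n_components=3)
print("projected shape:", coords.shape)
print("variance per component:", coords.var(axis=0).round(2))
```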

These and other visualizations will assist in identifying how the model is performing and the distribution of anomalies as compared to normal data points. If required, data scientists can go back to the previous steps, adjust model parameters such as contamination, and regenerate the plots to make informed decisions based on the historical data and trends in the environments.

Interpreting anomalies classified using SHAP

One of the important aspects added in these notebooks is how to interpret the anomalies generated by Isolation Forest. The anomalies generally have a score associated with them describing how anomalous they are, but to investigate further, SOC analysts need to know which features led the model to flag them. For this purpose, we can use the SHAP (SHapley Additive exPlanations) library to explain the predictions (scores) generated by Isolation Forest. SHAP can provide global as well as local interpretation of anomalies. Global interpretation helps to understand the model as a whole, while local interpretation helps to understand the predictions for individual data points. The notebook version for Data Scientists also has various SHAP plots for feature importance, and a force plot for local anomalies, showing how various features contribute to and affect the score calculated by the model.

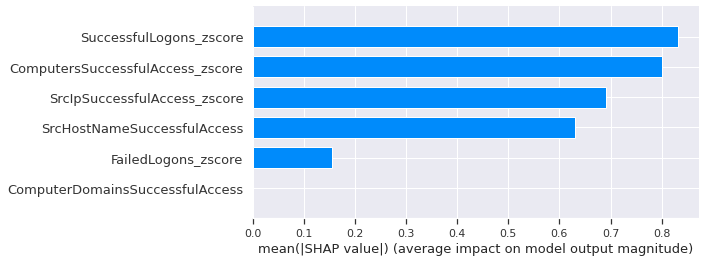

Here is an example SHAP bar plot output of the global feature importance for the demo dataset used.

The plot has a horizontal axis that shows the feature importance, and the vertical axis shows the feature name. The bars are colored to show whether the feature had a positive (in red) or negative (in blue) effect on the prediction. The length of each bar shows the magnitude of the feature importance.

You can also use a force plot to explain local anomalies. Here is an example output from a SHAP force plot for one of the anomalies.

A SHAP force plot shows the contribution of each feature to the final prediction for a single data point. The horizontal axis shows the model output: the features push the prediction away from the base value (the average model output) towards the final value for this data point. Each feature is drawn as a horizontal bar labeled with the value of that feature for the data point being analyzed. The bars are colored to show the direction of the contribution: features pushing the prediction higher are in red, and features pushing it lower are in blue. The width of each bar shows how much the feature contributed to the final prediction.

To interpret the SHAP force plot or bar plot, you should look for features with high absolute SHAP values or feature importance. These are the features that have the greatest impact on the prediction. The direction of the SHAP value or feature importance indicates whether the feature has a positive or negative effect on the prediction. For example, a high positive SHAP value or feature importance for the "number of failed login attempts" feature indicates that a high number of failed login attempts is associated with a higher probability of being an anomaly.
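The "look for high absolute SHAP values" step can also be done programmatically when triaging many anomalies. The sketch below ranks features for a single flagged record by absolute contribution. The SHAP values here are invented for illustration and were not produced by the SHAP library, and how to read the sign depends on which model output is being explained.

```python
# Hypothetical SHAP values for one flagged account-day (not real output).
shap_values = {
    "FailedLogons": -0.21,
    "SuccessfulLogons": 0.02,
    "ComputersSuccessfulAccess": -0.05,
    "SrcIpSuccessfulAccess": 0.01,
}

def rank_contributions(values):
    """Sort features by the magnitude of their contribution, largest first."""
    return sorted(values.items(), key=lambda item: abs(item[1]), reverse=True)

ranked = rank_contributions(shap_values)
for feature, value in ranked:
    print(f"{feature:28s} {value:+.2f}")

top_feature = ranked[0][0]
print("feature to investigate first:", top_feature)
```

An analyst could use the top-ranked feature to decide which follow-up query to run first.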

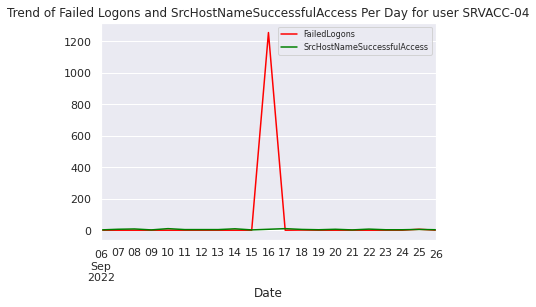

Depending on the feature impacting the anomaly score, SOC analysts can run relevant queries to analyze the historical patterns for that feature and compare them with the day on which the anomaly was observed. For example, in this case the analyst can run a query to extract the failed logon trend for the flagged account and see if there are any spikes on the given day compared to the historical trend. The analyst can also use account notebooklets (nblets) to extract important insights from available datasets other than SecurityEvent for additional correlation. This helps with faster triage and with automating the investigation of anomalies generated by the model.

Here is an example image from the demo dataset for one of the outliers flagged by the model and shown in the force plot above. It shows a spike in Failed Logons on 9/16, which is one of the widest features in the force plot.

Conclusion

In this blog, we demonstrated a generic anomaly detection framework using Azure ML notebooks with Microsoft Sentinel. The framework can be applied to other data types by exploring the data and extracting the features that are useful for anomaly detection for a given security use case. For this blog, we started with Windows logon event data with the goal of finding users with anomalous logon patterns. We created 2 notebook versions for use by different analyst personas. The notebook targeted at data scientists can be tweaked at various stages of execution, from feature engineering to data visualization, to explore the data and anomalies in various ways. The other version of the notebook is targeted at SOC analysts and threat hunters who want to investigate and triage the anomalies resulting from this model for potential malicious activity.

References

- Azure-Sentinel-Notebooks/Guided Hunting - Anomaly detection with Isolation Forest on Windows Logon data For Data Scientist .ipynb (github.com)

- Azure-Sentinel-Notebooks/Guided Investigation - Anomalous users generated by Isolation Forest Model for SOC Analysts.ipynb (github.com)

- Explaining Machine Learning Models: A Non-Technical Guide to Interpreting SHAP Analyses (aidancooper.co.uk)

- Interpretation of Isolation Forest with SHAP | by Eugenia Anello | Towards AI

- arXiv Paper: Lateral Movement Detection Using User Behavioral Analysis

- Decision Trees (mlu-explain.github.io)