This post has been republished via RSS; it originally appeared at: Microsoft Tech Community - Latest Blogs - .

Introduction

This blog post provides a step by step guide for incremental data load from Oracle to Azure Database for PostgreSQL – Flexible Server using Azure Data Factory dataflows. By using this solution there is no additional set up required in the source Oracle database as typically needed using LogMiner or a traditional CDC tool (Change Data Capture).

There are 2 parts in the solution, the 1st part covers the process, source configuration in Oracle, Staging configuration in the Azure Storage, Creating the linked services and self hosted integration runtime in Azure Data Factory and configuring the datasets in the source Oracle database and Azure Storage, the remaining sections are covered in Part 2 here :- Part 2

Limitations

This solution provides a delta load scenario where the data is moved in 30 minutes intervals from source to target. If the requirement is for a real time solution the recommendation is to consider other solutions available such as Open Source or 3rd Party products.

Process workflow

High Level Architecture for the process

Here are the steps to capture Incremental data from the source to target using Azure Data Factory Dataflows :-

Oracle Source Configuration

- Watermark table: this is needed to capture the changes in each table via audit columns.

- Triggers for tracking actual metadata: create a trigger for the data changes insert or update and a trigger for the delete records to capture the deleted record reference and its time.

- Download and Install ADF Integration Runtime : In order to load the data to the staging area in blob storage and to establish the connection between source database and ADF, download and install the integration runtime to the source server or the other server / virtual machine with the same IP range of the source database server.

Staging

- Staging for the table data: This may not be necessary, however it is better to offload the source environment dependency.

- Azure Storage Account: Create an Azure storage account with the containers for the table data, watermark table, deleted records and logs.

- Linked Service: Create the linked service required for the Oracle connection; the user has to have read and write permission in the tables to be copied to Azure DB for PostgreSQL – Flexible Server.

- Datasets: Create datasets for the data tables, deleted records and watermark table by using Azure Data Factory and create a pipeline with the trigger intervals required.

- Important to note: while loading the watermark table, capture the current timestamp as WATERMARKUPDVALUE as this will be needed in order to update the watermark table later for the next run.

Azure Data Factory Dataflows

- Linked Service for Azure PGSQL: Create the linked service for the Azure DB for PostgreSQL – Flexible Server database connection; the user must have permission to read and write for the schema and tables to be copied from Oracle database.

- Azure Data Factory Dataflows: Create the Azure Data Factory data flows for the source data and watermark table to be joined and filter the deltas required to load in Azure Db for PostgreSQL – Flexible Server database.

- Once the delta records are captured, the next step is to add ALTER ROW option to UPSERT the data into target database based on its primary key column.

- Similarly, create a data flow for the DELETE records, while adding ALTER ROW use the DELETE option based on its primary key column

Target Setup – Azure PostgreSQL – Flexible Server

- Azure DB for PostgreSQL – Flexible server: create the source schema, and the table structure as required.

- User: Create a user with the privileges to access the schema and tables to read and write data.

- Make sure the tables created contains a primary key

Update the watermark table in the source.

- Once the above steps are completed successfully, the next step is to update the watermark table in the source database (Oracle)

- Update the watermark table column WATERMARKVALUE with the WATERMARKUPDVALUE captured during the first step of the pipeline process in the azure storage container.

- The above step would change the watermark update date value which would be used as the start time for the next run.

The detailed explanation of the above steps with the examples along with the screenshots for each section are outlined in the following steps:

Oracle Source Configuration

- Here are the examples to create a watermark table and a table to capture the deletes in the database.

Creating the watermark table:-

CREATE TABLE <SCHEMA>."WATERMARKTABLE”

(

"WATERMARKTABLENAME" VARCHAR2(255 BYTE),

"WATERMARKCOLUMNNAME" VARCHAR2(255 BYTE),

"WATERMARKVALUE" TIMESTAMP (6),

"WATERMARKUPDVALUE" TIMESTAMP (6)

);

Creating a table to capture the deleted records:-

CREATE TABLE "<SCHEMA>"."DELETE_DT"

( "NAME" VARCHAR2(100 BYTE),

"EMP_ID" NUMBER(10,0),

"ROLE" VARCHAR2(100 BYTE),

"REPORT_TO" VARCHAR2(100 BYTE),

"LAST_MODIFIED" TIMESTAMP (6),

"TABLENAME" VARCHAR2(50 BYTE),

"DEL_REC_DATE" TIMESTAMP (6)

);

Auditing should be enabled on all Oracle Source tables that need to be migrated to track the delta changes. Here are the steps to enable auditing on the tables to capture deltas if not already available.

Example-1: Using SCN_TO_TIMESTAMP

- Oracle already have every changes in the database recorded by system change number(SCN)

- Create a new column as Last_updated_date as a timestamp character type and update the columns with the function SCN_TO_TIMESTAMP

Example:-

UPDATE <TABLE_NAME> SET LAST_UDPATED_DATE = SCN_TO_TIMESTAMP(ORA_ROWSCN)

Example-2: Using Triggers

- Add a new column Last_modified_date in the existing table as a timestamp character type column.

- Create a trigger for after update or insert to update the last_modified_column with systimestamp to capture the changes in the record.

Example:-

CREATE OR REPLACE TRIGGER "<SCHEMA>"."WATERMARK_UPDATE_TGR"

BEFORE UPDATE OR INSERT ON test4adf

FOR EACH ROW

DECLARE

BEGIN

IF INSERTING

THEN

:NEW.LAST_MODIFIED := systimestamp;

ELSE

:NEW.LAST_MODIFIED := systimestamp;

END IF;

END;

/

- Create another trigger for capturing the deleted records.

Example:-

CREATE or REPLACE TRIGGER "<SCHEMA>"."WATERMARK_DELETE_TGR"

BEFORE DELETE ON test4adf

FOR EACH ROW

BEGIN

INSERT INTO DELETE_DT VALUES (:old.name,:old.emp_id,:old.role,:old.report_to,systimestamp,:old.tablename,systimestamp,:old.tablename);

END;

/

Configuration Steps on Azure Blob Storage For Staging

Create the containers in Azure blob storage named <table_name>, Watermark, DeleteRecords and Logs, this is a staging area to capture the data from source and apply timestamp in watermark just before starting the ADF dataflow process.

- Table_name – To store the data from Oracle under the specific table name

- Watermark – To store the latest watermark data from Oracle

- DeleteRecords – To store the deleted records from Oracle

- Logs – To store the logs from Azure Data Factory jobs

Create linked services between Oracle and Azure PostgreSQL in ADF

Navigate Azure Data Factory in the portal and create the linked service using Oracle as source and a linked service for target using Azure DB for PostgreSQL – Flexible server.

Also create the table datasets in azure data factory for the source table and for the table that captured the deleted records.

Create a self-hosted integration runtime and a linked service connects to Oracle

Once the selfhosted Integration Runtime is created, you can download and install the integration runtime in Oracle source server or in a different server having the same IP range.

When this is successfully completed, the connection with the Oracle database can be established using the linked service.

Enter the connection details for the source Oracle server such as username, password, port and SID to create the linked service and test connectivity.

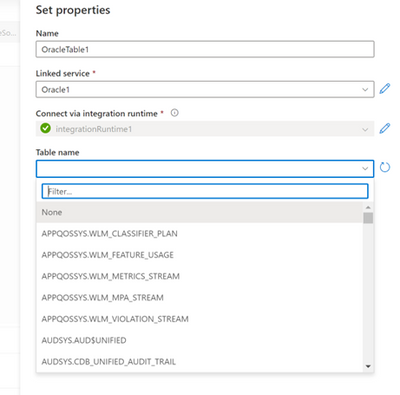

Configuring Oracle source dataset

When the linked service is connected successfully, you can select the table(s) to be copied over to the Azure Blob Storage from the drop down.

When the workflow is successfully created, the connection and preview the source data can be tested.

Repeat the above steps for the watermark and deleted records tables.

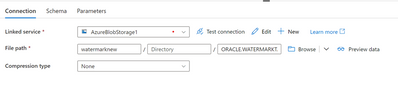

Configuring the datasets for Azure Storage

Create a linked service for Azure storage by using your subscription.

Find the instructions in the following link

Linked services - Azure Data Factory & Azure Synapse | Microsoft Learn

After creating the linked service use the browse option for the blob storage to stage the source files from Oracle.

Example:-

Repeat the above steps for data tables, watermark table, deleted records table and for the logs.

Final Thoughts

We hope that this post has helped you to configure Incremental Load using ADF and described scenarios and options for using the solution to migrate your databases successfully. The remaining steps are covered in Part 2 here :- Part 2

If you have feedback or suggestions for improving this data migration asset, please contact the Azure Databases SQL Customer Success Engineering Team. Thanks for your support!