This post has been republished via RSS; it originally appeared at Microsoft Tech Community - Latest Blogs.

Our goal in this blog post is to demonstrate how to use Azure Blob Storage with React.js to create an image gallery. We will go over the capabilities, advantages, and best features of Azure Blob Storage. We will also discuss the different programming languages that can be used to build Azure Storage Blob clients, focusing on the JavaScript Azure Storage Blob client library.

What is Azure Blob Storage?

Azure Blob Storage is a Microsoft Azure cloud-based object storage solution. It enables developers to use blobs to store and manage unstructured data such as documents, photos, videos, backups, and logs. Blobs can be grouped together to form containers, providing a scalable and cost-effective method for storing and retrieving massive amounts of data.

What can we do with Blob Storage?

Blob Storage is one of several Azure Storage data services, each suited to a different job:

- Azure Blobs: Storing and serving static content such as images and documents to web applications, streaming video and audio, and storing and processing big data, logs, and telemetry.

- Azure Files: Managed file shares for cloud or on-premises deployments.

- Azure Queues: A messaging store for reliable messaging between application components.

- Azure Managed Disks: Block-level storage volumes for Azure VMs.

- Storing backups and archives for disaster recovery.

Advantages of using Azure Blob Storage:

- Scalability: Blob Storage can handle massive amounts of data, and you can easily scale storage as your needs grow.

- Durability: Blob Storage keeps multiple replicated copies of your data, so it stays available and durable if hardware fails.

- Accessibility: Data can be accessed securely from anywhere in the world using RESTful APIs or client libraries.

- Cost-Effective: Blob Storage offers flexible pricing tiers, allowing you to optimize costs based on data access patterns.

- Integration: Seamlessly integrate Blob Storage with other Azure services for a comprehensive cloud solution.

Some of the Best Features available in Azure Blob Storage

- Storage Tiers: Choose between hot, cool, and archive tiers to match data access patterns and reduce costs.

- Blob Lifecycle Management: Automatically manage data retention and deletion based on policies you define.

- Shared Access Signatures: Generate time-limited access tokens to grant temporary access to specific blobs.

- Azure Functions Triggers: Trigger functions when new blobs are added or modified, enabling real-time processing.

- Blob Versioning: Keep track of changes and revisions to blobs using versioning capabilities.
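To make the storage tiers and lifecycle management features above more concrete, here is a minimal sketch of a lifecycle policy expressed as a JavaScript object. It follows the JSON shape that Azure Storage lifecycle management policies use, but the rule name, the `images/` prefix (a hypothetical container name), and the day thresholds are assumptions chosen purely for illustration.

```javascript
// Builds a lifecycle management policy object. The rule name, prefix, and
// thresholds are illustrative assumptions, not values from this tutorial.
function buildLifecyclePolicy({ prefix, coolAfterDays, archiveAfterDays, deleteAfterDays }) {
  // Blobs should move hot -> cool -> archive -> deleted, in that order.
  if (!(coolAfterDays < archiveAfterDays && archiveAfterDays < deleteAfterDays)) {
    throw new RangeError("Expected coolAfterDays < archiveAfterDays < deleteAfterDays");
  }
  return {
    rules: [
      {
        enabled: true,
        name: "age-out-gallery-images", // hypothetical rule name
        type: "Lifecycle",
        definition: {
          // prefixMatch entries start with the container name.
          filters: { blobTypes: ["blockBlob"], prefixMatch: [prefix] },
          actions: {
            baseBlob: {
              tierToCool: { daysAfterModificationGreaterThan: coolAfterDays },
              tierToArchive: { daysAfterModificationGreaterThan: archiveAfterDays },
              delete: { daysAfterModificationGreaterThan: deleteAfterDays },
            },
          },
        },
      },
    ],
  };
}

const examplePolicy = buildLifecyclePolicy({
  prefix: "images/",
  coolAfterDays: 30,
  archiveAfterDays: 90,
  deleteAfterDays: 365,
});
console.log(JSON.stringify(examplePolicy, null, 2));
```

The printed JSON is the kind of document you could paste into the lifecycle management code view of a storage account; adjust the thresholds to your own access patterns.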

Programming Languages for Consuming Azure Blob Storage

Users or client applications can access objects in Blob Storage via HTTP/HTTPS, from anywhere in the world. Objects in Blob Storage are accessible via the Azure Storage REST API, Azure PowerShell, Azure CLI, or an Azure Storage client library. Client libraries are available for different languages, including:

- JavaScript (Node.js): Use the Azure Storage Blob client library for JavaScript to interact with Blob Storage from web applications built with React.js or from server frameworks.

- C# (.NET): Use the Azure SDK for .NET to build .NET-based applications that interact with Blob Storage.

- Python: The Azure SDK for Python enables Python developers to integrate Blob Storage functionality into their applications.

- Java, Go, and more: Azure Blob Storage provides SDKs for multiple programming languages, enabling broad language support.
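Whichever client you pick, every blob is ultimately addressed by an HTTPS URL of the form `https://<account>.blob.core.windows.net/<container>/<blob>`, optionally followed by a SAS query string. The sketch below shows how such a URL could be assembled; `buildBlobUrl` is a hypothetical helper, and the account, container, blob name, and token in the usage line are placeholders.

```javascript
// Assembles the public endpoint URL for a blob. This is a hypothetical helper
// for illustration; the client libraries build these URLs for you.
function buildBlobUrl(accountName, containerName, blobName, sasToken = "") {
  // Encode each path segment separately so "/" in virtual folders is preserved.
  const path = blobName.split("/").map((segment) => encodeURIComponent(segment)).join("/");
  const base = `https://${accountName}.blob.core.windows.net/${containerName}/${path}`;
  // Accept tokens with or without a leading "?".
  const query = sasToken.replace(/^\?/, "");
  return query ? `${base}?${query}` : base;
}

// Placeholder values only; the signature is not a real one.
console.log(buildBlobUrl("myaccount", "images", "cats/tom 1.png", "?sv=2022-11-02&sig=REDACTED"));
```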

Procedure

- Provision Azure blob storage

- Clone our Client Application.

- Integrate Azure SDK in our Client Application.

Pre-requisites

- An Azure subscription. If you’re a student, redeem Azure for Students; otherwise, create a free account.

- Node.js installed on your machine.

- VS Code

Provision Azure blob storage

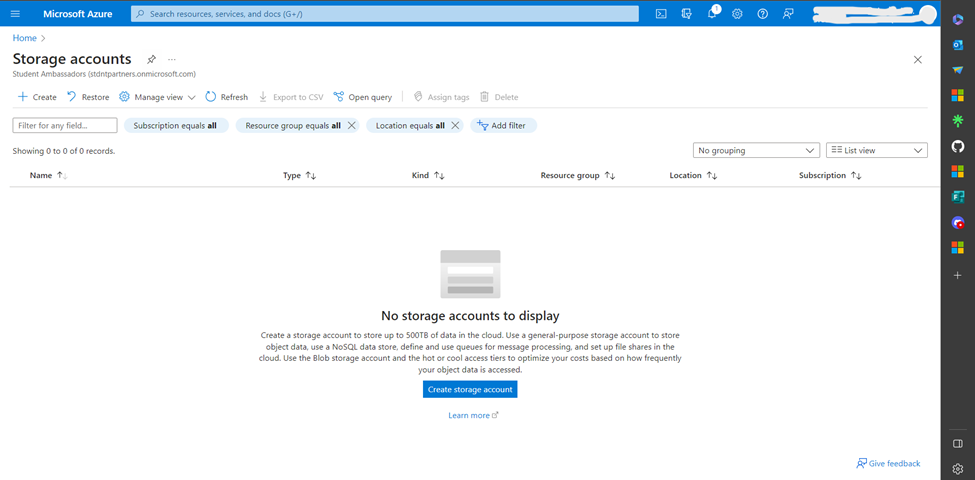

- Go to the Azure portal and search for storage accounts.

- Create a new storage account.

- Select your subscription and create a resource group.

- Enter a unique storage account name and select your region. For performance, go with Standard; for redundancy, select Geo-redundant storage (GRS).

- Under Security, make sure the options shown below are selected. They let us access the storage account through REST API endpoints and authentication.

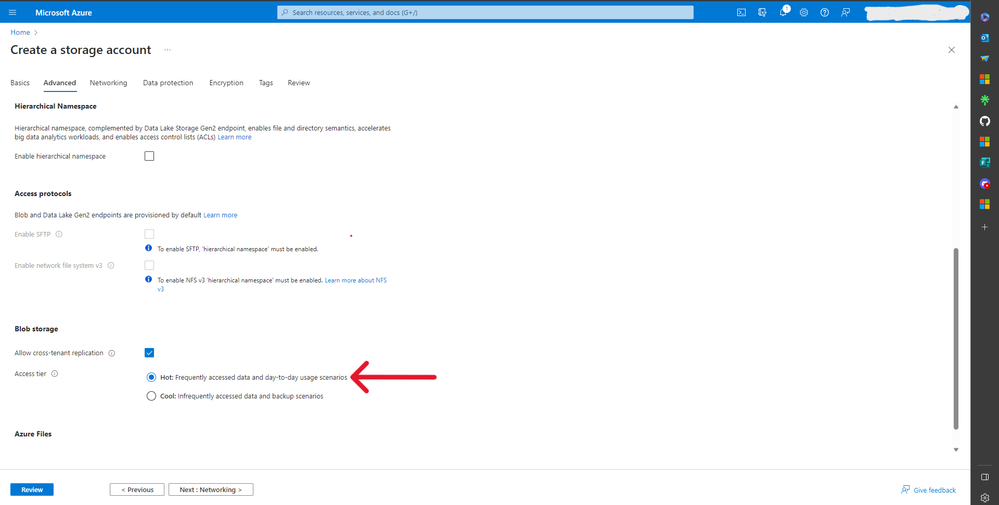

- Under blob storage access tier, select Hot, because we want to access our blob storage files frequently.

- Under network connectivity, let’s enable public access for now; later I will show you how to change this. Also select Microsoft routing.

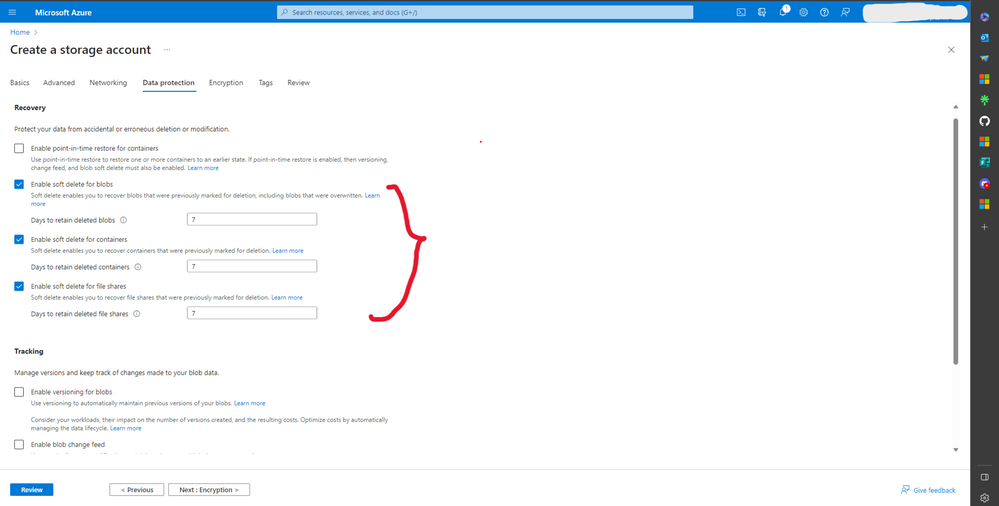

- Under recovery, let’s enable soft delete. This matters when we need to recover a deleted blob, container, or file.

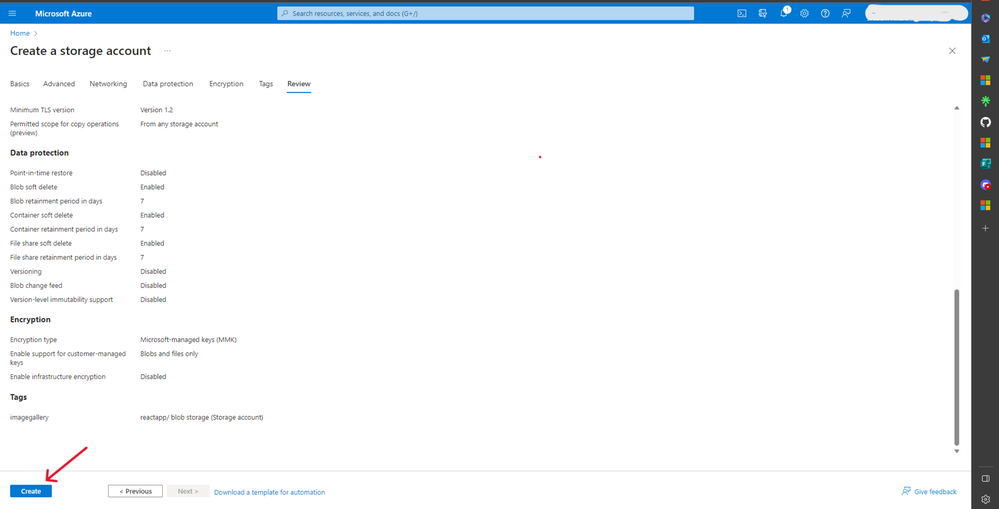

- For encryption, I will go with Microsoft-managed keys.

- Create tags with a name and value of your choice. Tags categorize resources and let you consolidate billing by tag.

- Review your options and create.

- After deployment is completed, go to resource.

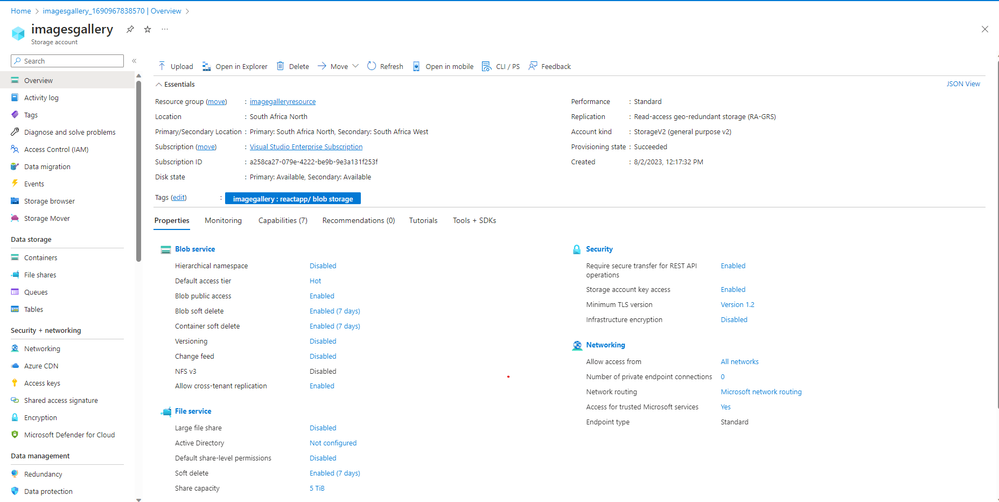

- Under Overview, you will find all the options we selected earlier for this resource.

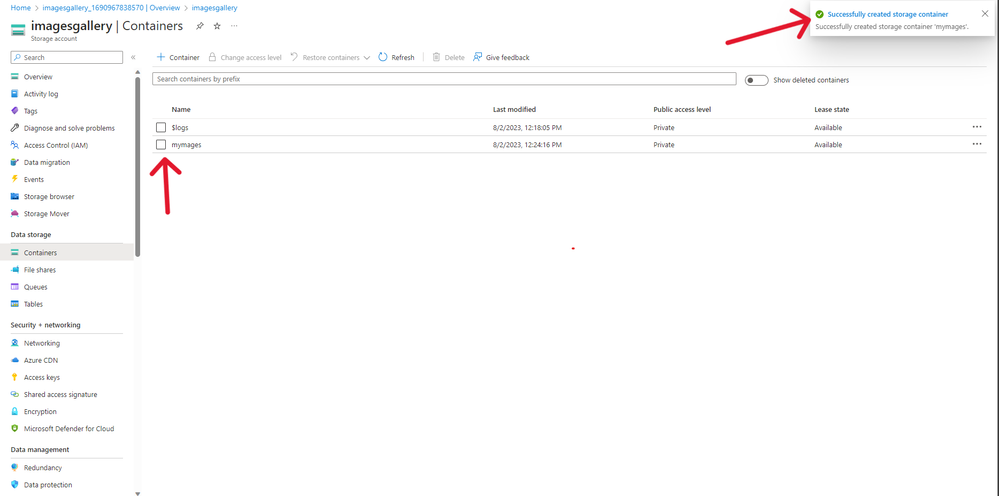

- Click Containers and create a new container (think of a container as a folder where you can store related files like images). Give it a unique name and an access level. I will go with private because I want to access my container using a SAS token.

- After successfully creating a container, you will see it displayed below.

- Under Shared access signature, configure the token. For Allowed services, only Blob is needed for this example, but select all if you like. For Allowed resource types, select Container; also select Object, because uploading and deleting individual blobs are object-level operations. For Allowed permissions, select all options, and enable the blob versioning and blob index permissions. The start and expiry date and time matter because they define how long the SAS token stays valid; select your time zone as well. I have not added an allowed IP address since I will access the storage account from a local server, but if your application is live, add its IP address here so that only that address can access the storage account. For Allowed protocols, I will go with both HTTPS and HTTP since I’m running the client application on localhost. Lastly, click Generate SAS and connection string.

- Copy the SAS token. We will add it to the environment variables of our React application.
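The SAS token you just copied is a URL query string, and the choices made in the portal are readable inside it: `ss` holds the allowed services, `srt` the resource types, `sp` the permissions, `st` and `se` the start and expiry times, and `spr` the allowed protocols. As a rough sketch, a token can be inspected before it goes into the app, for example to confirm it has not expired. `describeSasToken` is a hypothetical helper and the sample token is made up (its signature is a placeholder).

```javascript
// Parses an account SAS token and reports what it allows. Hypothetical helper
// for illustration; it does not validate the signature.
function describeSasToken(sasToken, now = new Date()) {
  const params = new URLSearchParams(sasToken.replace(/^\?/, ""));
  const startsOn = params.get("st") ? new Date(params.get("st")) : null;
  const expiresOn = params.get("se") ? new Date(params.get("se")) : null;
  return {
    version: params.get("sv"),
    services: params.get("ss"),       // b = blob
    resourceTypes: params.get("srt"), // c = container, o = object
    permissions: params.get("sp"),
    protocols: params.get("spr"),
    startsOn,
    expiresOn,
    // Active when the start time (if any) has passed and the expiry has not.
    isActive: (startsOn === null || startsOn <= now) && expiresOn !== null && now < expiresOn,
  };
}

// Made-up token with a placeholder signature.
const sampleToken =
  "?sv=2022-11-02&ss=b&srt=co&sp=rwdlac&se=2030-01-01T00%3A00%3A00Z" +
  "&st=2023-07-01T00%3A00%3A00Z&spr=https,http&sig=REDACTED";
console.log(describeSasToken(sampleToken));
```

If `isActive` comes back `false`, requests made with the token will be rejected, so generating a fresh token is the first thing to try when uploads suddenly start failing.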

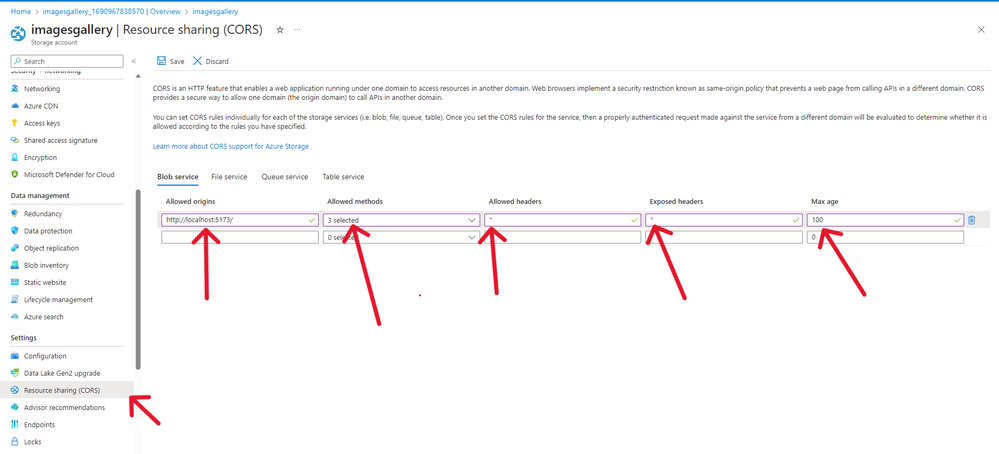

- Under CORS, add the origin that will access our storage account and the allowed methods, for example POST, GET, DELETE, and PUT. For allowed headers and exposed headers I have gone with *, meaning all (this is not a good practice). The max age is how long the browser should cache the response to the preflight OPTIONS request; I have chosen 100 seconds. More information about CORS.
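The same CORS settings can be written down as data. The sketch below builds a rule object whose field names match the `CorsRule` shape used by the JavaScript Azure Storage Blob client library (comma-separated strings plus `maxAgeInSeconds`); `buildCorsRule` itself is a hypothetical helper, and the origin is an assumption based on the local dev server address used later in this post.

```javascript
// Builds a CORS rule mirroring the portal settings described above.
// Hypothetical helper; the wildcard headers follow this tutorial's choice,
// which is convenient locally but broader than you would want in production.
function buildCorsRule(origins, { methods = ["GET", "PUT", "POST", "DELETE"], maxAgeInSeconds = 100 } = {}) {
  if (!Array.isArray(origins) || origins.length === 0) {
    throw new Error("At least one allowed origin is required");
  }
  return {
    allowedOrigins: origins.join(","),
    allowedMethods: methods.join(","),
    allowedHeaders: "*",
    exposedHeaders: "*",
    maxAgeInSeconds, // how long browsers may cache the preflight response
  };
}

// Assumed origin: the local dev server.
console.log(buildCorsRule(["http://localhost:5174"]));
```

Listing explicit origins rather than `*` keeps other sites from calling the storage account from a browser, even while the headers remain open.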

Clone our Client Application.

- Create a folder and open it in VS Code. Open the VS Code terminal and run:

git clone https://github.com/kelcho-spense/Image_Gallery_Azure_Blob_storage-Educator_Developer_Blog-.git .

- Wait until the process is done.

- Open the .env file and add your SAS token (the one we generated in the shared access signature step above). If you used the same storage account and container names as I did, you do not need to change the other values provided.
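A missing or mistyped value in the .env file usually surfaces only as a failed request at runtime, so it can help to validate the settings once at startup. The sketch below is one way to do that. The variable names are placeholders, since the names the repository actually uses are not shown in this post; check its .env file and substitute them.

```javascript
// Reads and validates storage settings from an environment-like object.
// The key names below are hypothetical placeholders, not the repository's.
function readStorageConfig(env) {
  const required = ["STORAGE_ACCOUNT_NAME", "STORAGE_CONTAINER_NAME", "STORAGE_SAS_TOKEN"];
  const missing = required.filter((key) => !env[key] || !String(env[key]).trim());
  if (missing.length > 0) {
    throw new Error(`Missing environment variables: ${missing.join(", ")}`);
  }
  return {
    accountName: String(env.STORAGE_ACCOUNT_NAME).trim(),
    containerName: String(env.STORAGE_CONTAINER_NAME).trim(),
    // The portal shows the token with a leading "?"; store it without one.
    sasToken: String(env.STORAGE_SAS_TOKEN).trim().replace(/^\?/, ""),
  };
}

// Placeholder values for illustration.
console.log(
  readStorageConfig({
    STORAGE_ACCOUNT_NAME: "myaccount",
    STORAGE_CONTAINER_NAME: "images",
    STORAGE_SAS_TOKEN: "?sv=2022-11-02&sig=REDACTED",
  })
);
```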

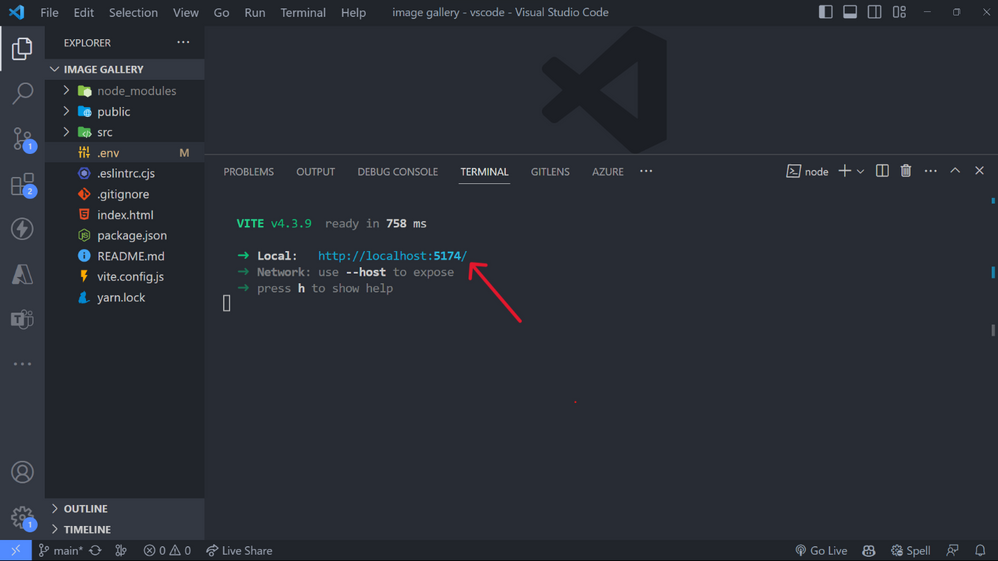

- To install our node dependencies run yarn or npm install (if not using yarn as your package manager). Then run yarn run dev or npm run dev to start our local application server.

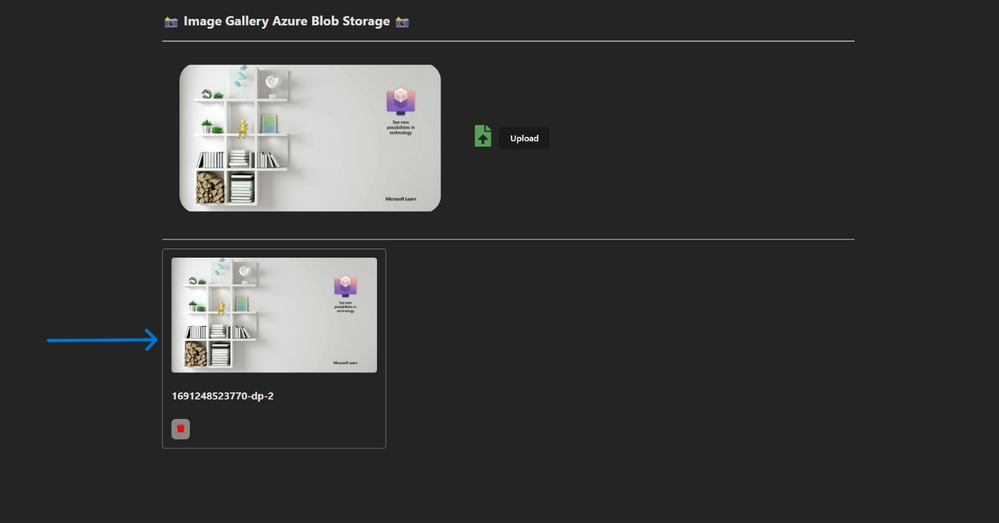

- View your image gallery applications on http://localhost:5174/

- Click on the upload icon to upload an image, select image and click okey.

- After uploading successfully, an image should be displayed below.

- After uploading several images you can even click the Delete button (red in color) to delete the image.

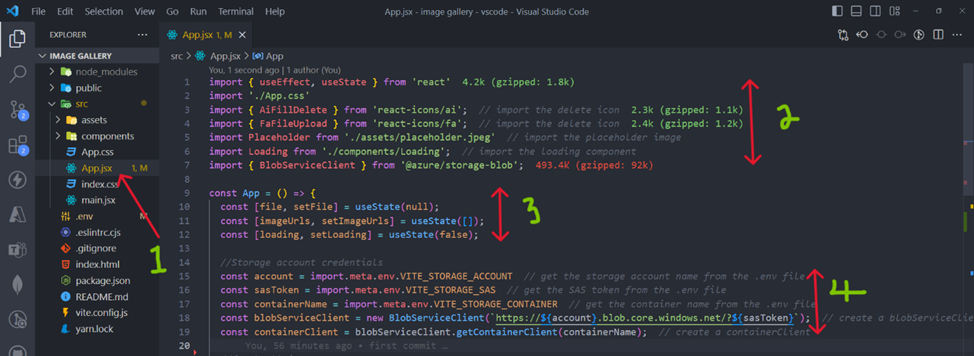

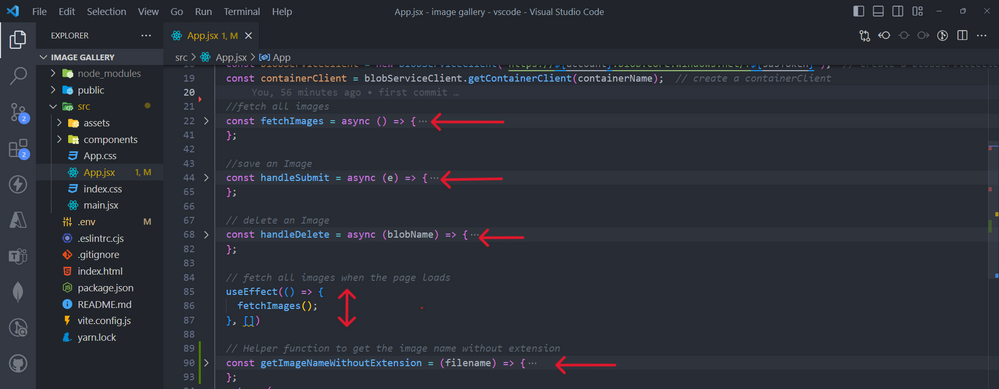

- I have stored all the code in the App.js file for simplicity. The file starts with icon imports, some state variables, and the storage account credentials.

- We have three main functions: fetchImages fetches all images, handleSubmit saves an image to Azure Blob Storage, and handleDelete deletes an image.

- This code below is just for uploading and displaying images on our web page.
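The repository holds the actual implementation. As a rough sketch of what the three operations involve, the helpers below list, upload, and delete blobs through a container client. They are not the author's code: `uploadImage` and `deleteImage` are hypothetical names for what handlers like handleSubmit and handleDelete might call, and the timestamp prefix on blob names is my own assumption to avoid name clashes. The methods used (`listBlobsFlat`, `getBlockBlobClient`, `uploadData`, `delete`) are from the JavaScript Azure Storage Blob client library.

```javascript
// `containerClient` is expected to behave like ContainerClient from the
// @azure/storage-blob package, which could be created along these lines:
//   new BlobServiceClient(`https://${accountName}.blob.core.windows.net/?${sasToken}`)
//     .getContainerClient(containerName)

// Lists every blob in the container and returns its name and URL.
async function fetchImages(containerClient) {
  const images = [];
  for await (const blob of containerClient.listBlobsFlat()) {
    const blobClient = containerClient.getBlockBlobClient(blob.name);
    images.push({ name: blob.name, url: blobClient.url });
  }
  return images;
}

// Uploads a browser File (or Blob with a name) and returns the blob name used.
async function uploadImage(containerClient, file) {
  // Assumption: prefix with a timestamp so repeated file names do not collide.
  const blobName = `${Date.now()}-${file.name}`;
  const blobClient = containerClient.getBlockBlobClient(blobName);
  // Setting the content type lets browsers render the blob as an image.
  await blobClient.uploadData(file, {
    blobHTTPHeaders: { blobContentType: file.type },
  });
  return blobName;
}

// Deletes a single blob by name.
async function deleteImage(containerClient, blobName) {
  await containerClient.getBlockBlobClient(blobName).delete();
}
```

Passing the container client in as a parameter keeps the helpers independent of how the credentials are loaded, and makes them easy to exercise with a stand-in object in tests.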

Read more about

- Introduction to Azure Blob Storage

- Create a virtual machine and storage account for a scalable application

- Tutorial: Build a highly available application with Blob storage

- Tutorial: Encrypt and decrypt blobs using Azure Key Vault

- Upload large amounts of random data in parallel to Azure storage

- Download large amounts of random data from Azure storage