This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

What if a salesperson could simply ask a chatbot in natural language to fetch the information about products from complex databases and then create a sales order back in their SAP system, all without leaving the Microsoft Teams interface?

With Azure AI Studio and Power Platform, this is not only possible but easy to implement. In this blog post, we'll explore a real-life use case where a salesperson uses a GPT-4 powered bot to query data from SAP systems in natural language. The same AI model can further create a JSON that could be used to place a sales order in the SAP system, all without having to leave the chat interface.

You can view the video below to see how the scenario mentioned above could look, and then read the rest of the blog to understand how to build this.

Enterprise adoption of Generative AI.

The release of advanced AI models over the past year, specifically Generative AI, has made both individuals and organizations eager to build and adopt their own AI solutions. AI is helping developers and other people in organizations become more efficient and create new tools with the data they own.

One example of such tools is the new Microsoft Office 365 with Copilots, which allows organizations and individuals to use their data on Microsoft Graph along with Generative AI to become more productive. The suite of AI tools on Azure AI Studio is another example, which empowers developers to create their own AI-powered applications.

Most organizations are becoming increasingly focused on using data to:

1) Integrate AI into their products to give their customers a personalized user experience.

2) Optimize their workflows using AI to reduce the labor and effort required to complete tasks and therefore become more efficient.

How organizations can use AI to optimize their workflows:

To achieve the second goal (improving their own business processes and becoming more efficient by using AI), organizations need to use their organization-related data to the fullest. As you can see from this McKinsey report, most AI use cases adopted by organizations are for service optimization and workflow improvement.

When looking at the type of data organizations own regarding their own workflows, there are two important sources of data:

1) The data on their business operations, such as Finance, Sales, HR, and Supply Chain.

2) The data on their own employees and the collaboration between them, such as emails, chats, and shared documents.

Both forms of data are important and would play a role in any AI solution a company creates.

Microsoft is one of the leading providers of collaboration tools for organizations, whether through chats on Teams, emails on Outlook, shared documents in Word, or data analysis in Excel. All of this data is stored on Microsoft Graph.

SAP is one of the leading ERP (enterprise resource planning) software providers. According to an SAP report, 85 out of 100 of the largest organizations use SAP S/4HANA and therefore have business process data on SAP systems.

It would truly be remarkable if organizations could utilize both these sources of data to create products that could leverage AI to make their organization more efficient.

The above has become increasingly possible with the many AI and app building tools that are available on the Azure AI Studio and the Microsoft Power Platform that can connect to SAP systems seamlessly.

The rest of this blog explains in detail how the chatbot shown in the video above was built using the Microsoft Power Platform, Azure AI Studio, and data from an SAP system. You can refer to this blog for more information on how and why Power Platform and Azure AI Studio can be used together.

The steps listed below demonstrate how easy it is to integrate some of the most advanced LLMs (large language models) with data from your organization (directly from the SAP systems) and create your own AI chatbot/assistant on Microsoft Teams, all in a low-code manner.

Azure Open AI Studio and how to set it up:

Azure Open AI Studio, also called the Azure AI studio, has a notable set of tools that could be utilized to create AI solutions. For this specific example, the Chat Playground has been used to create and test a bot.

To create a bot on the Chat Playground, there are a few prerequisites you need:

An Azure account, an Azure resource group, an Azure resource, and a tenant with the OpenAI service enabled. You can read more about it here.

To get access to the Azure Open AI studio and sign up for it, you can follow the steps mentioned here.

Once you have access to the Azure Open AI studio, you can go onto the Chat Playground and enter instructions for how you want your bot to behave. You can try adding some sample data to the prompt just to check if the bot is performing the right way and to test whether your prompt needs to be changed.

After the bot is performing in a satisfactory manner, you can save the text prompt you have used and add it to your request in the Power Automate flow (which would communicate with Azure AI studio) later.

Here is a screenshot of the Chat Playground and an example of the prompt.

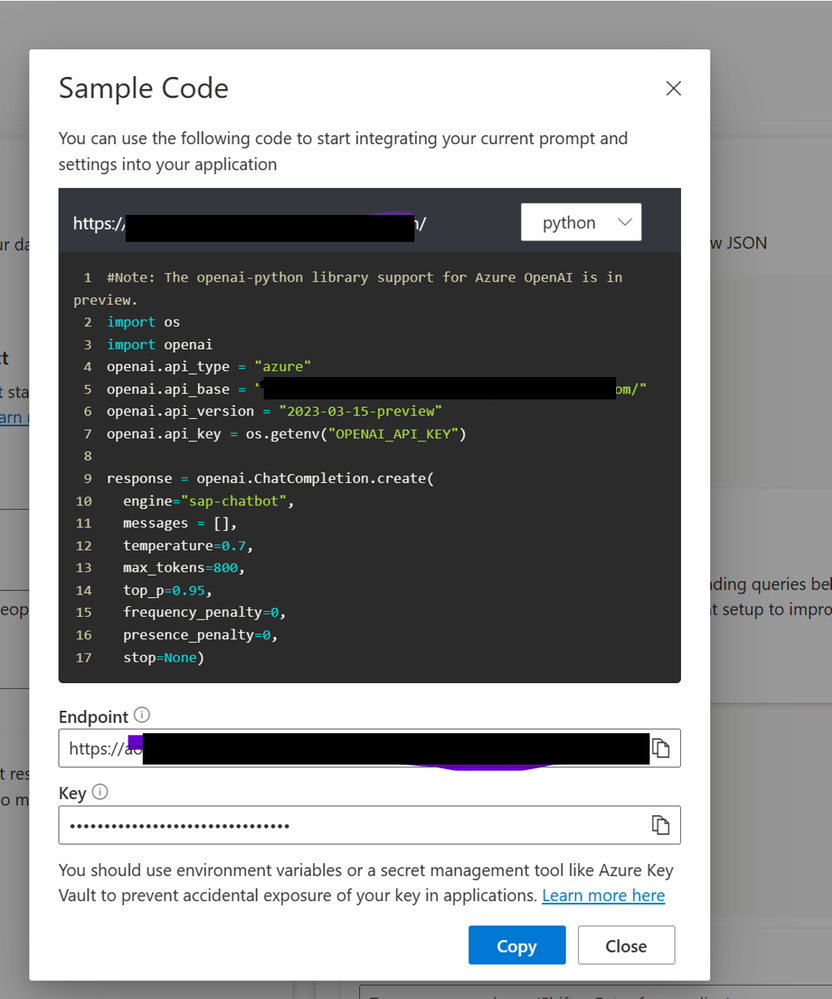

To find your endpoint URL and API key, open the View code tab in the Chat Playground. Keep both values for the HTTP request to Azure OpenAI that you will make later.

There are other optional parameters you can use to tweak your URL. You can read more about them here.

After you have tweaked your prompt and the conversation you are having with the bot is satisfactory, the next step is to go onto Power Platform to build the bot.

How Power Platform could be used to connect your SAP system and Azure Open AI studio:

On the Power Platform, we use two main components: Power Virtual Agents to create a bot deployed on Teams, and Power Automate to create the flow that communicates with Azure AI Studio.

To develop on the Power Platform and deploy the bot on Teams, you need a developer account for Microsoft 365. You can read more about it here.

You will also need a Power Platform Developer Program account, which you can read about here.

Creating a Power Virtual Agent Bot as the medium of conversation:

For this chatbot you will be using Power Automate as well as the Power Virtual Agents components of the Power Platform.

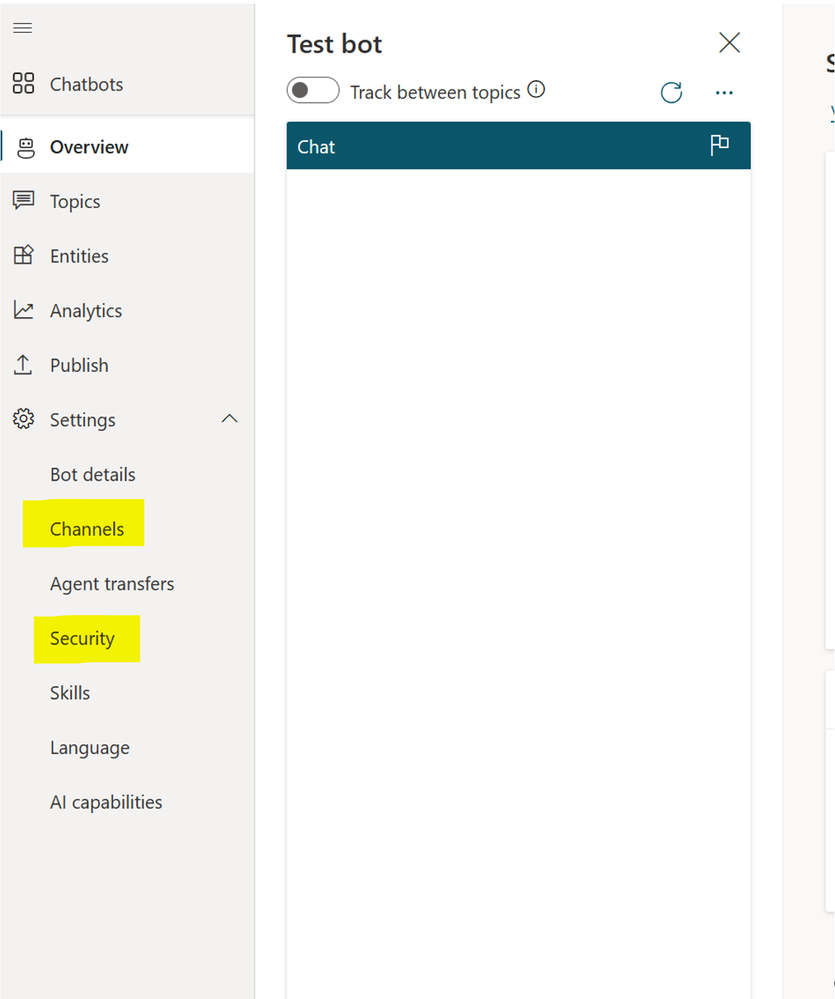

The first step would be to create a Power Virtual Agent bot that utilizes Microsoft Teams as a channel of deployment.

To do that, log into Power Virtual Agents and create a new chatbot.

The next step is to click on Channels for the bot and choose Teams. After that, move on to Security and Authentication and select “Only for Teams”.

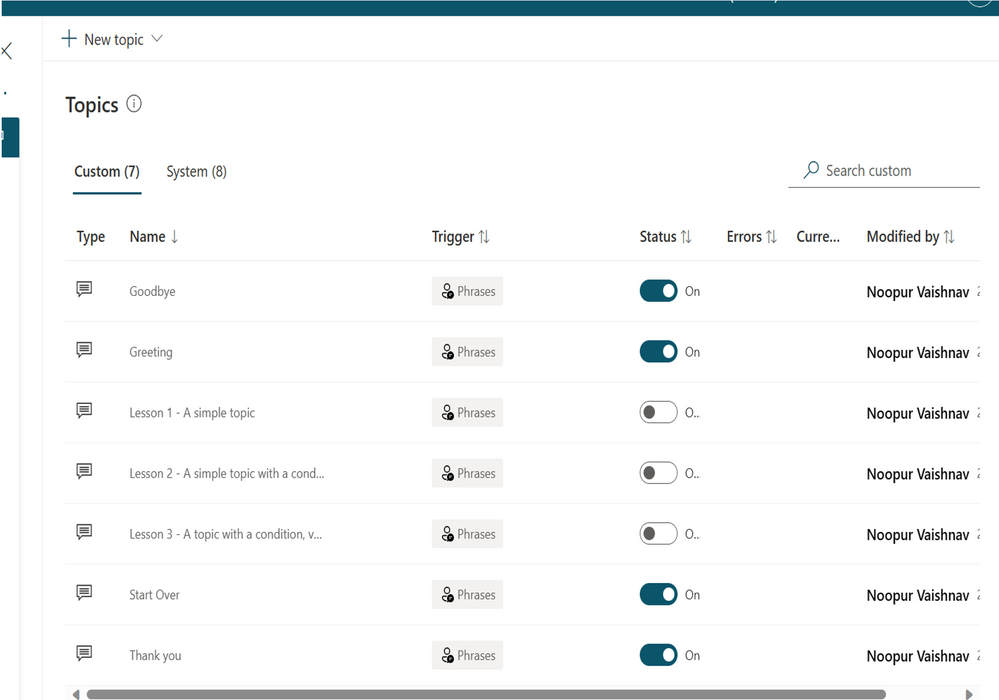

Now you can move to the Topics tab in Power Virtual Agents and disable the three lesson topics, as shown below:

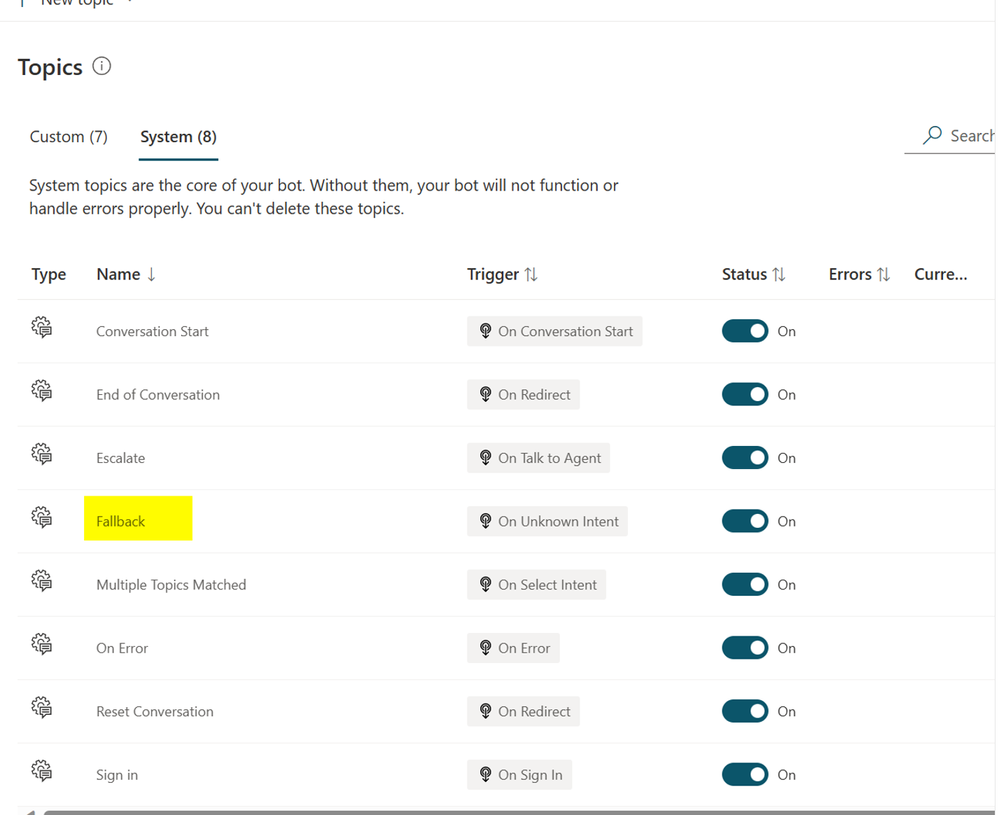

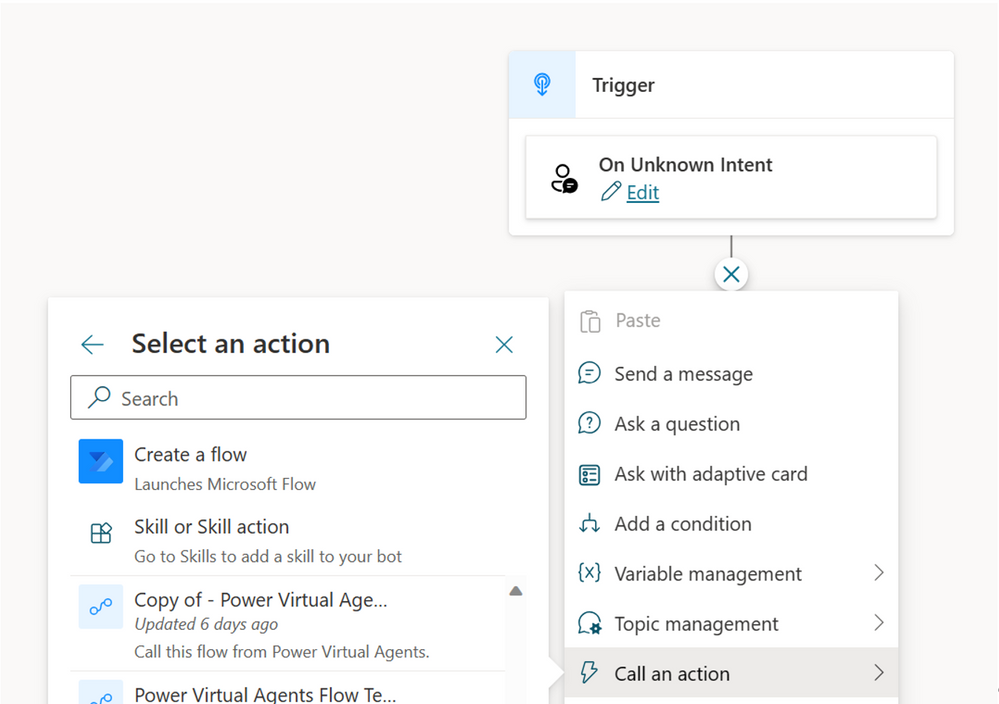

Then navigate to the System tab and select the Fallback topic.

Delete all the default content and call or create a Power Automate flow like this:

From here, select the action “Create a flow” and you will then be navigated to Power Automate. Here is where you will create the Power Automate flow.

Power Automate flow to call Azure OpenAI and create Sales Order in the SAP system:

There are two main calls your Power Automate flow must make.

1) The call to the bot deployed on Azure AI Studio, which sends the prompt, the appended SAP data, and the chat history via an HTTP request.

Note: Azure AI Studio currently processes only one request at a time and does not keep the chat history. Therefore, you must append all the previous messages in the session to the request to give the bot context. This blog post was used as a reference to solve this problem.

2) The call to the SAP system to create a sales order in the case that the sales order is confirmed.

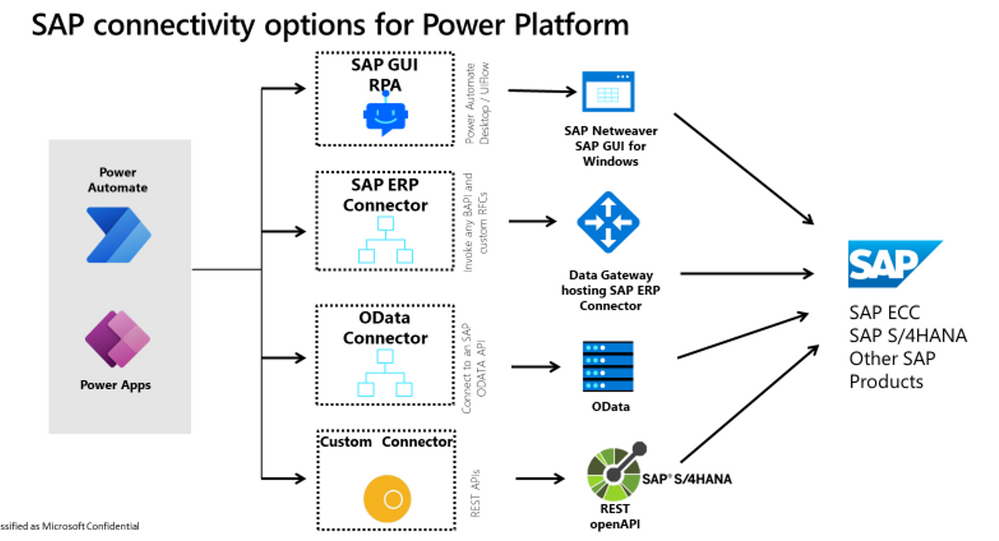

There are several ways to connect to an SAP system and call functions on it (refer to the diagram below). However, RFCs (Remote Function Calls) through BAPIs (Business Application Programming Interfaces) are an easy way to connect from the Power Platform to SAP systems. Therefore, for this use case, we used the SAP ERP option among those shown in the diagram below.

SAP connectivity with Power Platform.

Before we move on to the flow, ensure that you have an SAP system ready along with the Azure AI Studio bot deployed (as explained above). If you do not have an active SAP connection but have an S-user ID, you can follow the resources mentioned below.

To learn more about how to use the SAP system, you can refer to these two videos and follow the steps in them:

Power Platform + SAP - Installing the On-premises Data Gateway

SAP Cloud Platform Portal & Power Apps

Once the steps mentioned in the video have been completed and you have ensured that your ERP connector is connected to an SAP system, you can move onto creating the Power Automate flow.

Power Automate flow explanation:

Note: The use case was created in reference to a blog post, and it has a more in-depth explanation of the steps.

There are two main variables that are used here that are sent from the Power Virtual Agent bot and you can set them as shown below.

- UnknownTriggerPhrase: This is the user query that will trigger the fallback topic in Power Virtual Agents and will run the flow.

- Chathistory: This is the variable that will contain the entire conversation/chat history from the bot.

Step 1: The first step in the flow initializes the system prompt variable. This is the text with the instructions on what role you want the bot to play and how you want it to respond.

The prompt that was used for this demo is:

“You are a chatbot that is a Salesperson's assistant and utilizes only data provided in this prompt answer questions and recommends products to users.

1)You must read the entire conversation so far and answer to the last message with all the context in mind , understand the excel data below and answer questions about it in natural language without adding your own input.

2) When the user expresses intent to create an order, confirms the item, ask them the for quantity only and never create a JSON without that input.

3) When the user types “confirm” a JSON should be generated with the only Item number and quantity. The response only contains a JSON and no other text surrounding the JSON as this is going to be used to call an API.

The Json format should be:

{

"Item_number" : "12323",

"Quantity" : 12

}

You will not use your knowledge for anything other than understanding this data fully and following the above steps and not question the user on inventory levels or anything else.

Moreover, you will always respond with ALL the data in mind and not only some.

Here is the data you should learn about and understand the description of each, (This data copy pasted from an Excel with the column headers and rows), understand ALL the data entries and reply accordingly. : “

Step 2 : Collecting SAP Data to put into your prompt.

The first step is to export your data from your SAP system into an Excel file. You will have to join various tables in the SAP system to get the full set of information about a product/material. At the very least it should include:

Material Number/ Product ID, Product Type, Category, Name, Description, Supplier Name, Price.

You can get these in the following columns in SAP tables (You can search up which tables and columns in the SAP database to use for any field using an AI powered search tool too.)

Here are some of the tables and columns used to get the fields required.

- PRODUCT_ID: Use field MATNR in table MARA

- TYPE_CODE: Use field MTART in table MARA

- CATEGORY: Use field MATKL in table MARA

- NAME: Use field MAKTX in table MAKT

- DESCRIPTION: Use field MAKTX in table MAKT

- PRICE: Use field STPRS in table MBEW

- WIDTH: Use field BREIT in table MARM

- HEIGHT: Use field HOEHE in table MARM

- VENDOR/ SUPPLIER information from EINA
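As a rough illustration of the merge described above, the following Python sketch joins rows exported from a few of these tables on the material number (MATNR). It is a simplified sketch under assumptions: the function name, the sample rows, and the idea that each table export has exactly one row per material are all hypothetical (in a real system, tables such as MAKT and MBEW can hold several rows per material, for example one per language or valuation area).

```python
from typing import Dict, List

# Output column -> (SAP table, SAP field), taken from the list above.
FIELD_MAP = {
    "PRODUCT_ID": ("MARA", "MATNR"),
    "TYPE_CODE": ("MARA", "MTART"),
    "CATEGORY": ("MARA", "MATKL"),
    "NAME": ("MAKT", "MAKTX"),
    "PRICE": ("MBEW", "STPRS"),
}


def merge_material_rows(tables: Dict[str, List[dict]]) -> List[dict]:
    """Join exported table rows on MATNR and rename fields per FIELD_MAP."""
    # Index every table by material number for quick lookup.
    # Assumption: one row per material in each export.
    indexed = {
        name: {row["MATNR"]: row for row in rows}
        for name, rows in tables.items()
    }
    merged = []
    for matnr in indexed.get("MARA", {}):
        record = {}
        for column, (table, field) in FIELD_MAP.items():
            row = indexed.get(table, {}).get(matnr, {})
            record[column] = row.get(field, "")  # empty if the field is missing
        merged.append(record)
    return merged


# Hypothetical sample rows, not real SAP data.
sample_tables = {
    "MARA": [{"MATNR": "12323", "MTART": "FERT", "MATKL": "001"}],
    "MAKT": [{"MATNR": "12323", "MAKTX": "Sample notebook"}],
    "MBEW": [{"MATNR": "12323", "STPRS": 499.0}],
}
merged_rows = merge_material_rows(sample_tables)
```

The remaining fields (dimensions, vendor information) could be added to `FIELD_MAP` in the same way once their exports are available.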

Once you have the data merged and ready, you can copy it into an Excel file on SharePoint or on Azure Blob Storage, and use a Power Automate flow to fetch it and store it in a variable. The variable can then be added to your system prompt. For the sake of this blog, the data from the combined Excel file has simply been copied and pasted into the prompt as text and is sent to Azure AI Studio as shown below.

Note: This approach is only suitable for a small-scale bot. A more scalable way to handle large amounts of data is to store it in the cloud, query it based on the request, and then add only the relevant data to the prompt. You can refer to the end of this blog for more information on how this could be done.
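To make the idea of pasting tabular data into the prompt concrete, here is a minimal Python sketch that turns merged rows into tab-separated text (similar to what you get when copying cells from Excel) and appends it to the instructions. The function names and sample row are hypothetical and only illustrate the approach; in the demo this was done by hand.

```python
from typing import List


def rows_to_text(rows: List[dict]) -> str:
    """Render rows as tab-separated text with a header line."""
    if not rows:
        return ""
    headers = list(rows[0].keys())
    lines = ["\t".join(headers)]
    for row in rows:
        lines.append("\t".join(str(row.get(h, "")) for h in headers))
    return "\n".join(lines)


def build_system_prompt(instructions: str, rows: List[dict]) -> str:
    """Append the tabular data to the end of the instruction text."""
    return instructions.rstrip() + "\n" + rows_to_text(rows)


# Hypothetical sample row, not real SAP data.
sample_rows = [{"PRODUCT_ID": "12323", "NAME": "Sample notebook", "PRICE": 499.0}]
system_prompt = build_system_prompt("Here is the data you should learn about: ", sample_rows)
```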

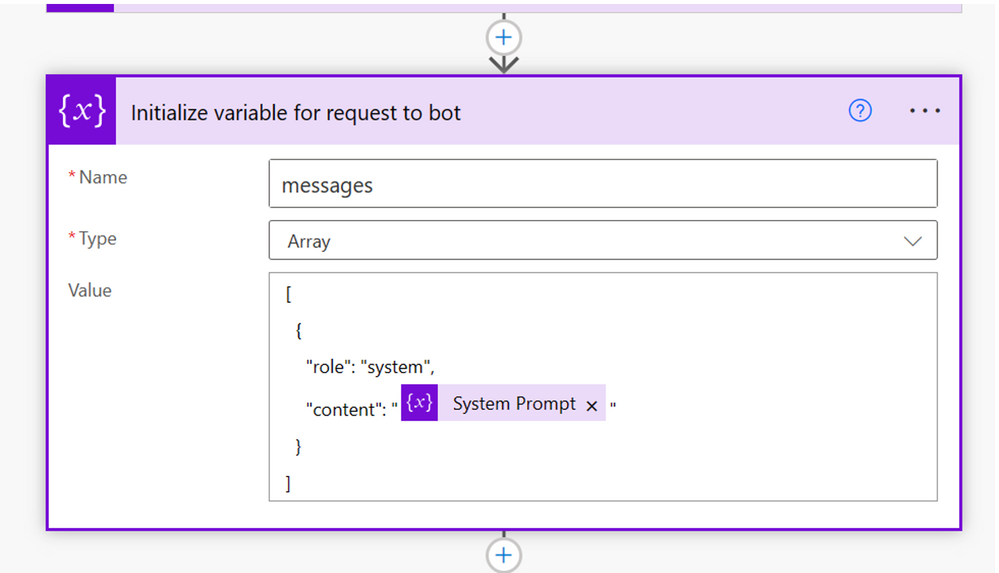

Step 3: As mentioned earlier, the LLM has no knowledge of previously sent messages, so we need to keep sending it an array of all previous messages from the chat session as well. To do that, we create an array variable that has the system prompt as its first message and has the format shown below:

Initial array is:

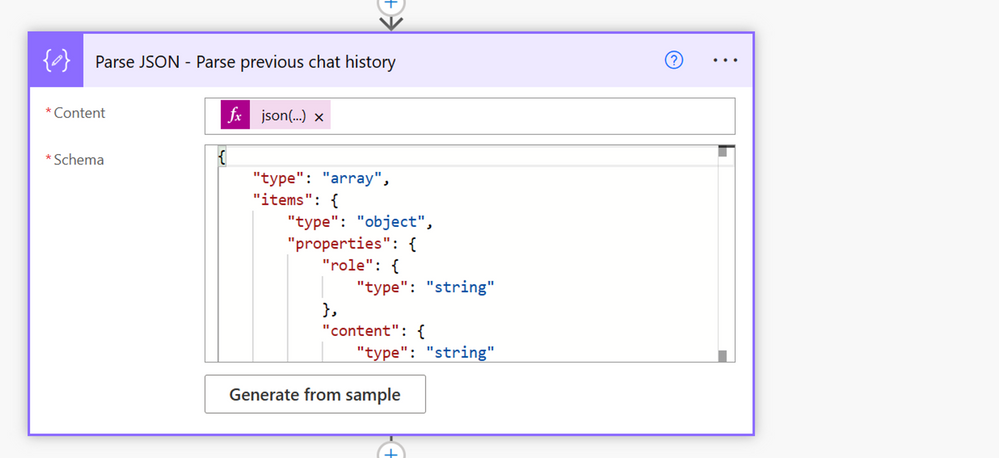

Step 4: Next, we will use the global variable that stores all the previous messages (from the Power Virtual Agent bot) and convert that into a JSON array with the schema below:

The variable that contains the previous chat history will be empty before the first message of a conversation session is sent to the bot. If the variable is not empty, the most recent message is a continuation of a conversation that has already started.

In each of those cases, the array with the prompt and the conversation history is framed differently and has to be populated in a different way.

To populate the array in a different way you could add the following expression that was referred to from this blog:

Step 5: You will then loop over the JSON array and append each item to the array that will be used as the request. As a result, the request array contains the system prompt, the previous chat history (if present), and the most recent user phrase, which together form the prompt sent to Azure OpenAI.
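Steps 3 to 5 can be sketched in Python as follows. This is not the Power Automate expression used in the flow; it only illustrates the logic. It assumes (hypothetically) that the chat history is stored as a string of comma-separated JSON objects with `role` and `content` keys, and the function names are made up for this example.

```python
import json
from typing import List


def parse_history(chat_history: str) -> List[dict]:
    """Turn the stored history string into a list of message objects.

    Assumption: the history is a comma-separated run of JSON objects
    without surrounding brackets; an empty string means a new session.
    """
    text = chat_history.strip().strip(",")
    if not text:
        return []
    return json.loads("[" + text + "]")


def build_messages(system_prompt: str, chat_history: str, latest_user_message: str) -> List[dict]:
    """System prompt first, then earlier turns, then the newest user message."""
    messages = [{"role": "system", "content": system_prompt}]
    messages.extend(parse_history(chat_history))
    messages.append({"role": "user", "content": latest_user_message})
    return messages


first_turn = build_messages("You are a sales assistant.", "", "Which laptops do you have?")
later_turn = build_messages(
    "You are a sales assistant.",
    '{"role": "user", "content": "Hi"},{"role": "assistant", "content": "Hello"}',
    "confirm",
)
```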

Step 6: Finally, we make the call to Azure OpenAI Studio with the “Messages” array embedded in the body. This messages array now contains all previous messages from the chat plus the system prompt with the SAP data.

The URI and the api-key here are the ones you copied and saved from the Chat Playground.
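For readers who want to test the same call outside Power Automate, the following Python sketch builds the request for the Azure OpenAI chat completions endpoint. The resource name, deployment name, key, and `api-version` value used here are placeholders and assumptions; use the exact URL and key shown in the View code tab of your own Chat Playground.

```python
import json
import urllib.request
from typing import List, Tuple


def build_chat_request(endpoint: str, deployment: str, api_key: str,
                       messages: List[dict],
                       api_version: str = "2023-05-15") -> Tuple[str, dict, str]:
    """Return the URL, headers and JSON body for a chat completions call."""
    url = (
        f"{endpoint.rstrip('/')}/openai/deployments/{deployment}"
        f"/chat/completions?api-version={api_version}"
    )
    headers = {"Content-Type": "application/json", "api-key": api_key}
    body = json.dumps({"messages": messages})
    return url, headers, body


def send_chat_request(url: str, headers: dict, body: str) -> dict:
    """Send the request and return the parsed JSON response (needs network access)."""
    request = urllib.request.Request(
        url, data=body.encode("utf-8"), headers=headers, method="POST"
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


# Placeholder values, replace with your own endpoint, deployment and key.
url, headers, body = build_chat_request(
    "https://example.openai.azure.com/",
    "my-gpt4",
    "YOUR-API-KEY",
    [{"role": "system", "content": "You are a sales assistant."}],
)
```

Keeping the request construction separate from the network call makes it easy to inspect the URL and body before anything is sent.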

Step 7: We initialize a variable called content and store in it only the text response from the bot on Azure AI Studio, without any headers or other information about the call.

For this we initialize a variable with the expression:

Step 8: Now that we have a response from Azure Open AI, we need to check if the user expressed intent to confirm a sales order.

To check whether a sales order needs to be created in the SAP system, we add a condition that checks if the response from the bot contains a “{”.

This tells us whether a JSON was returned, since we instructed the bot to return a JSON when the user expresses intent to create a sales order.
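The logic of steps 7 and 8 can be sketched in Python as below. The response shape (`choices[0].message.content`) follows the Azure OpenAI chat completions response format; the function names and the sample response are hypothetical and only stand in for the Power Automate expressions used in the flow.

```python
def extract_content(response: dict) -> str:
    """Keep only the assistant's text from the chat completions response."""
    return response["choices"][0]["message"]["content"]


def wants_sales_order(content: str) -> bool:
    """Mirror the flow's condition: an opening brace signals a JSON order."""
    return "{" in content


# Hypothetical sample response, trimmed to the fields used here.
sample_response = {
    "choices": [
        {"message": {"role": "assistant",
                     "content": '{"Item_number": "12323", "Quantity": 12}'}}
    ]
}
content = extract_content(sample_response)
```

Checking for a single character is a simple heuristic; it would also trigger if a normal answer happened to contain a brace, which is worth keeping in mind when writing the prompt.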

Step 9: Yes (If the sales order needs to be created).

Step 10: The item number and the quantity need to be extracted from the JSON created by the AI model. For this, we do the following two steps. (Refer to the image above.)

1) Creating a “Compose Input” in which you parse the content returned by the AI model and select only the JSON part of it.

The input for this is the content returned by the HTTP request and the expression input is:

This ensures only the JSON is extracted from the Azure Open AI response.

2) Parse the JSON

The input for this action is the output from the previous step, and the sample JSON is the same JSON that was given in the prompt.
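As an illustration of the two sub-steps above, this Python sketch cuts the JSON object out of the model's reply and parses it into the two fields defined in the prompt. It is a sketch of the idea rather than the expression used in the flow, and the function name is hypothetical.

```python
import json


def extract_order(content: str) -> dict:
    """Cut out the JSON object from the reply and parse the order fields."""
    start = content.find("{")
    end = content.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object found in the response")
    order = json.loads(content[start:end + 1])
    # Normalize types so later steps can rely on them.
    return {
        "Item_number": str(order["Item_number"]),
        "Quantity": int(order["Quantity"]),
    }


order = extract_order('Sure! {"Item_number": "12323", "Quantity": 12} Thanks.')
```

Slicing from the first `{` to the last `}` tolerates extra text around the JSON, which can still happen even when the prompt asks for JSON only.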

Step 11:

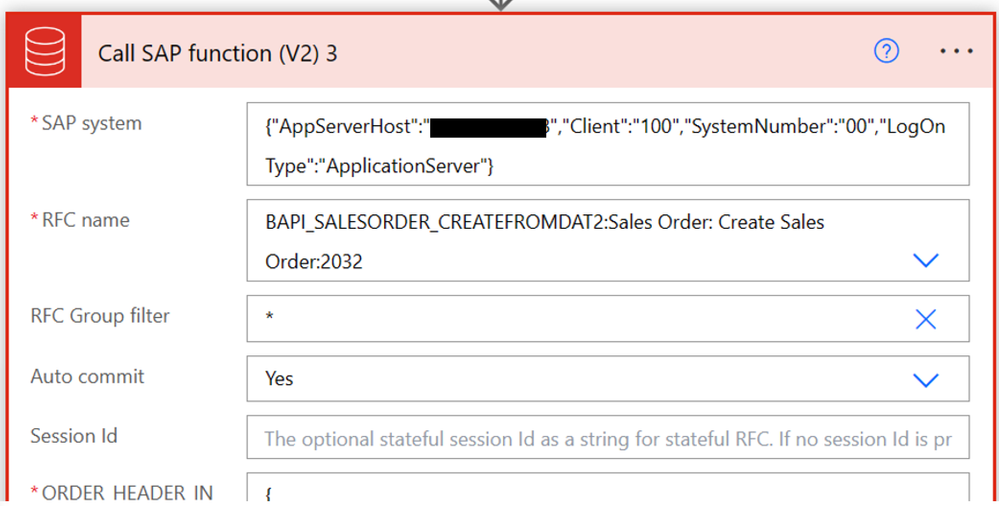

The BAPI that creates a sales order in the SAP system must be populated. To do that, find the Call SAP Function action, add it to the flow, and then search for “BAPI_SALESORDER_CREATEFROMDAT2” as the name of the RFC.

The parameters that need to be populated are:

- Order_HEADER_IN

- Order_HEADER_INX

- Order_PARTNERS

- Order_ITEMS_IN

- Order_ITEMS_INX

- Order_SCHEDULE_IN

- Order_SCHEDULE_INX

You can convert the SAP fields for each of these parameters into JSON as follows. Do this for all the fields you need in the above parameters. You can decide which ones to keep constant and which ones to take as input from the conversation:

Also, select “show advanced options” and turn “Auto commit” on so that the sales order transaction is committed in the SAP system.

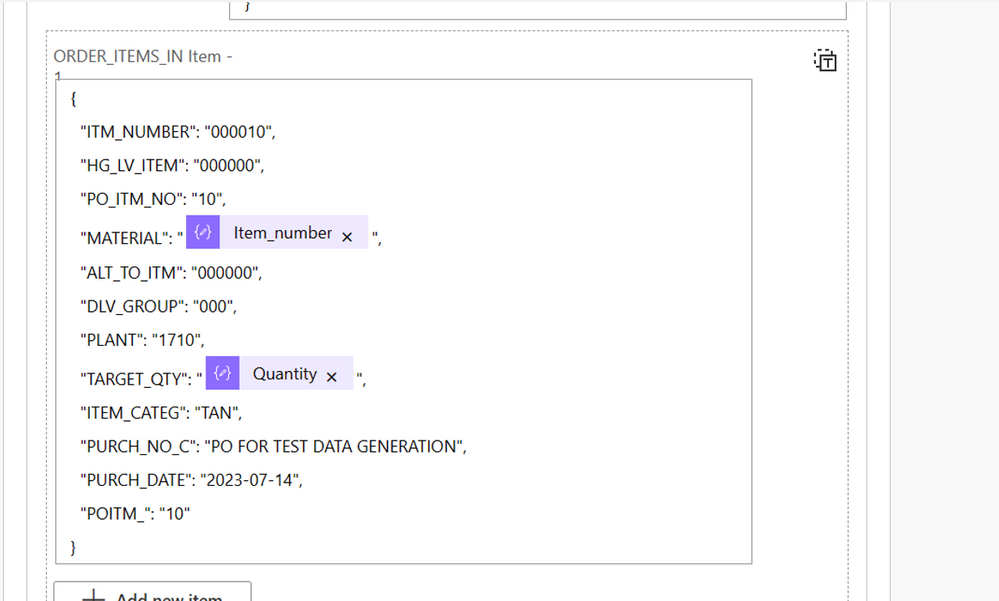

You also have to extract the fields that are not constant from the response to the HTTP request. For example, the Item_number (which is an input from the conversation) from the parsed JSON in the step above can be used to populate the Material Number field in the Order_ITEMS_IN parameter's JSON. This is done by entering the following expression:

You could use this for any field you want to input dynamically.
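To show how constant and dynamic fields can be combined, here is a hedged Python sketch that assembles the parameters listed above. The parameter names follow the list in this post; the field names (DOC_TYPE, SALES_ORG, PARTN_ROLE, MATERIAL, TARGET_QTY, REQ_QTY, and so on) are standard fields of the BAPI structures, but the sample values, the item line number, the "X" update flags, and the overall JSON shape are assumptions and may differ from what your connector and system expect.

```python
from typing import Dict, List


def flags(fields: dict) -> dict:
    """Build an INX-style structure that marks each supplied field with 'X'."""
    return {name: "X" for name in fields}


def build_sales_order_params(item_number: str, quantity: int,
                             header: Dict[str, str],
                             partners: List[Dict[str, str]]) -> dict:
    """Combine constant header/partner data with the item from the chat."""
    item_line = "000010"  # assumption: a single order item
    item = {"ITM_NUMBER": item_line, "MATERIAL": item_number, "TARGET_QTY": quantity}
    schedule = {"ITM_NUMBER": item_line, "REQ_QTY": quantity}
    return {
        "Order_HEADER_IN": dict(header),
        "Order_HEADER_INX": flags(header),
        "Order_PARTNERS": [dict(p) for p in partners],
        "Order_ITEMS_IN": [item],
        "Order_ITEMS_INX": [{"ITM_NUMBER": item_line, "MATERIAL": "X", "TARGET_QTY": "X"}],
        "Order_SCHEDULE_IN": [schedule],
        "Order_SCHEDULE_INX": [{"ITM_NUMBER": item_line, "REQ_QTY": "X"}],
    }


# Hypothetical constants; replace with values valid in your SAP system.
params = build_sales_order_params(
    item_number="12323",
    quantity=12,
    header={"DOC_TYPE": "TA", "SALES_ORG": "1000", "DISTR_CHAN": "10", "DIVISION": "00"},
    partners=[{"PARTN_ROLE": "AG", "PARTN_NUMB": "0000001000"}],
)
```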

Step 12: In the response sent back to the Power Virtual Agents bot, we attach the sales document number. This is done by selecting the sales document number field from the dynamic content.

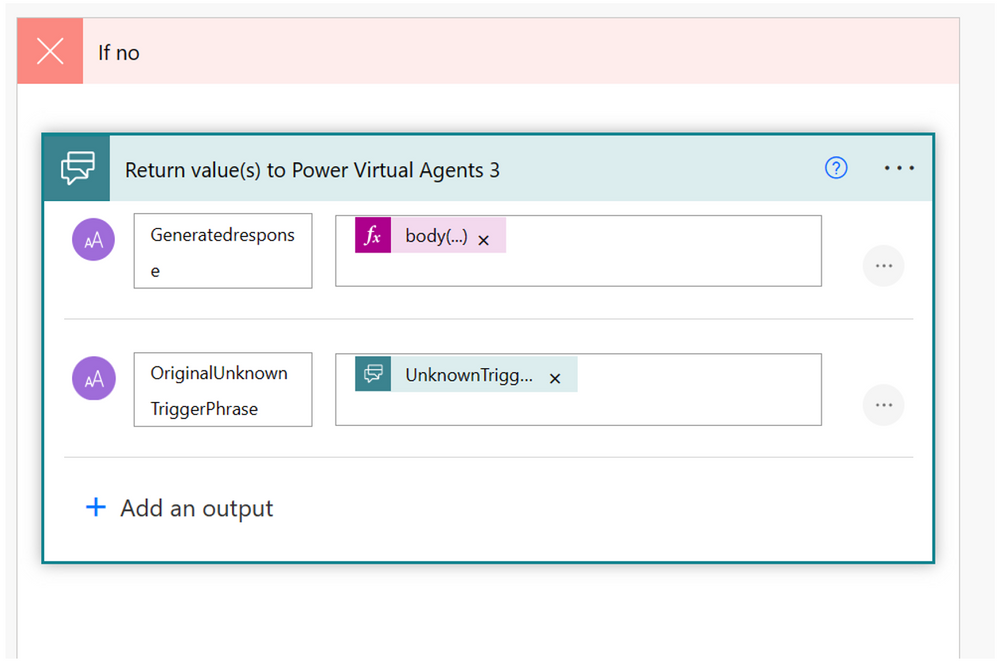

Step 13: No (If a sales order does not need to be created, we send the following response with just the normal message response from the bot on Azure Open AI):

The Generatedresponse would simply be the text response provided by the bot deployed on Azure AI Studio:

Finally, in both cases we receive a response back from the system which will then be sent back to the Power Virtual Agents. The next step would be to navigate back to Power Virtual Agents and complete the flow.

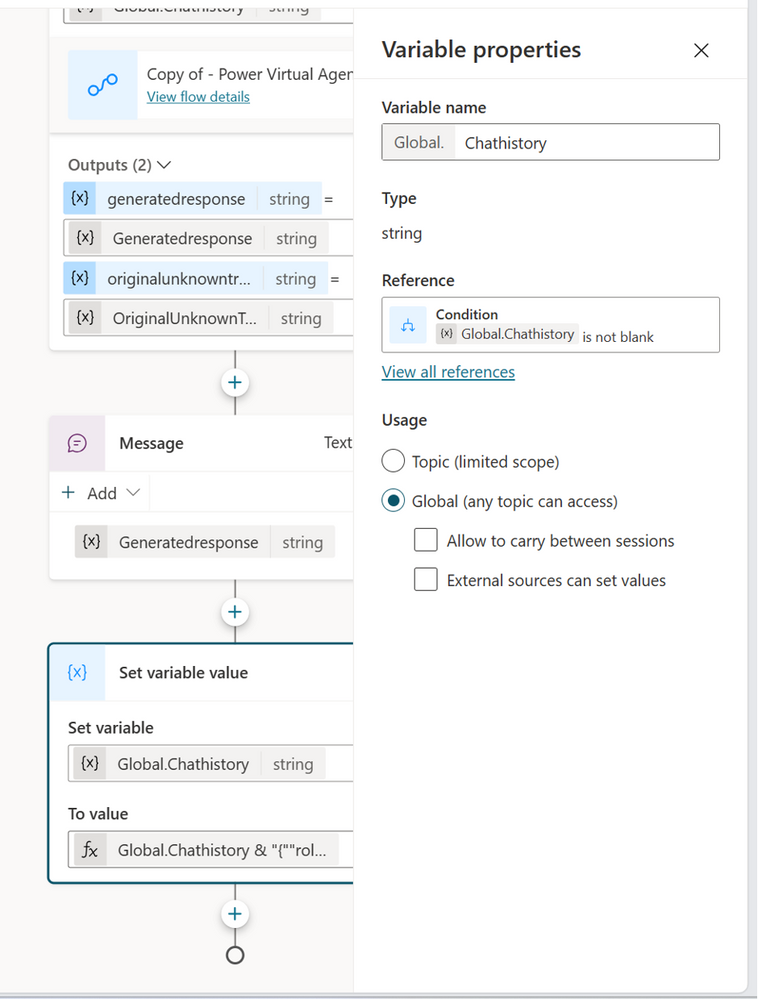

Power Virtual agents flow to send AI response back to the chat interface:

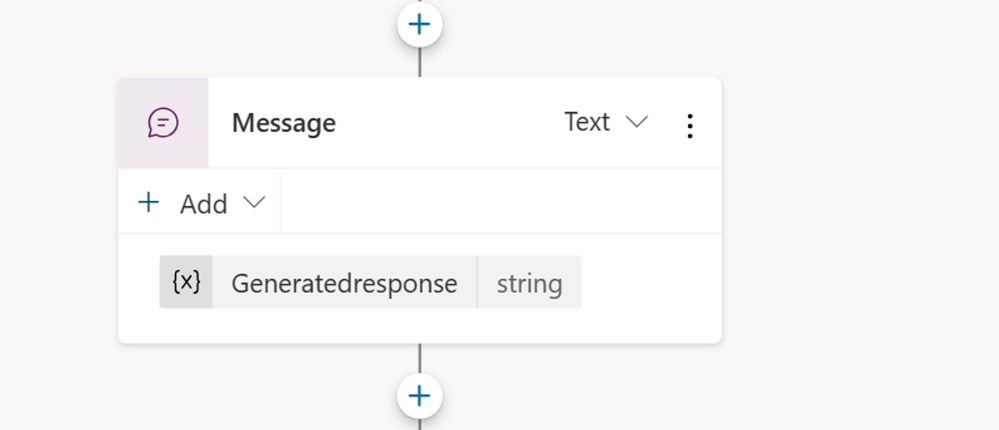

After your Power Automate flow is ready, you can navigate back to your Power Virtual Agents flow and complete the fallback topic there.

Once you navigate back to Power Virtual Agents, you can select the flow you created. Do not map the two input variables for now. (Refer to the image below and this blog for more details.)

Below add a Send Message step that contains the Generatedresponse string.

After it, add a “Set Variable” step and create a new variable: Chathistory. Set its scope to ‘Global’ so that it persists through the entire flow. (This variable stores the entire chat history and therefore needs to be accessible throughout the flow.)

For the value of this variable, use the Power Fx formula that will combine the chat history + the latest user question along with the Azure Open AI response.

The blog that served as a reference for this flow suggests using the Substitute formula to escape double quote characters so that the generated JSON does not break.

Enter the below in the formula field:

To handle the first time a user sends a query (the case in which there is no chat history and Global.Chathistory is empty), there needs to be a check at the beginning of the flow.

In the Condition, set the checking condition to Global.Chathistory is not Blank.

Once you add the Condition, in its All Other Conditions branch (when there is no chat history and the variable has not been initialized yet), add a ‘Set a variable’ step and set the value to a formula that is an empty string: ""
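The chat history handling described above can be sketched in Python as follows. This is not the Power Fx formula from the referenced blog; it only illustrates the same idea of escaping double quotes and appending each turn to a string. The storage format (comma-separated JSON objects) and the function names are assumptions made for this example.

```python
import json


def escape_quotes(text: str) -> str:
    """Escape double quotes, similar in spirit to Substitute in Power Fx."""
    return text.replace('"', '\\"')


def append_turn(chat_history: str, user_text: str, bot_text: str) -> str:
    """Append the latest user question and bot answer to the history string."""
    turn = (
        '{"role": "user", "content": "' + escape_quotes(user_text) + '"},'
        '{"role": "assistant", "content": "' + escape_quotes(bot_text) + '"}'
    )
    # An empty history means this is the first turn of the session.
    return turn if not chat_history else chat_history + "," + turn


history = append_turn("", 'Show me "laptops"', "Here are the laptops.")
history = append_turn(history, "confirm", "Order created.")
parsed_history = json.loads("[" + history + "]")
```

This sketch escapes only double quotes, as the text describes; backslashes or line breaks in a message would need additional handling (in plain Python, `json.dumps` would take care of all of these).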

The last step is to map the Power Automate inputs to the Power Virtual Agent variables.

For UnknownTriggerPhrase, set it to the Activity.Text system variable.

To ensure that no unescaped double quote characters are sent to Power Automate, the UnknownTriggerPhrase input should be wrapped in the Power Fx formula below:

For the Chathistory variable, use Global.Chathistory.

Once the response is received in Power Virtual Agents and the variables are set, publish the bot with these changes. You should then be able to converse with the bot about the materials and related fields that you added to your SAP data, confirm an item, and finally place a sales order.

Caveats to the above solution:

- The Teams chat history is not deleted when the chat is closed. Therefore, every time you want to start over, send the words “Start Over” to the bot to begin a new conversation.

- The complete SAP data set could not be added as there is a token limit.

- For the sake of the solution, as mentioned above, the SAP data was manually extracted from the system and added to an Excel and copy pasted into the chat prompt.

Future scope to expand and scale the solution for real-life scenarios.

In the example above, as a proof of concept, the data was exported from various tables in the SAP system, placed into an Excel file, and then added to the prompt manually. However, this approach does not scale to a large, real-life scenario.

A proposed way to do this in a real-life scenario is to:

- Load SAP data on a regular basis into Azure Data Lake, for example by using the SAP CDC Connector as shown in this blog.

- Use Azure Cognitive Search to search for query related materials based on intent (to avoid having to send all the data to the AI model in the chat prompt). Here are more details on how to do this in this article.
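To illustrate why retrieving only query-related materials helps with the token limit, the following Python sketch filters rows with a naive keyword match before they are added to the prompt. This is a deliberately simple stand-in, not Azure Cognitive Search and not its API; the function name, the scoring rule, and the sample rows are all hypothetical.

```python
from typing import List


def select_relevant_rows(query: str, rows: List[dict], top_k: int = 3) -> List[dict]:
    """Return up to top_k rows that share the most words with the query."""
    # Ignore very short words such as "do" or "we".
    terms = {term for term in query.lower().split() if len(term) > 2}
    scored = []
    for row in rows:
        text = " ".join(str(value) for value in row.values()).lower()
        score = sum(1 for term in terms if term in text)
        if score:
            scored.append((score, row))
    # Highest score first; the sort is stable, so ties keep their original order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [row for _, row in scored[:top_k]]


# Hypothetical sample rows, not real SAP data.
catalog = [
    {"NAME": "Laptop Pro", "CATEGORY": "Computers"},
    {"NAME": "Office chair", "CATEGORY": "Furniture"},
]
relevant = select_relevant_rows("Which laptop do you have", catalog)
```

Only the selected rows would then be appended to the system prompt, so the prompt size depends on the query rather than on the size of the full data set.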

In conclusion, this is just one example of how you can use the wealth of tools available on Azure AI Studio along with your SAP data to integrate generative AI into your business processes. With the tools mentioned above, you can improve other business processes in a similar manner.