This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

- This Article is Authored By Michael Olschimke, co-founder and CEO at Scalefree International GmbH and Co-authored with @bschlottfeldt Bárbara Schlottfeldt Maia BI Consultant from Scalefree

- The Technical Review is done by Ian Clarke and Naveed Hussain – GBBs (Cloud Scale Analytics) for EMEA at Microsoft

Introduction

The previous articles of this blog series discussed the Data Vault 2.0 architecture and methodology. The next articles will focus on another Data Vault 2.0 pillar: the Data Vault 2.0 model. The model has been developed specifically for enterprise data warehousing. It is an extensible model that can deal with structural changes in the data and can be extended over time by additional data (and business logic). Therefore, it fits well into agile methodologies such as Scrum.

The model is also the basis for the automation capabilities of the Data Vault 2.0 approach. Software packages such as Vaultspeed rely on these patterns to generate the model in a data-driven approach. This helps enterprises to manage data flows at scale.

Brief Example

The basic idea of the Data Vault 2.0 model can be best explained by an example: consider two source systems: a customer relationship management (CRM) system and an ecommerce shop. Both systems are not integrated but a business user in the middle is expected to create customer records for new customers who sign up for the service to purchase products utilizing them. When a new customer signs up, the business user creates the account in the CRM system first and subsequently in the ecommerce system. The issue arises from the fact that customers relocate, marry, or get a new credit card. In all these cases, the business user is expected to update the customer records in both systems to keep the data in sync. But sometimes, only one of the systems is updated and soon enough, the customer records are out-of-sync and the same customer has different last names, addresses, and credit card data.

Automated integration might help to some extent but breaks once in a while as well. In any case, enterprise data is often not consistent. But it is expected from the data analysts to answer simple questions such as: “What is the revenue of a CRM customer in the ecommerce system?” To answer this question, data must be integrated from both systems. But that can quickly become a challenge if every descriptive attribute of the customer can change over time, including the first and last name and address as well as the birth date. One would argue that there is no way a customer can change their birthdate. But what about dirty data that is only fixed in one of the systems but not the other? All this data will no longer be in sync if not properly maintained.

There is one solution that is practiced in today’s enterprise organizations: when a new customer signs up, the business user (or automated process) creates the customer record in one of the systems, for example the CRM system. By doing so, all of the descriptive attributes that the customer will bring to the organization are added to the CRM system. In addition to that, the business user will also add another attribute that is not provided by the customer: the customer number. This business key is added as part of the business process and doesn’t describe the customer. It is not “attached to the customer” and is delivered to the enterprise. Instead, the business process adds it to identify the customer record in the CRM system. And when copying the customer record to the ecommerce system, the business key is copied over as well. This business key remains stable, even if the customer relocates, marries, or gets a new credit card. And therefore, the business key can be used to integrate both datasets.

Besides business keys (that identify business objects, such as customers, products, and the like) and descriptive data there is another fundamental component in all data: relationships between business keys: they relate business keys, for example the products purchased by a customer in the ecommerce system. These three fundamental components exist in all data. For example, your personal data captured by the social media network of your choice: your email address or username identifies your user record. Descriptive data provides the first and last name and other descriptive data about the user. There are relationships between this username and groups joined by the user (the groups are also identified by some group name or ID). Descriptive data might not only describe the username or group identifier but also the relationship between the username and the group itself: for example when the user joined or left the group.

Introducing Data Vault 2.0 Entities

The Data Vault 2.0 model represents these fundamental components: hubs capture a distinct list of all business keys used to identify business objects:

If the enterprise organization stores customer records in different systems and all of them use the same business keys, the hub is defined as a distinct list of all these business keys. Duplicate business keys will not be captured. If the enterprise organization is using different business keys to identify customer records, all the business keys used will be captured by the hub, not just the leading business key or the one desired by the business.

The Data Vault 2.0 link captures relationships between business keys. This is again defined as a distinct list of relationships. If multiple systems store the relationships between employees and corporate cars, all of these relationships will be loaded into the same link, without duplicates records.

Descriptive data is captured by satellites. They might describe either a hub or link, but only one such parent at a time (no satellite describes both a hub and link at the same time or two hubs…). Satellites provide the data that describes the business object that is identified by the business key in the hub (who is behind the customer number 42? First name: Tyler, last name: Durden, occupation: soap salesman). They also describe the relationships, for example the day of marriage between two customers. As a side-node, satellites are often split, leading to the separation of attributes by type of data or rate of change. However, the discussion is out of scope of this article and we refer to the Scalefree Blog for details.

Standard Attributes

The Data Vault 2.0 model utilizes standard attributes in the model:

- Hash keys and sequences are options to identify the records in the model. For example, every business key in the hub is identified by a hash key which is derived from the business key and improves the overall join performance of the model. Theoretically, a sequence value could also be used, but is out of fashion due to dependencies in the orchestration of the loading procedures of the data analytics platform. Another alternative is to use the business keys itself for identification purposes, but to this day, hash keys are often the better option.

- Load date timestamps identify the incoming batch of data and indicate when a business key, a relationship between business keys or descriptive data has been captured by the Data Vault 2.0 model.

- Load end date timestamps define when a descriptive delta in a satellite has been replaced by the next delta. Nowadays, the load end date timestamp is virtualized (often using a SQL window function) and is no longer materialized.

- The record source attribute indicates where the record has been loaded from but is only used for debugging purposes. It should not be used for data lineage, business key extension, or anywhere in downstream business user queries.

- Hash diffs are used to simplify the delta check when loading satellites and therefore improve the loading performance, especially when satellites are large (data volume and number of attributes).

There are additional attributes, such as the last seen date timestamp and the snapshot date timestamp which will be skipped within this article to simplify the discussion.

The standard attributes are used throughout the model to establish the pattern of the Data Vault 2.0 model: all hubs are modeled similarly, the same is true for links and satellites. This way, the automation capabilities of the model are promoted.

Hubs in Detail

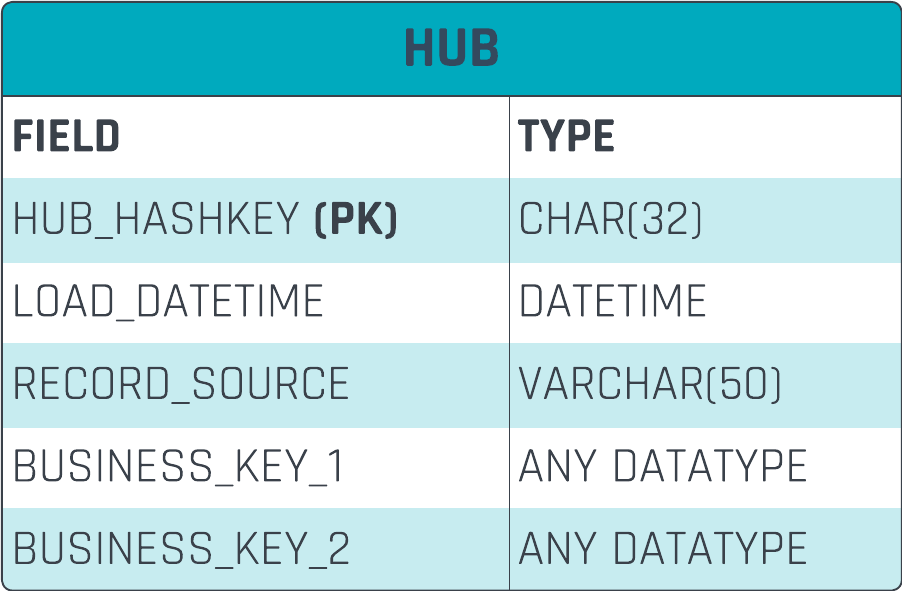

The following entity structure is used to capture business keys in a hub:

The hash key, which is derived from the business key, is used as the primary key of the entity to identify business keys in the hub. The load date identifies when the business key was loaded to the data analytics platform for the very first time. The record source defines where it was loaded from and the store_id is the business key that identifies the business object (the store).

The following table shows a data example:

Both the business keys and the hash keys are a distinct list: the hub was defined as a distinct list of business keys and, since hash keys are derived from business keys, the same applies to hash keys in the entity. In this case, the record source is rather short and only indicates the type of source system (MDM, CRM). In many cases, the record source is more detailed, for example providing the key of the file in the data lake where the record originates from.

The following DDL statement is used to create a hub in Azure Fabric:

CREATE TABLE [DV].[STORE_HUB] (

store_hashkey CHAR(32) NOT NULL,

load_datetime DATETIME2(3) NOT NULL,

record_source CHAR(32) NOT NULL,

store_id INT NOT NULL

);

GO;

ALTER TABLE [DV].STORE_HUB ADD CONSTRAINT store_hk_pk PRIMARY KEY NONCLUSTERED (store_hashkey) NOT ENFORCED;

The first statement creates the table with all columns. The second statement adds the primary key constraint to the entity. Note that the primary key is not enforced in Fabric and therefore serves documentation purposes for the user and SQL optimizer.

Links in Detail

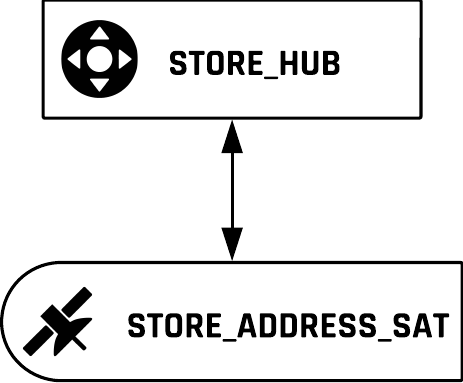

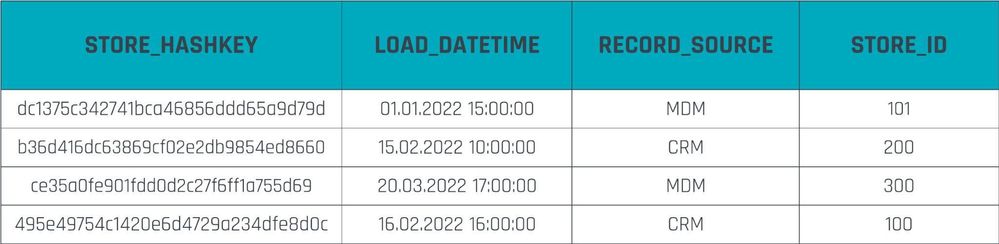

Links are designed in the manner depicted by the next diagram:

In the above table, the hash key is again used to identify the records in this entity, just like with the previous hub example. In the link, the hash key identifies relationships. While the relationship is between business keys, it is actually implemented as a relationship between hash keys: in the previous example, the business keys in the hub are identified by hash keys in the primary key. Therefore, the link refers to the business keys via their hash keys.

Other than that, the link’s entity structure includes the load date timestamp and the record source, just like the hub.

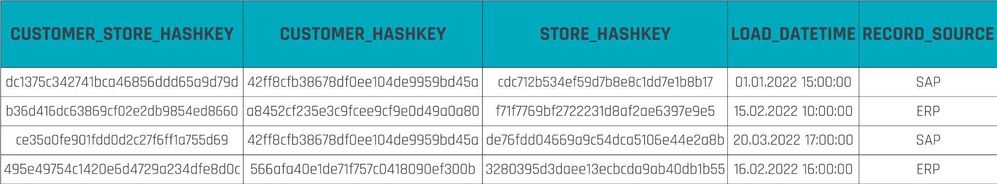

The next table shows example data for the link:

It should be noted, that the hash key of the primary key is not derived by calculating a hash value on the hash keys from the hub references: “never hash a hash”. Not because it is insecure (the hash key or hash diff values are not used to secure the data analytics platform in any way) but because it requires more effort. Instead of calculating the hash keys of the referenced business keys, the business keys itself are just concatenated and a hash key is calculated. This process of calculating hash keys and hash diffs is presented in a subsequent article when we will discuss the implementation of the loading procedures for the Data Vault 2.0 model on Fabric.

The following statement is used to create a new link entity in Fabric:

CREATE TABLE [DV].[CUSTOMER_STORE_LINK] (

customer_store_hashkey CHAR(32) NOT NULL,

customer_hashkey CHAR(32) NOT NULL,

store_hashkey CHAR(32) NOT NULL,

load_datetime DATETIME2(3) NOT NULL,

record_source VARCHAR(50) NOT NULL

);

GO;

ALTER TABLE [DV].CUSTOMER_STORE_LINK ADD CONSTRAINT customer_store_hk_pk PRIMARY KEY NONCLUSTERED (customer_store_hashkey) NOT ENFORCED;

Similar to the DDL statements for hubs, the first table creates the table itself, while the second statement creates the non-enforced primary and foreign keys. Again, while both are not enforced, they primarily serve documentation purposes.

Satellites in Detail

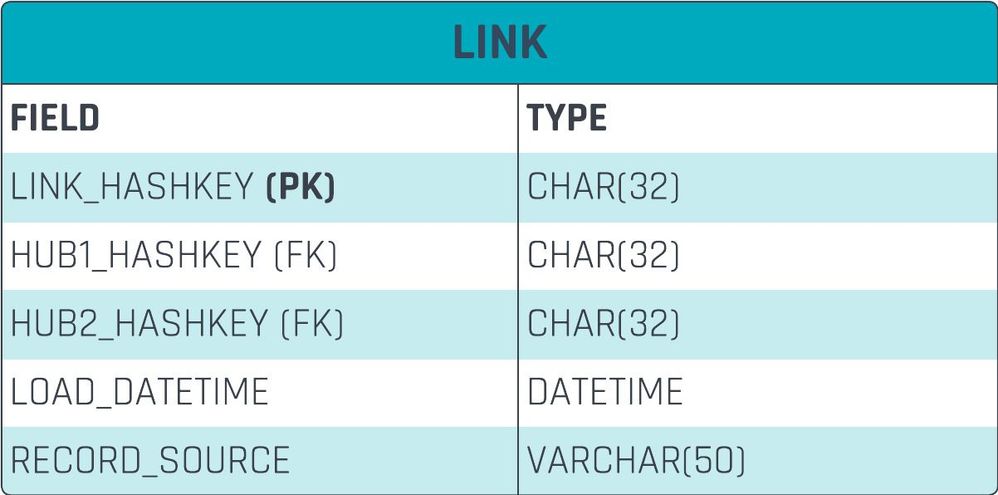

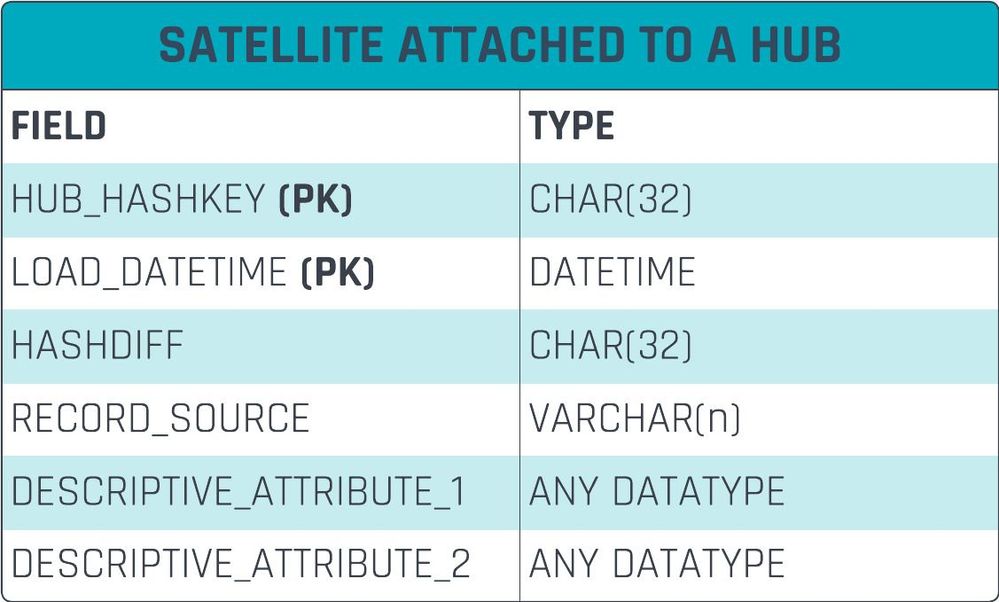

The third standard entity of the Data Vault 2.0 model is the satellite. The following table shows the structure of satellites in detail:

In satellites, the primary key doesn’t only consist of the hash key but also the load date timestamp. The reason for that is that the satellite’s purpose is to capture descriptive data over time for the same parent (business key in a hub or relationship in a link). But the same parent has always the same hash key and therefore, multiple descriptive changes over time would lead to a duplicate primary key. Therefore, the extension of the satellite’s primary key by the load date timestamp allows to track changes over time for the same parent’s hash key.

The hash diff is used in satellites to improve the performance of delta detection when loading the satellite. Not every data row from the source is captured by the satellite, only changes. These delta records, or deltas for short, are created for every single change in the source dataset. This not only reduces the amount of storage required to keep track of the history but also the performance: if less data is stored to disk, less data is required to be loaded from disk for queries.

To identify the delta in the loading process of the satellite, a column-by-column comparison could be used or, alternatively, a hash value on both sides of the loading process (the staging area or data lake and the target satellite) could be used. If both hash values are the same, there is no change in the staging area. If there is a change in the hash value, there must be a change somewhere in the staging area. The hash value is called “hash diff” and is used to identify the difference between staging area and Raw Data Vault layer.

For example, if the staging area or data lake contains the descriptive data “Michael”, “Olschimke”, and “Scalefree” for first name, last name, and company name the hash key is calculated roughly using the following pseudo code:

MD5(CONCAT(“Michael”, “|”, “Olschimke”, “|”, “Scalefree”));

If the data in the satellite is different, e.g. Bárbara Schlottfeldt Maia at Scalefree, the following pseudo-code would calculate the hash value:

MD5(CONCAT(“Bárbara”, “|”, “Schlottfeldt Maia”, “|”, “Scalefree”));

Since the inputs to the hash function are different, a different hash value would result and be used as a hash diff value. Based on the different hash diff values, a change is detected. If there were no changes in the staging area, both inputs on both sides would be the same, hence no change in hash value.

The above pseudo code is simplified: he actual calculation of both the hash key and the hash diff values are discussed in one of the upcoming articles in this blog series.

The record source defines the source of the record. Again, it should not be used for business queries, data lineage (use metadata instead), or any other purpose other than debugging.

All remaining attributes are descriptive attributes, such as the first name, last name, and birthdate of customers, the begin and end dates of the employment (a relationship between the employee and the employer), etc. And yes, business timelines, including valid from and valid to dates are captured as descriptive data. Do not use them in the primary key of a satellite (or a so-called multi-active satellite). We will not cover multi-temporal solutions in this blog series, but it is covered to some extent in our book “Building a Scalable Data Warehouse with Data Vault 2.0” (check out the “Temporal PIT”) and we cover it in our training sessions.

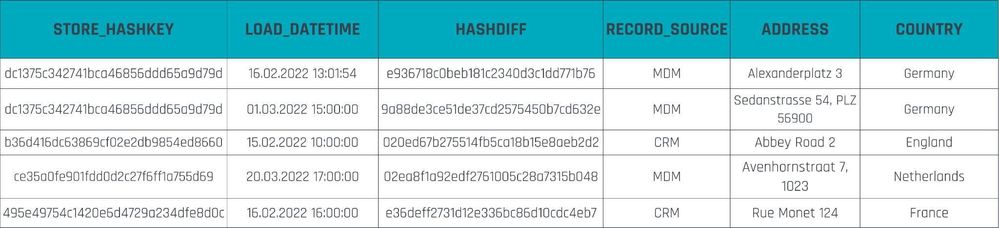

The next table shows a data example for a satellite:

The first two rows show two separate descriptions of the same parent record. Both share the same hash key because they describe the same parent. However, they have been captured over time, therefore the load date timestamp is different, just like the hash diff value. The actual change is reflected by the different descriptive data attributes.

A satellite table can be created using the following T-SQL code in Fabric:

CREATE TABLE [DV].[STORE_ADDRESS_SAT] (

store_hashkey CHAR(32) NOT NULL,

load_datetime DATETIME2(3) NOT NULL,

hashdiff CHAR(32) NOT NULL,

record_source VARCHAR(200) NOT NULL,

address_description VARCHAR(300),

country VARCHAR(50)

);

GO;

ALTER TABLE [DV].STORE_ADDRESS_SAT ADD CONSTRAINT store_address_sat_hk_ldts_pk PRIMARY KEY NONCLUSTERED (store_hashkey, load_datetime) NOT ENFORCED;

GO;

ALTER TABLE [DV].STORE_ADDRESS_SAT ADD CONSTRAINT FK_STORE_ADDRESS_SAT FOREIGN KEY (STORE_HASHKEY) REFERENCES STORE_HUB (STORE_HASHKEY) NOT ENFORCED;

The first DDL statement creates the table, while the second statement adds the non-enforced primary key and foreign key to the entity for documentation purposes.

Hash Keys vs. Sequences vs. Business Keys

It was already mentioned that there are three options available to identify records in a Data Vault 2.0 model: hash keys, which are the standard option; sequence keys, which are considered outdated; and business keys, which might be an option in some scenarios.

Sequences are typically not used because they have several disadvantages. Namely, they introduce dependencies in the loading process which become a problem with high volume and real-time data. Also, building a distributed data analytics platform becomes a challenge as each platform will create independent sequences and joining across multiple platforms using these sequences becomes a challenge if not impossible. Therefore, sequences are only a theoretical option, but in practice are not used by new projects currently.

The real competition is between hash keys and business keys. Here the answer is a bit more complicated. In many of today’s database systems, the hash key will be more performant, as it guarantees a fixed length join condition, compared to a business key, which can quickly become a multi-part business key with multiple columns and variable length. This will not only increase the join conditions, but also the indexes on a traditional database. Additionally, the variable length might require joining shadow or secondary pages during joins.

But on more modern database systems, such as Azure Synapse and Fabric (and many other database systems based on massively parallel processing or MPP principles for short), columns used in foreign key constraints and primary keys are often hashed transparently. This in turn makes the joins again fixed-length and on a single column. And therefore, business keys become an option for modern database systems.

Therefore, the winner in the comparison of hash keys and business keys is…it depends. It depends on if the data analytics platform to be built should include traditional database systems in the tool stack. Even the use of data lakes (where the above query pattern is not supported) for staging purposes and integration of semi-structured or unstructured data would turn the favor to the use of hash keys. On the other hand, it is expected that more and more systems will allow the use of business keys in the long-term future. Therefore the winner today is the hash key but in the future, it will be the business key.

Therefore, in today’s typical tool stacks, especially with the data lake in the hybrid architecture, it is often still recommended to use hash keys instead of business keys for performance reasons. It is also not advised to create mixed environments, for example where a SQL Server environment is using hash keys and a Fabric data warehouse is using business keys. This would complicate query patterns where data or information from different environments should be joined. In parts of the query, the join between entities would be performed on the hash keys, in other parts the joins would be based on business keys. Too complicated to automate, to document, to explain to power users. Instead it is advised to stick to one approach and that is often the hash key…today.

Data-Driven Models vs. Logical Models vs Source Vaults

Another decision to be made by the data modeler is to use one of the approaches to Data Vault 2.0 modeling.

Some users in the industry decide to use the logical approach where Data Vault is used to model a business. The advantage is that the Data Vault model reflects how the business should be: there are customers, products (both modeled as hubs); customers purchase products (modeled as link) and descriptive data is captured by satellites. The advantage is that the data modeler can optimize the model for the business use case which will simply query the information later.

However, the big disadvantage of the logical model is that it is limited for one business use case. The advantage is also the disadvantage because it assumes that there is a single version of the truth in data warehousing. But what about the other users in different departments, tax regions, or across the desk? It might be desirable to come up with a single truth in an enterprise organization but it is often unrealistic.

The other issue is that the logical model is used to describe the business expectation of how the business should work. However, when loading the data from the source systems, the data engineers are faced with a gap: between the expectation of the business user regarding how the business should be and what the source systems are actually tracking and providing.

Therefore a few projects decide to use the opposite approach: instead of using Data Vault entities to model the business, they model the raw data. In this case, the primary key in the source model is considered as the business key in the hub; foreign keys in the source model are turned into links and everything else describes either the primary key in the hub or the foreign key in the link. If a source system provides metadata, the Source Vault could be derived automatically without any data modeler required. But that is where these problems start as some data sources don’t provide any metadata at all. While solvable, the bigger issue is that the primary keys in a well-modeled source system are not business keys but surrogate keys. These surrogate keys are defined by the system and have no meaning outside of the data source (no other source system should use the same identifiers to identify the same business object). Therefore, the Source Vault has a problem with integrating the data. It is possible but requires additional entities: Same-As-Link (SAL) entities are required throughout the model and will degrade query performance.

Therefore, it is recommended to use the middle-ground between both (extreme) approaches: the data-driven modeling approach models the source data as it is being used by the business - and that is a reference to the business key. In the initial example, the business key was used to integrate both source systems (the CRM and ecommerce store). Therefore, the shared business key is analyzed and used in the Data Vault 2.0 model to integrate the data and simplify queries downstream. This is the approach the authors of this article strongly recommend, especially with regard to project success and automation capabilities.

Conclusion

This concludes our discussion on the basic entity types in Data Vault, the reasoning behind the model, and how to model the entities in Fabric. But to be honest with you, Data Vault is a bit more complex. While 80 % of the entities in your model will be simple hubs, links, and satellites as presented in this article, there are advanced entity types that deal with specific characteristics in the data. For example, there is a non-historized link that deals with event data, transactions, and messages; or a multi-active satellite that is used to capture multiple descriptions for the same parent in the same load (e.g. multiple phone numbers per contact). On the other hand, the presented three basic entity types are the base patterns for all those advanced entity types: they are derived from the entities presented in this article. In the next upcoming article, we will discuss the aforementioned non-historized link and multi-active satellite as examples for such advanced entity types.

About the Authors

Michael Olschimke is co-founder and CEO at Scalefree International GmbH, a Big-Data consulting firm in Europe, empowering clients across all industries to take advantage of Data Vault 2.0 and similar Big Data solutions. Michael has trained thousands of data warehousing individuals from the industry, taught classes in academia and publishes on these topics on a regular basis.

Bárbara Schlottfeldt Maia is a BI Consultant for Scalefree. She has been working in the Business Intelligence field with numerous clients and has knowledge on ETL/ELT development, Enterprise Data Warehousing and Data Vault 2.0 using different technologies and databases.