This post has been republished via RSS; it originally appeared at: Azure Data Explorer articles.

Introduction

With an ever-expanding ocean of data, more and more organizations need to perform advanced and fast analytics over their business data, logs, and telemetry while seeking to reduce costs. Many of them are shifting towards Azure Data Explorer (ADX) and taking advantage of the significant benefits it offers to analyze billions of records quickly and cost-effectively.

Often, though, they already use other tools. A common scenario is an organization that already runs Elasticsearch, Logstash, and Kibana (the ELK Stack). Migrating between big data platforms sounds like a long and complicated process, but that is not always true. Switching from ELK to Azure Data Explorer is an opportunity to significantly boost performance, reduce costs, and improve the quality of insights through advanced query capabilities, all without a long and complex migration, thanks to the tools described below.

This blog post covers the following topics:

- Why organizations are moving to Azure Data Explorer

- How you can use Azure Data Explorer with Kibana

- What additional tools for data exploration, visualizations, and dashboards are available

- How you can send data to Azure Data Explorer through Logstash (or other tools)

- How to use Logstash to migrate historical data from Elasticsearch to Azure Data Explorer

- Appendix: Step by step example – using Logstash to migrate historical data from Elasticsearch to Azure Data Explorer

1. Why organizations are moving to Azure Data Explorer

Azure Data Explorer is a highly scalable and fully managed data analytics service on the Microsoft Azure Cloud. ADX enables real-time analysis of large volumes of heterogeneous data in seconds and allows rapid iterations of data exploration to discover relevant insights. In short, the advantages of ADX can be summed up using the three Ps: Power, Performance, Price.

Power

Users state that they find it easier to get more value and new insights from their data, at unprecedented speed and scale, using KQL. Their business troubleshooting became much faster, too. They are more engaged and understand the data better, since they can efficiently explore the data, run ad-hoc text parsing, create run-time calculated columns and aggregations, use joins, and draw on plenty of other capabilities.

These capabilities are natively supported without the need to modify the data. You don’t have to pre-organize the data, pre-define scripted fields, or de-normalize the data. There is no need to manage a hierarchy of objects such as indices, types, and IDs, as in other services.

Azure Data Explorer’s machine-learning capabilities can identify patterns that are not obvious and detect differences in data sets. With capabilities like time series analysis, anomaly detection, and forecasting, you can uncover hidden insights and easily point out issues or unusual relationships you may not even be aware of. You can also run inline Python and R as part of the queries.

Also, Azure Data Explorer supports many communication APIs and client libraries, all of which make programmatic access easy.

Performance

Price

You can configure and estimate the costs with our cost estimator.

2. How you can use Azure Data Explorer with Kibana

As announced in a separate blog post, we developed K2Bridge (Kibana-Kusto Bridge), an open-source project that lets you connect Kibana’s familiar Discover tab to Azure Data Explorer. Starting with Kibana 6.8, you can store your data in Azure Data Explorer on the back end and use K2Bridge to connect to Kibana. This way, your end users can keep using Kibana’s Discover tab as their data exploration tool.

3. What additional tools for data exploration, visualizations, and dashboards are available

Azure Data Explorer offers various other exploration and visualization capabilities that take advantage of the rich, built-in analysis options of KQL, including:

- Azure Data Explorer Web UI/Desktop application - to run queries, analyze and explore the data using powerful KQL queries.

- The KQL render operator offers various out-of-the-box visualizations such as tables, pie charts, anomaly charts, and bar charts to depict query results. Query visualizations are helpful in anomaly detection, forecasting, machine-learning scenarios, and more.

As described in the first chapter, you can efficiently run ad-hoc text parsing, create calculated columns, use joins and plenty of other capabilities, without any modifications or pre-organizations of the data.

- Azure Data Explorer dashboards - a web UI that enables you to run queries, build dashboards, and share them across your organization.

- Integrations with Azure Monitor Workbooks - a flexible canvas for the creation of rich visual reports within the Azure portal.

- Integrations with other dashboard services like Power BI and Grafana.

4. How you can send data to Azure Data Explorer through Logstash (or other tools)

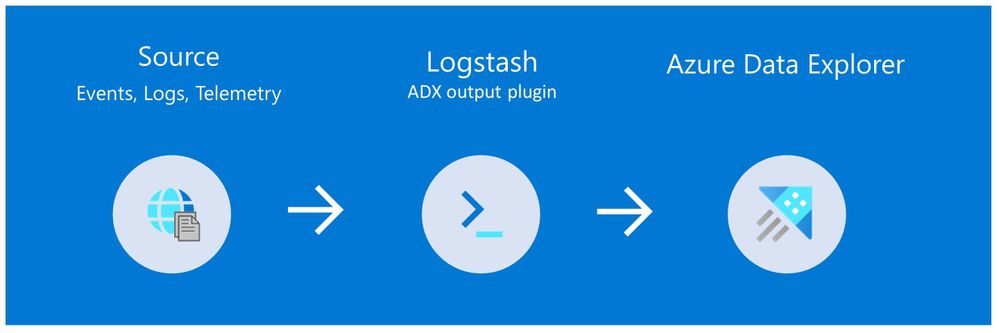

Are you already using Logstash as the data pipeline? If so, redirecting the data to ADX is easy! You can use the open-source Logstash Output Plugin for Azure Data Explorer (a detailed example is described in the next chapter), and keep using the Logstash input plugin according to your specific source of the ongoing event stream, as you use it today.

There are many other ways to ingest data into Azure Data Explorer, including:

- Ingestion using managed pipelines – using Azure Event Grid, Azure Data Factory (ADF), IoT Hub and Event Hub (Event Hub can receive data from several publishers, including Logstash and Filebeat, through Kafka).

- Ingestion using connectors and plugins - Logstash plugin, Kafka connector, Power Automate (Flow), Apache Spark connector

- Programmatic ingestion using SDKs

- Tools – LightIngest or One-click ingestion (a detailed example is described in the next chapter)

- KQL ingest control commands

For more information, please refer to the data ingestion overview.

5. How to use Logstash to migrate historical data from Elasticsearch to Azure Data Explorer

Choose the data you care about

When you decide to migrate historical data, it is a great opportunity to validate your data and needs. There is a good chance you can remove old, irrelevant, or unwanted data, and only move the data you care about. By migrating your freshest and latest data only, you can reduce costs and improve querying performance.

Usually, when organizations migrate from Elasticsearch to Azure Data Explorer, they do not migrate historical data at all. The approach is a “side-by-side” migration: they “fork” their current data pipeline and ingest the ongoing live data into Azure Data Explorer (by using Logstash/Kafka/Event Hub connectors, for example), and after a while they deactivate their Elasticsearch cluster. Even so, this post shows how you can migrate your historical data using Logstash. For efficiency, the Elasticsearch input plugin section in the tutorials below contains a ‘query’ option in which you specify the data you care about and would like to export from Elasticsearch.
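The ‘query’ option takes a regular Elasticsearch query body as a JSON string. As a minimal sketch, the Python helper below builds a range query of the same shape as the ones used in the appendix. The function name `build_range_query` is hypothetical, and the field name and date string are only examples; the date format your index accepts depends on its mapping.

```python
import json


def build_range_query(field: str, gte: str, lte: str = "now") -> str:
    """Return an Elasticsearch range query body as a JSON string.

    The result can be pasted into the 'query' option of the Logstash
    Elasticsearch input plugin (wrapped in single quotes there).
    """
    query = {"query": {"range": {field: {"gte": gte, "lte": lte}}}}
    return json.dumps(query)


if __name__ == "__main__":
    # Example: export only events that started on or after 1 January 2020.
    print(build_range_query("StartTime", "2020-01-01 01:00:00.0000000"))
```

Generating the string this way keeps the JSON well-formed when you change the field or the time window, which is easy to get wrong when editing nested braces by hand.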

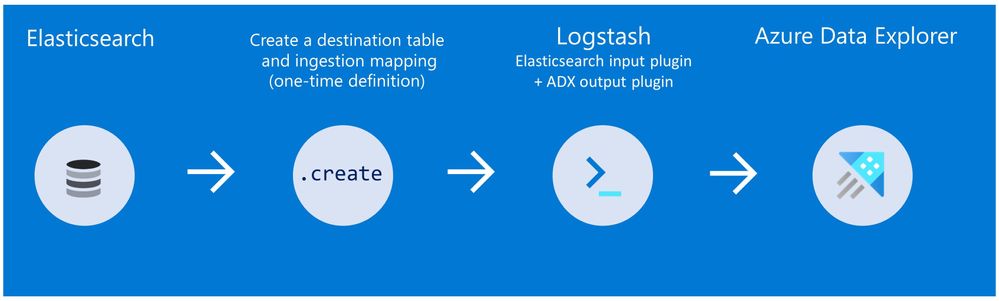

Data migration from Elasticsearch to Azure Data Explorer

Here we describe two methods to migrate historical data from Elasticsearch using Logstash. See the appendix for a step-by-step tutorial.

Method 1: Logstash and One-click Ingestion/LightIngest

Use Logstash to export the data from Elasticsearch into CSV or JSON file(s), and then use Azure Data Explorer’s One-Click Ingestion feature to ingest the data.

- This is an easy way to ramp up quickly and migrate data, because One-Click Ingestion automatically generates the destination table and the ingestion mapping based on the structure of the data source (you can still edit the table schema if you want to).

- One-Click Ingestion supports ingesting up to 1 GB at a time. To ingest a more massive amount of data, you can:

- Slice your data into multiple files and ingest them separately.

- Use LightIngest - a command-line utility for ad-hoc data ingestion. The utility can pull source data from a local folder (or from an Azure blob storage container).

- Use the second method described below.
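For the first option, slicing the export into multiple files, a small script is usually enough. The sketch below assumes the export is a JSON-lines file (one document per line, as produced by the `json_lines` codec) and splits it on line boundaries. The function name, the part-file naming scheme, and the size limit you pass in are all illustrative assumptions. A CSV export would additionally need its header row repeated in every part, which this sketch does not do.

```python
import os
from typing import List


def split_json_lines(src_path: str, out_dir: str, max_bytes: int) -> List[str]:
    """Split a JSON-lines file into parts of at most max_bytes each.

    Lines are never broken apart, so a single line longer than max_bytes
    ends up alone in its own (oversized) part.
    """
    os.makedirs(out_dir, exist_ok=True)
    base = os.path.splitext(os.path.basename(src_path))[0]
    parts: List[str] = []
    out = None
    written = 0
    try:
        with open(src_path, "rb") as src:
            for line in src:
                # Start a new part for the first line, or when the current
                # part would exceed the limit by adding this line.
                if out is None or (written > 0 and written + len(line) > max_bytes):
                    if out is not None:
                        out.close()
                    part_path = os.path.join(
                        out_dir, f"{base}-part{len(parts) + 1:04d}.json"
                    )
                    parts.append(part_path)
                    out = open(part_path, "wb")
                    written = 0
                out.write(line)
                written += len(line)
    finally:
        if out is not None:
            out.close()
    return parts


if __name__ == "__main__":
    # Hypothetical paths; choose a limit safely below the ingestion cap.
    if os.path.exists("output_file.json"):
        for path in split_json_lines("output_file.json", "parts", 900 * 1024 * 1024):
            print(path)
```

Each resulting part can then be ingested separately with One-Click Ingestion, or the whole folder can be handed to LightIngest.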

Method 2: Using Logstash only (with the output plugin for Azure Data Explorer)

Use Logstash as a pipeline for both exporting data from Elasticsearch and ingesting it into Azure Data Explorer. When you use this method, you must manually create the Azure Data Explorer destination table and define the ingestion mapping. (Alternatively, you can first generate the destination table and the table mapping automatically by using One-Click Ingestion with sample data, as described in method 1, and then use method 2 for the rest of the data.)

Summary

In this blog post, we talked about the advantages of Azure Data Explorer, went over several visualization options, including the open-source Kibana-Azure Data Explorer connector, and introduced a variety of ways you can ingest your ongoing data into Azure Data Explorer. Then, we presented two ways to migrate historical data from Elasticsearch to Azure Data Explorer.

In the appendix, you can find two step-by-step sample scenarios for historical data migration.

Please do not hesitate to contact our team or leave a comment if you have any questions or concerns.

Appendix: Step-by-step example of historical data migration

Method 1: Logstash and One-Click Ingestion

- Use Logstash to export the relevant data to migrate from Elasticsearch into a CSV or a JSON file. Define a Logstash configuration file that uses the Elasticsearch input plugin to receive events from Elasticsearch. The output will be a CSV or a JSON file.

- To export your data to a CSV file: use the CSV output plugin. For this example, the config file should look like this:

    # Sample Logstash configuration: Elasticsearch -> CSV file
    input {
      # Read documents from Elasticsearch matching the given query
      elasticsearch {
        hosts => ["http://localhost:9200"]
        index => "storm_events"
        query => '{ "query": { "range" : { "StartTime" : { "gte": "2000-08-01 01:00:00.0000000", "lte": "now" }}}}'
      }
    }

    filter {
      ruby {
        init => "
          begin
            @@csv_file = 'data-csv-export.csv'
            @@csv_headers = ['StartTime','EndTime','EpisodeId','EventId','State','EventType']
            if File.zero?(@@csv_file) || !File.exist?(@@csv_file)
              CSV.open(@@csv_file, 'w') do |csv|
                csv << @@csv_headers
              end
            end
          end
        "
        code => "
          begin
            event.get('@metadata')['csv_file'] = @@csv_file
            event.get('@metadata')['csv_headers'] = @@csv_headers
          end
        "
      }
    }

    output {
      csv {
        # elastic field name
        fields => ["StartTime","EndTime","EpisodeId","EventId","State","EventType"]
        # This is path where we store output.
        path => "./data-csv-export.csv"
      }
    }

This config file specifies that the ‘input’ for this process is the Elasticsearch cluster, and the ‘output’ is the CSV file.

- Implementation note: The filter plugin adds a header with the field names as the first line of the CSV file. This way, the destination table is built automatically with these column names. The plugin uses the ‘init’ option of the Ruby plugin to add the header at Logstash startup time.

- Alternatively, you can export your data to a JSON file, using the file output format.

This is what our Logstash config file looks like:

    # Sample Logstash configuration: Elasticsearch -> JSON file
    input {
      # Read documents from Elasticsearch matching the given query
      elasticsearch {
        hosts => ["http://localhost:9200"]
        index => "storm_events"
        query => '{ "query": { "range" : { "StartTime" : { "gte": "2000-08-01 01:00:00.0000000", "lte": "now" }}}}'
      }
    }

    output {
      file {
        path => "./output_file.json"
        codec => json_lines
      }
    }

- The advantage of using JSON over CSV is that later, with One-Click Ingestion, the Azure Data Explorer ‘create table’ and ‘create json mapping’ commands will be auto-generated for you. This saves you from having to create the JSON table mapping manually if you later want to ingest your ongoing data with Logstash, because the Logstash output plugin uses a JSON mapping.

- Start Logstash with the following command, from Logstash’s bin folder:

    logstash -f pipeline.conf

- If your pipeline is working correctly, you should see a series of events written to the console.

- The CSV/JSON file should be created at the destination you specified in the config file.

- Ingest your data into Azure Data Explorer with One-Click Ingestion:

- Open the Azure Data Explorer web UI. If this is the first time you are creating an Azure Data Explorer cluster and database, see this doc.

- Right-click the database name and select Ingest new Data.

- In the Ingest new data page, use the Create new option to set the table name.

- Select Ingestion type from a file and browse your CSV/JSON file.

- Select Edit schema. You will be redirected to the schema of the table that will be created.

- Optionally, on the schema page, click the column headers to change the data type or rename a column. You can also double-click the new column name to edit it.

For more information about this page, see the doc.

- Select Start Ingestion to ingest the data into Azure Data Explorer.

- After a few minutes, depending on the size of the data set, your data will be stored in Azure Data Explorer and ready for querying.
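Before uploading the exported file, it can be worth checking that the header row is what you expect and noting how many records the file holds, so that you can compare that number with the row count in Azure Data Explorer afterwards. The following is a minimal sketch for the CSV export from this example; the function name `check_csv_export` is hypothetical, and the file name and column list are taken from the sample configuration above.

```python
import csv
import os
from typing import List


def check_csv_export(path: str, expected_headers: List[str]) -> int:
    """Verify the header row of a CSV export and return its data row count."""
    with open(path, newline="", encoding="utf-8") as f:
        reader = csv.reader(f)
        header = next(reader, None)
        if header != expected_headers:
            raise ValueError(f"unexpected header row: {header!r}")
        # Count the remaining rows without loading the file into memory.
        return sum(1 for _ in reader)


if __name__ == "__main__":
    headers = ["StartTime", "EndTime", "EpisodeId", "EventId", "State", "EventType"]
    if os.path.exists("data-csv-export.csv"):
        print(check_csv_export("data-csv-export.csv", headers))
```

If the count reported here differs from the number of rows in the destination table once ingestion has finished, the export or the ingestion is worth a second look.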

Method 2: Using Logstash only

- Create an Azure Data Explorer cluster and database.

Note: If you have already created your Azure Data Explorer cluster and database, you can skip this step. More information on creating an Azure Data Explorer cluster and database can be found here.

- Create the destination table.

Note: If you have already created your table with One-Click Ingestion, or in other ways, skip this step.

Tip: The One-Click Ingestion tool auto-generates the table creation and the table mapping commands, based on the structure of sample JSON data you provide. If you use One-Click Ingestion with a JSON file, as described above, you can use the auto-generated commands from the Editor section.

Auto-generate the table and its mapping using One-Click Ingestion

In the Azure portal, under your cluster page, on the left menu, select Query (or use Azure Data Explorer Web UI/Desktop application) and run the following command. This command creates a table with the name ‘MyStormEvents’, with columns according to the schema of the data.

    .create table MyStormEvents (StartTime:datetime, EndTime:datetime, EpisodeId:int, EventId:int, State:string, EventType:string)

- Create ingestion mapping.

Note: If you used One-Click Ingestion with a JSON file, you can skip this step. This mapping is used at ingestion time to map incoming data to columns inside the ADX target table.

The following command creates a new mapping, named ‘mymapping’, according to the data's schema. It extracts properties from the incoming temporary JSON files, which will be generated automatically later, as noted by the path, and outputs them to the relevant column.

    .create table MyStormEvents ingestion json mapping 'mymapping' '[{"column":"StartTime","path":"$.StartTime"},{"column":"EndTime","path":"$.EndTime"},{"column":"EpisodeId","path":"$.EpisodeId"},{"column":"EventId","path":"$.EventId"},{"column":"State","path":"$.State"},{"column":"EventType","path":"$.EventType"}]'

- Your table is ready to ingest data from your existing Elasticsearch index. To ingest the historical data from Elasticsearch, you can use the Elasticsearch input plugin to receive data from Elasticsearch, and the Azure Data Explorer (Kusto) output plugin to ingest the data into ADX.

- If you have not used Logstash before, install it first.

- Install the Logstash output plugin for Azure Data Explorer, which sends the data to Azure Data Explorer, by running:

    bin/logstash-plugin install logstash-output-kusto

- Define a Logstash configuration pipeline file in your home Logstash directory.

In the input plugin, you can specify a query to filter your data according to a specific time range or any other search criteria. This way, you can migrate only the data you care about.

In this example, the config file looks as follows. Replace all the placeholders with the relevant values for your setup. Credentials with ingest privileges are required to connect to ADX.

    input {
      # Read the documents matching the query from your Elasticsearch index "your_index_name"
      elasticsearch {
        hosts => ["http://localhost:9200"]
        index => "your_index_name"
        query => '{ "query": { "range" : { "StartTime" : { "gte": "2020-01-01 01:00:00.0000000", "lte": "now" }}}}'
      }
    }

    output {
      kusto {
        # Temporary files are written here before they are sent for ingestion
        path => "/tmp/kusto/%{+YYYY-MM-dd-HH-mm}.txt"
        # Use your cluster's data ingestion URI, as shown in the Azure portal
        ingest_url => "https://<your cluster name>.<your cluster region>.kusto.windows.net"
        app_id => "<Your app id>"
        app_key => "<Your app key>"
        app_tenant => "<Your app tenant>"
        database => "<Your Azure Data Explorer DB name>"
        table => "<Your table name>"
        mapping => "<Your mapping name>"
      }
    }

- Edit your configuration pipeline file according to your Azure Data Explorer cluster details and start Logstash with the following command, from Logstash’s bin folder:

    logstash -f pipeline.conf

- If your pipeline is working correctly, you should see a series of events written to the console.

- After a few minutes, run the following Azure Data Explorer query to see the records in the table you defined:

    MyStormEvents | count

The result is the number of records that have been ingested into the table so far. Ingesting the entire data set might take several minutes, depending on its size. Once ingestion completes, your data is stored in Azure Data Explorer and is ready for querying!
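The table creation and JSON mapping commands in Method 2 follow a regular pattern, so for wide tables they can be generated from a single column list instead of being typed by hand. The sketch below is one possible way to do that. The function names are hypothetical, and it assumes every JSON property has the same name as its target column and that column names need no special quoting in KQL.

```python
import json
from typing import Dict


def create_table_command(table: str, columns: Dict[str, str]) -> str:
    """Build a '.create table' command from a column-name -> KQL-type dict."""
    cols = ", ".join(f"{name}:{kql_type}" for name, kql_type in columns.items())
    return f".create table {table} ({cols})"


def create_json_mapping_command(
    table: str, mapping_name: str, columns: Dict[str, str]
) -> str:
    """Build a JSON ingestion mapping command with one '$.<name>' path per column."""
    mapping = [{"column": name, "path": f"$.{name}"} for name in columns]
    return (
        f".create table {table} ingestion json mapping "
        f"'{mapping_name}' '{json.dumps(mapping)}'"
    )


if __name__ == "__main__":
    # Schema of the sample table used in this post.
    schema = {
        "StartTime": "datetime",
        "EndTime": "datetime",
        "EpisodeId": "int",
        "EventId": "int",
        "State": "string",
        "EventType": "string",
    }
    print(create_table_command("MyStormEvents", schema))
    print(create_json_mapping_command("MyStormEvents", "mymapping", schema))
```

Because both commands come from the same dictionary, the table and its mapping cannot drift apart, and stray characters in a path (such as a trailing space) are avoided.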