This post has been republished via RSS; it originally appeared at: Azure Developer Community Blog articles.

At the Microsoft Build Conference, we announced several new features that extend the capabilities of Azure Stream Analytics, a managed service for real-time streaming analytics. With these innovations, Azure Stream Analytics further reduces time-to-insight for big data streaming. The new capabilities will be demonstrated during the session “From cloud to the intelligent edge: Drive real-time decisions with Azure Stream Analytics”.

Ready to power your large-scale pipelines

Larger scale processing and higher throughput: We increased the maximum size of streaming pipelines by 60%, allowing you to use up to 192 Streaming Units, compared to 120 before. This enables you to process roughly 12 million events per minute, or 13 GB per minute (about 19 TB per day), on a single pipeline while delivering millisecond latency. The exact numbers depend on the event format and the type of analytics. Additionally, all sinks except Power BI now support parallel writers, enabling higher throughput, and newly released improvements to the Cosmos DB output adapter double its throughput rate. To let more users take advantage of these changes, we are also enabling parallel processing by default: in the newly released compatibility level 1.2, the partition key is detected and selected automatically.
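The headline figures can be sanity-checked with simple arithmetic. The following Python sketch converts the quoted per-minute rates into daily totals and computes the relative increase in the Streaming Unit ceiling. The per-SU figure assumes throughput scales linearly with Streaming Units, which is a simplification for illustration only: as noted, real throughput depends on the event format and the query.

```python
# Back-of-the-envelope throughput arithmetic based on the figures quoted above.
# Assumption: throughput scales linearly with Streaming Units (SUs); real
# throughput depends on event format and query complexity.

MINUTES_PER_DAY = 24 * 60  # 1,440

old_max_su = 120
new_max_su = 192

events_per_minute = 12_000_000  # quoted peak for a 192-SU pipeline
gb_per_minute = 13              # quoted peak for a 192-SU pipeline


def percent_increase(old: float, new: float) -> float:
    """Relative increase from old to new, in percent."""
    return (new - old) / old * 100


def per_day(per_minute: float) -> float:
    """Convert a per-minute rate into a per-day total."""
    return per_minute * MINUTES_PER_DAY


su_increase = percent_increase(old_max_su, new_max_su)
events_per_day = per_day(events_per_minute)
tb_per_day = per_day(gb_per_minute) / 1000  # decimal units: 1 TB = 1,000 GB
events_per_minute_per_su = events_per_minute / new_max_su  # linear assumption

print(f"SU ceiling increase: {su_increase:.0f}%")
print(f"Events per day: {events_per_day:,.0f}")
print(f"Data per day: {tb_per_day:.1f} TB")
print(f"Events per minute per SU (linear assumption): {events_per_minute_per_su:,.0f}")
```

Going from 120 to 192 SUs is a 60% increase, and 13 GB per minute works out to roughly 18.7 TB per day.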

Custom deserializers written in C# are now available in private preview for cloud customers, enabling users to process incoming data in any format. In addition to the formats supported out of the box (JSON, CSV, and Avro), you can now ingest data in the format of your choice, such as Protobuf, XML, or a custom binary format.
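Conceptually, a deserializer turns a raw byte stream into typed rows that a query can work with. Stream Analytics custom deserializers are written in C# against the service's own interface; the Python sketch below only illustrates the idea. The record layout and the `serialize`/`deserialize` functions are hypothetical and are not part of any Stream Analytics API.

```python
import io
import struct
from typing import BinaryIO, Iterator, TypedDict

# Hypothetical wire layout for one record (little-endian, no padding):
#   int64   timestamp in milliseconds since the Unix epoch
#   float64 temperature reading
#   uint16  length N of the device ID
#   N bytes UTF-8 device ID
HEADER = struct.Struct("<qdH")


class Reading(TypedDict):
    timestamp_ms: int
    temperature: float
    device_id: str


def serialize(readings: list[Reading]) -> bytes:
    """Encode readings in the hypothetical layout (used here to build test input)."""
    out = bytearray()
    for r in readings:
        device = r["device_id"].encode("utf-8")
        out += HEADER.pack(r["timestamp_ms"], r["temperature"], len(device))
        out += device
    return bytes(out)


def deserialize(stream: BinaryIO) -> Iterator[Reading]:
    """Turn a raw byte stream into typed rows, one record at a time."""
    while True:
        header = stream.read(HEADER.size)
        if not header:
            return  # clean end of stream
        if len(header) < HEADER.size:
            raise ValueError("truncated record header")
        timestamp_ms, temperature, id_len = HEADER.unpack(header)
        device = stream.read(id_len)
        if len(device) < id_len:
            raise ValueError("truncated device ID")
        yield {
            "timestamp_ms": timestamp_ms,
            "temperature": temperature,
            "device_id": device.decode("utf-8"),
        }


payload = serialize([
    {"timestamp_ms": 1_557_000_000_000, "temperature": 21.5, "device_id": "sensor-1"},
    {"timestamp_ms": 1_557_000_001_000, "temperature": 22.0, "device_id": "sensor-2"},
])
for row in deserialize(io.BytesIO(payload)):
    print(row)
```

Reading the stream incrementally, rather than loading it all at once, mirrors how a streaming engine consumes input, and failing loudly on truncated records avoids silently emitting corrupt rows.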

Native support for output in Apache Parquet format is now available in preview. Parquet is a columnar format that enables efficient big data processing. By writing data in Parquet format to a data lake, you can use Azure Stream Analytics to power large-scale streaming ETL, and then run batch processing, train machine learning models, or run interactive queries on your historical data.
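To see why a columnar layout helps analytical workloads, the Python sketch below pivots row-oriented records into one list per column. This is only an illustration of the columnar idea with made-up sample data, not the Parquet file format itself; writing real Parquet files from Python would typically use a library such as pyarrow.

```python
from collections import defaultdict
from typing import Any

# Row-oriented records, as a streaming job might emit them (sample data).
rows = [
    {"device_id": "sensor-1", "temperature": 21.5, "status": "ok"},
    {"device_id": "sensor-2", "temperature": 22.0, "status": "ok"},
    {"device_id": "sensor-1", "temperature": 23.5, "status": "warn"},
]


def to_columns(records: list[dict[str, Any]]) -> dict[str, list[Any]]:
    """Pivot row-oriented records into one list per column (a columnar layout).

    Assumes every record has the same set of fields.
    """
    columns: dict[str, list[Any]] = defaultdict(list)
    for record in records:
        for name, value in record.items():
            columns[name].append(value)
    return dict(columns)


columns = to_columns(rows)

# An analytical query such as "average temperature" only needs to scan one
# column; in a columnar file the other columns never have to be read.
temps = columns["temperature"]
print(sum(temps) / len(temps))

# Values within a column share a type and often repeat, which is what makes
# column-level encodings such as run-length or dictionary encoding effective.
print(sorted(set(columns["status"])))
```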

Easier to build ASA queries

We are excited to announce new ways to create Azure Stream Analytics jobs and gain insights from your data streams.

Today we are announcing the preview of one-click integration with Event Hubs. With this integration, you can visualize incoming data and start writing a Stream Analytics query with one click from the Event Hubs portal. Once your query is ready, you can put it into production in a few clicks and start getting real-time insights. This significantly reduces the time and cost of developing real-time analytics solutions.

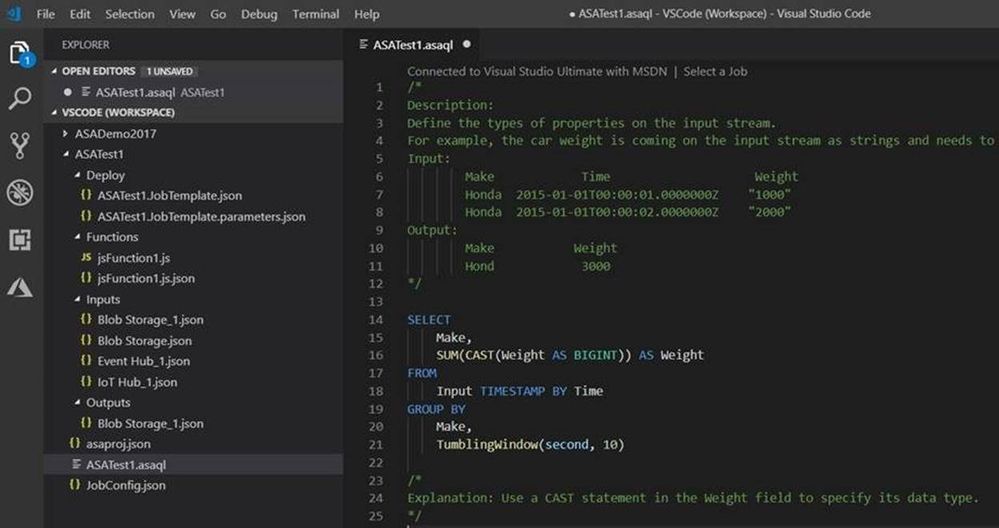

Today, we are also announcing a new VS Code extension for Azure Stream Analytics. The extension supports cross-platform development of Stream Analytics jobs on Windows, Linux, and macOS, and lets you develop and test streaming jobs locally before deploying them to the cloud. Start using the extension today by following the quickstart “Create an Azure Stream Analytics cloud job in Visual Studio Code”.

Additionally, Azure Stream Analytics tools for Visual Studio are now generally available (GA). With Visual Studio, you can develop and test your jobs locally, and deploy them to production with continuous integration and continuous deployment (CI/CD).

We are also announcing a new "Windows" aggregate that extends windowing functions so that a single query can compute results for multiple windows simultaneously.
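To make the idea concrete, here is a Python sketch of what computing several tumbling windows in a single pass over one stream means. It is not Stream Analytics query syntax: the timestamps, window IDs, and window sizes are made up, and the sketch uses half-open [start, end) intervals, which may differ from the service's own window-boundary semantics.

```python
from collections import Counter

# Hypothetical event timestamps, in seconds since the start of the stream.
event_times = [5, 70, 130, 610, 650, 1250, 1300]

# Several tumbling windows evaluated over the same stream, keyed by a window ID.
# Sizes are in seconds: 10 minutes and 20 minutes.
WINDOWS = {"10min": 600, "20min": 1200}


def tumbling_counts(times: list[int], windows: dict[str, int]) -> Counter:
    """Count events per (window ID, window start) for every window in one pass.

    Windows are treated as half-open intervals [start, start + size).
    """
    counts: Counter = Counter()
    for t in times:
        for window_id, size in windows.items():
            start = t - t % size  # align the event to this window's grid
            counts[(window_id, start)] += 1
    return counts


for (window_id, start), n in sorted(tumbling_counts(event_times, WINDOWS).items()):
    print(f"{window_id} window starting at {start:>4}s: {n} events")
```

Each event is read once and contributes to every window definition, and the window ID in each result key tells the downstream consumer which window produced it.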

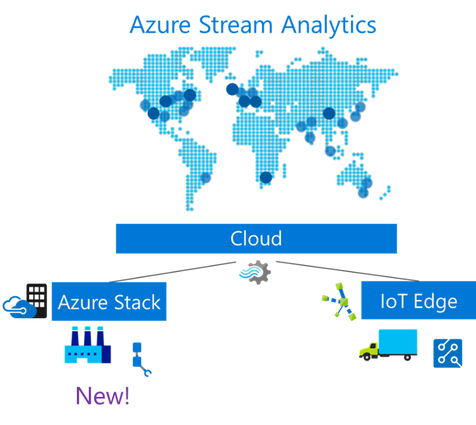

Azure Stream Analytics runs near your data

In addition to running in the cloud and on Azure IoT Edge, Azure Stream Analytics now supports Azure Stack, in private preview. This feature, enabled through the Azure IoT Edge runtime, leverages Azure Stack-specific features such as native support for local inputs and outputs running on Azure Stack (e.g., Event Hubs, IoT Hub, Blob Storage). This enables you to build hybrid architectures that analyze your data close to where it is generated, lowering latency and maximizing insights.

Azure Stream Analytics on IoT Edge will also support new scenarios for local storage and data processing by integrating natively with the newly announced SQL Database Edge. This will allow you to combine the power of Stream Analytics with SQL capabilities such as in-database machine learning and graph processing.

Getting started

To get started with Azure Stream Analytics, try one of the quickstarts on our documentation page. To access any of the preview features announced today, see our “Stream Analytics Build 2019 preview” page.